Paid social media marketing is one of the few growth levers you can switch on, measure in hours, and scale with confidence when it’s built on the right fundamentals. It’s also one of the easiest places to burn budget when “boost post” decisions replace strategy, and when teams confuse activity (more ads) with progress (better outcomes).

In 2024, social media advertising revenue hit $88.8B in the U.S. alone, showing how central this channel has become for performance and brand building. IAB/PwC’s full-year 2024 Internet Ad Revenue Report makes the bigger point: paid social is no longer an “optional” line item—most categories treat it like core infrastructure.

At the same time, the playing field is getting tougher. More advertisers are competing for attention, platforms are automating more of the buying decisions, and privacy changes keep forcing measurement upgrades. This guide starts with a clear definition, then moves into a practical framework you can actually run—whether you’re managing campaigns in-house or delivering results as a freelancer.

Article Outline

- What Is Paid Social Media Marketing?

- Why Paid Social Media Marketing Matters

- Framework Overview

- Core Components

- Professional Implementation

- Part 2: Strategy and Offer Foundations

- Part 3: Creative Systems and Testing

- Part 4: Tracking, Analytics, and Reporting

- Part 5: Scaling, Automation, and Ecosystem Synergies

- Part 6: Playbooks, Checklists, and Next Steps

What Is Paid Social Media Marketing?

Paid social media marketing is the practice of buying ad placements on social platforms to intentionally reach specific audiences and drive a measurable outcome—sales, leads, app installs, subscriptions, qualified traffic, or brand lift. The “paid” part matters because you’re not relying on organic reach or luck; you’re entering an auction where your creative, targeting, and measurement determine whether you win attention efficiently.

It helps to think of it as a system with three moving parts: (1) distribution you can control (budget, bids, placements), (2) messaging people will actually respond to (creative and offer), and (3) feedback loops that tell you what’s working (tracking, attribution, and lift testing). When those three are aligned, paid social becomes repeatable—not just a streak of good weeks.

Social platforms are also where modern attention lives at scale. The Digital 2025 Global Overview Report estimates 5.24 billion social media user identities worldwide, which is why paid social keeps showing up in growth plans across B2C and B2B.

Why Paid Social Media Marketing Matters

Paid social matters because it compresses the distance between an idea and evidence. You can launch a new angle, new creative, or a new audience hypothesis, then learn quickly—often faster than SEO, partnerships, or offline campaigns can respond. That speed is not just convenient; it’s a strategic advantage when competition shifts or when product-market fit is still being refined.

It also matters because money follows outcomes. WARC forecasts social ad expenditure worldwide reaching $286.2B in 2025, with spending projected to pass $300B in 2026, which signals where performance budgets continue to concentrate. WARC Media’s social media 2025 outlook frames social as a sustained growth engine, not a temporary trend.

And there’s a practical business reality behind the numbers: marketing teams are being pushed to do more with less. Gartner reports that 2025 marketing budgets are flat at 7.7% of company revenue, which is why channels with clear feedback loops get prioritized. Gartner’s 2025 CMO Spend Survey press release makes it clear that productivity is the mandate—paid social is often where that mandate gets tested first.

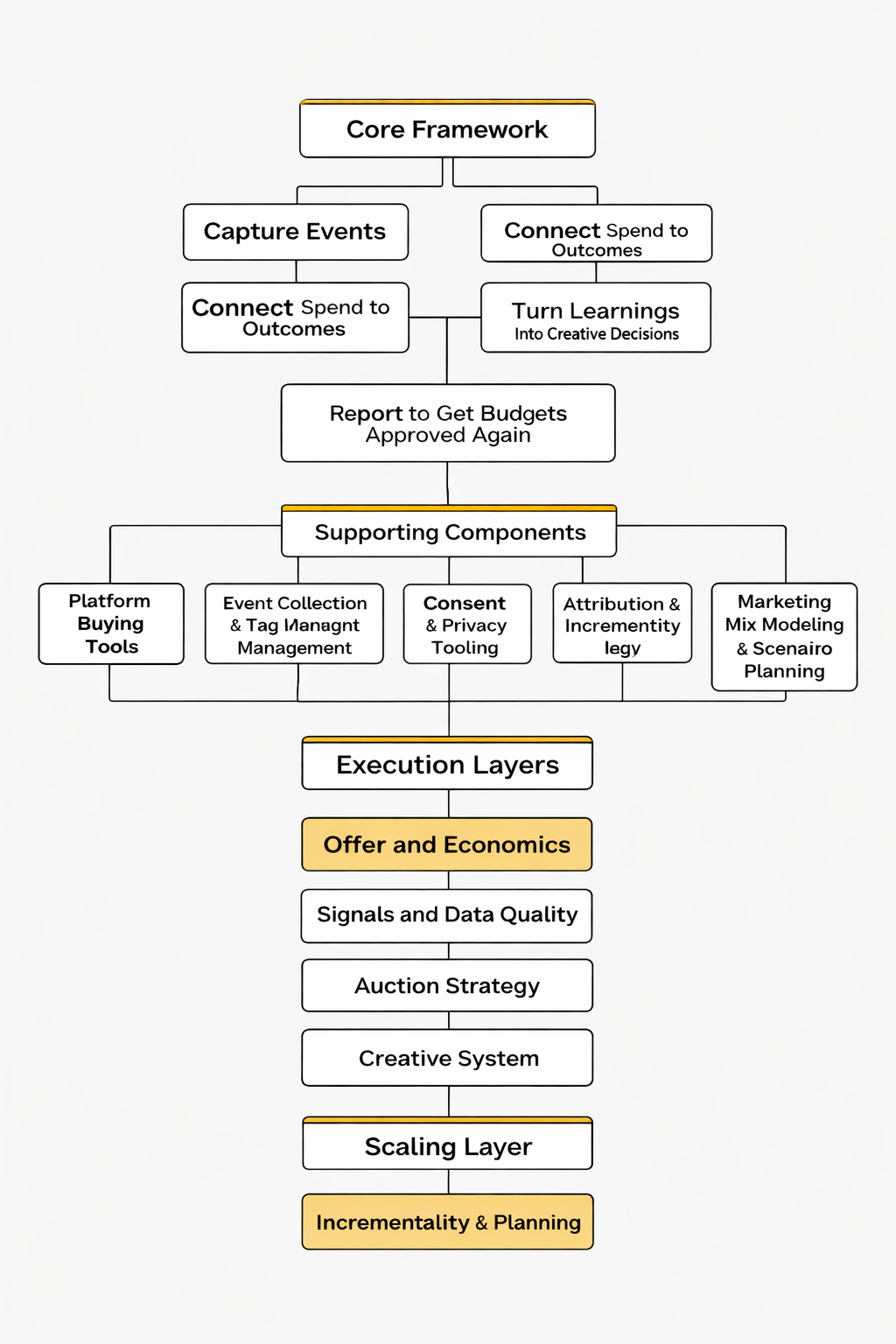

Framework Overview

This article uses a simple operating framework you can apply across Meta, TikTok, LinkedIn, Pinterest, Snapchat, and emerging placements as they evolve. It’s designed to be platform-aware without being platform-dependent, so you don’t rebuild your entire approach every time an algorithm shifts.

The framework is organized into five layers:

- Goal and economics: what success means and what you can afford to pay for it.

- Audience and positioning: who you’re trying to win, and why they should care.

- Creative system: a repeatable way to produce, test, and refresh ads that earn attention.

- Buying and structure: campaign setup, budgets, bids, placements, and learning strategy.

- Measurement and iteration: tracking you can trust, plus decisions you can defend.

We’ll go deep on each layer in later parts, but Part 1 focuses on the foundations so the “how-to” sections don’t turn into platform checklists with no strategy behind them.

Core Components

Targeting and Audiences

Targeting is no longer just picking interests and hoping for the best. Modern paid social media marketing is built around signal quality: first-party data, engagement data, product-level events, and clean conversion definitions. When your signals are strong, platforms can optimize more effectively—especially as automation expands.

This is why integration work is now a performance lever, not a technical footnote. For example, Meta explicitly positions its server-side tracking (the Conversions API) as a way to improve data reliability and maximize performance when browser-based signals are inconsistent. Meta’s Conversions API best-practices documentation is worth treating as required reading, not optional background.

Creative That Wins the Auction

Creative is the most underestimated “targeting” tool because it shapes who self-selects into your funnel. Strong creative pulls the right people in, keeps the wrong people out, and gives the algorithm clearer behavioral signals to learn from. Weak creative does the opposite: it drives low-quality clicks, noisy engagement, and unpredictable costs.

In practical terms, creative that consistently performs tends to do three things well: it earns attention quickly, makes the offer feel specific (not generic), and reduces uncertainty with proof. The platform changes constantly, but those human dynamics don’t.

Budgeting and Bidding Logic

Budgeting is really decision-making under uncertainty. Your first job is to buy learning at a reasonable cost; your second job is to scale only what deserves more spend. Most accounts fail here because they scale before they’ve stabilized the variables that matter: conversion quality, creative freshness, and measurement integrity.

When you budget, you’re not just buying impressions—you’re buying the right kind of data for your next decision. That’s why “more budget” is not a strategy, and why disciplined testing beats emotional scaling.

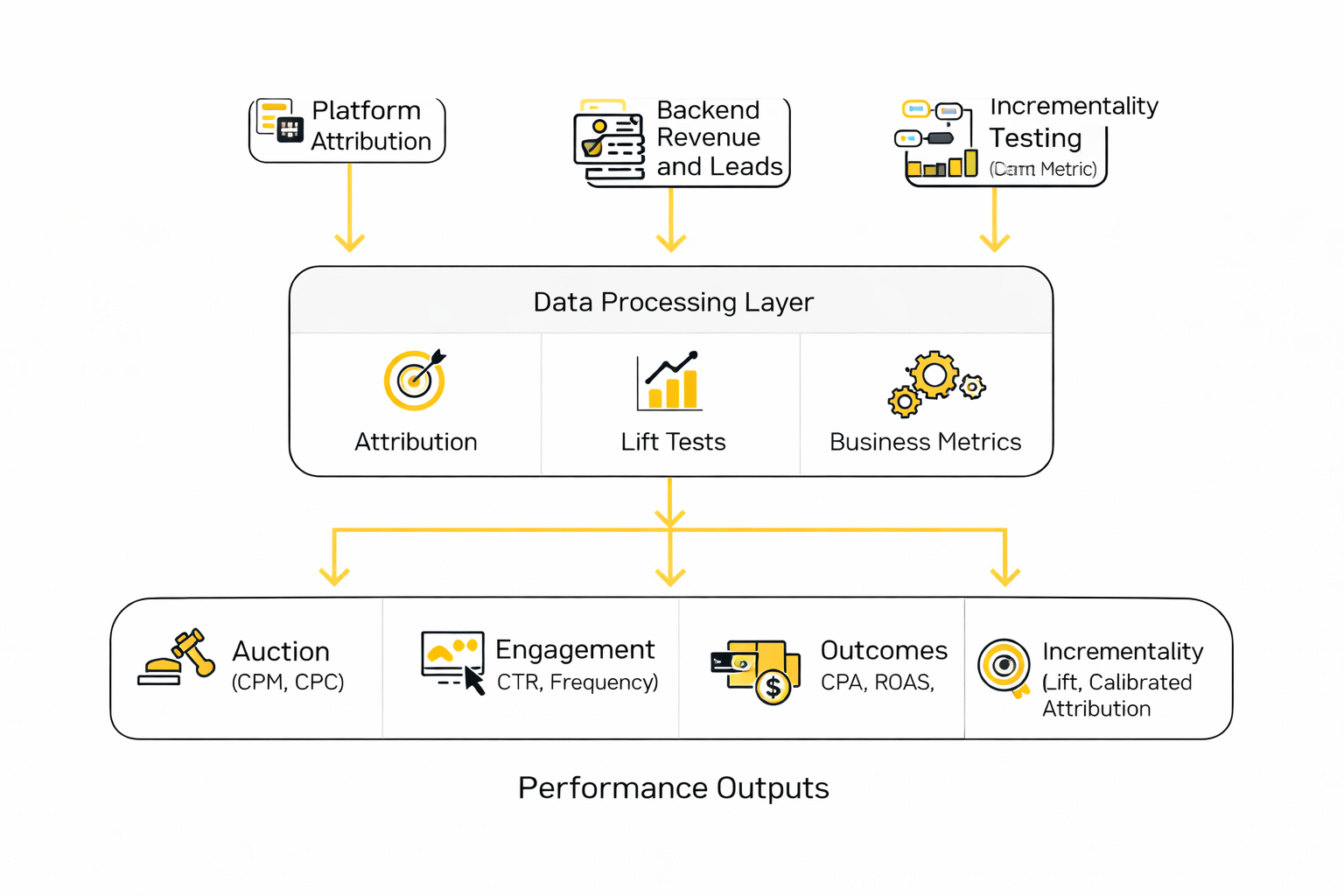

Measurement, Attribution, and Lift

If you can’t measure it, you can’t improve it—and in paid social, “measurement” means more than reading platform dashboards. You need a measurement stack that can survive signal loss, attribution windows, and cross-device behavior, while still giving you actionable direction.

The broader industry has been navigating privacy-driven signal changes for years, and the impact is now structural. IAB’s research on privacy-by-design ecosystems highlights how addressability and measurement challenges have forced teams to evolve their data strategies rather than simply “wait for tracking to come back.” IAB State of Data 2024 is a strong lens on what changed and why it’s permanent.

Operations, Compliance, and Brand Safety

Operational rigor is what keeps performance from collapsing under pressure. That includes naming conventions, creative versioning, QA checklists, approval workflows, and clear rules for when to pause, duplicate, consolidate, or rebuild.

It also includes brand safety. Paid social is powerful precisely because it scales fast, but scale can magnify risk just as quickly. Fraud, misleading claims, and low-quality placements can damage trust and distort your numbers, which is why governance has to be part of the system, not an afterthought. A Reuters investigation into the economics of scam advertising on major platforms shows how real this risk is at scale, and it is a reminder to build controls early.

Professional Implementation

Professional-grade paid social media marketing is less about secret tactics and more about consistency: consistent tracking, consistent testing, consistent creative production, and consistent decision rules. The pros win because they reduce randomness. They don’t guess what changed—they instrument it, isolate it, and respond with a repeatable process.

One practical way to think about “professional” is this: could someone else take over your account tomorrow and understand exactly why each campaign exists, what it’s supposed to achieve, and what would trigger the next action? If the answer is no, you’re not managing campaigns—you’re managing anxiety.

In Part 2, we’ll start by locking down goals, unit economics, and offer clarity so every campaign has a reason to exist. Then we’ll build the creative and measurement systems that let you scale without losing control.

Part 2: Strategy and Offer Foundations

Coming next: defining success metrics that map to real business outcomes, aligning the funnel to buying intent, and building an offer structure that makes paid social profitable instead of merely busy.

Part 3: Creative Systems and Testing

Coming next: a practical creative pipeline, testing cadence, and iteration rules that keep performance stable even when audiences fatigue.

Part 4: Tracking, Analytics, and Reporting

Coming next: how to set up measurement you can trust, what to report, and how to turn campaign data into decisions clients will pay for.

Part 5: Scaling, Automation, and Ecosystem Synergies

Coming next: how to scale winners safely, where automation helps (and where it hurts), and how paid social fits into a wider growth ecosystem.

Part 6: Playbooks, Checklists, and Next Steps

Coming next: execution checklists, common failure modes, and how to package your paid social skills into a reliable client-getting offer.

Step-by-Step Implementation

Good paid social media marketing execution feels boring in the best way. You launch with clean tracking, a clear testing plan, and creative that’s built for the platform you’re buying on. Then you let the system learn long enough to produce signal you can trust.

This step-by-step sequence is designed to work across Meta, TikTok, LinkedIn, Snap, and Pinterest, even though the buttons and names vary. The order matters because each step reduces a different kind of risk: data risk, creative risk, and decision risk.

Step 1: Lock the Conversion Definition and Success Metric

Start by defining what “success” means in one sentence: the action you want (purchase, lead, subscription), the quality bar (qualified lead, margin-safe order), and the cost you can afford. That sentence becomes your optimization metric, your reporting headline, and your weekly decision rule.

Then make the conversion event unambiguous. If you’re optimizing for purchases, the “purchase” event needs to fire exactly once per order, with the right value. If you’re optimizing for leads, the lead event needs to represent the moment the lead is real (form submitted, calendar booked, offline lead validated), not just “page viewed.”
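Here is one way to make “exactly once, with the right value” concrete. This minimal Python sketch is a guard that builds at most one purchase event per order ID. The class name, field names, and in-memory set are illustrative assumptions, not any platform’s API. In production, the record of already-reported orders would need to live in shared, durable storage.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from typing import Optional


@dataclass
class PurchaseEventGuard:
    """Hypothetical helper: builds at most one purchase event per order ID."""

    # In-memory for illustration only; a real stack needs shared, durable storage.
    sent_order_ids: set[str] = field(default_factory=set)

    def build_event(self, order_id: str, order_total: float, currency: str) -> Optional[dict]:
        # Already reported (e.g. a reloaded thank-you page): send nothing.
        if order_id in self.sent_order_ids:
            return None
        # Refuse values that cannot be real revenue.
        if order_total <= 0:
            raise ValueError(f"order {order_id} has non-positive total {order_total}")
        self.sent_order_ids.add(order_id)
        return {
            "event_name": "Purchase",
            "order_id": order_id,
            "value": round(order_total, 2),  # the amount the business books
            "currency": currency.upper(),
        }
```

Calling `build_event` a second time for the same order returns `None`, so the duplicate never reaches the platform.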

Step 2: Build Resilient Event Collection (Browser Plus Server Where Possible)

Most teams lose performance quietly by feeding platforms incomplete conversion signals. The practical fix is dual-channel event collection: keep the browser pixel for speed and coverage, and add server-side events for reliability and resilience.

Meta’s developer documentation explains what Conversions API is meant to do and why it exists: create a direct connection between your marketing data and Meta’s systems via server-to-server sharing. Meta Conversions API documentation lays out the model, and their guidance on deduplicating pixel and server events is essential so you don’t double-count conversions.
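The deduplication idea can be sketched in Python for a website purchase. The server payload uses field names from Meta’s Conversions API (`event_name`, `event_time`, `event_id`, `action_source`, `event_source_url`, `user_data`, `custom_data`); verify them against the current documentation before relying on this. On the browser side, the pixel call would pass the same ID, for example `fbq('track', 'Purchase', {...}, {eventID: id})`. `DedupSimulator` is a hypothetical model of the matching rule, not Meta’s implementation. It treats two events as one when they share an event name and ID within a window, assumed here to be 48 hours.

```python
from __future__ import annotations

import hashlib
import time
import uuid
from typing import Optional


def hash_email(email: str) -> str:
    # Identifiers are normalized (trimmed, lowercased) and SHA-256 hashed before sending.
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def new_event_id() -> str:
    # Generate once per conversion and share it between browser and server.
    return str(uuid.uuid4())


def build_server_event(event_id: str, email: str, value: float, currency: str,
                       source_url: str, event_time: Optional[int] = None) -> dict:
    return {
        "event_name": "Purchase",
        "event_time": event_time if event_time is not None else int(time.time()),
        "event_id": event_id,  # must equal the pixel's eventID to deduplicate
        "action_source": "website",
        "event_source_url": source_url,
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": value, "currency": currency},
    }


class DedupSimulator:
    """Illustrative model of the rule: same event_name + event_id within the window counts once."""

    def __init__(self, window_seconds: int = 48 * 3600):  # assumed window; check current docs
        self.window_seconds = window_seconds
        self.first_seen: dict[tuple[str, str], int] = {}

    def accept(self, event_name: str, event_id: str, event_time: int) -> bool:
        key = (event_name, event_id)
        seen = self.first_seen.get(key)
        if seen is not None and abs(event_time - seen) <= self.window_seconds:
            return False  # duplicate of an event already counted
        self.first_seen[key] = event_time
        return True
```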

On Pinterest, server-side tracking follows the same principle: send conversions directly via API so optimization and reporting don’t depend on fragile third-party cookies. Pinterest describes the product in its Conversions API overview and documents implementation pathways in its developer guide to conversion tracking.

Step 3: Choose a Campaign Structure That Protects Learning

Your first goal is to get stable learning, not to build an impressive-looking account tree. That means fewer moving parts at launch, enough budget per ad group to generate results, and enough time without constant edits so the delivery system can actually learn.

TikTok’s documentation is unusually clear about what disrupts learning: new ad groups, significant budget changes, significant bid/ROAS changes, and targeting edits can push delivery back into exploration. TikTok’s Learning Phase guidance also notes that volatility typically declines after roughly 25 results or about 7 days once learning begins, which is a useful sanity check when someone panics on day two.
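That sanity check can be encoded as a pre-edit rule. In this hypothetical sketch, the thresholds are the figures quoted above, used as rough defaults. The change-type labels are illustrative names for the disruptions TikTok lists. The rule itself (hold disruptive edits until either milestone is reached) is an assumption, not platform logic.

```python
from dataclasses import dataclass

# Rough defaults taken from the figures quoted above; confirm against current platform docs.
DEFAULT_MIN_RESULTS = 25
DEFAULT_MIN_DAYS = 7

# Illustrative labels for changes that can push delivery back into exploration.
DISRUPTIVE_CHANGES = {"new_ad_group", "budget_change", "bid_or_roas_change", "targeting_edit"}


@dataclass
class AdGroupState:
    results_since_learning_start: int
    days_since_learning_start: float


def still_early(state: AdGroupState, min_results: int = DEFAULT_MIN_RESULTS,
                min_days: float = DEFAULT_MIN_DAYS) -> bool:
    # Either milestone (enough results OR enough days) ends the noisiest stretch.
    return (state.results_since_learning_start < min_results
            and state.days_since_learning_start < min_days)


def should_hold_edit(state: AdGroupState, change_type: str) -> bool:
    """Hypothetical rule: hold disruptive edits while learning is still early.
    Non-disruptive fixes (e.g. a broken URL) are always allowed."""
    return change_type in DISRUPTIVE_CHANGES and still_early(state)
```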

Step 4: Build Creative for the Feed, Not for Your Brand Deck

Creative should be produced like a system: multiple angles, multiple hooks, and multiple proof points, all built to blend into the feed while still feeling unmistakably tied to the offer. It’s not about making “one great ad.” It’s about giving the algorithm enough variety to learn what resonates, then scaling the winners.

Lean on platform-native guidance for format fundamentals. TikTok’s creative guidance emphasizes TikTok-first production principles and the reality of creative fatigue. TikTok’s creative best practices and the help center’s creative optimization guidance both reinforce the same operational truth: performance drops when creative gets stale, so you need a consistent supply.

For placement and technical requirements, the format specs in the Meta Ads Guide are the cleanest reference for dimensions and placement requirements, so your creative doesn’t lose efficiency to avoidable formatting mistakes.

Step 5: Run a Pre-Launch QA That Prevents Expensive Mistakes

Before spend goes live, check four things: (1) conversion fires correctly and only once, (2) values match real revenue or lead quality logic, (3) UTMs or tracking parameters are consistent, and (4) your landing page experience matches the promise in the ad.
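The UTM part of that QA is easy to automate. This minimal sketch assumes a hypothetical house convention: every ad URL carries `utm_source`, `utm_medium`, and `utm_campaign`, each with lowercase, space-free values. Adjust the required keys and rules to your own naming scheme.

```python
from __future__ import annotations

from urllib.parse import parse_qs, urlparse

# Hypothetical house convention: these keys are mandatory on every ad URL.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def utm_problems(url: str) -> list[str]:
    # parse_qs drops blank values by default, so "utm_source=" counts as missing.
    params = parse_qs(urlparse(url).query)
    problems = []
    for key in REQUIRED_UTMS:
        values = params.get(key)
        if not values:
            problems.append(f"missing {key}")
        elif len(values) > 1:
            problems.append(f"duplicate {key}")
        elif values[0] != values[0].lower() or " " in values[0]:
            problems.append(f"{key} not lowercase/space-free: {values[0]!r}")
    return problems
```

Run it over every final URL exported from the ad account before launch. That turns “UTMs are consistent” from a hope into a check.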

If you’re operating in regions where consent is required, bake it in before you scale. Google’s documentation explains how consent mode works and how it integrates with server-side Tag Manager. Its guide to implementing consent mode with server-side Tag Manager is a practical reference when you’re building a measurement stack you can defend.

Execution Layers

Execution gets much easier when you treat paid social media marketing as a stack of layers instead of a pile of tactics. Each layer has a single job, and when a campaign underperforms, you diagnose the layer that’s failing instead of randomly changing everything at once.

Layer 1: Offer and Economics

This is where you decide what you can afford: allowable CPA, allowable cost per qualified lead, or target margin after ad spend. If the economics don’t work here, no amount of targeting wizardry fixes it later.

In practice, this layer controls how aggressive you can be with bids, how quickly you can scale, and whether you need stronger conversion rate improvements before increasing budget.
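The arithmetic behind “what you can afford” is short enough to write down. This minimal sketch makes two assumptions. First, contribution margin is expressed as a share of order value after product and fulfillment costs. Second, only the first purchase counts (no lifetime value or repeat orders). The function names are illustrative.

```python
def break_even_cpa(aov: float, contribution_margin: float) -> float:
    """Max ad cost per order before the first order loses money."""
    return aov * contribution_margin


def target_cpa(aov: float, contribution_margin: float, profit_after_ads: float) -> float:
    """CPA that still leaves `profit_after_ads` (a share of AOV) per order."""
    if profit_after_ads >= contribution_margin:
        raise ValueError("profit target cannot exceed the contribution margin")
    return aov * (contribution_margin - profit_after_ads)


def break_even_roas(contribution_margin: float) -> float:
    """Revenue per ad dollar needed to break even: 1 / margin."""
    return 1.0 / contribution_margin


def allowable_cost_per_qualified_lead(customer_value: float, close_rate: float,
                                      contribution_margin: float) -> float:
    """Break-even spend per qualified lead when only some leads become customers."""
    return customer_value * contribution_margin * close_rate
```

Take an $80 average order at a 50% contribution margin: break-even CPA is $40 and break-even ROAS is 2.0. Keeping 15% of order value as profit after ads lowers the target CPA to $28.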

Layer 2: Signals and Data Quality

This layer is your event pipeline: what you send, how reliably you send it, and whether platforms can match it to people. If your events are inconsistent, the algorithm “learns” the wrong thing and optimization becomes unstable.

Deduplication is part of signal quality. Meta explains why matching event IDs across browser and server events matters in their deduplication documentation, and Pinterest outlines server-side conversion tracking in its Conversions API overview.

Layer 3: Auction Strategy

This is your structure, objective selection, placements, and the rules you follow so learning isn’t constantly disrupted. The goal isn’t perfection; the goal is a stable environment where the system can allocate spend based on real signals.

When someone wants to change five settings at once, this layer is your safety rail. The right move is almost always to change one variable, then let it run long enough to show you what changed.

Layer 4: Creative System

This is the engine that keeps accounts alive after the first wave of ads stops working. It includes a production cadence, a testing cadence, and a feedback loop that turns performance data into better briefs.

Research on ad variation and repetition suggests memory outcomes change depending on how repetition and variation are managed, which is a useful reminder that “run it until it dies” isn’t the only lever you have. A 2025 study on ad variation-repetition and memory recall is one example of how creative strategy and repetition interact.

Layer 5: Measurement and Decision-Making

This layer is where results become decisions. It includes attribution views for directional optimization and incrementality methods for proving causality when budgets get serious.

Lift testing exists because platform attribution can’t answer the only question finance truly cares about: did the ads create new outcomes, or did they just get credit for outcomes that would have happened anyway? TikTok frames that causality question directly in its Conversion Lift Study overview, and LinkedIn describes the same approach in its Conversion Lift Testing documentation.
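The core arithmetic of a lift study is simple once users are randomly split. This minimal sketch assumes a clean randomized holdout and ignores the confidence intervals a real study reports. The names are hypothetical, and this is not TikTok’s or LinkedIn’s methodology.

```python
from dataclasses import dataclass


@dataclass
class Group:
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        return self.conversions / self.users


def conversion_lift(treatment: Group, control: Group) -> dict:
    """Relative lift and incremental conversions from a randomized holdout.

    Assumes random assignment, so the control rate estimates what the
    treatment group would have done without ads.
    """
    baseline = control.rate
    expected_without_ads = baseline * treatment.users
    incremental = treatment.conversions - expected_without_ads
    return {
        "relative_lift": (treatment.rate - baseline) / baseline,
        "incremental_conversions": incremental,
        # Share of treatment-group conversions the ads actually caused.
        "incremental_share": incremental / treatment.conversions,
    }
```

Suppose each group has 100,000 users, converting at 1.2% (treatment) and 1.0% (control). The relative lift is then 20%, and 200 of the 1,200 treatment conversions were incremental, or one in six.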

Optimization Process

Optimization is not “tweaking until it feels better.” It’s a loop: diagnose, propose one change, run long enough to get signal, then either lock it in or roll it back. The fastest accounts aren’t the ones making the most changes; they’re the ones making the fewest changes that clearly matter.

1) Set a Weekly Cadence That Matches How Platforms Learn

If you change settings every day, you guarantee unstable performance because you keep forcing the system back into exploration. TikTok explicitly lists major changes that can trigger learning again in its Learning Phase documentation, which is a helpful way to explain to stakeholders why you’re not going to “just adjust the targeting real quick” five times a week.

A steady cadence usually looks like this: daily monitoring for breakages, twice-weekly creative decisions, and weekly structural decisions. That keeps your account stable while still moving fast.

2) Triage With a Simple Diagnostic Order

When performance drops, start with the obvious and move toward the complex. First check tracking and conversion volumes. Then check creative fatigue and frequency. Then check auction pressure (CPM shifts, delivery changes). Only after that should you change structure or objectives.

If your creative is fading, TikTok’s help center encourages refreshing when results consistently decline and explicitly frames creative supply as a way to keep campaigns healthy. TikTok’s creative best practices for evaluation and optimization is a practical reference for that mindset.

3) Test One Variable at a Time With a Clear Success Metric

Tests fail when you change too much at once. If you change audience, creative, landing page, and bidding together, the result teaches you nothing.

When you do need a clean test, use built-in experimentation tools where available. Meta also publishes developer and partner documentation, such as the Advantage+ shopping campaign API documentation and its event deduplication guidance, that spells out what the platform expects from campaign setup and event pipelines. Even when you’re not using the API directly, that documentation clarifies what stable inputs look like.
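Whatever tool runs the test, it helps to know how a “clear winner” is usually judged. This minimal sketch applies a two-sided, two-proportion z-test to conversion rate, for a test where only one variable differs between arms. It is a standard technique swapped in for illustration; platform experiment tools may use different statistics.

```python
from __future__ import annotations

import math


def two_proportion_z_test(conv_a: int, n_a: int, conv_b: int, n_b: int) -> tuple[float, float]:
    """Two-sided z-test for a difference in conversion rates (pooled variance).

    Returns (z, p_value). Uses a normal approximation, so it is unreliable
    when either arm has very few conversions.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        return 0.0, 1.0  # no variation at all (e.g. zero conversions in both arms)
    z = (p_b - p_a) / se
    # Two-sided p-value: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return z, p_value
```

Take 100 versus 160 conversions on 10,000 users per arm: z is about 3.7 and the p-value is well under 0.01. With identical rates, the p-value is 1.0. Pick the threshold and minimum sample before launch, not after looking at the numbers.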

4) Scale Like a Risk Manager, Not Like a Gambler

Scaling is where most paid social media marketing accounts get hurt because the team confuses a good week with a proven system. The safe approach is incremental increases, with clear guardrails: what metric triggers a pause, what metric justifies more spend, and what metric means you need new creative before you push budget again.
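Those guardrails can be written as explicit rules. In this minimal sketch, every threshold is a hypothetical default chosen for illustration, not a platform recommendation. That covers the 30-conversion minimum before acting, the 20% budget step, and the 25% CTR drop used as the fatigue trigger.

```python
from dataclasses import dataclass


@dataclass
class WeekResult:
    spend: float
    conversions: int
    ctr: float           # this week's click-through rate
    baseline_ctr: float  # the concept's historical CTR


@dataclass
class Guardrails:
    target_cpa: float
    pause_cpa: float                # CPA that triggers a pause
    min_conversions: int = 30       # hypothetical minimum volume before scaling
    step_up: float = 0.20           # hypothetical max budget increase per step
    fatigue_ctr_drop: float = 0.25  # hypothetical CTR drop that calls for new creative


def next_action(week: WeekResult, g: Guardrails, budget: float) -> tuple:
    if week.conversions == 0:
        # Spending past the pause CPA with nothing to show is already over the line.
        if week.spend > g.pause_cpa:
            return "pause", 0.0
        return "hold", budget
    cpa = week.spend / week.conversions
    if cpa > g.pause_cpa:
        return "pause", 0.0
    if week.conversions < g.min_conversions:
        return "hold", budget  # not enough signal to justify more spend
    if week.ctr < week.baseline_ctr * (1 - g.fatigue_ctr_drop):
        return "refresh_creative", budget  # fix creative before pushing budget
    if cpa <= g.target_cpa:
        return "scale", round(budget * (1 + g.step_up), 2)
    return "hold", budget
```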

If leadership wants more certainty than attribution can provide, that’s the moment to run incrementality work. TikTok positions lift testing as a way to quantify true impact in its Conversion Lift Study announcement, and independent experimentation platforms summarize why lift tests often change budget decisions in analyses like Haus’s learnings from incrementality experiments.

Implementation Stories

The most useful implementation stories aren’t “we ran ads and numbers went up.” They’re stories where the team needed proof, hit a wall, then used a disciplined paid social media marketing process to earn the right to scale.

Quay and Monks: Proving Incrementality Before Scaling Hard

The pressure wasn’t subtle. Quay wanted to scale TikTok while keeping cost-per-acquisition goals intact, and the team needed to show the growth was real, not just platform attribution taking a victory lap. When the stakes are budget expansion and bottom-line performance, “trust the dashboard” stops being a strategy.

The backstory is that Quay already had a strong TikTok presence and wanted to push deeper into lower-funnel performance outcomes like search behavior, add-to-cart, and purchases. Their agency partner, Monks, was brought into a mission that sounds simple but rarely is: methodically scale investments while protecting CPA and proving value across the customer journey. TikTok’s own write-up, Quay’s TikTok Conversion Lift Study case study, frames the objective as validating incremental outcomes and scaling with confidence.

The wall was measurement credibility. Lower-funnel campaigns can show conversions, but the hardest question is whether those conversions were caused by ads or would have happened anyway. Without an incrementality approach, scaling can feel like driving faster while the windshield is fogging up.

The epiphany was to treat measurement as the unlock, not the afterthought. Quay and Monks collaborated with TikTok to execute a Conversion Lift Study that split audiences into treatment and control groups, designed to measure incremental impact directly. The mechanics of the method are spelled out in TikTok’s Conversion Lift Study overview.

The journey combined disciplined execution with creative operations. In the solution section of Quay’s case study, TikTok reports the team ran Catalog Ads Retargeting, Web Conversion, and Smart+ Web Campaigns, and refreshed creatives every 7–14 days to stay relevant. That “creative cadence” detail matters because it turns the story from a one-off win into a repeatable operating model.

The final conflict was that lift testing doesn’t just prove value—it forces clarity. Once you can quantify incremental lift, every future decision gets sharper: what to scale, what to refresh, what to stop funding, and what to measure next. Teams that aren’t ready for that clarity often drift back into “more spend, more ads” behavior because it feels productive.

The dream outcome was earned: measurable lift across the consumer journey plus the confidence to keep investing. In the results section of Quay’s case study, TikTok reports a 417% lift in search, a 70% lift in add-to-cart, and a 54% lift in purchases among the treatment group, alongside surpassing ROAS targets by 91%, with the study dated July–August 2024. That’s what professional paid social media marketing looks like when the system is built to prove impact, not just report activity.

Statistics and Data

Analytics is where paid social media marketing stops being “opinions in a meeting” and becomes a decision engine. But your dashboards are only as useful as the context around them, which is why you want a small set of numbers that explain the market and a tighter set of numbers that explain your account.

Start with the market reality: scale keeps growing, and so does the competition for attention. $88.8B in U.S. social media advertising revenue in 2024 shows how much money is competing for feed inventory, and €118.9B in European digital ad market revenue for 2024 helps explain why even “stable” categories feel crowded.

Then look at what’s happening inside paid social specifically. A lot of performance teams felt a shift toward more efficiency through 2025, reflected in trend reports that track large cross-platform datasets. Skai’s Q4 2025 report highlights paid social spend up 9% year over year with clicks up 23% and CPMs down 6%, and the Q1 2025 report captures the earlier phase of stabilization where paid social CPMs rose 5% while volume declined.

Benchmark datasets are useful because they stop you from misdiagnosing normal market movement as an account failure. Tinuiti’s Q3 2025 benchmark write-up, based on the firm’s managed spend, reports Meta impressions up 16% year over year with CPM down 2%, and calls out how platform inventory shifts (like Reels) can change delivery patterns without you touching anything.

Performance Benchmarks

Benchmarks are not targets. They’re guardrails that help you separate “the market changed” from “our execution broke.” In paid social media marketing, you want a small set of benchmarks that answer three practical questions: are we buying attention efficiently, are we converting that attention into outcomes, and are the outcomes real (incremental) or just credited?

Auction Benchmarks: CPM, CPC, and Click Volume

CPM is the temperature of the auction. When CPM moves, it can be demand (more advertisers), supply (more or less inventory), or both. When CPC moves, it’s usually a mix of CPM and how compelling your creative is to the people seeing it.
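The CPC relationship can be made exact. CPM is spend per 1,000 impressions and CTR is clicks per impression, so:

$$
\text{CPC} = \frac{\text{spend}}{\text{clicks}} = \frac{\text{spend}/\text{impressions}}{\text{clicks}/\text{impressions}} = \frac{\text{CPM}/1000}{\text{CTR}} = \frac{\text{CPM}}{1000 \times \text{CTR}}
$$

For example, a $10 CPM at a 1% CTR gives a $1.00 CPC. A 10% CPM increase with flat CTR raises CPC by 10%. The same CPM increase paired with a 10% better CTR leaves CPC unchanged.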

The point of a benchmark is to avoid panic edits. If your CPM rises 10% but the market trend is rising too, you don’t tear down your structure; you focus on creative efficiency and conversion rate. That’s why it helps to keep a running market view from sources like Skai, including the Q3 2025 takeaway that paid social spend increased 11% year over year with clicks and impressions up 18% while CPMs declined 5%, and the Q4 2025 summary where clicks rose 23% as CPMs fell 6%.

Engagement Benchmarks: CTR and Creative Fatigue Signals

CTR is not a vanity metric when you treat it like a creative diagnostic rather than a scoreboard. A sudden CTR drop often signals that the audience is tired of the message, the hook isn’t landing, or the placement mix shifted into environments where your creative is weaker.

You don’t need one universal CTR number, because different placements behave differently. What you need is your baseline by placement and your baseline by creative concept, then an early-warning system when a concept starts decaying. Trend datasets can help you stay grounded; Skai’s Q1 2025 report notes CTR improvements in retail media, and Tinuiti’s benchmarks repeatedly emphasize impression and CPM movement that can change click volume even without creative changes, including the Q3 2025 note that Meta impressions rose 16% while CPM fell 2%.
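An early-warning check per concept and placement might look like the sketch below. It assumes you can export daily CTR and frequency for one creative concept. The 7-day baseline, 3-day recent window, 20% CTR-drop threshold, and frequency ceiling of 3 are hypothetical defaults to tune per account.

```python
from __future__ import annotations

from statistics import mean


def fatigue_flags(daily_ctr: list[float], daily_frequency: list[float],
                  baseline_days: int = 7, recent_days: int = 3,
                  max_ctr_drop: float = 0.20, max_frequency: float = 3.0) -> list[str]:
    """Early-warning check for one creative concept in one placement.

    Compares the concept's last `recent_days` of CTR with its first
    `baseline_days`. All thresholds are hypothetical defaults.
    """
    if len(daily_ctr) < baseline_days + recent_days:
        return ["not enough history"]
    baseline = mean(daily_ctr[:baseline_days])
    recent = mean(daily_ctr[-recent_days:])
    flags = []
    if recent < baseline * (1 - max_ctr_drop):
        flags.append(f"CTR down {1 - recent / baseline:.0%} vs baseline")
    if mean(daily_frequency[-recent_days:]) > max_frequency:
        flags.append("frequency above ceiling")
    return flags
```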

Outcome Benchmarks: CPA, ROAS, and Conversion Rate

CPA and ROAS only become meaningful when they’re tied to a conversion definition that matches business reality. If your “purchase” event is inconsistent or your lead event isn’t qualified, a perfect CPA can still be a bad week for the business.

For many teams, the most useful benchmark isn’t a single CPA number. It’s the relationship between CPM, CTR, CVR, and AOV. When you track those together, you can see whether a CPA spike is coming from the auction, the creative, the landing page, or product economics.
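Because CPA = CPM / (1000 × CTR × CVR) and ROAS = AOV / CPA, a CPA change can be split into auction, creative, and landing-page parts. This minimal sketch uses a log-ratio decomposition, one reasonable way to attribute a multiplicative change, chosen here for illustration. The class and function names are hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass
class Funnel:
    cpm: float  # cost per 1,000 impressions
    ctr: float  # clicks / impressions
    cvr: float  # conversions / clicks
    aov: float  # revenue / conversion

    @property
    def cpa(self) -> float:
        # CPA = spend / conversions = (CPM / 1000) / (CTR * CVR)
        return self.cpm / (1000 * self.ctr * self.cvr)

    @property
    def roas(self) -> float:
        # ROAS = revenue / spend = AOV / CPA
        return self.aov / self.cpa


def explain_cpa_change(before: Funnel, after: Funnel) -> dict[str, float]:
    """Split the log change in CPA into CPM, CTR and CVR parts.
    The three parts sum to the total (up to floating-point rounding)."""
    return {
        "auction_cpm": math.log(after.cpm / before.cpm),
        "creative_ctr": -math.log(after.ctr / before.ctr),
        "landing_cvr": -math.log(after.cvr / before.cvr),
        "total": math.log(after.cpa / before.cpa),
    }
```

Suppose CPM and CTR stay fixed while CVR falls from 4% to 3%. CPA moves from $25 to about $33, and the whole log change lands in `landing_cvr`, which points you at the page rather than the auction.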

Incrementality Benchmarks: Lift, Not Just Credit

Platform attribution is built for optimization, not for proving causality. Incrementality methods exist because leadership eventually asks the only question that matters: what would have happened without the ads?

Meta positions conversion lift testing as a way to measure incremental impact across Meta platforms, and TikTok frames lift as a standardized way to measure causal impact in its Conversion Lift Study overview. When you can’t run lift constantly, you run it periodically to calibrate your day-to-day attribution.
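One common way to use a periodic lift test for calibration is to turn it into a factor applied to daily platform numbers. This minimal sketch assumes the factor measured in the test window stays roughly stable until the next test, an assumption worth re-checking every time you rerun lift. The class and method names are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Calibration:
    """Hypothetical calibration from one lift-test window."""

    incremental_conversions: float          # measured by the lift test
    platform_attributed_conversions: float  # credited by the platform, same window

    @property
    def factor(self) -> float:
        # Share of credited conversions the test says the ads actually caused.
        return self.incremental_conversions / self.platform_attributed_conversions

    def incremental_cpa(self, spend: float, attributed_conversions: float) -> float:
        # Scale today's credited conversions by the factor, then price them.
        return spend / (attributed_conversions * self.factor)

    def incremental_roas(self, attributed_revenue: float, spend: float) -> float:
        return attributed_revenue * self.factor / spend
```

Suppose a test found 600 incremental conversions while the platform credited 1,000 over the same window. The factor is then 0.6. A later week with $10,000 spend and 400 credited conversions has an incremental CPA of about $41.67, not the $25 the dashboard shows.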

Analytics Interpretation

Interpreting paid social media marketing analytics is less about reading numbers and more about reading stories the numbers are trying to tell. A dashboard can show 20 metrics, but only a few of them explain what changed and what to do next.

Use Three Views of Truth

- Optimization view: platform reporting is fast and directional. It helps you make creative and delivery decisions quickly, even if it can over-credit.

- Business view: your backend source of truth (orders, revenue, qualified leads) keeps you honest, especially when platform reporting looks too good to be true.

- Causality view: incrementality work (lift tests, holdouts, MMM) tells you whether performance is real impact or just re-attribution.

This three-view model keeps you from swinging between extremes. You don’t ignore platform reporting, but you also don’t let it be the only judge. That’s the same logic behind why MMM and scenario planning are being pushed closer to marketers; Google’s Meridian ecosystem is explicitly trying to turn MMM into decisions with tools like Scenario Planner, with coverage reinforcing the “decision gap” problem in outlets like Search Engine Land and MediaPost.

Diagnose in a Fixed Order So You Don’t Thrash the Account

When a result changes, keep your diagnosis order consistent:

- First: tracking integrity. If events dropped, deduplication broke, or consent settings changed, your “performance” may be a measurement artifact.

- Second: auction movement. If CPM shifted across the market, your cost pressure may not be creative-driven.

- Third: creative behavior. Look for fatigue signals (declining CTR, rising frequency, worsening thumb-stop metrics) before touching structure.

- Fourth: conversion mechanics. If CTR is stable but CPA spikes, your issue is often landing page, offer clarity, price, or checkout friction.

- Fifth: incrementality calibration. If nothing explains the change, it may be attribution drift, which is when lift testing pays for itself.
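The fixed order translates naturally into code. In this minimal sketch, the snapshot fields, the 10% tolerance, and each rule are hypothetical simplifications of the five steps above. Treat it as a checklist you can run, not an automated decision-maker.

```python
from dataclasses import dataclass


@dataclass
class Snapshot:
    """Week-over-week changes as fractions (0.10 = +10%). Field names are hypothetical."""

    tracked_vs_backend_gap: float  # (platform-tracked - backend conversions) / backend
    dedup_or_consent_changed: bool
    account_cpm_change: float
    market_cpm_change: float       # from a benchmark source
    ctr_change: float
    frequency_change: float
    cpa_change: float


def triage(s: Snapshot, tolerance: float = 0.10) -> str:
    # 1. Tracking integrity: a measurement break can look like a performance drop.
    if s.dedup_or_consent_changed or abs(s.tracked_vs_backend_gap) > tolerance:
        return "tracking: verify events, deduplication and consent first"
    # 2. Auction: our CPM rose roughly in line with the market.
    if (s.account_cpm_change > tolerance
            and abs(s.account_cpm_change - s.market_cpm_change) <= tolerance):
        return "auction: market-wide CPM shift; focus on creative efficiency and CVR"
    # 3. Creative: falling CTR with rising frequency suggests fatigue.
    if s.ctr_change < -tolerance and s.frequency_change > 0:
        return "creative: refresh the fatigued concept"
    # 4. Conversion mechanics: stable CTR but worse CPA points past the click.
    if abs(s.ctr_change) <= tolerance and s.cpa_change > tolerance:
        return "conversion: check landing page, offer, price and checkout"
    # 5. Nothing above explains it: possible attribution drift.
    return "calibration: consider a lift test"
```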

This is where professional paid social media marketing feels calmer than amateur work. You’re not “trying stuff.” You’re narrowing the cause before you change anything.

Translate Metrics Into Stakeholder Language

Most stakeholders don’t care about CTR. They care about growth, risk, and predictability. Your job is to translate metrics into those terms: what changed, what it means for revenue or pipeline, and what you’re doing next.

That translation matters more in a world of flat budgets. Marketing leaders continue to report pressure to deliver efficiency, reflected in marketing budgets holding at 7.7% of company revenue in 2025 and the broader discussion about productivity in coverage like ANA’s write-up.

Case Stories

Case stories are useful when they show how analytics changed decisions, not when they just list results. The best ones include the messy part: uncertainty, measurement doubt, and the moment the team realized the dashboard wasn’t enough.

Hummel: When the Dashboard Wasn’t Enough, Lift Testing Unlocked the Next Budget

The numbers were moving, but nobody trusted them. The team saw conversions in their reports, yet the leadership conversation kept circling back to the same suspicion: “Are we driving new demand, or just getting credit?” Every week the pressure increased, because scaling paid social media marketing without trust is like stepping on the gas with your eyes closed.

The backstory was a brand trying to grow efficiently in a competitive market where customers bounce between channels. Meta campaigns were part of the plan, but the team needed a way to show impact beyond what standard attribution could comfortably prove. Meta’s conversion lift testing overview positions conversion lift as the method to measure incremental outcomes, which was exactly the gap they were facing.

The wall hit when reporting created more debate than clarity. If a channel is only defended with platform-attributed ROAS, budget approvals become fragile, and teams start optimizing for what gets credit rather than what creates growth. That’s the moment where strong execution still feels stuck, because the organization can’t agree on what “working” really means.

The epiphany was to stop asking analytics to do a job it wasn’t designed to do. Instead of trying to “explain” attribution, the team moved toward a causality approach with a conversion lift test that isolates incremental outcomes. Hummel’s Meta success story makes the intent explicit: measure incremental impact rather than rely on credited conversions.

The journey was disciplined: run the campaign, hold out a control group, and let the study do what dashboards can’t. That approach created a clean narrative the business could understand: people exposed to ads behaved differently than people who weren’t, and the difference was measurable. The results from Hummel’s conversion lift test revealed lifts that connected paid social media marketing to real demand signals and purchases, not just clicks.

The final conflict was operational, not analytical. Once you prove incrementality, you also inherit a higher standard: leaders expect you to use that proof to plan budgets and creative strategy more intentionally, not just celebrate a win. The team now had to keep measurement credibility intact while maintaining creative supply and managing auction volatility.

The dream outcome was a budget conversation that finally matched reality. Hummel’s reported lift test results include a 20% lift in purchases, alongside lifts in search-related behaviors and traffic, which gave leadership a reason to keep investing with confidence. That’s what a mature paid social media marketing program looks like: not “better attribution,” but better truth.

Professional Promotion

“Promotion” in paid social media marketing isn’t about bragging. It’s about making your work legible to the people who control budgets: founders, CMOs, finance partners, and clients who have been burned by vague reporting before.

The cleanest professional promotion strategy is to package your analytics into proof that feels hard to argue with:

- Lead with the business outcome: revenue, qualified pipeline, purchases, registrations, or whatever the business actually values.

- Explain the drivers in plain language: what changed in the auction, what changed in creative response, and what changed in conversion behavior.

- Show how you validated reality: reference a causality method when it exists, like conversion lift approaches described in TikTok’s lift study overview or Meta’s conversion lift testing model.

- Use a benchmark to set expectations: market trend references like Skai’s paid social efficiency shift in Q4 2025 help stakeholders understand what’s “normal” versus what’s unique to your account.

- Close with the next decision: what you recommend doing next week and why that decision is the best risk-adjusted move.

If you do that consistently, your reporting becomes a sales asset. It becomes the reason a client renews, the reason a founder greenlights a budget increase, and the reason your paid social media marketing work gets remembered when the next big project needs a trusted operator.

Advanced Strategies

Once the basics are stable, advanced paid social media marketing becomes a game of leverage. You stop asking “what tweak improves performance?” and start asking “what change improves the whole system?” That typically means leaning into automation where it’s earned, tightening your measurement so you can scale without hallucinating results, and building a creative machine that can outlast fatigue.

Use automation like a power tool, not autopilot. Platforms are packaging more of the auction into AI-driven products, and the upside is real when inputs are clean. TikTok frames Smart+ as a way to automate campaign and audience optimization across performance goals in its Smart+ Campaigns overview, and LinkedIn positions Accelerate as AI that finds the right mix of targeting, creative, bidding, and placements, with reported improvements including up to 42% lower cost per action.

Calibrate “credit” with causality. The easiest way to overspend is to scale based on attribution that’s drifting. Incrementality work exists to answer the hard question directly, and it keeps scaling decisions honest. Haus summarizes learnings from a large dataset of tests, including that Meta drove an average of about 19% lift to brands’ primary KPI across experiments, which is exactly the kind of input that changes whether you keep funding prospecting or shift spend elsewhere.
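A lift readout comes down to a few ratios. The sketch below is a simplified illustration, not Meta’s, TikTok’s, or Haus’s actual methodology. It assumes a randomized holdout and uses made-up counts. It also skips the confidence intervals a real study would report. The hypothetical `incrementality_factor` compares causal conversions with platform-credited conversions, which is one way to put a number on attribution drift.

```python
def lift_summary(test_users: int, test_conv: int,
                 control_users: int, control_conv: int,
                 attributed_conv: int) -> dict[str, float]:
    """Summarize a holdout test (simplified; no significance testing)."""
    test_rate = test_conv / test_users
    control_rate = control_conv / control_users
    # Relative lift: how much more often exposed users converted than holdout users.
    lift = (test_rate - control_rate) / control_rate
    # Conversions the test group would be expected to produce without ads,
    # estimated by applying the control group's rate to the test group's size.
    baseline = control_rate * test_users
    incremental = test_conv - baseline
    return {
        "lift": lift,
        "incremental_conversions": incremental,
        # Fraction of platform-credited conversions the test supports as causal.
        "incrementality_factor": incremental / attributed_conv,
    }


# Hypothetical 90/10 test/control split with 3,000 platform-attributed conversions.
summary = lift_summary(test_users=900_000, test_conv=5_400,
                       control_users=100_000, control_conv=500,
                       attributed_conv=3_000)
print(f"lift: {summary['lift']:.1%}")
print(f"incremental conversions: {summary['incremental_conversions']:.0f}")
print(f"incrementality factor: {summary['incrementality_factor']:.2f}")
```

With these invented numbers the lift is 20% and the test group produced about 900 incremental conversions. Those 900 are only 0.30 of the 3,000 platform-credited conversions, so scaling on attributed results alone would overstate the causal return by roughly 3x.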

Plan budgets with scenarios, not vibes. When you’re scaling beyond “we have a winner,” you need to know what happens if you shift 10–20% of spend across channels, not just what happened last week. MMM and scenario planning are moving closer to practitioners, reflected by Google’s push to make MMM decisions easier with tools like Scenario Planner for Meridian.
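To make "what happens if you shift 10–20% of spend" concrete, here is a minimal scenario sketch. It assumes each channel follows a Hill-style diminishing-returns curve, conv(spend) = max_conv · spend^s / (spend^s + half_sat^s). Every parameter is invented for illustration. The code is not Meridian’s API and does not reproduce its model, which estimates curves and carryover effects from data.

```python
from dataclasses import dataclass


@dataclass
class Channel:
    """Hypothetical diminishing-returns curve for one channel."""
    name: str
    max_conv: float      # weekly conversions the curve approaches at very high spend
    half_sat: float      # weekly spend at which half of max_conv is reached
    shape: float = 1.0   # >1 gives an S-curve; 1.0 gives a simple concave curve

    def conversions(self, spend: float) -> float:
        if spend <= 0:
            return 0.0
        s = spend ** self.shape
        return self.max_conv * s / (s + self.half_sat ** self.shape)


def shift_scenario(src: Channel, dst: Channel, budgets: dict[str, float], pct: float) -> float:
    """Move `pct` of src's budget to dst and return the pair's total conversions."""
    moved = budgets[src.name] * pct
    return src.conversions(budgets[src.name] - moved) + dst.conversions(budgets[dst.name] + moved)


# Made-up parameters: search is assumed to be closer to saturation than paid social.
paid_social = Channel("paid_social", max_conv=2_000, half_sat=50_000)
search = Channel("search", max_conv=1_500, half_sat=10_000)
budgets = {"paid_social": 60_000, "search": 40_000}

for pct in (0.0, 0.10, 0.20):
    total = shift_scenario(search, paid_social, budgets, pct)
    print(f"shift {pct:.0%} of search to paid social: {total:,.1f} conversions/week")
```

With these curves, moving 10% of the search budget to paid social adds a few conversions per week (about 2,291 to about 2,297). Moving 20% gives some of that gain back (about 2,295). A scenario planner is meant to surface this kind of trade-off before you commit the budget.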

Build a creative portfolio, not one hero ad. Scaling usually fails because a single concept can’t carry a bigger budget without fatiguing. Advanced teams run a portfolio: different angles, formats, creators, and proof types that appeal to different pockets of the audience, so delivery can expand without collapsing efficiency.

Scaling Framework

Scaling paid social media marketing is easiest when you treat it like a staged process with guardrails. Each stage has a different job: prove repeatability, increase volume safely, then protect profitability while you expand into new inventory and audiences.

Stage 1: Validate Repeatability

This stage is about confidence, not growth. You keep structure steady long enough to learn, and you only scale what shows repeatable performance across multiple creative batches, not a single spike.

When stakeholders push for rapid changes, it helps to anchor decisions in how platforms learn. TikTok is explicit that significant edits can restart learning in its learning phase guidance, which is why a disciplined cadence often outperforms constant “optimizing.”
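"Repeatable across multiple creative batches" can be turned into an explicit check so one lucky week cannot trigger a scale-up. The rule below is a hypothetical rule of thumb, not a platform standard. It requires several batches at or under the CPA target and a modest spread in CPA across batches, measured as the coefficient of variation. Both `min_batches` and `max_cv` are placeholder thresholds.

```python
from statistics import mean, pstdev


def is_repeatable(batch_cpas: list[float], target_cpa: float,
                  min_batches: int = 3, max_cv: float = 0.25) -> bool:
    """Return True when CPA holds up across several creative batches.

    Requires at least `min_batches` batches at or under target, plus a
    coefficient of variation (std / mean) no larger than `max_cv`.
    Both thresholds are placeholders to tune per account.
    """
    if len(batch_cpas) < min_batches:
        return False
    hits = sum(1 for cpa in batch_cpas if cpa <= target_cpa)
    cv = pstdev(batch_cpas) / mean(batch_cpas)
    return hits >= min_batches and cv <= max_cv


print(is_repeatable([42, 47, 45, 51], target_cpa=50))  # True: steady batches
print(is_repeatable([18, 70, 85], target_cpa=50))      # False: one spike, then misses
```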

Stage 2: Increase Volume Without Breaking Signal

At this point you’re scaling spend, but your real goal is scaling high-quality signals. That means protecting conversion definitions, keeping event quality stable, and adding creative supply before performance forces you to.

Automation products tend to work best here because the algorithm has enough signal to make good decisions. TikTok’s Smart+ Web Campaigns are positioned as AI-assisted setup and optimization for website conversion goals in the Smart+ Web Campaigns overview, and LinkedIn markets Accelerate as a faster path to high-performing campaigns on its Accelerate launch page.
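One way to scale volume without constant edits is to write the budget decision down as a rule that runs once per review cycle. The sketch below is hypothetical. The 15% step, the 10% tolerance band, and the 50-conversion minimum are placeholder values, not platform guidance. What counts as a learning-resetting "significant edit" also differs by platform, so check each platform’s documentation before choosing a step size.

```python
def next_budget(budget: float, weekly_conversions: int, cpa: float, target_cpa: float,
                step: float = 0.15, tolerance: float = 0.10,
                min_conversions: int = 50) -> float:
    """Decide the next weekly budget for one campaign (hypothetical rule).

    - Too few conversions: hold, because the CPA read is not trustworthy yet.
    - CPA within `tolerance` of target: step the budget up by `step`.
    - CPA more than twice the tolerance above target: step down by `step`.
    - Otherwise (warning band): hold and investigate before editing.
    """
    if weekly_conversions < min_conversions:
        return budget
    if cpa <= target_cpa * (1 + tolerance):
        return round(budget * (1 + step), 2)
    if cpa > target_cpa * (1 + 2 * tolerance):
        return round(budget * (1 - step), 2)
    return budget


print(next_budget(1_000, weekly_conversions=80, cpa=48, target_cpa=50))  # 1150.0
print(next_budget(1_000, weekly_conversions=30, cpa=40, target_cpa=50))  # 1000
print(next_budget(1_000, weekly_conversions=80, cpa=56, target_cpa=50))  # 1000
print(next_budget(1_000, weekly_conversions=80, cpa=65, target_cpa=50))  # 850.0
```

The warning band between "step up" and "step down" is deliberate. It gives the team room to diagnose a soft week without making an edit that could reset learning.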

Stage 3: Protect Efficiency While You Expand

Now the problem changes: scaling exposes weaker placements, weaker audience pockets, and weaker creative angles. If you don’t manage this stage, you’ll see the classic pattern where spend rises, efficiency drops, and the team starts blaming the platform.

In this stage, you scale by expanding the creative portfolio, improving landing page conversion rate, and using incrementality work to confirm you’re creating demand rather than harvesting it. This matters for a practical reason: budget pressure is real, with marketing budgets holding flat at 7.7% of company revenue in 2025, so “we need more spend” is rarely persuasive unless you can show scalable impact.

Growth Optimization

Growth optimization is what you do after you’ve proven you can scale. It’s about improving the whole unit economics loop so paid social media marketing becomes a durable growth engine, not a fragile performance trick.

Optimize the System, Not Just the Ads

- Creative throughput: if you want stable scaling, your creative system must outpace fatigue. A weekly pipeline beats “we’ll make new ads when performance drops.”

- Conversion rate and offer clarity: scaling spend into a weak landing experience usually multiplies wasted clicks. When CPA rises while CTR stays stable, the fix is often on-site.

- Budget allocation discipline: use scenarios to decide where the next dollar should go, not only where last-click credit was assigned. Planning tools and MMM workflows are increasingly framed as decision aids, reflected by pushes like Meridian’s Scenario Planner.

- Causality checkpoints: schedule periodic lift work so your scaling doesn’t drift into over-crediting. TikTok’s lift framework is built for this calibration in its Conversion Lift Study overview, and Meta’s experimentation framing is explored in research discussing lift and A/B test interpretation at scale in a 2025 paper on lift testing at Meta.

One useful way to keep this grounded is to track macro efficiency signals. Skai’s Q4 2025 trend report highlights a period where paid social saw spend up 9% with clicks up 23% and CPMs down 6%, which is a reminder that market conditions can improve or worsen independently of your account. Growth optimization means you notice those shifts quickly and decide what to do with them.
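Headline trend numbers can be unpacked into the metrics you actually manage. The short calculation below uses only the three Skai figures quoted above (spend +9%, clicks +23%, CPMs −6%). It assumes all three describe changes in aggregate totals over the same comparison window. The derived impression, CPC, and CTR shifts are this article’s own arithmetic, not figures from the report.

```python
# Period-over-period ratios from the quoted Skai Q4 2025 paid social figures.
spend_ratio = 1.09   # spend +9%
clicks_ratio = 1.23  # clicks +23%
cpm_ratio = 0.94     # CPM -6%

# Derived shifts (our arithmetic, assuming the figures are aggregate totals).
impressions_ratio = spend_ratio / cpm_ratio    # impressions = spend / CPM * 1000
cpc_ratio = spend_ratio / clicks_ratio         # CPC = spend / clicks
ctr_ratio = clicks_ratio / impressions_ratio   # CTR = clicks / impressions

for name, ratio in [("impressions", impressions_ratio), ("CPC", cpc_ratio), ("CTR", ctr_ratio)]:
    print(f"{name}: {ratio - 1:+.1%}")
```

Under that assumption the market delivered roughly 16% more impressions, about 11% cheaper clicks, and about a 6% higher CTR. If your own account moved very differently over the same window, the cause is more likely inside the account than in the market.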

Scaling Stories

Scaling stories only matter when they reveal what changed inside the system. The real lesson is rarely “we spent more.” The lesson is usually “we built enough trust and enough creative throughput to handle more spend without losing control.”

Calendly: Scaling Lead Gen Faster by Letting AI Handle the Auction

The moment felt like a trap. Demand was there, the team wanted more pipeline, and every meeting ended with the same ask: “Can we scale LinkedIn without watching cost per lead explode?” The pressure wasn’t just performance pressure; it was credibility pressure, because a bad scaling decision would be visible to everyone who owned revenue.

The backstory is familiar to any B2B marketer. LinkedIn works, but scaling can be slow when the team spends too much time on manual setup, testing every lever, and debating whether results are plateauing because of creative, targeting, or auction shifts. That’s the environment where automation sounds tempting, but only if it can keep efficiency intact.

The wall hit when the usual playbook stopped feeling dependable. Small changes weren’t producing clear learnings, and the team risked falling into the cycle where “optimization” becomes frequent editing without clarity. Scaling spend without a stronger system started to look like the fastest way to create noise rather than pipeline.

The epiphany was accepting that the constraint wasn’t effort, it was leverage. Instead of fighting the auction manually, the team leaned into LinkedIn Accelerate, which is designed to let AI find the right mix of targeting, creative, bidding, and placements. LinkedIn summarizes the premise and the performance claim on the Accelerate product page, and it explains the operating model in its Accelerate FAQ.

The journey was about freeing the team to do higher-value work. With more of the auction mechanics automated, the work shifted toward better inputs: tighter messaging, stronger creative iterations, and cleaner post-click experiences. That’s what advanced paid social media marketing looks like in practice: less time “building campaigns” and more time improving the parts humans are actually best at.

The final conflict was trust. Automation can feel like surrendering control, and stakeholders often worry they won’t understand what’s driving results. LinkedIn’s own reporting on Accelerate covers both efficiency and performance, including a quoted 3X lift in lead form completion rate and 66% lower cost per lead for Calendly, which gave the team a way to communicate value without hiding behind vague claims.

The dream outcome was scale without chaos. The team could push harder on spend while keeping a clear narrative: AI handled more of the delivery optimization, and the humans focused on better inputs and better conversion paths. That’s the kind of story clients remember, because it’s not “we ran ads,” it’s “we built a system that could safely grow.”

Future Trends

Paid social media marketing is moving into a period where “better targeting” matters less than “better inputs.” The auction is still the auction, but what wins inside it is shifting: stronger creative, cleaner first-party signals, and measurement that can survive privacy constraints without turning into guesswork.

Platform automation will keep swallowing complexity. Instead of manually stitching together audiences, bids, and placements, more teams will treat campaign setup as a way to feed the algorithm high-quality ingredients. Products like TikTok Smart+ Campaigns and LinkedIn Accelerate are clear signals of where platforms want practitioners to spend their time: creative, offers, and conversion paths.

Incrementality will become a normal budget conversation, not a niche one. When dashboards over-credit, scaling becomes risky. Lift studies are being positioned as the practical answer for causal impact, with frameworks like TikTok’s Conversion Lift Study and Meta’s conversion lift approach turning “is it real?” into something you can actually test.

MMM will move from “data science project” to “planning habit.” The big shift isn’t that marketing mix modeling exists; it’s that teams are finally getting tools that translate models into decisions. That’s the point of Google’s Meridian ecosystem and its no-code planning layer, described in Google’s Scenario Planner announcement and documented for implementation in the Meridian Scenario Planner docs.

Creative supply will become the main scaling bottleneck. If performance drops the moment frequency rises, you don’t have a media problem—you have a creative throughput problem. That’s why more teams will treat creative production like an operating system: briefs, sprints, iteration cadences, and a portfolio of angles that can carry spend without collapsing.

Budget pressure will keep rewarding disciplined operators. When budgets are flat, teams don’t get rewarded for clever tactics; they get rewarded for predictable growth and honest measurement. That context matters: marketing budgets held flat at 7.7% of company revenue in 2025, even as forecasts like WARC’s project global ad spend reaching $1.24T by 2026.

Strategic Framework Recap

If you remember only one thing, let it be this: paid social media marketing performs best when it’s treated like a system, not a set of tricks. Strategy decides what you’re trying to change in the market. Creative decides whether anyone cares. Measurement decides whether you’re seeing truth or just credit. Tools and workflows keep it all moving even when the team is tired.

The practical loop looks like this:

- Clarity first: one objective, one primary conversion, and one clear definition of success that matches how the business makes money.

- Inputs over micromanagement: let automation handle delivery while you obsess over creative, offers, and conversion rate.

- Creative as a portfolio: multiple angles, formats, and proof types so you can scale without being held hostage by fatigue.

- Truth with guardrails: platform reporting for speed, backend metrics for reality, and periodic lift/MMM work to calibrate causality.

- Scale responsibly: add creative supply, expand incrementally, and protect signal quality so growth doesn’t turn into noise.

This is why the best operators look “calm” while everyone else looks busy. They aren’t doing more. They’re doing the right things in the right order.

FAQ for the Complete Guide

1) What exactly counts as paid social media marketing?

It’s any time you pay a social platform to distribute your message beyond what your followers would naturally see. That includes conversion campaigns, lead gen forms, app installs, retargeting, and even boosted posts—if money is buying delivery, it’s part of paid social media marketing.

2) Which platform should I start with?

Start where your audience already behaves like a buyer. For B2B, LinkedIn is often the fastest to qualified conversations. For DTC and broader consumer categories, Meta and TikTok usually provide the most scalable reach. If you can’t decide, start with one platform you can execute well, then expand once your creative and tracking are stable.

3) What budget do I need for meaningful learning?

You need enough budget to generate consistent conversion data without changing settings every day. If you’re running conversion campaigns, the right answer is less about a universal number and more about whether you can get enough conversions weekly to evaluate creative and audiences without guessing.
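There is no universal number, but the arithmetic behind "enough conversions weekly" is simple once you pick a conversion-volume goal and an expected CPA. The sketch below assumes a hypothetical target of `events_per_adset` optimization events per ad set per week, with spend split evenly across ad sets. The default of 50 is a placeholder in the range commonly cited for Meta’s learning phase. Thresholds vary by platform and change over time, so confirm the current number in each platform’s documentation.

```python
import math


def weekly_budget(expected_cpa: float, adsets: int, events_per_adset: int = 50) -> float:
    """Weekly spend needed for every ad set to reach the assumed event target."""
    return expected_cpa * events_per_adset * adsets


def max_adsets(weekly_spend: float, expected_cpa: float, events_per_adset: int = 50) -> int:
    """How many ad sets a fixed weekly budget can support at that event target."""
    return math.floor(weekly_spend / (expected_cpa * events_per_adset))


# Hypothetical inputs: $40 expected CPA.
print(weekly_budget(expected_cpa=40, adsets=3))         # 6000
print(max_adsets(weekly_spend=5_000, expected_cpa=40))  # 2
```

Read in reverse, the second function is often the more useful one. If the budget is fixed, consolidate into fewer ad sets instead of spreading spend so thin that none of them gathers a readable signal.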

4) How fast should I expect results?

Paid social media marketing can produce signals quickly, but stable performance typically takes time because you’re learning what creative resonates, what audiences respond, and where the funnel breaks. A strong early spike can be real, but it can also be the honeymoon period before fatigue shows up—build your expectations around repeatability, not week-one excitement.

5) Should I rely on automation like Smart+ and Accelerate?

Automation is worth using when your inputs are clean: clear conversion events, solid creative variety, and a functional post-click experience. TikTok positions Smart+ as an AI-led optimization layer in its Smart+ Campaigns overview, and LinkedIn frames Accelerate as AI that optimizes delivery across campaign levers in LinkedIn Accelerate. The win usually comes from freeing you to focus on creative and conversion rate instead of micromanaging settings.

6) Why does attribution feel less reliable than it used to?

Because user behavior is fragmented across devices and privacy constraints limit what platforms can observe directly. That creates a bigger gap between “credited” conversions and “caused” conversions. The fix isn’t obsessing over one dashboard—it’s calibrating with lift tests or other causality methods when possible, using frameworks like Meta conversion lift and TikTok lift studies.

7) How do I know if performance is dropping because of creative fatigue?

If reach and spend are stable but engagement is declining, frequency is rising, and your best ads are slowly losing efficiency, fatigue is a strong suspect. The solution is rarely “new targeting.” It’s usually a new angle, a new hook, or a new proof type that gives the audience a reason to pay attention again.
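The pattern described here can be written as a simple flag over a few weeks of ad stats. This is a hypothetical heuristic, not a platform metric. Delivery counts as stable when spend and reach each moved less than a band. Fatigue is suspected when, on top of that, frequency rose and CTR fell by at least set amounts. All thresholds and the sample data are placeholders.

```python
def pct_change(series: list[float]) -> float:
    """Relative change from the first to the last value in the window."""
    return (series[-1] - series[0]) / series[0]


def fatigue_suspected(spend: list[float], reach: list[float],
                      frequency: list[float], ctr: list[float],
                      flat_band: float = 0.10, freq_rise: float = 0.15,
                      ctr_drop: float = 0.15) -> bool:
    """Hypothetical fatigue flag over a window of weekly values (oldest first).

    Delivery counts as stable when spend and reach each moved less than
    `flat_band`; fatigue is suspected when, in addition, frequency rose by at
    least `freq_rise` and CTR fell by at least `ctr_drop`.
    """
    stable = abs(pct_change(spend)) <= flat_band and abs(pct_change(reach)) <= flat_band
    return stable and pct_change(frequency) >= freq_rise and pct_change(ctr) <= -ctr_drop


# Four hypothetical weeks for one ad.
spend = [5_000, 5_100, 4_950, 5_050]
reach = [200_000, 198_000, 201_000, 199_000]
frequency = [1.8, 2.1, 2.4, 2.7]
ctr_fatiguing = [0.012, 0.011, 0.0095, 0.0088]
ctr_holding = [0.012, 0.012, 0.0118, 0.0119]

print(fatigue_suspected(spend, reach, frequency, ctr_fatiguing))  # True
print(fatigue_suspected(spend, reach, frequency, ctr_holding))    # False
```

Comparing only the first and last week keeps the sketch short, but a single noisy week can swing the result. Fitting a slope across the whole window would be a sturdier choice in practice.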

8) What’s the best way to test without breaking performance?

Test one meaningful change at a time and give it enough time to gather real data. When you test everything at once, you learn nothing. A practical cadence is: keep structure stable, rotate creative in a planned rhythm, and make budget shifts in a way that doesn’t constantly reset learning behavior.

9) Is marketing mix modeling worth it for smaller teams?

It can be, especially as MMM becomes easier to operationalize. The real value is planning: understanding what happens when you reallocate spend, not just explaining last month. That shift is central to tools like Google Meridian and its planning layer described in Scenario Planner.

10) Which KPIs should I care about most?

Pick KPIs that tell you what to do next. For day-to-day optimization, you care about CPM, CTR, conversion rate, and CPA because they reveal where the funnel is breaking. For business decisions, you care about revenue, qualified pipeline, retention, and incrementality because they reveal whether paid social media marketing is actually growing the business.

11) When should I hire a freelancer or specialist?

Hire when you’re stuck in one of three places: creative production can’t keep up, tracking and measurement are unreliable, or scaling decisions feel too risky. A specialist should make the system calmer, not more complicated.

12) What’s the most expensive mistake people make?

Scaling spend before they can explain performance. When you can’t tell whether results are real impact or just attribution credit, scaling becomes gambling. The goal is to build a machine you trust, then turn up the volume.

Work With Professionals

If you’re a freelancer, the hardest part of paid social media marketing usually isn’t running campaigns—it’s keeping your pipeline full without losing momentum to platforms that tax every project or slow down communication. When you’re trying to build a stable income, you don’t need another “network.” You need a place where work moves fast and the economics stay clean.

That’s the promise behind MARKEWORK. The platform positions itself as a marketing marketplace where companies and marketers connect directly with no middleman and no project fees, so you can negotiate directly and keep the relationship (and the upside) in your own hands.

Here’s what makes it feel built for operators rather than spectators:

- Direct communication: the homepage emphasizes messaging and negotiation without a middle layer, with direct communication built into how the marketplace works.

- No commissions mindset: instead of taking a cut of your work, the product framing highlights predictable membership access with no project fees.

- A pipeline you can actually work: the positioning is “thousands of job listings,” and the marketplace has been referenced publicly with over 1,000 active listings visible on the work board, which is the difference between occasional luck and a repeatable outreach habit.

If you want more clients, the move is simple: put yourself where marketing work is the point, not an afterthought. Build a profile that makes your outcomes obvious. Apply consistently. Follow up like a professional. Then let your results do the selling.