The phrase “top social media companies” gets thrown around as if it’s a simple leaderboard. In reality, it’s a moving target shaped by user behavior, ad markets, product ecosystems, regulation, and the platform’s ability to keep creators and communities invested.

This guide is built for people who need clarity with real-world consequences: marketers choosing where to spend, founders deciding where to build distribution, creators picking where to grow, and analysts trying to understand why one platform suddenly feels “everywhere” while another quietly fades.

Article Outline

- What Are the Top Social Media Companies?

- Why Ranking the Top Social Media Companies Matters

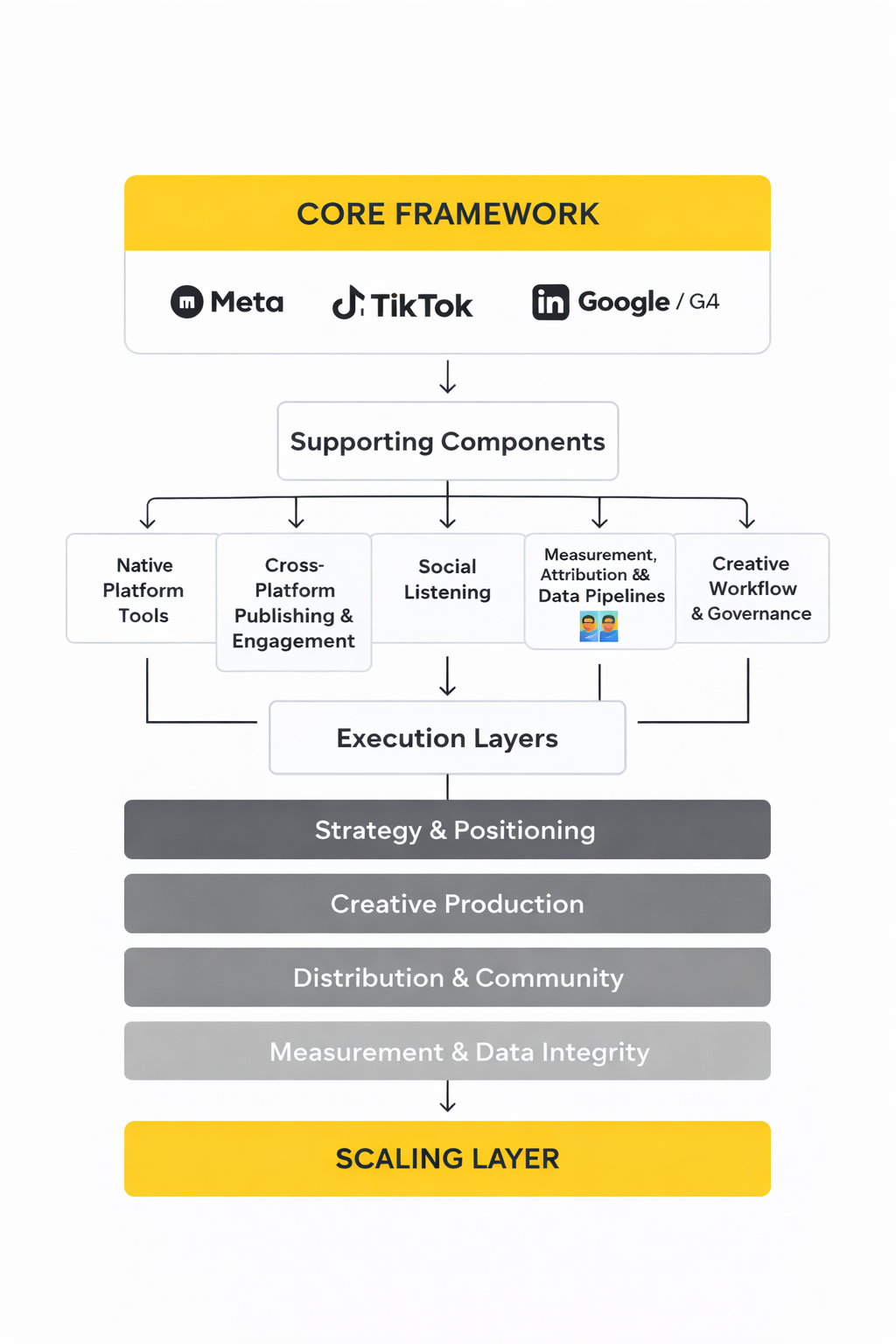

- Framework Overview

- Core Components

- Professional Implementation

What Are the Top Social Media Companies?

At the simplest level, the top social media companies are the firms behind the platforms where people spend meaningful time creating, sharing, messaging, watching, reacting, and buying. But “top” is only meaningful when you specify what you’re measuring.

Some platforms dominate by sheer reach. Meta’s “Family of Apps” reported 3.35 billion Family daily active people in December 2024, which is closer to “global communications infrastructure” than a single social app. Others dominate by attention formats. YouTube is still one of the world’s most influential video surfaces, and Alphabet reported YouTube advertising revenue of about $10.5B in Q4 2024, reflecting how much of modern media buying has shifted into creator-driven video.

Then there are platforms that win because they behave like operating systems for daily life. Tencent’s Weixin/WeChat reported that combined monthly active users exceeded 1.3 billion as of March 2024, and its “super-app” structure makes it less comparable to a typical Western feed-first network.

And finally, some top social media companies are best understood as highly specialized intent machines. Pinterest reported 553 million global MAUs for Q4 2024, built around planning and shopping signals rather than social graph status.

So, in this article, “top social media companies” means the companies that lead on one or more of the following: global scale, time spent, cultural influence, monetization power, and ecosystem leverage (ads, commerce, messaging, creators, developers, and data).

Why Ranking the Top Social Media Companies Matters

Choosing the wrong platform today isn’t just a “lower ROI” problem. It can lock you into the wrong creative format, distort your measurement, and quietly push your growth strategy into a corner where the algorithm is doing you no favors.

The stakes are higher because the market is bigger and more competitive than most people feel day-to-day. In the US alone, the IAB and PwC reported that social media advertising revenue reached $88.8B in 2024. That kind of money reshapes product roadmaps: platforms build what advertisers can buy, not just what users enjoy.

And users are not standing still. Kepios’ global reporting shows there were 5.66 billion social media user identities worldwide as of October 2025. More “identities” doesn’t automatically mean more opportunity for you—it often means more noise, more competition, and a higher bar for creative that actually earns attention.

Ranking the top social media companies also matters because regulation and platform risk now directly affect growth plans. A platform can look unstoppable right up until policy, privacy, or enforcement shifts change targeting, distribution, or even availability in key markets. If you’re building a business, you need a way to judge not only “where the users are,” but “how stable the platform environment is for the next 12–24 months.”

In practice, the goal isn’t to crown a single winner. The goal is to pick the right mix: one platform that reliably converts, one that builds brand memory, one that compounds through community, and one that hedges risk if a major channel gets disrupted.

Framework Overview

To evaluate the top social media companies without getting trapped in hype, you need a framework that separates “popular this month” from “structurally powerful.” This article uses a simple scoring lens you can apply to any platform or parent company.

Instead of arguing about whether one app is bigger than another (often impossible to verify cleanly because companies report different metrics), we evaluate what actually matters for outcomes:

- Reach and retained attention: Not just total users, but whether usage is daily and sticky.

- Monetization strength: How reliably the platform turns attention into revenue (ads, subscriptions, commerce, services).

- Distribution mechanics: Whether growth comes from a social graph, algorithmic discovery, messaging, search, or paid amplification.

- Ecosystem pull: Whether creators, brands, developers, and partners treat it like a core channel.

- Risk and resilience: Exposure to regulatory changes, privacy constraints, content policy shocks, and platform trust issues.

This approach explains why some companies remain “top” even when sentiment swings against them. For example, Snap reported 453 million daily active users in Q4 2024, while also describing how ad systems and pricing dynamics can materially affect results—an example of why “users” alone never tells the whole story.

It also explains why some platforms with smaller apparent scale can be strategically dominant. Reddit reported 101.7 million daily active uniques (DAUq) for Q4 2024, but its value to brands often comes from intent-rich conversations, search visibility, and community credibility—things that don’t show up in a simple MAU chart.

Core Components

Here are the components you’ll use throughout this series to assess top social media companies in a way that stays useful even as products change.

1) Scale vs. Stickiness

Scale answers “How many people can you reach?” Stickiness answers “How often do they come back, and how hard is it to replace that habit?” Daily usage tends to be a stronger sign of durable influence than a single headline MAU number.

Meta reporting 3.35B Family daily active people in December 2024 is a stickiness signal as much as a scale signal: it implies that a large portion of the connected world touches at least one Meta surface every day.
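One common way to put a number on stickiness is the DAU/MAU ratio. The sketch below is illustrative only: the two platforms and their figures are hypothetical, not reported data, and the ratio is only meaningful when both numbers come from the same company using the same definition of "active."

```python
def stickiness(dau: float, mau: float) -> float:
    """Return DAU/MAU: the share of monthly users who show up on a typical day."""
    if mau <= 0:
        raise ValueError("mau must be positive")
    return dau / mau


# Hypothetical platforms (illustrative numbers, not reported figures).
platforms = {
    "big_but_occasional": {"dau": 120e6, "mau": 600e6},
    "smaller_but_habitual": {"dau": 90e6, "mau": 150e6},
}

for name, p in platforms.items():
    print(f"{name}: {stickiness(p['dau'], p['mau']):.0%}")
```

In this made-up example the smaller platform reaches fewer people in a month but is used far more habitually, which is the distinction a headline MAU number hides.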

2) Monetization Density

Monetization density is the platform’s ability to turn attention into predictable revenue without destroying the user experience. Strong monetization usually shows up in ad performance tooling, measurement reliability, and a mature advertiser base that keeps spending through economic cycles.

That’s why it matters that US internet ad revenue hit a record of roughly $259B in 2024, and why social captured a meaningful share of the growth: platforms that prove performance keep compounding.

3) Distribution Engine

Every major platform has a dominant distribution engine, and your strategy should match it:

- Graph-driven: relationships and follows determine what spreads.

- Algorithm-driven: content performance determines what spreads.

- Search-driven: intent pulls content to the user.

- Messaging-driven: sharing happens in private networks and groups.

When you understand the distribution engine, you stop blaming “the algorithm” and start designing content and offers that actually fit the channel.

4) Ecosystem Gravity

The top social media companies create gravity: creators prioritize them, brands build playbooks around them, and agencies hire for them. Gravity often shows up through creator tooling, commerce features, and cross-product integration.

For example, Pinterest’s growth from over 518M MAUs in Q1 2024 to 553M MAUs in Q4 2024 is tightly linked to its role in shopping discovery: people arrive with plans, not just curiosity.

5) Risk Surface

Risk surface is the practical likelihood that external forces change the rules on you: privacy enforcement, youth protection, content moderation requirements, geopolitical constraints, or platform-level product shifts.

OECD work on digital platform impacts highlights how social media can amplify harmful content and how that makes regulation and enforcement harder. That is exactly the kind of environment where platform rules can shift quickly. You can see this theme in the OECD Regulatory Policy Outlook 2025 discussion of digital platforms.

Professional Implementation

Professionals don’t use a “top platforms” list as a checklist. They use it as a decision system. Here’s how to apply the framework in a way that leads to better channel picks and fewer expensive pivots.

Step 1: Define the job the platform must do

Pick one primary job first, then one secondary job:

- Primary job examples: direct response sales, lead generation, brand lift, community retention, creator distribution, employer branding.

- Secondary job examples: retargeting pool growth, customer support deflection, partnerships, market research.

This prevents the most common mistake: trying to force one platform to do every job, and then concluding “social doesn’t work.”

Step 2: Score platform fit using the five components

Create a simple scorecard (1–5) across the components: scale vs. stickiness, monetization density, distribution engine, ecosystem gravity, and risk surface. The output isn’t “truth.” It’s a structured argument you can revisit in 90 days.

If you need a fast sanity check, start with verifiable company reporting. Snap’s DAU disclosure in its 2024 SEC filing, Reddit’s DAUq in its 2024 SEC filing, and Weibo’s MAU/DAU in its FY2024 results give you first-party “platform health” signals without relying on vague third-party estimates. Because each company defines its own metric, compare trends within a platform rather than raw numbers across platforms.
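The scorecard can be as simple as a weighted average. The following is a minimal sketch, assuming you weight components by the job you defined in Step 1; the component keys, the weights, and both example platforms are hypothetical, and for risk surface a higher score is taken to mean lower risk.

```python
from dataclasses import dataclass

COMPONENTS = (
    "scale_vs_stickiness",
    "monetization_density",
    "distribution_engine",
    "ecosystem_gravity",
    "risk_surface",  # assumption: 5 = lowest risk, 1 = highest risk
)


@dataclass(frozen=True)
class Scorecard:
    platform: str
    scores: dict  # component name -> score from 1 to 5

    def __post_init__(self) -> None:
        missing = set(COMPONENTS) - set(self.scores)
        if missing:
            raise ValueError(f"missing components: {sorted(missing)}")
        for name in COMPONENTS:
            if not 1 <= self.scores[name] <= 5:
                raise ValueError(f"{name} must be between 1 and 5")


def weighted_score(card: Scorecard, weights: dict) -> float:
    """Weighted average of the five component scores."""
    total_weight = sum(weights[c] for c in COMPONENTS)
    return sum(card.scores[c] * weights[c] for c in COMPONENTS) / total_weight


# Hypothetical weights for a direct-response job: monetization matters most.
direct_response_weights = {
    "scale_vs_stickiness": 1,
    "monetization_density": 3,
    "distribution_engine": 1,
    "ecosystem_gravity": 1,
    "risk_surface": 2,
}

platform_a = Scorecard("platform_a", {
    "scale_vs_stickiness": 5, "monetization_density": 4,
    "distribution_engine": 3, "ecosystem_gravity": 4, "risk_surface": 2,
})
platform_b = Scorecard("platform_b", {
    "scale_vs_stickiness": 3, "monetization_density": 3,
    "distribution_engine": 4, "ecosystem_gravity": 3, "risk_surface": 4,
})

for card in (platform_a, platform_b):
    print(card.platform, round(weighted_score(card, direct_response_weights), 2))
```

Keeping the weights separate from the scores means the same scorecard can be re-ranked for a different job (for example, brand lift) without re-scoring every platform.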

Step 3: Pick a portfolio, not a single bet

Most teams perform better when they build a deliberate portfolio:

- One conversion channel: where measurement and targeting are mature.

- One attention channel: where creative compounds through discovery.

- One intent channel: where users show up already wanting something.

- One hedge channel: to reduce dependency risk.

This is also how you stay calm when the market shifts. Even large-scale ad markets can wobble, and forecasts change as macro conditions evolve. WPP’s 2025 outlook, as covered in Reuters reporting on ad growth forecasts, is one example of how quickly expectations can be revised.

Step 4: Set a review rhythm that matches platform reality

Quarterly reviews are usually the sweet spot: long enough for creative learning to compound, short enough to respond to product and policy shifts. Use consistent metrics (not vanity spikes), and document what changed on-platform so you can separate “execution issues” from “platform changes.”

Next, in Part 2, we’ll use this framework to map the modern landscape of top social media companies by category (global giants, video-first, super-app ecosystems, professional networks, community platforms, and shopping-intent networks) and explain what each category is best for.

Step-by-Step Implementation

When you’re trying to win attention across the top social media companies, the biggest advantage usually isn’t a secret tactic. It’s building an implementation process that stays stable even when formats, algorithms, and tracking rules shift. The steps below are the “boring” foundation that makes creative experiments actually pay off.

Step 1: Choose your two core channels before you choose your tools

Start by picking two core channels: one that is most likely to drive measurable outcomes (sales, leads, booked calls), and one that is most likely to build steady demand (attention and memory). If you try to cover every platform from day one, the quality bar drops and the algorithm learns you’re inconsistent.

A simple way to decide is to match channel mechanics to how people discover you. For example, algorithmic discovery-heavy platforms reward fast creative iteration, while intent-heavy platforms reward consistency and relevance. The point is to pick two “homes” first, then expand once you’ve proven repeatability.

Step 2: Lock your measurement basics before you scale output

Before you post more or spend more, make sure your measurement isn’t quietly leaking signal. In the EU and UK, consent and privacy requirements can directly affect what platforms can observe and optimize against, so your “baseline” matters more than it used to.

Google’s official guidance on Consent Mode and Consent Mode v2 upgrades, plus the practical steps in GA4 consent verification, help you avoid the common scenario where traffic looks fine but conversions become partially invisible. If you’re running Google Ads alongside paid social, the Consent Mode overview for Ads is the third piece that keeps your reporting aligned.

Step 3: Add server-side events for paid social where it matters

Once consent-aware tagging is in place, the next step for many teams is improving event quality for ad optimization. This is where server-side event connections become less of a “nice-to-have” and more of a competitive baseline, especially when you’re spending on the top social media companies.

Meta documents how Conversions API sends web events from servers directly to Meta, and TikTok explains how its Events API connects web, app, and offline data. Pinterest frames its approach similarly, describing the Pinterest Conversions API as a tagless, server-to-server bridge, and its engineering team has even published a developer-focused view on simplifying Pinterest conversion tracking with packages.

The goal here isn’t “perfect attribution.” The goal is better learning signals so optimization improves, and fewer gaps that cause you to overreact to noisy dashboards.
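To make "server-side event" concrete, here is a rough sketch of what building one might look like before it is sent. It is not any platform's official client: the field names are loosely modeled on the kind of payload these APIs describe and should be checked against each platform's current reference, the `build_event` helper is hypothetical, and the actual HTTP request (endpoint, access token) is deliberately left out.

```python
import hashlib
import json
import time
from typing import Optional


def normalize_and_hash(email: str) -> str:
    """Trim, lowercase, and SHA-256 hash an email so the raw address is never sent."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_event(event_name: str, email: str, value: float, currency: str,
                event_id: str, event_time: Optional[int] = None) -> dict:
    """Assemble one server-side conversion event (field names are assumptions)."""
    return {
        "event_name": event_name,
        "event_time": int(event_time if event_time is not None else time.time()),
        # A shared ID lets a platform deduplicate against the browser-side event.
        "event_id": event_id,
        "action_source": "website",
        "user_data": {"em": [normalize_and_hash(email)]},
        "custom_data": {"value": value, "currency": currency},
    }


event = build_event("Purchase", " Buyer@Example.com ", 49.0, "USD",
                    event_id="order-1001", event_time=1700000000)
print(json.dumps(event, indent=2))
```

The two details that tend to matter most in practice are consistent normalization before hashing and a stable event ID, since both affect how well a platform can match and deduplicate events.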

Step 4: Build a content system, not a content calendar

A calendar tells you what day something posts. A system tells you what happens after it posts. The easiest way to upgrade is to define a repeatable set of content types you can produce weekly without heroics, then set a lightweight review ritual to decide what gets repeated, refined, or killed.

If you need a “CEO-friendly” way to justify this, research like the Sprout Social 2025 Impact of Social Media Report is useful context for why teams are being pushed to prove ROI rather than chase vanity engagement.

Step 5: Create a 90-day loop with clear exit criteria

Your first 90 days should be designed like a controlled experiment: stable publishing cadence, stable measurement, and a small number of creative hypotheses. The exit criteria should be clear upfront, like “we can reliably produce two winning variations of this format per month” or “this channel can generate qualified traffic at a sustainable cost.”

If you can’t define what “works” looks like, you’ll end up debating feelings instead of evidence, and the team will slowly lose confidence in the channel.

Execution Layers

Execution gets easier when you separate your work into layers. That way, you can improve one layer without accidentally breaking another, and you can explain your process to clients and stakeholders without sounding like you’re improvising every week.

Layer 1: Strategy and positioning

This is where you decide how you want to be remembered, and what you want people to do next. Across the top social media companies, the formats look different, but the job is the same: make the next step feel obvious.

A practical check is whether your content still makes sense if someone sees it out of context, on mute, in a feed that didn’t ask for it.

Layer 2: Creative production

This layer is about speed without sloppiness. You want a production rhythm that creates enough volume for learning, while still protecting quality and brand clarity.

If production is fragile, you’ll post less when things get busy, and inconsistency is one of the fastest ways to lose distribution momentum.

Layer 3: Distribution and community

Distribution is both public and private. Public distribution is your feed reach, search visibility, and paid delivery. Private distribution is how often people share your content in DMs, group chats, and internal workplace channels.

Community work matters here because it compounds. When people feel seen, they stick around, and the platform learns your content deserves attention.

Layer 4: Measurement and data integrity

This layer is where serious teams quietly pull ahead. If your consent and tagging setup is inconsistent, the story your dashboards tell becomes less trustworthy, and you start making decisions based on partial visibility.

Keeping measurement stable often means maintaining your Consent Mode implementation via Google’s consent documentation, verifying it in GA4’s consent settings, and aligning Ads measurement with the Google Ads consent guidance.

Layer 5: Automation and governance

Automation is how you protect focus. Governance is how you protect the brand. Together, they stop social from becoming a chaotic side-project that only works when one person is “on.”

This is also the layer that reduces client risk: approvals, access controls, and a clear path for handling mistakes before they escalate.

Optimization Process

Optimization is not “tweak everything every day.” Across the top social media companies, the teams that win tend to optimize in cycles: stabilize, learn, then scale. That rhythm keeps you from chasing noise.

1) Stabilize the baseline

Run a consistent posting cadence for at least a few weeks on your core channels, using a small set of content types. Keep the CTA consistent enough that you can actually learn what’s driving action.

At the same time, make sure your tracking setup is not changing every week. If you’re still migrating consent or tags, treat performance as directional until the foundation is stable.

2) Measure what each channel is good at

Not every channel should be judged by last-click purchases. Some platforms are better at discovery and demand creation, and your measurement should reflect that reality.

GA4’s documentation on attribution in Analytics is useful here because it helps teams understand why the same campaign can look “great” in one view and “weak” in another, depending on model and scope.
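A toy comparison shows how the same conversions can credit channels very differently. This sketch uses two deliberately simplified models (last click and an even split across touchpoints); it is not how GA4 computes attribution, and the conversion paths and values are invented for illustration.

```python
from collections import defaultdict


def last_click(paths):
    """Give all of a conversion's value to the final touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if touchpoints:
            credit[touchpoints[-1]] += value
    return dict(credit)


def linear(paths):
    """Split a conversion's value evenly across every touchpoint."""
    credit = defaultdict(float)
    for touchpoints, value in paths:
        if not touchpoints:
            continue
        share = value / len(touchpoints)
        for channel in touchpoints:
            credit[channel] += share
    return dict(credit)


# Hypothetical conversion paths: (ordered touchpoints, conversion value).
paths = [
    (["paid_social", "organic_search", "email"], 300.0),
    (["paid_social", "email"], 200.0),
    (["organic_search"], 100.0),
]

print("last click:", last_click(paths))
print("linear:    ", linear(paths))
```

In this example paid social opens two of the three journeys yet receives no credit under last click, while the even split gives it a third of the total. Neither view is "the truth"; they answer different questions.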

3) Improve signal quality for paid

If you’re spending serious budget, optimization often becomes a signal quality game. That’s where server-side event connections can help reduce gaps and improve the platform’s ability to learn.

Meta’s Conversions API overview, TikTok’s Events API explanation, and Pinterest’s Conversions API guidance each describe the same principle in different words: better data in, better optimization out.

4) Scale winners through variation, not repetition

When something works, don’t just repost it until it dies. Extract what made it work (hook, framing, proof, format) and build variations that test the same idea in different packaging.

This is how you avoid the “one-hit wonder” trap and turn a single good post into a repeatable content engine.

5) Audit and reset every 30 days

Every 30 days, do a short audit: what created measurable outcomes, what created strong attention, what created meaningful conversations, and what wasted effort. Then adjust your content mix and your spend allocation.

This is also where you catch operational problems early, like a consent change that quietly reduced measurable conversions, or an event mapping bug that broke your campaign learning.
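One lightweight way to surface that kind of silent breakage is to compare conversions per click between audit periods. The sketch below is an assumption-heavy illustration: the 30% threshold and the sample numbers are hypothetical, and a flag is a prompt to check consent and event mapping, not proof that tracking broke.

```python
def conversion_rate(clicks: int, conversions: int) -> float:
    """Measured conversions per click; 0.0 when there were no clicks."""
    return conversions / clicks if clicks else 0.0


def flag_tracking_drop(previous: dict, current: dict,
                       max_relative_drop: float = 0.3) -> bool:
    """Return True when the measured rate fell by more than the allowed share."""
    prev_rate = conversion_rate(**previous)
    curr_rate = conversion_rate(**current)
    if prev_rate == 0:
        return False  # nothing to compare against
    relative_drop = (prev_rate - curr_rate) / prev_rate
    return relative_drop > max_relative_drop


# Hypothetical 30-day periods: traffic is steady, measured conversions halve.
previous = {"clicks": 4000, "conversions": 120}
current = {"clicks": 4200, "conversions": 63}

if flag_tracking_drop(previous, current):
    print("Measured conversion rate dropped sharply: review consent and event mapping.")
```

Pairing this check with a log of what changed on-site (consent banner updates, tag migrations) makes it easier to tell a measurement problem from a genuine performance problem.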

Implementation Stories

Story: Alaan’s Attribution Breakthrough on LinkedIn

Start (high drama): The team was watching leads come in, but the numbers didn’t add up. Good conversations were happening, yet the reporting made it look like the channel wasn’t pulling its weight. In a budget meeting, the quiet threat was obvious: if the data couldn’t defend performance, spend would get cut.

Backstory: Alaan wasn’t selling something impulsive, so the path from first click to signed deal didn’t behave like ecommerce. Multiple stakeholders were involved, and the journey ran through demos, internal reviews, and CRM updates. That complexity made traditional tracking feel like looking at a long movie through a keyhole.

The wall: The core issue was attribution integrity. If the system couldn’t connect campaign touchpoints to real downstream outcomes, optimization would always favor shallow signals. And if optimization favored shallow signals, the team would keep paying for activity that looked good but didn’t close.

The epiphany: The shift was realizing that better creative alone wouldn’t fix a measurement gap. They needed a cleaner bridge between what happened on LinkedIn and what happened after the click, inside their systems. Instead of accepting “partial visibility,” they treated tracking as a product problem that deserved real implementation effort.

The journey: They implemented a Conversions API integration workflow so LinkedIn could align performance reporting with what the business actually cared about. LinkedIn’s customer story explains how Alaan connected attribution by combining online and offline conversions, including CRM outcomes, through a partner integration in Alaan’s LinkedIn Ads + Conversions API case study. With that foundation, optimization could focus on the signals that matched real pipeline quality instead of just form fills.

The final conflict: As soon as measurement improved, the next challenge appeared: internal trust. When dashboards change, stakeholders sometimes assume the team “moved the goalposts,” even if the new view is more accurate. The team had to document the implementation, align reporting definitions, and explain why the new numbers were a better mirror of reality.

The dream outcome: Once the reporting could tie marketing activity to meaningful downstream outcomes, the channel became easier to defend and easier to improve. The team wasn’t just buying clicks; they were building a feedback loop that helped the system learn. And in a world where you’re competing across the top social media companies, that kind of loop is what turns a campaign into a growth asset.

1) Build the foundation before you chase scale

Start with consent-aware measurement and clean attribution logic. Use Google’s Consent Mode documentation as the baseline, verify implementation via GA4 consent checks, and align Ads requirements using Google Ads consent guidance.

Then, if you’re spending meaningfully on paid social, prioritize server-side events: Meta Conversions API, TikTok Events API, and Pinterest Conversions API.

2) Run a two-speed operating model

Operate at two speeds at the same time: a stable baseline (your repeatable weekly content types and offers) and a fast experimentation lane (new hooks, new creators, new formats). The baseline protects performance. The experimentation lane creates upside.

This is how you avoid the most common trap: constantly changing everything, then not knowing what actually improved results.

3) Treat reporting like a product your client “uses”

Professional reporting isn’t about dumping charts. It’s about giving stakeholders confidence to make decisions. Keep a consistent set of metrics, document what changed, and explain trade-offs in plain language.

When teams need a broader benchmark for why ROI proof is becoming non-negotiable, resources like the Sprout Social 2025 Impact of Social Media Report provide useful context for how leadership expectations are evolving.

4) Build reusable playbooks per platform category

Instead of writing a playbook for every single platform, write playbooks by category: discovery-first platforms, intent-first platforms, and community-first platforms. Then adapt those playbooks to whichever of the top social media companies you’re focusing on this quarter.

This keeps your process durable, even when a platform changes features or a new app grabs attention.

5) Commit to quarterly reality checks

Every quarter, review your channel portfolio: what created measurable outcomes, what created demand, and what created operational drag. If a platform is consuming disproportionate effort without a clear role, redefine its job or cut it.

That discipline is what turns “we post on social” into a professional system that can compete, month after month, across the top social media companies.

Statistics and Data

When people argue about the top social media companies, they usually argue with vibes. The fastest way to get back to reality is to look at three numbers: how many people are reachable, how much money is flowing through the ecosystem, and how quickly formats are changing.

On reach, Kepios’ Digital 2026 global overview puts global social media user identities at 5.66 billion as of October 2025. It’s a useful headline, but it’s even more useful as a warning: “user identities” aren’t always unique humans, and the difference matters when you’re planning true incremental growth.

On money, the US market alone shows how aggressively budgets have consolidated around major platforms. The IAB/PwC Internet Advertising Revenue Report for Full Year 2024 breaks out social media advertising revenue at $88.8B in 2024. If you’re competing across the top social media companies, that number explains why every platform is racing to improve measurement, automation, and performance tools.

On format velocity, social video keeps pulling spend. IAB’s 2025 Digital Video Ad Spend & Strategy report highlights that US digital video ad spend grew to $64B in 2024 and is projected to reach $72B in 2025, which aligns with what most teams feel: more creative variants, more creator-style production, and more pressure to prove outcomes.
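The figures above are easier to compare once they are turned into a growth rate and a share. This small sketch only does arithmetic on numbers already cited in this article (the $64B and $72B video figures, the $88.8B social figure, and the roughly $259B US total mentioned earlier); the helper names are just for illustration.

```python
def pct_change(old: float, new: float) -> float:
    """Relative change from old to new, as a fraction."""
    return (new - old) / old


def share(part: float, whole: float) -> float:
    """Part as a fraction of whole."""
    return part / whole


# All values in billions of US dollars, as cited in the article.
video_growth = pct_change(64.0, 72.0)   # projected 2024 -> 2025 video ad spend
social_share = share(88.8, 259.0)       # social vs. total US internet ad revenue, 2024

print(f"Projected US digital video growth: {video_growth:.1%}")
print(f"Social share of US internet ad revenue: {social_share:.1%}")
```

On these figures, video spend is projected to grow 12.5%, and social accounts for about a third of US internet ad revenue. The share is approximate because the total is a rounded figure.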

And when you zoom out beyond the US, the same direction holds. The IAB UK Adspend reporting states the UK’s digital ad market reached £40.5bn in 2025. Meanwhile, WPP Media’s global outlook, covered in Reuters reporting on the 2025 forecast update, describes digital as the dominant share of global ad revenue, reinforcing why the top social media companies are increasingly treated like core infrastructure for growth.

Performance Benchmarks

Benchmarks are helpful, but only when you’re clear about what’s being measured. “Engagement rate” can mean engagement divided by followers, impressions, reach, or views, and those versions behave very differently across platforms.
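A quick calculation shows how far those definitions can diverge for a single post. The numbers below are hypothetical and the function is only a sketch, but the pattern holds generally: the same engagement count produces a different "rate" for each denominator.

```python
DENOMINATORS = ("followers", "reach", "impressions", "views")


def engagement_rates(post: dict) -> dict:
    """Compute engagement rate against each common denominator."""
    return {
        f"by_{name}": post["engagements"] / post[name]
        for name in DENOMINATORS
        if post.get(name)  # skip denominators that are missing or zero
    }


# Hypothetical post metrics.
post = {
    "engagements": 450,
    "followers": 30000,
    "reach": 9000,
    "impressions": 15000,
    "views": 12000,
}

for label, rate in engagement_rates(post).items():
    print(f"{label}: {rate:.2%}")
```

Here the same post scores 1.5% by followers and 5% by reach, so comparing your number against a benchmark that uses a different denominator can make a healthy post look weak, or the reverse.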

If you want a clean, cross-platform reference for organic performance trends, the Rival IQ 2025 Social Media Industry Benchmark Report is useful because it looks at millions of posts across industries and makes the same core point many teams noticed in 2024–2025: engagement rates fell across major platforms, with declines called out for Facebook, Instagram, TikTok, and X.

If you want platform-specific “what good looks like right now,” you’ll usually get more actionable insight from reports that separate by platform and post type. Socialinsider’s 2026 social media benchmarks roundup shares current cross-platform benchmarks and emphasizes how engagement behaviors have shifted toward more passive interaction on some networks. For LinkedIn specifically, Socialinsider’s 2025 LinkedIn benchmarks highlight which formats tend to drive stronger engagement, which matters when you’re deciding whether to prioritize documents, multi-image posts, or video.

If your work is heavy on short-form video and you need a benchmark built on reach-based engagement, Emplifi’s 2025 Social Media Benchmarks Report breaks out performance for formats like Reels and TikTok using a reach-based approach. This is a different lens than follower-based engagement, but it’s often closer to how leaders evaluate “was this content actually seen?”

One more benchmark that’s quietly useful is cadence. Hootsuite’s social media benchmarks guide connects posting frequency with engagement patterns in specific industries. It won’t tell you the perfect number of posts per week, but it helps you stop guessing in situations where consistency is the real bottleneck.

The practical takeaway is simple: use one benchmark source consistently per platform, and treat benchmarks as a guardrail, not a goal. Your goal is to beat your own baseline while staying honest about what the algorithm will actually reward.

Analytics Interpretation

Analytics becomes easier when you stop treating social like one channel and start treating it like three layers that happen to share the same apps: attention, intent, and outcomes.

Attention metrics

Attention metrics tell you whether the platform is distributing your content at all. Reach, impressions, view-through, and watch time sit here, and they’re the first signal you need when comparing performance across the top social media companies.

If attention is weak, you can’t “optimize conversions” your way out of it. You need better hooks, clearer packaging, stronger creative rhythm, or smarter distribution choices.

Intent metrics

Intent metrics tell you whether people are moving closer to doing something. Clicks, profile visits, saves, shares, and inbound messages are usually more diagnostic than raw likes, especially in B2B and higher-consideration categories.

This is also where measurement can get messy in Europe. If consent settings are misconfigured, you can end up with a world where intent looks strong but outcomes appear to “vanish.” Google’s Consent Mode guidance and the practical checks in GA4 consent verification help you keep intent-to-outcome reporting from breaking quietly.

Outcome metrics

Outcome metrics are what businesses fund: purchases, qualified leads, booked calls, subscriptions, and pipeline value. The hard part is that outcome reporting is partly a measurement design problem, not a platform problem.

If you’re using GA4, attribution choices matter. Google’s GA4 attribution documentation is worth revisiting whenever stakeholders argue about whether social “worked,” because different attribution models can tell very different stories from the same underlying behavior.

Paid signal quality

For paid campaigns, there’s a second layer beyond attribution: optimization signals. Ad systems learn from the data you feed them, and that’s why server-to-server connections are now mainstream across the top social media companies.

Meta’s Conversions API documentation and TikTok’s Events API guidance both describe the same reality: stronger signals generally lead to more stable delivery and smarter optimization, especially when browser-based tracking is incomplete.

Case Stories

Story: The Times’ Subscription Growth Pressure Cooker

Start (high drama): When subscription targets become non-negotiable, marketing stops feeling like marketing and starts feeling like a countdown. Every underperforming campaign becomes a meeting, and every meeting becomes a debate about whether the channel is broken. The team needed proof that Meta could drive real subscription outcomes, not just engagement.

Backstory: News brands live in a volatile attention economy, and running the same creative for longer rarely works. Content changes by the hour, audience interest shifts fast, and the window to convert a reader is narrow. That makes performance marketing uniquely hard: you can’t rely on one evergreen offer when the product is real-time relevance.

The wall: The team hit the classic wall of scale: creative production couldn’t keep up with the speed the platform rewarded. Even when campaigns worked, it was difficult to sustain performance without burning time on manual creative updates. And when performance dipped, it wasn’t obvious whether the problem was audience fatigue, creative mismatch, or a workflow bottleneck.

The epiphany: The breakthrough was treating creative like a system instead of a sequence of one-off assets. Instead of pushing isolated ads, they leaned into dynamic creative templates that could respond to what readers cared about in the moment. That shift also created something teams rarely get: an implementation path that could scale without becoming chaos.

The journey: The Times adopted creative automation for Meta Advantage+ through Smartly, using templates that could adapt rapidly and keep campaigns aligned with current stories. Smartly’s case study, The Times doubles real-time performance with creative automation for Meta Advantage+, describes the operational change and the impact on subscription acquisition. The same source reports an 84% increase in conversion rate and a 55% drop in CPA compared to business-as-usual, which is exactly the kind of signal you want when you’re proving paid social outcomes.

The final conflict: Of course, performance gains create a new problem: expectations reset. Once leadership sees a lower CPA, they want it forever, even when market conditions shift. The team still had to defend a disciplined test cadence, because scaling spend without protecting creative quality is how “wins” turn into sudden regressions.

The dream outcome: The reported result wasn’t just lower costs; it was a path to compounding. The case study notes they doubled subscriptions year over year since adopting Smartly, which turns the story from “a good campaign” into “a better operating model.” That’s the real lesson for anyone working across the top social media companies: the systems you build decide whether performance is repeatable.

Professional Promotion

Promotion gets easier when your analytics tell a story that feels obvious. Not a story made of vanity metrics, but a story that connects attention to intent to outcomes in a way a busy stakeholder can trust.

Build a one-page performance narrative

Pick one “north star” outcome, one intent indicator, and one attention indicator. Then show how they move together over time. When you do this consistently, it becomes much harder for anyone to dismiss social as “just awareness,” especially when you’re operating across the top social media companies and performance is fragmented across platforms.

Use benchmarks as context, not justification

Benchmarks like Rival IQ’s 2025 report, Emplifi’s 2025 benchmarks, and Socialinsider’s 2026 benchmarks are most powerful when they explain the environment. They help stakeholders understand why “engagement fell” might be a market-wide pattern rather than a team failure.

Then your promotion becomes more credible: you’re not claiming perfection, you’re showing progress against reality.

Make measurement credible before you make big claims

If you want leaders to trust social ROI, you have to protect the integrity of measurement. Consent-aware tagging through Consent Mode, validation via GA4 consent checks, and clean attribution logic using GA4 attribution guidance keep your story from collapsing under scrutiny.

And if you’re running paid, stronger optimization signals via Meta Conversions API and TikTok Events API make your performance claims more defensible because the system is learning from better data.

In Part 5, we’ll zoom out from dashboards and look at the bigger ecosystem forces shaping the top social media companies: creators, commerce, messaging, and the platform incentives that quietly determine what gets distributed next.

Advanced Strategies

Once you have a reliable baseline, the fastest wins across the top social media companies usually come from three advanced moves: proving incrementality, scaling creative without losing quality, and building distribution that doesn’t disappear when a platform shifts the rules.

Prove incrementality before you scale spend

At scale, the biggest risk is paying for conversions that would have happened anyway. That’s why lift testing has moved from “nice experiment” to “budget defense,” especially as privacy changes make attribution noisier. Meta’s Conversion Lift testing and TikTok’s Conversion Lift Study documentation exist to answer one question that stakeholders actually care about: did the ads create new outcomes or just take credit for them?

When you treat lift as a routine tool, your channel decisions get calmer. You’re no longer debating dashboards; you’re working with controlled evidence. And that makes it easier to build a sustainable portfolio across the top social media companies instead of betting everything on the channel that “looks best” this month.
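To make the arithmetic behind a lift readout concrete, here is a minimal sketch in Python. It assumes you already have user and conversion counts for a randomized exposed group and a holdout control group; the function name, field names, and example numbers are hypothetical, and this is not how Meta or TikTok compute their own lift reports. It uses a standard two-proportion z-test as one reasonable way to check whether the difference is likely to be noise.

```python
import math
from dataclasses import dataclass


@dataclass
class LiftResult:
    control_rate: float
    exposed_rate: float
    relative_lift: float            # (exposed - control) / control
    incremental_conversions: float  # extra conversions attributable to exposure
    p_value: float                  # two-sided, two-proportion z-test


def conversion_lift(exposed_users: int, exposed_conversions: int,
                    control_users: int, control_conversions: int) -> LiftResult:
    """Estimate lift from a randomized exposed/control split (illustrative only)."""
    exposed_rate = exposed_conversions / exposed_users
    control_rate = control_conversions / control_users
    if control_rate == 0:
        raise ValueError("Control group has no conversions; relative lift is undefined.")

    diff = exposed_rate - control_rate
    relative_lift = diff / control_rate
    # Conversions the exposed group would not have produced at the control rate.
    incremental = diff * exposed_users

    # Pooled standard error for the difference between two proportions.
    pooled = (exposed_conversions + control_conversions) / (exposed_users + control_users)
    se = math.sqrt(pooled * (1 - pooled) * (1 / exposed_users + 1 / control_users))
    z = diff / se
    # Two-sided p-value from the normal distribution: 2 * (1 - Phi(|z|)).
    p_value = math.erfc(abs(z) / math.sqrt(2))

    return LiftResult(control_rate, exposed_rate, relative_lift, incremental, p_value)


# Hypothetical numbers, chosen only to show the calculation.
result = conversion_lift(
    exposed_users=100_000, exposed_conversions=2_300,
    control_users=100_000, control_conversions=2_000,
)
print(f"Relative lift: {result.relative_lift:.1%}")
print(f"Incremental conversions: {result.incremental_conversions:.0f}")
print(f"p-value: {result.p_value:.6f}")
```

The number to bring into a budget conversation is the incremental conversion count, because it answers the question stakeholders are actually asking: how many outcomes exist only because the ads ran.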

Build a creator partnership engine, not one-off influencer posts

Creator partnerships scale best when they’re treated like a media channel with a process: creator sourcing, briefing, content repurposing, paid amplification, and measurement that ties back to outcomes. That shift is happening because the budgets are real now: the IAB 2025 Creator Economy Ad Spend & Strategy Report projects U.S. creator ad spend hitting $37B in 2025. The same figure appears in IAB’s official report summary and in mainstream trade coverage such as TVTechnology’s breakdown.

Practically, this means you should build a reusable creator system: test multiple creators quickly, find the few who naturally land your message, and then scale their best-performing angles through paid. It’s one of the most reliable ways to create “native” creative that works across the top social media companies without feeling like ads.

Scale creative through variations, not just more posts

When engagement declines across major platforms, brute-force volume often backfires. Rival IQ’s 2025 Social Media Industry Benchmark Report describes platform-wide engagement rate declines, and cross-platform reporting such as Socialinsider’s 2026 benchmarks points in the same direction. The teams that keep growing don’t just post more; they create more angles per idea.

A strong variation system typically looks like this: one core claim, three hooks, three proofs, and two CTAs, then rapid iteration based on watch time, saves, clicks, and conversion signals. This is how you scale what works without exhausting your audience or your team.
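The variation math is easy to underestimate, so here is a small Python sketch of the "one claim, three hooks, three proofs, two CTAs" structure. The copy strings and the `build_variants` helper are hypothetical placeholders; the point is only that a handful of components produces a full test matrix.

```python
from itertools import product

# Placeholder copy; swap in your own components.
claim = "Cut weekly reporting time in half"
hooks = ["Question hook", "Bold-stat hook", "Story hook"]
proofs = ["Customer quote", "Before/after screenshot", "Third-party benchmark"]
ctas = ["Start free trial", "Book a demo"]


def build_variants(claim, hooks, proofs, ctas):
    """Return every hook x proof x CTA combination for a single core claim."""
    return [
        {"id": f"v{i:02d}", "claim": claim, "hook": hook, "proof": proof, "cta": cta}
        for i, (hook, proof, cta) in enumerate(product(hooks, proofs, ctas), start=1)
    ]


variants = build_variants(claim, hooks, proofs, ctas)
print(len(variants))  # 3 hooks * 3 proofs * 2 CTAs = 18 variants
```

In practice you would rarely launch all 18 at once. Giving each variant a stable ID simply makes it possible to trace watch time, saves, clicks, and conversions back to the specific hook, proof, and CTA that produced them.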

Scaling Framework

Scaling across the top social media companies is less about “being everywhere” and more about building a framework that makes growth repeatable. The five-part framework below is designed to protect performance while you increase output and spend.

1) Choose a primary signal for each stage

Pick one primary signal for attention (reach or qualified views), one for intent (clicks, saves, inbound messages), and one for outcomes (purchases, qualified leads, first orders). This keeps your reporting coherent when different platforms optimize around different behaviors.

If your attribution debates never end, it’s usually because the team is mixing stage metrics and expecting them to behave like one number.
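One way to keep stage metrics from blurring together is to store them as three separate numbers per platform and only ever compare like with like. The sketch below is a hypothetical Python structure, not a reporting standard; the platform names and counts are invented for illustration.

```python
from dataclasses import dataclass


@dataclass
class StageSignals:
    platform: str
    attention: int  # primary attention signal, e.g. qualified views
    intent: int     # primary intent signal, e.g. clicks or saves
    outcomes: int   # primary outcome signal, e.g. purchases

    @property
    def intent_rate(self) -> float:
        """Share of attention that turned into intent."""
        return self.intent / self.attention if self.attention else 0.0

    @property
    def outcome_rate(self) -> float:
        """Share of intent that turned into outcomes."""
        return self.outcomes / self.intent if self.intent else 0.0


# Invented numbers for two platforms with different strengths.
report = [
    StageSignals("discovery_platform", attention=50_000, intent=1_000, outcomes=50),
    StageSignals("intent_platform", attention=8_000, intent=640, outcomes=64),
]

for row in report:
    print(f"{row.platform}: intent rate {row.intent_rate:.2%}, "
          f"outcome rate {row.outcome_rate:.2%}")
```

Reading the two rates side by side makes it clear whether a platform is weak at earning intent or weak at converting it, which are different problems with different fixes.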

2) Build a test calendar with guardrails

Scaling requires disciplined experimentation. A simple guardrail is running one “baseline” campaign structure that changes minimally, alongside one experimental lane that rotates new hooks, creators, and formats. WARC’s Future of Measurement 2025 highlights the growing role of experiments in modern measurement, which aligns with what high-performing teams do in practice: they test continuously, but they don’t destabilize everything at once.

3) Standardize your data foundation

Scaling breaks when your data foundation can’t keep up. Consent-aware tagging is table stakes in Europe, and Google’s Consent Mode guidance plus validation steps in GA4 consent verification help keep your conversion data from quietly degrading as you scale.

Then, for paid, feed the platforms stronger signals so optimization doesn’t rely on partial browser data. Meta’s Conversions API documentation and TikTok’s Events API guidance are the practical pathway many teams use to stabilize learning as spend increases.
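For teams that have never seen a server-side event, here is a rough Python sketch of what one might look like before it is sent. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `user_data.em`, `custom_data`) reflect my understanding of the payload shape in Meta’s Conversions API documentation and should be checked against the current docs before use; TikTok’s Events API uses its own schema. The helper names and example values are hypothetical, the sketch only builds the payload and does not send it, and it assumes the user has given the consent your measurement setup requires.

```python
import hashlib
import json
import time


def hash_email(email: str) -> str:
    """Normalize (trim, lowercase) and SHA-256 hash an email before sharing it."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_purchase_event(email, value, currency, event_id, event_time=None):
    """Build one server-side purchase event (field names are assumptions, see above)."""
    return {
        "event_name": "Purchase",
        "event_time": int(event_time if event_time is not None else time.time()),
        "event_id": event_id,          # lets the platform deduplicate against browser events
        "action_source": "website",
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": value, "currency": currency},
    }


# Hypothetical order, with a fixed timestamp so the example is reproducible.
payload = {
    "data": [
        build_purchase_event(
            email=" Reader@Example.com ",
            value=12.0,
            currency="GBP",
            event_id="order-1001",
            event_time=1_700_000_000,
        )
    ]
}
print(json.dumps(payload, indent=2))
```

The detail that matters most for signal quality is the shared event ID: when the same conversion arrives from both browser and server, a consistent ID is what allows it to be counted once rather than twice.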

4) Scale what is proven, not just what is interesting

“Interesting” creative often wins comments and loses money. “Proven” creative can be scaled through variation, creator expansion, and paid amplification without collapsing performance. This is where lift studies protect you, because they tell you whether the results are real before you double the budget.

5) Build a platform portfolio that reduces dependency

A single-platform strategy is fragile. A portfolio strategy is resilient: one conversion channel, one discovery channel, one intent channel, and one hedge. This matters even more as creator marketing becomes a standalone budget line, reflected in the IAB creator economy spend projections.

Growth Optimization

Optimization is where scaling either compounds or stalls. Across the top social media companies, most teams don’t fail because they lack ideas; they fail because they can’t turn learning into action fast enough.

Use lift to decide budget, not just attribution

Attribution will always be imperfect. Lift testing is how you get closer to causality without pretending dashboards are the truth. TikTok positions its Conversion Lift Study as a way to measure true impact, and Meta frames Conversion Lift testing similarly for its platforms.

A practical approach is to run lift tests at key moments: when scaling spend, when changing creative strategy, or when launching a new product line. You don’t need to test everything; you need to test the decisions that change budgets.

Structure creative learning like a lab

Creative optimization works best when you isolate variables. Test hooks separately from offers. Test proof separately from format. Then recombine what works into new variants so you keep momentum without copying the same ad until it stops working.

This approach also protects your team from burnout: you’re not inventing from scratch every week, you’re building on a library of proven components.
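Isolating variables is simpler to enforce when the test plan is generated rather than improvised. The Python sketch below builds challengers that differ from a control ad in exactly one component; the component labels and the `single_variable_tests` helper are hypothetical.

```python
# The current best-performing ad, described by its components.
control = {"hook": "H1", "offer": "O1", "proof": "P1", "format": "F1"}

# Alternatives you want to test, grouped by the component they replace.
alternatives = {
    "hook": ["H2", "H3"],
    "offer": ["O2"],
    "proof": ["P2"],
    "format": ["F2"],
}


def single_variable_tests(control, alternatives):
    """Create one challenger per alternative, changing only one component at a time."""
    tests = []
    for component, options in alternatives.items():
        for option in options:
            variant = {**control, component: option}
            tests.append({"tests": component, "variant": variant})
    return tests


plan = single_variable_tests(control, alternatives)
for test in plan:
    print(test["tests"], "->", test["variant"])
```

Because every challenger shares all other components with the control, a performance difference can be credited to the one thing that changed. Winners from each component can then be recombined into the next control.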

Match optimization to channel mechanics

Some platforms behave like discovery engines, others behave like intent engines, and some behave like community engines. When you optimize a discovery engine using only last-click logic, you often starve the channel before it can do its job. Google’s GA4 attribution documentation is helpful here because it shows how model choice changes credit assignment, which changes what teams think is “working.”
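A toy example shows how much the model choice matters. The Python sketch below compares two generic textbook models, last-click and linear, on a few invented conversion paths. These are simplified illustrations and not a reproduction of the models GA4 offers; the channel names and paths are hypothetical.

```python
from collections import defaultdict


def last_click(paths):
    """Give 100% of each conversion's credit to the final touchpoint."""
    credit = defaultdict(float)
    for path in paths:
        credit[path[-1]] += 1.0
    return dict(credit)


def linear(paths):
    """Split each conversion's credit equally across all touchpoints."""
    credit = defaultdict(float)
    for path in paths:
        share = 1.0 / len(path)
        for touch in path:
            credit[touch] += share
    return dict(credit)


# Invented converting paths; each list is one conversion, in touch order.
paths = [
    ["tiktok", "instagram", "search"],
    ["tiktok", "search"],
    ["instagram", "email"],
    ["tiktok", "email"],
]

print("last click:", last_click(paths))
print("linear:    ", linear(paths))
# In this data, tiktok is never the final touch, so last-click gives it no credit
# at all, while linear gives it the largest share of any channel.
```

Nothing about the underlying behavior changed between the two readouts; only the crediting rule did. That is why a discovery channel can look worthless under one model and essential under another.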

Upgrade signal quality before you blame creative

If performance suddenly gets volatile, don’t assume your creative got worse overnight. Check whether the system is learning from weaker signals. Many teams stabilize results by improving server-side event flows through Meta’s Conversions API and TikTok’s Events API, especially when consent and browser restrictions reduce observable conversions.

Scaling Stories

Story: Careem’s Fight to Prove TikTok Was Driving New Orders

Start (high drama): Careem had a growth problem that didn’t show up as a lack of traffic. People were seeing ads, installs were happening, and the charts looked busy, but the business still needed proof that media was actually creating new first orders. In a performance environment, “we think it helps” is not a safe sentence to bring into a budget conversation. If they couldn’t prove incrementality, the investment would always be vulnerable to cuts.

Backstory: Grocery and quick commerce live on speed and habit. Customers don’t “research” for weeks; they either try you and come back, or they don’t. That makes first order conversion a critical milestone, and it also makes measurement hard because many factors influence whether a person places that first order.

The wall: The team hit the classic attribution wall: platform-reported performance wasn’t enough to convince everyone internally. They needed a way to isolate the causal impact of TikTok from the background noise of other media and organic demand. Without that clarity, scaling spend would feel like guessing with bigger numbers.

The epiphany: The breakthrough was choosing controlled experimentation over debate. Instead of trying to “perfect attribution,” they focused on proving lift on the outcome the business cared about most: first orders. That single decision reframed the entire discussion from opinions to evidence.

The journey: Careem ran incrementality testing that separated exposed and control groups and examined discovery and conversion outcomes. TikTok’s own case write-up, “How Careem boosted discovery and conversions,” reports a 15.45% lift in first-order conversions on iOS, and the same lift is cited in TikTok and WARC’s measurement piece, “Why marketing needs a new measurement mindset.” The test framing and uplift figures also appear in the downloadable PDF, “TikTok Rethinking Attribution,” and in Adjust’s independent case study page, “Careem + TikTok with Adjust segmentation.”

The final conflict: Even with positive lift, the next challenge was operational: scaling without breaking what made the campaign work. When spend grows, creative fatigue accelerates, and teams can accidentally “optimize away” the very signals that drove lift. The team needed to protect the experimental mindset while moving into always-on execution.

The dream outcome: The win wasn’t just a lift number; it was decision clarity. With controlled evidence, the team could scale more confidently, refine where performance was strongest, and reduce internal friction around channel value. That’s the compounding advantage for anyone marketing across the top social media companies: when you can prove what’s incremental, you can scale with less fear and more precision.

Sell the system, not the post

Clients don’t actually want “more content.” They want predictable outcomes and fewer surprises. When you position your work as a system—measurement foundation, creative variation engine, and incrementality proof—you’re selling stability, not deliverables.

Make incrementality your trust signal

If you want to stand out as a professional, bring lift testing into the conversation early. Point to the tools the platforms provide, like Meta’s Conversion Lift and TikTok’s Conversion Lift Study, and explain how you’ll use experiments to protect budget decisions.

This doesn’t just improve results; it improves relationships, because it replaces “trust me” with “let’s test it.”

Anchor your scaling plan to market reality

When stakeholders feel pressure, they often demand shortcuts. Anchoring your plan to market-level signals helps you keep strategy grounded. Creator spend growth outlined in the IAB creator economy report is one example of a macro shift that explains why creator-first creative and faster production cycles are now a competitive baseline.

That’s how you promote professionally: not by promising miracles, but by showing a credible scaling model built for the reality of how the top social media companies operate today.

Future Trends

The next chapter for the top social media companies won’t be decided by who ships the flashiest feature. It’ll be decided by who controls three things at once: signal quality, creator economics, and distribution surfaces that reach people even when they’re not “in the feed.”

Measurement shifts from attribution to experiments

As consent, privacy, and browser changes keep chipping away at deterministic tracking, more teams are leaning on controlled testing to answer the real question: “Did this create incremental results?” You can see that shift in how platforms productize lift testing, including Meta’s Conversion Lift and TikTok’s Conversion Lift Study.

In parallel, the plumbing becomes the competitive baseline. Consent-aware tagging through Google Consent Mode and validating setup with GA4 consent verification increasingly determines whether your “performance” numbers are stable enough to optimize against.

Creators become a standalone media channel

Creators are no longer just “influencer marketing.” They’re becoming a primary media buy with dedicated budgets, operations, and measurement requirements. The IAB’s 2025 Creator Economy Ad Spend & Strategy Report projects U.S. creator ad spend reaching $37B in 2025, and the same report highlights that the category is growing far faster than the broader media industry.

This matters because the top social media companies are quietly optimizing their products around creator supply: better monetization tools, better distribution, better brand safety controls, and more ways to repurpose creator content into paid performance creative.

Social search and intent surfaces keep expanding

People increasingly use social platforms to discover products, places, and opinions, which pushes platforms to behave more like search engines and marketplaces. That trend reshapes what “wins” in content: clearer packaging, stronger proof, and assets that answer questions directly instead of just chasing engagement.

It also means that brands who build evergreen, searchable content libraries can keep collecting demand long after the original post stops trending.

Growth markets drive platform strategy

For many top social media companies, the biggest growth opportunities are coming from high-usage markets where smartphone adoption, data affordability, and youth demographics create huge time-spent potential. Reuters’ January 29, 2026 reporting on India’s market describes roughly 500 million unique social media users in India and highlights how platform reach differs by service.

For marketers, this trend matters because platform priorities often follow market growth. Features, formats, and monetization can evolve quickly when platforms compete for dominance in the markets that define their next decade.

The baseline audience keeps growing

Even with engagement rate volatility, the total addressable audience keeps expanding. Kepios’ analysis shows 5.66 billion social media user identities worldwide as of October 2025, which helps explain why budgets keep flowing into the platforms run by the top social media companies: the scale is still unmatched.

Strategic Framework Recap

If you want a reliable way to evaluate the top social media companies without getting trapped by hype cycles, this is the recap that holds up over time.

- Scale vs. stickiness: prioritize platforms where daily habits exist, not just headline user counts.

- Monetization strength: choose platforms where measurement and buying tools are mature enough to scale responsibly.

- Distribution mechanics: match your strategy to how the platform spreads content (graph, algorithm, search, or messaging).

- Ecosystem gravity: follow where creators, brands, and partners build repeatable playbooks.

- Risk and resilience: bake in consent, privacy, and market shifts so your growth plan stays stable.

The practical idea is simple: you’re not trying to “win social.” You’re building a portfolio across the top social media companies where each platform has a clear job, a clear measurement approach, and a clear reason to stay in the mix.

FAQ – Built for the Complete Guide

1) What does “top social media companies” actually mean in practice?

It means the companies behind platforms that lead on scale, retained attention, monetization, and ecosystem leverage. “Top” changes depending on what you’re measuring, which is why it’s useful to evaluate platforms with a consistent framework instead of a single leaderboard.

2) Is it better to focus on one platform or spread across multiple?

A portfolio approach is usually safer: one channel that reliably converts, one that builds demand through discovery, one that captures intent, and one hedge to reduce dependency risk. This matters more as measurement becomes noisier and platform rules change more often.

3) Why do my results look different in GA4 than in the ad platform?

They’re measuring differently and attributing differently. GA4’s attribution documentation explains how model choice and reporting scope affect credit assignment, while ad platforms emphasize optimization signals that help delivery systems learn.

4) What’s the fastest way to improve paid performance across top social media companies?

Start by improving signal quality: make sure consent-aware measurement is implemented with Google Consent Mode and validated via GA4 consent verification, then strengthen event flows using tools like Meta’s Conversions API or TikTok’s Events API where appropriate.

5) How do I know if my social ads are truly incremental?

Use lift testing instead of relying only on attribution. Meta’s Conversion Lift and TikTok’s Conversion Lift Study are designed to estimate causal impact by comparing exposed and control groups.

6) Are creators still worth investing in, or is it saturated?

Creators are becoming a mainstream media channel, not a side tactic. The IAB’s 2025 Creator Economy Ad Spend & Strategy Report projects $37B in U.S. creator ad spend in 2025, which reflects how brands are formalizing creator investment with real budgets and measurement expectations.

7) What benchmarks should I use so I’m not guessing?

Pick one consistent benchmark source per platform and stick with it long enough to learn. Cross-industry comparisons in Rival IQ’s 2025 benchmark report and current platform benchmarks in Socialinsider’s 2026 roundup are useful context, but your most important benchmark is your own baseline trend over 30–90 days.

8) How often should I audit my channel mix across the top social media companies?

Quarterly is the sweet spot for most teams. It’s long enough to let creative learning compound and short enough to react to platform shifts. Use the audit to confirm each platform still has a clear job and your measurement foundation still holds.

9) What’s the biggest mistake businesses make when choosing platforms?

Trying to do everything everywhere before proving repeatability. The result is inconsistent cadence, shallow learning, and reporting that doesn’t build confidence. Pick two core channels first, stabilize measurement, then expand once you have a format and offer that consistently performs.

10) How big is the audience opportunity still, realistically?

The audience baseline is massive and still growing. Kepios’ reporting shows 5.66 billion social media user identities worldwide as of October 2025. The real question isn’t “is the audience there?” It’s “can you earn attention and trust amid the competition?”

11) Which trend is most likely to change how social is measured next?

Expect experimentation and consent-aware measurement to become the default. More teams are combining lift testing with improved measurement foundations like Consent Mode because attribution alone is increasingly fragile in privacy-constrained environments.

Work With Professionals

Most marketers don’t struggle because they lack ideas. They struggle because the work that actually wins on the platforms run by the top social media companies is relentless: consistent content, fast testing, clean measurement, and the confidence to scale what works without guessing.

That pressure becomes even heavier when you’re freelancing. You’re expected to deliver strategy, execution, reporting, and calm leadership energy—often without the resources an in-house team would have.

That’s why marketplaces that remove friction matter. Markework positions itself as a marketing marketplace where you can build a profile, browse listings, and connect directly, with a simple promise: no middleman and no project fees. The platform also highlights direct communication and simple monthly plans, which can make it easier to keep your pipeline predictable while you focus on doing great work.

If you’re ready to stop chasing scattered leads and start building momentum with companies actively looking for marketing specialists—performance, paid social, SEO, lifecycle, content, analytics—Markework is built for that exact reality. You set the direction, you control the conversations, and you keep the relationship direct.