If you’ve ever looked at a campaign report and thought, “We spent money… but I’m not sure what we actually learned,” you’re not alone. Paid social can feel like a slot machine when the work is rushed, the targeting is vague, and the creative is “good enough.”

A solid social media advertising strategy makes paid social behave less like a gamble and more like a system. It clarifies what you’re trying to achieve, who you’re trying to move, what you’ll show them, and how you’ll know it’s working—before you launch, not after the budget is gone.

Article Outline

- What Is a Social Media Advertising Strategy?

- Why a Social Media Advertising Strategy Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is a Social Media Advertising Strategy?

A social media advertising strategy is the decision system behind your paid social work—what you’ll run, why you’ll run it, and how each decision connects to business outcomes. It sits above platform tactics (like “use Advantage+” or “try lead forms”) and instead defines the structure that makes those tactics useful.

In practice, it’s a set of choices you can defend:

- Outcome: the business result you’re buying (pipeline, sales, qualified leads, subscriptions, store visits, retention).

- Audience: the specific people you want to move, and what makes them “ready” right now.

- Offer: the reason to act today (not just “learn more”).

- Creative: the message and format that earns attention and builds belief.

- Measurement: the proof you’ll collect, and how it will change your next decision.

Think of it like engineering constraints. Platforms change constantly—formats, auctions, targeting controls, even what “best practice” means. A strategy is the stable layer that lets you adapt without starting over every quarter.

Why a Social Media Advertising Strategy Matters

Paid social is now too big—and too competitive—to wing it. In the U.S., social media advertising revenue reached about $88.8B in 2024, and total digital advertising hit roughly $259B. When that much money is flowing through auctions, “average” campaigns get priced out fast.

At the same time, the opportunity is massive because attention is concentrated. Social platforms sit inside daily habits at global scale, and their ad delivery systems are accelerating with automation and AI. Meta’s own reporting shows ad delivery volume continues to climb, with ad impressions up year over year in 2025, while prices also moved upward. Translation: if your message and funnel aren’t tight, you can spend more and get less.

A good social media advertising strategy matters because it solves four real problems teams run into:

- It prevents “random acts of advertising.” Every campaign has a job. If it can’t explain its job, it doesn’t ship.

- It protects budget from algorithm drift. Platforms optimize toward the easiest conversion unless you constrain them with clean signals, real offers, and thoughtful structure.

- It turns creative into an asset, not a cost. You’re not just making ads—you’re building a library of tested angles that compound over time.

- It makes reporting actionable. The goal isn’t prettier dashboards; it’s knowing what to change next Monday.

There’s also a channel mix reality. Creator-led placements are pulling serious budget, with IAB projecting U.S. creator ad spend at $37B in 2025. That doesn’t replace paid social—it changes what wins inside paid social. Your strategy has to account for native storytelling, creator-style creative, and faster iteration cycles.

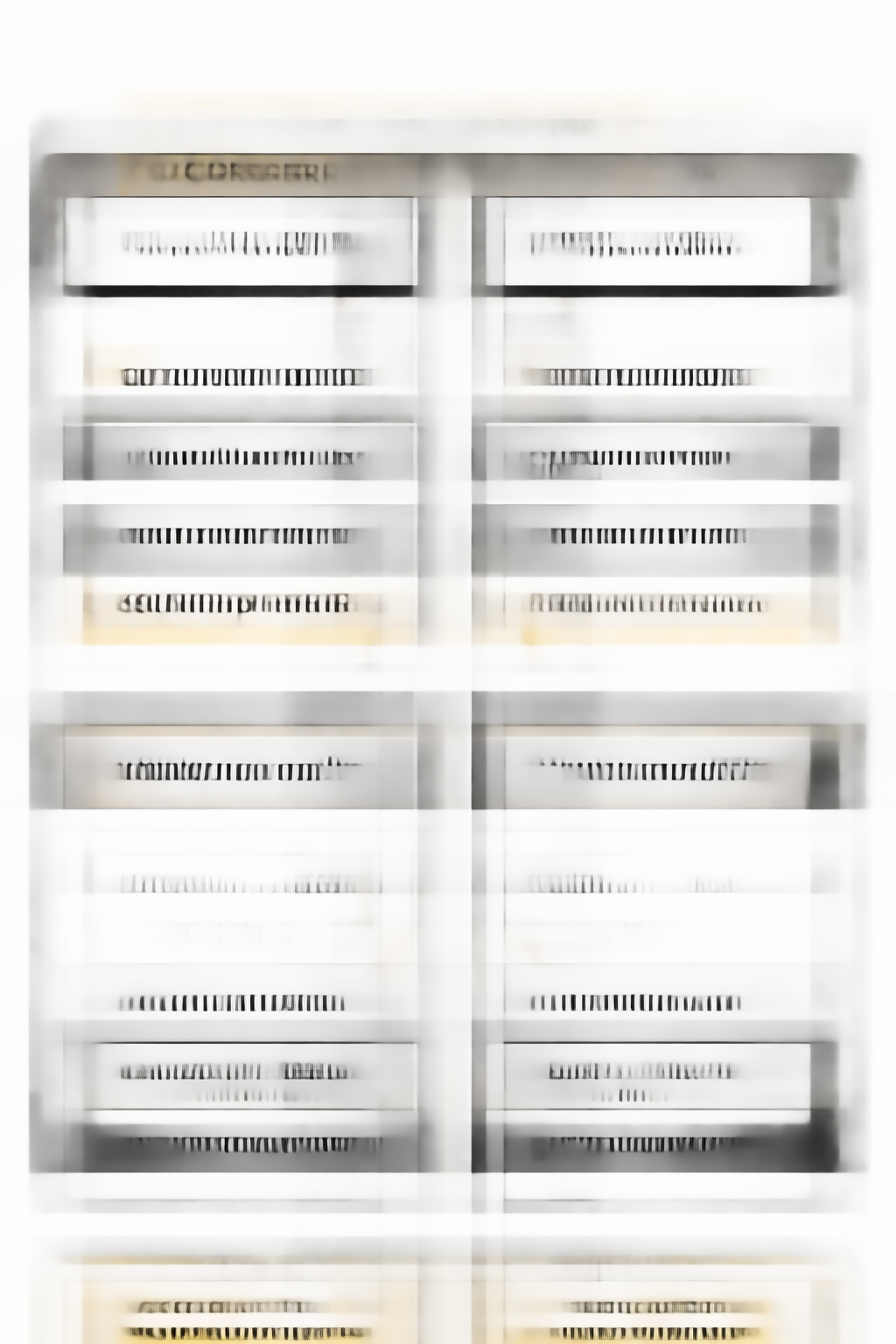

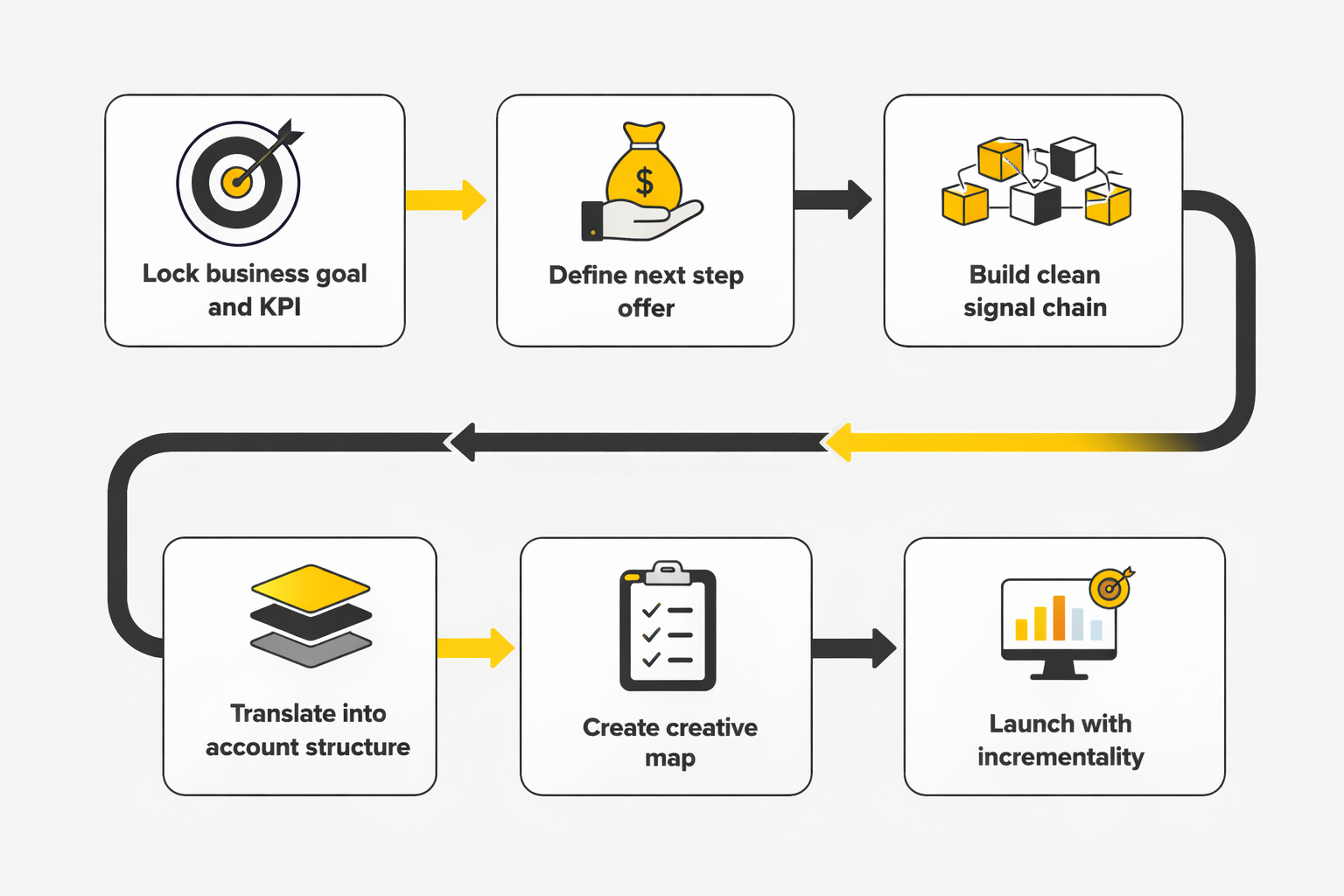

Framework Overview

This framework is designed to be practical: you can use it to build a new social media advertising strategy from scratch, or to diagnose why an existing account feels “busy” but not effective.

It follows a simple logic chain:

- Start with the business constraint. What does the business need most—cash now, pipeline, trials, retention, reactivation, brand trust?

- Translate that into a funnel promise. What must be true for a conversion to happen (beliefs, objections, urgency, trust signals)?

- Build campaigns as experiments, not guesses. Each campaign tests one meaningful idea: an audience hypothesis, an offer, or a creative angle.

- Measure the decision, not just the metric. A number matters only if it tells you which lever to pull next.

Underneath, you’ll keep the account organized around three repeating loops:

- Discovery loop: find audiences and messages that generate efficient attention.

- Conversion loop: turn that attention into qualified actions using friction-reducing offers and landing experiences.

- Learning loop: convert performance data into better creative and better structure.

Those loops don’t depend on any single platform feature, which is why they hold up even when targeting options change or new formats appear.

Core Components

Most “strategy” advice stays abstract. Here are the concrete components that actually make a social media advertising strategy work in the real world.

1) A Single, Non-Negotiable Objective

Pick one primary objective per campaign group, and make it measurable in business terms. “More awareness” isn’t a useful objective unless you define what awareness is supposed to do (increase branded search, improve conversion rate downstream, lift store visits, reduce CAC by warming traffic).

If your stakeholders want everything at once, your job is to sequence it: what comes first, what comes next, and what gets deprioritized. Strategy is often just saying “not yet” to the distractions that dilute learning.

2) Audience Built Around Readiness, Not Demographics

Great targeting is less about who someone is and more about what they’re trying to solve right now. That means your audience definition should reflect intent signals (behaviors, interests, creator ecosystems, search intent, site engagement), and it should be paired with a message that matches that moment.

In many accounts, the fastest way to improve results isn’t “narrower targeting.” It’s better matching: the right promise for the right level of awareness.

3) An Offer That Earns the Click

“We don’t do offers” usually means “we haven’t packaged value tightly enough for cold audiences.” Your offer doesn’t have to be a discount. It can be a consultation with a clear outcome, a diagnostic, a trial with a strong activation path, a limited-capacity onboarding, or a bundle that makes the first step feel safe.

Your social media advertising strategy should define offer tiers by temperature:

- Cold: low-risk, high-clarity next step that builds trust.

- Warm: proof-driven action with stronger commitment (demo, application, consult).

- Hot: direct purchase or high-intent conversion with minimal friction.

4) Creative as a Testing System

Creative isn’t decoration—it’s the targeting. Platforms can find people, but your ad has to win the moment: stop, communicate, and persuade. That’s why a strategy should define a creative testing map, not just “make 10 new ads.”

A simple, effective map tests:

- Hook: what grabs attention in the first second (problem, desire, pattern interrupt, credibility).

- Angle: the core reason to believe (speed, quality, social proof, simplicity, cost, status, safety).

- Format: founder POV, UGC-style, product demo, customer story, comparison, myth-busting.

- Proof: evidence that reduces risk (case study, third-party validation, transparent pricing, guarantees, process).

On platforms where native storytelling dominates, your creative strategy should explicitly borrow from what people already watch—especially creator-led formats. That shift is one reason creator-driven investment keeps rising in the broader ecosystem, highlighted in IAB’s creator economy findings.

5) Measurement That Connects to Decisions

Measurement becomes strategic when it answers questions like: Is the problem the audience, the offer, the creative, or the landing experience? If you can’t diagnose that, you’ll keep “optimizing” by changing everything at once.

At minimum, your measurement layer should define:

- Primary KPI: the metric that reflects the campaign’s job (qualified leads, purchases, pipeline value, CAC).

- Guardrails: metrics that prevent cheap but useless outcomes (lead quality checks, refund rate, retention, qualification rate).

- Attribution expectations: what you will and won’t believe, based on the sales cycle and channel mix.

This matters more as platforms automate. Meta’s reporting shows both delivery and pricing can move quickly over a year, as seen in its 2025 results update. If you don’t have clean measurement guardrails, automation can optimize toward the wrong thing—efficiently.
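One way to make the KPI-plus-guardrails idea concrete is to write it down as a rule that a report can evaluate. The sketch below is only an illustration: the metric names, the thresholds, and the `judge_campaign` helper are all hypothetical, and your own primary KPI and guardrails would replace them.

```python
from dataclasses import dataclass
from typing import Dict, List, Optional


@dataclass
class Guardrail:
    """A bound on a secondary metric that must hold before a 'win' counts."""
    metric: str
    minimum: Optional[float] = None
    maximum: Optional[float] = None

    def passes(self, value: float) -> bool:
        if self.minimum is not None and value < self.minimum:
            return False
        if self.maximum is not None and value > self.maximum:
            return False
        return True


def judge_campaign(cac: float, cac_target: float,
                   observed: Dict[str, float],
                   guardrails: List[Guardrail]) -> str:
    """Return a decision label; the primary KPI only counts if every guardrail holds."""
    failed = [g.metric for g in guardrails if not g.passes(observed[g.metric])]
    if failed:
        return "investigate: guardrail failed (" + ", ".join(failed) + ")"
    return "scale" if cac <= cac_target else "iterate"


# Hypothetical thresholds: replace with values from your own sales data.
guardrails = [
    Guardrail("qualification_rate", minimum=0.30),
    Guardrail("refund_rate", maximum=0.05),
]

# CAC beats the target, but lead quality is too low for the result to count.
decision = judge_campaign(
    cac=42.0, cac_target=50.0,
    observed={"qualification_rate": 0.18, "refund_rate": 0.02},
    guardrails=guardrails,
)
print(decision)
```

The point of the design is that a cheap result never reaches the "scale" label on its own; it has to clear the quality checks first.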

Professional Implementation

The difference between “we run ads” and “we run a strategy” is operational discipline. Professionals don’t just launch campaigns—they build a repeatable workflow that keeps learning intact even when creative changes, stakeholders change, or platforms change.

The sections that follow show how to implement this social media advertising strategy as a real system: account structure, testing cadence, creative production rhythm, and the specific documentation that prevents the team from relearning the same lessons every month.

Step-By-Step Implementation

A social media advertising strategy becomes effective when it’s implemented as a repeatable operating system, not a one-time campaign plan. The steps below are designed to get you from “we have ideas” to “we have a machine” without drowning in complexity. You’ll notice the focus is less on platform tricks and more on building clean inputs that modern ad systems can learn from.

Step 1: Lock The Business Goal And The One Metric That Decides “Win”

Start with the decision the business needs to make in the next 60–90 days: scale acquisition, improve lead quality, increase repeat purchases, or defend margin. Then pick one primary KPI that reflects that decision, and make every campaign report answer the same question: “Did we move that KPI?” This reduces the “too many metrics” spiral that kills focus.

Budget pressure is real because auctions are growing and getting more automated. Meta reported full-year 2025 ad impressions up 12% and average price per ad up 9%, which is exactly the environment where fuzzy goals get punished. That shift shows up clearly in Meta’s 2025 results.

Step 2: Define The Offer As A “Next Step,” Not A Campaign Theme

Don’t start with “we’ll run a spring campaign.” Start with the next step your audience is willing to take today. For cold audiences, that step needs to feel low-risk and high-clarity; for warm audiences, it can ask for more commitment because trust already exists.

This is where many strategies quietly fail: the targeting is fine, the creative is decent, but the offer doesn’t match the moment. The simplest fix is to write the offer in one sentence that includes who it’s for, what they get, and what happens after they act. If you can’t write that sentence, you don’t have an offer yet.

Step 3: Build A Clean Signal Chain Before You Scale

Modern delivery systems thrive on reliable conversion signals. That usually means pairing browser tracking with server-side events so your optimization doesn’t collapse when browsers, blockers, and consent rules cut visibility.

At the platform level, this looks like implementing server integrations such as Meta’s Conversions API. When the same event arrives from both the pixel and the server, Meta deduplicates the pair by matching the event name together with the pixel’s eventID and the server’s event_id, so both sides need to send identical values. Meta’s deduplication guidance is straightforward and worth following exactly. For cross-channel analysis and offline capture, GA4’s Measurement Protocol is built to send server-to-server and offline interactions into Analytics as events. Google’s Measurement Protocol docs spell out how it augments (not replaces) normal tagging.
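To show what "matching IDs" means in practice, here is a minimal sketch that generates one ID and uses it for both the browser call and the server payload. The helper name `build_purchase_events` and the sample values are made up for illustration. The payload fields follow the general shape of a Conversions API event, but check Meta's current documentation before relying on any of it. Nothing is sent over the network here.

```python
import hashlib
import json
import time
import uuid


def hash_email(email: str) -> str:
    """Trim and lowercase the address, then SHA-256 hash it for the `em` field."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_events(email: str, value: float, currency: str, page_url: str):
    """Build a browser pixel call and a server payload that share one event ID."""
    event_id = str(uuid.uuid4())  # generated once, reused on both sides

    # Browser side: the ID is passed as `eventID` in the fourth argument.
    browser_call = "fbq('track', 'Purchase', %s, %s);" % (
        json.dumps({"value": value, "currency": currency}),
        json.dumps({"eventID": event_id}),
    )

    # Server side: same event name, same ID, sent as `event_id`.
    server_event = {
        "event_name": "Purchase",
        "event_time": int(time.time()),
        "event_id": event_id,
        "action_source": "website",
        "event_source_url": page_url,
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": value, "currency": currency},
    }
    return browser_call, {"data": [server_event]}


browser_call, payload = build_purchase_events(
    "  Jane@Example.com ", 49.0, "USD", "https://example.com/thanks"
)
print(browser_call)
print(json.dumps(payload, indent=2))
```

Generating the ID in one place, typically at order creation, is the design choice that matters. If the browser and the server each invent their own ID, the platform has nothing to match on.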

Step 4: Translate Strategy Into Account Structure That Protects Learning

Account structure should make it hard to confuse results. When everything is blended, you never learn what actually worked, and every “optimization” becomes guesswork.

- Separate by objective: don’t mix prospecting and retargeting in one bucket if you need clean reads.

- Separate by offer tier: cold-friendly steps vs high-intent steps.

- Separate by creative hypothesis: if you’re testing a new angle, isolate it so you can trust the outcome.

This doesn’t mean over-fragmenting. It means your structure should mirror the questions you need answered.

Step 5: Create A Creative Map With Only Three Test Variables At A Time

Creative testing fails when teams change everything at once. Instead, decide what you’re testing this week and what stays stable.

- Test variable A: hook (first-second pattern interrupt).

- Test variable B: angle (the core reason to believe).

- Test variable C: proof (evidence that reduces risk).

Keep audience and offer stable while you test creative, or keep creative stable while you test audiences. This is how a social media advertising strategy turns creative into a compounding asset instead of a constant expense.

Step 6: Launch With Measurement That Can Answer “Incremental Or Not?”

Attribution dashboards are helpful for steering, but they are not the same as causality. When budgets scale, you’ll eventually need incrementality methods that compare exposed versus holdout groups.

That’s the logic behind tools like Conversion Lift studies, which TikTok frames as incrementality through experimentation. TikTok’s Conversion Lift Study explainer lays out the rationale clearly. Meta describes similar methodology for measuring true incremental impact using test and control groups. Meta’s Conversion Lift overview shows how the approach is intended to be used.
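The arithmetic behind a lift readout is simple enough to sketch. The example below compares conversion rates for an exposed group and a holdout group and runs a standard two-proportion z-test. It is a simplified illustration with invented numbers, not the method any platform uses internally; the function name `conversion_lift` is hypothetical.

```python
import math


def conversion_lift(exposed_users: int, exposed_conv: int,
                    holdout_users: int, holdout_conv: int) -> dict:
    """Compare conversion rates between an exposed group and a holdout group."""
    rate_exposed = exposed_conv / exposed_users
    rate_holdout = holdout_conv / holdout_users
    diff = rate_exposed - rate_holdout

    # Two-proportion z-test using the pooled conversion rate.
    pooled = (exposed_conv + holdout_conv) / (exposed_users + holdout_users)
    std_err = math.sqrt(pooled * (1 - pooled)
                        * (1 / exposed_users + 1 / holdout_users))
    z = diff / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided

    return {
        "relative_lift": diff / rate_holdout,
        "incremental_conversions": diff * exposed_users,
        "z": z,
        "p_value": p_value,
    }


# Invented numbers, for illustration only.
result = conversion_lift(100_000, 2_180, 100_000, 2_000)
print(f"relative lift: {result['relative_lift']:.1%}")
print(f"incremental conversions: {result['incremental_conversions']:.0f}")
print(f"p-value: {result['p_value']:.4f}")
```

The incremental conversions figure is the one to bring into budget conversations, because it counts only the conversions the holdout comparison suggests would not have happened without the ads.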

Execution Layers

Execution gets easier when you separate work into layers. Each layer has its own owner, its own checklist, and its own definition of “done.” This prevents the common failure mode where creative, targeting, and tracking are all being rebuilt mid-flight, and nobody knows what caused the change in results.

Layer 1: Foundation

This layer makes sure your social media advertising strategy has something solid to stand on. It includes naming conventions, event definitions, UTMs, landing page hygiene, and the signal chain that feeds platforms and analytics.

If this layer is weak, you’ll spend your life debating reporting instead of improving performance. GA4 is often used as a neutral ledger here, especially when you need server and offline events through Measurement Protocol. Google’s help documentation explains the role of Measurement Protocol for non-web sources.
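Naming conventions and UTMs are easier to enforce when they come from a helper rather than from memory. The sketch below assumes a hypothetical convention of objective, offer tier, and creative hypothesis joined with underscores, and a fixed `utm_medium` of `paid_social`. Both are assumptions to swap for your own scheme.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def campaign_name(objective: str, offer_tier: str, hypothesis: str) -> str:
    """Join the three parts into one lowercase name, e.g. prospecting_cold_founder-pov."""
    parts = (objective, offer_tier, hypothesis)
    return "_".join(p.strip().lower().replace(" ", "-") for p in parts)


def add_utms(url: str, source: str, campaign: str, content: str) -> str:
    """Append UTM parameters while keeping any query string already on the URL."""
    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query))
    query.update({
        "utm_source": source,
        "utm_medium": "paid_social",  # assumed fixed value for this channel
        "utm_campaign": campaign,
        "utm_content": content,
    })
    return urlunsplit((parts.scheme, parts.netloc, parts.path,
                       urlencode(query), parts.fragment))


name = campaign_name("Prospecting", "Cold", "Founder POV")
tagged = add_utms("https://example.com/offer?ref=1", "meta", name, "hook-a")
print(name)
print(tagged)
```

Because the campaign name encodes objective, tier, and hypothesis, the same string can be filtered on in both the ad platform and analytics without a lookup table.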

Layer 2: Campaign System

This layer is your account structure and budget logic. It defines how you separate prospecting from retargeting, how you handle offer tiers, and how you prevent short-term volatility from killing long-term learning.

It also includes the “budget guardrails” that keep automation from optimizing toward the wrong outcome. If your KPI is revenue, your optimization needs revenue-grade signals, not just cheap clicks. A campaign system should make your intent obvious to the platform and to anyone reading the account.

Layer 3: Creative System

This layer is where you build the inputs that win attention and build belief. It’s your creative map, your production cadence, and your testing rules.

In a market where social ad spend is enormous, average creative is expensive. Social media advertising revenues totaled $88.8B in 2024 in the U.S., which is the kind of number that explains why competition for attention is brutal. That scale is documented in the IAB/PwC 2024 Internet Ad Revenue Report.

Layer 4: Measurement And Learning

This layer turns results into decisions. It’s where you define what counts as a real improvement, how you validate tracking, and when you run incrementality tests to confirm lift.

As privacy and automation reshape reporting, marketing mix modeling is becoming practical again for teams that need channel-level truth. Google positions Meridian as an open-source MMM framework that teams can run in-house to answer ROI and budget allocation questions. Google’s Meridian project documentation explains what it’s designed to do.

Optimization Process

Optimization is not “tweak settings until CPA drops.” A professional social media advertising strategy uses a loop that protects learning: observe, diagnose, change one lever, and validate that the change created the improvement.

The Weekly Loop

Weekly optimization is about protecting efficiency without destroying your tests. You’re looking for delivery issues, creative fatigue signals, tracking anomalies, and obvious mismatches between offer and audience.

- Stabilize signals: verify key events are flowing and deduplicating properly so you’re not “optimizing” based on broken data. Meta’s pixel and server event deduplication rules are simple and non-negotiable.

- Act on creative first: if performance dips, refresh hooks and proof before you rebuild targeting.

- Guard the offer: if clicks are strong but conversions stall, review landing friction and offer clarity before blaming the algorithm.
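For the "stabilize signals" check, a quick audit of your own event logs can reveal deduplication gaps before they distort optimization. The sketch below assumes you can export browser-side and server-side events as lists of dictionaries with `event_name` and `event_id` keys. That export format is hypothetical, and the function only inspects your own data, not anything the platform reports.

```python
from typing import Dict, List, Optional

Event = Dict[str, Optional[str]]


def dedup_coverage(browser_events: List[Event], server_events: List[Event]) -> Dict[str, int]:
    """Count events that can and cannot be paired by (event_name, event_id)."""
    def keys(events: List[Event]) -> set:
        return {(e["event_name"], e["event_id"]) for e in events if e.get("event_id")}

    browser_keys = keys(browser_events)
    server_keys = keys(server_events)
    return {
        "matched": len(browser_keys & server_keys),
        "browser_only": len(browser_keys - server_keys),
        "server_only": len(server_keys - browser_keys),
        # Events with no ID at all can never be deduplicated.
        "missing_id": sum(1 for e in browser_events + server_events
                          if not e.get("event_id")),
    }


browser = [
    {"event_name": "Purchase", "event_id": "a1"},
    {"event_name": "Lead", "event_id": "b2"},
    {"event_name": "Purchase", "event_id": None},
]
server = [
    {"event_name": "Purchase", "event_id": "a1"},
    {"event_name": "Lead", "event_id": "zz"},
]
report = dedup_coverage(browser, server)
print(report)
```

A rising `missing_id` or `server_only` count from one week to the next is a cue to fix tracking before touching creative or budgets.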

The Biweekly Testing Rhythm

Every two weeks, ship a controlled test that answers one meaningful question. That question might be a new creative angle, a new offer tier, or a new audience pool, but not all three at once.

This rhythm creates compounding knowledge. It also helps you avoid the emotional rollercoaster that comes from reacting to daily volatility. Your goal is to build a library of “what works here,” not chase a perfect day in Ads Manager.

The Quarterly Proof Cycle

Quarterly is when you prove impact at a level stakeholders trust. This is where incrementality testing belongs, especially before major budget increases or channel reallocation.

TikTok positions Conversion Lift Study as incrementality through experimentation, explicitly comparing a group exposed to ads with one that was not. That methodology is outlined in TikTok’s CLS overview. Meta provides a similar framework for Conversion Lift testing to quantify incremental impact beyond attribution models. Meta’s documentation explains the purpose and structure.

Implementation Stories

The easiest way to understand implementation is to see what happens when a real team tries to prove impact under real pressure. The story below is built from a publicly documented case study, with results and methodology described by the platform that ran the measurement.

Domino’s Spain And The Euro 2024 Pressure Test

The moment the Euro 2024 schedule locked in, the clock started ticking. Domino’s Spain wanted to ride the surge of attention, but it wasn’t enough to “be present” on TikTok. The real challenge was proving that TikTok wasn’t just entertainment spend, but a channel that could move sales and measurable intent.

The backstory was practical: Domino’s is a delivery-first brand with a reputation for affordability, and big sports moments naturally spike ordering behavior. But spikes don’t automatically mean ads caused the spike. That’s why the team set a clear goal: understand whether TikTok could genuinely impact business outcomes during a major event. That objective is described in TikTok’s published Domino’s case study.

The wall showed up in the measurement problem. If viewers see an ad and later search “Domino’s” on Google or order on the app, a simplistic attribution view can miss the causal link. Even worse, it can make the channel look weaker than it is, which leads to underinvestment. Domino’s needed a way to separate coincidence from incremental lift.

The epiphany was to treat measurement as part of the strategy, not something you do after the campaign. During the Euro 2024 period, they activated a full-funnel setup that combined brand and performance ads to stay top-of-mind while still driving conversions. They used In-Feed Ads optimized toward web conversions (complete payment) and app installs, then paired that with a Conversion Lift Study built to compare people who saw the ads with people who did not. The solution and test design are detailed in the same TikTok case study.

The journey turned into a disciplined signal plan. The Omni-channel Conversion Lift approach allowed them to capture incremental conversion events from both web and app, which is crucial when customers move between devices. They also measured Search Lift to quantify incremental Google searches among exposed users, because search is often where intent shows up after discovery. The campaign wasn’t treated as one KPI; it was treated as a path from attention to intent to purchase. TikTok describes the omnichannel and search lift measurement approach explicitly.

The final conflict was the reality of event-driven volatility. Results can swing with match schedules, national team moments, and social chatter, making it easy to misread performance if you only look at totals. In the case study write-up, TikTok notes spikes during key matches featuring Spain, and a notable surge during the final. That kind of volatility is exactly why controlled measurement matters, because it helps you interpret peaks as incremental behavior rather than noise. Those match-linked observations are recorded in the published results section.

The dream outcome was proof the channel moved real actions, not just views. The study reported a 14% lift in app install conversion rates, a 6% lift in brand-related searches on browsers, and a 9% lift in complete payments across web and app. The key win wasn’t one metric—it was demonstrating influence across the funnel with statistical significance from an incremental perspective, giving the team a stronger basis for future budget decisions. Those lift results are published directly in TikTok’s Domino’s Spain Conversion Lift Study case study.

The Implementation Checklist Professionals Actually Use

- One-page strategy brief: objective, audience readiness, offer, creative map, measurement plan.

- Signal chain doc: event definitions, deduplication logic, validation steps, and “what breaks what.” Meta’s event deduplication requirements are a good model for this.

- Creative library: every ad tagged by hook, angle, proof, and offer tier so you can replicate what works.

- Testing log: hypothesis, what stayed constant, what changed, what happened, what you’ll do next.

- Quarterly truth layer: at least one incrementality study or calibration exercise so budgeting doesn’t rely on attribution faith. TikTok’s CLS framework is designed for exactly this kind of proof.
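The testing log in the checklist above can be as light as one record per test, with a built-in check that only one thing changed. Here is a rough sketch. The `ExperimentLog` class, the dimension names, and the sample entry are illustrative assumptions, not a standard format.

```python
from dataclasses import dataclass
from typing import Dict, List

# The levers a test is allowed to vary; adjust to your own creative map.
TEST_DIMENSIONS = ("audience", "offer", "hook", "angle", "proof")


@dataclass
class ExperimentLog:
    hypothesis: str
    control: Dict[str, str]
    variant: Dict[str, str]
    result: str = ""
    next_action: str = ""

    def changed(self) -> List[str]:
        """List every dimension where the variant differs from the control."""
        return [d for d in TEST_DIMENSIONS
                if self.control.get(d) != self.variant.get(d)]

    def is_clean(self) -> bool:
        """A clean test changes exactly one dimension."""
        return len(self.changed()) == 1


control = {"audience": "broad", "offer": "free-diagnostic", "hook": "problem",
           "angle": "speed", "proof": "case-study"}
entry = ExperimentLog(
    hypothesis="A credibility hook beats a problem hook for cold traffic",
    control=control,
    variant={**control, "hook": "credibility"},
)
print(entry.changed(), entry.is_clean())
```

If `is_clean()` comes back false, the log has already told you the result will be hard to interpret, which is cheaper to learn before launch than after.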

Why This Scales

Social platforms aren’t getting simpler, and spending isn’t getting cheaper. Meta’s own financial reporting shows sustained growth in delivery volume and pricing dynamics, which raises the cost of messy execution. Those year-over-year movements are visible in Meta’s full-year 2025 results.

When your implementation is disciplined, you earn the right to scale. Creative tests become cleaner, budget conversations become easier, and measurement becomes something you trust rather than something you argue about. That’s when a social media advertising strategy stops being a document and becomes a predictable growth system.

Statistics And Data

Data is the part of a social media advertising strategy that keeps you honest. Creative can be exciting, and platform features can feel like shortcuts, but the numbers are what decide whether you’ve built demand or just rented attention for a week.

It also helps to zoom out and remember the scale of the environment you’re competing in. Social media advertising revenue in the U.S. reached $88.8B in 2024, and total digital ad revenue hit about $259B. Those 2024 totals are broken out in the IAB/PwC full-year report, reinforced by IAB’s release summary and recapped in industry coverage of the same dataset.

On the supply side, platforms are expanding inventory and improving delivery through AI, which changes what “normal” performance feels like. Meta reported full-year 2025 ad impressions up 12% and average price per ad up 9%. Those year-over-year shifts are stated directly in Meta’s full-year 2025 results, echoed in the distributed earnings release, and summarized in WARC’s write-up.

Performance Benchmarks

Benchmarks are useful, but only when you treat them like guardrails rather than a scoreboard. The easiest way to wreck a social media advertising strategy is to chase a “good CTR” while ignoring whether the clicks are qualified, or to celebrate a low CPA while the sales team quietly hates the leads.

Instead of asking “What’s the average CTR?”, start by asking, “What does healthy look like for our business model?” That baseline should be built from your own last 90 days of data, segmented by:

- Funnel stage: prospecting, consideration, retargeting.

- Offer tier: low-risk next step vs high-intent conversion.

- Creative format: creator-style video, demo, static, carousel, testimonial.

- Placement mix: feed-heavy vs Reels/short-form vs story-heavy.

External benchmarks help you sanity-check direction. For example, multiple paid media benchmark sources have described periods where impression volume grew while CPM pressure eased in parts of 2025, which can make “efficiency” look better even if your creative isn’t improving. Tinuiti’s benchmark commentary highlights how impression growth and CPM movement can change the feel of scaling, and Skai’s quarterly reporting tracks similar auction dynamics across paid social.

The practical benchmark rule is simple: you don’t compare your CTR to the internet. You compare your CTR to your own CTR from the last test cycle, while keeping the offer and audience readiness constant. That’s how benchmarks support learning instead of distracting from it.
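That comparison against your own last cycle can be computed directly from exported rows. The sketch below groups by funnel stage and offer tier and reports the relative change in CTR between two cycles. The row format and the numbers are invented for illustration; a real export would have more columns and more segments.

```python
from collections import defaultdict
from typing import Dict, List, Tuple

Segment = Tuple[str, str]


def ctr_by_segment(rows: List[dict]) -> Dict[Segment, float]:
    """Aggregate clicks and impressions per (funnel_stage, offer_tier), then divide."""
    totals = defaultdict(lambda: [0, 0])
    for row in rows:
        key = (row["funnel_stage"], row["offer_tier"])
        totals[key][0] += row["clicks"]
        totals[key][1] += row["impressions"]
    return {k: clicks / imps for k, (clicks, imps) in totals.items() if imps > 0}


def compare_cycles(previous: List[dict], current: List[dict]) -> Dict[Segment, float]:
    """Relative CTR change per segment, only for segments present in both cycles."""
    prev, cur = ctr_by_segment(previous), ctr_by_segment(current)
    return {k: (cur[k] - prev[k]) / prev[k]
            for k in cur if k in prev and prev[k] > 0}


previous_cycle = [
    {"funnel_stage": "prospecting", "offer_tier": "cold",
     "clicks": 120, "impressions": 10_000},
]
current_cycle = [
    {"funnel_stage": "prospecting", "offer_tier": "cold",
     "clicks": 90, "impressions": 6_000},
    {"funnel_stage": "prospecting", "offer_tier": "cold",
     "clicks": 60, "impressions": 4_000},
]
changes = compare_cycles(previous_cycle, current_cycle)
for segment, change in changes.items():
    print(segment, f"{change:+.0%}")
```

Summing clicks and impressions before dividing avoids averaging ad-level CTRs, which would give small ads the same weight as large ones.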

Analytics Interpretation

Good interpretation is not “reading metrics.” It’s diagnosing why the metric moved, and what lever you should pull next without breaking the rest of the system.

The Three Questions That Keep Your Reporting Useful

1) Is the problem attention, persuasion, or friction? If impressions and video views look healthy but clicks are weak, the hook or message is likely the issue. If clicks are strong but conversions stall, you’re usually dealing with landing page friction or an offer mismatch.

2) Did performance change because demand changed, or because delivery changed? Auction conditions shift. Inventory expands. Platforms adjust ranking. Meta’s full-year 2025 reporting shows both impression volume and ad pricing moved year over year, which can make “improvements” look real even when they’re partially market-driven. Those delivery and pricing changes are visible in the earnings metrics.

3) Are you measuring what you can actually act on? A metric is only valuable if it tells you what to do next. That’s why professionals combine fast steering signals (creative performance, conversion rate trends) with periodic truth signals (incrementality tests and calibration).
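The first question can be turned into a simple triage rule that walks the funnel in order. This is a sketch under stated assumptions: the mapping of view rate to attention, CTR to persuasion, and conversion rate to friction is one reasonable reading of the diagnosis above, and the 15% tolerance band is an arbitrary placeholder to tune against your own volatility.

```python
from typing import Dict

# Ordered from top of funnel to bottom; the first weak stage is reported.
STAGES = (
    ("view_rate", "attention"),
    ("ctr", "persuasion"),
    ("cvr", "friction"),
)


def diagnose(current: Dict[str, float], baseline: Dict[str, float],
             tolerance: float = 0.15) -> str:
    """Return the first stage whose metric fell more than `tolerance` below baseline."""
    for metric, stage in STAGES:
        if current[metric] < baseline[metric] * (1 - tolerance):
            return stage
    return "healthy"


# Baseline taken from your own previous cycle (values here are invented).
baseline = {"view_rate": 0.30, "ctr": 0.012, "cvr": 0.040}
this_week = {"view_rate": 0.31, "ctr": 0.013, "cvr": 0.025}

print(diagnose(this_week, baseline))
```

With these sample numbers the views and clicks hold up while conversions fall, so the rule points at friction: the landing experience or the offer fit, not the creative.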

When You Need Incrementality, Not Another Dashboard

If you’re deciding whether to scale budgets, shift channel mix, or defend spend to skeptical stakeholders, attribution alone often can’t carry the conversation. Incrementality testing is built for those moments because it compares a group exposed to ads with a group that wasn’t.

That’s the logic behind conversion lift studies. Meta’s lift study documentation describes experiments designed to measure business impact, and TikTok’s help center frames Conversion Lift Study as a way to answer whether ads drove incremental growth.

Case Stories

Numbers matter, but the real drama in analytics is emotional: the moment a team realizes the report they’ve been trusting can’t answer the question leadership is asking. That’s when a social media advertising strategy either evolves into a measurement-led system, or it stays stuck in “optimize and hope.”

Gruvi’s Holiday Moment When “Good Results” Weren’t Good Enough

The pressure wasn’t subtle. Holiday traffic was surging, competitors were everywhere, and every day felt like a make-or-break decision on budget. The campaigns looked strong on the surface, but leadership wanted a harder answer than “the dashboard says it’s working.”

The backstory was a familiar setup for ecommerce teams. Gruvi was running Meta campaigns during the holiday window and needed to balance aggressive growth with the reality that some sales would happen anyway. The team wasn’t trying to win a reporting argument; they were trying to make spending decisions they could defend after the season ended. The campaign period and measurement context are described in Meta’s Gruvi success story.

The wall came from uncertainty. When sales rise during peak season, attribution can make almost any channel look like a hero. But without a clearer view of incremental impact, scaling becomes risky because you might be paying for demand you didn’t create.

The epiphany was to treat measurement as part of the media plan, not the recap. Rather than relying on attribution alone, the team focused on proving causality through an experiment design that could isolate incremental impact. The social media advertising strategy shifted from “optimize performance” to “prove lift, then scale with confidence.”

The journey required discipline. They ran campaigns through Meta and used a conversion lift approach to separate exposed and holdout groups, then looked at how behavior differed between them. That shift forced better hygiene in signals and events, because experimentation is unforgiving when tracking is messy. Meta’s lift study guidance explains why clean experimental structure matters.

The final conflict was the part nobody posts on LinkedIn. Running experiments during a busy season can create tension: teams want to push every dollar into sales, while measurement requires restraint and structure. When performance fluctuates day to day, it’s tempting to abandon the plan and chase short-term stability.

The dream outcome was clarity that changed decision-making. Instead of debating attribution models, the team had a more credible way to judge whether spend created incremental business impact. That’s the kind of result that turns analytics from “reporting” into a lever inside the social media advertising strategy. The lift-study approach and holiday context are documented in the Gruvi case write-up.

Professional Promotion

If you’re serious about scaling, your social media advertising strategy should include a “data advantage” that competitors can’t copy overnight. That advantage usually comes from three things: clean signals, disciplined experimentation, and interpretation that leads to better creative and better offers.

Professionals earn trust by making measurement practical. They don’t promise perfect attribution. They build a system that gets more truthful over time: server-side events where it matters, periodic incrementality tests when the budget decisions get big, and a reporting rhythm that turns every campaign into usable learning.

If you want to market yourself as the person clients rely on, this is the angle: anyone can launch ads, but very few people can build a measurement-led engine that helps a business spend with confidence. That’s what gets you retained, referred, and brought into bigger budgets.

Future Trends

The next wave of social performance won’t be won by people who “know the platforms.” It will be won by people who design a social media advertising strategy that plays well with automation, privacy constraints, and creator-first creative culture all at once.

AI-Powered Creative Production Becomes The Default, But Not The Differentiator

Creative volume is becoming easier to produce, which means average creative will flood the auctions. The edge shifts to taste, positioning, and proof—because the tools will help everyone iterate faster. You can already see the direction in platform tooling like Meta’s Advantage+ creative and TikTok’s push toward automation through solutions like Smart Performance Campaign.

The brands that keep winning won’t be the ones with the most variations. They’ll be the ones with the best “message inventory”: the clearest offers, the sharpest hooks, and the strongest evidence.

Creator Media Becomes A Core Buying Channel, Not A Side Tactic

Creator-led creative is no longer experimental. U.S. creator ad spend was projected to reach $37B in 2025, up 26% year over year, which is the kind of growth that forces every performance team to rethink creative sourcing and partnerships. The projection and growth rate come from IAB’s 2025 creator economy report summary, with a supporting IAB press release and additional coverage in Marketing Dive’s recap.

Practically, this means your social media advertising strategy needs a creator workflow: sourcing, briefs, usage rights, whitelisting/partnership ads, and a testing system that turns creator content into repeatable performance angles.

Measurement Shifts From “Attribution Certainty” To “Proof Of Incrementality”

As privacy constraints continue to shape data availability, teams that rely purely on last-click thinking will keep losing internal budget fights. Incrementality becomes the language of credibility because it answers the question that matters: did ads cause growth?

That’s why lift testing is being treated as a core measurement muscle, not a luxury. Both Meta, with Conversion Lift, and TikTok, with its Conversion Lift Study, offer tooling built around this idea.
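To make the arithmetic behind a lift read concrete, here is a minimal sketch in Python. It assumes a simple holdout design where one group saw ads and a comparable control group did not. The names `Group` and `lift_summary` and all the numbers are hypothetical, and the sketch leaves out significance testing, which a real lift study would include.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Group:
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        # Conversion rate for this group.
        return self.conversions / self.users


def lift_summary(test: Group, control: Group, spend: float) -> dict:
    """Compare an exposed (test) group with a holdout (control) group."""
    if control.rate == 0:
        raise ValueError("Control group has no conversions; relative lift is undefined.")

    # Conversions the test group would have produced at the control rate.
    baseline = control.rate * test.users
    incremental = test.conversions - baseline
    relative_lift = (test.rate - control.rate) / control.rate

    # Cost per incremental conversion only makes sense when lift is positive.
    cost: Optional[float] = spend / incremental if incremental > 0 else None

    return {
        "incremental_conversions": incremental,
        "relative_lift": relative_lift,
        "cost_per_incremental_conversion": cost,
    }


# Hypothetical numbers for illustration only.
summary = lift_summary(
    test=Group(users=100_000, conversions=1_200),
    control=Group(users=100_000, conversions=1_000),
    spend=10_000.0,
)
print(summary)
```

The number to take into a budget conversation is the cost per incremental conversion, since it only counts the conversions the ads appear to have caused rather than every conversion the platform reports.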

Signal Quality Becomes More Important Than Targeting Tricks

The best targeting advantage is having better signals than your competitors. When you pass clean conversion and value events, the platform can learn who your best customers are and find more of them, even as targeting controls evolve.

This is why server-side integrations have become foundational for many teams, using pathways like Meta Conversions API and GA4’s Measurement Protocol.
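As a rough illustration of what a "clean" server-side event looks like, the sketch below builds a purchase payload in Python without sending it anywhere. The field names follow the general shape of Meta's Conversions API events as I understand it (a hashed email under `user_data`, value and currency under `custom_data`, and an `event_id` for deduplicating against browser events), but treat them as assumptions and check the current platform documentation before relying on them. The helper names `hash_email` and `build_purchase_event` are hypothetical.

```python
import hashlib
import time
import uuid
from typing import Optional


def hash_email(email: str) -> str:
    # Normalize (trim, lowercase) before hashing so the same person
    # always produces the same SHA-256 digest.
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_purchase_event(
    email: str,
    value: float,
    currency: str,
    event_id: Optional[str] = None,
    event_time: Optional[int] = None,
) -> dict:
    """Build one purchase event as a plain dict (no network call)."""
    return {
        "event_name": "Purchase",
        # Unix timestamp in seconds.
        "event_time": int(event_time if event_time is not None else time.time()),
        # Reusing the browser event's ID here lets the platform deduplicate.
        "event_id": event_id or str(uuid.uuid4()),
        "action_source": "website",
        "user_data": {"em": [hash_email(email)]},
        "custom_data": {"value": round(float(value), 2), "currency": currency.upper()},
    }


# Hypothetical order data for illustration only.
event = build_purchase_event("  Jane.Doe@Example.com ", 129.5, "usd", event_id="order-1001")
print(event)
```

The design point is that value and identity are captured at the moment the order is confirmed on your server, so the event does not depend on a browser script firing.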

Strategic Framework Recap

If you take one thing from this guide, let it be this: a social media advertising strategy is not a collection of tactics. It’s a system that turns attention into outcomes and outcomes into learning.

- Start with the business constraint: what must improve in the next 60–90 days.

- Choose the offer and the next step: match commitment level to audience readiness.

- Build clean signals: make it easy for platforms to learn from real outcomes and value.

- Run creative as a testing engine: hook, angle, proof, then iterate with discipline.

- Measure for decisions: weekly steering signals plus periodic incrementality proof.

When those parts work together, performance becomes less emotional. You stop guessing, you stop reacting to noise, and you start scaling what’s actually working.

FAQ – Built For This Complete Guide

What is a social media advertising strategy in plain English?

It’s the set of decisions that explains what you’re trying to achieve, who you’re trying to move, what you’ll show them, and how you’ll measure success. The ads are the output. The strategy is the operating system behind the output.

Which platforms should be included in a social media advertising strategy?

Include the platforms your audience already uses and where your offer fits the intent level. For many brands that means Meta and TikTok, sometimes LinkedIn for B2B, and sometimes YouTube placements depending on creative and funnel stage. The “right” answer is the platform mix you can test and measure without breaking signal quality.

How much budget do you need to make paid social work?

You need enough budget to buy learning, not just impressions. If you can’t run stable tests, you’ll keep changing variables without knowing what drove the outcome. Start with a budget that supports consistent creative testing and enough conversions to train optimization, then scale only after you have one proven angle and a clean signal chain.

What matters more: creative or targeting?

In most accounts, creative matters more because it earns attention and creates belief. Targeting helps, but platforms are increasingly good at delivery once you give them clean signals. If performance drops, improving hooks and proof is often the fastest lever before you rebuild audiences.

Is CTR a good KPI for a social media advertising strategy?

CTR is useful as a diagnostic for attention and message clarity, but it’s not a business KPI. A “good” CTR that attracts the wrong people can destroy lead quality or conversion rate. Use CTR to guide creative iteration, and use revenue-grade KPIs to decide what to scale.
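A small worked example shows how CTR and revenue-grade KPIs can point in opposite directions. The Python sketch below uses two made-up ads with identical spend; the `AdResult` class, the `pick_to_scale` helper, and every number are hypothetical.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class AdResult:
    name: str
    impressions: int
    clicks: int
    spend: float
    purchases: int
    revenue: float

    @property
    def ctr(self) -> float:
        # Click-through rate: a diagnostic for attention, not a business KPI.
        return self.clicks / self.impressions

    @property
    def cpa(self) -> float:
        # Cost per acquisition; infinite when nothing was purchased.
        return self.spend / self.purchases if self.purchases else float("inf")

    @property
    def roas(self) -> float:
        # Return on ad spend: revenue per unit of spend.
        return self.revenue / self.spend


def pick_to_scale(ads: List[AdResult]) -> AdResult:
    # Scale on a revenue-grade KPI rather than on CTR.
    return max(ads, key=lambda ad: ad.roas)


# Made-up numbers: ad A wins on CTR, ad B wins on revenue.
ad_a = AdResult("A: curiosity hook", 100_000, 3_000, 1_500.0, 15, 900.0)
ad_b = AdResult("B: proof-led offer", 100_000, 1_200, 1_500.0, 30, 2_400.0)

for ad in (ad_a, ad_b):
    print(f"{ad.name}: CTR={ad.ctr:.2%} CPA={ad.cpa:.2f} ROAS={ad.roas:.2f}")

print("Scale:", pick_to_scale([ad_a, ad_b]).name)
```

In this invented case the ad with the higher CTR loses on both CPA and ROAS, which is the pattern to watch for before scaling a "winning" hook.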

Why does attribution feel inconsistent across platforms?

Because attribution is a model built on incomplete paths. People move across devices, browsers, and channels, and privacy controls reduce what can be observed. That’s why teams increasingly use incrementality methods like Conversion Lift testing and lift studies when the budget decision needs stronger proof.

Do you really need server-side tracking?

If you want durable optimization signals, it’s becoming hard to avoid. Server-side tracking helps stabilize event delivery when browser signals degrade, and it supports better measurement hygiene. Common paths include Meta Conversions API and GA4’s Measurement Protocol.

How do you prevent creative fatigue?

You prevent fatigue by rotating fresh hooks and proof before performance collapses. Build a creative pipeline that ships new angles on a schedule, repurposes what’s working into new formats, and keeps your best performers supported with variations rather than forcing one ad to carry the account forever.

What is a realistic testing cadence for a small team?

A realistic cadence is one meaningful test every 1–2 weeks, where only one major variable changes. If you change offer, audience, and creative at the same time, you lose learning. Small teams win by running fewer tests with cleaner reads.
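One lightweight way to enforce the one-variable rule is to describe each test cell as a small record and check how many fields differ before launch. The sketch below is an illustration in Python; the field names (`offer`, `audience`, `creative`) and the helpers `changed_variables` and `is_clean_test` are hypothetical.

```python
from typing import Dict, List


def changed_variables(control: Dict[str, str], variant: Dict[str, str]) -> List[str]:
    # List every field whose value differs between the two test cells.
    keys = sorted(set(control) | set(variant))
    return [key for key in keys if control.get(key) != variant.get(key)]


def is_clean_test(control: Dict[str, str], variant: Dict[str, str]) -> bool:
    # A clean read changes exactly one major variable.
    return len(changed_variables(control, variant)) == 1


control = {"offer": "free trial", "audience": "broad", "creative": "founder video"}
clean_variant = {"offer": "free trial", "audience": "broad", "creative": "customer testimonial"}
messy_variant = {"offer": "20% off", "audience": "lookalike", "creative": "customer testimonial"}

print(changed_variables(control, clean_variant), is_clean_test(control, clean_variant))
print(changed_variables(control, messy_variant), is_clean_test(control, messy_variant))
```

A check like this can live in a planning spreadsheet or a short script; the point is to catch a muddled test before the budget is spent, not after.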

How should a B2B social media advertising strategy differ from ecommerce?

B2B strategies should optimize toward qualified pipeline signals, not just form fills. That usually means tighter lead qualification, stronger proof content, and connecting offline outcomes back to platforms where possible. The goal is to train optimization toward “sales-ready,” not “easy-to-submit.”

What are the most common mistakes that kill performance?

The biggest ones are unclear offers, weak proof, messy tracking, and changing too many variables at once. Another common mistake is scaling spend before you have one proven creative angle and one reliable conversion signal.

How long does it take to build a reliable system?

Most teams can build a stable baseline in a few test cycles if they keep the strategy tight: one objective, one offer tier at a time, disciplined creative testing, and clean signals. Reliability comes from compounding learning, not from finding a single “perfect campaign.”

Work With Professionals

If you’ve read this far, you already know the frustrating truth: most teams don’t fail because they lack effort. They fail because they lack a system. They run ads, they pull reports, they change settings, and they still can’t explain what’s actually driving results.

If you’re a marketer trying to build a career on performance, the opportunity is huge—but only if you can get consistent access to real clients and real budgets. That’s where a marketplace built specifically for marketing work changes the game.

MARKEWORK is set up to remove the two biggest friction points freelancers hate: platform commissions and slow, gated communication. The platform describes itself as “no middleman” with “no project fees,” and the pricing page reinforces “No commissions. No project fees,” alongside direct communication with companies and access to “thousands of job listings.” That positioning is stated on the homepage, supported by the pricing plan details and the “why us” explanation of direct communication and subscription-only access.

Here’s the emotional promise that matters: you can take the social media advertising strategy you’ve built here, turn it into a repeatable client offer, and focus on outcomes instead of fighting platform fees. You build a profile, you find roles and projects that match your strengths, and you talk directly with the people who can say yes.

If you want a cleaner path to more marketing clients—without commissions eating your margins—start here: