Paid social can feel deceptively simple from the outside: pick a platform, boost a post, watch leads roll in. In reality, paid social is closer to running a fast-moving trading desk—creative, targeting, measurement, and landing pages all changing at once, with algorithms reacting in real time.

That’s why a paid social media agency isn’t just “someone who runs ads.” The best agencies build a repeatable system that turns messy inputs (offers, audiences, content, budgets, seasonality) into stable outcomes you can plan around.

Article Outline

- What Is a Paid Social Media Agency

- Why a Paid Social Media Agency Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is a Paid Social Media Agency

A paid social media agency is a specialist team that plans, builds, runs, and measures advertising campaigns across social platforms—then improves performance through structured testing. You’re not hiring “ad posters.” You’re hiring a system that connects business goals to campaign execution and proof.

In a strong engagement, the agency typically owns (or co-owns) five things: strategy, creative direction, campaign operations, measurement, and continuous optimization. The deliverable isn’t “more impressions.” It’s dependable growth signals—sales, qualified leads, pipeline, app events—measured in a way your finance team can trust.

Social ad platforms are built to automate more of the work every year, but that doesn’t reduce the need for expertise—it shifts the expertise toward inputs and validation. Agencies that stay effective know how to feed platforms the right offers, creatives, and conversion data, and they validate impact through experimentation rather than vibes.

Why a Paid Social Media Agency Matters

Social is now a major line item in digital advertising, which means mistakes get expensive quickly. When the overall market is scaling, inefficient accounts don’t just underperform—they quietly consume budget that could have been compounding elsewhere. In the U.S., digital advertising reached $259B in 2024, and social media advertising revenue totaled $88.8B in 2024.

At the same time, the measurement environment is getting tougher. Signal loss, privacy constraints, and cross-device journeys mean you can’t rely on last-click reporting to tell the truth. The teams that win treat incrementality as a core discipline, using holdouts and experiments to separate “ads got the credit” from “ads caused the outcome.” In its guidance on incrementality testing, Google frames such testing as a cornerstone of modern measurement in a privacy-first world.

A paid social media agency matters most when any of these are true: you need faster iteration than your internal team can support, you’re scaling spend beyond what “good enough” reporting can safely guide, your creative engine is inconsistent, or performance is volatile month to month.

Framework Overview

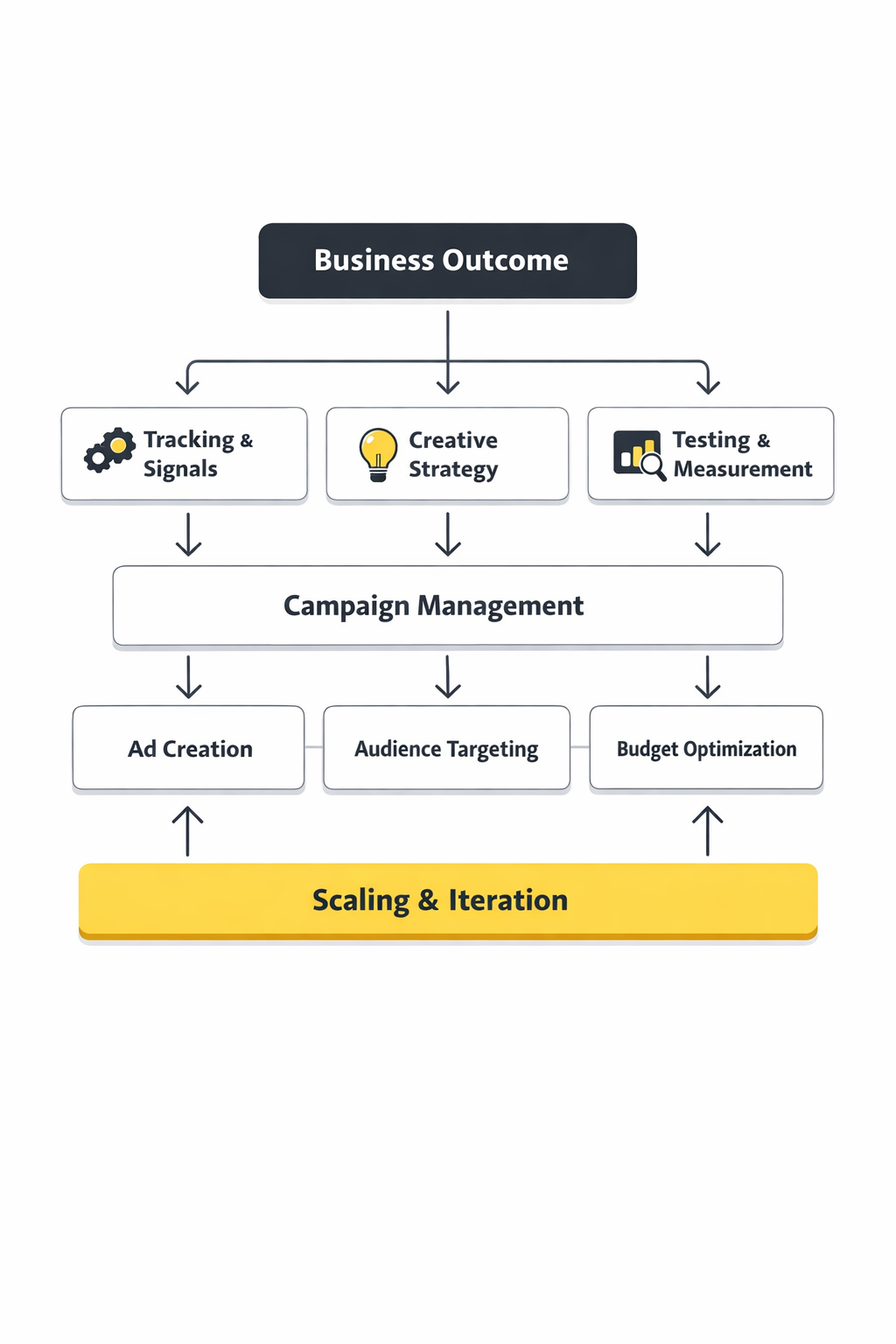

Think of a paid social media agency as operating a loop, not a linear checklist. The loop is what makes results sustainable: define the business outcome, translate it into platform signals, ship creative at tempo, run controlled tests, and feed learnings back into the next cycle.

This loop matters because the market moves even when you don’t. Global advertising forecasts continue to climb, with WPP Media projecting global advertising revenue to reach $1.14 trillion in 2025. As budgets grow, competition grows with them—so the advantage shifts to teams that can learn and adapt faster, not teams that simply spend more.

In the sections ahead, the framework is broken into components you can audit. If you’re evaluating an agency (or building an internal capability), you’ll be able to point to each component and ask: do we have this, is it working, and can we prove it?

Core Components

Strategy That Starts With the Offer, Not the Platform

Paid social performance rarely breaks because “Meta got expensive” or “TikTok changed the algorithm.” More often, it breaks because the offer isn’t specific enough, the audience isn’t clearly defined, or the landing experience doesn’t match the promise. A good paid social media agency begins by sharpening what you’re selling and why someone should care right now, then uses platforms as distribution—not as strategy.

This is also where channel selection becomes rational. Not every product needs every platform. The goal is to pick the platform mix that matches the buying cycle, the creative format you can sustain, and the measurement you can defend.

A Creative System Built for Volume and Learning

Social platforms reward fresh, relevant creative. The agency’s job is to build a creative pipeline that produces variations quickly, tags what changed, and connects outcomes back to inputs. Without that system, “creative testing” turns into random posting with a nicer spreadsheet.

On modern platforms, creative is also a targeting tool. As automation expands, your ads increasingly “find” the right people based on who responds to each creative angle. That only works when you ship enough meaningful angles and let the system learn.

Account Structure and Budgeting That Prevents Self-Sabotage

Many accounts are structured in ways that make learning slower: too many campaigns, too many ad sets, tiny budgets spread across everything, and constant resets that stop algorithms from stabilizing. A paid social media agency should simplify the account so budgets concentrate where learning is fastest, then isolate tests so you can trust the results.

This is where guardrails live: how budgets scale, when to consolidate, when to split, and how to avoid “moving targets” that make reporting meaningless.
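To make “tiny budgets spread across everything” concrete, here is a minimal sketch that estimates weekly conversions per ad set from its daily budget and an assumed cost per conversion, then flags ad sets too thin to learn from. The 50-conversions-per-week threshold, the class and function names, and the example figures are all hypothetical; set the threshold from your platform’s own guidance and your account history.

```python
from dataclasses import dataclass

# Hypothetical threshold: the minimum weekly conversions an ad set needs before
# its results are worth acting on. Replace with your platform's guidance.
MIN_WEEKLY_CONVERSIONS = 50


@dataclass
class AdSet:
    name: str
    daily_budget: float  # account currency per day
    expected_cpa: float  # assumed cost per conversion, taken from account history


def expected_weekly_conversions(ad_set: AdSet) -> float:
    """Rough estimate: one week of spend divided by the expected cost per conversion."""
    return ad_set.daily_budget * 7 / ad_set.expected_cpa


def underpowered(ad_sets: list[AdSet], threshold: float = MIN_WEEKLY_CONVERSIONS) -> list[str]:
    """Names of ad sets whose budgets are too thin to generate usable learning."""
    return [a.name for a in ad_sets if expected_weekly_conversions(a) < threshold]


# The same 300/day total budget, split six ways versus concentrated in one ad set.
fragmented = [AdSet(f"adset_{i}", daily_budget=50, expected_cpa=40) for i in range(6)]
consolidated = [AdSet("adset_all", daily_budget=300, expected_cpa=40)]

print(underpowered(fragmented))    # all six flagged: 50 * 7 / 40 = 8.75 conversions per week each
print(underpowered(consolidated))  # []: 300 * 7 / 40 = 52.5 conversions per week
```

The total spend is identical in both cases; only the concentration changes, which is the point of consolidating before splitting out tests.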

Measurement That Proves Causality

Reliable measurement is the difference between scaling confidently and guessing loudly. Platform dashboards are useful, but they’re not neutral—each platform has incentives around attribution and reported value.

A professional approach combines platform reporting, first-party analytics, and experimentation. Meta explains conversion lift as a way to quantify incremental impact by comparing outcomes between exposed and holdout groups in its overview of Conversion Lift studies. TikTok similarly positions its Conversion Lift Study as an incrementality method to identify conversions directly driven by ads.
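The exposed-versus-holdout comparison reduces to simple arithmetic once a randomized test has run. Here is a minimal sketch, assuming you already have conversion counts and group sizes for both groups; the function name and the numbers are made up for illustration, and platform lift tools layer their own statistical methods (confidence intervals, modeling) on top of these point estimates.

```python
def conversion_lift(test_conv: int, test_size: int,
                    control_conv: int, control_size: int) -> dict[str, float]:
    """Point estimates of lift from an exposed (test) group and a holdout (control) group."""
    test_rate = test_conv / test_size
    control_rate = control_conv / control_size
    # Conversions the test group would likely have produced without ads,
    # estimated by applying the holdout group's rate to the test group's size.
    baseline = control_rate * test_size
    incremental = test_conv - baseline
    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        "relative_lift": (test_rate - control_rate) / control_rate,
        "incremental_conversions": incremental,
        "incremental_share": incremental / test_conv,
    }


result = conversion_lift(test_conv=1_200, test_size=100_000,
                         control_conv=1_000, control_size=100_000)
for name, value in result.items():
    print(f"{name}: {value:.4f}")
```

With these illustrative numbers, a 1.2% versus 1.0% conversion rate is a 20% relative lift, or about 200 incremental conversions: roughly one in six of the test group’s 1,200 conversions would not have happened without the ads.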

Professional Implementation

What “Good” Looks Like in the First 30 Days

In a healthy onboarding, you should see clarity, not chaos. That means: tracking and events audited, naming conventions standardized, reporting aligned to one definition of success, and a creative plan that’s realistic for your team to sustain. The output of month one shouldn’t be a miracle—it should be a stable foundation that makes month two meaningfully faster.

You should also see the agency draw a line between what they know and what they need to learn. Paid social is never a one-shot bet; it’s a controlled learning process. If everything is presented as certainty, that’s usually a sign the system is missing.

The Operating Rhythm That Prevents Performance Whiplash

Professional implementation runs on cadence: weekly creative reviews, structured experiment backlogs, consistent budget pacing, and a measurement routine that doesn’t change every time results fluctuate. This rhythm is what keeps you from overreacting to daily noise and underreacting to real trends.

In Part 2, we’ll go deeper into how agencies design campaigns for different goals (lead gen, ecommerce, pipeline, apps), and how to evaluate whether their “framework” is real—or just a slide deck with nice shapes.

Tools Supporting the Framework

A paid social media agency can have world-class strategy and creative, then lose the game because the plumbing is leaking. If conversion signals arrive late, if offline revenue never makes it back to the ad platforms, or if every dashboard tells a different story, you end up “optimizing” noise.

The tool stack is the part that makes the framework real. It’s how creative learning becomes repeatable, how budget scaling stays safe, and how you can defend decisions when results fluctuate. The stakes are high simply because so much money flows through these channels now, with U.S. social media advertising revenue totaling $88.8B in 2024.

What matters most is not having “all the tools.” It’s having the minimum set that (1) sends clean signals, (2) keeps attribution honest, and (3) keeps your team moving fast without breaking measurement every time you launch something new.

Tool Categories

Most paid social stacks look different on the surface, but they’re usually built from the same building blocks. The categories below are the ones a paid social media agency uses to keep campaigns measurable, scalable, and explainable.

Platform Measurement and Signal Capture

This category is about feeding ad platforms the signals they need to optimize while keeping data transfer privacy-conscious and resilient. For Meta, that often means implementing server-side event sharing through the Meta Conversions API (Meta’s developer documentation covers direct integrations) instead of relying only on browser-side tracking.

TikTok’s equivalent is the TikTok Events API, which TikTok describes as a reliable connection for web, app, and offline data, with customization over what is shared and how. If you’re running Snapchat, Snap documents both the Snap Pixel and its server-to-server Conversions API, and Pinterest provides browser-side and developer options via the Pinterest tag and conversion tracking resources.
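Server-side event sharing mostly comes down to sending a well-formed event with normalized, hashed identifiers. The sketch below builds, but does not send, a payload shaped like a Meta Conversions API web event (`event_name`, `event_time`, `event_id`, `action_source`, `event_source_url`, `user_data`, `custom_data`, wrapped in a `data` list). Treat that field set as an assumption to verify against Meta’s current developer documentation; the helper names, order ID, and URL are hypothetical, and TikTok, Snap, and Pinterest each define their own schemas.

```python
import hashlib
import time
from typing import Optional


def normalize_and_hash(email: str) -> str:
    """Trim and lowercase an email address, then return its SHA-256 hex digest."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(order_id: str, email: str, value: float, currency: str,
                         page_url: str, event_time: Optional[int] = None) -> dict:
    """Build a purchase event dict shaped like a Meta Conversions API web event.

    Reusing the order ID as event_id is what lets the platform match this server
    event with a browser pixel event that sends the same ID and event name.
    """
    return {
        "event_name": "Purchase",
        "event_time": event_time if event_time is not None else int(time.time()),
        "event_id": order_id,
        "action_source": "website",
        "event_source_url": page_url,
        # Raw personal data never leaves your server; only the hash is shared.
        "user_data": {"em": [normalize_and_hash(email)]},
        "custom_data": {"value": value, "currency": currency},
    }


payload = {"data": [build_purchase_event("order-1001", "  Jane@Example.com ", 59.0, "USD",
                                         "https://shop.example.com/checkout/thanks")]}
```

Normalizing before hashing matters: “Jane@Example.com” and “jane@example.com” would otherwise produce different hashes and fail to match.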

Tag Management and Data Governance

When multiple teams and vendors touch tracking, you need a system that prevents “one quick script” from becoming permanent technical debt. Google’s overview of server-side tagging explains why teams move parts of measurement off the browser and into a controlled environment, and the manual setup guide shows how serious the infrastructure side can get when you want reliability.

Even if you don’t go fully server-side, governance is the point: naming conventions, environments (staging vs production), change logs, and a clear owner for what ships. A paid social media agency that treats tracking like “someone else’s problem” eventually pays for it in performance volatility.
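Naming conventions are easiest to enforce when they can be checked automatically. Here is a minimal sketch, assuming a hypothetical convention of `platform_objective_audience_YYYYMM` in lowercase, with hyphens (not underscores) inside the audience label; swap in whatever pattern your team agrees on.

```python
import re

# Hypothetical convention: platform_objective_audience_YYYYMM, all lowercase.
NAME_PATTERN = re.compile(
    r"(meta|tiktok|snap|pinterest)_"         # platform
    r"(prospecting|retargeting|retention)_"  # objective / funnel stage
    r"[a-z0-9-]+_"                           # audience label (hyphens, no underscores)
    r"20\d{2}(0[1-9]|1[0-2])"                # launch year and month
)


def invalid_names(names: list[str]) -> list[str]:
    """Return campaign names that do not follow the agreed convention."""
    return [n for n in names if not NAME_PATTERN.fullmatch(n)]


campaigns = [
    "meta_prospecting_broad-us_202503",
    "tiktok_retargeting_cart-abandoners_202504",
    "Meta - test new creative (copy)",
]
print(invalid_names(campaigns))
```

Running a check like this before anything ships (or on a daily export of campaign names) turns “please follow the convention” into a rule nobody has to remember.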

Analytics, Warehousing, and BI

Platform dashboards are good at showing platform performance. They’re not built to be your single source of truth. The practical move is exporting raw events to a warehouse so you can reconcile spend, revenue, and lifecycle behavior without being trapped in one vendor’s attribution model.

Google’s BigQuery export overview for Google Analytics covers exporting raw GA events for deeper analysis, and Google’s BigQuery Export schema documentation helps teams understand the structure of what they’re actually querying. This is what makes it possible to run more trustworthy analysis when your business spans Shopify, subscriptions, sales teams, or offline transactions.

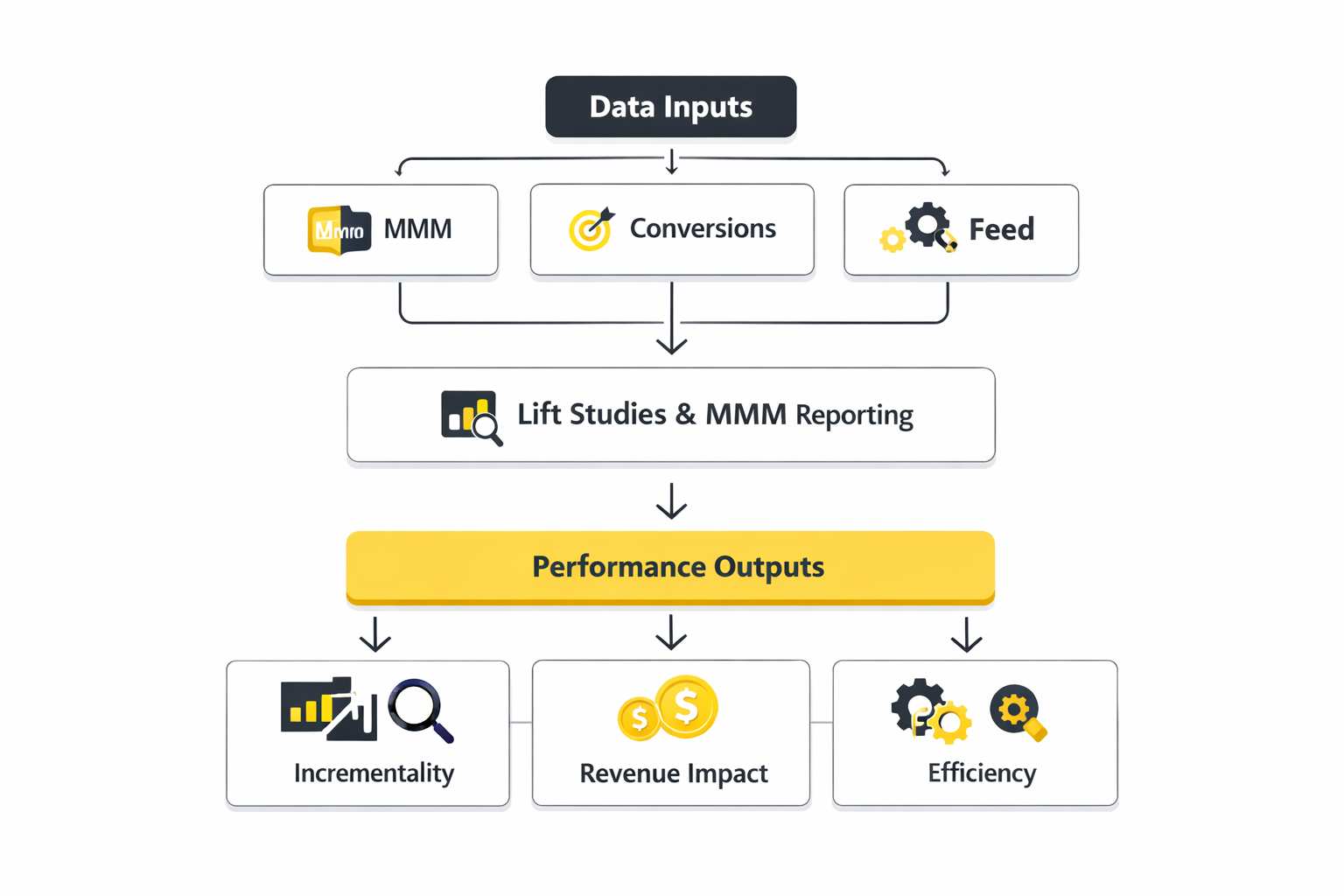

Attribution and Incrementality

Attribution tools tell you how credit is assigned. Incrementality tools tell you whether ads caused the result. You usually need both, because attribution can be directionally helpful while still being wrong about impact.

TikTok positions its Conversion Lift Study as incrementality through experimentation, and Meta offers its own experiment-based approach via Conversion Lift. When a paid social media agency builds a measurement plan, these tools sit next to your first-party analytics and help answer the question that matters most: “Would this have happened anyway?”

Tool Comparison

Tool comparison is where most teams get stuck, because everyone wants a universal winner. In practice, the right stack depends on what you sell, how long people take to buy, and how complex your customer journey is.

If You Need a Clean Baseline Fast

- Start with platform signal capture: implement Meta’s Conversions API and TikTok’s Events API where relevant, then validate event quality before scaling spend.

- Use one analytics source of truth: centralize KPIs in GA4, and plan for raw exports through the GA4 BigQuery export once you outgrow standard reports.

- Add experimentation when you’re scaling: use Meta Conversion Lift or TikTok’s Conversion Lift Study when budgets are large enough to justify controlled tests.
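Whether a budget is “large enough” for a controlled test can be estimated before launch. Here is a minimal sketch using the standard normal-approximation sample size for comparing two conversion rates with a two-sided test; the 1% baseline rate, 10% expected lift, 5% significance level, and 80% power are assumptions to replace with your own, and platform lift tools run their own power calculations.

```python
import math
from statistics import NormalDist


def sample_size_per_group(baseline_rate: float, relative_lift: float,
                          alpha: float = 0.05, power: float = 0.8) -> int:
    """Users needed in each of the test and control groups to detect the given lift."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    p_bar = (p1 + p2) / 2
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)
    numerator = (z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
                 + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


n = sample_size_per_group(baseline_rate=0.01, relative_lift=0.10)
print(n)
```

With a 1% baseline conversion rate, detecting a 10% relative lift needs on the order of 160,000 users per group. Smaller expected lifts push that number up quickly, which is why lift tests tend to make sense only at meaningful spend levels.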

When Server-Side Becomes Worth It

Server-side tagging is not a badge of sophistication. It’s a reliability decision. If you’re frequently changing landing pages, if consent and browser limitations are creating gaps, or if you need tighter control over what data is shared, then a server-side setup can reduce instability.

Google frames server-side tagging as moving tag instrumentation into a server container, which can improve control and reduce dependency on browser execution. A paid social media agency should be able to explain what server-side changes for you in plain terms: what gets more reliable, what gets more complex, and what it will cost to maintain.

Platform Tools vs Independent Measurement

Platform tools are easiest to implement and fastest to use, but they’re not neutral. Independent measurement can be more trustworthy, but it’s slower and requires discipline. The smart approach is layered: platforms for optimization speed, independent systems for budget confidence.

When you see a paid social media agency insisting on only one layer, treat it as a warning sign. Mature teams build a stack that lets them move quickly without lying to themselves about impact.

Real Tool Stack Stories

Tumble’s Tool Stack Shift From “Platform Noise” to Confident Scaling

The numbers looked fine—until they didn’t. One week the platforms claimed growth, the next week they contradicted each other, and the team couldn’t tell if they were scaling demand or just scaling attribution. With budgets and product launches on the line, “we think it’s working” stopped being an acceptable answer.

Tumble is a home and living brand known for washable rugs, and they were expanding into new product lines and channels with a lean team. As they grew, platform-reported metrics and last-click attribution made it difficult to separate real demand from reporting distortions. They needed a way to evaluate initiatives like a kids rug collection without guessing whether early performance was underreported or genuinely weak.

The wall was psychological as much as technical. Every optimization was a debate because each dashboard told a different story. If they trusted platform numbers, they risked scaling spend into a mirage. If they distrusted everything, they would move too slowly and lose momentum.

The breakthrough came when they treated measurement as a product, not a report. Instead of asking “Which platform is right?”, they asked “What view of the customer journey would let us make decisions without flinching?” That reframed the problem from attribution arguments to building one unified system.

They implemented Northbeam as a centralized attribution platform and adopted a clicks + views approach to better capture longer consideration and multi-touch journeys, described in Northbeam’s Tumble customer story. The setup also created a home for product-level insights and cross-channel creative learnings, so optimization didn’t depend on whichever platform yelled the loudest. With that foundation, they could scale across Meta, TikTok, and AppLovin without constantly resetting the narrative of what was “working.”

Of course, the shift created a new kind of friction. When you move from flattering platform metrics to a unified view, some campaigns suddenly look worse before you learn how to improve them. That can spook teams who were used to celebrating vanity ROAS spikes. The discipline was sticking with the new truth long enough to let it guide better decisions.

The dream outcome was stability—being able to invest without second-guessing every report. The case study highlights 150%+ YoY revenue growth and a 275% increase in Meta spend driving 335% revenue growth, framed as results of clearer performance visibility rather than platform hype. In plain language, they traded noisy confidence for real confidence, then used that clarity to scale.

A Rollout Plan That Doesn’t Break Revenue Tracking

Professional teams roll out tools in layers. First, they instrument the minimum events that represent real business value, then they validate end-to-end data quality, then they expand. That staged approach matters because changing measurement mid-flight can make performance look like it improved or collapsed when, in reality, the tracking changed.

For example, if you add server-side event sharing, expect reporting differences, because more signals may be captured. TikTok’s Events API overview emphasizes reliable connections across web, app, and offline channels, with control over what data is shared, which is why teams often treat the Events API as a measurement upgrade rather than a campaign tactic.

QA and Monitoring as a Standing Habit

Most tracking failures don’t happen on launch day. They happen three weeks later when someone updates the checkout, a new cookie banner rolls out, or a “temporary” landing page becomes permanent. A paid social media agency that takes measurement seriously sets a recurring QA routine: event volumes, match quality indicators where available, and spot checks between platform-reported conversions and back-end truth.
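The spot check between platform-reported conversions and back-end truth can be automated. Here is a minimal sketch, assuming you can pull daily conversion counts from both the ad platform and your backend; the 15% tolerance and the sample counts are hypothetical, and some gap is normal because platforms apply attribution windows and modeled conversions.

```python
def reconcile(platform: dict[str, int], backend: dict[str, int],
              tolerance: float = 0.15) -> list[tuple[str, int, int, float]]:
    """Flag days where platform-reported conversions drift from backend counts.

    Returns (day, platform_count, backend_count, ratio) for each flagged day.
    A day missing from either source is treated as zero.
    """
    flagged = []
    for day in sorted(set(platform) | set(backend)):
        p, b = platform.get(day, 0), backend.get(day, 0)
        if b == 0:
            # No backend conversions: any platform-reported conversions are suspect.
            if p > 0:
                flagged.append((day, p, b, float("inf")))
            continue
        ratio = p / b
        if abs(ratio - 1) > tolerance:
            flagged.append((day, p, b, ratio))
    return flagged


platform_counts = {"2025-03-01": 102, "2025-03-02": 98, "2025-03-03": 41}
backend_counts = {"2025-03-01": 100, "2025-03-02": 105, "2025-03-03": 97}
for day, p, b, ratio in reconcile(platform_counts, backend_counts):
    print(f"{day}: platform {p} vs backend {b} (ratio {ratio:.2f})")
```

A sudden drop like the one on March 3 is the kind of break a checkout update or new cookie banner can cause; a daily check catches it in days rather than weeks.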

When GA data is used for reconciliation, exporting raw events makes it easier to debug anomalies and build consistent definitions, which is why teams lean on Google Analytics’ BigQuery export once performance decisions start to depend on small differences.

When to Upgrade the Stack

Upgrading tools should be triggered by operational pain, not trends. If your team can’t agree on performance, if scaling spend creates unpredictable results, or if you can’t connect ad spend to real business outcomes, that’s when upgrades pay for themselves.

When budgets grow, experiments become more valuable because they let you validate impact rather than debate attribution. That’s where lift studies—like Meta Conversion Lift and TikTok’s Conversion Lift Study—become the difference between scaling confidently and scaling blindly.

Step-by-Step Implementation

A paid social media agency can move fast without breaking things when implementation follows a clear sequence. The goal is simple: get reliable conversion signals, ship creative at a sustainable tempo, and create a repeatable way to learn what’s working so scaling spend feels boring—in a good way.

This matters even more now that social is no longer an “experiment budget.” U.S. social media advertising revenue rebounded to $88.8B in 2024, a figure echoed in secondary coverage, including Google’s summary of the report and industry reporting on the rebound. When spend is that large, “close enough tracking” stops being a rounding error.

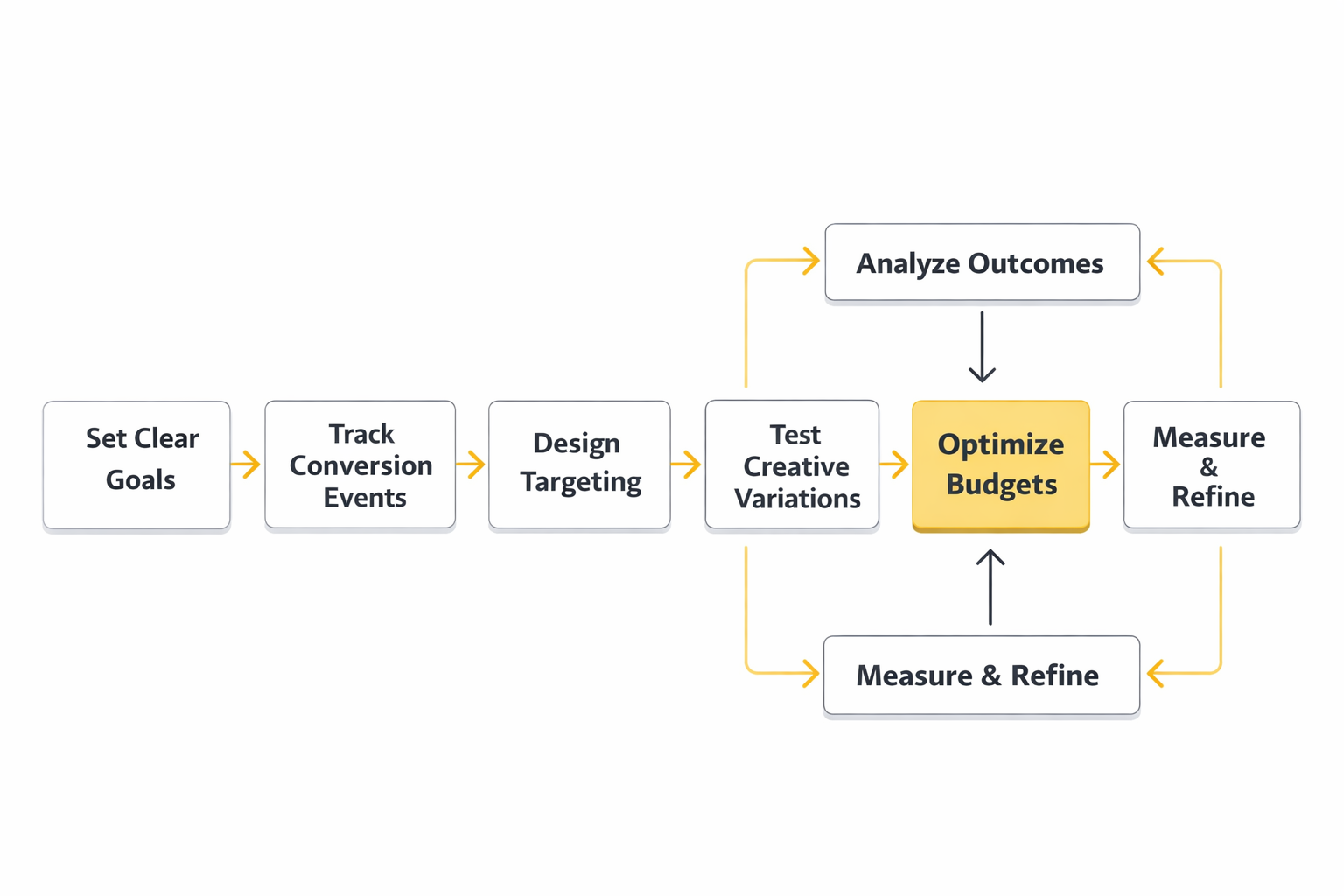

Step 1: Define One Outcome That the Business Will Actually Care About

Start by picking one primary outcome that is measurable and worth paying for: purchase, qualified lead, booked demo, app subscription, or a downstream pipeline event that sales agrees is real. This is where teams get tempted to list five KPIs and call it strategy. A paid social media agency keeps it tighter because every extra “priority” creates conflicting optimization signals.

Then define what counts as success in plain language. If it’s lead gen, what makes a lead qualified? If it’s ecommerce, what counts as revenue (gross, net, first purchase only, subscription included)? This is the step that prevents reporting debates later.
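Writing the definition down precisely, ideally as code or a spec both teams sign off on, removes the ambiguity. Here is a minimal sketch of one possible ecommerce revenue definition; the class names and the specific choices (net of refunds and discounts, first purchases only, subscriptions included) are illustrative assumptions, not a recommendation.

```python
from dataclasses import dataclass


@dataclass
class Order:
    gross: float
    discount: float
    refunded: float
    is_first_purchase: bool
    is_subscription: bool


@dataclass(frozen=True)
class RevenueDefinition:
    net_of_refunds_and_discounts: bool = True
    first_purchase_only: bool = True
    include_subscriptions: bool = True

    def counted_revenue(self, orders: list[Order]) -> float:
        """Sum revenue for the orders that count under this definition."""
        total = 0.0
        for o in orders:
            if self.first_purchase_only and not o.is_first_purchase:
                continue
            if not self.include_subscriptions and o.is_subscription:
                continue
            amount = o.gross
            if self.net_of_refunds_and_discounts:
                amount -= o.discount + o.refunded
            total += amount
        return total


orders = [
    Order(gross=120, discount=20, refunded=0, is_first_purchase=True, is_subscription=False),
    Order(gross=80, discount=0, refunded=80, is_first_purchase=True, is_subscription=False),
    Order(gross=60, discount=0, refunded=0, is_first_purchase=False, is_subscription=True),
]
agreed = RevenueDefinition()
loose = RevenueDefinition(net_of_refunds_and_discounts=False, first_purchase_only=False)
print(agreed.counted_revenue(orders))  # 100.0
print(loose.counted_revenue(orders))   # 260.0
```

The same three orders count as 100 or 260 depending on the definition, which is exactly the kind of gap that fuels reporting debates if it is never settled up front.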

Step 2: Make the Conversion Signal Reliable Before You Touch Budgets

Before scaling spend, the agency should validate that events fire correctly, dedupe properly, and match what actually happened in your backend. If your conversion signal is shaky, optimization becomes a game of chasing ghosts. Your platform can’t learn from what it can’t see.

For Meta, that reliability often means setting up a server-side connection like the Meta Conversions API alongside browser tracking, then verifying that the same purchase or lead isn’t being counted twice. For incrementality, Meta also documents how Conversion Lift uses a randomized control methodology to estimate what ads truly caused, which becomes a safety check when attribution gets noisy.
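Deduplication typically hinges on browser and server copies of an event sharing the same event name and event ID. The sketch below counts unique conversions on that basis, assuming both sources record those two fields; it illustrates the matching idea only and is not any platform’s exact algorithm, since platforms may also apply time windows and other rules. The function name and sample events are hypothetical.

```python
from typing import Iterable


def dedupe(events: Iterable[dict]) -> list[dict]:
    """Keep the first event seen for each (event_name, event_id) pair.

    Events without an event_id cannot be matched, so they are all kept,
    which is one common way double counting sneaks in.
    """
    seen: set[tuple[str, str]] = set()
    unique = []
    for ev in events:
        event_id = ev.get("event_id")
        if event_id is None:
            unique.append(ev)
            continue
        key = (ev["event_name"], event_id)
        if key not in seen:
            seen.add(key)
            unique.append(ev)
    return unique


events = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "browser"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
    {"event_name": "Purchase", "event_id": "order-1002", "source": "server"},
    {"event_name": "Purchase", "source": "browser"},  # missing ID: cannot be matched
]
print(len(dedupe(events)))
```

Four raw events collapse to three counted conversions; if the last browser event is actually order 1002 without its ID, the true count is two, which is why validating IDs on both sides matters.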

Step 3: Build a Creative Plan You Can Sustain Weekly

Implementation breaks when creative is treated like a one-time deliverable. A paid social media agency should help you commit to a realistic production rhythm: a set number of new concepts per week, plus variations that test a single change at a time. That pace matters more than perfection, because stale creative can turn stable performance into a sudden drop with no obvious explanation.

Set up a simple creative taxonomy: angle, format, hook, proof, and offer. If you can’t label what you shipped, you can’t learn from it. The point is to build a library of “what tends to work for us” so you’re not reinventing the wheel every month.
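A taxonomy only pays off if every shipped ad carries its labels and results can be rolled up by label. Here is a minimal sketch using the five dimensions named above; the labels, spend, and conversion figures are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Creative:
    ad_id: str
    angle: str
    format: str
    hook: str
    proof: str
    offer: str
    spend: float
    conversions: int


def cpa_by(creatives: list[Creative], dimension: str) -> dict[str, float]:
    """Roll spend and conversions up by one taxonomy dimension; return cost per conversion."""
    spend: dict[str, float] = defaultdict(float)
    conv: dict[str, int] = defaultdict(int)
    for c in creatives:
        label = getattr(c, dimension)
        spend[label] += c.spend
        conv[label] += c.conversions
    # Labels with no conversions yet get an infinite CPA instead of a division error.
    return {k: (spend[k] / conv[k] if conv[k] else float("inf")) for k in spend}


library = [
    Creative("ad1", "outcome-first", "ugc-video", "question", "review", "free-shipping", 500, 20),
    Creative("ad2", "outcome-first", "static", "stat", "review", "free-shipping", 300, 10),
    Creative("ad3", "feature-led", "ugc-video", "question", "demo", "free-shipping", 400, 8),
]
print(cpa_by(library, "angle"))
print(cpa_by(library, "format"))
```

Grouping by angle and by format answers different questions from the same library, which is how “what tends to work for us” accumulates instead of being rediscovered each month.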

Step 4: Launch With Guardrails, Not With Grand Promises

The first launch should be designed to learn quickly without corrupting the account. That typically means fewer campaigns, clearer budget pacing, and controlled tests instead of scattered micro-campaigns that never exit the learning phase. Guardrails also include frequency watchouts, spend caps, and clear rules for when changes are allowed.
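Guardrails work best as explicit rules checked before a change ships. Here is a minimal sketch; every threshold below (a 20% maximum budget step, a 3-day wait between changes, a frequency ceiling of 4, a 1,000/day spend cap) and every name is a hypothetical placeholder, not platform guidance.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class Guardrails:
    max_budget_step: float = 0.20      # largest allowed relative budget increase per change
    min_days_between_changes: int = 3
    max_frequency: float = 4.0         # average impressions per person in the reporting window
    daily_spend_cap: float = 1_000.0


def check_budget_change(current: float, proposed: float, last_change: date,
                        today: date, frequency: float, rules: Guardrails) -> list[str]:
    """Return reasons to block a proposed budget change (an empty list means allowed)."""
    problems = []
    if (today - last_change).days < rules.min_days_between_changes:
        problems.append("too soon since last change")
    if proposed > current * (1 + rules.max_budget_step):
        problems.append("budget step too large")
    if proposed > rules.daily_spend_cap:
        problems.append("exceeds daily spend cap")
    if frequency > rules.max_frequency and proposed > current:
        problems.append("frequency already high; scaling may mostly buy repeat impressions")
    return problems


rules = Guardrails()
print(check_budget_change(500, 550, date(2025, 3, 1), date(2025, 3, 5), 2.1, rules))
print(check_budget_change(500, 800, date(2025, 3, 4), date(2025, 3, 5), 4.6, rules))
```

The first proposal passes; the second trips three rules at once. Keeping the rules in one place also makes them easy to revisit once the account has real history.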

Good agencies also set expectations about volatility. The goal of the first few weeks is not a miracle; it’s clarity. Once clarity exists, performance can compound.

Execution Layers

Once campaigns are live, execution is not “manage ads.” It’s maintaining several layers at the same time, each one supporting the others. When a paid social media agency is doing real work, you can usually map their weekly actions to these layers.

Layer 1: Offer and Message Matching

This layer is the contract you make with the customer: what you’re promising, who it’s for, and why now. When performance dips, this is often the true cause—even if the dashboard makes it look like a targeting issue. If the message is generic, the algorithm may still spend your budget, but you’ll pay for low intent.

A strong agency keeps offer testing on the roadmap. Not “constant discounting,” but testing positioning, risk reversal, and the proof that makes people believe you.

Layer 2: Creative Production and Testing Discipline

Creative is your engine. The job is to ship enough variety that the platform can find pockets of response, while keeping each test interpretable. If you change everything at once, you never learn what moved the needle.

This is also where teams win by being practical. You don’t need a studio shoot every week. You do need a system that can repeatedly produce strong hooks, credible proof, and clean calls to action.

Layer 3: Platform Operations and Budget Control

This layer is about keeping the account stable: budgets, pacing, consolidation versus segmentation, exclusions, and keeping learning uninterrupted. It’s where amateurs panic and professionals keep calm. When an agency makes changes, they should be able to explain what they expect to happen, how long they’ll wait, and what signal would prove them wrong.

It also includes protecting the account from “helpful” internal edits. One untracked landing page swap can erase weeks of learning. That’s why agencies with mature processes enforce change management.
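Change management can be as simple as a shared log that every edit goes through, so a performance shift can be checked against what changed just before it. Here is a minimal sketch with a hypothetical schema and sample entries.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass(frozen=True)
class Change:
    day: date
    owner: str
    area: str  # e.g. "landing page", "tracking", "budget", "creative"
    description: str


def changes_near(log: list[Change], shift_day: date, window_days: int = 3) -> list[Change]:
    """List logged changes made within window_days before (or on) a performance shift."""
    start = shift_day - timedelta(days=window_days)
    return [c for c in log if start <= c.day <= shift_day]


log = [
    Change(date(2025, 3, 2), "agency", "creative", "Launched 4 new hook variants"),
    Change(date(2025, 3, 9), "web team", "landing page", "Swapped hero section on /offer"),
    Change(date(2025, 3, 10), "web team", "tracking", "New cookie banner went live"),
]
for change in changes_near(log, shift_day=date(2025, 3, 11)):
    print(change.day, change.area, change.description)
```

When conversions dip on March 11, the log points straight at the landing page swap and the cookie banner instead of triggering a round of campaign edits.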

Layer 4: Measurement and Experimentation

This layer answers one question: did ads cause the outcome, or did they just show up near it? Attribution can point you in the right direction, but incrementality is what lets you scale with confidence. Meta explains how lift tests work by randomly dividing an audience into exposed and control groups in its lift and holdouts overview, and it provides technical detail on randomized lift study design in the lift studies guide.
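Random assignment is what lets a lift test attribute the difference between groups to the ads. Here is a minimal sketch of deterministic, hash-based assignment, a common way to split users so each one always lands in the same group; the 10% holdout, the salt string, and the user IDs are assumptions, and platform lift studies handle assignment on their side.

```python
import hashlib


def assign_group(user_id: str, salt: str = "lift-test-2025q2", holdout_share: float = 0.10) -> str:
    """Deterministically assign a user to 'control' or 'exposed'.

    Hashing the salted ID spreads users roughly uniformly over [0, 1), so about
    holdout_share of users land in control, unrelated to any user characteristic
    that might also predict conversion.
    """
    digest = hashlib.sha256(f"{salt}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") / 2**64
    return "control" if bucket < holdout_share else "exposed"


groups = [assign_group(f"user-{i}") for i in range(100_000)]
print(groups.count("control") / len(groups))
```

Changing the salt reshuffles everyone, which is useful for starting a fresh test without reusing the previous holdout.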

When budgets are meaningful, this layer becomes the seatbelt. It’s the difference between “we’re scaling because ROAS looks great” and “we’re scaling because we proved lift.”

Optimization Process

Optimization is not a set of hacks. It’s a workflow: decide what you’re testing, run it long enough to learn something, then turn the result into a new default. A paid social media agency that can’t describe their optimization process usually ends up making random changes and calling it “active management.”

Build an Experiment Backlog That’s Tied to the Business Outcome

The backlog should include creative tests, offer tests, landing page tests, and measurement tests, each written as a hypothesis. “Try broad targeting” is not a hypothesis. “If we lead with outcome-first proof instead of features, we will increase qualified lead rate because the audience will self-select” is closer to how professionals think.

Each hypothesis should also define what success looks like and what would disprove it. That’s what keeps optimization from turning into opinion wars.
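A backlog entry can carry the hypothesis, the metric, and the pass/fail rule together, so the verdict is agreed before results arrive. Here is a minimal sketch using the qualified-lead example above; the field names, thresholds, and minimum run length are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Hypothesis:
    change: str
    expected_effect: str
    metric: str
    baseline: float        # current value of the metric, for reference
    min_success: float     # result at or above this supports the hypothesis
    disprove_below: float  # result below this counts against it
    min_days: int          # how long the test must run before anyone judges it

    def verdict(self, observed: float, days_run: int) -> str:
        if days_run < self.min_days:
            return "keep running"
        if observed >= self.min_success:
            return "supported: make it the new default"
        if observed < self.disprove_below:
            return "disproved: revert and log the learning"
        return "inconclusive: refine or extend"


h = Hypothesis(
    change="Lead with outcome-first proof instead of features",
    expected_effect="Audience self-selects, so more leads qualify",
    metric="qualified lead rate",
    baseline=0.20,
    min_success=0.23,
    disprove_below=0.20,
    min_days=14,
)
print(h.verdict(observed=0.24, days_run=7))
print(h.verdict(observed=0.24, days_run=14))
print(h.verdict(observed=0.21, days_run=14))
```

The middle band matters: a result that neither clears the bar nor falls below baseline is logged as inconclusive rather than argued into a win.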

Run on a Consistent Cadence

Most teams lose because they optimize on emotion. A calm weekly cadence beats frantic daily tweaks. That cadence typically includes a weekly creative review, a measurement QA check, and a single focused test that the whole team agrees to judge fairly.

When something goes wrong, cadence becomes even more important. Instead of thrashing, the agency should isolate the problem by checking signal quality, creative fatigue, and auction dynamics in a logical order.
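The “logical order” can be written down as a checklist that reports the first failing check. Here is a minimal sketch; the metric names and thresholds are hypothetical, and a real diagnosis needs judgment beyond three rules.

```python
def diagnose(metrics: dict[str, float]) -> str:
    """Walk checks in order: signal quality, then creative fatigue, then auction dynamics."""
    checks = [
        ("signal quality", metrics["tracked_to_backend_ratio"] < 0.85,
         "tracked conversions fell behind backend orders; fix tracking before touching campaigns"),
        ("creative fatigue", metrics["ctr_change"] < -0.20 and metrics["frequency_change"] > 0.20,
         "CTR is falling while frequency rises; rotate in new creative"),
        ("auction dynamics", metrics["cpm_change"] > 0.25,
         "CPMs jumped; compare against market benchmarks before changing strategy"),
    ]
    # The first failing check wins, so a tracking problem is never misread as fatigue.
    for name, failed, action in checks:
        if failed:
            return f"{name}: {action}"
    return "no obvious cause: check offer, landing page, and seasonality"


print(diagnose({"tracked_to_backend_ratio": 0.95, "ctr_change": -0.30,
                "frequency_change": 0.40, "cpm_change": 0.30}))
```

Signal quality comes first because every later check reads metrics that depend on tracking being intact.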

Protect Signal Quality Like It’s Performance Itself

Signal quality is performance. When conversion reporting becomes incomplete, the platform learns from a distorted picture of reality, and bidding can drift in ways that are hard to diagnose. That’s why many high-performing advertisers move measurement infrastructure toward server-side collection for better control and durability.

Square describes the shift in its engineering write-up on server-to-server integration with server-side Google Tag Manager, and Google highlights the result, a 46% improvement in conversion tracking, in its Square case study and again in its product announcement about server-side tagging for performance and privacy.

Implementation Stories

Square’s “We’re Flying Blind” Moment and the Fix That Made Measurement Trustworthy

The panic didn’t come from a bad quarter. It came from not knowing whether the quarter was actually bad. Teams could feel spend increasing, but the conversion picture was fuzzy enough that every decision felt like a bet instead of a plan.

Leadership pressure made it worse. When budgets rise, the question stops being “can we get more clicks?” and becomes “can we prove these conversions are real?” In that moment, a paid social media agency’s job is not to reassure—it’s to bring clarity.

Square was already a sophisticated business with a complex ecosystem of online and offline behavior. Their marketing measurement had to survive changing privacy conditions, browser limitations, and the reality that customers don’t always convert in clean, trackable ways. They also needed a setup that didn’t trade privacy for performance.

They hit a wall that many growing advertisers recognize: measurement gaps started to distort optimization. If conversions aren’t captured consistently, the algorithms you rely on learn the wrong lessons. And when every dashboard disagrees, teams waste energy debating numbers instead of improving outcomes.

The turning point was realizing the problem wasn’t “marketing performance” first—it was data collection and control. Instead of trying to argue attribution models into submission, they rebuilt how signals moved from the site to marketing partners. That reframed the work from analytics theater into infrastructure.

They implemented server-side tagging through Google Tag Manager, routing and enriching event data in a more controlled way. Google’s case study notes that early results improved Square’s ability to track conversions by 46%, and Square’s own engineering post on server-to-server integration with server-side GTM cites the same figure while explaining the gains in observability and durability. Google also references the improvement in its post on bringing performance and privacy together with server-side tagging.

Then the messy part arrived. Better measurement can make performance look different overnight, which can spark internal fear that “something broke.” The team had to resist overreacting to reporting shifts and instead validate changes calmly, partner by partner, until the new baseline was trustworthy.

The payoff wasn’t just a prettier dashboard. It was decision-making confidence: cleaner conversion visibility, better control over data flows, and a foundation that supports scale without relying on luck. That’s the kind of outcome a paid social media agency should be building toward—stable systems that keep working even when the environment changes.

What You Should Be Able to See Every Month

- A clear learning log: what was tested, what changed, what was learned, and what the next test is.

- A creative performance map: which angles and formats are working, not just which ads spent money.

- A measurement health check: confirmation that events and conversions still match backend reality.

- A scaling plan with constraints: where budget can safely increase, and what would trigger caution.

The Red Flags That Usually Predict Wasted Spend

- Constant changes with no hypothesis: activity without a learning structure is just churn.

- Reporting that shifts definitions: when “success” changes every month, nobody can trust the trend.

- No plan for incrementality: if you can’t measure lift at meaningful spend levels, scaling becomes guesswork.

- Creative treated as a one-off: when new creative stops, performance often slowly collapses.

In Part 4, we’ll go deeper into analytics and how a paid social media agency should build dashboards that executives trust, without confusing attribution with causality.

Statistics and Data

A paid social media agency lives and dies by data quality, but not because dashboards are glamorous. It’s because your ads are competing inside auctions where tiny changes compound fast, and the only way to scale safely is to understand what’s actually happening underneath the surface.

At a market level, the stakes are obvious. U.S. internet advertising revenue reached $258.6B in 2024, and social media advertising revenue grew to $88.8B in 2024. Zooming out further, WPP Media’s latest forecast projects global advertising revenue (excluding U.S. political advertising) to reach $1.14T in 2025.

Now bring it back to campaign reality: efficiency can swing quickly from quarter to quarter. Skai’s Q4 2025 digital trends summary describes paid social as a “triple win,” with spend up 9%, clicks up 23%, and CPMs down 6%, and Skai repeats the same topline in its Q4 2025 key takeaways post on planning for 2026. That kind of swing is exactly why serious teams treat analytics as a control system, not a retroactive report card.

Performance Benchmarks

Benchmarks are useful when they stop you from panicking, and dangerous when they convince you to copy someone else’s playbook. A paid social media agency should use benchmarks as guardrails, then build your real baseline from your own history, your own margins, and your own funnel.

Market Trend Benchmarks That Help You Sanity-Check Movement

If your CPM rises or falls, you want to know whether that’s your account, your creative, or the broader auction. Reports that aggregate large datasets can help you stay grounded. Tinuiti’s Q3 2025 benchmark notes that on Facebook, ad impressions rose 15% year over year while CPM fell 6%, a pattern that can happen when inventory expands faster than demand. That lines up with Skai’s Q4 2025 finding of CPMs down 6% while clicks surged, which is a very different environment than the “everything is getting more expensive forever” narrative people repeat out of habit.

Use trend benchmarks for context, not judgement. If your costs spike while the market is easing, that’s a strong hint the problem is internal. If everyone’s costs spike, your job is to adapt rather than blame the agency.

Account Benchmarks You Can Actually Operate Against

This is where the agency earns its keep. These aren’t vanity benchmarks; they’re operating baselines that tell you whether the system is healthy:

- Signal coverage: do your tracked conversions reliably match backend reality, or are you “optimizing” on missing data?

- Creative throughput: how many genuinely new concepts ship weekly, and how quickly do winners emerge?

- Efficiency over time: are cost per desired outcome and conversion rates stable enough to forecast, or do they swing wildly?

- Incrementality confidence: can you prove lift at meaningful spend levels, or are you depending on attribution narratives?
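Two of these baselines reduce to simple ratios you can recompute every week. The sketch below is illustrative only: it assumes you can export order IDs from both the ad platform’s tracked conversions and your backend, and it uses the coefficient of variation of weekly cost per outcome as a rough stability score. The function names, IDs, and numbers are hypothetical.

```python
from statistics import mean, pstdev


def signal_coverage(tracked_ids: set[str], backend_ids: set[str]) -> float:
    """Share of backend conversions that the ad platform also recorded."""
    if not backend_ids:
        return 0.0
    return len(tracked_ids & backend_ids) / len(backend_ids)


def cost_per_outcome_cv(weekly_spend: list[float], weekly_outcomes: list[int]) -> float:
    """Coefficient of variation of weekly cost per outcome (lower means steadier)."""
    cpos = [s / o for s, o in zip(weekly_spend, weekly_outcomes) if o > 0]
    return pstdev(cpos) / mean(cpos)


# Hypothetical exports
backend = {"A1", "A2", "A3", "A4", "A5"}
tracked = {"A1", "A2", "A4", "Z9"}  # Z9 has no backend match: worth investigating too
coverage = signal_coverage(tracked, backend)

spend = [1000.0, 1000.0, 1000.0, 1000.0]
outcomes = [50, 40, 50, 40]
cv = cost_per_outcome_cv(spend, outcomes)
print(f"coverage={coverage:.0%} cv={cv:.2f}")
```

A falling coverage number means the platform is optimizing on an increasingly partial picture; a rising CV means forecasts built on last month’s cost per outcome are getting less trustworthy.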

Why “Universal CTR” and “Universal ROAS” Benchmarks Mislead

Two brands can both be “doing great” while having totally different CTRs and ROAS. Creative formats, audience temperature, offer type, and attribution windows change what “good” looks like. That’s why platform-run experiments matter more than generic averages: they’re built to estimate what your ads caused, not how your ad account compares to a blended market mix.

Meta frames this directly: Conversion Lift estimates the incremental effect of your ads, which is the kind of benchmark that actually supports decision-making when you’re scaling budgets.

Analytics Interpretation

A clean dashboard is nice. A dashboard that changes decisions is the goal. The best paid social media agency reporting reads like a story of cause and effect: what changed, why it likely changed, what you did about it, and what you expect next.

Separate Leading Indicators From Outcomes

Outcomes like revenue, qualified leads, and pipeline are what you’re paying for, but they’re lagging indicators. Leading indicators help you spot problems early: creative fatigue signals, landing page conversion rate shifts, frequency creep, or sudden changes in conversion signal quality.

When a team only watches outcomes, the first time they notice a problem is after the budget has already been wasted. When they watch leading indicators too, they can intervene while performance is still salvageable.
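A leading-indicator check can be as simple as comparing recent days against an early baseline. The sketch below flags possible creative fatigue when frequency (here, daily impressions divided by daily reach, a simplification of the cumulative frequency platforms report) climbs while CTR falls. The 20% CTR drop and 1.5x frequency thresholds are hypothetical placeholders, not platform guidance; tune them to your own history.

```python
from dataclasses import dataclass


@dataclass
class DailyStats:
    impressions: int
    reach: int
    clicks: int

    @property
    def frequency(self) -> float:
        return self.impressions / self.reach


def fatigue_flag(days: list[DailyStats], window: int = 3,
                 ctr_drop: float = 0.20, freq_rise: float = 1.5) -> bool:
    """Compare the last `window` days with the first `window` days."""
    if len(days) < 2 * window:
        return False  # not enough history to compare
    early, recent = days[:window], days[-window:]
    early_ctr = sum(d.clicks for d in early) / sum(d.impressions for d in early)
    recent_ctr = sum(d.clicks for d in recent) / sum(d.impressions for d in recent)
    early_freq = sum(d.frequency for d in early) / window
    recent_freq = sum(d.frequency for d in recent) / window
    # Fatigue pattern: the same people see the ad more often and click less
    return recent_ctr <= early_ctr * (1 - ctr_drop) and recent_freq >= early_freq * freq_rise


healthy = [DailyStats(10_000, 8_000, 150)] * 6
tired = [DailyStats(10_000, 8_000, 150)] * 3 + [DailyStats(10_000, 4_000, 100)] * 3
print(fatigue_flag(healthy), fatigue_flag(tired))
```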

Don’t Confuse Attribution With Causality

Attribution answers “who gets the credit.” Causality answers “what actually caused the result.” A paid social media agency that can’t explain the difference will always be tempted to scale whatever looks best in-platform, even when that performance is inflated by overlap with other channels.

Lift studies exist precisely to solve this. Google Ads help documentation describes lift studies as going beyond clicks and impressions to measure the true impact of your campaigns. Meta’s Search Lift approach is also built on experimentation; industry coverage explains that Search Lift is powered by a conversion lift study that splits a target audience into test and control groups to estimate incremental search behavior driven by ads.
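The arithmetic behind a test-versus-control readout is straightforward, even though the platforms handle randomization for you. The sketch below is a generic two-group comparison, not Meta’s or Google’s actual methodology: it assumes randomized groups, computes relative lift and incremental conversions, and uses a pooled two-proportion z-test to judge whether the difference is likely noise. All counts and spend are hypothetical.

```python
from math import sqrt
from statistics import NormalDist


def lift_readout(test_users: int, test_conv: int,
                 control_users: int, control_conv: int,
                 test_spend: float) -> dict[str, float]:
    p_test = test_conv / test_users
    p_ctrl = control_conv / control_users
    # Conversions the test group would likely have produced anyway, scaled from control
    baseline = p_ctrl * test_users
    incremental = test_conv - baseline
    # Pooled two-proportion z-test (two-sided)
    pooled = (test_conv + control_conv) / (test_users + control_users)
    se = sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (p_test - p_ctrl) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return {
        "relative_lift": (p_test - p_ctrl) / p_ctrl,
        "incremental_conversions": incremental,
        "cost_per_incremental": test_spend / incremental if incremental > 0 else float("inf"),
        "p_value": p_value,
    }


result = lift_readout(test_users=200_000, test_conv=2_400,
                      control_users=200_000, control_conv=2_000,
                      test_spend=30_000.0)
print(result)
```

Comparing `cost_per_incremental` with the platform-reported cost per conversion is a quick way to see how much of the attributed performance was real lift.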

Read the Shape of Performance, Not Just the Average

Two weeks can have the same ROAS and still mean completely different things. One might be stable with consistent conversion rate and steady creative performance. The other might be a roller coaster where one ad carried the account and then burned out. An agency should help you see distribution: which creatives are carrying results, how performance changes by audience temperature, and whether scale is coming from real demand or from retargeting overlap.
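One quick way to see that distribution is to measure how concentrated results are across creatives. The sketch computes the share carried by the top creative and a Herfindahl-Hirschman-style index (the sum of squared shares, ranging from 1/n when results are spread evenly to 1.0 when one ad does everything). The creative names and counts are hypothetical, and any threshold for “too concentrated” is a judgment call.

```python
def concentration(conversions_by_creative: dict[str, int]) -> tuple[str, float, float]:
    """Return (top creative, its share of conversions, sum of squared shares)."""
    total = sum(conversions_by_creative.values())
    shares = {name: n / total for name, n in conversions_by_creative.items()}
    top = max(shares, key=shares.get)
    hhi = sum(s * s for s in shares.values())
    return top, shares[top], hhi


week_a = {"ugc_testimonial": 40, "founder_story": 35, "product_demo": 25}
week_b = {"ugc_testimonial": 90, "founder_story": 6, "product_demo": 4}
print(concentration(week_a))
print(concentration(week_b))
```

Both weeks deliver the same 100 conversions, but week B’s index of roughly 0.82 (versus roughly 0.35 for week A) shows one ad carrying the account, which is exactly the burnout risk described above.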

Turn Reporting Into Forecasting

The practical question executives care about is “what happens if we spend more?” That’s why reporting should naturally lead into forecasting: marginal returns, budget scenarios, and what the next constraint will be (creative volume, landing page capacity, sales team follow-up, or inventory).
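Marginal returns are what separate “ROAS looks fine” from “the next dollar is worth spending.” The sketch assumes a hypothetical saturating response curve, conversions = cap × (1 − e^(−spend/scale)), which you would fit from your own spend tests or lift results; the `CAP` and `SCALE` values here are invented for illustration. It compares average cost per conversion with the marginal cost of the next conversion at several budgets.

```python
from math import exp


def conversions(spend: float, cap: float, scale: float) -> float:
    """Assumed diminishing-returns curve: approaches `cap` as spend grows."""
    return cap * (1 - exp(-spend / scale))


def marginal_cpa(spend: float, cap: float, scale: float) -> float:
    """Cost of one more conversion = 1 / (derivative of conversions w.r.t. spend)."""
    derivative = (cap / scale) * exp(-spend / scale)
    return 1 / derivative


CAP, SCALE = 2_000.0, 50_000.0  # hypothetical fitted parameters

for budget in (25_000, 50_000, 100_000):
    avg = budget / conversions(budget, CAP, SCALE)
    marg = marginal_cpa(budget, CAP, SCALE)
    print(f"budget {budget:>7,}: avg CPA {avg:6.2f}, marginal CPA {marg:7.2f}")
```

On any curve with diminishing returns, the marginal CPA rises faster than the average, so a budget scenario that only quotes average CPA will overstate what the extra spend buys.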

When paid social is efficient across the market, scaling opportunities open up. Skai’s Q4 2025 report calling out click growth outpacing spend while CPMs fell is exactly the kind of environment where a well-instrumented account can grow fast—if your measurement is strong enough to keep you honest.

Case Stories

Gruvi’s “Holiday Pressure Cooker” and the Moment Measurement Had to Grow Up

The week before holiday budgets ramped, the team faced the classic nightmare: performance looked decent, but nobody trusted it. Paid social was getting credit, search was getting credit, and email was getting credit, all at the same time. If they scaled spend aggressively and the numbers were inflated, the miss would be loud and expensive.

The pressure wasn’t just internal. Holiday windows compress decision-making, and “we’ll learn over the next quarter” is not a luxury you have in mid-November. When everyone is moving at speed, attribution arguments can become an excuse to do nothing—or to do the wrong thing confidently.

Gruvi, an ecommerce brand, ran campaigns across Meta during a high-stakes period and needed to know what the ads were truly contributing. The goal wasn’t vanity engagement; it was incremental value they could stand behind. That’s the moment when a paid social media agency has to shift from optimization mode into proof mode.

The wall hit when the team realized dashboards couldn’t settle the debate. Platform reporting is useful, but it’s still reporting inside a platform’s own logic. When multiple channels are active, “credited” is not the same as “caused,” and last-click logic can flatten a messy customer journey into a misleading story.

The epiphany was simple: stop arguing about credit and run an experiment. Meta’s own documentation explains that Conversion Lift measures incremental effect by comparing outcomes between people who could see ads and those who couldn’t. Gruvi’s Meta success story notes they evaluated campaigns that ran from November 12, 2024 to January 5, 2025 using a Conversion Lift study with Search Lift methodology.

Then came the journey: campaigns were structured so the experiment could run cleanly, and the team focused on learning rather than micro-tweaking every day. They leaned into automation where it helped, including modern shopping-focused setups; Meta’s developer overview describes Advantage+ Shopping Campaigns as an automated approach designed to use machine learning to optimize ecommerce advertising.

The final conflict was the uncomfortable part: experiments can challenge beliefs. When you measure incrementality, you sometimes discover that the campaigns everyone loved were mostly capturing demand that would have converted anyway. That kind of result can create friction, because it forces hard decisions about creative direction, budget allocation, and what “success” really means.

The dream outcome is clarity you can operate from. With a lift-based view, the team can decide where to scale, where to cut, and where to invest in creative that creates new demand rather than just harvesting existing demand. It’s the kind of confidence a paid social media agency should bring to the table: not louder dashboards, but decisions that stay rational even when the calendar gets stressful.

Professional Promotion

“Promotion” in a mature paid social media agency relationship doesn’t mean hype. It means communicating performance in a way that earns trust, keeps stakeholders aligned, and makes it easier to keep investing when results are good—and to make smart changes when they aren’t.

Promote the Process, Not Just the Result

Results fluctuate. Processes compound. When a team earns internal support, it’s usually because leadership understands the system behind the numbers: what’s being tested, what’s being learned, and what the next constraint is. That’s how you avoid the trap of celebrating a spike you can’t repeat.

It also helps stakeholders contextualize performance inside the wider market. When the industry is in an efficiency upswing—like Skai’s Q4 2025 view of click growth outpacing spend with lower CPMs—the conversation should shift toward scaling responsibly, not endlessly squeezing the same budget.

Make Executive Updates Short, Honest, and Decision-Oriented

- One sentence on the goal: what outcome are we optimizing for right now?

- One paragraph on what changed: creative, offer, landing experience, budget, or measurement.

- One paragraph on what we learned: what evidence supports the conclusion.

- One clear decision request: scale, hold, invest in creative production, or run a lift test.

Use Proof Standards That Match the Size of the Bet

When spend is small, directional learning is fine. When spend becomes meaningful, you need causality tools to support big decisions. That’s why lift studies exist across platforms: they create a higher standard of proof than attribution alone. Google’s lift overview frames this as measuring true impact beyond surface metrics, and Meta’s lift methods are designed to do the same for Meta campaigns via Conversion Lift.
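Matching proof to the size of the bet usually starts with a power calculation: how large must test and control groups be to detect the lift you care about? A minimal sketch using the standard two-proportion sample-size formula, assuming a 1% baseline conversion rate, 5% two-sided significance, and 80% power (illustrative choices, not platform defaults):

```python
from math import ceil
from statistics import NormalDist


def users_per_group(baseline_rate: float, relative_lift: float,
                    alpha: float = 0.05, power: float = 0.80) -> int:
    """Approximate users needed in each group to detect `relative_lift`."""
    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    variance = p1 * (1 - p1) + p2 * (1 - p2)
    return ceil((z_alpha + z_beta) ** 2 * variance / (p2 - p1) ** 2)


for lift in (0.20, 0.10, 0.05):
    print(f"detect {lift:.0%} lift: ~{users_per_group(0.01, lift):,} users per group")
```

Under these assumptions, detecting a 20% lift needs roughly 43,000 users per group, while a 10% lift needs roughly 163,000. Halving the detectable effect roughly quadruples the audience, which is why small budgets usually settle for directional learning.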

In Part 5, we’ll connect analytics to the wider ecosystem—how paid social interacts with creative operations, sales follow-up, lifecycle marketing, and budgeting—so performance becomes a coordinated system instead of a channel-by-channel tug of war.

Future Trends

The next wave of advantage for a paid social media agency won’t come from “new targeting hacks.” It will come from adapting faster to three big shifts: heavier automation, stricter privacy expectations, and higher standards for proving incremental impact.

Automation is accelerating on every major platform, especially around creative generation and delivery. The direction is clear: Investopedia’s report on Meta’s AI ad roadmap describes plans to let brands more fully automate ad creation and targeting with AI by the end of 2026. At the same time, the privacy and consumer-trust side is tightening: Reuters reported, and The Wall Street Journal covered, Meta’s plan to use some AI chatbot interactions as inputs for ad personalization (outside the EU/UK at rollout).

The most practical implication is this: creative and measurement will matter more than ever. If platforms automate more of the “who sees what” logic, your edge becomes the inputs you feed the system and the proof you use to validate results.

Trend 1: Creative Becomes Both the Message and the Targeting

As platforms lean into automation, creative diversity becomes a performance lever, not a brand-only concern. If your account ships one angle, the algorithm learns one audience. If your account ships a portfolio of angles, you give the system more ways to find and scale real demand.

At the same time, brands are discovering that “AI everywhere” can create backlash if it feels inauthentic. Aerie’s decision to publicly commit to not using AI-generated people in ads became a major engagement moment, covered in Business Insider’s story on Aerie’s anti-AI stance. This is a preview of a bigger reality: generative tools can help you scale production, but trust still has to be earned.

Trend 2: Incrementality Becomes the Default Standard for “Proof”

At higher spend levels, the question shifts from “what got credit?” to “what caused the outcome?” That’s why lift testing is becoming more central. Meta describes Conversion Lift as measuring incremental effect, TikTok presents its Conversion Lift Study as a way to measure incrementality through experimentation, and Google frames lift studies as measuring true impact beyond clicks and impressions.

Expect more brands to demand this level of proof as budgets grow, especially as teams get tired of dashboards that look confident but don’t match business reality.

Trend 3: Marketing Mix Modeling Gets Practical, Not Academic

Marketing mix modeling is moving from “expensive and slow” to more usable for modern teams. Google’s open-source Meridian was built for privacy-safe measurement and unified planning, as explained in Think with Google’s Meridian overview, with technical details in Google’s Meridian documentation.

In February 2026, Google also introduced Scenario Planner to make MMM outputs easier for marketers to use day-to-day, described in Google’s announcement of Meridian Scenario Planner. For a paid social media agency, this matters because MMM can help allocate budgets across channels without relying on user-level identifiers.
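Many MMMs model two effects that user-level attribution ignores: carryover (an ad’s effect decays over the following weeks) and saturation (each extra dollar does less). The sketch below shows simplified versions of both transforms, a geometric adstock and a Hill curve, in plain Python. It is not Meridian’s API or its fitting procedure; the function names and the decay, half-saturation, and slope values are hypothetical.

```python
def geometric_adstock(spend: list[float], decay: float) -> list[float]:
    """Carry a fraction `decay` of the previous period's effect into each period."""
    carried, out = 0.0, []
    for x in spend:
        carried = x + decay * carried
        out.append(carried)
    return out


def hill_saturation(x: float, half_sat: float, slope: float) -> float:
    """Response in [0, 1); equals 0.5 when x == half_sat."""
    return x ** slope / (x ** slope + half_sat ** slope)


weekly_social_spend = [10_000.0, 0.0, 0.0, 20_000.0]
adstocked = geometric_adstock(weekly_social_spend, decay=0.5)
response = [hill_saturation(x, half_sat=15_000.0, slope=2.0) for x in adstocked]
print(adstocked)
print([round(r, 3) for r in response])
```

In a fitted model, a regression (Bayesian, in Meridian’s case) estimates how much each channel’s transformed series contributes to sales alongside baseline factors such as seasonality, and budget scenarios are read off those fitted curves.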

Trend 4: Server-Side and API-Based Signals Become the Reliable Baseline

Signal loss isn’t theoretical anymore. Teams are building more resilient pipelines using APIs and server-side approaches. TikTok describes the Events API as consolidating event sharing across web, app, offline, and CRM, and Google explains the logic behind server-side tagging for more control and durability.

Square’s engineering team also documented how they approached server-to-server data flow in their server-side GTM integration write-up, which pairs well with the broader shift toward measurement that keeps working even when browsers and policies change.
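Two details make server-side pipelines dependable in practice: normalizing and hashing customer identifiers before they leave your systems, and deduplicating when the same event arrives from both the browser and the server. The sketch below illustrates both with SHA-256 and a shared event ID. The field names and the “prefer the server copy” rule are hypothetical design choices; each platform (Meta’s Conversions API, TikTok’s Events API, and others) defines its own schema and deduplication behavior, so check its documentation before wiring anything up.

```python
import hashlib
from dataclasses import dataclass


def hash_email(email: str) -> str:
    """Trim and lowercase before hashing so the same address always matches."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


@dataclass(frozen=True)
class ConversionEvent:
    event_id: str      # hypothetical: shared by browser pixel and server for one action
    event_name: str
    source: str        # "browser" or "server"
    hashed_email: str


def deduplicate(events: list[ConversionEvent]) -> list[ConversionEvent]:
    """Keep one event per (event_name, event_id), preferring the server copy."""
    chosen: dict[tuple[str, str], ConversionEvent] = {}
    for ev in events:
        key = (ev.event_name, ev.event_id)
        if key not in chosen or ev.source == "server":
            chosen[key] = ev
    return list(chosen.values())


email_hash = hash_email("  Jane.Doe@Example.com ")
events = [
    ConversionEvent("order-1001", "Purchase", "browser", email_hash),
    ConversionEvent("order-1001", "Purchase", "server", email_hash),
    ConversionEvent("order-1002", "Purchase", "server", hash_email("sam@example.com")),
]
unique = deduplicate(events)
print(len(unique), [e.source for e in unique])
```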

Strategic Framework Recap

If you remember one thing from this guide, let it be this: a paid social media agency is valuable when it runs a system, not when it runs “ads.” The system connects strategy, creative, measurement, and iteration into a loop you can scale without losing your mind.

- Start with a business outcome: one primary conversion goal the company agrees matters.

- Make signals reliable: clean tracking and event pipelines so optimization is learning from truth, not missing data.

- Ship creative like a product: steady weekly output, clear hypotheses, and a library of angles that can scale.

- Operate with guardrails: fewer random changes, more controlled tests, calmer budgeting decisions.

- Prove incrementality as spend grows: lift studies and (when needed) MMM so you scale what causes growth, not what gets credit.

This is also why modern measurement tooling is getting so much attention. Digital advertising is enormous—U.S. internet advertising revenue reached $258.6B in 2024—so small improvements in clarity and process compound fast.

FAQ – Built for the Complete Guide

What does a paid social media agency actually do day to day?

They translate business goals into campaign structure, creative direction, and measurement, then run a testing cadence that steadily improves results. The best teams spend as much time on creative planning, landing-page alignment, and signal quality as they do inside ad accounts.

How quickly should I expect results after hiring a paid social media agency?

Expect clarity first, then performance. A strong first month usually looks like tracking stabilization, creative direction, a clean testing plan, and early directional learnings. Sustainable improvement usually shows up once the agency has shipped multiple creative cycles and has enough conversion volume to learn reliably.

Which platforms should a paid social media agency manage?

Only the platforms that match your audience, creative strengths, and buying cycle. The right mix is rarely “everywhere.” A good agency will explain why each platform is included and what role it plays in the funnel instead of defaulting to a checklist.

What is a lift study, and why does it matter?

Lift studies are controlled experiments designed to estimate the incremental impact of advertising by comparing outcomes between people who could see ads and a holdout group. Meta positions Conversion Lift as a way to measure incremental effect, and Google explains lift studies as measuring true impact beyond surface metrics.

Do I need server-side tracking?

Not always. You need reliable signals. If browser-side tracking is stable and your decisions aren’t sensitive to small reporting gaps, you may not need server-side infrastructure yet. If measurement breaks often or you need more control, Google’s overview of server-side tagging shows why teams move parts of measurement into a more controlled environment.

How much creative does paid social really need?

More than most teams think, but less than “daily new ads” panic suggests. The key is a sustainable weekly rhythm: a few new concepts and a few focused variations, with clean tagging so you learn what changed and why it mattered.

What should I keep in-house versus letting the agency own?

Keep your brand voice, customer insights, and business constraints close. Let the agency own the operational cadence: testing plans, implementation detail, reporting structure, and performance iteration. The healthiest setups look like a partnership where the agency runs the system and you provide the context that keeps it grounded.

How do I evaluate if a paid social media agency is good?

Ask for evidence of process, not promises. You should see clear hypotheses, disciplined testing, honest reporting, and a plan for incrementality as budgets grow. If everything sounds like certainty and none of it looks like a method, that’s a warning sign.

When does marketing mix modeling become useful?

MMM becomes useful when you need a privacy-safe way to allocate budgets across channels and you’re no longer satisfied with channel-level attribution alone. Google’s Meridian overview positions it as an open-source MMM framework designed to help teams measure impact across channels, and Meridian’s project documentation covers the implementation details.

Why does performance swing even when nothing “changed”?

Because the auction changes: competition, seasonality, and user behavior shift continuously. Creative fatigue and signal drift can also happen quietly. Trend reporting can help you sanity-check auction conditions; for example, Tinuiti’s Q3 2025 benchmark analysis shows how Facebook CPM and impression dynamics can change year over year.

What should I ask for during onboarding?

Ask for a measurement plan (events, QA routine, and definitions), a creative production plan (weekly output and review cadence), a testing backlog (hypotheses and priorities), and a reporting format that makes decisions easier instead of longer.

Work With Professionals

If you’ve read this far, you already know what separates a strong paid social media agency from “someone who runs ads”: systems, proof, and a repeatable way to learn. The funny thing is that these same skills are exactly what companies are hiring for right now—often on a contract basis, often remotely, often with urgent timelines.

That creates a real opportunity if you’re a marketer who wants more freedom without giving up serious work. There are already 11,838 open marketing jobs on Upwork, and the broader remote market keeps moving with active listings across boards like We Work Remotely’s sales and marketing roles and Remote OK’s marketing listings. That’s the reality behind the “10K+ remote marketing contracts” idea: demand is deep, but standing out requires a clean profile, strong positioning, and the confidence to close.

markework.com is built to help marketers and companies move faster without the usual marketplace friction. The platform is positioned as a marketing marketplace where you can post listings, build a profile, and connect directly, with “no middleman” and no project fees. That means you’re not watching a percentage disappear from every invoice—you keep what you earn and stay in direct control of the relationship.

For freelancers, the appeal is simple: you can build a profile that actually represents your strengths (paid social, lifecycle, SEO, analytics, creative strategy), browse roles that match your skill set, and negotiate directly with companies that need help now. For companies, it’s a clean way to post a role, review marketing profiles, and start a conversation without slow back-and-forth.

If you want to turn paid social skills into a steady pipeline of better clients, start by building a profile that reflects the system you now understand: your measurement discipline, your creative process, and your ability to prove impact. Then go where the work is and make it easy for teams to say yes.