Buying (or selling) social media marketing support is oddly hard for something that’s supposedly “easy to post.” The real work sits underneath the posts: strategy, creative systems, community, paid distribution, measurement, and the unglamorous operations that keep everything consistent.

That’s why social media marketing services packages matter. A good package doesn’t just bundle tasks; it bundles outcomes, responsibilities, timelines, and the data you’ll use to decide what to double down on—and what to stop.

This guide breaks down what these packages actually include, why businesses keep moving budget into social, and a framework you can use to choose (or build) a package that’s clear, measurable, and realistic to deliver.

Article Outline

- What Are Social Media Marketing Service Packages?

- Why Social Media Marketing Service Packages Matter

- Framework Overview

- Core Components

- Professional Implementation

- Package Tiers and What They Typically Include

- Pricing, Scoping, and Avoiding Surprise Work

- Measurement, Reporting, and Decision-Making

- Tools, Workflow, and the Modern Social Stack

- Common Mistakes (and How to Fix Them)

- FAQ

What Are Social Media Marketing Service Packages?

Social media marketing services packages are structured bundles of deliverables and responsibilities offered on a recurring basis (usually monthly). The best ones are designed around a specific “job to be done,” like building demand for a B2B company on LinkedIn, driving store traffic through short-form video, or turning social into a reliable customer care channel.

At their simplest, packages answer five practical questions:

- What gets done? Content, community management, paid campaigns, reporting, creative production, and more.

- How often? Weekly posting cadence, monthly reporting, daily engagement windows, and campaign cycles.

- Who does what? What the provider owns versus what the client must supply (approvals, product updates, access, brand assets).

- How is success measured? Metrics tied to business outcomes, not just vanity engagement.

- What happens when priorities change? Rules for scope shifts, add-ons, and testing.

Packages exist because social media is now too big to run on improvisation. When there are billions of active identities across platforms and people spend meaningful time inside social feeds, brands are competing in an always-on attention market—not a once-a-week posting schedule. That scale shows up in the numbers: the global “state of social” snapshot for 2025 tracks 5.24 billion social media user identities worldwide, and it keeps climbing year over year.

Why Social Media Marketing Service Packages Matter

For most businesses, the problem isn’t whether social “works.” The problem is that social is a moving target: formats change, distribution shifts, competitors copy what’s working, and internal teams get stretched thin. Packages matter because they create stability—so you can improve over time instead of constantly restarting.

There’s also a budget reality: many marketing teams are being asked to deliver more without getting meaningfully more money. In Gartner’s 2025 spend research, a majority of CMOs said they don’t have sufficient budget to execute their strategy. In that environment, clear packages reduce waste: fewer ad-hoc requests, fewer “can we also…” surprises, and fewer weeks lost to unclear approvals.

Social also keeps pulling budget because it’s where discovery, evaluation, and purchase decisions increasingly blend together. In Europe, channel growth signals the same direction of travel: IAB Europe notes that social media advertising grew strongly in 2024. And on the consumer side, research on how people shop and research products shows social is no longer a “top of funnel only” channel—McKinsey’s 2025 consumer research highlights rising use of social media for product research across markets.

When you put those forces together—tight budgets, shifting platforms, and social influencing more of the journey—the right package becomes a risk-management tool. It gives you a repeatable system that can run consistently, while still leaving room for experimentation.

Framework Overview

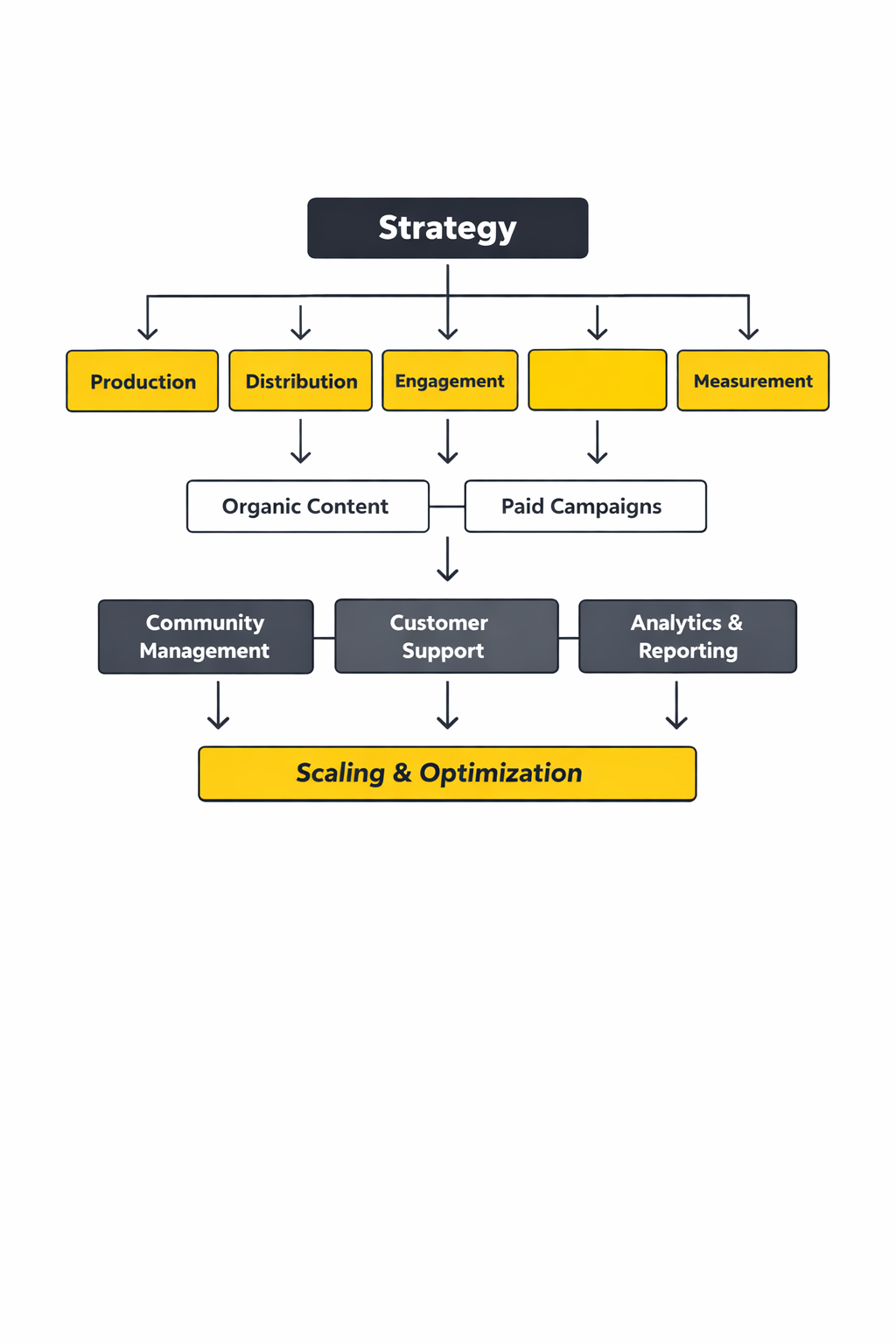

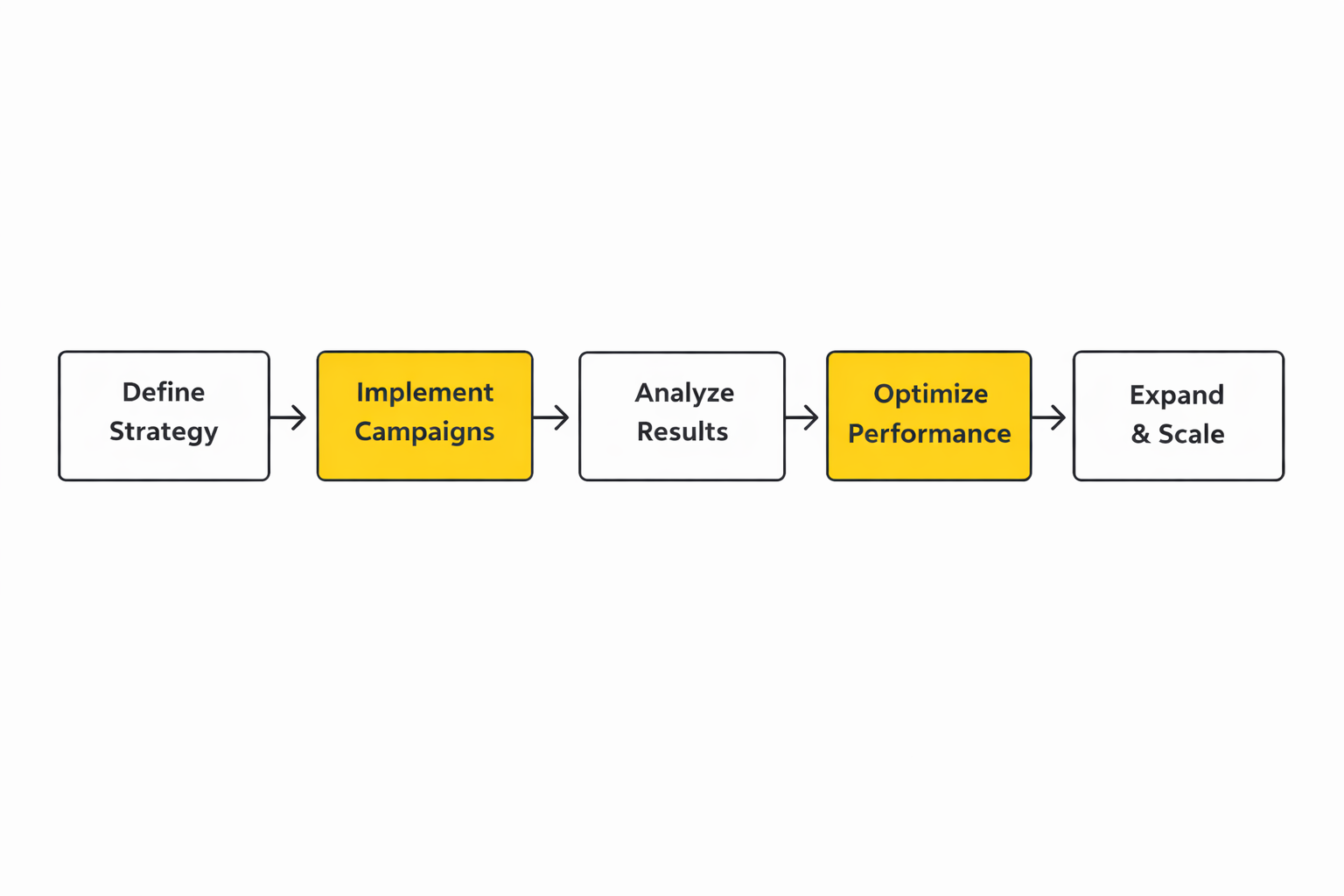

To make social media marketing services packages easy to compare, use a simple framework: Strategy → Production → Distribution → Engagement → Measurement. Every serious package fits into these five layers. If one layer is missing, results become unpredictable (or impossible to prove).

- Strategy: Audience, positioning, content pillars, channel choices, and what “winning” looks like for this business.

- Production: Creative direction, content creation, editing, design systems, and approvals.

- Distribution: Publishing cadence, paid amplification, creator collaborations, and repurposing workflows.

- Engagement: Community management, customer care, social listening, and response playbooks.

- Measurement: Reporting that connects activity to outcomes, plus a decision rhythm for optimizations.

This framework also helps you avoid the most common trap: paying for “a lot of content” without paying for the system that makes that content perform. Even platform giants are openly signaling where the money flows: advertising remains the engine, and the companies that win are investing heavily in the infrastructure behind targeting, relevance, and creative iteration. You can see it in platform earnings disclosures like Meta’s full-year 2025 results, which underline how central ad performance is to the ecosystem.

Core Components

Most social media marketing services packages are combinations of the same building blocks. The difference between a “cheap package” and a “serious package” is usually not the list of items—it’s the depth, the quality controls, and the operational clarity.

Strategy and planning

This is where you define what you will post, why it matters, and how it connects to business goals. It includes channel selection, content pillars, creative direction, and a testing plan. Without it, your calendar becomes a random walk through trends and last-minute asks.

Content system and production

Production includes writing, design, editing, and creative QA—but the real unlock is the system: templates, repeatable formats, and a process for turning one strong idea into multiple platform-native assets. This is how you keep output high without burning out your team.

Publishing and distribution

Distribution is the difference between “we posted” and “people actually saw it.” It covers scheduling, repurposing, and—when it fits—paid boosts to control reach. Reports tracking ad growth help explain why distribution is often the make-or-break layer; for example, Digital 2026 reporting highlights projections that global social media ad spend continued growing in 2025.

Community management and customer care

Engagement isn’t just replying to comments. It’s moderation rules, escalation paths, response templates, and making sure social doesn’t become a brand risk. Many brands now treat social as a frontline relationship channel, which is why research-focused reports like the 2025 Sprout Social Index dedicate significant attention to expectations around responsiveness and brand interaction.

Measurement and optimization

Measurement is only useful when it changes decisions. The best packages include a clear reporting cadence, a small set of KPIs that match the goal, and an optimization rhythm. If reporting is just screenshots and vanity metrics, it won’t help you defend budget—or improve performance.

Professional Implementation

Even a well-designed package fails if implementation is sloppy. Professional delivery is about reducing friction: fewer approval delays, fewer missing assets, fewer “we didn’t know that was included,” and fewer weeks where everyone is busy but nothing ships.

Start with a clean intake and access setup

Implementation begins with access (ad accounts, pages, pixels, analytics, brand assets) and an intake that forces clarity. You want decisions written down early: target audiences, offers, brand voice, compliance constraints, and response policies. This is also where you define how fast approvals need to happen to keep the cadence realistic.

Build the weekly operating rhythm

Strong packages run on a simple cadence: plan, produce, approve, publish, review, iterate. The exact cadence depends on the business, but the principle stays the same: fewer massive “campaign launches,” more continuous improvement.

Make scope boundaries obvious (and painless)

Scope clarity protects both sides. It prevents providers from becoming a catch-all production studio, and it prevents clients from feeling nickel-and-dimed. The goal is not to say “no” more often—it’s to create a predictable way to say “yes” when priorities change.

In Part 2, we’ll translate all of this into concrete package tiers and show how to scope deliverables so the work stays sustainable, measurable, and profitable.

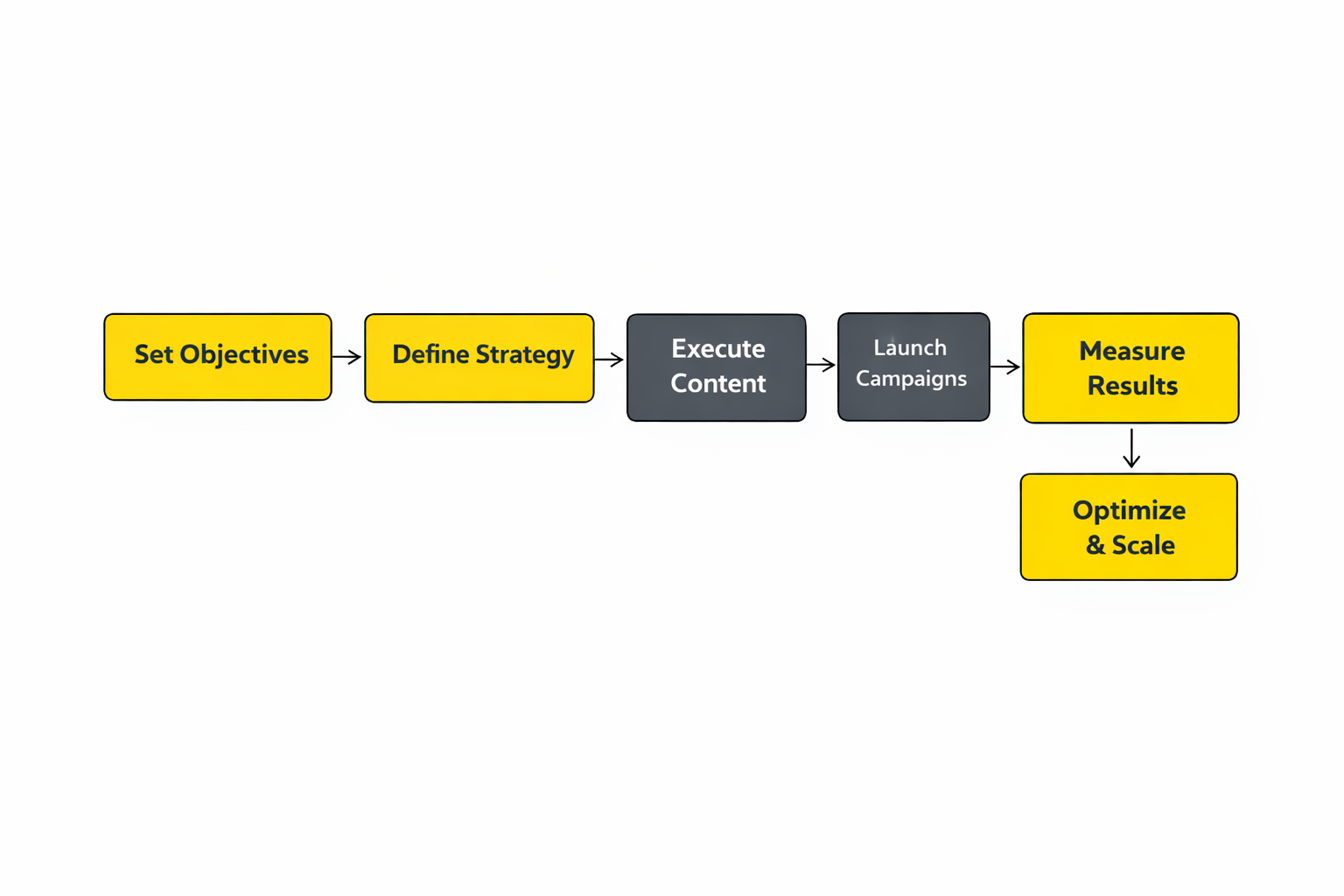

Step-by-Step Implementation

Most social media marketing services packages fail for one simple reason: everyone agrees on “more content,” but nobody agrees on how the work will move from idea to published post to measurable outcome. Implementation fixes that. It turns a package from a menu of deliverables into a reliable operating rhythm.

Step 1: Lock the intake into a single source of truth

Start by choosing one place where requests live (not email, not DMs, not “just Slack me”). That intake should force clarity: goal, audience, channel, deadline, creative format, and what “good” looks like. When this is missing, a package quietly becomes reactive support, and the work expands without anyone noticing.

Step 2: Set access, roles, and guardrails before you create anything

Get the boring setup done early: admin access, page roles, ad account permissions, pixels, and reporting access. If the package includes paid distribution, ensure measurement plumbing is stable so conversions aren’t “mysteries” later. For Meta-heavy packages, server-side measurement is commonly part of a modern baseline, and Meta’s Conversions API documentation is the canonical reference for what that connection actually is.

Step 3: Build a two-layer plan: pillars and a short sprint calendar

Professional packages don’t plan the entire month in stone; they plan what must be consistent, then leave room for what will inevitably change. Define a small set of pillars (themes you can repeat without boring your audience) and map them into a two-week sprint calendar. That sprint calendar becomes the unit of execution: easier approvals, faster iteration, and fewer last-minute “can we redo this?” surprises.

Step 4: Turn content into an assembly line, not a craft project

Batch production by format. Write captions in one session, record in another, edit in another, and design in another. This is how small teams ship at a professional cadence without feeling like they’re constantly context-switching and constantly behind.

Step 5: Make approvals predictable and time-boxed

Approvals should answer one question: “Is this on-brand, compliant, and aligned with the goal?” Everything else is preference and can be tested. If your package includes client approvals, set a clear window (for example, 48 hours) and define what happens if feedback comes late. When feedback stalls on preference rather than on those three checks, Meta’s explanation of A/B testing is a useful reminder that “we don’t agree” can be resolved by testing instead of debating.

Step 6: Publish with tracking you can trust

Before posts go live, make sure links are tagged consistently so reporting stays clean. Google’s GA4 URL builder guidance is the simplest standard to follow for UTMs across organic posts, influencer links, and paid campaigns. If the package includes multiple platforms and multiple people publishing, this one habit prevents months of messy “where did this traffic come from?” reporting later.
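As a rough illustration of what "tagged consistently" can look like in practice, here is a minimal Python sketch. The helper name `add_utm`, the lowercase-and-underscore convention, and the example URL are assumptions made for illustration; only the `utm_source`, `utm_medium`, `utm_campaign`, and `utm_content` parameter names come from the standard campaign URL scheme.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def add_utm(url, source, medium, campaign, content=None):
    """Return `url` with utm_* parameters appended, keeping existing query params.

    Values are lowercased and spaces become underscores so that
    "Instagram" and "instagram" do not show up as two sources in reports.
    Note: repeated query keys in the input collapse to their last value.
    """
    def norm(value):
        return value.strip().lower().replace(" ", "_")

    parts = urlsplit(url)
    query = dict(parse_qsl(parts.query, keep_blank_values=True))
    query.update({
        "utm_source": norm(source),
        "utm_medium": norm(medium),
        "utm_campaign": norm(campaign),
    })
    if content:
        query["utm_content"] = norm(content)
    return urlunsplit(parts._replace(query=urlencode(query)))


# Hypothetical example link for an Instagram Reel in a spring campaign.
tagged = add_utm(
    "https://example.com/pricing?ref=bio",
    source="Instagram",
    medium="social",
    campaign="Spring Launch",
    content="reel 01",
)
print(tagged)
```

Routing every link through one helper like this (or an equivalent shared spreadsheet formula) matters more than the exact naming convention: the convention only helps if everyone who publishes uses the same one.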

Step 7: Operationalize engagement like customer care, not “checking comments”

Set response rules: who replies, what gets escalated, and how fast you aim to respond. For Meta channels, many teams start with a unified inbox workflow in Business Suite, and Meta’s Business Suite Inbox overview is a good checklist of what should be enabled when engagement is part of the package deliverables.

Step 8: Review weekly, decide monthly

Weekly reviews are for patterns: which formats are earning attention, what topics are getting saved or shared, where drop-off happens, and which questions customers keep asking. Monthly decisions are for direction: what to scale, what to stop, and what to test next. That decision rhythm is what makes social media marketing services packages feel like a growth system instead of a content treadmill.

Execution Layers

When delivery is smooth, it’s because the work is layered. Each layer has different tools, different timelines, and different “quality signals.” Mixing them together is how teams end up overwhelmed while still feeling like nothing is moving.

Layer 1: Foundation and governance

This layer is access, roles, naming conventions, brand voice notes, compliance constraints, and where assets live. It’s the least visible part of the work, but it determines whether execution is calm or chaotic. If a package includes paid activity or ROI reporting, this is also where you confirm measurement fundamentals like UTMs and server-side event connections.

Layer 2: Content operations

Content ops is the machine: briefs, drafts, revisions, version control, approval steps, and publishing. The goal is to reduce rework. If your system requires constant “reinventing the post,” you’ll struggle to sustain output no matter how talented the creator is.

Layer 3: Distribution and amplification

Distribution is how you control reach rather than hoping for it. In some packages, distribution is mostly organic (repurposing and consistency). In others, it includes paid support and structured experimentation, where testing features inside ad platforms become a core part of the package promise.

Layer 4: Engagement and care

This layer includes moderation, response handling, escalation, and social listening signals that should feed back into content planning. If the package includes customer care, treat the inbox like a queue, not a conversation you stumble into when you have time.

Layer 5: Insights and decision-making

This layer turns activity into decisions. It’s where you connect performance back to the goal, and where you decide what will change next week. When this layer is missing, teams keep producing because production feels productive, even if outcomes don’t move.

Optimization Process

Optimization is not “changing things because numbers dipped.” Real optimization is a controlled loop: choose what you’re testing, keep everything else stable, run it long enough to learn, then document the decision so you don’t repeat the same mistakes next month.

1) Build a learning agenda before you touch creative

Start with a short list of questions you genuinely want answered: which hook earns attention fastest, which promise gets clicks, which format generates saves, which CTA drives messages, and which audience segment converts. This prevents random tweaks and keeps the team aligned on what “better” means.

2) Design tests around one variable at a time

When you change five things at once, you can’t learn what caused the result. Use platform testing tools to isolate variables where possible. For Meta, the built-in A/B testing workflow is designed specifically for this kind of controlled comparison.
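Platform tools report their own confidence figures, but it can help to sanity-check an exported result by hand. The sketch below is a standard two-proportion z-test, not the method any ad platform uses internally; the function name and the sample counts are hypothetical, and it assumes you have raw conversions and impressions (or clicks) for two variants that differ in a single variable.

```python
from math import erfc, sqrt


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Two-sided z-test for a difference in conversion rate between A and B.

    conv_* are conversion counts, n_* are the number of people (or
    impressions) exposed to each variant. Returns (z, p_value).
    """
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        raise ValueError("No variation in outcomes; the test is undefined.")
    z = (conv_b / n_b - conv_a / n_a) / se
    # Two-sided p-value under the normal approximation.
    p_value = erfc(abs(z) / sqrt(2))
    return z, p_value


# Hypothetical export: variant B changes only the hook.
z, p = two_proportion_z_test(conv_a=100, n_a=1000, conv_b=150, n_b=1000)
print(f"z = {z:.2f}, p = {p:.4f}")
```

A small p-value suggests the difference is unlikely to be noise, but the normal approximation is unreliable with very small counts, so treat this as a check on the platform's readout rather than a replacement for it.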

3) Use platform-native split testing when it’s available

Platform-native tools reduce measurement weirdness because the platform controls the split and the delivery. TikTok is explicit about how split tests work in Ads Manager, including which variables you can test and how the audience is divided, in TikTok’s Split Testing overview. When packages include paid TikTok, this should be part of the operating playbook, not an occasional experiment.

4) Keep attribution clean with consistent tagging

Optimization falls apart when tracking is inconsistent. If half your links are tagged and half aren’t, you can’t tell what drove what, and you end up “optimizing” based on partial truth. Google’s guidance on building campaign URLs for GA4 is the cleanest baseline for keeping your reporting consistent across posts, stories, ads, and partnerships.

5) Improve event quality before you blame creative

If campaigns look unstable, it’s often an event signal problem, not a creative problem. Server-side event connections can improve reliability when browser tracking is limited, and Meta’s Conversions API documentation explains the purpose and structure of that direct data connection. In packages that include performance reporting, this belongs in the “professional setup” phase, not as an emergency fix later.
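To make "server-side event connection" less abstract, the sketch below builds (but does not send) a purchase event in the general shape that Meta's Conversions API documentation describes. The field names reflect my reading of that documentation and should be verified against the current reference before use; the function names, order ID, and values are hypothetical.

```python
import hashlib
import json
import time


def hash_email(email):
    """Normalize (trim, lowercase) and SHA-256 hash an email address."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_purchase_event(email, value, currency, event_id, event_time=None):
    """Build a request body for one server-side purchase event.

    Assumption: the field names below follow Meta's Conversions API docs;
    check the current reference, since required fields can change.
    """
    timestamp = int(event_time if event_time is not None else time.time())
    return {
        "data": [{
            "event_name": "Purchase",
            "event_time": timestamp,          # Unix time in seconds
            "action_source": "website",
            "event_id": event_id,             # lets the platform deduplicate against browser events
            "user_data": {"em": [hash_email(email)]},
            "custom_data": {"value": value, "currency": currency},
        }]
    }


payload = build_purchase_event(
    " Jane@Example.com ", 49.0, "USD", "order-1001", event_time=1700000000
)
print(json.dumps(payload, indent=2))
```

The point of the example is the hygiene, not the transport: customer identifiers are normalized and hashed before they leave your server, and each event carries an ID so the same purchase is not counted twice when browser and server both report it.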

6) Create a review rhythm that matches the speed of the channel

Weekly reviews should be tactical: what to continue, what to pause, what to test. Monthly reviews should be strategic: whether the channel mix, creative direction, or offer needs adjustment. This rhythm keeps packages stable enough to learn, while still moving fast enough to keep up with the platforms.

Implementation Stories

Implementation is easiest to understand when you see it under pressure. The story below is real, documented, and useful because it shows how a “non-social” category can still win when execution is treated like a system rather than a vibe.

Lemonade: Turning a “Non-Social” Category Into a Social System

The problem hit in a way that felt unfair: the team could ship good creative, but the category itself fought back. Insurance isn’t something people follow for fun, and every post had to work harder than a typical lifestyle brand just to earn a moment of attention. When the pressure is that constant, even strong teams start second-guessing what they’re doing. Sprout Social’s Lemonade case study captures that tension from the inside.

Under the surface, Lemonade had already built a modern brand, but social still needed to prove it could do more than entertain. They wanted cultural relevance and trust, not just occasional spikes. They also had to balance humor with real customer needs, because the wrong reply at the wrong time can damage credibility fast. You can see the company’s broader public footprint and reporting context through Lemonade’s investor filings hub, which grounds the story in a real, operating business.

The wall wasn’t a lack of ideas; it was the lack of structure around those ideas. When insights are scattered and publishing is messy, creative teams spend their energy chasing information instead of making work that compounds. Customer questions pile up, leadership asks for proof, and suddenly social feels like a cost center even when it’s doing important work. That’s exactly where many social media marketing services packages stall if they’re sold as “posting” instead of “operating.”

The epiphany was treating social like an ongoing experiment with a lab, not a stage with a spotlight. Instead of guessing, they leaned into systems for tagging, inbox management, and cross-channel visibility so they could learn faster. They also reframed content as a way to earn trust in small moments, not a one-time campaign to “go viral.” The case study spells out the operational pieces that made experimentation possible: Lemonade used a unified hub for publishing, reporting, and community work to support that shift.

From there, the journey looked less glamorous and more repeatable: clearer workflows, tighter feedback loops, and faster decisions. Their team could see what landed across channels without manually stitching together reports, which meant creative reviews became sharper instead of louder. Messages were handled with more consistency, and insights could be organized instead of living as anecdotes in someone’s head. That’s the kind of “quiet progress” that makes a package feel reliable month after month.

Then came the final conflict: scaling systems without losing the personality that made the brand stand out. Structured workflows can accidentally flatten creativity if teams treat templates like rules instead of tools. There’s also the constant pull of short-term performance pressure, where one slow week tempts everyone to abandon the strategy and chase random trends. The only way through is to keep the system flexible enough for creativity while staying strict about measurement and learning.

The dream outcome is not just better posts; it’s confidence and momentum. Social becomes a place where the team can learn what customers care about in real time, respond with consistency, and show leadership a coherent picture of impact. That’s the real promise behind premium social media marketing services packages: not “we’ll post for you,” but “we’ll build an engine you can trust.”

Implementation checklist that prevents scope chaos

- Access map: A written list of who has admin access to what, and where credentials live.

- Approval SLA: A clear approval window and what happens when it’s missed.

- Publishing rules: Naming conventions, tagging, link tracking, and who has final publish rights.

- Engagement playbook: Response tone, escalation triggers, and inbox ownership.

- Measurement baseline: UTMs are standardized via Google’s GA4 campaign URL guidance, and paid measurement foundations are documented where relevant.

Make testing a contracted deliverable, not a “nice-to-have”

Clients love the idea of optimization until they realize it requires discipline. Bake testing into the package: one or two controlled experiments per month, a clear hypothesis, and a decision document at the end. Use official platform tools when possible, like TikTok split testing and Meta A/B testing, so results are easier to trust.

Protect quality without slowing everything down

Quality isn’t more meetings. It’s the right checkpoints at the right moments: a quick internal QA before approvals, a final link-and-format check before publishing, and a weekly review that focuses on decisions rather than post-mortems. When you do this well, clients don’t just keep the package—they expand it, because the system feels like it’s building value every week.

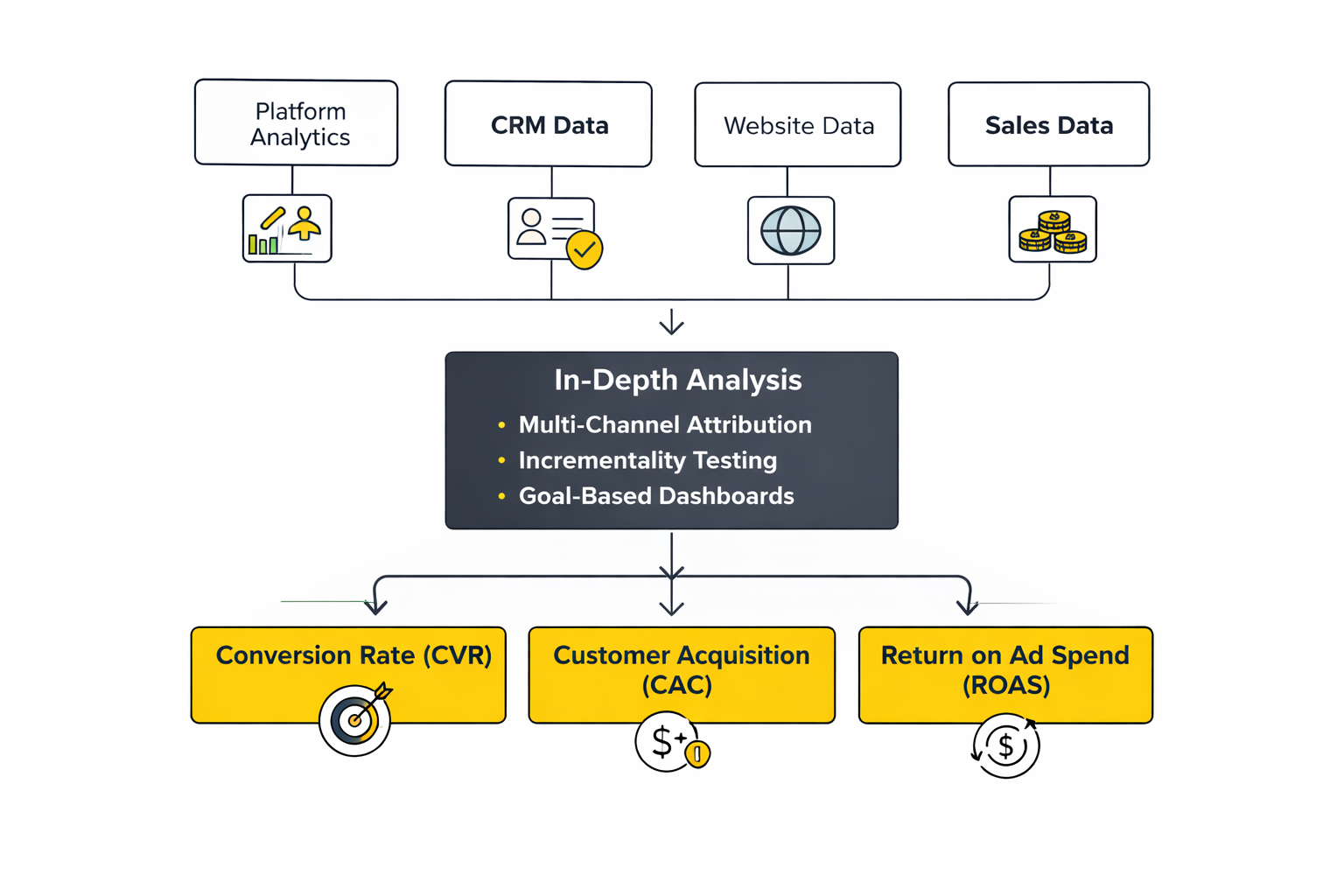

Statistics and Data

The fastest way to improve social media marketing services packages is to stop arguing about opinions and start agreeing on evidence. Not “more dashboards,” but the handful of numbers that tell you whether attention is compounding, whether trust is building, and whether the work is driving outcomes you can defend.

Global scale: why social data keeps getting more valuable

When your client asks, “Is social still worth it?” scale is the first answer. The same annual digital reporting ecosystem consistently shows the audience is still expanding: the figure of 5.24 billion active social media user identities in early 2025 appears in the broader Digital 2025 Global Overview and is echoed in We Are Social’s 2025 Global Digital Report PDF. That doesn’t mean every business should “do everything,” but it does mean the market of potential customers (and potential conversations) remains enormous.

Engagement reality: the platforms reward different behaviors

Engagement is still one of the cleanest signals of whether content is resonating, but it has to be interpreted in context. Multiple benchmark datasets point to the same directional truth: TikTok tends to lead organic engagement, even as engagement patterns shift year to year. You can see that in Dash Social’s cross-channel benchmark framing where TikTok leads Instagram and YouTube when measured with the same engagement calculation, in Socialinsider’s rolling dataset where TikTok remains the engagement outlier versus Instagram and Facebook, and in Rival IQ’s annual benchmark commentary noting that TikTok still leads engagement despite declines across platforms.

What to track inside a package without drowning in metrics

A professional analytics layer inside social media marketing services packages usually works best when it’s built like a ladder:

- Attention signals: reach, impressions, video views, watch time, and saves or shares where available.

- Trust signals: comment quality, replies, repeat engagement, follower growth rate, and community response time if you manage care.

- Action signals: clicks, profile visits, message starts, lead submissions, add-to-carts, purchases, and assisted conversions when tracking supports it.

- Efficiency signals: cost per result for paid layers, creative production cycle time, and approval turnaround time.

Performance Benchmarks

Benchmarks are useful when they help you set expectations and make decisions faster. They’re dangerous when they become a scoreboard with no context. Different reports use different definitions (per follower, per reach, per impression, per post), so the goal isn’t to hunt for a single “correct number.” The goal is to triangulate what “typical” looks like for your category and then beat it with intentional testing.

Cross-channel benchmarks: helpful for platform mix decisions

If you need one simple reference point to start a conversation with a client, cross-channel benchmarks can give you a directional map. Dash Social’s 2025 benchmark release highlights a cross-channel view where TikTok leads engagement (around 5%) while Instagram and YouTube follow; the same picture appears in Dash Social’s benchmark report PDFs and in Dash Social’s own benchmark post recap. This kind of reference point is useful for deciding where your package should emphasize distribution versus community, and where a paid layer might be required to stabilize reach.

Industry benchmarks: best for goal-setting and stakeholder alignment

Industry benchmarks matter because “good” looks different in different categories. A university, a retail brand, and a B2B software company won’t behave the same, and neither will their audiences. Reports like Hootsuite’s engagement rate benchmarks by industry help teams anchor expectations, especially when a client is comparing themselves to the wrong category. Hootsuite’s January 2025 dataset shows engagement ranges by industry across major networks in one view, which is helpful for initial baselines and goal-setting.

Benchmarks that matter for packages

Here are the benchmarks that usually make social media marketing services packages easier to manage:

- Cadence: how many posts per week you can sustain without quality falling.

- Format mix: what percentage of output is short-form video, carousels, static, live, or creator-led assets.

- Engagement quality: not just rate, but whether engagement includes saves, shares, and meaningful comments.

- Conversion hygiene: click quality, message-to-lead rate, and landing page consistency when you’re driving off-platform action.

- Iteration speed: how fast you can ship a new creative test after learning something.

Analytics Interpretation

Analytics only become valuable when they change behavior. The real skill is interpretation: knowing what a number means, what it doesn’t mean, and what you should do next without panicking.

Separate leading indicators from lagging outcomes

Leading indicators tell you whether your content is earning attention (watch time, completion rate, saves, shares, profile visits). Lagging outcomes tell you whether attention is turning into business results (leads, purchases, revenue, retention signals). Packages run smoother when you define both upfront, so a quiet sales week doesn’t trigger random creative chaos, and a viral week doesn’t trick you into thinking the system is solved.

Make sure everyone is measuring the same thing

One of the most common conflicts in reporting happens when a client compares two numbers that were calculated differently. Some tools measure engagement per follower, others per reach, others per impression, and some reports mix organic and boosted content. Dash Social calls this out explicitly in its benchmarking methodology sections, including the note that each platform calculates engagement rate differently. A professional package prevents confusion by defining the calculation once and keeping it stable.
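A short worked example shows why the definition matters. The sketch below computes an engagement rate for the same post three ways; the function name and the numbers are made up for illustration, and "interactions" here is assumed to mean likes, comments, saves, and shares combined.

```python
def engagement_rates(interactions, followers, reach, impressions):
    """Return the engagement rate (in percent) under three common denominators."""
    def rate(denominator):
        # None signals "not computable" instead of dividing by zero.
        return round(100 * interactions / denominator, 2) if denominator else None

    return {
        "per_follower": rate(followers),
        "per_reach": rate(reach),
        "per_impression": rate(impressions),
    }


# One hypothetical post, three different "engagement rates".
post = engagement_rates(interactions=240, followers=12000, reach=4000, impressions=6000)
print(post)
# {'per_follower': 2.0, 'per_reach': 6.0, 'per_impression': 4.0}
```

The same post reads as 2%, 6%, or 4% depending on the denominator, which is why a package should name one definition in the reporting template and stick to it.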

Always read the “why” behind the number

If reach drops, don’t immediately blame the algorithm. Check the content mix, posting cadence, hooks, and whether your audience is saturated. If engagement rises, don’t automatically celebrate; confirm it’s the kind of engagement you want (saves, shares, qualified comments), not empty reactions from the wrong audience. If clicks increase but conversions don’t, the problem is often off-platform: offer clarity, landing page friction, or broken tracking.

Use controlled tests to resolve disagreements

When teams debate creative direction, testing is the cleanest tie-breaker. Meta and TikTok both document structured approaches to split testing inside their platforms, which is useful when your package includes paid layers and you want to isolate variables instead of guessing. (See Meta’s A/B testing overview and TikTok’s split testing guide.)

Case Stories

Stories matter because they show how measurement changes decisions in real life. The example below is real, recent, and documented, and it maps cleanly to how modern packages blend creative testing, product feeds, and performance reporting.

New Look: When “Good Ads” Still Weren’t Enough

The numbers looked fine until they didn’t. New Look had the kind of campaign performance that can lull a team into comfort, right up until a reporting review makes you realize you’re winning in the wrong way. The real pressure wasn’t a single bad week; it was the creeping feeling that the account had stopped learning, and that “more spend” wouldn’t fix it. (Source: TikTok’s New Look success story.)

The backstory is a retailer fighting for attention in a market where everyone has discounts, everyone has influencers, and every scroll is a competition. New Look has been public about its push to strengthen digital performance, including an announced investment aimed at accelerating its data, AI, and e-commerce capabilities. That context matters because it shows the business wasn’t treating marketing as decoration; it was treating it as infrastructure. (Source: New Look’s digital growth investment announcement.)

The wall arrived in a familiar form: the creative looked good, the catalog was full, but the delivery didn’t feel like it was improving. When a team can’t explain why performance changes, they can’t scale with confidence. It becomes harder to defend budget internally, because every result feels like a lucky streak rather than a repeatable system. That’s exactly where many social media marketing services packages either mature into an optimization engine or get stuck as “content output.” (Source: the New Look case story setup.)

The epiphany was treating the creative format itself as a testable variable, not a fixed decision. Instead of assuming one catalog creative approach would carry everything, the team ran a structured comparison within Catalog Ads to learn what the audience responded to more consistently. That shift is subtle, but it’s powerful: the goal moves from “launch ads” to “build evidence.” (Source: the A/B test framing in the New Look case story.)

The journey was the part that looks boring from the outside and brilliant from the inside: align the product feed, choose a measurable hypothesis, run the test cleanly, and read the results like a decision document instead of a victory lap. The takeaway wasn’t only “this format worked better.” The takeaway was “we can keep learning at a predictable pace,” which is what makes optimization sustainable inside a monthly retainer. (Source: the New Look results summary.)

The final conflict is the one most case studies don’t emphasize enough: once a team sees a lift, the organization expects the lift to continue forever. But performance marketing isn’t a straight line. Inventory changes, promotions change, competitors react, and fatigue creeps in. The only way through is to keep the testing loop alive so the account doesn’t freeze around last month’s “winning” creative. (Source: New Look’s test-and-learn narrative.)

The dream outcome is confidence that compounds. New Look didn’t just get a better-performing setup; it got a clearer way to decide what to do next. That’s the quiet superpower of analytics-led execution: it turns social from a series of isolated campaigns into a system that gets smarter every month. (Source: New Look’s broader digital intent.)

Professional Promotion

This part isn’t about flashy ads. It’s about promoting your package professionally, in a way that makes clients feel safe buying it and confident keeping it.

Make your reporting feel like leadership, not like homework

Clients don’t want more numbers; they want fewer, clearer decisions. The easiest way to elevate social media marketing services packages is to build a reporting narrative that answers the questions stakeholders actually ask: what changed, why it changed, what we learned, and what we’ll do next. When you ground that narrative in benchmark context and consistent definitions, it becomes easier to defend budget and easier to expand scope.

Productize proof as part of the deliverable

If your package includes optimization, don’t describe it like a vague promise. Describe it like a product: a fixed number of controlled tests per month, a learning agenda, and a decision log that shows what you’re scaling and what you’re retiring. Platform documentation around testing exists for a reason: it keeps experiments clean and reduces “we changed everything and don’t know what worked.” (Sources: Meta A/B testing and TikTok split testing documentation.)

Set expectations with benchmarks, then win with your own baselines

Benchmark reports are a starting line, not the finish line. Use them to set realistic expectations early, then shift the client’s focus toward your internal baseline: month one is about measurement hygiene and cadence stability, month two is about controlled iteration, and month three is where compounding gains start to show. That structure helps clients stay patient long enough for the system to work.

What to show in a “professional” package proposal

- A one-page measurement plan: the handful of KPIs you’ll track, how they’re calculated, and how often you’ll review them.

- A testing cadence: what gets tested monthly, and what qualifies as a “winner.”

- A benchmark reference set: one cross-channel benchmark source and one industry benchmark source so expectations are grounded in reality.

- A decision rhythm: weekly check-ins for tactical adjustments and a monthly review for strategic direction.
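
One way to make “what qualifies as a winner” concrete is to write the rule down before the test runs. The sketch below is a minimal Python illustration, not a platform feature: the function names, the 10% minimum lift, and the 0.05 significance threshold are all assumptions you would agree with the client, and the check is a standard two-proportion z-test on conversion rates.

```python
from math import erf, sqrt


def two_proportion_p_value(conv_a, n_a, conv_b, n_b):
    """Two-sided p-value for the difference between two conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    std_err = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if std_err == 0:
        return 1.0  # no variation at all, so no evidence of a difference
    z = (rate_b - rate_a) / std_err
    # Standard normal CDF expressed through the error function.
    cdf = 0.5 * (1 + erf(abs(z) / sqrt(2)))
    return 2 * (1 - cdf)


def is_winner(conv_a, n_a, conv_b, n_b, min_lift=0.10, alpha=0.05):
    """Variant B wins only if it clears BOTH the lift and significance bars.

    min_lift and alpha are hypothetical thresholds, not industry standards.
    """
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    if rate_a == 0:
        return False  # relative lift is undefined without a baseline rate
    lift = (rate_b - rate_a) / rate_a
    p_value = two_proportion_p_value(conv_a, n_a, conv_b, n_b)
    return lift >= min_lift and p_value < alpha


print(is_winner(200, 10_000, 260, 10_000))  # large sample
print(is_winner(20, 1_000, 26, 1_000))      # same rates, small sample
```

With this rule, a variant converting 260 of 10,000 against a control at 200 of 10,000 qualifies, while the same rates on 1,000 impressions each do not, because the sample is too small to rule out noise. Writing the rule into the proposal keeps “winner” from being redefined after the results arrive.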

Advanced Strategies

Once the basics are stable, the best social media marketing services packages stop behaving like a content calendar and start behaving like a growth system. The difference is strategy density: fewer random posts, more deliberate levers that compound.

Build a creator program you can repeat, not one-off influencer posts

Scaling usually starts when you stop treating creators as “distribution” and start treating them as your fastest feedback loop. Creator partnerships give you language your audience already trusts, angles your internal team wouldn’t write, and a steady stream of creative inputs that keep ads and organic from going stale.

This isn’t a niche tactic anymore. CreatorIQ’s 2025–2026 research reports brands spending an average of $2.9M annually on influencer programs, while IAB’s 2025 Creator Ad Spend and Strategy Report frames creator investment as a central part of modern media planning.

Go platform-native on video, then scale distribution with proof

Short-form video is crowded now, which means “posting more” isn’t a strategy. Your edge comes from building a repeatable set of video formats (hooks, series, creator-led POVs), then supporting winners with paid distribution so reach becomes predictable instead of accidental.

If you want a reality check on how intense the format competition has become, Metricool’s 2025 study analyzed over 5 million short-form videos across major platforms and notes that short-form output has surged, which makes creative differentiation and iteration speed even more important.

In B2B, ride the video shift early while CPMs are still sane

B2B scaling used to mean text posts and webinars. Now, LinkedIn is actively pushing video monetization and publisher/creator inventory, which changes how demand is built and how ads can be placed around trusted voices.

Reuters reports LinkedIn’s video views grew 36% year-over-year (as of February 2025), with video uploads up 20%+, while BrandLink revenue nearly tripled in Q2 2025. That kind of platform momentum is exactly when smart packages expand into new formats before the channel becomes saturated.

Prove incrementality before you scale budgets

The most expensive scaling mistake is assuming last-click numbers tell the full truth. When spend rises, attribution distortions rise too—especially with cross-device behavior, view-through effects, and mixed organic/paid influence.

That’s why incrementality testing is becoming a practical requirement, not a “nice-to-have.” TikTok’s own product framing for Conversion Lift Study positions it as an experiment-based method to isolate what ads truly caused, and the help-center definition emphasizes the core question it answers: did your ads drive incremental growth?
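
The arithmetic behind a lift study can be sketched in a few lines. This is a generic holdout comparison under simplifying assumptions (randomly split groups, a single conversion event), not TikTok’s actual methodology; the function name, field names, and sample figures are hypothetical.

```python
def incremental_lift(exposed_users, exposed_conversions,
                     holdout_users, holdout_conversions, spend):
    """Compare an ad-exposed group with a holdout group that saw no ads."""
    exposed_rate = exposed_conversions / exposed_users
    holdout_rate = holdout_conversions / holdout_users
    # Conversions the exposed group would likely have produced without ads.
    baseline_conversions = holdout_rate * exposed_users
    incremental = exposed_conversions - baseline_conversions
    return {
        "incremental_conversions": incremental,
        "relative_lift": (
            (exposed_rate - holdout_rate) / holdout_rate if holdout_rate else None
        ),
        "cost_per_incremental_conversion": (
            spend / incremental if incremental > 0 else None
        ),
    }


# Hypothetical figures: 3.0% conversion with ads versus 2.4% without.
result = incremental_lift(50_000, 1_500, 50_000, 1_200, spend=6_000)
for name, value in result.items():
    print(name, round(value, 2))
```

In this made-up example, last-click reporting might credit all 1,500 conversions to ads, while the holdout comparison suggests only about 300 were incremental. That gap is the reason to run the test before raising budgets.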

Use automation as a creative multiplier, not a replacement for thinking

Automation scales best when the inputs are strong. The brands that win with automation aren’t “hands-off”—they’re disciplined about feeding systems more creative angles, more formats, and clearer goals so the machine has room to learn.

TikTok’s Smart+ positioning spells this out directly: the promise is speed and scale, but the results are still shaped by the quality and diversity of what you supply. Their Smart+ playbook is useful because it frames automation as a way to reduce guesswork while you focus on better inputs.

Scaling Framework

Scaling social media marketing services packages works best when you treat growth like a sequence, not a leap. Each stage has a different constraint, and your job is to remove the constraint without breaking what already works.

Stage 1: Stabilize the engine

Your goal here is boring on purpose: consistent cadence, clean tracking, predictable approvals, and a baseline content system that doesn’t collapse under pressure. If the engine isn’t stable, scaling just amplifies chaos.

Stage 2: Prove what wins

Run controlled tests and document decisions. Pick a few hypotheses at a time, keep everything else steady, and learn which creative angles and formats reliably earn attention and action. This is where incrementality becomes valuable, because it prevents you from scaling spend based on misleading attribution signals.

Stage 3: Productize what wins into repeatable formats

Once you find winners, turn them into a production system: templates, series, creator briefs, editing rules, and a clear “definition of done.” This is how you scale output without watching quality fall off a cliff.

Stage 4: Amplify with distribution

At this stage, you scale reach on purpose: promote proven creative, widen audiences carefully, and use automation where it reduces manual work without removing learning. This is also where B2B packages can expand into video-led programs while the platform is actively growing inventory, as Reuters notes with LinkedIn’s creator and publisher expansion for video ads.

Stage 5: Expand the ecosystem

Finally, you widen the package: more channels, more creators, deeper community care, richer reporting, and stronger lifecycle connections (email, SMS, on-site personalization). Expansion is where teams often overreach, so the rule is simple: only add a new layer when the existing layers are running smoothly.

Growth Optimization

Optimization at scale is less about “tweaks” and more about protecting what makes growth sustainable: creative freshness, measurement credibility, and operational speed. These are the practices that keep scaled packages from stalling.

Keep creative fresh with a predictable refresh cycle

Creative fatigue is one of the most consistent enemies of scaled performance. You don’t fix it by panicking and rewriting everything; you fix it by scheduling refreshes like maintenance. That might mean rotating hooks weekly, refreshing product stories biweekly, and introducing new creator angles monthly.
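
A refresh cycle like this is easy to encode so nobody has to remember it. The sketch below assumes the example cadence from the paragraph above and approximates “monthly” as every four weeks; the asset names and the function are hypothetical.

```python
# Refresh interval in weeks for each creative element (assumed cadence).
REFRESH_INTERVAL_WEEKS = {
    "hooks": 1,            # rotate weekly
    "product_stories": 2,  # refresh biweekly
    "creator_angles": 4,   # introduce new angles roughly monthly
}


def refreshes_due(week_number):
    """Return the creative elements scheduled for refresh in a given week."""
    return [
        asset
        for asset, interval in REFRESH_INTERVAL_WEEKS.items()
        if week_number % interval == 0
    ]


for week in range(1, 5):
    print(week, refreshes_due(week))
```

Treating the schedule as data means the cadence can be adjusted per client without rewriting the workflow around it.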

Scale variety before you scale spend

Automation and broad targeting tend to work better when the system has options. That’s why creator programs matter: they multiply the number of believable angles you can test without making your internal team do all the heavy lifting.

CreatorIQ’s 2025–2026 report reinforces how normalized this has become: brands report spending an average of $2.9M annually on influencer programs, and EMEA shows strong investment levels in creator marketing programs.

Use incrementality to defend scaling decisions

When budgets increase, leadership wants proof that growth is real, not just reattributed. Incrementality testing gives you the cleanest defense because it focuses on what your ads actually caused.

TikTok’s measurement framing around Conversion Lift Study is a practical reference for how major platforms are pushing the market: experiment-based measurement that informs budget decisions, not just reporting dashboards.

Treat social like discovery and search behavior, not just entertainment

Scaling gets easier when your content answers real questions people are already asking. Social is increasingly used as a research layer, which is why trend research keeps highlighting “social as search” behavior. HubSpot’s 2025 social media trends report places “social as the new search engine” near the top of its framing, which aligns with how users discover products, compare options, and validate decisions inside feeds.

Scaling Stories

Scaling is rarely clean. The story below is real and documented, and it shows what happens when a team treats scale as a system problem (format, automation, measurement) rather than just “spend more.”

Triumph Arcade: Scaling Performance Without Burning Out Creative

The turning point didn’t feel like a victory at first. Triumph Arcade saw the same pattern every performance team dreads: results were strong, then creative started to wear out, and the curve flattened. When the curve flattens, pressure spreads fast: budgets get questioned, targets don’t move, and everyone suddenly wants a “new idea” yesterday. (Source: TikTok’s Triumph Arcade case study.)

The backstory matters because the business wasn’t trying to win a branding award; it needed performance that could scale. Mobile gaming is brutal: competition is constant, attention is expensive, and users churn if the promise doesn’t match the experience. Traditional video ads can make a game look exciting, but they can also create skepticism when users fear the gameplay won’t match the ad. TikTok’s case study explains why Triumph Arcade leaned into an interactive format to reduce that mismatch. (Source: the Playables format context in the case study.)

The wall showed up as a classic scaling trap. Video-only creative was doing its job, but the account needed a way to lift efficiency without endlessly producing more variations. When teams respond by flooding the channel with more of the same, they usually accelerate fatigue instead of escaping it. Triumph Arcade needed a new lever that improved intent and qualification, not just raw volume. (Source: the performance goal framing in the case study.)

The epiphany was shifting the question from “how do we convince people?” to “how do we let people experience it?” TikTok Playables let users try a preview of the game directly in the ad, which changes the psychology of the click. The team paired that format with Smart+ automation so the campaign could scale delivery without requiring a complicated manual build. The case study is explicit that the approach combined Playables with Smart+ app promotion for iOS. (Source: the campaign approach section of the case study.)

The journey was a structured rollout rather than a one-time stunt. Triumph Arcade launched the interactive format, let the system learn, and then compared performance against the video-only baseline to understand the lift. That baseline comparison is what makes the result usable inside a package, because it creates a decision rule you can repeat, not a lucky win you can’t explain. TikTok reports the result as a clear performance gap versus video-only. (Source: the benchmark comparison in the case study results.)

Then the final conflict hit: scaling always invites new constraints. As performance improved, the team still had to protect quality inputs and ensure the experience stayed aligned across creative, store listing, and post-install expectations. Interactive formats can also raise new production and QA needs, because the playable has to feel smooth, accurate, and genuinely representative of the game. The case story frames Playables as a way to help users better understand gameplay before installing, which only works if the preview is faithful. (Source: the Playables explanation in the case study.)

The dream outcome was a cleaner path to scale with stronger efficiency. TikTok reports Triumph Arcade achieved +85% Day-0 ROAS and +80% Day-7 ROAS versus video-only, which is exactly the kind of lift that turns a “maybe we can scale” conversation into an evidence-backed plan. More importantly, the win wasn’t just the number. It was the lever: a repeatable combination of format and automation that made performance less fragile. That’s what great social media marketing services packages are really selling: predictable growth that doesn’t rely on constant panic production. (Source: the reported ROAS lifts in the case study.)

Sell the system: inputs, loop, proof

High-retention packages are easy to explain: what you will do (inputs), how you will learn (the loop), and how you will prove it (measurement). When a client understands that you’re running a disciplined learning engine, they worry less about individual post performance and more about compounding progress.

Use credible external signals to justify expansion

When you pitch a scale-up (more budget, more creators, more channels), tie it to market signals that are hard to ignore. Reuters’ reporting on LinkedIn’s accelerating video ecosystem, including 36% year-over-year growth in video views and BrandLink revenue that nearly tripled in Q2 2025, supports expansion into video-first B2B packages. CreatorIQ and IAB research also support creator program expansion as a mainstream investment category through documented average program spend and formal ad spend reporting.

Pitch scaling as a phased upgrade, not a gamble

Clients buy upgrades when risk feels managed. Present scaling as phases with gates: stabilize, prove, productize, amplify, expand. Each gate has a clear requirement—like a stable cadence, clean tracking, a documented winner format, or incrementality proof via frameworks such as TikTok’s Conversion Lift Study.
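
The gate idea translates directly into a checklist you can show a client. The sketch below is one hypothetical mapping of requirements to phases; the stage names come from this guide, but which requirement belongs to which gate is an assumption you would tailor per account.

```python
STAGES = ["stabilize", "prove", "productize", "amplify", "expand"]

# Requirements that must be met before LEAVING each stage (assumed mapping).
EXIT_GATES = {
    "stabilize": {"stable_cadence", "clean_tracking"},
    "prove": {"documented_winner_format", "incrementality_proof"},
    "productize": {"production_templates"},
    "amplify": {"existing_layers_stable"},
}


def current_stage(requirements_met):
    """Return the earliest stage whose exit gate is not yet fully satisfied."""
    for stage in STAGES[:-1]:
        if not EXIT_GATES[stage] <= set(requirements_met):
            return stage
    return STAGES[-1]


def missing_for_next_stage(requirements_met):
    """List what still blocks promotion out of the current stage."""
    stage = current_stage(requirements_met)
    return sorted(EXIT_GATES.get(stage, set()) - set(requirements_met))


met = {"stable_cadence", "clean_tracking", "documented_winner_format"}
print(current_stage(met))
print(missing_for_next_stage(met))
```

Because the check walks the stages in order, an account cannot skip ahead: incrementality proof alone does not move a client past “stabilize” if tracking is still messy.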

What a “scaling-ready” package proposal should include

- A creative scaling plan: the formats you’ll systemize, the refresh cadence, and how creators plug into production.

- A distribution plan: how winners will be amplified, and what guardrails protect budget efficiency.

- A measurement plan: what you’ll prove with dashboards versus what you’ll prove with incrementality testing.

- A decision rhythm: weekly tactical decisions and a monthly scale/stop/shift meeting anchored in documented learning.

Future Trends

The next wave of social media marketing services packages will feel less like “we post for you” and more like “we run your social growth system end-to-end.” That shift is happening because platforms are rewarding operational excellence: faster creative cycles, cleaner measurement, stronger community signals, and smarter distribution.

Trend 1: AI content saturation will raise the bar for originality and verification

As generative tools make it cheap to flood feeds, brands that rely on generic output will get buried. The winners will build packages around provenance and craft: creator-led angles, real customer stories, and content that feels lived-in rather than generated. You can already see mainstream coverage warning about “AI slop” spreading across platforms and blending in with real content, which makes trust and recognizable voices more valuable than ever. (Source: a recent breakdown of AI slop and why it’s harder to spot.)

Trend 2: Community-first formats will outperform broadcast posting

Brands are being pushed toward deeper interaction: serialized content, comment-driven iteration, and community management that looks a lot like customer care. That’s why packages that include response workflows, escalation rules, and social listening will keep gaining ground. Sprout’s trend outlook for 2026 highlights a shift toward community-driven strategies and serialized formats as a way to earn repeat attention. (Source: Sprout Social’s 2026 social media trends overview.)

Trend 3: Social shopping will be shaped by AI “assistants,” not just ads

Discovery is moving toward conversational recommendations that blend creator content with search-like behavior. That matters because it changes what “good content” looks like: you’ll need assets that are easy for people (and agents) to reference, summarize, and trust. Business Insider’s coverage of LTK launching an AI chatbot (built with OpenAI) is a strong signal that creator content is being packaged into recommendation engines, not just feeds. (Source: coverage of LTK’s AI shopping chatbot rollout.)

Trend 4: B2B social will tilt harder toward video, creators, and premium inventory

LinkedIn is expanding publisher and creator inventory for video ads, and it’s doing it because video consumption is rising quickly. This creates a real opportunity for B2B-focused packages that combine founder-led content, creator collaborations, and targeted distribution. Reuters reported LinkedIn video views grew 36% year-over-year (as of February 2025) and that BrandLink revenue surged as it expanded the program. (Source: Reuters’ reporting on LinkedIn’s video ad push and creator/publisher expansion.)

Trend 5: Creator programs will be treated like a media channel with its own budget line

Instead of one-off influencer posts, more brands are moving toward ongoing creator programs with reporting, governance, and performance measurement. IAB’s creator economy research projects U.S. creator ad spend reaching $37B in 2025, reinforcing that creators are becoming a core media channel rather than an experimental add-on. (Source: IAB’s 2025 Creator Economy Ad Spend and Strategy summary.)

Trend 6: Packages will be built around attention engineering, not “posting schedules”

As feeds get noisier, the ability to earn attention in the first seconds becomes a practical requirement. That doesn’t mean gimmicks; it means stronger hooks, clearer story structure, and better editing discipline. Even mainstream reporting is highlighting how quickly people decide to scroll and how brands are adapting with multi-layered hooks to keep viewers watching. (Source: coverage of shrinking attention windows and hook strategies.)

Strategic Framework Recap

If you take only one thing from this guide, let it be this: social media marketing services packages are easiest to buy, sell, and deliver when they’re built as an ecosystem—not a list of tasks.

The five-layer ecosystem

- Strategy: who you’re for, what you stand for, and what outcomes the package is responsible for.

- Production: repeatable formats, creative systems, and an approval flow that doesn’t stall momentum.

- Distribution: organic consistency plus paid amplification where you need predictability.

- Engagement: community and customer care workflows that protect trust and turn feedback into insight.

- Measurement: a small set of stable KPIs and a decision rhythm that turns data into action.

What the best packages actually deliver

The best packages create compounding progress. They ship consistently, learn quickly, and improve predictably. They also reflect where the market is going: more video, more creators, more AI-driven discovery, and more pressure to prove what’s incremental. If you build around that reality, the package becomes resilient even as platforms change.

FAQ – Built for This Complete Guide

1) What should social media marketing services packages include at a minimum?

A reliable package should cover strategy (what you’re trying to achieve), production (how content is made), distribution (how people will actually see it), engagement (how you handle community and customer messages), and measurement (how you’ll prove progress). If any one of those is missing, results become hard to repeat and even harder to defend.

2) How do I decide monthly deliverables without overcommitting?

Start with what you can sustain at high quality, then add only when the workflow is stable. A good rule is to set a cadence you can hit even in a “busy month,” because consistency beats a burst of output followed by silence.

3) How many platforms should a package cover?

Fewer than you think. Most teams do better by dominating one or two channels with a strong content system than spreading thin across five. Expand only after you have repeatable formats, stable approvals, and consistent reporting definitions.

4) Should a package include paid social, or keep it organic only?

It depends on the goal. If the client needs predictable reach and lead flow, paid distribution often becomes necessary. If the goal is community, credibility, or long-term brand building, organic can work well—but it still needs a system for production and engagement.

5) What if client approvals slow everything down?

Build approval rules into the package: a time window, what counts as “final,” and what happens when feedback arrives late. The goal isn’t to fight the client; it’s to protect the operating rhythm so the package doesn’t collapse into last-minute scrambling.

6) Which metrics matter most for recurring retainers?

Choose a small ladder of metrics: attention (reach and watch time), trust (meaningful engagement and response quality), and action (clicks, messages, leads, purchases). Then define exactly how each metric is calculated and keep those definitions stable month to month.
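
Pinning the definitions down in code is one way to keep them stable month to month. The formulas below are assumptions chosen for illustration (for example, engagement rate is calculated here on reach, while some reports use followers or impressions), and the function and field names are hypothetical.

```python
def safe_ratio(numerator, denominator):
    """Return numerator / denominator, or 0.0 when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def metric_ladder(reach, video_views, watch_seconds,
                  engagements, clicks, conversions):
    """Group monthly totals into attention, trust, and action metrics."""
    return {
        "attention": {
            "reach": reach,
            "avg_watch_seconds": safe_ratio(watch_seconds, video_views),
        },
        "trust": {
            # Assumed definition: engagements divided by reach.
            "engagement_rate": safe_ratio(engagements, reach),
        },
        "action": {
            "click_through_rate": safe_ratio(clicks, reach),
            "conversion_rate": safe_ratio(conversions, clicks),
        },
    }


# Hypothetical monthly totals for one channel.
report = metric_ladder(reach=10_000, video_views=4_000, watch_seconds=32_000,
                       engagements=500, clicks=200, conversions=10)
print(report)
```

Whatever formulas you choose, the point is that the same function produces every monthly report, so a change in the numbers reflects a change in performance rather than a change in definition.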

7) Are social benchmarks actually useful?

Yes, when they set expectations and reduce confusion. They’re not useful when they become a rigid scoreboard. Different reports use different formulas, so use benchmarks to anchor “typical” ranges, then treat your own baseline as the real target.

8) How should AI be used inside a package without making content feel generic?

AI works best as a support layer: brainstorming angles, drafting variations, generating outlines, summarizing feedback, and speeding up production tasks. The core creative decisions still need real human judgment, especially as feeds get flooded with low-quality AI content and trust becomes a differentiator. (Source: the discussion of AI slop and why it raises the value of authenticity.)

9) When does it make sense to add creator partnerships?

Add creators when you need more believable angles, more creative variety, or faster iteration without burning out your internal team. Creator programs are also increasingly budgeted like a media channel, which makes them easier to justify when the strategy is mature. (Source: IAB’s creator spend projections.)

10) Is video worth it for B2B brands?

More than ever. LinkedIn is expanding video inventory with publishers and creators, which creates new distribution opportunities for B2B storytelling and demand generation. If your buyers spend time watching video where they make professional decisions, your package should meet them there. (Source: LinkedIn’s video ad expansion signals.)

11) How do social shopping and AI assistants change what I should create?

They reward clarity. Content that’s easy to understand, easy to quote, and rooted in real product experience becomes more valuable when discovery happens through conversational recommendations. LTK’s move into AI shopping shows creator content is being turned into recommendation engines, not just posts. (Source: coverage of LTK’s AI chatbot launch.)

12) How do I choose the right provider or agency package?

Look for operational clarity: what’s included, what isn’t, how approvals work, how reporting is defined, and how optimization decisions are made. If a provider can’t explain their workflow and measurement system, results will depend on luck instead of a repeatable process.

Work With Professionals

If you’ve made it this far, you already know the uncomfortable truth: most clients don’t struggle because they lack effort. They struggle because social is now an operating system. There are too many formats, too many platform changes, and too much pressure to prove results for “winging it” to work for long.

For freelancers, that pressure can be a gift. Companies still need help building strategy, producing content, running paid distribution, and turning performance data into decisions. When you can deliver that as a structured package, you stop competing on price and start competing on outcomes.

What usually holds marketers back isn’t skill—it’s pipeline. You can have a strong offer and still spend months stuck in cold outreach, chasing replies, and negotiating through layers of middlemen.

That’s why marketplaces built specifically for marketing work are becoming part of the modern career stack. MARKEWORK positions itself as a marketing marketplace where companies and marketers connect directly, emphasizing no project fees and direct communication so you can negotiate and close work without a broker in the middle. It also offers simple monthly plans with tokens to unlock opportunities and keep activity predictable.

If you want to grow your freelance income, imagine what happens when your week is built around delivery and improvement—not endless prospecting. Picture waking up with a clear list of roles that match your skills, applying quickly, and getting straight into real conversations with teams who already know they need help. When your pipeline is steadier, your packages get better because you’re not constantly switching between “selling mode” and “delivery mode.”

MARKEWORK is designed for that momentum: build a profile, browse roles, apply, and message directly. The structure is simple, which is exactly the point—you spend less time navigating platforms and more time doing the work you’re proud of. If your goal is to land remote marketing gigs and keep 100% of what you earn, start where the workflow is built for speed and clarity, not gatekeeping.

markework.com