If you’ve ever tried to compare agency quotes for social media and felt like you were comparing different planets, you’re not imagining it. One proposal bundles strategy, creative, publishing, community, and reporting into a single “management” line item. Another splits everything into hourly buckets, platform fees, and paid media add-ons. A third looks cheap until you notice what’s missing.

This matters more now because social isn’t a side channel anymore. Global social ad investment was projected to reach $247.3B in 2024 (WARC’s global social spend forecast), and budget and attention keep shifting toward platforms that move fast and change rules without warning. That’s why pricing needs to be clear, scoped, and tied to real outcomes you can measure and defend in a budget meeting.

Article Outline


- What Is Social Media Marketing Agency Pricing?

- Why Social Media Marketing Agency Pricing Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is Social Media Marketing Agency Pricing?

Social media marketing agency pricing is the way an agency packages, scopes, and charges for the work required to plan, produce, distribute, optimize, and measure social performance. The key word is “work,” because social results are rarely created by a single activity. They’re created by a chain of decisions: what you say, how you say it, who sees it, when they see it, what happens after they click, and whether your team can learn fast enough to improve next week.

In practice, pricing is a translation layer between business goals and operating reality. It answers questions like: How many platforms are we managing? How much content are we producing? Are we running paid social, or only organic? How fast do you need responses and community moderation? Who owns creative direction? What level of analytics and experimentation is included?

It’s also a risk-sharing agreement. Some models push risk onto the client (for example, open-ended hours). Some push risk onto the agency (for example, fixed scope with unpredictable review cycles). The best pricing approach doesn’t just pick a number; it sets boundaries that protect both sides while keeping the work focused on outcomes.

Why Social Media Marketing Agency Pricing Matters

Pricing matters because it shapes behavior. If you pay for output, you’ll usually get more output. If you pay for time, you’ll often get more activity. If you pay for outcomes, you’ll force sharp prioritization and better measurement. None of these are “wrong,” but they produce very different working relationships.

It also matters because marketing leaders are being pushed to prove value with limited room for error. Marketing budgets have been under pressure, and many leaders report they don’t have enough budget to execute their strategy, which makes it harder to justify unclear scopes and surprise invoices (Gartner’s 2025 CMO Spend Survey press release).

On top of that, social is colliding with paid media, creator partnerships, and commerce in a way that makes “just posting” an outdated idea of the job. U.S. creator economy ad spend was projected to reach $37B in 2025, a signal that many brands now treat creators and social distribution as a core line item, not an experiment (IAB’s creator economy ad spend announcement).

Finally, pricing matters because AI is changing the agency value equation. Platforms and tools can generate variations faster than teams once could, but strategy, positioning, governance, and measurement are still hard, messy, and uniquely tied to your brand. When pricing isn’t structured around what humans must own, you end up paying premium rates for tasks that should be automated, while the strategic work that actually moves the needle gets squeezed.

Framework Overview

This framework is designed to make social media marketing agency pricing understandable, comparable, and defendable. The goal is not to push you toward a single “best” pricing model. The goal is to help you evaluate any proposal using the same lens, so you can spot hidden costs, unrealistic assumptions, and mismatched incentives before you sign.

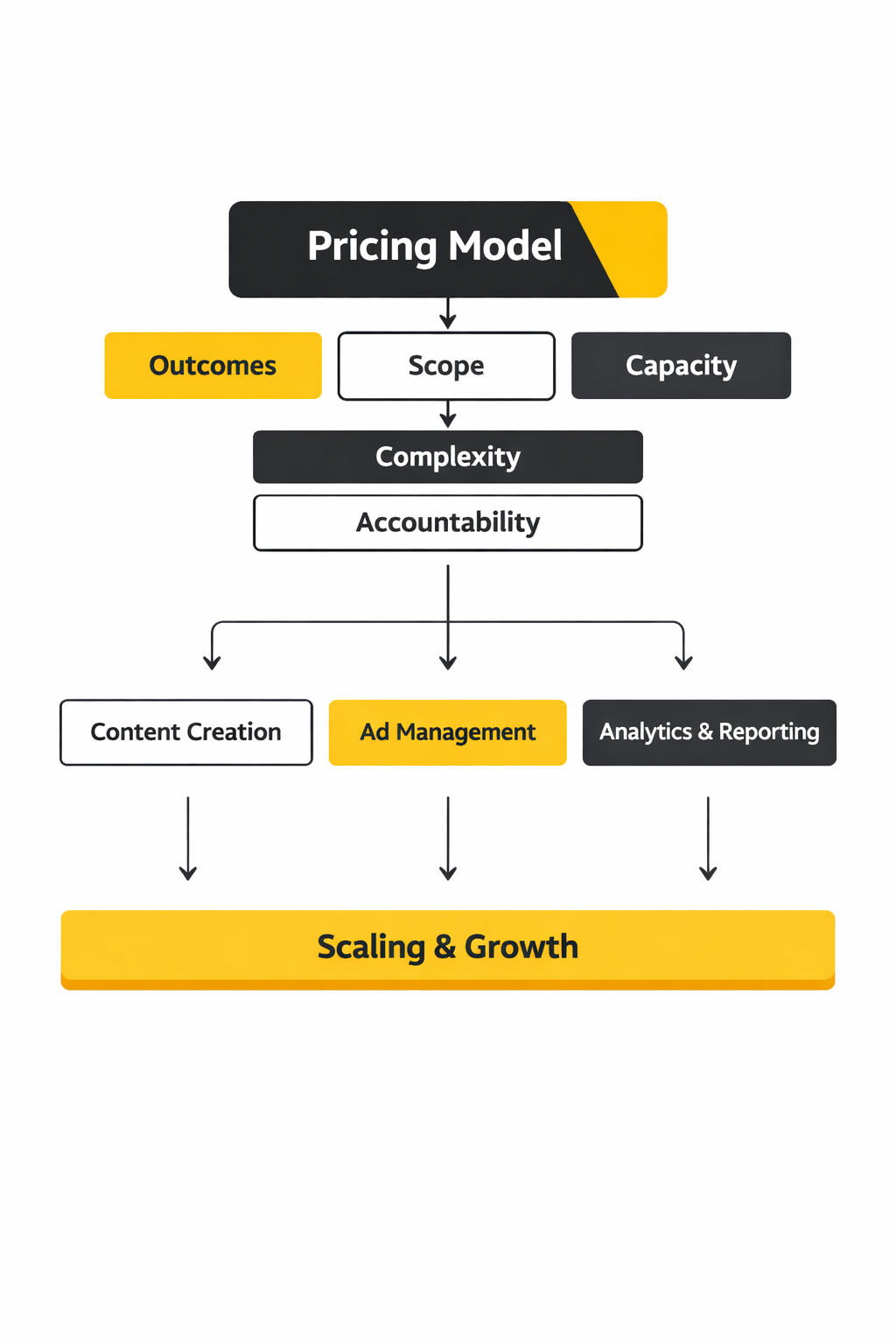

Think of pricing as a stack with five layers:

- Outcomes: what success looks like in business terms, not vanity metrics.

- Scope: which platforms, audiences, and deliverables are included (and what’s explicitly out).

- Capacity: how many hours or “creative cycles” you’re actually buying each month.

- Complexity: what makes your brand harder than average to execute for (approvals, compliance, languages, stakeholders, production load).

- Accountability: how reporting, experimentation, and decision-making are handled so the work improves over time.

When those layers are clear, you can compare pricing models that look very different on paper. A fixed retainer, a project package, and a performance-based agreement can all be fair deals, but only if they’re built on the same transparent assumptions about scope, capacity, and accountability.
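One way to apply the same lens to every quote is to record what each proposal actually states for the five layers and flag what it leaves out. The sketch below does that. The class, field names, and example agencies are hypothetical illustrations, not a standard format.

```python
from dataclasses import dataclass, field

# The five layers of the pricing stack (labels are our own).
LAYERS = ("outcomes", "scope", "capacity", "complexity", "accountability")


@dataclass
class Proposal:
    agency: str
    model: str            # e.g. "retainer", "package", "performance-based"
    monthly_price: float
    # What the proposal actually states for each layer; a missing key means "not stated".
    layers: dict[str, str] = field(default_factory=dict)

    def missing_layers(self) -> list[str]:
        """Return the layers this proposal leaves blank or never mentions."""
        return [name for name in LAYERS if not self.layers.get(name, "").strip()]


def summarize(proposals: list[Proposal]) -> list[str]:
    """One line per proposal: model, price, and which assumptions are missing."""
    lines = []
    for p in proposals:
        gaps = p.missing_layers()
        status = "all layers stated" if not gaps else "missing " + ", ".join(gaps)
        lines.append(f"{p.agency} ({p.model}, ${p.monthly_price:,.0f}/mo): {status}")
    return lines


if __name__ == "__main__":
    quotes = [
        Proposal("Agency A", "retainer", 8000, {name: "stated" for name in LAYERS}),
        Proposal("Agency B", "package", 5000, {"scope": "3 platforms, 12 posts/mo"}),
    ]
    for line in summarize(quotes):
        print(line)
```

The cheaper quote is not necessarily worse. It simply leaves more assumptions for you to uncover before you can compare it fairly.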

Core Components

Most pricing confusion comes from agencies using the same words to mean different things. Here are the core components that should be visible in any serious pricing conversation, even if the agency bundles them into one line item.

Strategy and Operating System

This is the work that prevents social from becoming an expensive guessing game: positioning, content pillars, channel role definitions, audience insights, and a plan for how decisions get made. If your proposal has “strategy” but no mention of how often it’s revisited, how it connects to creative, or how it influences testing, it’s probably more of a kickoff document than an operating system.

Content Production and Creative Direction

This includes ideation, scripting, design, video editing, revisions, and brand consistency. It’s also where costs swing wildly because production isn’t one thing. “Ten posts” can mean ten static images, or ten short-form videos with on-location shoots, motion graphics, and creator coordination. Pricing becomes fair when the proposal describes creative complexity in plain language.

Publishing, Community, and Response Expectations

Community management is not just replying to comments. It’s triage, escalation, moderation, and sometimes customer care. A good proposal will spell out response windows, what happens on weekends, what gets escalated internally, and what the agency can’t ethically answer on your behalf.

Paid Social Management

If paid social is included, pricing should separate the management fee from the media budget. The management side covers audience building, creative testing, pacing, optimization, and post-click measurement. If a proposal is vague here, you risk paying for “ads” while getting little more than basic campaign maintenance.
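The following sketch shows a quote that keeps the two amounts apart. The fee structure (a percentage of media spend with a minimum floor) and all the numbers are hypothetical. Agencies also charge flat, tiered, or hybrid management fees.

```python
from dataclasses import dataclass


@dataclass
class PaidSocialQuote:
    media_budget: float         # goes to the platforms, not the agency
    management_fee_rate: float  # hypothetical: fee as a share of media spend
    minimum_fee: float          # hypothetical floor so small budgets still cover the work

    def management_fee(self) -> float:
        return max(self.media_budget * self.management_fee_rate, self.minimum_fee)

    def total(self) -> float:
        return self.media_budget + self.management_fee()

    def fee_share(self) -> float:
        """Share of the total invoice that pays for management rather than media."""
        return self.management_fee() / self.total()


if __name__ == "__main__":
    for budget in (10_000, 20_000, 50_000):
        q = PaidSocialQuote(budget, management_fee_rate=0.15, minimum_fee=2_500)
        print(f"media ${budget:,}: fee ${q.management_fee():,.0f}, "
              f"total ${q.total():,.0f}, fee share {q.fee_share():.0%}")
```

When the fee sits on its own line, you can see immediately if a small media budget is carrying a disproportionate management cost.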

Measurement and Reporting That Actually Changes Decisions

Reporting is only valuable if it drives action. Social leaders are increasingly expected to justify investment by connecting it to acquisition, loyalty, and revenue. Many plan to increase spend on paid social, influencer marketing, and organic social, and they expect clearer proof of impact in return (Sprout Social’s 2025 findings on social budget reallocation).

Professional Implementation

Once you understand components, the next step is implementing pricing in a way that doesn’t break under real-world conditions. Professional implementation is less about picking a pricing model and more about building guardrails so the model survives the messy parts: stakeholder feedback, changing priorities, platform shifts, and the temptation to “just add one more thing.”

Here’s what professional-grade implementation looks like in practice:

- Clear scoping language: deliverables are described by complexity, not just quantity, so both sides know what “a video” means.

- Capacity truth: the proposal reflects real time requirements for approvals, revisions, and coordination, not best-case assumptions.

- Change control: there’s a simple process for adding work without turning every month into a renegotiation.

- Decision cadence: you agree on how often performance is reviewed and what kinds of decisions are expected from those reviews.

- Outcome alignment: success metrics are tied to what the business can actually influence, so the agency isn’t rewarded for noise.

In the next parts of the article, we’ll use this framework to break down the most common pricing models (retainers, packages, hourly, hybrid, and performance-based), show what “good” scope clarity looks like, and give you a practical way to evaluate whether a quote is priced for results or priced for activity.

Step-by-Step Implementation

Frameworks are easy to nod along to and surprisingly hard to run. The difference is implementation: the meetings you keep, the decisions you force, and the guardrails that stop your team (or your agency) from turning social into a never-ending list of “quick asks.”

This is a practical, week-by-week path to implementing social media marketing agency pricing so it stays fair, predictable, and tied to outcomes.

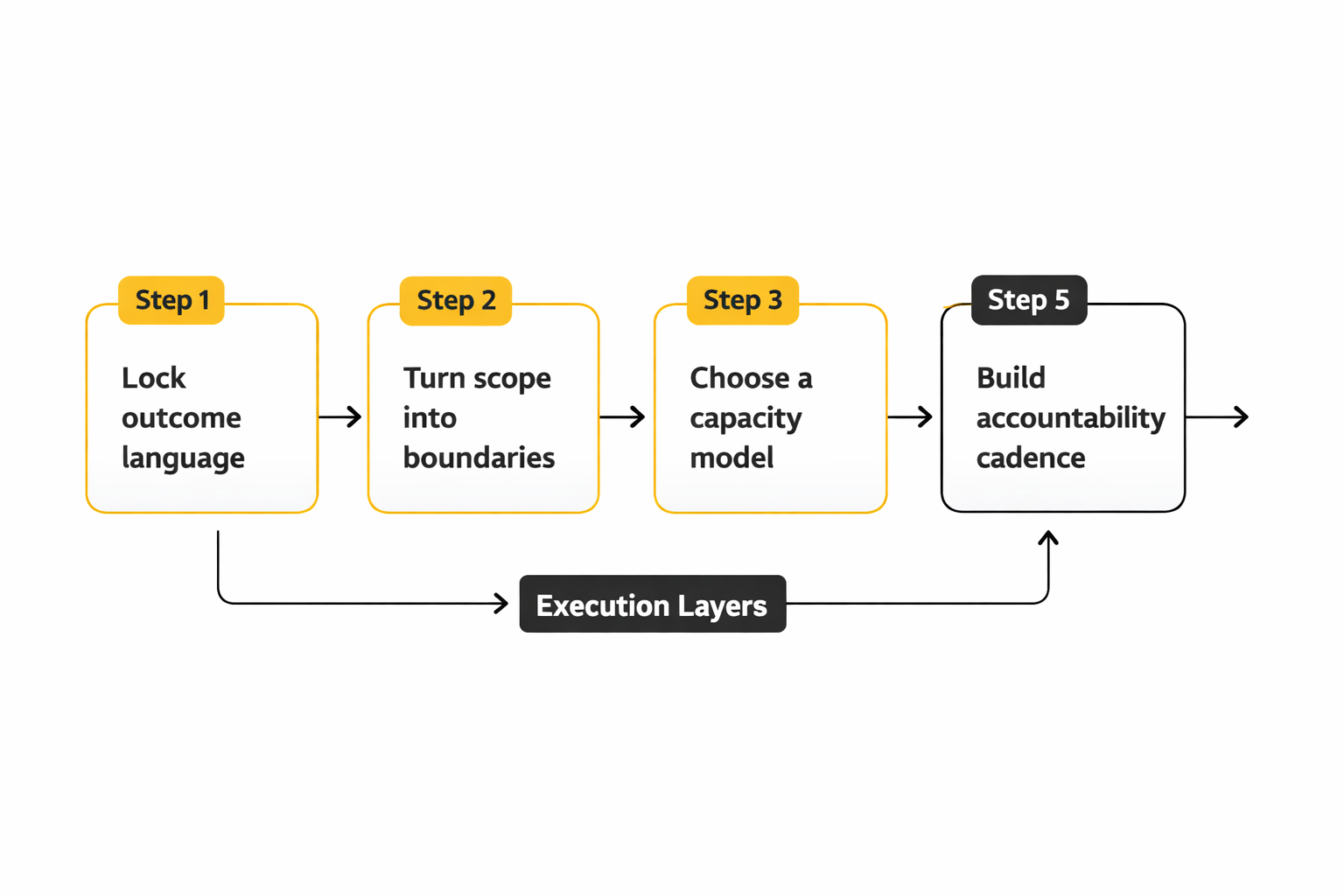

Step 1: Lock the outcome language before you talk deliverables

Start with what finance would recognize as progress: pipeline contribution, qualified leads, ecommerce revenue, booked calls, trial starts, retention signals, or cost-to-serve reductions through social care. If you can’t define success without naming a platform, you’re not ready to price it yet.

When outcomes are clear, it becomes obvious which metrics matter and which are just noise. It also makes it easier to choose measurement approaches that answer the only question leadership cares about: did social create incremental business impact, or did it just “show up” before a conversion?

Step 2: Turn scope into boundaries, not a wishlist

Scope should read like a contract with reality. That means naming what’s included (platforms, formats, posting frequency ranges, paid versus organic, community response expectations) and what is explicitly out (new brand identity work, product photography, creator contracting, event coverage, 24/7 crisis monitoring, and so on).

If a proposal is vague here, the risk is predictable: the agency prices for an average month, and you end up living in a peak month. Great social media marketing agency pricing is built to survive “one more thing” without becoming a resentment machine.
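Here is a quick illustration of the average-month trap, using made-up hour estimates. If the retainer is sized to the mean month, every above-average month produces unfunded work that someone has to absorb.

```python
from statistics import mean


def peak_month_gaps(hours_needed_by_month: list[float]) -> tuple[float, list[float]]:
    """Size a retainer to the average month and report the unfunded hours in each month."""
    priced_hours = mean(hours_needed_by_month)
    gaps = [max(0.0, need - priced_hours) for need in hours_needed_by_month]
    return priced_hours, gaps


if __name__ == "__main__":
    # Hypothetical quarter with one launch month.
    priced, gaps = peak_month_gaps([50, 55, 60, 95])
    print(f"priced at {priced} hours/month; unfunded hours by month: {gaps}")
```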

Step 3: Choose a capacity model your team can actually manage

Capacity is the hidden engine behind every price. You can buy capacity as hours, as deliverable bundles, as “content cycles,” or as a hybrid model. The right choice depends on how stable your priorities are and how many internal stakeholders can derail a calendar.

If your business changes weekly, you want a capacity model that can flex without renegotiation. If your business runs on predictable launches, you want a model that rewards production efficiency and keeps quality high.

Step 4: Run a complexity audit and price it honestly

Complexity is why two brands can request “the same thing” and require totally different effort. Complexity includes approvals, legal review, compliance, multiple regions, multilingual publishing, asset bottlenecks, and the number of stakeholders who can request changes.

This is where the best agencies protect both sides: they don’t just charge more, they build systems that reduce complexity over time. In other words, they treat complexity as an operational problem to solve, not a permanent tax you pay forever.
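A complexity audit is easier to price honestly when the multipliers are written down. In the sketch below, the multipliers and example inputs are hypothetical and meant only for illustration. Calibrate any real values against your own time tracking.

```python
# Hypothetical multipliers; calibrate them against your own time tracking.
LEGAL_REVIEW = 1.25
PER_EXTRA_LANGUAGE = 1.15
PER_EXTRA_APPROVER = 1.05


def complexity_adjusted_hours(base_hours: float, legal_review: bool = False,
                              languages: int = 1, approvers: int = 1) -> float:
    """Scale a baseline effort estimate by the complexity drivers found in an audit."""
    factor = LEGAL_REVIEW if legal_review else 1.0
    factor *= PER_EXTRA_LANGUAGE ** max(0, languages - 1)
    factor *= PER_EXTRA_APPROVER ** max(0, approvers - 1)
    return base_hours * factor


if __name__ == "__main__":
    simple = complexity_adjusted_hours(40)
    regulated = complexity_adjusted_hours(40, legal_review=True, languages=2, approvers=3)
    print(f"simple brand: {simple:.1f} h, regulated multi-market brand: {regulated:.1f} h")
```

Once the multipliers are explicit, both sides can watch them shrink as the agency builds systems that remove approval and asset bottlenecks.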

Step 5: Build accountability into the calendar

Accountability is not a monthly report. It’s a decision rhythm: weekly performance review, biweekly creative and testing review, monthly strategy recalibration, and quarterly outcome alignment. If you can’t point to the meeting where decisions get made, you’ll end up with reporting that documents the past instead of improving the future.

This is also where measurement choices matter. Incrementality-focused tools exist because standard attribution can mislead teams into optimizing for the wrong thing, especially when channels influence conversions without being the last touch.

Execution Layers

When social media marketing agency pricing is implemented well, execution looks like layers working together. Each layer has its own “done means done” definition, which stops work from slipping into endless revisions and vague responsibility.

Layer 1: Strategy and positioning

This layer sets the non-negotiables: who you’re for, what you stand for, what you won’t say, and what “winning” means this quarter. It’s also where you decide the role of each channel instead of treating every platform like the same feed with different dimensions.

Layer 2: Creative system and production

This is not “make posts.” It’s building a repeatable creative system: content pillars, format rules, editorial voice, hooks that work for your audience, and a production workflow that doesn’t collapse when a stakeholder asks for a last-minute change.

Layer 3: Distribution and amplification

Distribution includes publishing, community responses, paid amplification, creator partnerships (if included), and the decisions about what deserves budget. It’s where many programs accidentally waste money: they push content out without learning what the audience actually rewards.

Layer 4: Measurement and signal quality

Measurement is only as good as the signals you feed it. For paid social, that often means investing in more resilient event capture and data sharing rather than relying only on browser-side tracking. Meta’s Conversions API exists for exactly this reason: it helps businesses send events directly and improve optimization and measurement robustness when client-side signals are incomplete (Meta Conversions API documentation).
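The sketch below shows what sending events directly involves in practice: it builds a server-side Purchase event as a Python dictionary. The field names follow Meta’s documented server event parameters as we understand them (`event_name`, `event_time`, `event_id`, `action_source`, `event_source_url`, `user_data`, `custom_data`). Verify them against the current Conversions API documentation before relying on them. The helper functions and example values are hypothetical, and the sketch stops short of sending the request. Sending would mean POSTing `{"data": [...]}` to the Graph API events endpoint for your pixel with an access token.

```python
import hashlib
import json
import time
from typing import Optional


def hash_email(email: str) -> str:
    """Normalize (trim, lowercase) and SHA-256 hash an email before sending it."""
    return hashlib.sha256(email.strip().lower().encode("utf-8")).hexdigest()


def build_purchase_event(email: str, value: float, currency: str, event_id: str,
                         source_url: str, client_ip: str, user_agent: str,
                         event_time: Optional[int] = None) -> dict:
    """Build one server-side Purchase event (hypothetical helper, not part of any SDK)."""
    return {
        "event_name": "Purchase",
        "event_time": int(time.time()) if event_time is None else event_time,
        # Reuse the browser pixel's event ID so duplicate browser/server events can be matched.
        "event_id": event_id,
        "action_source": "website",
        "event_source_url": source_url,
        "user_data": {
            "em": [hash_email(email)],
            "client_ip_address": client_ip,   # sent unhashed
            "client_user_agent": user_agent,  # sent unhashed
        },
        "custom_data": {"value": value, "currency": currency},
    }


if __name__ == "__main__":
    event = build_purchase_event(
        email=" Jane.Doe@Example.com ", value=59.90, currency="EUR",
        event_id="order-1001", source_url="https://shop.example.com/checkout",
        client_ip="203.0.113.7", user_agent="Mozilla/5.0",
    )
    print(json.dumps({"data": [event]}, indent=2))
```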

Layer 5: Governance and decision-making

Governance keeps execution healthy: who approves what, what counts as a revision, how fast feedback must come back, and what happens when priorities change mid-cycle. When governance is missing, agencies quietly price in chaos, or they underprice and then burn out trying to keep up.

Optimization Process

Optimization is where social media marketing agency pricing either becomes a compounding investment or a monthly expense. The difference is whether your optimization loop produces learning that changes decisions, not just tweaks that look busy.

1) Maintain a hypothesis log that ties to outcomes

Every meaningful test should start with a clear hypothesis: “If we change X, we expect Y, because Z.” Keep it tied to outcomes, not vanity metrics. Views can be useful, but they’re not a strategy if they don’t connect to business impact.
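A hypothesis log doesn’t need special software. The following sketch defines one log entry and includes a check that flags tests judged only on vanity metrics. The field names and the vanity-metric list are illustrative assumptions, not a standard.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative list; adjust it to what your team agrees counts as "vanity".
VANITY_METRICS = {"views", "impressions", "likes", "followers"}


@dataclass
class Hypothesis:
    change: str            # X: what we will change
    expected: str          # Y: what we expect to happen
    because: str           # Z: why we believe it
    outcome_metric: str    # the business metric the test is judged on
    result: Optional[str] = None  # filled in at the retrospective

    def statement(self) -> str:
        return f"If we change {self.change}, we expect {self.expected}, because {self.because}."

    def problems(self) -> list[str]:
        issues = [f"missing {name}" for name in ("change", "expected", "because")
                  if not getattr(self, name).strip()]
        if self.outcome_metric.strip().lower() in VANITY_METRICS:
            issues.append(f"'{self.outcome_metric}' is not a business outcome")
        return issues


if __name__ == "__main__":
    h = Hypothesis(
        change="the first three seconds to open on the product in use",
        expected="a higher trial-start rate from paid video",
        because="comments suggest viewers don't understand what the product does",
        outcome_metric="trial starts",
    )
    print(h.statement())
    print(h.problems() or "ready to test")
```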

2) Use incrementality methods when the stakes justify it

For bigger budgets or high-stakes decisions, incrementality testing helps answer the uncomfortable question: would those conversions have happened anyway? TikTok’s Conversion Lift Study is explicitly designed to measure incremental impact by comparing exposed and control groups so teams can make better budget and mix decisions (TikTok Conversion Lift Study overview).

The same mindset shows up across modern measurement thinking: incrementality is about isolating lift that wouldn’t have occurred without advertising exposure (Skai’s overview of incrementality).
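The core arithmetic behind an exposed-versus-control comparison can be sketched in a few lines. This is a simplified illustration with made-up numbers, not TikTok’s or Meta’s actual methodology. Platform studies handle randomization, group sizing, and statistical testing themselves.

```python
def conversion_lift(test_conversions: int, test_size: int,
                    control_conversions: int, control_size: int) -> dict:
    """Compare an exposed (test) group against a randomized holdout (control) group."""
    test_rate = test_conversions / test_size
    control_rate = control_conversions / control_size
    # Conversions the exposed group would likely have produced anyway, scaled to its size.
    baseline = control_rate * test_size
    incremental = test_conversions - baseline
    return {
        "relative_lift": (test_rate - control_rate) / control_rate,
        "incremental_conversions": incremental,
        # Share of the exposed group's conversions that the ads actually caused.
        "incremental_share": incremental / test_conversions,
    }


if __name__ == "__main__":
    result = conversion_lift(1_200, 100_000, 900, 90_000)
    for name, value in result.items():
        print(f"{name}: {value:.3f}")
```

In this made-up example, a report that credits all 1,200 conversions to the channel would overstate its causal contribution about sixfold. The holdout suggests only about 200 conversions were incremental.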

3) Run creative testing like a system, not a gamble

Creative wins rarely come from one brilliant idea. They come from structured variation: different hooks, different framing, different proof points, different offers, different creators, different first three seconds. Your process should make it easy to generate and test variations without turning your team into a revision factory.

4) Separate “learning budgets” from “scaling budgets”

Learning budgets exist to reduce uncertainty. Scaling budgets exist to push what’s already working. When those are mixed, teams either scale too early (wasting spend) or learn forever (wasting time).
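One way to keep the two pools from blurring is to make the split explicit at allocation time. The sketch below makes two simplifying assumptions. First, each pool is split evenly among its variants. Second, a yes/no “proven” flag stands in for whatever evidence bar your team agrees on.

```python
from dataclasses import dataclass


@dataclass
class Variant:
    name: str
    proven: bool  # True only if it already won a test you trust


def allocate(total_budget: float, learning_share: float,
             variants: list[Variant]) -> tuple[dict[str, tuple[str, float]], float]:
    """Split spend into a learning pool (unproven variants) and a scaling pool (proven ones)."""
    if not 0.0 <= learning_share <= 1.0:
        raise ValueError("learning_share must be between 0 and 1")
    learning_pool = total_budget * learning_share
    scaling_pool = total_budget - learning_pool
    testing = [v for v in variants if not v.proven]
    winners = [v for v in variants if v.proven]

    plan: dict[str, tuple[str, float]] = {}
    unallocated = 0.0
    if testing:
        for v in testing:
            plan[v.name] = ("learning", learning_pool / len(testing))
    else:
        unallocated += learning_pool
    if winners:
        for v in winners:
            plan[v.name] = ("scaling", scaling_pool / len(winners))
    else:
        # Nothing is proven yet, so hold the scaling pool rather than scale a guess.
        unallocated += scaling_pool
    return plan, unallocated


if __name__ == "__main__":
    plan, held = allocate(10_000, 0.3, [Variant("hook-a", True),
                                        Variant("hook-b", False),
                                        Variant("hook-c", False)])
    print(plan, "held back:", held)
```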

5) Close the loop with retrospectives that change the next cycle

End each cycle with a short, disciplined retro: what worked, what didn’t, what surprised us, what we’re changing next. The point is not blame; it’s compounding. This is how the work you pay for becomes a predictable system rather than a monthly scramble.

Implementation Stories

Stories are useful here because implementation is where most pricing models break. The work looks fine in a proposal, then reality hits: measurement is messy, stakeholders want proof, and last-click reporting makes good channels look bad.

Torrid and Ovative: When Last-Click Reporting Started a Fight About Budget

Flashpoint: The room went quiet when performance reports landed and TikTok looked “weak” on last-click. Paid search and retargeting were taking credit, and TikTok was being treated like a nice-to-have. The problem was bigger than one channel: the entire measurement story was unstable, and that made every budget conversation feel like a fight (TikTok’s Torrid case study).

Backstory: Torrid was running a major Casting Call designed to drive real actions, not just awareness. They weren’t only trying to create cultural attention; they needed applications and commerce performance to move together. That’s exactly the kind of scenario where simplistic attribution can distort decision-making, because the channel that creates demand doesn’t always get the last click (Campaign context and objectives).

Wall: The wall was measurement credibility. If the team couldn’t explain whether TikTok was driving incremental outcomes, they couldn’t defend spend in a disciplined way. And if they reduced investment based on last-click, they risked cutting off demand creation while keeping only the channels that harvest demand at the end (Ovative’s write-up on last-click undervaluing TikTok).

Epiphany: Instead of debating opinions, they reframed the problem as an experiment design issue. They needed a way to measure both brand impact and conversion impact in a connected, test-based approach. That’s why they leaned into TikTok’s “Unified Lift” approach, which combines brand lift and conversion lift methods into one full-funnel view (TikTok Brand Lift Studies explanation).

Journey: Torrid worked alongside its agency, Ovative, and used TikTok’s measurement support to run a lift-based study during the campaign window. The implementation details mattered: defining the test and control groups, mapping conversion events, and running campaigns designed to drive both awareness and action without collapsing everything into one objective. The point wasn’t to “prove TikTok is good”; it was to build a measurement layer strong enough to guide future decisions with less uncertainty (How TikTok’s Conversion Lift Study is designed to measure incremental impact).

Final conflict: Even with the lift framework in place, the hardest part is changing what the organization believes. Stakeholders still prefer simple dashboards, and last-click reporting is comforting because it looks precise. That tension helps explain why Ovative has argued publicly that last-click models mislead marketers, and why it built its Enterprise Marketing Return concepts around more holistic measurement (Ovative’s PR announcement).

Dream outcome: The campaign write-up describes lift in both brand health metrics and purchase behavior, along with stronger confidence in what TikTok was contributing beyond the last click. More importantly, it normalized a better way to run budget conversations: experiments and incremental impact, not arguments and screenshots. That’s the implementation win that makes social media marketing agency pricing feel justified, because the pricing isn’t paying for “posts.” It’s paying for a system that learns and improves (Results and forward plan to continue lift studies).

A professional implementation checklist for social media marketing agency pricing

- One-page scope map: platforms, formats, cadence ranges, and what is explicitly out. If it can’t fit on one page, it isn’t clear enough.

- Decision cadence on the calendar: weekly performance review and a predictable testing/creative review cycle. If decision meetings aren’t scheduled, learning won’t happen.

- Measurement readiness: agreed event definitions, naming conventions, and resilient signal flows for paid social where needed (Meta Conversions API documentation).

- Test-and-learn plan: a documented approach to lift testing when budget decisions require incremental proof, including what methods you’ll use and when (TikTok Conversion Lift Study overview).

- Governance that reduces chaos: revision rules, approval windows, escalation paths, and “feedback deadlines” that protect production quality.

- Transparency on what last-click misses: leadership-facing language that explains why channels can drive demand without being the final touch, so optimization doesn’t punish the very work that creates future conversions (Ovative’s explanation of undervaluation in last-click models).
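Naming conventions are easiest to enforce when a script can check them. The following sketch validates names against a hypothetical convention (platform_objective_audience_yyyymm, all lowercase). The platforms, objective codes, and format are placeholders for whatever you and your agency agree on.

```python
import re
from typing import Optional

# Hypothetical convention: platform_objective_audience_yyyymm, all lowercase.
CAMPAIGN_NAME = re.compile(
    r"(?P<platform>meta|tiktok|pinterest|linkedin)_"
    r"(?P<objective>awareness|traffic|conv)_"
    r"(?P<audience>[a-z0-9-]+)_"
    r"(?P<yyyymm>20\d{2}(?:0[1-9]|1[0-2]))"
)


def parse_campaign_name(name: str) -> Optional[dict[str, str]]:
    """Return the name's parts if it follows the convention exactly, otherwise None."""
    match = CAMPAIGN_NAME.fullmatch(name)
    return match.groupdict() if match else None


def nonconforming(names: list[str]) -> list[str]:
    """List campaign names that reporting could not group reliably."""
    return [n for n in names if parse_campaign_name(n) is None]


if __name__ == "__main__":
    names = ["meta_conv_prospecting_202507", "TikTok Summer Push",
             "pinterest_awareness_moms-uk_202513"]
    print(nonconforming(names))
```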

If you implement these elements, social media marketing agency pricing becomes easier to trust. Not because it’s cheaper, but because it’s built on real operating assumptions, and because the system can explain itself when someone asks, “What are we actually paying for?”

Statistics and Data

When people argue about social media marketing agency pricing, they usually aren’t arguing about a number. They’re arguing about uncertainty. Data is what reduces that uncertainty, but only if you choose the right statistics and interpret them with the right context.

The most useful analytics do two jobs at once. They show whether performance is moving in the right direction, and they explain what changed so you can make the next decision with confidence. That’s why “benchmark data” is helpful as a starting point, but the real value comes from tying benchmarks to your execution choices and your business outcomes.

One trend worth internalizing before you benchmark anything: engagement has been under pressure across major platforms, which changes what “normal” looks like even when your content quality stays steady (Rival IQ’s 2025 benchmark findings). If your agency pricing promises growth, your analytics should show how the work is overcoming the headwinds, not pretending they don’t exist.

Performance Benchmarks

Benchmarks are guardrails, not grades. They help you avoid panic when a metric is normal for your industry and avoid complacency when you’re quietly falling behind. The trick is picking benchmarks that match what you’re paying for in your social media marketing agency pricing model.

Benchmark 1: Organic engagement trends are flattening, so format choices matter more

If an agency is pricing a “content engine,” you should expect their strategy to be format-aware, not just platform-aware. Benchmark reports show that engagement patterns vary by format and that shifts can be dramatic year over year, which is exactly why “we’ll post consistently” isn’t a plan (Rival IQ’s format and engagement analysis).

On Instagram specifically, recent benchmark work continues to show carousels holding up as one of the more resilient formats, which supports a pricing approach that funds creative systems (templates, series, and repeatable concepts) instead of one-off posts (Socialinsider’s Instagram benchmarks).

Benchmark 2: Paid social costs move seasonally, and that should be priced into expectations

If your agency pricing includes paid social management, you need cost benchmarks that explain volatility instead of hiding it. CPMs don’t just rise “because competition.” They rise at predictable moments, especially around Q4 retail pressure, and that affects what your budget can buy and how fast you can test creative (Gupta Media’s seasonal CPM analysis).

At the market level, trend reporting also shows that paid social pricing can rise even when volume declines, creating a weird situation where you’re paying more for reach but getting fewer chances to learn unless your testing system is disciplined (Skai’s Q1 2025 Quarterly Trends Report).

Benchmark 3: Platform CPM spreads are real, but they don’t tell you efficiency by themselves

Comparing platforms by CPM can help you plan reach, but it can’t tell you value. CPM is the entry price to the auction, not the price of a customer. That’s why platform-level CPM comparisons are useful only when you pair them with conversion quality and incrementality checks (EMARKETER’s Q4 2025 CPM comparison across platforms).
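To see why CPM alone misleads, carry it through to a cost per incremental customer. The following sketch makes several simplifying assumptions. It counts only conversions that come from clicks (no view-through) and takes the incremental share from a lift test. All the platform numbers are hypothetical.

```python
def cost_per_incremental_customer(cpm: float, ctr: float, cvr: float,
                                  incremental_share: float) -> float:
    """Cost per customer the ads actually caused, starting from CPM.

    ctr: clicks per impression; cvr: conversions per click;
    incremental_share: fraction of conversions a lift test attributes to the ads.
    """
    cost_per_impression = cpm / 1000
    incremental_customers_per_impression = ctr * cvr * incremental_share
    return cost_per_impression / incremental_customers_per_impression


if __name__ == "__main__":
    # Hypothetical platforms: B costs twice as much per 1,000 impressions.
    a = cost_per_incremental_customer(cpm=6.0, ctr=0.008, cvr=0.02, incremental_share=0.3)
    b = cost_per_incremental_customer(cpm=12.0, ctr=0.012, cvr=0.03, incremental_share=0.5)
    print(f"A: ${a:.2f} per incremental customer, B: ${b:.2f}")
```

Here, platform B has double the CPM yet costs roughly half as much per incremental customer.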

Benchmark 4: Attention is huge, but it’s not unlimited

If you’re paying for organic social, you’re paying to earn attention from people who already spend a meaningful part of their day on these platforms. The typical internet user’s daily social media time has been tracked at around 2 hours and 21 minutes. That figure has also been described as declining compared with two years prior, which reinforces that competition for attention keeps getting tighter (DataReportal’s Digital 2025 state of social).

Analytics Interpretation

Analytics interpretation is where most teams lose the plot. They track everything, then they make decisions based on the easiest metric to understand. If you want your social media marketing agency pricing to feel “worth it,” interpretation needs to be a repeatable decision process, not a monthly slideshow.

Don’t confuse signal with noise

A small dip in engagement can be noise. A consistent drop across multiple formats can be signal. This is why broad benchmark reporting can be calming: when multiple datasets show engagement pressure, you stop blaming your team for a macro trend and start focusing on what you can control (creative, distribution, offer clarity, and cadence) (Rival IQ’s cross-platform engagement trend).
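The distinction can be turned into a simple rule of thumb. The sketch below treats a decline as signal only if every format fell in each of the last few periods. This is a heuristic under assumed inputs (per-format engagement rates by period), not a statistical test, and the three-period default is arbitrary.

```python
def consistent_decline(rates_by_format: dict[str, list[float]], periods: int = 3) -> bool:
    """True only if every format's engagement rate fell in each of the last `periods` steps.

    A one-off dip in a single format returns False: treat that as probable noise.
    """
    if not rates_by_format:
        return False
    for rates in rates_by_format.values():
        recent = rates[-(periods + 1):]
        if len(recent) < periods + 1:
            return False  # not enough history to call it a trend
        if not all(later < earlier for earlier, later in zip(recent, recent[1:])):
            return False
    return True


if __name__ == "__main__":
    macro = {"reels": [0.050, 0.048, 0.046, 0.044], "carousels": [0.060, 0.058, 0.057, 0.055]}
    blip = {"reels": [0.050, 0.041, 0.049, 0.048], "carousels": [0.060, 0.058, 0.057, 0.055]}
    print(consistent_decline(macro), consistent_decline(blip))
```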

Use leading indicators to protect spend

Leading indicators are the early warnings that tell you whether a campaign is healthy before results fully land. For paid social, that can be creative hold rate signals (thumb-stopping performance), click quality, and early funnel conversion rates. For organic, it can be saves, shares, and return engagement on repeat series rather than single-post spikes.

This matters for pricing because it changes what you pay for: you’re not paying for a post count, you’re paying for a system that notices failure early and corrects it before it becomes a budget leak.

Separate attribution from incrementality

Attribution tells you where conversions were observed. Incrementality tells you whether those conversions happened because of your marketing. When budgets grow, this distinction stops being academic and starts being the difference between scaling and wasting money.

That’s why lift testing has become a serious part of modern measurement. TikTok frames Conversion Lift Studies as a way to quantify incremental conversions by comparing exposed and control groups, which is exactly the kind of methodology that makes pricing conversations easier because it turns “I think it worked” into “we measured the lift” (TikTok’s Conversion Lift Study overview).
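A lift result also needs a check on whether the difference could be chance. Platform studies report their own confidence measures. The generic two-proportion z-test sketched below, with made-up numbers, shows why sample size matters. The same 20% relative lift can be convincing at scale and indistinguishable from noise in a small test.

```python
from math import erfc, sqrt


def two_proportion_z_test(test_conv: int, test_n: int,
                          control_conv: int, control_n: int) -> tuple[float, float]:
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    p_test = test_conv / test_n
    p_control = control_conv / control_n
    pooled = (test_conv + control_conv) / (test_n + control_n)
    se = sqrt(pooled * (1 - pooled) * (1 / test_n + 1 / control_n))
    z = (p_test - p_control) / se
    # Two-sided p-value under the standard normal: 2 * (1 - Phi(|z|)) = erfc(|z| / sqrt(2))
    return z, erfc(abs(z) / sqrt(2))


if __name__ == "__main__":
    # Both scenarios: 1.2% vs 1.0% conversion rate, a 20% relative lift.
    for label, args in (("large test", (1_200, 100_000, 1_000, 100_000)),
                        ("small test", (12, 1_000, 10, 1_000))):
        z, p = two_proportion_z_test(*args)
        print(f"{label}: z = {z:.2f}, p = {p:.4f}")
```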

Price the reporting work you actually need

Here’s the uncomfortable truth: clean reporting is labor. It requires tagging discipline, consistent KPI definitions, and someone who can translate results into decisions without oversimplifying. If your agency is priced low but promises executive-ready analytics, you should expect one of two outcomes: either reporting is shallow, or the agency quietly cuts corners somewhere else to cover the time.

Case Stories

Stories matter because they show what analytics looks like under pressure. When the business needs proof, your team needs more than dashboards. You need a measurement approach that can survive skepticism and still guide the next decision.

HEMA: When the Business Needed Proof That Social Was Driving Real Sales

Flashpoint: The campaign was live, the spend was real, and the uncomfortable question hit the team at the worst time: “Are these ads driving incremental sales, or are we just paying to show up?” The stakes weren’t vanity metrics; they were total sales and efficiency across channels. In that moment, social media marketing agency pricing stops being a line item and becomes a trust test (HEMA’s Meta success story).

Backstory: HEMA operates in a world where offline and online behavior overlap, which makes measurement messy if you rely on surface-level attribution. Omnichannel campaigns can look expensive if you only credit the last click, even when they’re genuinely changing buying behavior. That’s why the team leaned into an experiment-driven measurement approach rather than debating opinions (Context and study details in the Meta case story).

Wall: The wall was proving incremental impact in a way that leadership would accept. If the only evidence is “platform reporting,” skepticism wins, budgets get cut, and the team loses the ability to test and learn. The team needed a method that could isolate lift and quantify it in business language, not marketing language (Meta conversion lift study description).

Epiphany: Instead of arguing about attribution models, the team moved the conversation to controlled measurement. A conversion lift study design makes the question simpler: compare outcomes between people exposed to ads and people held out. That reframes analytics from “reporting results” to “measuring causal impact,” which is the kind of clarity that stabilizes pricing conversations (Meta’s multi-cell lift approach used by HEMA).

Journey: HEMA ran a multi-cell Meta conversion lift study from July 1 to August 4, 2024, designed to evaluate omnichannel impact. The study structure let them examine how different ad approaches affected outcomes, rather than treating the campaign as one undifferentiated blob of spend. The practical win here is operational: when you can measure lift, you can decide what to scale and what to stop without guessing (Study timing and purpose).

Final conflict: Lift studies don’t remove complexity; they surface it. Teams still need clean event definitions, stable campaign structure, and stakeholder patience while the study runs. If your implementation is sloppy, you can end up with inconclusive results that create more doubt than clarity, which is why professional agencies price the measurement setup and governance, not just the ad ops (Meta’s Conversions API documentation for resilient event sending).

Dream outcome: The published story emphasizes that the lift study helped HEMA understand how omnichannel ads affected total sales and efficiency, which is the kind of business-facing clarity that makes social media marketing agency pricing easier to defend. The real win wasn’t a single metric; it was the ability to make budget decisions with evidence instead of intuition. That’s what mature analytics buys you: confidence under scrutiny (HEMA’s results summary).

Alpro and Pinterest: When Offline Sales Were the Real Scoreboard

Flashpoint: The campaign wasn’t trying to win a click metric; it was trying to move real product off shelves. That’s brutal, because offline sales are harder to attribute, and it’s easy for performance teams to dismiss upper-funnel channels when they can’t see a neat last-click trail. The pressure was simple: prove it in-store, or lose budget (Nielsen’s Pinterest x Alpro case study).

Backstory: CPG brands live and die by distribution realities and retail behavior, which means digital performance metrics can feel disconnected from the actual business outcome. Pinterest is often positioned as an inspiration-driven platform, which makes it easy to dismiss unfairly as “nice awareness” rather than measurable performance. That’s why the measurement method mattered as much as the creative itself (Case framing and measurement focus).

Wall: The wall was connecting digital exposure to offline lift with credibility. Without third-party measurement, teams can end up stuck in internal arguments about what “counts,” and those arguments usually end with conservative budget decisions. The campaign needed independent validation that would hold up when challenged (Nielsen lift study positioning).

Epiphany: The breakthrough was treating offline sales measurement as the primary KPI, not a bonus metric. That flips the analytics hierarchy: clicks become supporting evidence, while sales lift becomes the deciding factor. Once you do that, your agency pricing can be structured around what the business actually values, not what’s easiest to track (Nielsen’s offline sales lift emphasis).

Journey they went on to reach the goal: The campaign was measured with Nielsen’s sales lift methodology, which evaluated in-store outcomes rather than relying on platform-only attribution. The results were described in a way leadership can understand: return on ad spend and offline sales lift. That measurement discipline is what turns social and discovery platforms into defendable budget lines (Published results and methodology summary).

Final conflict: Even when lift is proven, teams still face a messy operational reality: retail factors, seasonality, and competitive activity can muddy the interpretation. Measurement becomes an ongoing practice, not a one-time proof point, and it needs consistent experimentation hygiene. This is where agency value shows up: not in celebrating a win, but in building a repeatable measurement playbook that survives the next quarter (Dentsu’s 2025 forecast noting continued social growth pressure).

Dream outcome: Nielsen’s write-up highlights meaningful offline lift and a strong return profile, including a 2.6x ROAS figure and top-tier performance within its vertical benchmark context. That’s the kind of outcome that changes how a brand prices social work, because it justifies spending on measurement and creative systems that can drive real-world results. In plain terms: the analytics made the spend feel safe to scale (Alpro’s reported lift outcomes).

Professional Reporting

Professional reporting is not “more data.” It’s a translation layer that makes your social media marketing agency pricing feel rational to people who don’t live in social dashboards.

What your reporting should include if the pricing is serious

- One outcome headline: the business result you’re trying to move and whether you’re on track.

- Three drivers: what changed performance this period (creative, audience, offer, spend, seasonality), tied to evidence.

- One risk: what could break next (creative fatigue, rising CPMs, tracking gaps) and the mitigation plan (Skai’s paid social pricing and volume signals).

- One decision: what you’re changing next week and why.

- One learning log: tests run, what was learned, and what is being scaled or killed.

How to use benchmarks without getting tricked by them

Benchmarks should inform expectations, not replace thinking. If engagement is down across the market, your interpretation should focus on whether your creative system is improving faster than the baseline is falling (Rival IQ’s benchmark trend context). If CPMs rise seasonally, your interpretation should focus on whether you protected learning velocity by separating test budgets from scale budgets (Gupta Media’s seasonality breakdown).

And if leadership asks for “proof,” you should already have a plan for incrementality measurement, because that’s the cleanest path from social activity to business confidence (TikTok’s lift testing framework).
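Incrementality measurement usually rests on one basic comparison: people who could see the ads versus a holdout group that could not. The Python sketch below shows that arithmetic. It assumes a simple randomized test/control split measured in conversions. The `Group` class, the function names, and the numbers are hypothetical illustrations, not figures from any study cited in this guide. Real lift methodologies, including those offered by Nielsen, Meta, and TikTok, add significance testing and other adjustments that this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass
class Group:
    """One arm of a hypothetical holdout test: exposed (test) or held out (control)."""
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        # Conversion rate for this arm; undefined for an empty group.
        if self.users == 0:
            raise ValueError("group has no users; conversion rate is undefined")
        return self.conversions / self.users


def relative_lift(test: Group, control: Group) -> float:
    """Relative lift: how much higher the test conversion rate is than control's."""
    if control.conversions == 0:
        raise ValueError("control group has no conversions; lift is undefined")
    return (test.rate - control.rate) / control.rate


def incremental_conversions(test: Group, control: Group) -> float:
    """Test-group conversions beyond what the control rate alone would predict."""
    return test.conversions - control.rate * test.users


# Hypothetical inputs for illustration only.
test = Group(users=100_000, conversions=1_200)
control = Group(users=100_000, conversions=1_000)

print(f"relative lift: {relative_lift(test, control):.1%}")
print(f"incremental conversions: {incremental_conversions(test, control):.0f}")
```

With these hypothetical inputs, the test group converts at 1.2% and the control group at 1.0%. That is a 20% relative lift and about 200 incremental conversions. Whether the difference is real rather than noise is a separate question, and it needs a significance test before anyone scales budget on it.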

Future Trends

The next wave of social media marketing agency pricing won’t be driven by prettier dashboards or new posting schedules. It’ll be driven by risk, proof, and speed: compliance pressure rising, measurement getting stricter, and creative iteration becoming the only sustainable edge.

Trend 1: AI content governance becomes a priced deliverable

As more brands use AI to generate ad variations, scripts, and visuals, the work shifts from “making more” to “making safe.” That means governance: review workflows, disclosure practices, brand-safety rules, and documentation. In the EU, transparency expectations around marking and labelling AI-generated content are being formalized through policy and guidance work tied to the AI Act’s requirements (EU Code of Practice on marking and labelling of AI-generated content).

Outside Europe, the direction is similar. South Korea has moved toward requiring clear labelling of AI-generated advertisements starting in early 2026, driven by concerns about deceptive deepfake-style ads (AP reporting on South Korea’s AI-generated ads labelling requirement).

In pricing terms, “AI capabilities” shouldn’t be sold as a shortcut to cheaper retainers. Mature agency pricing will itemize governance and verification because that’s the real work that keeps brands out of trouble.

Trend 2: Platform regulation pushes agencies toward compliance-first operations

Europe’s Digital Services Act is reshaping how platforms manage risk, transparency, and advertising ecosystems. Even when you aren’t a platform, you feel the ripple effects: more scrutiny on claims, targeting practices, and the integrity of content distribution (2025 academic analysis of the DSA’s impact on social commerce).

This changes social media marketing agency pricing because agencies that serve regulated or high-visibility brands will increasingly price “risk operations” explicitly: escalation paths, content review standards, audit trails, and documentation that proves how decisions were made.

Trend 3: Creators become infrastructure, not an add-on

Creators are no longer a side channel; they’re becoming a core part of how ads and trust are produced. U.S. creator economy ad spend was projected to reach $37B in 2025, reflecting how quickly budgets are moving toward creator-led formats (IAB reporting on creator economy ad spend growth).

That means pricing will shift away from “influencer outreach” as a vague add-on and toward structured creator systems: sourcing, briefing, usage rights, whitelisting options, and creative testing that treats creators as a scalable production layer.

Trend 4: Budget volatility forces performance systems to get more honest

Ad markets move with macro conditions, and planning is increasingly done under uncertainty. Forecasting work continues to show global ad spend growth with strong digital contribution, while acknowledging that expectations can be revised when economic conditions shift (WARC Global Ad Spend Outlook 2024/25).

As volatility grows, buyers will value pricing models that protect learning velocity: clear testing capacity, defined optimization cadence, and measurement approaches that can separate “good luck” from real incremental lift.

Strategic Framework Recap

If you only remember one thing from this guide, make it this: social media marketing agency pricing is easiest to trust when it’s built on transparent operating assumptions. When pricing is vague, you don’t just risk paying too much—you risk paying for the wrong work.

- Outcomes: define success in business terms first, so your pricing reflects value instead of activity.

- Scope: turn “we can do that” into boundaries that survive real life—formats, platforms, response expectations, and what’s explicitly out.

- Capacity: buy capacity in a way your team can manage (hours, cycles, bundles, or hybrid) so execution stays predictable.

- Complexity: price approvals, compliance, and stakeholder load honestly, then reduce that complexity with systems.

- Accountability: build a decision cadence that turns reporting into action instead of a monthly recap.

This ecosystem view matters more as the market shifts. Between AI governance, platform regulation, and creator-led distribution, the agencies that win won’t be the ones that promise “more posts.” They’ll be the ones that run a system you can explain, defend, and scale.

FAQ – Built for the Complete Guide

What’s typically included in social media marketing agency pricing?

Most engagements include strategy, content planning, creative production (or direction), publishing, community management (when it's in scope), reporting, and an optimization cadence. Paid social management is often priced separately from ad spend.

Why do two agencies quote wildly different prices for “the same” work?

Because the work is rarely the same. Differences usually come from creative complexity (video vs. static), approval and compliance load, response-time expectations, analytics depth, the number of platforms, and how much experimentation is included.

Should I choose a retainer or a project-based engagement?

Retainers work best when you need continuous output and optimization. Projects work best for defined deliverables like an audit, a launch campaign, or a one-time system build. Many strong partnerships use a hybrid: a steady retainer plus project sprints for spikes.

Does pricing usually include the paid media budget?

No. Paid media budget is the money spent on the platforms, while agency pricing covers strategy, creative testing, audience work, pacing, measurement, and optimization. Mixing them makes it harder to see what you’re paying for.

How many platforms should be included in one package?

Fewer than most teams think. It’s usually better to run fewer channels with stronger creative and faster learning than to spread thin across every platform. Pricing should reflect where your audience actually converts, not where trends say you “should” be.

How do I avoid endless revisions that inflate costs?

Agree on revision rules up front: how many revision rounds are included, what counts as a revision vs. a new direction, and how quickly stakeholders must respond. Strong governance protects both sides and keeps pricing stable.

How often should reporting happen?

At least weekly for performance review and decision-making, with a deeper monthly recap and a quarterly strategy reset. If reporting is monthly only, learning slows down and you end up paying for activity rather than improvement.

How should AI affect agency pricing?

AI can reduce some production time, but it increases the need for governance, verification, and brand-safety controls. If an agency claims AI makes everything cheaper, ask how they handle labelling, review workflows, and risk—especially as transparency requirements tighten (EU guidance work on AI-generated content labelling).

Should I judge agencies by benchmark metrics like engagement rate?

Benchmarks are a useful starting point, but they’re not a verdict. A better test is whether the agency can explain what drives the numbers, what they’ll change next, and how those changes connect to business outcomes.

What are the biggest red flags in a pricing proposal?

Vague scope language, no clear optimization cadence, reporting that’s heavy on charts but light on decisions, unclear ownership of creative direction, and promises that ignore measurement reality. If you can’t tell what work is actually being done each week, the pricing won’t feel fair later.

When should I consider switching agencies?

When the system stops learning. If you’re getting outputs but not improvement, if reporting never leads to decisions, or if the agency can’t explain what they’re doing differently next cycle, it’s time to reassess.

Work With Professionals

If you’ve made it this far, you already know the hardest part of social media marketing agency pricing: it’s not finding a number—it’s building a pipeline that makes your skills valuable month after month. Even strong marketers get stuck in a loop of pitching, negotiating, and chasing leads while their actual work takes a back seat.

That’s why marketplaces built specifically for marketing can be a shortcut to momentum. MARKEWORK positions itself as a focused marketplace for marketing roles and projects where you connect directly—no middle layer, no commission model, and no project fees (MARKEWORK home page). For freelancers, the pricing page lays out simple monthly plans and emphasizes direct communication with companies and access to “thousands of job listings” (MARKEWORK pricing).

Here’s the part that changes how you think about growth: instead of spending your week hunting opportunities across unrelated job boards, you can build a marketing-focused profile, browse active roles, and apply consistently. The “Find Work” section shows a large volume of active listings and makes it clear that more roles are available once you’re logged in, which is exactly what you want if you’re serious about building a repeatable client pipeline (MARKEWORK active listings).

And the model is clean. MARKEWORK’s FAQ explains that the platform doesn’t handle contracts or payments—you negotiate directly and manage your own agreements, invoices, and payments, which keeps you in control of the relationship and the economics (MARKEWORK FAQ).

If your goal is simple—more remote marketing contracts, less friction, and a clearer path from “I’m available” to “I’m booked”—it’s worth building your presence where marketing work is the focus, not an afterthought.