A social media campaign can look busy and still do nothing. Posts go out, ads spend money, comments roll in, and the dashboard lights up—yet sales don’t move, sign-ups stall, and the team can’t agree on what “worked.” The real problem usually isn’t effort. It’s that the campaign never had a clear strategy holding it together.

A solid social media campaign strategy is the difference between “we posted a lot” and “we engineered momentum.” It turns social into a system: a focused goal, a defined audience, a creative idea worth caring about, distribution that actually reaches people, and measurement that explains what to do next. This series breaks that system down into a practical framework you can run like a pro—whether you’re building campaigns for your own brand or delivering results for clients.

Article Outline

- What Is a Social Media Campaign Strategy?

- Why a Social Media Campaign Strategy Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is a Social Media Campaign Strategy?

A social media campaign strategy is the plan that connects your business outcome to what people actually see and do on social platforms. It defines the goal, the audience, the message, the creative direction, the channels, and the way you’ll measure success—before anything gets posted or promoted.

Think of a campaign as a “moment with intent.” It has a beginning (a reason to pay attention), a middle (a sequence that builds interest and trust), and an end (a conversion, a commitment, or a measurable shift in behavior). Strategy is what makes that moment coherent, so every asset feels like it belongs to the same story and every dollar supports the same outcome.

It also draws a clear line between three things that often get mixed up:

- Content is what you publish (videos, posts, stories, carousels, lives).

- Campaign is a coordinated push with a single objective and a defined time window.

- Strategy is the logic that decides what to make, where it runs, who it reaches, and how you’ll improve it.

When the strategy is missing, teams tend to argue about surface-level choices—hooks, formats, hashtags, posting times—because there’s no shared decision system underneath. When the strategy is present, those choices get easier, because they’re made in service of one clearly defined result.

Why a Social Media Campaign Strategy Matters

Social is no longer a “nice to have” channel that sits politely on the side of your marketing plan. It’s where people discover brands, compare options, and decide whether they trust you enough to take the next step. That’s exactly why a social media campaign strategy matters: it turns social activity into business leverage.

There’s also a hard reality that forces strategy to the front: the paid side of social is big, competitive, and volatile. The IAB/PwC Full Year 2024 Internet Advertising Revenue Report puts U.S. social media advertising revenue at $88.8B for 2024. The same figure is repeated in IAB’s release summary and highlighted as a key takeaway in MarTech’s analysis. In a market like that, a campaign without a strategy isn’t just inefficient; it gets punished quickly.

On the organic side, expectations are rising at the same time attention is fragmenting. People don’t just want posts; they want relevance, responsiveness, and content that feels made for them. In Sprout Social’s research, 81% of consumers say social media drives impulse purchases and 73% say they’ll buy from a competitor if a brand doesn’t respond—and those same numbers are echoed in Sprout’s social media statistics roundup and summarized in Nasdaq’s coverage of the 2025 Sprout Social Index. A campaign strategy is how you design for that reality instead of reacting to it.

Most importantly, strategy gives you a decision trail. When results are strong, you know why. When results are weak, you know what to change—offer, audience, creative, channel mix, landing experience, or follow-up. Without that trail, teams end up repeating the same work with slightly different captions and hoping the algorithm “likes it” this time.

Framework Overview

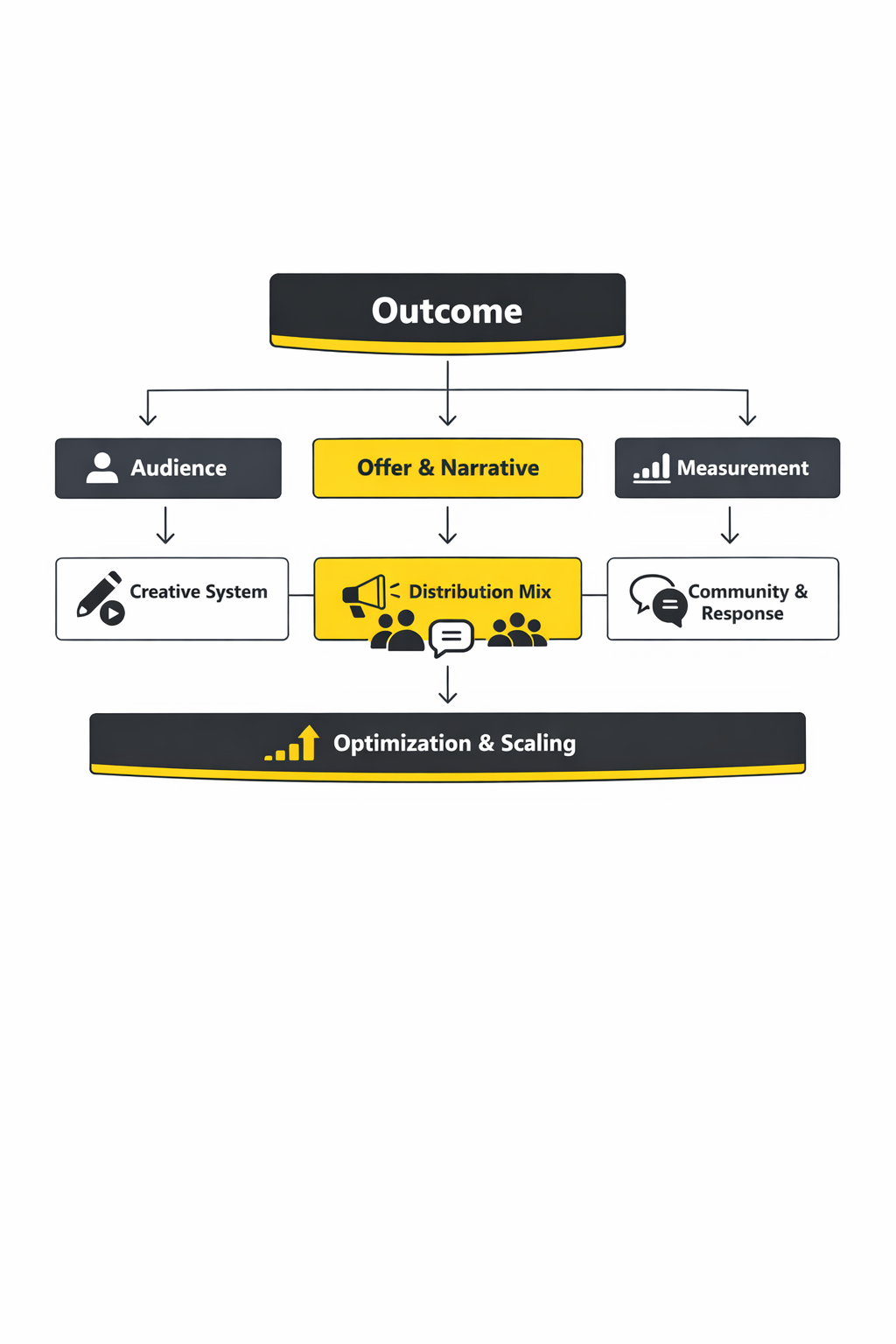

This framework treats a social media campaign strategy like a closed-loop system. You plan with clarity, execute with consistency, measure with purpose, and improve with speed. Each part supports the next, and the loop gets stronger with every iteration.

The core idea is simple: a campaign should be built from the outcome backward. Instead of starting with “what should we post,” you start with “what should change,” then design the message, creative, and distribution that make that change most likely.

Here’s the sequence the rest of this series will expand into detailed, usable steps:

- Outcome: One primary objective that can be measured (not five vague goals competing at once).

- Audience: A specific segment with a real trigger, barrier, and motivation—not a demographic bucket.

- Offer and narrative: The value proposition plus the story that makes it feel urgent, credible, and worth acting on.

- Creative system: Assets designed for platform behavior (attention, retention, and response), not just brand aesthetics.

- Distribution: A deliberate mix of organic, paid, creators/partners, and community that matches the objective.

- Measurement: A small set of metrics tied to decisions—what to scale, what to cut, what to fix.

If you’re building campaigns for clients, this framework also helps you communicate like a strategist. It gives you a professional way to justify choices, manage expectations, and present results as a narrative of learning and improvement—not just a spreadsheet of numbers.

Core Components

A strong social media campaign strategy stands on a few components that are easy to name, but surprisingly easy to skip. The goal isn’t to make the process complicated—it’s to make it complete, so the campaign doesn’t collapse under real-world pressure (limited time, mixed feedback, platform changes, and shifting performance).

One goal that forces trade-offs

Professional campaigns pick a primary outcome and accept the trade-offs that come with it. A campaign built for lead quality will often sacrifice cheap reach. A campaign built for awareness will often sacrifice short-term conversion rate. When the goal is clear, your choices become consistent: creative, targeting, landing page, and follow-up can all align.

An audience definition that explains behavior

“Small business owners” isn’t an audience; it’s a population. A campaign-ready audience is defined by what’s happening in their world right now: the problem they’re trying to solve, what they’ve already tried, what they’re skeptical about, and what would make them believe you. That’s the difference between targeting that looks precise and targeting that actually works.

A message people can repeat

The best campaign messages are easy to retell in one sentence. If your audience can’t explain the value quickly, they won’t share it, they won’t remember it, and they won’t act on it. Strategy forces that clarity early so you don’t waste production time polishing creative that’s built on a fuzzy promise.

Creative built for attention and proof

Social creative has two jobs: earn attention and earn belief. Attention comes from relevance, pattern breaks, and strong hooks. Belief comes from proof—demonstrations, outcomes, testimonials, comparisons, credible explanations, and clear next steps. Campaign strategy makes sure both jobs are covered, instead of hoping great editing will do the heavy lifting.

Distribution that matches how people decide

Most buying decisions don’t happen in a single touch. People notice, then they watch, then they check your profile, then they read comments, then they leave, then they come back. Strategy plans for that loop by combining placements and formats that support discovery, consideration, and conversion—so your campaign doesn’t rely on one post “going viral” to succeed.

Professional Implementation

In practice, running a social media campaign strategy professionally is less about “doing everything” and more about building a tight operating rhythm. That rhythm keeps the campaign coherent while still letting you adapt fast when the data tells you something new.

A simple pro-level workflow looks like this:

- Pre-launch: lock the goal, audience, offer, success metrics, and approvals so execution isn’t constantly renegotiated.

- Launch week: publish your strongest creative first, watch early signals (especially retention and click quality), and adjust quickly.

- Optimization: treat testing as controlled learning—change one meaningful variable at a time so you know what caused the lift.

- Post-campaign: document what you learned in a way you can reuse (winning angles, strongest hooks, best-performing audiences, and the objections that surfaced in comments and DMs).

The professional difference is documentation and intent. Anyone can post content and boost the best-looking one. A strategist runs a repeatable system: every creative choice has a reason, every metric has a decision attached to it, and every campaign produces insights that make the next one stronger.

In Part 2, we’ll turn this framework into a step-by-step planning process—how to set the right campaign objective, translate it into platform-specific KPIs, and define an audience in a way that shapes creative and targeting instead of sitting in a slide deck.

Step By Step Implementation

Implementation is where a social media campaign strategy either becomes a system or turns into a pile of disconnected posts. The difference is rarely talent. It’s almost always process: clear sequencing, clean handoffs, and a daily rhythm that keeps the campaign learning and improving while it’s still live.

Use this step-by-step flow to move from “plan” to “performance” without losing clarity along the way.

1) Lock the one goal and define “done”

Pick a single primary outcome for the campaign and write it down in plain language. Then define what “done” means in a way that won’t change mid-flight when feedback starts coming from every direction. If you can’t explain the goal to a teammate in one sentence, your execution will drift because everyone will optimize for their own version of success.

2) Map the user journey before you build creative

Decide what happens after someone sees the first touch. Do they click to a landing page, follow your profile, watch a second video, book a call, or message you? Your campaign assets should be designed to move people through that journey intentionally, not accidentally.

This is also where you decide what you need to track and where the tracking needs to happen. If the journey is blurry, measurement becomes guesswork and optimization turns into random edits.

3) Build a creative brief that prevents “random content”

Write a short brief that includes the promise, the proof, the tone, and the single action you want. Add the three main objections you expect to see in comments and DMs, because those objections will shape what you publish next. A good brief isn’t long—it’s sharp enough that ten different creators could produce content that still feels like one campaign.

4) Design a testing plan that teaches you something

Decide what you will test and what you will hold constant. If you change the hook, the offer, the landing page, and the audience at the same time, you’ll get noise, not learning. The cleanest tests usually start by varying one of these: the opening hook, the angle, the proof format, or the call to action.
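The one-variable rule is easy to state and easy to break once several people are editing variants. Below is a minimal Python sketch that checks a proposed test before it goes live. It assumes each variant is described as a dictionary of the four levers named above. The lever keys and values are hypothetical labels, not platform settings.

```python
# Hypothetical lever names and values; swap in whatever your creative brief actually tracks.
CONTROL = {"hook": "question", "angle": "save-time", "proof": "demo", "cta": "book-call"}


def changed_levers(control: dict, variant: dict) -> set:
    """Return the levers whose values differ between the control and a variant."""
    keys = control.keys() | variant.keys()
    return {key for key in keys if control.get(key) != variant.get(key)}


def is_clean_test(control: dict, variant: dict) -> bool:
    """A clean test changes exactly one lever and holds every other lever constant."""
    return len(changed_levers(control, variant)) == 1


hook_test = {**CONTROL, "hook": "bold-claim"}
messy_test = {**CONTROL, "hook": "bold-claim", "cta": "shop-now"}
print(is_clean_test(CONTROL, hook_test))   # True: only the hook changed
print(is_clean_test(CONTROL, messy_test))  # False: hook and CTA both changed
```

Rejecting the second variant is the point. If it won, you would not know whether the hook or the call to action caused the lift.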

5) Set your operating rhythm before launch day

Pick a cadence for reviewing performance and making decisions. Daily works for fast-moving paid and short-form creative. Two to three times per week can work for longer campaigns where the goal is consistency and sustained lift. Whatever you choose, make it real: a calendar block, an owner, and a written decision log.

6) Launch with your strongest assets, not your safest

Early performance shapes what the algorithm learns and what your team believes. Launching with “safe” creative often delays learning and slows momentum. Start with the clearest promise and the clearest proof you have, then use the first wave of signals to shape iteration.

7) Document learning while it’s fresh

The fastest teams don’t just optimize the current campaign—they build an advantage for the next one. Capture which angles earned attention, which objections showed up, and which pieces of proof changed minds. A social media campaign strategy gets stronger when your learning becomes reusable assets, not forgotten screenshots.

Execution Layers

When campaigns struggle, it’s rarely because one thing is “bad.” It’s usually because one layer of execution is missing, and the rest can’t compensate. Think of execution as layers that stack together; each layer amplifies the others.

Layer 1: Strategy integrity

This layer keeps the campaign coherent. Every post, ad, and reply should reinforce the same promise and move toward the same outcome. If the campaign starts chasing side quests—new audiences, new offers, new messages—you lose compounding momentum because the system can’t learn.

Layer 2: Creative production and versioning

This is where you operationalize variation without chaos. The goal is not “more content”; it’s more meaningful iterations: different hooks, different proofs, different structures, all anchored to the same message. If your team can’t track which version is live where, you’ll waste time and blame the platform when the real issue is workflow.
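One low-tech way to keep versions traceable is a predictable naming convention plus a record of which name is running in which placement. The Python sketch below is one possible approach. The fields (campaign, hook, proof, format, version) and the placement names are hypothetical conventions, not a platform feature.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Variant:
    """One creative version; the fields are hypothetical examples of what to track."""
    campaign: str
    hook: str
    proof: str
    structure: str
    version: int

    def asset_id(self) -> str:
        """Build a predictable name that tells anyone which iteration this is."""
        parts = [
            self.campaign,
            f"hook-{self.hook}",
            f"proof-{self.proof}",
            f"fmt-{self.structure}",
            f"v{self.version:02d}",
        ]
        # Lowercase and hyphenate so names sort and search consistently.
        return "_".join(part.lower().replace(" ", "-") for part in parts)


def placements_for(live: dict, asset_id: str) -> list:
    """Answer 'where is this version live?' from a placement -> asset_id registry."""
    return sorted(placement for placement, asset in live.items() if asset == asset_id)


v3 = Variant("Spring Launch", "question", "testimonial", "carousel", 3)
live = {"instagram-feed": v3.asset_id(), "tiktok-infeed": v3.asset_id()}
print(v3.asset_id())  # spring-launch_hook-question_proof-testimonial_fmt-carousel_v03
print(placements_for(live, v3.asset_id()))  # ['instagram-feed', 'tiktok-infeed']
```

The exact format matters less than applying it consistently. Anyone reading a report should be able to tell from the name alone which hook, proof, and format a result belongs to.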

Layer 3: Distribution and amplification

Distribution decides whether your best creative gets a fair chance. Organic, paid, creators, partners, and community all serve different jobs in the journey. A campaign that relies on one channel alone is fragile, because one algorithm change or one creative miss can stall the whole effort.

Layer 4: Community and trust building

The campaign doesn’t end when you publish. Comments and DMs are where objections surface and trust is either built or lost in public. Treat community management as part of conversion, not as an afterthought—because the questions people ask repeatedly are often the exact content you should publish next.

Layer 5: Measurement and learning

This layer prevents you from “optimizing vibes.” You’re looking for signals that explain what to do next: what to scale, what to pause, what to rewrite, and what to remake. If you can connect the signals to decisions, performance becomes a process, not a mystery.

Optimization Process

Optimization is not “tweaking until it feels better.” It’s a disciplined loop: observe, diagnose, adjust one meaningful variable, and validate. That loop is how a social media campaign strategy stays grounded in reality while still moving fast.

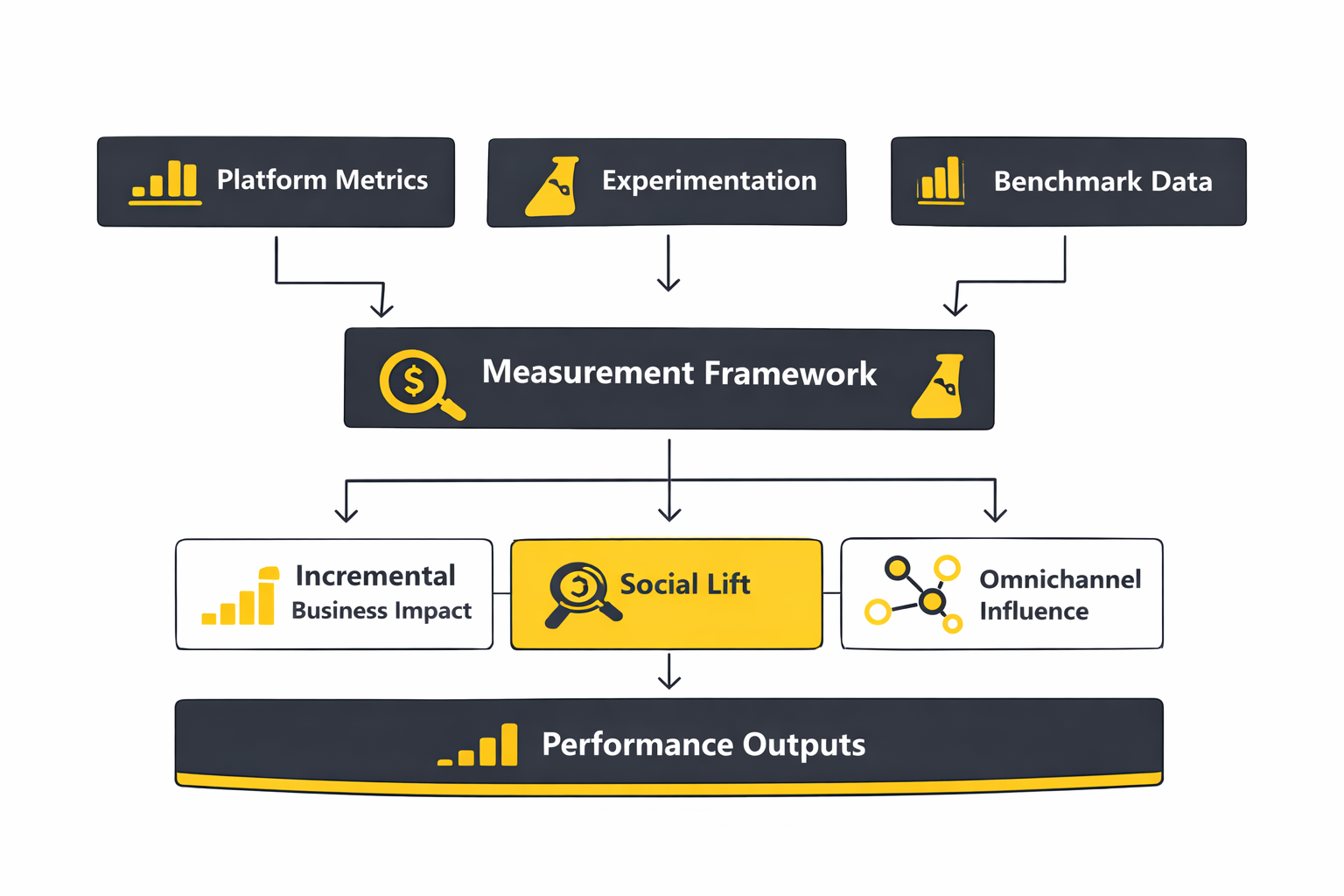

Start with a signal hierarchy

Not all metrics deserve the same attention at the same time. Early in a campaign, you’re watching for whether the creative earns attention and whether the promise is understood. As the campaign matures, you care more about quality actions and outcomes—because attention without progress is just entertainment.

- Attention signals: are people stopping, watching, and engaging?

- Intent signals: are they clicking, saving, sharing, visiting your profile, or messaging?

- Outcome signals: are the right actions happening after the click or message?

Diagnose before you edit

When performance dips, resist the urge to rewrite everything. First decide what kind of problem it is:

- Message problem: people don’t understand the promise or don’t believe it.

- Creative problem: the hook doesn’t earn attention, or the proof doesn’t land.

- Distribution problem: the creative is solid, but it’s not reaching the right people consistently.

- Journey problem: people click, but the landing experience or follow-up doesn’t carry the story forward.

Once you diagnose, you change one meaningful variable and test again. That’s how you build confidence in your decisions instead of chasing randomness.

Use controlled measurement when possible

If the budget and setup allow it, controlled experiments help you separate true lift from “what would have happened anyway.” Many platforms push marketers toward incrementality for exactly this reason. TikTok explains the logic and structure behind test/control measurement in its overview of a Conversion Lift Study methodology.

The mindset matters even if you don’t run a formal lift study. You’re always asking the same question: did our campaign create additional outcomes, or did we just observe demand that already existed? Clear explanations of that concept show up in practical measurement guidance like Northbeam’s 2024 guide to incrementality.
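The core arithmetic behind a test/control comparison fits in a few lines. The Python sketch below compares an exposed group with a held-out group. It estimates how many conversions were incremental and runs a standard two-proportion z-test. This is a simplified illustration, not TikTok’s or Meta’s methodology: it assumes users were randomly split, and the platforms handle randomization, holdout sizing, and modeling inside their own tools. The input numbers are made up.

```python
import math


def lift_summary(test_users: int, test_conv: int, control_users: int, control_conv: int) -> dict:
    """Compare an exposed (test) group with a held-out (control) group.

    Simplified arithmetic only: assumes a random split and a nonzero control rate.
    """
    test_rate = test_conv / test_users
    control_rate = control_conv / control_users
    # Conversions the test group would likely have produced anyway (the baseline).
    baseline_conv = control_rate * test_users
    incremental = test_conv - baseline_conv
    relative_lift = (test_rate - control_rate) / control_rate
    # Two-proportion z-test with a pooled rate; two-sided p-value via erfc.
    pooled = (test_conv + control_conv) / (test_users + control_users)
    se = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (test_rate - control_rate) / se
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return {"incremental": incremental, "relative_lift": relative_lift, "z": z, "p_value": p_value}


result = lift_summary(test_users=100_000, test_conv=1_200, control_users=100_000, control_conv=1_000)
print(round(result["incremental"]), f"{result['relative_lift']:.0%}")  # 200 20%
```

With these hypothetical numbers, about 200 of the test group’s 1,200 conversions look incremental. The other 1,000 look like demand that already existed, which is exactly the distinction the incrementality question is trying to draw.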

Build a decision log that survives client pressure

Campaigns get noisy: stakeholders want changes, teams want to try new ideas, and everyone has a strong opinion after glancing at a dashboard. A simple decision log protects the strategy. Write down what you changed, why you changed it, what you expected to happen, and what you saw after the change.

This turns optimization into a professional narrative of learning. It also stops the common failure mode where teams “fix” the wrong thing because they forgot what they changed two weeks ago.
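A decision log can live in a spreadsheet, but its structure is the same wherever it lives. The Python sketch below assumes a simple in-memory log whose four fields mirror the questions above. The entries and dates are hypothetical.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List, Optional


@dataclass
class Decision:
    when: date
    changed: str                    # what you changed
    why: str                        # why you changed it
    expected: str                   # what you expected to happen
    observed: Optional[str] = None  # what you saw, filled in after the review window


@dataclass
class DecisionLog:
    entries: List[Decision] = field(default_factory=list)

    def record(self, when: date, changed: str, why: str, expected: str) -> Decision:
        entry = Decision(when, changed, why, expected)
        self.entries.append(entry)
        return entry

    def awaiting_results(self) -> List[Decision]:
        """Changes whose outcome nobody has written down yet."""
        return [e for e in self.entries if e.observed is None]

    def changes_between(self, start: date, end: date) -> List[Decision]:
        """Answer 'what did we change two weeks ago?' before touching anything else."""
        return [e for e in self.entries if start <= e.when <= end]


log = DecisionLog()
first = log.record(date(2025, 3, 3), "Swapped hook to a bold claim", "Low 3-second retention", "Retention up")
log.record(date(2025, 3, 10), "Moved testimonial to the first 5 seconds", "Viewers drop before proof", "More clicks")
first.observed = "Retention improved; clicks flat"
print(len(log.awaiting_results()))  # 1
```

The `awaiting_results` check is the useful habit. Any change without a written outcome is a change you cannot yet learn from.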

Implementation Stories

When you watch a campaign from the outside, it looks like a clean sequence: creative goes live, performance improves, results happen. Inside the work, it’s messier. Tools fail, stakeholders panic, and the first creative draft is almost never the winner. The stories below focus on execution and measurement moments that are documented publicly.

Target’s omnichannel push when the pressure shifted from “ads” to measurable impact

The tension wasn’t subtle. The team needed to drive sales in a world where people move between online browsing and in-store buying without announcing where the decision will happen. If the campaign couldn’t prove impact across that reality, it would be dismissed as “nice marketing” instead of business growth.

In the background, the challenge was operational. An omnichannel campaign isn’t just more placements—it’s a more complicated measurement problem, because outcomes show up in different places. A social media campaign strategy that can’t survive measurement complexity eventually gets replaced by whatever channel feels easier to attribute.

The wall arrived when the team faced the classic question: what’s the real difference between this approach and what we were already doing? Without a clean comparison, every result can be argued away. And when results can be argued away, budgets get reallocated to whoever tells the clearest story.

The breakthrough came from treating measurement design as part of execution, not a report at the end. Target ran a structured A/B test in Meta Ads Manager to compare approaches, which is described in Meta’s “Driving online and in-store sales with Meta omnichannel ads” success story. That structure gave the team a clearer view of what changed because of the campaign.

With the measurement approach in place, execution became more decisive. The team could align creative, placements, and reporting around the same outcome instead of optimizing different parts of the campaign in isolation. That’s what turns a strategy into an operating system: every team member can see how their work contributes to the same measurable goal.

Then came the part that looks boring but decides everything: staying disciplined when performance data starts arriving. The temptation is to change too much too quickly, especially when stakeholders demand instant certainty. Execution maturity is knowing when to iterate and when to let learning stabilize.

The payoff was not just performance; it was proof. The campaign could be explained as a controlled comparison rather than a collection of activity. That is why the published Meta success story reads like a measurement-driven implementation, not a creative highlight reel.

Domino’s Spain when the real fight was proving TikTok wasn’t just “top-of-funnel noise”

The campaign didn’t have the luxury of being seen as experimental. Food delivery is competitive, and attention is expensive; nobody wants to fund a channel that can’t prove it moves real orders. The team needed to show that TikTok could do more than entertain.

Behind the scenes, the difficulty wasn’t creative alone—it was credibility. When teams can’t measure incremental impact, internal skepticism grows, and social gets treated like a brand-only channel. That skepticism becomes the quiet force that limits budget, limits testing, and limits learning.

The wall showed up as a measurement gap: if conversions are happening anyway, how do you know the campaign created additional results? Last-click attribution often under-credits discovery channels and over-credits the final touch. That mismatch can make smart campaigns look weak on paper.

The shift came when they leaned on incrementality testing. TikTok’s Domino’s Spain case study page describes using a test/control structure, in the form of a Conversion Lift Study, to evaluate the campaign’s impact. That approach changes the conversation from opinion to evidence.

Once measurement was built into the campaign, execution had a clearer direction. The team could optimize toward outcomes without constantly defending the channel itself. That matters because the best creative iteration happens when the team is improving performance—not negotiating whether the platform “counts.”

The final conflict is the one most teams recognize: even when measurement improves, pressure doesn’t disappear. Stakeholders still ask for faster results, and teams still feel the urge to overhaul creative too quickly. Implementation discipline means keeping your learning loop clean even when people want instant answers.

The dream outcome is that the channel becomes strategically usable. When you can demonstrate incremental value, you earn the right to scale, test deeper, and build a longer-term social media campaign strategy instead of treating every campaign like a one-off gamble, which is the core point of the documented Domino’s Spain case study.

Run a pre-flight checklist that prevents avoidable failure

- Tracking: the key events work end-to-end, and everyone agrees what success means.

- Creative system: your variants are clearly named, stored, and easy to swap without confusion.

- Approvals: the rules are set before launch so you don’t pause momentum mid-campaign.

- Community: response ownership is clear, with escalation paths for sensitive issues.

- Reporting: dashboards and decision logs are ready before the first post goes live.

Iterate with discipline, not adrenaline

Make changes in planned cycles. When a campaign is live, it’s easy to chase every fluctuation and turn your strategy into a series of emotional reactions. Professionals decide in advance what triggers an iteration, what triggers a pause, and what triggers a deeper diagnosis.
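Deciding triggers in advance works best when they are written down precisely enough that nobody can reinterpret them mid-campaign. The Python sketch below maps the drop in one key metric, relative to its baseline, to a pre-agreed action. Every threshold is a hypothetical placeholder to be set with your team before launch, and the choice of a single metric is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Triggers:
    """Thresholds agreed before launch; every number here is a hypothetical placeholder."""
    min_days: int = 3            # let early learning settle before reacting
    iterate_drop: float = 0.10   # mild dip: run the next planned iteration
    diagnose_drop: float = 0.25  # bigger dip: find the message/creative/distribution/journey problem
    pause_drop: float = 0.50     # severe dip: stop spend while you investigate


def next_action(baseline: float, current: float, days_live: int, rules: Triggers = Triggers()) -> str:
    """Map a key metric's drop versus its baseline to a pre-agreed action."""
    if baseline <= 0:
        raise ValueError("baseline must be positive")
    if days_live < rules.min_days:
        return "hold"
    drop = (baseline - current) / baseline
    if drop >= rules.pause_drop:
        return "pause"
    if drop >= rules.diagnose_drop:
        return "diagnose"
    if drop >= rules.iterate_drop:
        return "iterate"
    return "hold"


print(next_action(baseline=0.020, current=0.017, days_live=6))  # iterate (15% drop)
```

The `min_days` guard does the most work against reacting to noise. Day-to-day swings early in a campaign simply return "hold".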

Build measurement maturity over time

Not every campaign needs a full lift study, but every campaign benefits from the incrementality mindset: prove what you can, don’t guess what you can measure, and treat measurement design as part of execution. If you do run lift experiments, platform guidance such as TikTok’s explanation of how Conversion Lift Studies work can help you frame the process and set expectations.

Turn learning into assets that compound

At the end of each week, capture the three strongest angles, the most common objections, and the proof formats that changed minds. Then turn those into reusable building blocks: hooks, scripts, talking points, and content templates. That’s how a social media campaign strategy evolves from “a campaign we ran” into a system that makes every future campaign easier and more effective.

Statistics And Data

Numbers don’t make a social media campaign strategy “real.” Decisions do. The role of statistics is to reduce uncertainty so you can act with confidence: what to scale, what to cut, what to fix, and what to stop arguing about.

A few data points are especially useful because they describe the market pressure you’re operating inside, not just your internal performance.

- Social ad spend is huge and still growing. U.S. social media advertising revenue reached $88.8B in 2024, according to the IAB/PwC report. The same figure appears in IAB’s summary release and in industry coverage of the report’s totals. That scale matters because it means competition is constant, not seasonal.

- Creators have moved from “tactic” to “channel.” U.S. creator ad spend rose from $13.9B in 2021 to $29.5B in 2024 and is projected to reach $37B in 2025 in the IAB 2025 Creator Economy Ad Spend & Strategy Report, with the same $37B projection reinforced in IAB’s announcement and summarized in Business Insider’s coverage. If your campaign ignores creators entirely, you’re often choosing a slower distribution path by default.

- Engagement is shifting, not disappearing. Socialinsider’s 2026 analysis of 70M+ posts reports TikTok engagement around 3.70% while Instagram sits around 0.48% and Facebook around 0.15%, and those headline figures are echoed in a digest summary at Social Media Today. The practical takeaway is that “likes” alone are a weaker signal than watch time, saves, shares, and downstream actions.
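The creator spend figures are easier to compare as an annual growth rate. The Python sketch below applies the standard compound annual growth rate (CAGR) formula to the IAB numbers quoted above. The resulting percentages are our own arithmetic, not figures IAB reports.

```python
def cagr(start: float, end: float, years: float) -> float:
    """Compound annual growth rate: the constant yearly rate that turns start into end."""
    return (end / start) ** (1 / years) - 1


# IAB figures quoted above, in billions of U.S. dollars.
spend_2021, spend_2024, projected_2025 = 13.9, 29.5, 37.0

print(f"{cagr(spend_2021, spend_2024, 2024 - 2021):.1%}")  # 28.5%
print(f"{projected_2025 / spend_2024 - 1:.1%}")            # 25.4%
```

Creator ad spend compounded at roughly 28.5% a year from 2021 to 2024. The 2025 projection implies about 25% more on top of that.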

Use these market stats as context, not as targets. Your campaign is still won or lost by what your audience does next: watch, click, message, book, buy, or come back later with intent.

Performance Benchmarks

Benchmarks are helpful when they’re used the right way. They’re not a scorecard for self-esteem. They’re a sanity check that tells you whether your campaign is behaving like a normal campaign, or whether something is broken in the message, the creative, the targeting, or the journey after the click.

Three benchmark notes keep teams from using numbers incorrectly:

- Benchmarks depend on definitions. “Engagement rate” can be calculated per follower, per reach, or per impression. Rival IQ explicitly explains its benchmarking methodology and what it measures in its 2025 benchmark report methodology section.

- Benchmarks depend on account size and industry. A global consumer brand and a niche B2B company don’t behave the same way on the same platform, even with excellent creative.

- Benchmarks depend on format. A carousel, a short video, and a static image ask for different behavior, so they produce different “normal” results.
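The definition problem is easy to see with one post and three denominators. The Python sketch below uses made-up numbers. It takes the engagement count as given, because publishers also differ in which interactions (likes, comments, shares, saves) they count.

```python
def engagement_rates(engagements: int, followers: int, reach: int, impressions: int) -> dict:
    """Same engagement count, three common denominators."""
    return {
        "per_follower": engagements / followers,
        "per_reach": engagements / reach,
        "per_impression": engagements / impressions,
    }


# Hypothetical post: 120 engagements, 10,000 followers, 4,000 reached, 6,000 impressions.
for name, rate in engagement_rates(120, 10_000, 4_000, 6_000).items():
    print(f"{name}: {rate:.1%}")
# per_follower: 1.2%
# per_reach: 3.0%
# per_impression: 2.0%
```

The same post scores anywhere from 1.2% to 3.0% depending on the denominator. That is why a benchmark is only comparable once you know which definition produced it.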

Organic engagement benchmarks you can actually use

If you need a fast reference point for organic performance, it helps to triangulate across multiple benchmark publishers rather than cling to one number.

- Platform-level engagement ranges: Socialinsider’s 2026 benchmarking reports TikTok engagement at 3.70%, Instagram at 0.48%, and Facebook at 0.15%, with the same set of figures summarized in a Social Media Today infographic recap.

- Cross-industry trend direction: Rival IQ’s 2025 report flags broad engagement declines across major platforms and explains how it analyzes engagement and posting patterns in its benchmarking overview.

- Industry-level context: Sprout’s 2025 benchmarks by industry roundup is useful when you need to explain that performance varies by sector and audience behavior.

When your numbers are below these reference ranges, don’t jump straight to “the algorithm hates us.” First check the fundamentals: is the hook specific, is the promise believable, and is the content giving proof early enough to earn attention?

Creator and distribution benchmarks that matter for campaign design

Creator distribution is increasingly treated as a core channel, not a nice add-on. The IAB’s projection that creator ad spend will reach $37B in 2025 is a useful “budget reality” benchmark. The same projection appears on IAB’s insight page and in mainstream coverage such as Forbes’ reporting.

For many brands, this changes what “good” looks like. A campaign that combines paid + creators + community can outperform a campaign that tries to brute-force reach through ads alone, because creators compress trust-building into a shorter time window.

Analytics Interpretation

Dashboards are easy. Interpretation is the skill. The fastest way to become dangerous (in a good way) is to connect metrics to decisions, so the team can move from “what happened” to “what we do next” without arguing in circles.

Three questions that turn metrics into action

- Is the message landing? If retention is weak and comments show confusion, your promise isn’t clear enough or your hook is attracting the wrong audience.

- Is belief building? If people watch but don’t click, save, share, or message, the content may be entertaining but not persuasive. That usually means proof is missing, late, or too vague.

- Is the journey coherent? If clicks happen but outcomes don’t, the problem often lives after the platform: landing page clarity, page speed, offer mismatch, form friction, or slow follow-up.
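The three questions work as an ordered funnel check: message first, then belief, then journey. The Python sketch below encodes that order. It assumes you can export views, retained views, intent actions, clicks, and outcomes for a piece of creative. The floor values are hypothetical and should come from your own baselines or the benchmark ranges you trust.

```python
from dataclasses import dataclass


@dataclass
class FunnelSnapshot:
    views: int
    retained_views: int   # viewers who kept watching past the hook (your own definition)
    intent_actions: int   # clicks, saves, shares, profile visits, messages
    clicks: int
    outcomes: int         # sign-ups, bookings, or purchases after the click


# Hypothetical floors; replace them with your own baselines or benchmark ranges.
FLOORS = {"retention": 0.25, "intent": 0.01, "outcome": 0.05}


def first_weak_stage(s: FunnelSnapshot, floors: dict = FLOORS) -> str:
    """Walk the three questions in order and report the first one that fails."""
    retention = s.retained_views / s.views if s.views else 0.0
    intent = s.intent_actions / s.views if s.views else 0.0
    outcome = s.outcomes / s.clicks if s.clicks else 0.0
    if retention < floors["retention"]:
        return "message"  # promise unclear or hook attracting the wrong audience
    if intent < floors["intent"]:
        return "belief"   # watched but not persuaded: proof missing, late, or vague
    if outcome < floors["outcome"]:
        return "journey"  # clicks without outcomes: landing page, offer, form, follow-up
    return "healthy"


print(first_weak_stage(FunnelSnapshot(10_000, 3_500, 80, 60, 1)))  # belief
```

Checking the stages in order matters. A weak landing page is hard to judge while the hook is still losing most viewers, so the earliest failing stage is usually the one to fix first.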

Vanity metrics vs decision metrics

Vanity metrics aren’t useless. They’re just incomplete. Views, likes, and follower growth can signal reach and resonance, but they don’t automatically signal business impact. Decision metrics are the ones that tell you what lever to pull next: which angle to scale, which audience to refine, which proof format to remake, and whether the offer needs to change.

When you need to prove causality instead of correlation, incrementality testing becomes the difference between confidence and guessing. TikTok lays out how a test/control design works in its explanation of Conversion Lift Study measurement, and Meta frames the same concept in its overview of Conversion Lift testing.

What “good” looks like in a live campaign

- Week 1: You should see at least one creative angle show early promise and one audience segment respond better than the rest.

- Week 2: You should be able to explain which variable is driving improvement (hook, proof, audience, placement, or offer), because you didn’t change everything at once.

- Week 3+: You should be building stability: fewer wild swings, clearer forecasting, and repeatable winners you can port into the next campaign.

Case Stories

Case studies are only useful when they reveal the operating truth: where things got messy, what the team changed, and how measurement proved the change mattered. These stories stick to publicly documented campaigns and focus on the analytics moments that shaped execution.

Gruvi’s holiday campaign when “attribution looked fine” but reality felt off

The panic didn’t come from a bad dashboard. It came from a familiar disconnect: the team could see purchases coming in, but they couldn’t prove the ads were truly causing enough of them to justify scaling. If they pushed spend without confidence, they risked burning budget on demand that would have arrived anyway. If they held back, they risked missing the most competitive window of the year.

Before the holidays, the brand was already operating in a noisy market where everybody was advertising and every competitor had a discount. That environment makes “last click” feel comforting because it’s simple, but it also makes it misleading because customers hop between channels. The campaign needed a measurement approach that could survive scrutiny, not just a report that looked neat.

The wall hit when the team tried to answer a simple question: how many outcomes were incremental? Standard attribution could show conversions, but it couldn’t cleanly answer “what would have happened without these ads.” That’s the moment when teams either drift into optimism or spiral into doubt.

The breakthrough was to treat measurement as part of the strategy, not a recap. Gruvi’s campaign results were evaluated using a Meta Conversion Lift study with search lift methodology, described directly in Meta’s Gruvi success story. That choice reframed the conversation from “we think it worked” to “we can isolate impact.”

Then came the execution journey: they ran the campaign through peak season, monitored performance, and used a structured measurement setup to avoid making changes based on noise. Instead of swapping everything at once, they could focus iterations on what the lift results and behavioral signals suggested. The work became less emotional and more operational, because the measurement design anchored decisions.

Of course, the final conflict showed up anyway: holiday campaigns amplify everything—creative fatigue, rising auction pressure, and stakeholder anxiety. Even with a solid measurement plan, the team still had to avoid the trap of “fixing” a campaign just because performance fluctuated day to day. Staying disciplined became its own competitive edge.

The dream outcome wasn’t a single number. It was confidence that the campaign had measurable incremental impact and that the team could explain why scaling made sense, which is the real payoff of the lift-based approach in the published case study. That’s what turns a social media campaign strategy into a repeatable business system instead of a seasonal gamble.

Turkish Airlines’ moment when social needed to prove it could drive intent, not just engagement

The tension wasn’t about creativity. It was about intent. The team needed to show that Meta exposure could move people toward search behavior and consideration, not just generate likes and views that look good in a deck.

In the background, the campaign lived in a reality where travelers research across devices and channels, and search often becomes the “receipt” of interest. If your social media campaign strategy can’t show influence on intent, it risks being treated as a top-of-funnel nice-to-have. That’s a brutal position when budgets tighten and everyone demands proof.

The wall arrived when the team faced the limits of standard reporting. Social metrics could describe attention, but they couldn’t reliably quantify what happened off-platform without a measurement design built for it. That gap creates a familiar argument: “Maybe it helped, but we can’t really tell.”

The shift came through structured lift measurement. Turkish Airlines measured search traffic driven by exposure to Meta ads using a Meta Conversion Lift study, with the approach referenced in Meta’s Turkish Airlines success story. Instead of guessing about influence, the campaign could quantify it.

The journey became a cross-channel learning loop: creative drove attention, lift measurement connected exposure to downstream intent, and the team could refine decisions with more confidence than engagement-only reporting allows. This is where strategy becomes practical—because you can optimize for intent signals rather than chasing cheap engagement. When search behavior becomes part of your measurement story, the campaign gains a more credible business narrative.

Then the inevitable conflict showed up: lift studies create pressure to interpret results correctly. A team can misread lift, over-credit a single variable, or scale too aggressively without checking whether the learning generalizes. The discipline is to treat lift results as a guide for the next iteration, not a trophy for the last one.

The dream outcome is that social stops being judged as “social.” It starts being judged as a measurable driver of demand and intent, exactly the kind of reframing described in the published Meta case documentation. That’s the difference between reporting activity and proving impact.

Professional Promotion

“Promotion” here isn’t about hyping a campaign. It’s about promoting your work internally or to clients in a way that earns trust and protects budget. A professional social media campaign strategy doesn’t just perform—it communicates performance clearly enough that the work gets repeated, funded, and scaled.

Turn analytics into an asset, not a chore

The goal of reporting is to make decisions easier. A clean report tells a story: what you tried, what happened, what you learned, and what you’re doing next. It should feel like a confident narrative of experimentation, not a dump of metrics.

- One page of context: goal, audience, offer, channels, and what “success” means.

- One page of learning: winning angles, losing angles, and the variables that caused change.

- One page of next actions: what you’ll scale, what you’ll pause, what you’ll rebuild, and what you’ll test next.

When stakes are high, prove causality

If the campaign will be judged by business outcomes, consider measurement methods built to show incremental impact. TikTok’s overview of Conversion Lift Studies and Meta’s explanation of Conversion Lift testing give you language to explain why lift is different from attribution, and why the distinction matters when budgets are on the line.

Benchmark with care so you don’t undermine your own work

Benchmarks are powerful when you use them to explain context, not to excuse performance. Triangulate across sources when you set expectations, for example by pairing Socialinsider’s platform benchmarks in its 2026 report with the methodology-heavy benchmarking in Rival IQ’s 2025 report. Presenting benchmarks this way signals competence instead of cherry-picking.

In Part 5, we’ll zoom out from analytics into the broader ecosystem: how creators, paid social, email, search, and community fit together so your social media campaign strategy doesn’t operate like an isolated island.

Advanced Strategies

Once the basics are working, scaling a social media campaign strategy is less about “more content” and more about control: controlled expansion of audiences, controlled expansion of creative variety, and controlled expansion of spend without breaking measurement. The advanced move is to treat scale as a series of small, safe unlocks rather than one big budget jump.

Creative multipliers: scale variety, not just volume

Many campaigns stall because they run out of fresh angles before they run out of budget. Instead of producing endless minor variations, scale by creating a library of distinct “message archetypes” that can each carry the offer in a different way: demonstration, objection-handling, comparison, social proof, behind-the-scenes, expert explanation, and customer stories.

When you refresh creative on a predictable cadence, you protect performance from fatigue while keeping learning clean. TikTok’s Quay case study explicitly describes refreshing creatives every 7–14 days, which is useful not as a universal rule, but as a concrete example of how high-tempo creative operations support sustained performance.

Incrementality-first scaling: prove lift before you pour fuel

At small budgets, attribution debates are annoying. At scale, they’re expensive. Advanced teams use lift methodologies to answer the only question that matters when budgets increase: what did the campaign cause that wouldn’t have happened otherwise?

TikTok frames this as experimentation-driven incrementality in its overview of Conversion Lift Studies, and Meta uses the same logic in its explanation of Conversion Lift testing. Even if you don’t run a full lift study, the mindset changes decisions: you stop optimizing for “pretty” platform metrics and start optimizing for provable business impact.
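To make “what would have happened otherwise” concrete, here is a minimal Python sketch of the arithmetic behind a two-group readout: people exposed to ads (test) versus a randomized holdout (control). The function name, group sizes, and conversion counts are hypothetical. Real lift studies on Meta or TikTok handle randomization, significance testing, and reporting for you; this only shows the core comparison.

```python
from dataclasses import dataclass


@dataclass
class LiftResult:
    test_rate: float                # conversion rate in the ad-exposed (test) group
    control_rate: float             # conversion rate in the holdout (control) group
    incremental_conversions: float  # test conversions above the holdout baseline
    relative_lift: float            # (test_rate - control_rate) / control_rate


def conversion_lift(test_users: int, test_conversions: int,
                    control_users: int, control_conversions: int) -> LiftResult:
    """Point-estimate lift from a randomized test/holdout split (no significance test)."""
    if test_users <= 0 or control_users <= 0:
        raise ValueError("both groups need at least one user")
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    if control_rate == 0:
        raise ValueError("control group has no conversions; relative lift is undefined")
    # Baseline: what the test group would likely have done without ads,
    # estimated by applying the holdout's conversion rate to the test group's size.
    baseline = control_rate * test_users
    incremental = test_conversions - baseline
    relative = (test_rate - control_rate) / control_rate
    return LiftResult(test_rate, control_rate, incremental, relative)


# Hypothetical counts for illustration only; not from any published study.
result = conversion_lift(test_users=200_000, test_conversions=2_400,
                         control_users=50_000, control_conversions=500)
print(f"Incremental conversions: {result.incremental_conversions:.0f}")
print(f"Relative lift: {result.relative_lift:.0%}")
```

With these made-up counts, the holdout suggests the test group would have converted about 2,000 times without ads, so roughly 400 conversions (a 20% relative lift) look incremental. Whether a gap like that is statistically reliable is exactly what a formal lift study is designed to tell you.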

Creator distribution as a scaling channel, not a tactic

When you scale beyond your owned audience, creators often become the fastest trust accelerator. They shorten the time it takes for someone to believe your claim, because the message arrives with a human face and a community context.

This shift also shows up in budget reality: U.S. creator ad spend is projected to reach $37B in 2025, according to IAB’s report overview and as summarized in mainstream coverage such as Business Insider’s breakdown. If your scaling plan excludes creators entirely, you’re often choosing a slower route to reach and belief.

Omnichannel halo: scale what you can’t see in last-click

As budgets rise, social’s impact increasingly shows up outside “direct” conversions: search lift, marketplace lift, and in-store behavior. If you only measure direct outcomes, you will under-invest in the parts of your campaign that create demand.

Experimentation-based analysis across the industry points to the same halo effect. Haus’ TikTok report, for example, emphasizes that TikTok’s value can look materially larger when brands measure omnichannel impact instead of only DTC conversions.

Scaling Framework

Scaling is a design problem. You’re designing a system that can handle more spend, more creative, more channels, and more stakeholders without losing the single thing that makes campaigns improve: a clean learning loop.

Stage 1: Stabilize one winning loop

Before you scale budget, stabilize one repeatable loop: a message angle that earns attention, a proof format that builds belief, and a journey that converts consistently. If you can’t repeat a result, you can’t scale it—you can only amplify randomness.

Stage 2: Expand with controlled variables

Scale by changing one dimension at a time. Expand audience while holding creative stable, or expand creative while holding audience stable. This prevents the common scaling failure where performance drops and nobody can explain whether the cause was targeting, creative, frequency, seasonality, or the offer.

Stage 3: Build a creative pipeline that never runs dry

At scale, creative becomes a supply chain. If your campaign depends on one “hero” asset, you will eventually hit fatigue and stall. A better system is a weekly pipeline: new hooks, new proofs, new creator variations, and a consistent process for turning community objections into new content.

Stage 4: Upgrade measurement to match budget size

As spend grows, your measurement needs to graduate from descriptive reporting to causal confidence. That’s where lift tests and experimental design matter, because they help you defend budget decisions with evidence rather than screenshots.

Several of Meta’s published case studies reference multi-cell lift testing in real campaigns: Getnet’s results were determined with a multi-cell Meta Conversion Lift test, and Zalando’s campaign was evaluated the same way. Those examples are valuable because they show what “measurement maturity” looks like when the goal is to scale with confidence.

Growth Optimization

Growth optimization is what keeps scaling profitable. It’s the discipline of improving unit economics while expanding reach, so performance doesn’t collapse the moment you push harder.

Design your signals so teams optimize the right behavior

When a campaign scales, teams often drift into optimizing whatever is easiest to see. That’s how you end up celebrating reach while sales stall. Use a simple hierarchy: attention signals (retention, saves, shares), intent signals (click quality, messages, profile actions), and outcome signals (qualified leads, purchases, bookings).

Benchmarks can help you sanity-check whether engagement patterns are shifting platform-wide, which matters when stakeholders panic over a dip that’s actually industry-wide. Rival IQ’s 2025 Social Media Industry Benchmark Report notes that engagement rates fell across major platforms, and Socialinsider’s 2026 update provides current platform-level engagement reference points, such as TikTok at 3.70% and Instagram at 0.48%.

Manage frequency and fatigue like a business constraint

Scaling increases repetition. Repetition increases fatigue. Fatigue lowers performance and forces you to pay more for the same outcomes.

This is why creative refresh cadence and format variety matter so much at scale. Emplifi’s 2025 Social Media Benchmarks Report highlights the growing importance of short-form video and provides data-based context on video engagement patterns across platforms.

Use budget guardrails that prevent “panic scaling”

Panic scaling is when a campaign looks promising and someone doubles the budget overnight. Sometimes you get lucky. More often, performance gets worse because delivery expands too fast into lower-quality inventory or less-relevant audiences.

Guardrails keep scaling calm: increase budgets in steps, keep a control group when possible, and protect winning creatives from being drowned out by too many experiments at once.
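The “increase budgets in steps” guardrail can be written down as a simple rule so nobody has to decide it under pressure. The sketch below is a hypothetical policy, not a platform feature: the function name, the 20% step size, and the 10% cost-per-acquisition (CPA) tolerance are illustrative assumptions you would tune to your own account.

```python
def next_budget(current_budget: float, current_cpa: float, target_cpa: float,
                step: float = 0.20, tolerance: float = 0.10) -> float:
    """Return the next budget under a hypothetical stepped-scaling guardrail.

    Assumed rules (illustrative, tune to your own account):
    - CPA at or below target: raise budget by one step (default +20%).
    - CPA above target but within tolerance (default +10%): hold budget steady.
    - CPA beyond tolerance: undo one step rather than cutting sharply.
    """
    if current_cpa <= target_cpa:
        return round(current_budget * (1 + step), 2)
    if current_cpa <= target_cpa * (1 + tolerance):
        return current_budget
    return round(current_budget / (1 + step), 2)


# Hypothetical weekly CPA readings against a $40 target.
budget = 1_000.0
for week, cpa in enumerate([42.0, 39.0, 43.0, 50.0], start=1):
    budget = next_budget(budget, cpa, target_cpa=40.0)
    print(f"Week {week}: CPA ${cpa:.2f} -> next budget ${budget:,.2f}")
```

Each week the budget moves at most one step, so a single good or bad reading can’t double or halve spend overnight. Stepping back by dividing by (1 + step) simply reverses the last increase.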

Optimize the mix: paid, creators, community, and owned channels

Scale gets easier when you don’t ask one channel to do every job. Paid can drive consistent reach, creators can build belief, community can remove friction, and owned channels can capture demand for cheaper follow-up. A social media campaign strategy scales best when each channel is assigned a clear role in the journey.

Scaling Stories

Scaling stories matter when they reveal the messy moments: the pressure, the measurement uncertainty, the decision that changed the trajectory, and the operational discipline that kept the campaign from breaking. The two stories below are built from publicly documented case studies.

Zalando’s two-week sprint when scaling required proof, not confidence

The pressure hit fast. A short campaign window meant the team couldn’t “learn slowly” and fix things later. If the approach didn’t work quickly, the opportunity would pass and the team would be left defending spend with little more than platform metrics.

There was also a reputational risk inside the organization. When budgets scale, scrutiny scales too. A campaign can look successful in a dashboard and still lose support if stakeholders don’t trust what those numbers mean.

The backstory was a familiar one: a major retailer operating in a competitive environment where efficiency matters and growth needs to be explainable. The campaign wasn’t just about getting attention; it needed to demonstrate incremental business impact. That demand for proof is what separates small tests from scalable systems.

Under the surface, the team was also dealing with complexity. Multiple creatives, multiple placements, and rapid delivery can quickly muddy learning. Without structured measurement, teams can’t explain whether improvements came from creative, targeting, timing, or sheer spend volume.

The wall arrived at the exact moment scaling decisions needed to be made. It’s one thing to see conversions. It’s another to show that the campaign caused incremental results rather than simply capturing demand that would have happened anyway. Without that clarity, scaling becomes a gamble disguised as optimism.

The ambiguity created tension. Stakeholders can accept uncertainty when budgets are small, but they rarely accept it when budgets grow. The campaign needed a way to turn uncertainty into evidence quickly enough to matter.

The epiphany was to treat measurement design as part of the campaign, not a post-campaign report. Zalando determined results using a multi-cell Meta Conversion Lift test, described in Meta’s Zalando success story. That choice reframed the conversation from “performance looks good” to “impact is measurable.”

Once the team had a lift framework, decisions became cleaner. Creative and targeting changes could be evaluated against a stronger standard than last-click attribution. The campaign could be scaled with a clearer sense of what was actually working.

The journey then became operational. They executed the campaign within the defined window, monitored outcomes, and used the measurement setup to support confident decisions. Instead of debating gut feelings, they could tie decisions to evidence and keep execution aligned with the strategy.

They also gained a stronger internal narrative. When teams can explain impact clearly, they protect budget from being reallocated to channels that merely “look” easier to measure. That narrative is often what determines whether campaigns scale into programs.

The final conflict showed up in the place it always does: scaling creates noise. More spend introduces more variability, and stakeholders often demand rapid changes when they see day-to-day fluctuations. Without discipline, teams can break a winning system by “fixing” it too aggressively.

The team had to resist the impulse to change everything at once. Scaling required patience and controlled iterations so the learning stayed interpretable. The measurement approach made that discipline possible because it created a higher-confidence baseline.

The dream outcome was not just a positive result—it was a scalable standard. The campaign produced lift results that could be used to justify future investment and improve future execution. That’s the real win: a social media campaign strategy that can scale because it can be defended, as documented in the published case study.

Getnet’s long-run campaign when scaling meant surviving months of real-world variability

The campaign didn’t face one dramatic launch day. It faced something harder: months of reality. Budgets, creative, and audience behavior don’t stay stable from October through March, and performance teams don’t get to pause the market when results wobble.

The tension built slowly. Each week added more data and more opinions, and that’s exactly when campaigns drift into reactive decision-making. The risk wasn’t only wasted spend—it was losing confidence in the strategy because the story became too messy to explain.

The backstory was a sustained campaign period with real business consequences. When a campaign runs for months, stakeholders expect more than “we ran ads.” They expect learning, clarity, and a path to scale what works while controlling what doesn’t.

Operationally, long-run campaigns are vulnerable to measurement confusion. Teams change creatives, audiences shift, and external factors influence demand. Without a disciplined system, you can’t tell whether you’re improving performance or just experiencing natural market swings.

The wall arrived when the team needed to prove results under scrutiny. Sustained campaigns generate questions: is performance improving because of strategy, or because of seasonality? Are we getting true incremental outcomes, or are we paying for conversions we would have received anyway?

This is where many campaigns collapse into politics. People argue about channel value, teams defend their work with cherry-picked metrics, and decision-making slows down. Scaling can’t happen in that environment because nobody trusts the foundation.

The epiphany was to anchor results in a structured measurement approach. Getnet determined results using a multi-cell Meta Conversion Lift test, documented in Meta’s Getnet success story. Instead of relying on “what the dashboard says,” the campaign could evaluate lift with a stronger causal lens.

That measurement anchor changed execution behavior. The team could make iterations without losing interpretability because they weren’t changing everything blindly. It also gave stakeholders a more credible reason to stay the course when performance fluctuated day to day.

The journey became a disciplined loop: maintain creative supply, monitor meaningful signals, and document decisions. Over time, this turns scaling into a controlled process rather than a series of emotional budget swings. The campaign also had a stable way to communicate progress, which is crucial when the timeline is measured in months.

As the campaign progressed, the system had to absorb real friction. Creative fatigue, audience saturation, and shifting market conditions test the integrity of any strategy. A campaign that can’t adapt without losing measurement clarity will eventually stall.

The final conflict was exactly that friction. When performance teams feel pressure, they often overcorrect—changing targeting, messaging, and budgets at the same time. That can create a temporary bump while destroying learning, making the next decision harder instead of easier.

The team’s challenge was to keep changes controlled and explainable. A lift-based measurement approach helps because it keeps the focus on incremental outcomes rather than superficial wins. It also gives stakeholders a reason to support disciplined iteration instead of demanding constant reinvention.

The dream outcome was sustained confidence. The campaign didn’t just generate results; it produced a decision system that can be reused in future efforts. That’s what scaling really is: building a social media campaign strategy that survives time, variability, and scrutiny, supported by the documented case study narrative.

Explain scaling as a system, not a mood

Stakeholders don’t want to hear “we feel like it’s working.” They want to hear what changed, why you believe it caused lift, and what guardrails protect the budget. Lift frameworks help you communicate causality, which is why platform explainers such as TikTok’s Conversion Lift Study overview and Meta’s Conversion Lift explanation are useful even for non-technical audiences.

Position creator investment like a channel allocation decision

Creators are easier to sell internally when you frame them as a distribution channel with measurable objectives, not as “influencer vibes.” Budget context helps: the IAB’s projection of $37B in U.S. creator ad spend in 2025 gives stakeholders a market-level reference for why creators are no longer experimental.

Use benchmarks to calm panic, not to excuse weak strategy

When performance dips, teams often assume they failed. Sometimes the platform environment changed instead. Benchmarking sources can help you separate “our campaign issue” from “market shift,” for example by combining Rival IQ’s platform trend notes in its 2025 benchmark report with current platform engagement references in Socialinsider’s 2026 benchmarks.

Deliver a scaling readout that makes the next decision obvious

- What scaled: which audience, which creative archetype, which distribution lever.

- Why it scaled: the signals that indicated incremental value, not just higher volume.

- What protected efficiency: frequency controls, refresh cadence, and budget guardrails.

- What’s next: the next controlled expansion and the next measurement upgrade.

In Part 6, we’ll pull the entire series together with a practical wrap-up, answer the most common questions in an FAQ, and show how to turn this framework into a repeatable offer you can sell as a freelance marketer.

Future Trends

The next wave of social media campaign strategy work is going to feel less like “posting” and more like operating a living system: creative supply chains, creator partnerships, fast community response, and measurement that can survive attribution skepticism. The brands and freelancers who win won’t be the ones who automate the most. They’ll be the ones who stay unmistakably human while using AI to move faster and learn quicker.

Human-made content becomes the differentiator

As feeds fill up with low-effort AI content, audiences are getting pickier about what feels real. That shift shows up directly in consumer-facing research that positions human-generated content as the #1 priority for users in 2026. Hootsuite’s Social Media Trends 2026 frames the same tension as “human-made authenticity wins, but AI tools are table stakes.” That framing is useful operationally: use AI for speed, but make your campaign feel like it came from a real point of view.

Provenance and content labeling get messier before they get cleaner

Platforms are experimenting with ways to label AI-generated media, but the implementation is inconsistent and the metadata often gets stripped as content moves across apps. Coverage of AI authenticity labels captures the reality check: C2PA-style labeling is being promoted but applied unevenly. For campaign teams, the practical takeaway is simple: build trust into the content itself with proof, transparency, and clear claims, because relying on platform labels alone is fragile.

Creator partnerships shift from “awareness” to measurable performance

Creator spend is already being treated like a core media channel. U.S. creator ad spend is projected to reach $37B in 2025, according to IAB’s report overview and Business Insider’s coverage. The trend isn’t only “more influencer work.” It’s more accountability: clearer briefs, cleaner tracking, stronger creative iteration, and partnerships designed for outcomes instead of vibes.

Social search becomes a planning constraint

More discovery is happening inside social platforms, which changes how you write hooks, captions, on-screen text, and even how you name your creative files. Recent stat roundups, such as Sprout’s 2026 social media statistics collection, discuss social platforms as major discovery engines. A modern social media campaign strategy needs searchable creative: specific language, clear benefits, and content that answers real questions instead of only trying to entertain.

Rapid-response campaigns become normal operating behavior

Campaign calendars are getting disrupted by real-time culture and real-time customer expectations. Hootsuite’s 2026 trends snapshot describes this rapid-response behavior as “fastvertising.” At the same time, customer expectations stay intense: nearly three-quarters of consumers expect a response within 24 hours, according to Sprout’s 2025 business impact analysis. The operational implication is that “campaign” and “community” can’t be separate teams anymore; response is part of the campaign experience.

Strategic Framework Recap

If you want a social media campaign strategy that performs consistently, treat it like an ecosystem. Each part supports the others, and the system gets stronger every time you run the loop and document what you learned.

- Outcome first: one primary goal that forces trade-offs and keeps decisions consistent.

- Audience clarity: a defined segment with a real trigger, barrier, and motivation.

- Offer and narrative: a promise people can repeat and proof that makes belief easy.

- Creative system: intentional variation (hooks, angles, proof formats) without chaotic versioning.

- Distribution mix: paid, creators, and community each assigned a job in the journey.

- Measurement that drives decisions: metrics tied to actions, plus incrementality thinking when stakes are high, as explained in platform measurement resources such as Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study guide.

The point of the framework isn’t to make campaigns feel “official.” It’s to make them explainable, repeatable, and scalable—so you can do better work faster without relying on luck.

FAQ

What counts as a campaign versus regular content?

A campaign is a coordinated push with one objective, one central idea, and a defined time window. Regular content can be ongoing and exploratory. In a social media campaign strategy, the campaign exists to move a measurable outcome, not just to fill the calendar.

How long should a social campaign run?

Long enough to learn, not so long that creative fatigue destroys performance. A practical rule is to commit to a learning window (2–4 weeks is common), then refresh creative and adjust distribution based on what’s working. If you’re using creators, you may run shorter bursts around drops and longer arcs around trust building.

What metrics matter most for campaign success?

The best metrics are the ones that change your next decision. Early, you’re looking for attention and understanding (retention, saves, shares, comment themes). Mid-campaign, you’re looking for intent (click quality, messages, profile actions). Ultimately, you’re looking for outcomes (qualified leads, purchases, bookings). When stakes are high, add incrementality measurement so you’re not confusing correlation with causality, using frameworks explained in Meta’s lift testing guidance.

What if engagement is high but sales are low?

That usually means the campaign is entertaining but not persuasive. Move proof earlier, tighten the promise, and remove friction after the click. Also check whether your content is attracting the wrong audience—big engagement from people who will never buy is a common trap.

How many creative versions should I produce?

Enough to test meaningfully, not so many that you can’t track what changed. Start with a small set of distinct angles (3–5) and build 2–4 variations per angle. The goal is structured learning: you should be able to say why something won, not just that it won.
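One way to keep that learning structured is to generate the test matrix up front and encode the angle and variation in each asset’s name, so every result traces back to what changed. The function name, angle labels, naming pattern, and campaign code below are hypothetical, not a standard. Four angles with three variations each gives twelve versions, which sits inside the range of 3 to 5 angles with 2 to 4 variations each.

```python
from itertools import product


def build_creative_matrix(campaign: str, angles: list[str],
                          variations_per_angle: int) -> list[dict[str, str]]:
    """Create one entry per (angle, variation) pair with a traceable name.

    Naming pattern (an assumption, adapt to your own convention):
    <campaign>_<angle>_v<number>
    """
    matrix = []
    for angle, number in product(angles, range(1, variations_per_angle + 1)):
        matrix.append({
            "angle": angle,
            "variation": f"v{number}",
            "name": f"{campaign}_{angle}_v{number}",
        })
    return matrix


# Hypothetical campaign code and angle labels.
angles = ["demo", "objection", "socialproof", "comparison"]
creatives = build_creative_matrix("spring25", angles, variations_per_angle=3)
print(len(creatives))
for creative in creatives[:3]:
    print(creative["name"])
```

If each variation within an angle changes only one element, such as the hook, a winning asset’s name tells you both which angle worked and which change inside that angle made the difference.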

Should every campaign use creators?

Not every campaign needs creators, but many campaigns scale faster with them because they compress trust. Creator investment is increasingly treated like a core channel, supported by spending projections like IAB’s creator ad spend outlook. If your offer requires belief (most do), creators can make the proof feel more human and more immediate.

How do I handle negative comments during a campaign?

Don’t hide from them. Treat them as objections you need to answer publicly with clarity and calm proof. Fast, thoughtful response is part of performance because it shapes trust in real time, and expectations around response speed remain high in consumer research discussed in Sprout’s analysis of social’s business impact.

When should I scale the budget on a winning campaign?

Scale when you have a repeatable loop: an angle that earns attention, proof that builds belief, and a journey that converts without breaking. Then scale in steps and protect learning by changing one meaningful variable at a time. If you can run lift tests, scale becomes easier to defend with causal evidence rather than “dashboard confidence,” as explained in TikTok’s lift study overview.

What tools are essential for running campaigns professionally?

You need a minimum stack that supports execution rhythm: scheduling and approvals, community management, creative version control, and reporting that ties metrics to decisions. If you’re working with clients, the “essential tool” is often documentation—clear briefs, clear naming conventions, and a decision log that survives pressure.

How do I prove ROI without getting trapped in attribution arguments?

Start by agreeing on what “success” means before launch. Use platform-native reporting to monitor performance, but add experimentation thinking when the question becomes “did this cause incremental results?” Lift testing frameworks exist specifically for this problem and are explained in platform documentation like Meta’s Conversion Lift resources. Even when you can’t run a formal lift test, you can still act like an experimenter: keep controls where you can, change one variable at a time, and document what happened.

How do I use AI without producing “AI slop” content?

Use AI to accelerate production and iteration, but keep the campaign voice grounded in real experiences, real proof, and clear claims. The growing concern about low-quality synthetic content and inconsistent labeling is reflected in coverage of provenance systems and AI labels. If your content feels hollow, the market will treat it as disposable.

Work With Professionals

You can build a strong social media campaign strategy on your own, but there’s a moment every serious freelancer hits: you’re good at the work, yet finding consistent clients feels like a second full-time job. You’re pitching into crowded marketplaces, competing on price, and watching project fees eat into the money you worked hard to earn.

That’s the exact pain Markework is built to remove. The platform is positioned as a focused marketing marketplace where you can build a profile, apply to roles, and message companies directly with no middleman and no project fees. Instead of paying a percentage of every invoice, you use simple monthly plans designed to keep your costs predictable, laid out on Markework’s pricing page.

And the work is not hypothetical. The marketplace publicly shows that there are over 1,000 active listings visible right now, with full access unlocked through membership. If you’re serious about landing better clients, this is the kind of environment that makes momentum possible: clear listings, direct communication, and a marketplace focused specifically on marketing roles and projects, explained in the “Why Us” overview.

If you want your next month to look different—less chasing, more closing—build a profile that shows proof, rates, and the exact campaign outcomes you deliver. Then use your social media campaign strategy framework as your edge: a clear offer, a clear process, and a results story that makes hiring you feel like the safe decision.

markework.com