Hiring social media advertising companies used to be mostly about buying attention. Now it’s about buying clarity.

The platforms move fast, targeting rules keep tightening, and “good results” can disappear the moment attribution changes. That’s why the best teams treat a social partner less like a vendor and more like a system: strategy, creative, media, measurement, and governance working together.

This guide gives you that system. Part 1 defines what social media advertising companies actually do today, why they matter more than ever, and the framework you can use to evaluate and manage them without getting buried in jargon.

Article Outline

- What Are Social Media Advertising Companies?

- Why Social Media Advertising Companies Matter

- Framework Overview

- Core Components

- Professional Implementation

What Are Social Media Advertising Companies?

Social media advertising companies are specialist teams that plan, build, run, and prove the performance of paid campaigns across platforms like Meta, TikTok, LinkedIn, Pinterest, Snapchat, and others. Some are full-service agencies; others are boutiques focused on one platform, one industry, or one capability (like creative testing or measurement).

In practice, they typically do five jobs at once:

- Translate business goals into paid social strategy (what outcomes matter, which audiences to prioritize, and what “success” means).

- Build creative that fits the platform (format, pacing, hooks, offers, and landing page alignment).

- Operate the ad systems (campaign structure, bidding, targeting, feed health, pixel/server events, and optimization).

- Measure what’s real (incrementality, attribution alignment, and reporting that matches how the business actually makes money).

- Protect the brand (policy compliance, brand safety, fraud prevention, and process controls).

If you’ve ever felt like paid social is “working” but you can’t explain why, that’s exactly the gap a good partner is supposed to close. And in 2026, closing that gap is the work.

Why Social Media Advertising Companies Matter

Paid social is no longer a side channel. It’s one of the biggest line items in modern marketing, and the ecosystem keeps expanding.

In the U.S. alone, social media advertising revenue reached $88.8B in 2024, a sharp rebound that signaled budgets flowing back into social formats after a choppy period. That’s not “nice to have” spend—those dollars typically come with scrutiny, internal politics, and pressure to show impact.

Globally, social investment has also climbed into the “can’t ignore it” tier. WARC projected global social spend of $247.3B in 2024, reflecting how central social has become to brand and demand strategies worldwide.

At the same time, platform economics push advertisers toward more automation, more inventory, and more AI-driven delivery. Meta’s own filings show how massive that machine is: Meta reported $196.175B in advertising revenue for 2025. When a platform generates that scale of revenue, it will relentlessly optimize its marketplace—sometimes in ways that help you, sometimes in ways that hide what’s really driving results.

Finally, the compliance and transparency environment is tightening. For example, EU regulators have pushed platforms on ad transparency requirements under the Digital Services Act, including actions such as the European Commission’s preliminary findings against TikTok related to ad repository transparency. Whether you’re a brand or an agency, this raises the bar on documentation, targeting rationale, and audit readiness.

That combination—big budgets, fast-changing ad tech, and higher accountability—is why skilled social media advertising companies matter. They reduce waste, prevent preventable mistakes, and help you defend performance with evidence instead of screenshots.

Framework Overview

Most people choose a social partner based on surface signals: a famous client logo, a confident audit, or a cheap management fee. That’s how you end up with “busy” campaigns that don’t move the business.

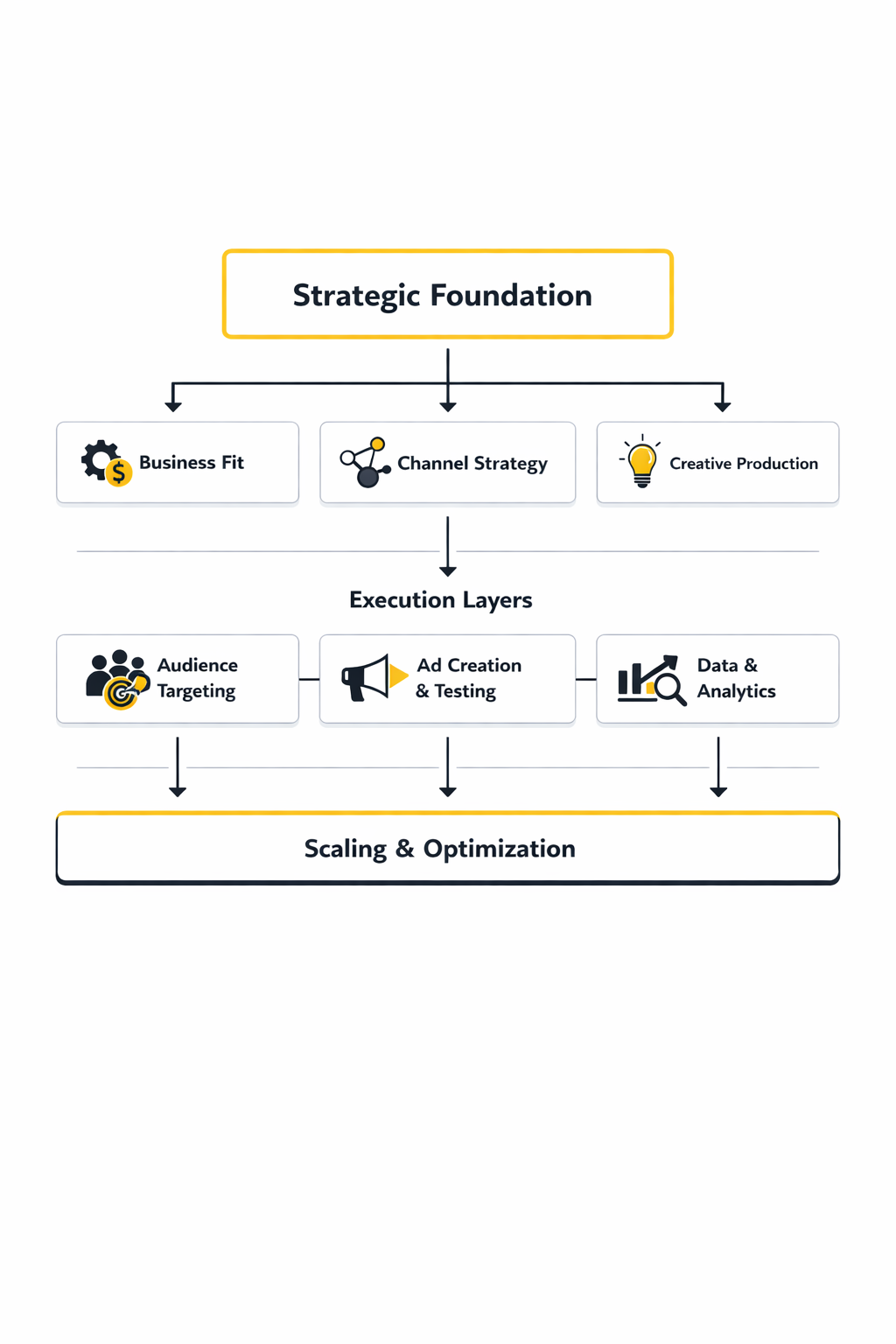

This framework is designed to make the choice (and the working relationship) more objective. You’ll evaluate a partner across five connected layers:

- Business fit: Do they understand your margins, sales cycle, and constraints—so they can optimize for what actually matters?

- Channel strategy: Can they design an account structure that matches the customer journey (not just platform defaults)?

- Creative engine: Do they have a repeatable way to produce and iterate creative at the pace social demands?

- Measurement truth: Can they separate correlation from causation using the right tools and tests for your scale?

- Operating system: Do they run with clear roles, QA, documentation, and decision rules—or is everything improvisation?

When these layers connect, performance becomes easier to predict and easier to defend internally. When they don’t, you get random spikes, unexplained drops, and reporting that never quite matches the finance team’s reality.

Core Components

Regardless of whether you’re working with a global agency, a specialist studio, or a freelance operator, high-quality social media advertising companies tend to share the same core components. The difference is how mature each component is, and how well they connect them.

1) Strategy That Starts With Unit Economics

Strong strategy starts with the numbers your business can’t negotiate with: contribution margin, payback window, and sales cycle length. Without that, “optimize for ROAS” becomes a shortcut that can quietly destroy growth (especially when attribution credits the wrong touchpoint).
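To make that concrete, here is a minimal sketch of the arithmetic behind two of those numbers. It assumes contribution margin is expressed as a fraction of revenue and that payback is measured in months of per-customer contribution. The function names and sample inputs are hypothetical, chosen only for illustration.

```python
def break_even_roas(contribution_margin: float) -> float:
    """Revenue per ad dollar at which contribution margin exactly covers ad spend.

    contribution_margin is a fraction of revenue (0.25 means 25%).
    """
    if not 0 < contribution_margin <= 1:
        raise ValueError("contribution_margin must be in (0, 1]")
    return 1.0 / contribution_margin


def payback_months(cac: float, monthly_contribution_per_customer: float) -> float:
    """Months of contribution needed to recover customer acquisition cost (CAC)."""
    if monthly_contribution_per_customer <= 0:
        raise ValueError("monthly contribution must be positive")
    return cac / monthly_contribution_per_customer


if __name__ == "__main__":
    # Hypothetical inputs, not benchmarks.
    print(break_even_roas(0.25))        # 4.0
    print(payback_months(120.0, 30.0))  # 4.0
```

With a 25% contribution margin, a reported ROAS of 3.0 looks healthy on a dashboard but sits below the 4.0 break-even, which is the kind of gap a unit-economics-first strategy is meant to surface.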

2) Creative Built for Speed and Signal

Creative is the main lever most teams under-invest in because it’s harder to systematize than media buying. The best partners treat creative like a testable product: clear hypotheses, rapid iterations, and disciplined learning loops—so you’re not guessing why something worked.

3) Media Buying That Balances Control and Automation

Platforms increasingly reward consolidated signals and smart automation, but “fully automated” can also mean “fully unexplainable.” A professional operator knows where to simplify structure for learning, and where to keep controls for brand safety, pacing, and offer separation.

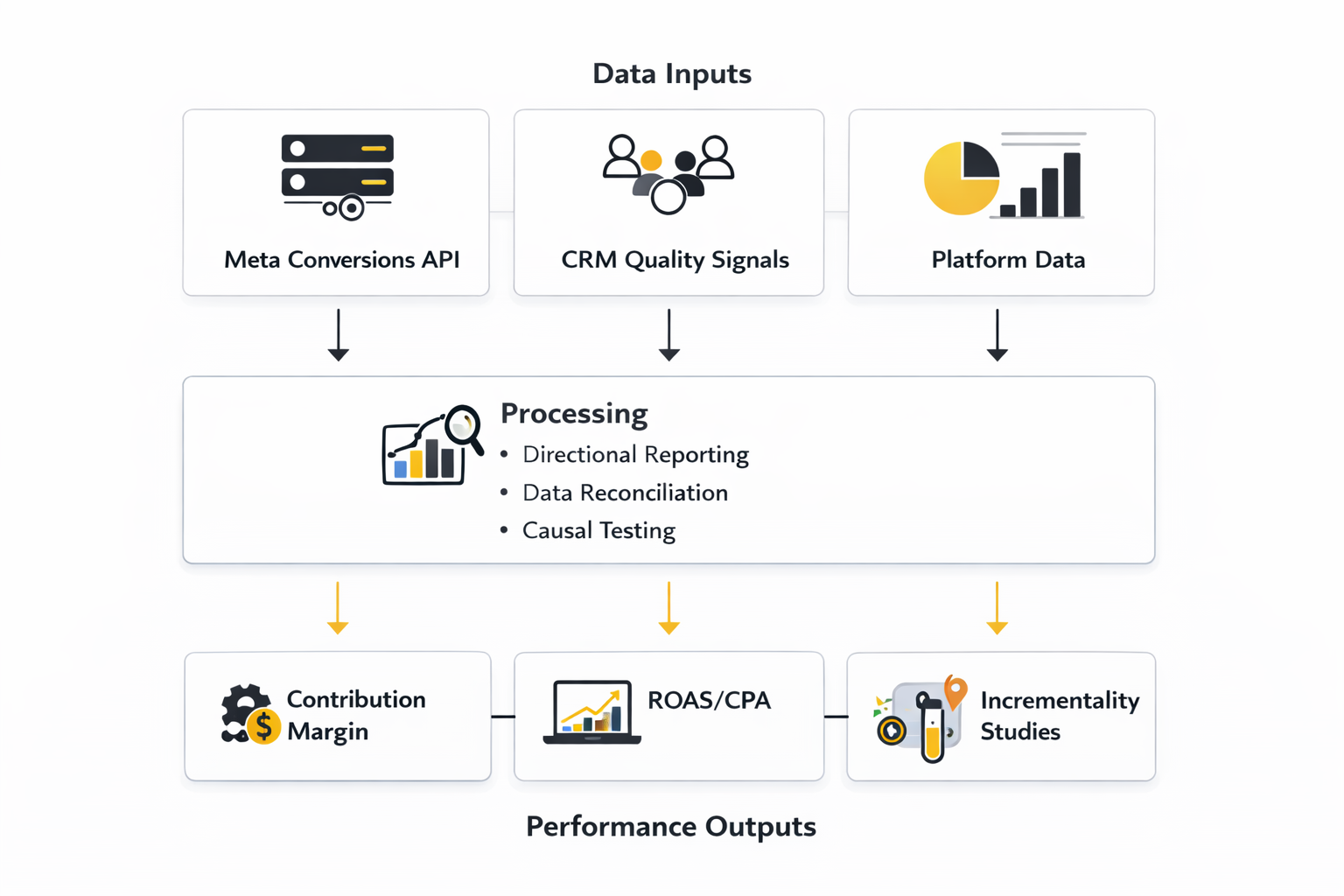

4) Measurement That Proves Incremental Impact

Attribution tells a story; incrementality tests whether the story is true. If your budget is meaningful, you eventually need causal measurement. Tools like Meta Conversion Lift studies exist for a reason: they’re designed to estimate what would have happened without the ads, not just what happened after a click.
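The core arithmetic of a holdout-style test can be sketched in a few lines. This is a simplified illustration, not Meta's Conversion Lift methodology: it assumes a randomized test group that saw ads and a control group that did not, and it ignores confidence intervals and statistical significance, which any real study needs. All names and numbers are hypothetical.

```python
def incremental_lift(test_conversions: int, test_size: int,
                     control_conversions: int, control_size: int,
                     spend: float) -> dict:
    """Estimate incremental conversions from a simple test/control split."""
    test_rate = test_conversions / test_size
    control_rate = control_conversions / control_size

    # Conversions the test group would likely have produced without ads,
    # assuming the control rate is a fair baseline.
    expected_without_ads = control_rate * test_size
    incremental = test_conversions - expected_without_ads

    return {
        "incremental_conversions": incremental,
        # Relative lift is undefined when the control group had no conversions.
        "relative_lift": (test_rate - control_rate) / control_rate if control_rate else None,
        # Cost per incremental conversion only makes sense for positive lift.
        "cost_per_incremental": spend / incremental if incremental > 0 else None,
    }


if __name__ == "__main__":
    # Hypothetical study: 1,200 vs. 1,000 conversions per 100,000 people.
    print(incremental_lift(1200, 100_000, 1000, 100_000, spend=10_000.0))
```

In this made-up example, attribution might credit all 1,200 conversions to ads, while the holdout comparison suggests roughly 200 were incremental. That gap is the difference between the story and the test.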

5) Governance That Prevents Expensive “Oops” Moments

Brand safety, policy compliance, tracking hygiene, and QA are not glamorous, but they are often the difference between steady growth and an account that collapses overnight. Governance also includes decision rights: who can change budgets, pause campaigns, or launch new messaging—and how those decisions get documented.

Professional Implementation

This is where most relationships with social media advertising companies either become a compounding advantage—or a monthly headache.

Professional implementation is less about secret tactics and more about getting the working model right:

- Briefing: A good brief makes tradeoffs explicit (speed vs. precision, volume vs. quality, brand voice vs. direct response). It also defines the business “north star” and the guardrails the partner must respect.

- Access and tracking: Clean pixel/server events, consistent naming conventions, and a clear map from campaign objectives to downstream outcomes. When this layer is weak, you can’t trust learning.

- Creative workflow: A predictable cadence for concepting, production, review, and iteration—so creative doesn’t become the bottleneck that starves the algorithm.

- Measurement plan: Reporting that ties to decisions. If a metric can’t change what you do next week, it shouldn’t dominate the dashboard. For larger budgets, plan for lift testing, not just platform attribution.

- Risk controls: Policies, brand safety, and documentation matter more as regulators push for transparency. Develop a habit of recording targeting logic, exclusions, and change logs—especially in sensitive categories.

One more reality: the best partners don’t promise permanent wins. They build a process that survives inevitable changes—creative fatigue, auction volatility, tracking shifts, and new platform defaults—so results don’t depend on luck.

Step-by-Step Implementation

When you hire social media advertising companies, the biggest risk isn’t “bad ads.” It’s a messy implementation that makes every result debatable. The goal of a step-by-step rollout is simple: create a clean signal path from ad exposure to business outcomes, then build a repeatable creative-and-optimization rhythm on top of that signal.

This is a practical sequence used by teams that need performance they can defend, not just performance they can screenshot.

Step 1: Lock the goal and the constraint

Start by agreeing on one primary outcome and one constraint. The outcome might be qualified pipeline, first purchases, trials that convert to paid, or store visits. The constraint might be margin, payback window, inventory, capacity, or brand safety requirements.

Write both down in plain language and treat them as non-negotiable. Doing so stops the common drift where social media advertising companies optimize what the platform can see, while the business cares about something else entirely.

Step 2: Build a tracking map that matches reality

Before any campaign goes live, map the events you’re going to optimize toward and the events you’ll use to validate quality. For many teams, that means pairing browser tracking with server-side events, then making sure deduplication is handled correctly.

- Meta: confirm event setup and server events through the Meta Conversions API documentation and validate that event parameters match what the platform expects.

- Tag control: if you need more control and resilience than browser-only tracking, implement a server container following Google Tag Manager server-side guidance.

- B2B conversions: if LinkedIn is in the mix, align your conversion rules and attribution settings using the LinkedIn Conversions API playbook.

Don’t treat this as a one-time checklist. Treat it like a release process: every change to events, naming, or attribution windows gets reviewed, documented, and tested.
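As an illustration of what "deduplication handled correctly" means, the sketch below collapses browser and server copies of the same conversion into one record. It assumes both copies share an event name and an event ID, which is the general pattern Meta documents for pairing Pixel and Conversions API events. The field names, the `source` values, and the function itself are hypothetical and run locally. This is a way to audit your own event logs, not the platform's implementation.

```python
from typing import Iterable


def deduplicate_events(events: Iterable[dict]) -> list[dict]:
    """Keep the first copy of each (event_name, event_id) pair."""
    seen: set[tuple[str, str]] = set()
    unique: list[dict] = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key in seen:
            continue  # same conversion already recorded from another source
        seen.add(key)
        unique.append(event)
    return unique


if __name__ == "__main__":
    # Hypothetical log: one purchase reported twice, one lead reported once.
    log = [
        {"event_name": "Purchase", "event_id": "order-1001", "source": "browser"},
        {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
        {"event_name": "Lead", "event_id": "form-77", "source": "server"},
    ]
    for event in deduplicate_events(log):
        print(event["event_name"], event["event_id"], event["source"])
```

If the browser and server copies carry different IDs, a check like this counts the purchase twice, which is exactly the kind of silent inflation a release-style review is meant to catch.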

Step 3: Align the offer, the landing page, and the measurement window

Paid social rarely fails because targeting is “wrong.” It fails because the offer doesn’t land, the landing page doesn’t convert, or the measurement window is mismatched to the buying cycle. If your product has a longer consideration period, expecting immediate last-click conversions will push the system toward low-quality shortcuts.

Match the campaign objective to the way your customers actually decide. Then make sure your tracking and reporting can see far enough downstream to judge quality, not just volume.

Step 4: Build a creative system, not a one-off shoot

Social platforms reward constant learning. That means creative has to move on a schedule, with a structure that makes learning possible.

- Create a creative brief template with one hypothesis per concept (the hook, the proof, the offer, the CTA).

- Define “tests” vs. “winners” so the team knows what gets protected and what gets iterated.

- Build modular assets (multiple hooks, multiple proofs, multiple CTAs) so you can remix without starting from zero.

This is where social media advertising companies either feel like a growth engine or a content bottleneck. The difference is whether creative has an operating cadence.

Step 5: Launch with guardrails and a learning plan

Launch isn’t “turn ads on.” Launch is the moment you decide what you’re willing to learn first. Keep the first iteration tight enough to diagnose, but not so tight that delivery can’t find an audience.

- Guardrails: budget caps, frequency checks, brand safety exclusions, and approval workflows.

- Learning plan: what you’ll test first (offer, hook, audience, landing), what you’ll hold constant, and how long you’ll wait before deciding.

- Measurement method: decide whether you’ll rely on platform reporting for early directional signals, or run lift-style validation when stakes are high, using tools like Meta Conversion Lift.

Execution Layers

The easiest way to keep an implementation clean is to separate execution into layers. Each layer has a different job, and mixing them is how teams end up “optimizing” their way into confusion.

Foundation Layer: Data, access, and governance

This layer covers access control, tracking integrity, and documentation. It includes server-side tagging decisions, event dictionaries, conversion definitions, and who can change what inside accounts.

If this layer is weak, everything above it becomes opinion. If it’s strong, the relationship with social media advertising companies becomes calmer because disagreements can be resolved by checking the system, not debating vibes.

Strategy Layer: Budget logic and funnel architecture

This is where you decide how the account is structured to match the customer journey. It includes campaign objectives, the funnel split (prospecting, consideration, remarketing), and the budget rules that prevent constant emotional reallocations.

Good strategy also defines what “incremental” means for your business, and which decisions require stronger validation than platform attribution.

Creative Layer: Production and iteration engine

This layer is the supply chain for attention. It includes creative intake, production, approvals, and a library that makes iteration fast.

Modern paid social rewards teams that can ship, learn, and ship again. The creative layer is what allows that rhythm without burning out stakeholders.

Optimization Layer: Decision rules and quality control

This is where your team defines what changes get made, when they get made, and how you’ll know whether they helped. It includes pacing, quality checks, and the ability to separate “the platform needs time to learn” from “this is genuinely not working.”

When social media advertising companies operate professionally, they don’t “tweak” endlessly. They make changes with reasons, and they record what those reasons were.

Optimization Process

The best optimization processes feel almost boring. That’s a compliment. They create stable learning loops that survive creative fatigue, auction volatility, and tracking changes without turning the account into a constant experiment.

The weekly loop: decide, don’t admire

A weekly optimization meeting should exist to make decisions. If the team is mostly looking at charts, the process is broken.

- Start with outcomes: revenue quality, pipeline quality, retention signals, or whatever the business has agreed is real.

- Move to diagnostics: which ads, audiences, and landing experiences are driving those outcomes, and where drop-offs appear.

- End with commitments: what will be launched next week, what will be paused, and what measurement will validate the decision.

If a decision can materially shift budget, it often deserves validation beyond click-based reporting. That’s why lift and experimentation frameworks exist, including the implementation paths described in Meta’s lift studies guide.

Testing that stays honest

Testing falls apart when teams “test everything” and learn nothing. Keep it honest by limiting variables and documenting what you expected to happen.

- Creative tests: one hypothesis per concept, with consistent landing pages and offers.

- Audience tests: keep creative constant while you compare signals.

- Measurement tests: when you need causal confidence, use lift-style tests or geo-based frameworks like GeoLift rather than relying on last-click assumptions.

Quality control and tracking maintenance

Optimization isn’t only about bids and budgets. It’s also about keeping data healthy. If your event mapping breaks, you can “optimize” perfectly and still drive the wrong outcomes.

Professional teams keep a regular QA cadence, especially when using server-side implementations like GTM server-side and platform event APIs like Meta Conversions API, because small mistakes can silently distort the learning system.

Implementation Stories

Implementation is the moment where strategy either becomes a real system or collapses into guesswork. These stories are based on publicly available case materials and interviews, and they focus on how the implementation choices changed what teams could confidently do next.

Newton Baby: The Implementation That Started With a Hard Question

The panic didn’t start with bad performance. It started with the opposite: numbers that looked “fine” while the leadership team still felt exposed. If the dashboards were right, why did budget changes create so much anxiety? If the marketing machine was truly working, why did every conversation about cutting spend feel like stepping off a cliff?

The backstory is a business that needed to grow while staying disciplined. Newton Baby’s leadership described shifting priorities toward efficiency, which made them treat measurement like a survival tool rather than a reporting layer. The team wasn’t just trying to buy customers; it was trying to build confidence that growth was real and repeatable.

The wall was attribution comfort. Brand search and other bottom-funnel channels can look like heroes because they capture demand right before purchase, even when they didn’t create that demand. When the team tried to answer “what’s truly incremental,” platform and last-click narratives weren’t enough to justify major reallocations.

The epiphany came through adopting a causal mindset. In a published interview, Newton Baby’s marketing leader described working with incrementality testing partner Haus and being challenged on assumptions around branded search. That push led to a turning point captured in industry reporting about the company’s decision to move away from branded search after incrementality findings.

The journey became an implementation project, not a single test. Newton Baby described layering approaches by using tools like Rockerbox for broader measurement visibility while leaning on incrementality methods to validate high-stakes decisions. Instead of treating measurement as a one-time audit, they treated it like an operating system that continuously pressures assumptions and forces clarity.

The final conflict was that not everything is neatly testable. Marketplaces and multi-channel environments can make clean holdouts difficult, and the team discussed real constraints around experimental design in certain contexts. That forced a more disciplined stance: test where you can, triangulate where you can’t, and stop letting the easiest-to-measure channel automatically win the budget argument.

The dream outcome was decision freedom. The implementation created a path to reallocate spend with less fear and more evidence, and it also created a reusable standard for future channels and campaigns. The real win wasn’t just a better chart; it was the ability to look at performance, make a hard call, and trust the reasoning behind it.

Set an operating cadence that protects learning

- Daily: monitor delivery health, tracking continuity, and obvious anomalies.

- Weekly: make decisions on creative, budgets, and targeting based on documented hypotheses.

- Monthly: reconcile against finance-grade outcomes and decide which big questions deserve lift-style validation.

If the team is constantly changing everything, the platform never learns and you never learn either. A cadence protects signal quality.

Document decisions like you’ll need to explain them later

A strong partner keeps notes that make future diagnosis possible: what changed, what the hypothesis was, what data was used, and what the outcome was. This becomes especially important when you run structured measurement work like lift studies, which Meta documents as a formal process in its lift studies guide.

Documentation isn’t bureaucracy. It’s how you prevent “random wins” from turning into “random losses” a month later.
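One lightweight way to capture those notes is a structured record per change. The sketch below is an assumption about what such a record could look like, not a standard format: the class name, the field names, and the sample entry are all hypothetical.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date
from typing import Optional


@dataclass
class ChangeLogEntry:
    changed_on: date
    owner: str
    what_changed: str
    hypothesis: str
    data_used: str
    outcome: Optional[str] = None  # filled in later, at review time

    def to_json(self) -> str:
        """Serialize the entry so it can live in a shared log or ticket."""
        record = asdict(self)
        record["changed_on"] = self.changed_on.isoformat()
        return json.dumps(record)


if __name__ == "__main__":
    # Hypothetical entry for illustration.
    entry = ChangeLogEntry(
        changed_on=date(2026, 1, 12),
        owner="media-lead",
        what_changed="Paused prospecting ad set B",
        hypothesis="Frequency is too high; pausing should lower cost per qualified lead",
        data_used="7-day platform report plus CRM lead quality export",
    )
    print(entry.to_json())
```

Leaving `outcome` empty until the review forces the team to write the hypothesis before the result is known, which keeps the record honest.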

Build escalation paths for tracking and compliance issues

When tracking breaks, you need a fast path to rollback or fix, plus a clear owner. When compliance changes or platforms update policies, you need a process that reviews creative and targeting choices before they become account risks.

Teams that pair server-side tracking setups like GTM server-side with platform APIs like Meta Conversions API typically formalize escalation because small implementation errors can have outsized performance consequences.

What “good” looks like after 90 days

- You can explain performance without relying on one platform’s attribution story.

- Creative iteration is steady and learning is documented, not improvised.

- Tracking is stable and changes are treated like releases with QA.

- Budget decisions are calmer because the system produces evidence, not noise.

That’s the point of professional implementation: turning social media advertising companies into a compounding advantage, not a recurring mystery.

Statistics And Data

The fastest way to misunderstand paid social is to stare at platform metrics in isolation. Social media advertising companies that stay profitable treat the numbers as a chain: market reality shapes auction prices, auction prices shape what you can buy, and measurement decides whether what you bought actually mattered.

Start with the market reality. U.S. digital advertising revenue hit $258.6B in 2024, a 14.9% year-over-year jump, and multiple industry write-ups report the same topline figure and growth rate. That consistency is a reminder that “noise” is often just a growing, competitive marketplace.

Inside that market, social has been one of the big acceleration engines. Social media ad revenue rose to $88.8B in 2024, a figure from the IAB/PwC findings that third-party coverage repeats while framing the year as a rebound in social. That coverage also called out the 36.7% year-over-year rise, which matters because it explains why “it’s getting more expensive” is not a personal failure. It’s competition.

At the platform level, the auction moves too. Meta’s own reporting shows total revenue rising to $200.97B for full year 2025, most of it from advertising, alongside changes in ad delivery and pricing signals. That is the kind of macro context that helps you interpret why your CPM can feel like it has a mind of its own.

Performance Benchmarks

Benchmarks are only useful when they’re treated as ranges and patterns, not targets. Social media advertising companies use them to answer two practical questions: “Is this account behaving normally?” and “If it’s not, do we have a creative problem, a measurement problem, or an offer problem?”

One of the clearest recent patterns is that efficiency can improve even when competition stays intense. In Q4 2025, paid social showed a rare “triple win” where clicks rose 23% year over year while CPMs fell 6% across Skai’s benchmark dataset, as reported in its Q4 2025 digital trends report. Separate benchmark work pointed to lower costs in the same period for Meta buying specifically: analysis tied to Tinuiti’s dataset described impressions up 17% with CPM down 7% in Q4 2025 and attributed the change to inventory and placement mix shifts.

So what should you actually benchmark, week to week?

- Cost to create a qualified action: not “a click,” but the first event that proves intent (qualified lead, booked demo, add-to-cart with value thresholds, trial that reaches activation).

- Creative efficiency: whether new concepts reliably beat the account average, which is why social media advertising companies track performance by concept family, not by one-off ads.

- Delivery health: stability of reach and frequency for the audiences that matter, especially when platform inventory shifts (like the Reels mix change described in the Meta benchmark analysis above).

If your benchmark checks fail, it’s not automatically a “media buying problem.” Very often it’s a signal that the data feeding optimization is drifting away from business reality.
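The first two benchmarks above can be computed from a simple ad-level export. This is a minimal sketch, assuming each row carries a concept family label, spend, and a count of qualified actions. Those field names and the sample rows are hypothetical, and the definition of a "qualified action" is yours to set.

```python
from collections import defaultdict
from typing import Optional


def cost_per_qualified_action(ads: list[dict]) -> dict[str, Optional[float]]:
    """Cost per qualified action for each concept family, plus the account overall."""
    spend: dict[str, float] = defaultdict(float)
    actions: dict[str, int] = defaultdict(int)
    for ad in ads:
        spend[ad["concept_family"]] += ad["spend"]
        actions[ad["concept_family"]] += ad["qualified_actions"]

    # None marks families that spent money without producing a qualified action.
    result: dict[str, Optional[float]] = {
        family: (spend[family] / actions[family] if actions[family] else None)
        for family in spend
    }
    total_actions = sum(actions.values())
    result["_account"] = sum(spend.values()) / total_actions if total_actions else None
    return result


if __name__ == "__main__":
    # Hypothetical export rows.
    rows = [
        {"concept_family": "testimonial", "spend": 100.0, "qualified_actions": 4},
        {"concept_family": "testimonial", "spend": 200.0, "qualified_actions": 6},
        {"concept_family": "demo", "spend": 150.0, "qualified_actions": 3},
    ]
    for family, cost in cost_per_qualified_action(rows).items():
        print(family, cost)
```

Grouping by concept family rather than by individual ad is the design choice that matters here: it shows whether an idea is beating the account average, not whether one ad got lucky.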

Analytics Interpretation

Analytics interpretation is where campaigns become decisions. The easiest trap is to treat every metric as equally meaningful. The better approach is to sort metrics into roles, then interpret them in the correct order.

Separate directional signals from decisive proof

Platform-reported ROAS and last-click attribution can be useful early signals, but they’re rarely decisive proof on their own. When budgets get serious, social media advertising companies lean on methods built to isolate incremental impact, including lift-style approaches that platforms formalize and document.

That’s why you’ll see measurement teams reference tools like TikTok’s conversion lift study methodology or platform lift frameworks elsewhere: not because they’re “fancier,” but because they’re designed to answer the business question, not just the platform question.

Interpret the funnel break, not the funnel average

If CPM improves but conversions don’t, you don’t immediately “blame creative.” You look for the break: did reach move to different placements, did landing page speed change, did the offer lose urgency, did lead quality slip, did attribution windows change, did event matching degrade?

This is the reason serious social media advertising companies document tracking changes like product releases. A tracking change can masquerade as a performance change, and you’ll waste weeks “optimizing” the wrong thing if you can’t line up what changed with when performance moved.
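Lining up "what changed" with "when performance moved" can be as simple as filtering a change log by date. The sketch below assumes a log of dated entries and a date on which a metric visibly shifted. The three-day lookback window, the field names, and the sample entries are hypothetical and should be tuned to your own reporting lag.

```python
from datetime import date, timedelta


def changes_near(shift_date: date, change_log: list[dict], window_days: int = 3) -> list[dict]:
    """Return logged changes made on or shortly before a performance shift."""
    start = shift_date - timedelta(days=window_days)
    candidates = [c for c in change_log if start <= c["date"] <= shift_date]
    # Most recent first: the closest change is usually the first suspect.
    return sorted(candidates, key=lambda c: c["date"], reverse=True)


if __name__ == "__main__":
    # Hypothetical change log.
    log = [
        {"date": date(2026, 2, 1), "change": "New landing page template"},
        {"date": date(2026, 2, 9), "change": "Attribution window switched"},
        {"date": date(2026, 2, 10), "change": "Server event mapping updated"},
    ]
    for change in changes_near(date(2026, 2, 11), log):
        print(change["date"].isoformat(), change["change"])
```

A match here is a lead to investigate, not proof of cause. It narrows the search so the team checks tracking before rewriting creative.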

Triangulate with independent context

When the market grows quickly, your account can “get worse” even if your execution gets better, simply because the auction tightens. That’s why macro context matters. Forecast and market analysis like WARC’s social media spend outlook helps explain why more advertisers pile into the same attention streams, while business-side reporting like WPP Media’s global ad revenue forecast adds another lens on how much money is chasing performance outcomes.

Case Stories

Real analytics stories rarely begin with a dashboard. They begin with a moment where someone realizes the dashboard might be lying, or at least telling a smaller truth than the business needs.

When “Performance” Becomes a Risk: The Brand-Safety Wake-Up Call

The alarm wasn’t a dip in ROAS. It was the message from a customer: they had clicked an ad that looked like the brand, only to end up somewhere else. The marketing lead pulled up the platform reporting and saw the usual comfort blanket of impressions, clicks, and stable costs, but the team could feel something was off.

The backstory was a company scaling paid social fast, leaning on social media advertising companies for volume, and trusting that the platform’s policing would catch the worst actors. They weren’t naïve, just busy. Growth targets were aggressive, and the team’s energy went to creative output and campaign iteration.

The wall hit when the team realized “the account is fine” didn’t mean “the customer experience is safe.” There were patterns they couldn’t explain—odd spikes in comments, confused support tickets, and reputational anxiety that never shows up in a CPM chart. The team needed proof of what was happening outside the ad account.

The epiphany came through investigative reporting. Internal documents reviewed in a widely circulated investigation described how Meta projected a meaningful portion of revenue tied to scams and banned content and how enforcement thresholds were handled in practice. That reporting forced many marketers to treat brand safety as a measurement problem, not just a policy problem.

The journey became a rebuild of how performance was evaluated. The team stopped celebrating cheap clicks and started instrumenting risk signals: comment moderation velocity, impersonation monitoring, landing-page integrity checks, and tighter creative verification processes. They also changed how they worked with social media advertising companies, requiring documented guardrails and escalation paths, not just weekly performance summaries.

The final conflict was brutal: tightening controls can reduce delivery, and delivery reductions can scare stakeholders. The business had to choose between comforting numbers and a safer customer experience. For a stretch, it felt like they were paying more for less—until the “less” became visibly higher-quality traffic that didn’t trigger support fires.

The dream outcome wasn’t a single heroic metric. It was the return of calm. The marketing team could scale again without feeling like every new campaign carried reputational debt, because analytics now included safety and quality signals alongside performance.

Professional Promotion

Professional promotion isn’t about bragging. It’s about making your work legible to someone who wasn’t in the meetings. The best social media advertising companies build promotion materials that connect spend to outcomes in a way that survives skepticism.

What to show when you want to be trusted

- One page of business outcomes: revenue, pipeline, retention signals, or whatever the company actually values—anchored with the same definitions every month.

- One page of “why it moved”: the few changes that mattered (new offer, new creative angle, new placement mix), backed by benchmark context like marketwide CPM/click shifts or platform-level patterns like inventory-driven CPM changes.

- One page of measurement integrity: what tracking changed, what attribution assumptions are being made, and where lift-style measurement is being considered, using frameworks like conversion lift methodologies when stakes justify it.

How to say it without sounding like a platform brochure

Keep the language human. “We bought cheaper reach” is not a win if the business didn’t gain anything. “We increased incremental checkouts” is only persuasive when you explain how you measured incrementality, what you controlled for, and what risks remain.

When your reporting sounds like it could have been written for any account, it’s not promotion—it’s wallpaper. The goal is to make the story specific enough that the reader can see the decisions, the tradeoffs, and the reasoning that a real operator would recognize.

Future Trends

The next era for social media advertising companies will feel less like “buying media” and more like operating a living system that blends creative, automation, privacy, and compliance. The teams that win won’t be the ones with the most dashboards. They’ll be the ones who can keep performance stable while the rules keep changing.

One shift is already obvious: platform automation is getting more opinionated. Creative variations, placement expansion, and algorithmic targeting are becoming default behaviors, which means your edge moves upstream into stronger creative direction and stronger signals. Platforms increasingly reward brands that can feed the system consistent, high-quality conversion feedback through setups like the Meta Conversions API and similarly structured solutions across other networks.

Privacy and tracking uncertainty will keep pushing teams toward first-party measurement and experimentation. Google’s own advertiser guidance discusses how its tools are adapting to the changing state of third-party cookies, including FAQs for advertisers preparing for a world where user choice and evolving policies shape what signals are available in Chrome. That guidance is a reminder that measurement strategy can’t be a one-time setup. It has to be maintained.

Compliance will also get more real, especially for advertisers operating in Europe. The EU’s Digital Services Act lays out obligations for online platforms, and it has increased pressure around transparency and accountability. The DSA policy overview explains the intent and scope, while the EU’s transparency explainer details how the DSA has introduced new transparency requirements. Even if you’re not running political ads, the direction is clear: advertisers and their agency partners will need stronger documentation, clearer targeting rationale, and better governance.

The bottom line: social media advertising companies that treat creative as a product pipeline, measurement as an experiment discipline, and governance as part of performance will be the ones that can scale without panic.

Strategic Framework Recap

At this point in the guide, the framework should feel simple, even if the execution is complex. Social media advertising companies do their best work when every part of the system supports the same truth: real outcomes, not just platform stories.

- Business fit: the partner understands your margins, cycle length, capacity constraints, and what “quality” means for your business.

- Channel strategy: account structure matches how customers decide, so optimization aligns with the journey rather than fighting it.

- Creative engine: creative is produced and iterated on a cadence, with hypotheses, learning notes, and a portfolio mindset.

- Measurement truth: reporting is layered (directional, reconciled, causal when needed) so scaling decisions can be defended.

- Operating system: governance, QA, and documentation prevent avoidable failures and keep performance stable through change.

If you remember one thing, remember this: the best results come from a system you can explain. When you can explain it, you can improve it. When you can improve it, you can scale it without relying on luck.

FAQ – Built for the Complete Guide

What do social media advertising companies actually do beyond “running ads”?

They translate business goals into platform execution, build and test creative, manage budgets and campaign structure, maintain tracking quality, and turn performance data into decisions. The best partners also protect the brand through governance, compliance, and documented processes.

How do I choose between a boutique specialist and a full-service agency?

Choose a specialist when one channel or one capability is the main bottleneck (for example, paid social creative testing or measurement). Choose full-service when coordination across channels and teams is the bottleneck. Either way, your decision should be based on the operating system they bring, not the size of their team.

What should I ask for in an audit so it’s not just a sales pitch?

Ask for a diagnosis that names tradeoffs and risks, not just “opportunities.” A real audit explains which assumptions might be wrong, what data can’t be trusted yet, and what change would most likely produce a measurable lift in the next 30–60 days.

Do I need server-side tracking before I hire social media advertising companies?

Not always, but you need reliable conversion signals. Many teams start with browser-based tracking and then upgrade when spend grows or signal quality drops. If you’re investing meaningfully in Meta, it helps to understand how server events work via the Meta Conversions API documentation, because stronger signals often make optimization more stable.

Is platform ROAS enough, or do I need incrementality testing?

Platform ROAS can be a helpful directional metric, especially early. Incrementality testing becomes important when decisions get expensive and you need to prove the ads caused outcomes, not just showed up before them. When the stakes are high on Meta, methods like Conversion Lift are built specifically to estimate incremental impact.

How quickly should I expect results after onboarding?

Expect clarity first, then performance. The first weeks should stabilize tracking, define success metrics, and establish a creative and optimization rhythm. If a partner promises immediate scale without fixing signal integrity and creative throughput, you’re being sold excitement, not a system.

Is there a minimum budget where hiring an agency makes sense?

It’s less about a universal minimum and more about whether the cost of mistakes is greater than the cost of expertise. If you’re spending enough that a few weeks of poor tracking, weak creative, or bad structure could waste meaningful money, professional help can pay back quickly.
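One way to sanity-check that tradeoff is to put rough numbers on it. The sketch below compares the spend you estimate is being wasted each month against a partner's monthly fee. Every input is an assumption you supply, including the waste rate, and the function name is hypothetical.

```python
def expertise_pays_back(monthly_spend, avoidable_waste_rate, monthly_fee):
    """Compare estimated avoidable waste with the cost of professional help."""
    if not 0 <= avoidable_waste_rate <= 1:
        raise ValueError("avoidable_waste_rate must be between 0 and 1")

    # Spend you believe is lost to poor tracking, weak creative, or bad structure.
    avoidable_waste = monthly_spend * avoidable_waste_rate
    net_benefit = avoidable_waste - monthly_fee
    return {
        "avoidable_waste": avoidable_waste,
        "net_benefit": net_benefit,
        "pays_back": net_benefit > 0,
    }


# Hypothetical account: $40,000 monthly spend, an estimated 15% wasted, $4,000 fee.
print(expertise_pays_back(40_000, 0.15, 4_000))
```

The estimate is only as good as the waste rate you plug in, so treat the output as a conversation starter rather than a forecast.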

What matters more today: targeting or creative?

Targeting still matters, but platforms are increasingly automated. Creative often becomes the primary lever because it shapes who engages, what the algorithm learns, and how efficiently you can scale. That’s why strong teams build creative pipelines instead of betting everything on one “winning ad.”

Why can reporting look great while the business feels stuck?

Because dashboards can reward the easiest-to-measure outcomes, not the outcomes that are truly incremental or profitable. This is common when branded intent or retargeting is over-credited. A mature measurement approach layers reporting with experiments and reconciliation to finance-grade outcomes.

How long should I commit to a social media advertising company?

Long enough to build the system properly, but not so long that you can’t exit if the fundamentals are weak. Many teams structure an initial engagement around an implementation phase (tracking, structure, creative system, reporting), then continue only if the partner proves they can sustain learning and accountability.

Work With Professionals

If you’re a marketer reading this and thinking, “I could run this system for clients,” you’re not wrong. The hard part isn’t the theory. The hard part is getting in the room with teams that actually need help and are ready to pay for results.

That’s where markework.com can change the game. It positions itself as a marketing marketplace where you can build a profile, connect directly with clients, and avoid the typical platform tax that eats into your earnings. Its homepage describes “no middleman” and “no project fees,” which is exactly what freelancers want when they’re trying to turn skill into income.

And the demand is real. For a rough sense of scale, Upwork’s marketing category regularly shows over 12,000 open marketing roles, a sign of how many teams are actively looking for help right now across paid social, lifecycle, SEO, content, and more.

Imagine waking up to a pipeline of companies that already want what you do. Not “maybe later” leads, but teams trying to ship campaigns, hit revenue targets, and move fast. Imagine keeping 100% of what you earn, building direct relationships, and making your process the product.

If that sounds like the kind of momentum you’ve been chasing, start where modern marketing teams are already looking for specialists: markework.com