Paid social used to be the “boost a post and hope” part of marketing. Now it’s one of the most measurable ways to create demand, generate pipeline, and scale revenue—if it’s run with the kind of discipline you’d expect from a performance channel.

That’s where a paid social agency earns its keep. Not by “managing ads,” but by building a repeatable system that ties creative, targeting, landing experiences, and measurement into one operating framework—so budget turns into business outcomes you can actually defend in a leadership meeting.

Article Outline

- What Is a Paid Social Agency

- Why a Paid Social Agency Matters

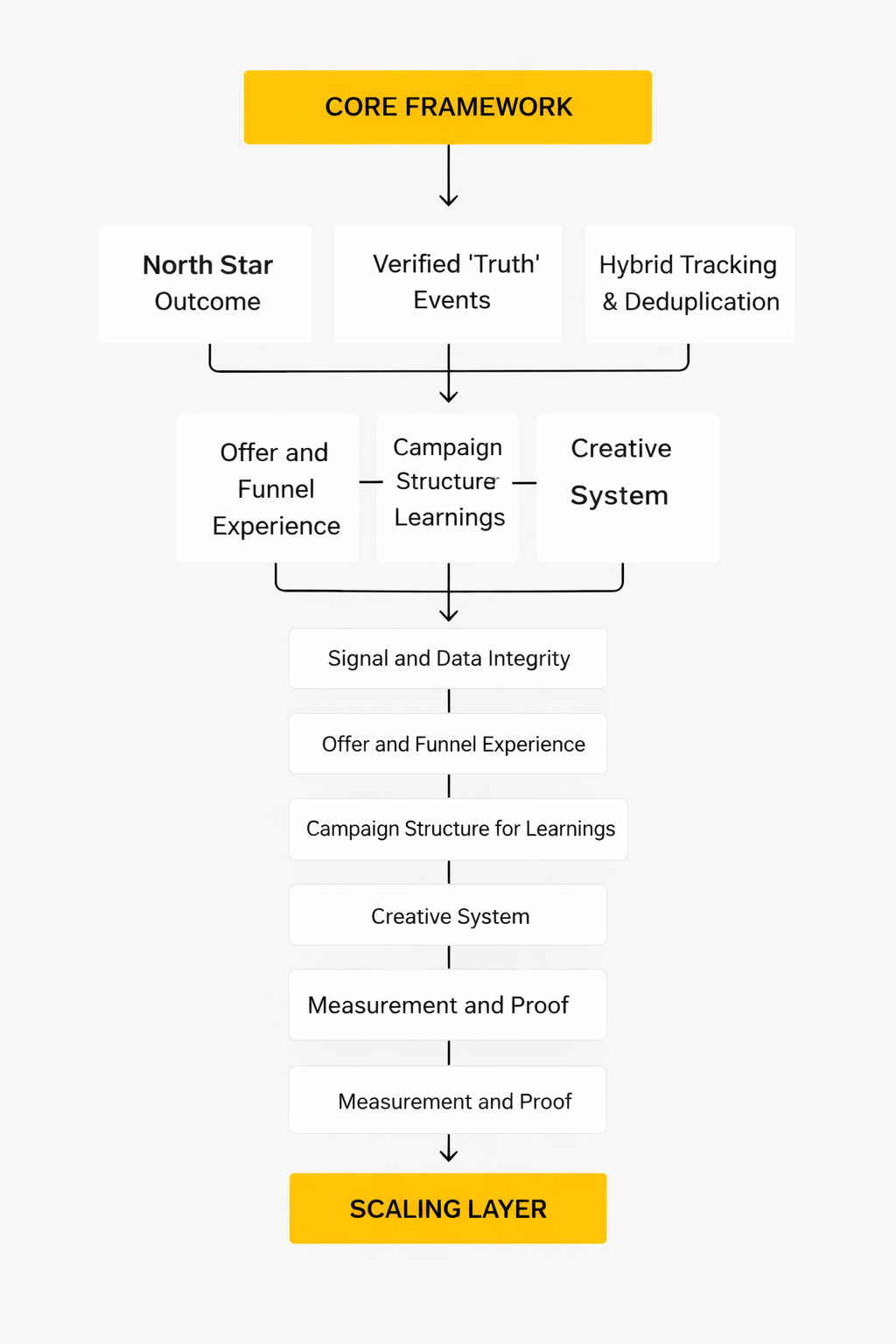

- Framework Overview

- Core Components

- Professional Implementation

- Part 2: Creative Production System

- Part 3: Measurement and Attribution

- Part 4: Optimization and Scaling

- Part 5: Team, Process, and Governance

- Part 6: FAQ

What Is a Paid Social Agency

A paid social agency is a specialist partner that plans, produces, runs, and measures paid advertising across social platforms (Meta, TikTok, LinkedIn, Pinterest, Snapchat, X, and others) with one job: reliably turning spend into outcomes. Those outcomes might be purchases, qualified leads, booked demos, app installs, or even brand lift—but the key is that the goal is explicit, the system is intentional, and the results are tracked end-to-end.

Think of it less like “someone who posts ads” and more like a performance team that happens to buy media inside social ecosystems. Social platforms are now massive advertising machines; Meta alone reported $200.97B in revenue for 2025, driven primarily by ads. When channels operate at that scale, the difference between random activity and professional execution becomes expensive, fast.

What separates a capable agency from a “we’ll manage your ads” vendor is how it treats the work:

- Strategy: connecting the offer and funnel to a clear customer journey, not just picking interests and hoping.

- Creative as a system: iterating against real signals, not one-off “nice-looking” ads.

- Measurement you can trust: designing tracking and incrementality so the numbers mean something, even in privacy-constrained environments.

- Cross-functional execution: aligning landing pages, conversion paths, and CRM or analytics so paid social isn’t isolated from the business.

In other words, a paid social agency is an operating partner. The deliverable isn’t ads. The deliverable is confidence: confidence that your spending decisions are based on reality, and confidence that the next optimization cycle will predictably move performance.

Why a Paid Social Agency Matters

Social ad platforms are maturing at the same time that measurement is getting harder. Budgets continue shifting toward digital, and social remains a core line item even when teams are under pressure to do more with less. Gartner’s 2025 marketing spend research shows social advertising holding a meaningful share of spend (with social at 12.2% of total marketing spend in that snapshot), which makes “good enough” execution a risky place to live.

At the same time, the biggest threat to paid social performance usually isn’t the platform. It’s inconsistency: inconsistent creative, inconsistent tracking, inconsistent landing experiences, and inconsistent decision-making. One month the team optimizes for cheap leads; the next month leadership wants pipeline quality; then the CFO asks why reporting doesn’t match finance. That chaos quietly burns budget.

A strong paid social agency matters because it provides structure in three places where most brands struggle:

- Focus: turning business goals into a small set of campaign objectives that don’t fight each other.

- Speed: shipping enough creative and testing cycles to keep up with a fast-moving auction.

- Truth: building measurement that survives privacy changes and still answers the only question that matters: “Did this create incremental value?”

And yes—this is also about the reality of where attention and money already are. The U.S. internet advertising market’s latest IAB/PwC reporting shows social media advertising reaching $88.8B in 2024, reflecting how central these platforms are to modern growth. When a channel is that material, it deserves specialist operations, not part-time attention.

Finally, a professional partner helps teams avoid expensive “false positives.” It’s easy to celebrate a dashboard spike that later turns out to be attribution noise, brand-driven demand you would have captured anyway, or lead volume that never converts. Agencies that know what they’re doing design for incrementality with methods like conversion lift tests, which compare test and control groups to estimate incremental impact (the approach platforms document for their Conversion Lift studies). The point isn’t the tool; it’s the habit of proving impact, not assuming it.

Framework Overview

The easiest way to understand how a paid social agency creates results is to look at the work as a loop, not a checklist. The loop has four stages, and every stage feeds the next one.

- Diagnose: define the business outcome, identify funnel friction, and establish what “good” looks like in metrics that matter.

- Build: design the account structure, creative testing system, landing experience, and tracking foundations.

- Learn: read signals across platform data, site/app analytics, and downstream systems (CRM, revenue) to understand what’s truly driving performance.

- Scale: allocate budget to the combinations of audience, offer, and creative that show repeatable lift—while expanding into new angles to prevent saturation.

This loop matters because platforms increasingly automate delivery. Meta’s public results for 2025 showed both higher ad impressions and a higher average price per ad year over year, a reminder that auctions evolve continuously. If your process is “set it and forget it,” the auction will outpace you.

The loop also respects a simple truth: in paid social, creative is often the biggest lever you control, but measurement determines whether you can confidently pull that lever. You can ship brilliant ads and still lose money if your tracking lies to you. You can also have decent ads and win if your testing and decision system is tight.

Core Components

Most winning paid social programs look different on the surface—different brands, platforms, audiences—but the underlying components are surprisingly consistent. A capable paid social agency builds around these pillars.

1) Outcome-first strategy

Everything starts with the business goal and the conversion you can truly count. If the goal is pipeline, the agency must define what “qualified” means and how it appears in the CRM. If the goal is e-commerce revenue, it must define contribution margins and how to interpret ROAS in a way that doesn’t hide unprofitable growth.

This is where many programs quietly fail: they optimize platform metrics that look great but don’t match the business. Strategy is the translation layer between the business and the ad auction.
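To make the point about ROAS hiding unprofitable growth concrete, here is a minimal Python sketch. It assumes a simplified model in which contribution margin is a single rate applied to revenue; the function names and the example figures are hypothetical and not taken from any platform or case study.

```python
def break_even_roas(contribution_margin_rate: float) -> float:
    """ROAS at which contribution margin exactly covers ad spend.

    Assumes contribution margin is a flat share of revenue (a simplification).
    """
    if not 0 < contribution_margin_rate <= 1:
        raise ValueError("contribution_margin_rate must be in (0, 1]")
    return 1.0 / contribution_margin_rate


def contribution_after_ads(revenue: float, ad_spend: float,
                           contribution_margin_rate: float) -> float:
    """Contribution left once ad spend is subtracted (negative means a loss)."""
    return revenue * contribution_margin_rate - ad_spend


if __name__ == "__main__":
    # Hypothetical account: 40% contribution margin, $10,000 spend, $20,000 revenue.
    # Reported ROAS is 2.0, which looks healthy but sits below break-even.
    print(break_even_roas(0.40))
    print(contribution_after_ads(20_000, 10_000, 0.40))
```

Under these assumptions, a 2.0 ROAS loses money because break-even sits at 2.5. That is the kind of gap a strategy layer is meant to surface before budgets scale.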

2) Structured account architecture

Great performance comes from clear separation of intent and learning. That usually means distinct campaign buckets (prospecting, retargeting, retention, or lifecycle), consistent naming, and a structure designed to answer questions like “What creative themes actually drive new-customer conversions?”

Structure is also what makes reporting trustworthy. Without it, you end up with blended results that can’t explain why performance changed.
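Consistent naming is easier to enforce when it can be checked by a script. The sketch below assumes a hypothetical three-part convention (`platform_stage_theme`) and a hypothetical set of allowed stages; real accounts will use their own scheme, so treat this as an illustration of the idea rather than a standard.

```python
# Hypothetical stage buckets; adjust to whatever the account actually uses.
ALLOWED_STAGES = {"prospecting", "retargeting", "retention"}


def parse_campaign_name(name: str) -> dict:
    """Split a campaign name of the assumed form platform_stage_theme.

    Raises ValueError when the name does not follow the convention, so
    malformed names are caught before they pollute reporting.
    """
    parts = name.split("_")
    if len(parts) != 3:
        raise ValueError(f"expected 3 underscore-separated parts, got {len(parts)}: {name!r}")
    platform, stage, theme = parts
    if stage not in ALLOWED_STAGES:
        raise ValueError(f"unknown stage {stage!r} in {name!r}")
    return {"platform": platform, "stage": stage, "theme": theme}


if __name__ == "__main__":
    print(parse_campaign_name("meta_prospecting_ugc-testimonial"))
```

Once names parse cleanly, grouping results by stage or creative theme becomes a simple aggregation instead of a manual cleanup job.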

3) Creative iteration engine

In paid social, the best targeting is often the message. A professional agency treats creative as a hypothesis: angle, hook, proof, offer, format, and CTA—each tested with intent. The goal isn’t to find “the best ad.” The goal is to build a library of winning patterns you can reuse and adapt.

This is increasingly important as the ecosystem shifts toward algorithmic distribution and content-like ad formats. The more creative variants you can responsibly test, the more opportunities you give the platform to find pockets of efficient demand.

4) Measurement that survives privacy constraints

Teams don’t need “more data.” They need better truth. That means building measurement across layers: platform tracking, first-party analytics, and incrementality methods when attribution gets noisy.

Privacy and ecosystem shifts are real and ongoing. Google’s own Privacy Sandbox documentation notes that some Privacy Sandbox technologies are being phased out, and the UK CMA continues to document oversight and changes in Google’s approach in its case updates. The practical takeaway for paid social isn’t panic—it’s designing measurement so decisions don’t collapse when one signal changes.

5) Budget governance and scaling rules

Scaling isn’t “spend more.” Scaling is increasing investment while protecting efficiency and learning. Agencies that scale well use rules: when to raise budgets, when to consolidate, when to expand into new creative angles, and when to pause because results are being propped up by retargeting or attribution artifacts.

This is also where agencies protect businesses from wasting money during hype cycles. If a platform, format, or trend is “hot,” the question isn’t whether it’s popular—the question is whether it produces incremental value for your specific offer and audience.

Professional Implementation

So what does it look like when a paid social agency implements all of this professionally? It looks like operational discipline: clear inputs, consistent cycles, and decision-making that doesn’t depend on someone’s mood.

A solid implementation typically starts with a short discovery phase that clarifies the outcome, constraints, and current performance. From there, the agency builds a launch plan that includes:

- Tracking and data integrity checks: verifying events, deduplication, and downstream visibility so reporting isn’t built on broken signals.

- Offer and funnel alignment: ensuring the landing experience matches the promise of the ad and makes conversion feel inevitable.

- Creative plan with a testing calendar: not “we’ll make some ads,” but a mapped set of hypotheses and formats to test weekly.

- Measurement plan: deciding in advance what the team will trust for optimization versus what will be treated as directional.

- Feedback loops: piping sales/CS insights back into creative and targeting so the system learns from reality.

This matters because marketing teams are operating inside a broader spend environment where every line item is scrutinized. Marketing budgets have been under pressure to justify themselves; Gartner’s 2025 CMO spend findings highlight that paid media remains the largest category of marketing spend in many orgs, at 30.6% of budgets. When the stakes are that high, professional implementation isn’t “nice to have.” It’s what keeps paid social from becoming a recurring argument about numbers.

In the next part, the focus shifts to the engine that most directly moves results: the creative production system. That’s where a paid social agency builds repeatable output, prevents fatigue, and turns learning into scalable creative patterns.

Step-by-Step Implementation

A paid social agency implementation only works when it’s treated like a product launch: clear requirements, clean instrumentation, controlled rollout, and a feedback loop that turns learnings into the next build. The steps below are the version that survives real-world messiness—multiple stakeholders, multiple platforms, and an attribution environment that doesn’t politely behave.

1) Define the one outcome everyone will defend

Pick a single outcome that matters to the business and can be verified downstream. For e-commerce, that’s usually purchase value that finance can reconcile. For B2B, it’s often a CRM stage that sales leadership agrees is “real,” not just form fills.

This step sounds obvious, but it’s the moment a paid social program stops optimizing for the platform’s favorite metric and starts optimizing for the company’s reality.

2) Map the conversion path into events you can actually trust

Write down the full path: ad click or view, landing page, key actions, conversion, and any offline steps (sales calls, demos, in-store purchases). Then decide which events are “optimization events” (fast feedback) and which are “truth events” (business outcomes).

When teams skip this, they end up with tracking that looks complete but can’t answer simple questions like “Which campaign drove high-quality customers rather than just easy conversions?”

3) Implement hybrid tracking (browser + server) and deduplicate

Most professional setups keep the pixel for breadth and add server-to-server delivery for durability. Platforms describe these server-side connections as more reliable ways to share conversion signals, which is why implementation guidance exists directly inside official documentation for Meta Conversions API, TikTok Events API, and LinkedIn Conversions API.

The detail that matters here is deduplication. If browser and server events double-count, your dashboards inflate, your bidding learns the wrong thing, and the next scaling decision becomes expensive.
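As a rough illustration of deduplication, the sketch below collapses browser and server copies of the same conversion before counting. It assumes each event carries a shared `event_id` and `event_name` set by your own implementation; the `ConversionEvent` class and the "prefer the server copy" rule are hypothetical choices for this example, and each platform applies its own matching logic on its side.

```python
from dataclasses import dataclass
from typing import Iterable, List


@dataclass(frozen=True)
class ConversionEvent:
    event_name: str   # e.g. "Purchase"
    event_id: str     # ID shared by the browser and server copies
    source: str       # "browser" or "server"
    value: float


def deduplicate(events: Iterable[ConversionEvent]) -> List[ConversionEvent]:
    """Keep one event per (event_name, event_id), preferring the server copy."""
    kept = {}
    for event in events:
        key = (event.event_name, event.event_id)
        current = kept.get(key)
        # First sighting wins, unless a server copy can replace a browser copy.
        if current is None or (current.source == "browser" and event.source == "server"):
            kept[key] = event
    return list(kept.values())


if __name__ == "__main__":
    raw = [
        ConversionEvent("Purchase", "a1", "browser", 50.0),
        ConversionEvent("Purchase", "a1", "server", 50.0),   # duplicate of the above
        ConversionEvent("Purchase", "b2", "server", 30.0),
    ]
    clean = deduplicate(raw)
    print(len(clean), sum(e.value for e in clean))
```

Running the same check on an internal event log is a quick way to see how much reported conversion value would be inflated if the shared ID were missing.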

4) QA like you’re trying to catch quiet failures

Implementation isn’t “it fired once in preview.” QA means testing event payloads, checking match quality indicators where available, validating UTMs, and confirming the same conversion is visible in analytics and downstream systems without drift.

Teams that treat QA as optional usually discover broken tracking only after performance drops—when every stakeholder suddenly has an opinion and no one trusts the numbers.
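One QA check that is easy to automate is UTM validation. The sketch below uses only the Python standard library and assumes that `utm_source`, `utm_medium`, and `utm_campaign` are the parameters your reporting requires; that required list is an assumption for the example, not a universal rule.

```python
from typing import List
from urllib.parse import parse_qs, urlsplit

# Assumed minimum set of parameters; extend to match your own reporting needs.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def missing_utms(url: str) -> List[str]:
    """Return the required UTM parameters that are absent or empty in the URL."""
    # parse_qs drops blank values by default, so "utm_campaign=" counts as missing.
    query = parse_qs(urlsplit(url).query)
    return [name for name in REQUIRED_UTMS if not query.get(name, [""])[0].strip()]


if __name__ == "__main__":
    print(missing_utms("https://example.com/lp?utm_source=meta&utm_campaign="))
```

Running a check like this across every destination URL before launch catches the quiet failures that otherwise only show up as “direct” traffic in analytics.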

5) Build a campaign structure that answers questions

Structure should make it easy to learn. Separate new demand from existing demand. Separate creative tests from scaling campaigns. Separate prospecting from retargeting where it clarifies decision-making.

When structure is clean, reporting becomes a tool for decisions instead of a weekly argument about what “really happened.”

6) Launch with controlled learning, not maximum spend

Start with budgets that allow stable delivery and clean learning. Ship multiple creative angles early so the platform has options, but keep the environment controlled enough that you can interpret results without guessing.

This is where a paid social agency earns trust: it prioritizes clarity first, then speed, then scale—because scale without clarity is just expensive confusion.

Execution Layers

A strong paid social agency runs implementation across layers, because paid social performance isn’t one lever—it’s a stack. When a layer is weak, the layers above it look “broken” even if they aren’t.

Layer 1: Signal and data integrity

This is the foundation: events, deduplication, and first-party data alignment. When this layer is solid, platforms can optimize with better signals, and your team can analyze outcomes without second-guessing.

This is also where privacy shifts hit first. If your signals depend entirely on brittle browser behavior, performance swings feel random. If your signals are routed through stable server-side connections, you at least control the failure modes.

Layer 2: Offer and funnel experience

Paid social amplifies what’s already true. If the offer is unclear, the landing page is slow, or the conversion path is awkward, media buying can’t save it.

Agencies that move the needle here don’t just “recommend landing page tweaks.” They align the ad promise with the page reality so the click feels like the next obvious step.

Layer 3: Creative system

Creative is where social platforms decide whether you deserve reach at an efficient price. The execution layer isn’t a single ad; it’s the ability to produce variations fast, learn from performance signals, and turn those signals into the next round of better creative.

If creative is sporadic, you get spikes and collapses. If creative is a system, you get compounding learnings.

Layer 4: Media operations and controls

This is how you translate learnings into action: budget changes, bid strategy choices, audience expansion, placement decisions, and guardrails that keep experiments interpretable.

Great media operations are calm. They don’t chase every fluctuation. They move in deliberate steps that protect learning while steadily pushing growth.

Layer 5: Measurement and proof

This is where teams graduate from “the platform says it worked” to “we can prove it created incremental value.” That proof often lives in experimentation and lift studies, which is why Meta documents structured approaches like Conversion Lift rather than leaving teams to guess.

When this layer is strong, internal trust increases, budgets become easier to defend, and scaling decisions stop feeling like leaps of faith.
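The arithmetic behind a holdout comparison is simple enough to sketch. The example below assumes a randomized test group (eligible to see ads) and a control group (held out), and it estimates incremental conversions by comparing conversion rates. The function name and the figures are hypothetical, and a real lift study would also report statistical confidence, which this sketch deliberately omits.

```python
def incremental_lift(test_conversions: int, test_size: int,
                     control_conversions: int, control_size: int) -> dict:
    """Estimate incremental conversions and relative lift from a holdout test."""
    if test_size <= 0 or control_size <= 0:
        raise ValueError("group sizes must be positive")
    test_rate = test_conversions / test_size
    control_rate = control_conversions / control_size
    if control_rate == 0:
        raise ValueError("control conversion rate is zero; relative lift is undefined")
    return {
        # Conversions the test group would likely not have produced without ads.
        "incremental_conversions": (test_rate - control_rate) * test_size,
        # Lift relative to the baseline (control) conversion rate.
        "relative_lift": (test_rate - control_rate) / control_rate,
    }


if __name__ == "__main__":
    # Hypothetical numbers: 1,200 conversions from 100,000 exposed users,
    # 100 conversions from a 10,000-user holdout.
    print(incremental_lift(1_200, 100_000, 100, 10_000))
```

With these made-up numbers, roughly 200 of the 1,200 test-group conversions are incremental. That figure can sit well below what platform attribution claims, and it is usually the more defensible one.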

Optimization Process

Optimization is where a paid social agency either becomes a compounding system or a never-ending cycle of “tweak and hope.” The difference is whether the process is designed to learn.

Weekly cadence: learn, decide, ship

A healthy cadence usually has three parts: read performance signals, make a small number of clear decisions, and ship the next set of tests. If the “ship” part disappears, performance eventually stalls because you’re optimizing yesterday’s creative against today’s auction.

Week by week, the goal is to replace guesswork with a growing library of what works: hooks that earn attention, proof that builds trust, and offers that convert without feeling desperate.

Creative-first optimization that doesn’t ignore measurement

Many teams say “creative is the lever,” then treat measurement as an afterthought. A professional approach links the two: every creative test has a hypothesis, every hypothesis has a success metric, and every metric is tied back to a conversion definition the business trusts.

When measurement is stable, creative iteration becomes faster because you don’t waste cycles arguing about whether the result is real.

Guardrails that prevent self-inflicted damage

Scaling too fast can reset learning phases, distort tests, and cause whiplash. Cutting too aggressively can destroy momentum and make results look worse than they really are. Guardrails are the middle path: controlled budget shifts, consistent test windows, and a refusal to “optimize” based on a single day of noise.

This is especially important when multiple platforms are in play and each one uses different attribution logic. Clear guardrails keep the team focused on what’s actually changing versus what’s merely being reported differently.
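A guardrail can be as simple as a rule that limits how far a budget moves in one step. The sketch below clamps a proposed daily budget to a maximum percentage change; the 20% default and the function name are illustrative assumptions, not a platform requirement or a recommendation from the sources cited here.

```python
def next_budget(current: float, proposed: float, max_step: float = 0.20) -> float:
    """Clamp a proposed budget to within +/- max_step of the current budget.

    max_step is a hypothetical team rule (20% by default in this sketch).
    """
    if current <= 0:
        raise ValueError("current budget must be positive")
    if not 0 < max_step < 1:
        raise ValueError("max_step must be between 0 and 1")
    lower = current * (1 - max_step)
    upper = current * (1 + max_step)
    return min(max(proposed, lower), upper)


if __name__ == "__main__":
    # A request to jump from $1,000 to $1,500 per day is capped at the upper bound.
    print(next_budget(1_000, 1_500))
```

Encoding the rule this way keeps scaling decisions consistent across people and platforms, and it makes exceptions an explicit choice rather than an accident.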

Implementation Stories

The best way to understand implementation is to watch it happen under pressure. The story below is built from public case study material, with the details grounded in the published write-up and the platform’s measurement methodology.

DSW and Tinuiti: When measurement uncertainty threatened omnichannel growth

It started with a problem that doesn’t show up in ad managers until it’s already hurting you. Online performance looked strong in the dashboard, but leadership couldn’t tell whether paid social was driving real omnichannel value or simply taking credit for customers who would have bought anyway. Store sales were the elephant in the room, and the team felt the pressure because a “maybe” answer wasn’t going to protect budget in the next planning cycle (as described in Tinuiti’s DSW case study).

The confusion was costly in a subtle way. Every additional dollar of spend required a leap of faith, and the larger the spend became, the larger the risk of being wrong. Without a credible way to connect Meta ads to both online and in-store outcomes, the organization was one skeptical meeting away from pulling back investment simply to feel safe, as the case outlines.

The backstory was classic omnichannel reality: customers didn’t behave neatly. Some saw ads and bought later in-store, some browsed on mobile and purchased on desktop, and some engaged with multiple touchpoints that made last-click stories sound cleaner than they were. The team needed a measurement approach that respected how people actually shop, not just how cookies and clicks want to label them (see Meta’s conversion lift overview).

They also had a strategic constraint. The business couldn’t afford to “choose” between online optimization and store impact because the brand lived in both worlds. So the question became sharper: could they design an implementation that proved incremental lift while capturing omnichannel value—and do it in a way that stakeholders would accept as fair?

The wall arrived when they tried to solve a causal problem with observational reporting. If you only look at attributed conversions, you can’t separate correlation from causation. The team could optimize for online sales all day and still miss the real story, because in-store lift might be invisible or misassigned. The result was an uncomfortable stalemate: lots of activity, lots of reporting, and not enough proof, per the test rationale described by Tinuiti.

Even worse, the absence of proof created emotional noise. Performance conversations drifted into opinions, and opinions turn into politics. When teams don’t have a shared truth, they stop debating strategy and start debating credibility—usually right when they need alignment most.

They needed a measurement design that could withstand skepticism, not just a prettier dashboard.

The epiphany was to treat measurement as part of the implementation, not something you bolt on afterward. Instead of arguing about attribution models, they built a test designed to produce a defensible comparison: a holdout group that wouldn’t receive Meta ads, so the team could observe the difference between “with ads” and “without ads” in a controlled way, using the holdout approach described in the case.

That shift changed the tone of the work. Suddenly, the goal wasn’t to make the platform numbers look good. The goal was to create an experiment the business would believe, even if the result was smaller than the platform’s claimed impact.

Implementation became less about “launching campaigns” and more about “launching proof.”

The journey began with a structured conversion lift design. The case describes Tinuiti setting up a holdout and using it to compare an omnichannel optimization approach against business-as-usual online-sales optimization, so the test could isolate incremental impact and include both online and in-store outcomes (per the test setup details).

Then they operationalized the implementation around the test, not around convenience. That meant aligning conversion events, ensuring the measurement framework could capture the outcomes they cared about, and running the test long enough to reach credible conclusions—because a rushed experiment is just a slower form of guessing.

As the test ran, the team had something rare: a way to make decisions without needing to “believe” in any single attribution story.

The final conflict was the part nobody likes to admit: tests create tension. When you hold out a portion of the audience, you’re intentionally leaving money on the table in the short term to buy clarity for the long term. That can feel terrifying when teams are judged on weekly performance and short-term revenue targets.

On top of that, omnichannel measurement introduces operational complexity. Data needs to be connected responsibly, stakeholders need to understand what the test is proving, and leadership needs to stay patient long enough for the results to mean something. It’s easy for teams to abandon the discipline right before the learning becomes valuable.

The only way through is treating the test as the strategy, not as a side project.

The dream outcome was a program that could scale with confidence because it could prove value in the language the business cared about. The published case frames the outcome as an incrementality-driven approach that tied Meta investment to omnichannel outcomes and enabled clearer decision-making between optimization strategies, as summarized in the case study.

That’s the real win a paid social agency delivers: not just performance improvements, but a measurement system that earns trust. Once trust exists, budget conversations get easier, creative gets bolder, and optimization becomes a compounding loop instead of a weekly scramble.

And when the team can defend spend with proof, growth stops feeling like a gamble.

The implementation playbook that scales without drama

- One shared truth: a documented conversion definition the business will stand behind, tied to downstream verification.

- Hybrid tracking: pixel for coverage, server-to-server APIs for durability, implemented through official methods such as Meta Conversions API, TikTok’s Events API (see its server-side tagging guidance), and LinkedIn’s Conversions API (see its migration notes).

- QA and monitoring: validation checks that catch silent failures before performance drops become a fire drill.

- Structured experimentation: when the business needs proof, use controlled designs like lift studies and holdouts, grounded in documented methodology such as Meta’s conversion lift approach.

- Operational cadence: weekly creative shipping, weekly learning synthesis, and decisions that are small enough to be reversible but consistent enough to compound.

When these pieces are in place, paid social stops feeling like a black box. It becomes an operating system: predictable inputs, measurable outputs, and a feedback loop that gets smarter every cycle. That’s the version of a paid social agency implementation that leadership trusts—and that teams can scale without burning out.

Statistics and Data

A paid social agency can’t “optimize” its way out of bad signal. The data you trust determines the decisions you make, and the decisions you make determine whether you scale calmly or spend your way into a performance cliff. So this section is less about vanity metrics and more about the few data points that genuinely shape strategy.

Here are the market-level numbers that matter because they explain why competition keeps tightening, even when your creative is strong. US digital ad revenue hit $259B in 2024, up 15% year over year, a reminder that the auction is not slowing down just because your internal targets feel aggressive (sources: IAB’s release summary and the full 2024 report; industry coverage mirrors the same topline figure).

Inside that growth, social media advertising revenue reached $88.8B in 2024, rising by $23.8B from 2023, which is a blunt signal that more brands are buying attention in the same feeds you’re competing in (from the IAB/PwC report’s breakdown, supported by IAB’s summary page and echoed in MediaPost’s recap of the same dataset).

Zoom out further, and you see why leadership conversations get harder: budgets aren’t exploding, but expectations still are. Gartner’s 2025 survey work puts marketing budgets at 7.7% of company revenue, and it also notes digital channels now account for 61.1% of total marketing spend (see the 2025 CMO Spend Survey press release and Gartner’s digital share release). Within those constrained budgets, paid media takes the biggest slice at 30.6% in Gartner reporting, which helps explain why scrutiny on ROAS, CAC, and incrementality keeps increasing (via Campaign’s coverage and MarTech’s recap).

Finally, there’s the “auction inflation” reality you feel every day. Meta’s own results show ad impressions grew 12% for full-year 2025 while average price per ad rose 9%, which is a tidy way of saying: more inventory, higher demand, and a more expensive fight for attention (see Meta’s FY2025 earnings release and the prepared remarks PDF; trade coverage repeats the same operational highlights).

Performance Benchmarks

Most “benchmarks” floating around the internet aren’t trustworthy enough to steer real budgets. A paid social agency uses benchmarks in a more practical way: to catch obvious problems early, to set expectations with stakeholders, and to avoid making emotional decisions when numbers wobble.

The three benchmark types that actually help

- Market pressure benchmarks: treat macro spend growth as the reason efficiency gets harder over time, not as an excuse. When US digital ad revenue is growing at a 15% clip and social is still accelerating, “costs are up” becomes a market fact, not a personal failure (see the IAB/PwC report and IAB’s summary).

- Platform auction benchmarks: use platform-reported price and inventory signals as the background music for your CPM and CPC. If Meta’s price-per-ad is up year over year while impressions also rise, you expect pressure on efficiency unless creative and conversion rate improve to compensate (see Meta’s FY2025 results and WARC’s breakdown).

- Business outcome benchmarks: your most important benchmarks are internal: contribution margin, payback period, LTV:CAC, pipeline-to-cash velocity. These are harder to compute, but they’re also harder for a bad attribution window to distort.
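Two of the internal benchmarks above reduce to simple ratios. The sketch below uses common simplified definitions (LTV:CAC as lifetime value divided by acquisition cost, and payback as acquisition cost divided by monthly contribution per customer); definitions vary between finance teams, so the formulas and figures here are assumptions for illustration.

```python
def ltv_to_cac(lifetime_value: float, acquisition_cost: float) -> float:
    """Ratio of customer lifetime value to customer acquisition cost."""
    if acquisition_cost <= 0:
        raise ValueError("acquisition_cost must be positive")
    return lifetime_value / acquisition_cost


def payback_months(acquisition_cost: float, monthly_contribution: float) -> float:
    """Months of per-customer contribution needed to recover acquisition cost."""
    if monthly_contribution <= 0:
        raise ValueError("monthly_contribution must be positive")
    return acquisition_cost / monthly_contribution


if __name__ == "__main__":
    # Hypothetical customer: $900 lifetime value, $300 to acquire,
    # $50 of contribution per month.
    print(ltv_to_cac(900, 300), payback_months(300, 50))
```

Because these inputs come from finance and CRM data rather than ad platforms, they stay comparable even when attribution windows or platform reporting change.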

What “good” looks like for a paid social agency

Instead of chasing a universal CTR or CPC target, professional teams anchor on a few sanity checks: stable event tracking, consistent conversion definitions, and a clear view of incrementality. That’s why lift studies keep showing up inside serious programs: they answer the question stakeholders actually care about, “Did the ads create new outcomes, or did they just capture credit?” (see Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study explanation).

When lift tests are too heavy for every cycle, a paid social agency still borrows the mindset: compare like with like, keep test conditions stable, and make decisions based on repeatable patterns rather than a single report screenshot.

Analytics Interpretation

Analytics gets dangerous when it turns into a story you want to believe. A paid social agency interprets data the way a good investigator does: it looks for competing explanations and tries to disprove itself before it scales.

Interpretation habits that prevent expensive mistakes

- Start with tracking integrity: if events are missing or duplicated, optimization is fiction. Platforms explicitly push server-side connections because they’re designed to make conversion signal sharing more reliable (see the Meta Conversions API docs, TikTok’s Events API overview, and LinkedIn’s Conversions API documentation).

- Separate “delivery” from “business impact”: CPM, CTR, and frequency tell you how the auction is behaving; they don’t prove incremental profit. This is exactly why incrementality frameworks keep being positioned as the gold standard by platforms and measurement vendors (see TikTok’s CLS framing and Meta’s lift resources).

- Watch for lagged effects: some channels and creative concepts convert later than attribution windows suggest. Experimentation platforms publishing aggregated learnings note that post-treatment windows can capture delayed impact that short windows miss (see Haus’s TikTok experiment analysis).

- Use attribution models as lenses, not truth: GA4 attribution reporting is useful for comparing models, but it’s still a model. Treat it like a flashlight, not a verdict (see Google’s GA4 attribution documentation).

A simple interpretation flow you can run every week

First, confirm the measurement layer didn’t break (events, deduplication, UTMs). Then, read delivery signals (CPM, frequency, placement shifts) as the “why” behind cost movement, not as the outcome itself. Finally, connect spend to real business outcomes through cohort performance, CRM stages, or lift testing—whatever your organization can verify without hand-waving.

This is also where the tone of the team matters. When performance dips, weak teams panic and thrash. Strong teams slow down, isolate variables, and only change what they can explain.

Case Stories

Real analytics work rarely looks heroic in the moment. It looks like uncertainty, pressure, and the uncomfortable decision to test what you’re afraid might be true. These stories are built from public case materials, and they follow the exact arc that shows up when a paid social agency has to prove value, not just report it.

Turkish Airlines: When the biggest question wasn’t conversions, but intent

The team stared at the results and felt that sick uncertainty you can’t put in a dashboard. They were investing in Meta campaigns, but the cleanest “proof” seemed to live elsewhere, in search demand, and nobody could confidently connect the two. The fear wasn’t that the ads weren’t working; the fear was worse: that they were working in a way the organization couldn’t prove, as described in Meta’s Turkish Airlines success story summary.

The backstory made the problem messier. Turkish Airlines operates in a category where journeys don’t begin with an impulse click; they begin with curiosity, planning, and comparison. People see inspiration, then they search, then they return, then they decide, often across devices and days. If your measurement only celebrates last-touch conversions, you end up undervaluing the moments that start the journey, which is how the case frames search traffic impact.

Then the wall hit: the team needed a credible way to quantify the search demand created by ad exposure. If they couldn’t show that paid social was moving search behavior, they risked losing the narrative inside the business. And when narrative collapses, budget usually follows.

The epiphany was to stop arguing with attribution models and instead run a test built to answer the real question. They used a Meta Conversion Lift study designed to measure the search traffic driven by exposure to Meta ads. In other words, they treated “search lift” as a measurable outcome rather than a vibe the team had to defend, following the study approach described in the story.

The journey wasn’t glamorous, but it was disciplined. The campaign ran across a defined period, and the measurement design compared exposed versus control groups to isolate impact. That structure, which follows the lift methodology Meta documents, gave the team a way to speak in cause and effect, not correlation and hope.

Of course, things got tense in the middle. Lift tests require patience, and they often challenge assumptions teams got comfortable with. If the lift is smaller than the platform’s attributed conversions, stakeholders can misread that as “worse,” even though it’s often “truer,” which forces hard conversations about what success should mean.

The dream outcome is exactly what measurement is supposed to buy: confidence. With lift-based evidence connecting ad exposure to search behavior, the team could defend investment with a straighter face and a clearer story. That’s the quiet superpower a paid social agency brings to leadership, and the point of the published case framing: proof that survives skepticism, not just numbers that look good in a single interface.

Aerie: When “mid-funnel success” wasn’t enough to unlock incremental purchases

Everything looked fine, until it didn’t. The team had campaigns running, creative getting traction, and performance that felt respectable, but there was a nagging question nobody could answer cleanly: were they creating new buyers, or just catching people already on the way? The moment leadership asked for incremental impact, “respectable” stopped being a satisfying word. That is the question behind TikTok’s write-up of the Search Ads Campaign lift results.

The backstory is the modern buyer journey in one sentence: discovery and intent now blend together. People scroll for entertainment, then search inside the same app when curiosity spikes. In its Search Ads Campaign overview, TikTok itself points out the growth and behavioral shift around search on the platform, which changes how you should think about capturing intent.

The wall was strategic, not tactical. If you treat search-like behavior as an afterthought, you can miss the moment people are actively evaluating what to buy. And if you can’t prove incrementality, you can’t confidently shift budgets, because every shift risks cannibalizing something else.

The epiphany came from pairing formats instead of treating them like separate worlds. The campaign combined Search Ads Campaign with In-Feed Ads, then used a Conversion Lift Study to isolate incremental conversions versus a 100% in-feed allocation. That’s a measurement-first mindset: design the campaign around what you want to prove, then let the creative do its job, as described in the lift study summary and TikTok’s CLS methodology explainer.

The journey was about building a system that could scale. They didn’t just launch a new placement; they created a structure where intent capture had a dedicated lever, and lift testing could validate whether that lever genuinely added incremental purchases. That kind of setup is how a paid social agency turns “we think it helps” into “we can show it helps.”

Then came the inevitable final conflict: mixing formats creates operational complexity. Reporting gets noisier, stakeholders ask why results don’t match the old playbook, and teams have to resist the temptation to retreat to the safest channel just because it feels more familiar. Measurement discipline is what keeps the team from quitting halfway through the learning.

The dream outcome was measurable incremental growth rather than purely attributed performance. In the case section describing lift and iROAS, TikTok’s published results for the Aerie example cite incremental purchases, conversion lift, and iROAS improvements when combining Search Ads Campaign with In-Feed Ads compared to an all-in-feed setup. When you can prove incremental purchases, scaling stops being a gamble and becomes a decision.

Professional Promotion

If you want the work of a paid social agency to be valued inside a business, you need to package analytics in a way that earns trust. Not “pretty dashboards.” Trust. That means your reporting has to answer the questions stakeholders are too polite to say out loud.

How to present paid social performance like a professional

- Lead with what changed and why: connect efficiency changes to market pressure signals (auction inflation, seasonality, creative fatigue), not just “CPM went up.” Meta’s own reporting on impressions and price per ad in its FY2025 highlights is a useful reminder that the auction itself shifts year to year.

- Separate short-term optimization from long-term proof: use platform metrics for fast iteration, but reserve “did it work?” for lift tests, holdouts, and downstream outcomes. Both Meta and TikTok explicitly frame lift studies as the reliable way to measure incremental impact, in Meta’s lift documentation and TikTok’s CLS post respectively.

- Translate results into business language: contribution margin, payback period, pipeline quality, repeat purchase, refund-adjusted revenue. Stakeholders don’t argue with numbers they recognize.

- Use benchmarks to set expectations, not excuses: as the IAB/PwC market data makes clear, digital ad revenue keeps growing and social keeps accelerating, so efficiency pressure is normal. The job is to adapt faster than the auction through creative, offer, and funnel improvements.

When reporting is done this way, it stops being a weekly ritual and becomes a decision engine. The team ships more confidently, leadership asks better questions, and the agency relationship upgrades from “vendor” to “strategic partner.” That’s what professional analytics is really for: not to impress people, but to help them choose the next move without fear.

Future Trends

The next wave of change won’t come from a single platform update. It will come from multiple forces colliding at once: heavier automation, messier measurement, faster creative cycles, and new “walled gardens” where commerce, search, and social blend together. A modern paid social agency stays ahead by building systems that survive these shifts, not tactics that depend on last year’s rules.

AI becomes the default execution layer

Platforms are steadily moving toward automation-heavy buying, where the advantage comes from the quality of inputs (creative, offer clarity, first-party signals) more than manual levers. Meta positions Advantage+ as an AI and automation suite, and the direction is consistent with how the ad business itself is scaling and optimizing delivery at massive volume, per Meta’s FY2025 results. For a paid social agency, this means the best teams will look less like “button-pushers” and more like operators of a creative-and-signal machine.

Incrementality becomes the language of trust

As auctions grow and attribution gets noisier, stakeholders will push harder for proof that ads are driving outcomes that wouldn’t have happened otherwise. That’s why experimentation and lift testing keep moving closer to the center of professional practice, including frameworks like Meta Conversion Lift and TikTok Conversion Lift Study. It’s not that attribution disappears; it’s that attribution becomes a navigation tool while incrementality becomes the evidence you use to defend spend.

Privacy and browser decisions keep reshaping signal quality

Privacy shifts won’t stop just because timelines change. Google’s evolving approach to Chrome cookie changes and Privacy Sandbox commitments has been tracked publicly through the UK regulator process in the CMA case file. Industry reporting, including Reuters coverage, has highlighted how the ecosystem keeps adapting to what is effectively an ongoing “privacy posture” transition rather than a single deprecation event. For a paid social agency, the implication is simple: first-party data strategy and server-to-server event delivery aren’t “nice to have,” they’re how you keep signal stable when the surface layer changes.

Social search and commerce media blur the funnel

Search behavior is increasingly happening inside social environments, and platforms are packaging that behavior into performance products. TikTok’s own reporting on Search Ads Campaign performance, in its Search Ads lift example, shows why teams are testing search-plus-feed combinations to unlock incremental gains and defend budget allocation decisions with lift methodology. In parallel, retail and commerce media continue to rise as brands seek closed-loop measurement and first-party signals, with IAB’s 2025 outlook release pointing to industry expectations of strong growth and strategic importance.

Creators become a performance lever, but measurement stays uneven

Creator-driven content is no longer just “brand awareness.” It’s increasingly part of the performance system, even though returns can be volatile when strategy and measurement are weak. WARC has emphasized the variance in creator returns and the need for better measurement discipline in its Future of Media 2026 coverage, while Business Insider’s summary of industry reporting tied to IAB research shows creator ad spending continuing to expand rapidly. The agency opportunity is building a creator pipeline that’s testable, repeatable, and connected to outcomes the business can verify.

Strategic Framework Recap

If you want a simple way to remember what separates a “campaign runner” from a real paid social agency, it’s this: professionals build an ecosystem where creative, measurement, and operations reinforce each other.

The five pillars that make the system work

- Signal you can trust: stable event definitions, deduplication, and first-party alignment so optimization isn’t built on noise.

- Creative as a system: consistent throughput, clear hypotheses, and a library of learnings that compounds instead of resetting every month.

- Campaign structure that teaches: separation of testing vs scaling, clean naming, and reporting that answers questions instead of creating arguments.

- Optimization with guardrails: deliberate changes, interpretable steps, and an operating cadence that protects learning while scaling.

- Proof at the right moments: attribution for navigation, lift and experimentation for confidence—using the same measurement standards platforms describe for incrementality in Meta’s lift framework and TikTok’s lift framework.

When these pillars are in place, the work feels calmer. Decisions get easier to defend. Scaling becomes a controlled process. And stakeholders stop asking for miracles because they can finally see a system.

FAQ

What does a paid social agency actually do beyond launching ads?

A paid social agency builds the full operating system around ads: conversion tracking you can trust, a creative testing engine that produces learnings every week, and a measurement approach that can stand up in a finance conversation. Launching is the easy part. Turning paid social into a repeatable growth channel is the real job.

When should a company hire a paid social agency instead of keeping it in-house?

Hire an agency when speed and specialization matter more than internal control—especially if you need creative testing velocity, cross-platform experience, or measurement discipline that your team can’t maintain alongside everything else. If your internal team is strong but bandwidth-limited, agencies often work best as a force multiplier rather than a replacement.

Which platforms should a paid social agency focus on first?

Start where your customers already spend attention and where your business model can support the payback window. For many brands that’s Meta, but TikTok, LinkedIn, Snapchat, and retail media environments can be equally important depending on the category. Platform selection should follow the customer journey, not the trend of the month.

How can I judge whether a paid social agency is good before signing a contract?

Ask how they define “truth” (what conversions they trust), how they handle tracking reliability (including server-to-server event delivery), and how they prove incrementality when budgets scale. If they only talk about CTR and ROAS screenshots without explaining measurement and experimentation, you’re probably buying optimism rather than a system.

What’s the difference between attribution and incrementality?

Attribution assigns credit across touchpoints. Incrementality estimates what the ads actually caused. That’s why platforms and measurement teams keep investing in experimentation approaches like Meta Conversion Lift and TikTok Conversion Lift Study. A professional paid social agency uses attribution to steer, then uses incrementality to prove.
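To make the distinction concrete, the arithmetic behind a lift read can be sketched in a few lines. This is a simplified illustration that assumes a clean randomized split between an exposed group and a holdout. It is not Meta’s or TikTok’s actual methodology, and the function names and every number below are hypothetical.

```python
def incremental_lift(test_users, test_conversions, control_users, control_conversions):
    """Estimate incremental conversions and relative lift from a holdout test."""
    # Conversions the exposed group would have produced anyway,
    # scaled from the control group's conversion rate.
    baseline = control_conversions * test_users / control_users
    incremental = test_conversions - baseline
    relative_lift = incremental / baseline if baseline else float("nan")
    return {
        "baseline_conversions": baseline,
        "incremental_conversions": incremental,
        "relative_lift": relative_lift,
    }


def iroas(incremental_conversions, average_order_value, spend):
    """Incremental return on ad spend: incremental revenue divided by spend."""
    return incremental_conversions * average_order_value / spend


# Hypothetical test: equal-sized groups, 2,400 vs 2,000 conversions.
result = incremental_lift(
    test_users=100_000,
    test_conversions=2_400,
    control_users=100_000,
    control_conversions=2_000,
)
roas = iroas(result["incremental_conversions"], average_order_value=60, spend=8_000)
print(result, roas)
```

A real study also needs confidence intervals and enough volume to separate signal from noise; the sketch only shows why a lift figure can be smaller than the attributed figure and still be the more defensible one.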

Do I really need server-side tracking like Conversions API or Events API?

If you care about signal durability, yes. Browser-only tracking is more vulnerable to blockers, browser behavior changes, and inconsistent environments. Server-side connections exist specifically to make conversion signal sharing more reliable for optimization and measurement, as the official documentation for Meta Conversions API and TikTok Events API explains.

How many new creatives should we test each month?

Enough to keep learning ahead of the auction. The exact number depends on spend and category, but the principle stays the same: creative fatigue is predictable, so you want a steady pipeline instead of occasional bursts. If your best ad is carrying performance for weeks without replacements in progress, you’re running on borrowed time.

Why does performance suddenly drop even when nothing changed?

Sometimes things did change; you just didn’t see it. Auction pressure moves, creatives fatigue, or tracking breaks quietly. Meta’s own reporting in its FY2025 operational metrics shows that price and impression dynamics shift year over year, which can raise costs even when your ads are “the same.” That’s why monitoring and controlled experimentation matter as much as optimization tactics.

Should I use industry benchmarks for CTR, CPC, or ROAS?

Benchmarks are useful as smoke alarms, not steering wheels. They can help you spot obvious problems (bad creative resonance, broken landing pages), but the best targets are internal: contribution margin, payback period, LTV:CAC, and incremental lift. A paid social agency uses benchmarks to ask better questions, not to declare victory.
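The internal targets named above reduce to simple arithmetic. The sketch below uses one common simplification: an undiscounted lifetime value built from a flat monthly contribution per customer. The `unit_economics` helper and all of its inputs are hypothetical, and a finance team may define these terms differently.

```python
def unit_economics(spend, new_customers, monthly_contribution, lifetime_months):
    """Compute CAC, payback period, and LTV:CAC from contribution margin."""
    # Customer acquisition cost: ad spend per new customer.
    cac = spend / new_customers
    # Months of contribution margin needed to recover the CAC.
    payback_months = cac / monthly_contribution
    # Simplified lifetime value: flat contribution, no discounting or churn curve.
    ltv = monthly_contribution * lifetime_months
    return {
        "cac": cac,
        "payback_months": payback_months,
        "ltv": ltv,
        "ltv_to_cac": ltv / cac,
    }


# Hypothetical month: 12,000 in spend, 200 new customers,
# 20 in contribution margin per customer per month, 12-month lifetime.
metrics = unit_economics(
    spend=12_000,
    new_customers=200,
    monthly_contribution=20,
    lifetime_months=12,
)
print(metrics)
```

Setting targets on these figures, rather than on a borrowed CTR or ROAS benchmark, keeps the conversation anchored to what the business can actually afford.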

How do you scale without wrecking efficiency?

Scale in steps you can interpret, protect a testing lane, and expand supply (new creative angles, placements, cohorts) before squeezing targeting. Then add incrementality checkpoints at meaningful spend levels so you know the growth is real, using the same experimentation logic platforms describe for lift testing in TikTok’s help documentation.
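One way to picture “steps you can interpret” with incrementality checkpoints is a budget ladder. This is a sketch under stated assumptions: the 20% step size and the checkpoint spend levels are illustrative values, not platform guidance, and `scaling_plan` is a hypothetical helper.

```python
def scaling_plan(start_budget, target_budget, step_pct, checkpoints):
    """Build a stepwise budget ladder and mark where a lift test is due."""
    if step_pct <= 0:
        raise ValueError("step_pct must be positive")

    # Only checkpoints above the starting budget are still ahead of us.
    pending = sorted(c for c in checkpoints if c > start_budget)
    plan = []
    budget = start_budget
    while budget < target_budget:
        # Raise the budget by one step, never overshooting the target.
        budget = min(round(budget * (1 + step_pct), 2), target_budget)
        # A lift test is due when this step reaches a pending checkpoint.
        run_lift_test = bool(pending) and budget >= pending[0]
        while pending and budget >= pending[0]:
            pending.pop(0)
        plan.append({"daily_budget": budget, "run_lift_test": run_lift_test})
    return plan


# Hypothetical ladder: scale from 1,000 to 2,000 per day in 20% steps,
# validating incrementality at 1,500 and 2,000.
plan = scaling_plan(start_budget=1_000, target_budget=2_000,
                    step_pct=0.2, checkpoints=[1_500, 2_000])
for step in plan:
    print(step)
```

The step size matters less than the discipline: each increase is small enough to interpret, and the larger spend levels are not treated as proven until a lift read supports them.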

Is targeting still important, or is everything creative now?

Targeting matters, but the advantage increasingly comes from creative and signals. As automation grows, platforms can find audiences faster than humans can manually assemble them—if you feed the system the right inputs. That’s why AI and automation suites are framed as the future of campaign delivery in Meta’s Advantage+ overview.

Work With Professionals

If you’ve read this far, you already know the uncomfortable truth: building a real paid social agency capability—whether inside a company or as a freelancer—requires more than platform knowledge. It requires a pipeline of opportunities, a way to get in front of serious clients, and a system that lets you move fast without giving away your earnings.

That’s where Markework fits. It’s a focused marketing marketplace where companies and marketers connect directly: no middleman layers, and no commission-based project fees draining your upside, as described on the platform homepage and reinforced on the pricing page.

For freelancers, the difference is emotional as much as financial. When you’re trying to grow your client roster, the hardest part isn’t doing the work; it’s finding work consistently without losing momentum to gatekeepers and fee structures. As the “Why Us” page explains, Markework is built around direct communication, public discovery, and simple membership plans, so you can spend your energy on pitching and delivering outcomes, not negotiating around platform friction.

And there’s proof that the marketplace is active: per the listings page summary, the work board shows more than 1,000 active listings, with the full set unlocked through membership. That matters because consistency is what turns “freelancing” into a stable business.

If you want more clients, more control, and a cleaner path to opportunities that match your skills, start from Markework’s homepage: build a profile, browse listings, and start conversations directly with companies that are hiring for paid social, performance marketing, lifecycle, analytics, and more.