Paid social advertising used to be the “boost button” you pressed when you wanted more attention. Now it’s closer to an operating system for growth: a place where creative, targeting, measurement, and iteration have to work together or the whole thing collapses.

The stakes are obvious in the numbers. Social media ad revenue in the U.S. reached $88.8B in 2024, and the rebound was sharp enough that it changed budget conversations across industries. At the same time, the platforms keep evolving—AI-driven delivery, privacy constraints, and new commerce formats mean the “rules” you learned two years ago can quietly stop working.

This guide is built for people who want paid social advertising to produce consistent business outcomes, not random spikes. You’ll get a clear definition, why it matters now, and a practical framework you can use to plan, execute, and improve campaigns without guesswork.

Article Outline

- What Is Paid Social Advertising

- Why Paid Social Advertising Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is Paid Social Advertising

Paid social advertising is the practice of buying distribution on social platforms so your message reaches specific people, in specific contexts, with a measurable business goal attached. That goal might be demand generation, ecommerce sales, app installs, lead capture, in-store visits, or simply making sure the right audience remembers you when they’re ready to buy.

What makes paid social advertising different from other ad channels is the combination of (1) identity and interest signals, (2) creative-first experiences, and (3) algorithmic delivery that learns in real time. You’re not just selecting “who sees what.” You’re feeding a system signals—creative, conversion events, audience constraints, and budget pacing—and the platform optimizes delivery based on predicted outcomes.

It’s also broader than “Meta ads.” Most modern paid social advertising programs treat platforms as a portfolio. Meta is often the largest line item because of scale and mature optimization (Meta reported advertising revenue of $196.2B in 2025), but growth teams commonly pair it with YouTube, TikTok-style short-form video, LinkedIn for B2B intent, Pinterest for high-intent discovery, and Snap for younger reach and direct response experimentation (for example, Snap’s investor materials break out Q4 2025 advertising revenue).

In practice, “paid social advertising” is less a single tactic and more a disciplined loop: define the outcome, build creative that earns attention, route traffic into a conversion system you can measure, and iterate faster than your competitors.

Why Paid Social Advertising Matters

Paid social advertising matters because attention is shifting and buying behavior is getting messier. People don’t move in straight lines from ad to purchase anymore. They watch, swipe, compare, ask friends, search, and come back later—often across devices and platforms.

On the macro level, budgets have followed attention. Global ad spend reached close to US$1.1T in 2024, and digital continues to be the primary driver of that growth. Meanwhile, social platforms have become a center of gravity for video consumption and discovery—Deloitte’s consumer research describes how social video platforms are pulling more time and advertising dollars into their ecosystems (Digital Media Trends 2025).

On the business level, paid social advertising is one of the few channels where you can still do three crucial things at once:

- Create demand by shaping what people believe about your category and your offer.

- Capture demand by converting people who are already looking for a solution.

- Learn faster because creative and audience feedback shows up quickly, and you can run controlled tests.

But the same things that make paid social advertising powerful also make it risky. Measurement can be distorted by privacy changes and cross-device behavior. Platform automation can hide what’s really driving performance. And bad actors can exploit ad systems at scale, which is why serious advertisers treat brand safety and account hygiene as part of performance, not an afterthought (Reuters reported on Meta’s internal concerns around scam ads and enforcement pressure in late 2025).

The takeaway is simple: paid social advertising isn’t optional if you want to compete for attention, but it can’t be managed like a side project. It needs a framework.

Framework Overview

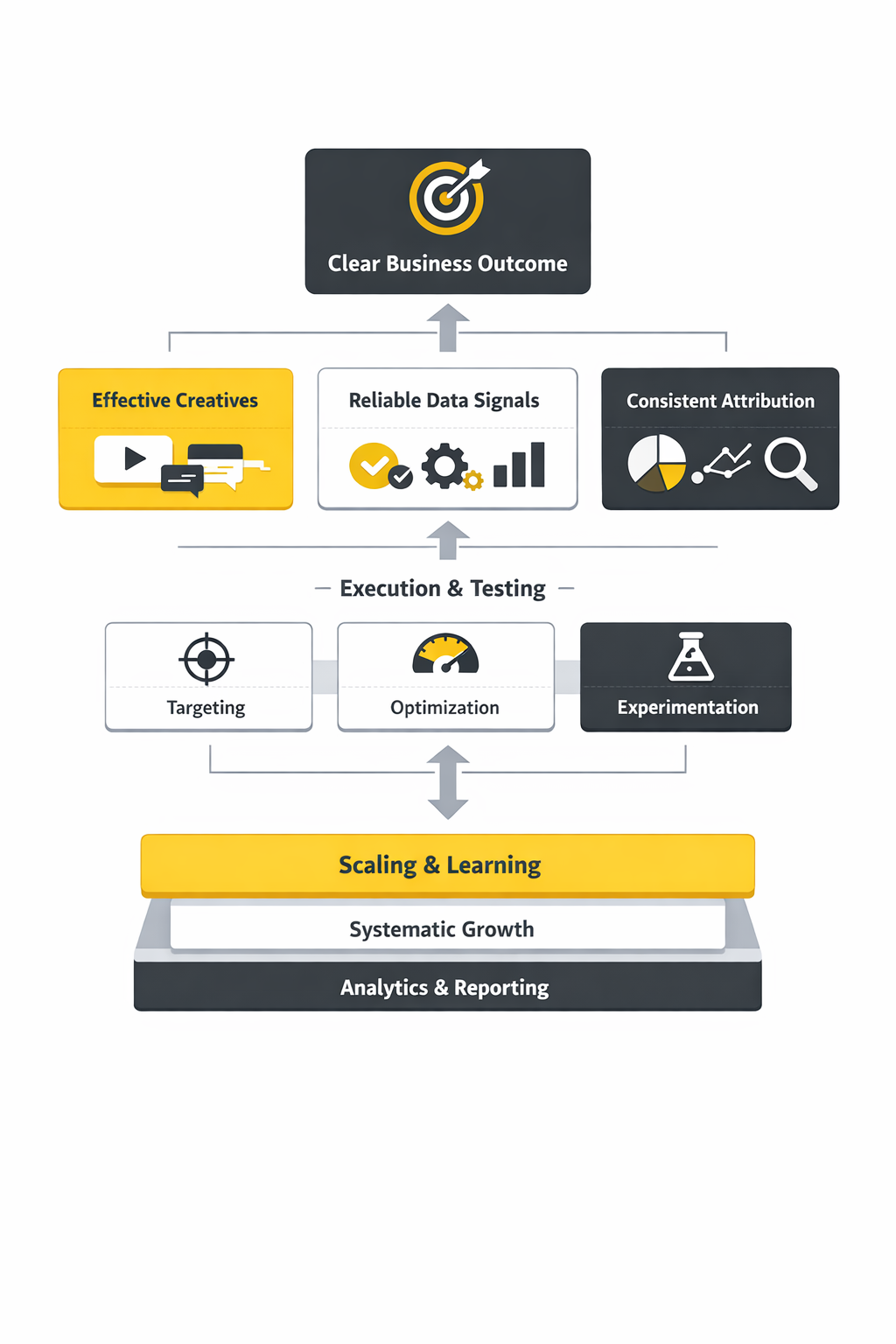

This framework is designed to make paid social advertising predictable. It’s not a “hack list.” It’s a system you can run every week, even when platforms change features or costs fluctuate.

1) Strategy

Start by defining the job the campaign must do: grow pipeline, drive first purchases, increase repeat rate, or expand into a new segment. Then decide how you’ll win: better offer, better positioning, better creative, better distribution, or better conversion system. If you can’t explain the win condition in one sentence, the campaign will drift.

2) Creative That Earns Attention

In paid social advertising, creative is targeting. The ad itself determines who stops, who clicks, and who converts. Strong creative is specific, proof-based, and built for the native behavior of the platform (scrolling, tapping, sound-off video, short attention windows).

3) Distribution and Delivery Controls

This is where you choose platform mix, campaign objectives, audience constraints, placements, budgets, and pacing. The goal isn’t to micromanage the algorithm—it’s to give it clean inputs and guardrails so learning happens quickly and performance doesn’t get “optimized” toward the wrong outcome.

4) Measurement You Can Trust

Attribution is not measurement. Click-based reporting is useful, but it’s not the truth. The closer you get to serious spend, the more you need incrementality methods (lift testing, holdouts, geo experiments) and server-side signals. Platforms themselves encourage this shift—for example, Meta positions Conversion Lift testing as a way to estimate incremental impact rather than relying on last-click behavior.

5) Iteration Cadence

Iteration is where most accounts either compound or stall. A simple cadence works: weekly creative refresh, biweekly audience and offer review, and monthly measurement validation. When performance drops, you diagnose in the same order every time: tracking integrity, conversion friction, creative fatigue, audience saturation, and competitive pressure.
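
The fixed diagnostic order can be written down as a tiny checklist runner so nobody skips ahead to their favorite hypothesis. This is only a sketch: the check names mirror the order described above, while the check functions themselves are hypothetical placeholders you would replace with your own tracking and reporting queries.

```python
from typing import Callable, Dict, Optional

# Order taken from the paragraph above: always start with tracking.
DIAGNOSTIC_ORDER = [
    "tracking_integrity",
    "conversion_friction",
    "creative_fatigue",
    "audience_saturation",
    "competitive_pressure",
]


def first_failing_check(checks: Dict[str, Callable[[], bool]]) -> Optional[str]:
    """Run checks in the fixed order and return the first one that fails.

    Each check returns True when that area looks healthy. Missing checks
    are skipped. Returns None when every supplied check passes.
    """
    for name in DIAGNOSTIC_ORDER:
        check = checks.get(name)
        if check is not None and not check():
            return name
    return None


# Hypothetical results for one bad week: tracking and the conversion path
# are fine, but both the creative and audience checks fail.
example_checks = {
    "tracking_integrity": lambda: True,
    "conversion_friction": lambda: True,
    "creative_fatigue": lambda: False,
    "audience_saturation": lambda: False,
}

print(first_failing_check(example_checks))  # creative_fatigue
```

Because the order is fixed, the runner reports creative fatigue before audience saturation even though both fail, which is the point: fix the earlier cause first, then re-run.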

If you run the loop consistently, paid social advertising becomes less emotional. You stop reacting to daily fluctuations and start improving controllable inputs.

Core Components

Every successful paid social advertising program is built from the same components. The differences between “average” and “elite” performance usually come down to how tightly these parts connect.

Offer and Positioning

The offer is what people get. Positioning is why they should care right now. If performance is unstable, most teams assume it’s targeting or bidding. Often it’s simply that the offer is generic, or the positioning sounds like every competitor. In social feeds, “good enough” is invisible.

Creative System

Think in systems, not one-off ads. A practical creative system includes: concept angles (different reasons to believe), format variations (UGC-style video, founder POV, demo, comparison), and proof assets (reviews, case studies, product results). Your goal is to build a library you can recombine, not reinvent every month.

Audience Architecture

Modern paid social advertising tends to work best with a simple architecture:

- Prospecting for new demand (broad, interest stacks, lookalikes where appropriate).

- Engaged retargeting (video viewers, site engagers, lead form openers).

- Customer expansion (upsell, cross-sell, winback).

The nuance is in sequencing and exclusions. You want people to see the right message at the right stage without trapping the algorithm in tiny audiences that can’t learn.

Conversion Path

Paid social advertising can’t compensate for a broken conversion path. If the landing page is slow, the form is painful, the product page is confusing, or the checkout is fragile, you’ll pay for it in an inflated customer acquisition cost (CAC), even when your CPMs look healthy. The best teams treat conversion rate optimization as part of the media plan, not a separate department.

Signal Quality and Data

Platforms optimize based on the signals you send them. If you feed low-quality events (or noisy, inconsistent conversion tracking), delivery will chase the wrong users. This is why many advertisers prioritize stronger first-party signals and server-side integrations. When you’re evaluating platforms, it helps to look at how they describe their own revenue engines and ad products in investor disclosures—Meta’s results emphasize the scale of its advertising business (2025 advertising revenue), while other platforms break out advertising lines in investor letters (for example Snap’s Q4 2025 investor letter).

Budgeting and Experimentation

A healthy paid social advertising program separates money into two buckets: “performance that must deliver” and “experiments that expand what’s possible.” Experiments are where you find new creative winners, new segments, and new offers. Without them, you’re just harvesting whatever demand already exists until it dries up.

Professional Implementation

Professional paid social advertising is less about knowing every platform feature and more about running a clean operating process. This section gives you a practical way to set up execution so results are repeatable—and so performance doesn’t fall apart when one campaign stops working.

Build an Operating Model

Decide who owns outcomes, who ships creative, who handles tracking, and who validates measurement. When ownership is unclear, accounts get stuck in endless “it depends” debates. A simple model works well:

- Growth lead owns targets and prioritization.

- Media buyer owns structure, pacing, and testing discipline.

- Creative producer owns volume, format adaptation, and iteration speed.

- Analytics partner owns data integrity, lift testing, and reporting clarity.

Select Platforms Like a Portfolio

Instead of debating which platform is “best,” treat paid social advertising like portfolio management. You want a mix of scale, intent, and experimentation:

- Scale engines where you can spend confidently (Meta’s advertising business scale is documented in its latest annual results).

- High-intent discovery where users arrive closer to purchase (Pinterest’s growth and user scale are reflected in its 2025 results).

- Video attention where demand is shaped at scale (Alphabet highlighted YouTube’s annual revenue exceeding $60B across ads and subscriptions in 2025).

This approach keeps you from being over-dependent on one algorithm or one audience pool.

Set Campaign Build Standards

Standards prevent chaos. A professional paid social advertising setup usually includes:

- Naming conventions that make reporting usable.

- Consistent conversion definitions so campaigns optimize toward the same “truth.”

- Creative testing rules (how many new assets per week, how long before a decision, what qualifies as a winner).

- Budget pacing rules that prevent overspending early and starving learning later.
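
Naming conventions are easiest to enforce when they are generated and parsed by code rather than typed by hand. The sketch below assumes a hypothetical five-part scheme (platform, objective, audience, concept, launch date) joined with underscores; the field list and separator are illustrative choices, not a standard any platform requires.

```python
from dataclasses import dataclass, fields

SEPARATOR = "_"


@dataclass(frozen=True)
class CampaignName:
    """Hypothetical scheme: platform_objective_audience_concept_date."""

    platform: str
    objective: str
    audience: str
    concept: str
    launch_date: str  # YYYYMMDD, kept as plain text

    def render(self) -> str:
        parts = [getattr(self, f.name) for f in fields(self)]
        for part in parts:
            # Reject empty parts and parts containing the separator, since
            # either would make the name impossible to parse back.
            if not part or SEPARATOR in part:
                raise ValueError(f"invalid name part: {part!r}")
        return SEPARATOR.join(parts)


def parse_campaign_name(name: str) -> CampaignName:
    """Split a rendered name back into its fields for reporting."""
    parts = name.split(SEPARATOR)
    if len(parts) != len(fields(CampaignName)):
        raise ValueError(f"unexpected campaign name: {name!r}")
    return CampaignName(*parts)


name = CampaignName("meta", "purchase", "prospecting-broad", "founder-pov", "20250106")
print(name.render())  # meta_purchase_prospecting-broad_founder-pov_20250106
```

The round trip matters more than the exact fields: if every name can be parsed back into columns, reports can group by audience or concept without manual cleanup.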

Use a Measurement Stack, Not Just Platform Reports

Platform dashboards are great for diagnosing delivery and creative performance, but they’re not enough for financial decisions. As spend grows, you’ll want a measurement stack that includes clean analytics, CRM visibility, and incrementality methods. If you’re using Meta heavily, it’s worth understanding tools like Conversion Lift because it pushes you toward a question that actually matters: did the ads cause additional outcomes, or just claim credit?

Protect the Account Like a Business Asset

Paid social advertising accounts are exposed to fraud, policy violations, brand impersonation, and billing issues. Treat governance as performance work. That means strong access controls, verified domains, clear approval workflows, and brand safety monitoring. If you operate in categories that attract scammers or impersonators, this isn’t paranoia; it’s basic defense, especially given how widely ad fraud has been reported across the industry (Reuters coverage is one example of how serious the issue can be at platform scale).

Define “Good” in Plain English

The final professional move is clarity. Before launching, define what success looks like in human terms: “We will acquire customers at a cost that allows profitability by the second purchase,” or “We will generate qualified pipeline at a sustainable cost per opportunity.” When the definition is clear, your testing becomes sharper, your creative briefs improve, and your reporting becomes useful instead of decorative.
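
A plain-English goal like “profitability by the second purchase” can be turned into a number before launch. The sketch below is one simplified interpretation: it treats the CAC ceiling as the expected gross margin from the first two orders across a cohort. The inputs (average order value, gross margin, second-purchase rate) are hypothetical, and the formula ignores returns, discounts, and fixed costs.

```python
def max_cac_by_second_purchase(average_order_value: float,
                               gross_margin: float,
                               second_purchase_rate: float) -> float:
    """Highest CAC repaid by expected margin from the first two orders."""
    if not 0 <= gross_margin <= 1 or not 0 <= second_purchase_rate <= 1:
        raise ValueError("gross_margin and second_purchase_rate must be between 0 and 1")
    margin_per_order = average_order_value * gross_margin
    # The first order always happens; the second happens for a share of customers.
    expected_orders = 1 + second_purchase_rate
    return margin_per_order * expected_orders


# Hypothetical inputs: $80 orders, 50% gross margin, 40% of customers buy again.
ceiling = max_cac_by_second_purchase(80.0, 0.5, 0.4)
print(f"CAC ceiling: ${ceiling:.2f}")  # CAC ceiling: $56.00
```

With a ceiling like this written down, a campaign acquiring customers at $70 is clearly outside the definition of “good,” no matter how strong the platform-reported ROAS looks.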

With that foundation, the rest of paid social advertising becomes a repeatable craft: build, measure, learn, and improve—without needing luck.

Tools Supporting The Framework

When paid social advertising is working, it rarely comes down to one magical platform setting. It’s usually a clean flow of signals: your ads generate demand, your site or app captures it, your tracking records it reliably, and your measurement tells you what actually caused the result.

Tools exist to protect that flow. Some help you collect better conversion signals when browsers and apps are stingy with data, like Google Tag Manager’s server-side tagging or the direct event pipeline in Meta’s Conversions API. Others help you stop believing your own dashboards too quickly by validating incrementality through experiments, such as the lift methodology in Meta’s Conversion Lift guidance or TikTok’s Conversion Lift Study documentation.

And then there are tools that help you make big budgeting decisions without getting trapped in last-click thinking. Google’s open-source MMM, Meridian, is one of the clearest signals that modern measurement is shifting toward privacy-safe modeling that can be calibrated with experiments. That matters because ad spend is still expanding in a way that punishes sloppy measurement; U.S. social media advertising alone hit $88.8B in 2024, so “good enough” reporting can become “expensive mistakes” very quickly.

Tool Categories

The easiest way to choose tools for paid social advertising is to stop thinking in logos and start thinking in jobs. Each category below supports a specific failure point in the system, which keeps your stack focused and easier to maintain.

1) Platform-Native Execution Tools

These are the ad managers you actually run campaigns in: Meta, TikTok, LinkedIn, YouTube, Pinterest, Snap, and others. They matter because every platform’s delivery system is built to learn from your inputs, and the fastest way to damage performance is to feed conflicting goals, inconsistent conversion events, or constantly changing structures.

Use platform-native tools for creative testing, placement controls, pacing, and diagnosing delivery issues. But be careful about turning platform reporting into your only source of truth, because the same system that optimizes delivery also “attributes” outcomes in a way that can flatter itself.

2) Signal Collection and Tagging

This category is about getting reliable events into the platforms so optimization has something real to learn from. In paid social advertising, weak signals show up as unstable results, unexplained fluctuations, and campaigns that can’t scale without efficiency collapsing.

Two common building blocks are:

- Server-side tagging to move measurement away from fragile browser-only tracking, using patterns described in Google’s server-side tagging introduction.

- Server-to-platform event sending for cleaner conversion data and better matching, using Meta Conversions API and similar event APIs on other platforms.

If your paid social advertising program depends on lead quality, offline sales, or CRM status, this is where you earn the right to optimize for what actually matters instead of what’s easiest to track.
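
To make server-to-platform event sending concrete, here is a rough sketch of building a purchase event in the shape Meta’s Conversions API expects. The field names (`event_name`, `event_time`, `event_id`, `action_source`, `user_data.em`, `custom_data`) follow my reading of Meta’s public documentation and should be verified against the current API version; the helper names, order ID, and URL are hypothetical. The code only builds the payload and does not send anything.

```python
import hashlib
import json
import time
import uuid
from typing import Optional


def hash_email(email: str) -> str:
    """Normalize (trim, lowercase) and SHA-256 hash an email address."""
    normalized = email.strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def build_purchase_event(email: str, value: float, currency: str,
                         source_url: str, event_id: Optional[str] = None) -> dict:
    """Build one server event; field names are assumed from Meta's docs."""
    return {
        "event_name": "Purchase",
        "event_time": int(time.time()),  # Unix timestamp in seconds
        # A stable ID lets the platform deduplicate against a browser event.
        "event_id": event_id or str(uuid.uuid4()),
        "action_source": "website",
        "event_source_url": source_url,
        "user_data": {"em": [hash_email(email)]},  # hashed, never raw
        "custom_data": {"currency": currency, "value": value},
    }


payload = {
    "data": [
        build_purchase_event(
            " Jane@Example.com ", 49.0, "USD",
            "https://example.com/checkout/thanks",
            event_id="order-1001",  # hypothetical order ID reused as event ID
        )
    ]
}
print(json.dumps(payload, indent=2))
```

In a real integration this payload would be posted to the events endpoint for your pixel or dataset with an access token, and the same pattern extends to qualified-lead or offline-sale events sourced from a CRM.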

3) Analytics and Attribution

Analytics tools help you understand paths and on-site behavior; attribution tools attempt to assign credit to touchpoints. They are related, but not interchangeable. Google Analytics 4 is a clean example: its attribution documentation explains how attribution models assign credit across touchpoints.

For paid social advertising, analytics is often most useful for conversion friction, audience behavior, and funnel drop-offs, while attribution is most useful for directional decision-making and channel comparisons. The moment you treat either one as a perfect representation of causality, your budget decisions get riskier.

4) Experimentation and Incrementality

This category answers the question your CFO actually cares about: did the ads cause additional business outcomes, or did they just collect credit for outcomes that would have happened anyway?

- Meta describes how randomized test and control designs can estimate incremental impact in its Conversion Lift best practices.

- TikTok positions experimentation as the way to measure “true impact” in its Conversion Lift Study documentation.

- Mobile growth teams often use platforms like AppsFlyer for structured incrementality testing, including remarketing incrementality experiments and newer guidance such as Incrementality for UA.

If your paid social advertising spend is meaningful, incrementality isn’t a “nice-to-have.” It’s how you stop optimizing for illusions.
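
The arithmetic behind a randomized test-and-control lift study is simple enough to sketch. The example below assumes you already have user and conversion counts for an exposed group and a holdout group; it computes incremental conversions, relative lift, and a two-proportion z-score as a rough significance check. It is a generic statistical sketch with hypothetical numbers, not the method any specific platform uses internally.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class LiftResult:
    incremental_conversions: float  # extra conversions in the test group
    relative_lift: float            # 0.20 means +20% over control
    z_score: float                  # two-proportion z statistic


def conversion_lift(test_users: int, test_conversions: int,
                    control_users: int, control_conversions: int) -> LiftResult:
    if test_users <= 0 or control_users <= 0:
        raise ValueError("both groups need at least one user")
    if control_conversions <= 0:
        raise ValueError("relative lift is undefined with zero control conversions")

    rate_test = test_conversions / test_users
    rate_control = control_conversions / control_users
    diff = rate_test - rate_control

    # Pooled standard error for the difference between two proportions.
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))

    return LiftResult(
        incremental_conversions=diff * test_users,
        relative_lift=diff / rate_control,
        z_score=diff / std_err if std_err > 0 else float("nan"),
    )


# Hypothetical study: 100,000 users per group, 1,200 vs 1,000 conversions.
result = conversion_lift(100_000, 1_200, 100_000, 1_000)
print(f"{result.incremental_conversions:.0f}")  # 200
print(f"{result.relative_lift:.1%}")            # 20.0%
print(f"{result.z_score:.2f}")
```

In this made-up study the platform might happily report 1,200 conversions, but only about 200 of them were caused by the ads. That gap is the “illusion” incrementality testing is meant to expose.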

5) Marketing Mix Modeling

MMM is built for strategic decisions: how to allocate budget across channels and time, even when user-level tracking is incomplete. The most notable recent shift is the push toward open, privacy-safe models that advertisers can run and audit. Google’s Meridian is a clear example of that direction, and Google’s longer-form thinking about what it’s designed to do is outlined in its Meridian overview.

For paid social advertising, MMM becomes dramatically more useful when it’s calibrated with incrementality experiments. That combination keeps you from “over-correcting” based on noisy attribution swings.

6) Data Warehouse and BI

Warehousing and BI are where paid social advertising becomes operationally mature. You consolidate platform spend, conversion events, CRM outcomes, and margin data so reporting reflects reality, not just ad dashboards.

This category is also where you reduce argument cycles. When everyone is looking at the same definitions of revenue, lead quality, and cohort behavior, you spend more time improving outcomes and less time debating whose numbers are “right.”

7) Governance, Security, and Policy Hygiene

Tools and processes here protect your assets: ad accounts, pixels, domains, catalogs, and data access. This isn’t glamorous, but it’s part of professional paid social advertising because one policy issue or compromised tag can disrupt performance overnight.

Even in measurement tooling, security considerations matter. For example, server-side tagging introduces new controls and responsibilities that Google documents in its server-side tagging release notes and broader server-side materials, because you’re now operating a tagging environment, not just pasting scripts on a site.

Tool Comparison

Comparing tools for paid social advertising only works if you compare them against the job you need done. The same tool can be perfect for one company and unnecessary for another, depending on spend, sales cycle, and how much of your revenue happens outside the browser.

Platform Tools: Fast Learning, Limited Truth

Platform-native tools are unbeatable for speed. You can ship creative, adjust budgets, and see directional signals quickly. They’re also the most fragile place to make strategic calls because attribution is optimized for platform usefulness, not for proving causality.

If you want a practical rule: use platform tools to run and diagnose paid social advertising day-to-day, then validate the business impact with experiments and triangulation.

Server-Side Tools: Better Signals, More Responsibility

Server-side measurement improves signal reliability when client-side tracking is disrupted. In its server-side overview, Google frames server-side tagging as a way to move measurement instrumentation into a server container with more control and flexibility. Meta’s developer documentation describes the Conversions API in similar terms, as a way to send events directly from your servers.

The tradeoff is ownership. You need engineering support, version control discipline, and ongoing monitoring, because you’re now operating part of your measurement pipeline as infrastructure.

Attribution Tools vs. Incrementality Tools: Explanation vs. Proof

Attribution explains. Incrementality proves. Both are useful, but they answer different questions.

- Attribution helps you understand paths and relative contribution, with GA4 laying out attribution concepts in its official attribution guide.

- Incrementality helps you estimate causal lift through experimentation, with lift methodologies outlined in Meta’s Conversion Lift guidance and TikTok’s Conversion Lift Study documentation.

In paid social advertising, attribution is how you steer. Incrementality is how you make confident, high-stakes budget decisions.

MMM: The Strategic Layer That Handles Messy Reality

MMM is strongest when you need cross-channel budget decisions and you can’t rely on perfect user-level tracking. In its announcement that Meridian is available to everyone, Google positions it as a modern MMM approach built for today’s journeys. The practical value shows up when you can connect MMM insights back to experiments and real business constraints like margin, capacity, and seasonality.

Real Tool Stack Stories

The easiest way to understand tool stacks is to see how real teams behave when the numbers stop making sense. These stories aren’t about shiny software. They’re about what happens when paid social advertising meets real-world measurement problems and the team has to fight their way back to clarity.

Dentsu’s Search Lift Wake-Up Call

The first sign something was off wasn’t a small dip. It was the kind of performance swing that makes people start blaming creative, then blaming targeting, then blaming the algorithm, all in the same meeting. Search teams insisted they were carrying the final conversion, while paid social advertising teams argued they were “warming the market” and getting none of the credit. Everyone had dashboards, and none of the dashboards agreed.

Backstory: Dentsu had been granted early access to Meta’s Search Lift tool and decided to treat it like a serious measurement program rather than a one-time experiment. They ran a wide set of studies across luxury and fashion ecommerce brands to understand whether Meta exposure changes how people search on traditional search engines. Their approach was built around a simple idea: if the ads change intent, the impact should show up in search behavior, not just in clicks inside social feeds.

The wall hit when last-click models kept telling the same story: paid search looked like the hero, and paid social advertising looked like an “assist” at best. But in real consumer behavior, people don’t see a social ad and immediately buy; they often go search, compare, and return later. The measurement gap wasn’t theoretical anymore—it was actively misallocating budgets and creating internal conflict.

The epiphany came out of aggregated lift results. In their published write-up of the program, iProspect (a dentsu brand) reported that exposure to Meta ads was associated with a 10.4% average increase in search volume compared to baseline, and it highlighted increases in paid search volume for brands as well. That reframed the conversation: paid social advertising wasn’t “stealing” credit from search, and search wasn’t “saving” social—it was one system shaping demand and then harvesting it in different places.

The journey that followed wasn’t glamorous, but it was decisive. Teams started treating lift testing as a recurring input, not a one-off proof point. They adjusted reporting to account for cross-channel influence, and they stopped making weekly budget cuts based on attribution swings that ignored the search behavior social campaigns were driving. Instead of asking “which channel gets credit,” the question became “what mix creates the most incremental demand?”

Then the final conflict arrived: people tried to operationalize lift insights using the same old habits. Search teams wanted to claim the entire uplift because conversions happened there, while social teams wanted to call it a pure branding effect. The fix was governance: shared definitions, agreed experimentation cadence, and budget decisions tied to what the lift tests were actually measuring, not who owned which dashboard.

The dream outcome wasn’t a perfect attribution model. It was something better: a calmer, more profitable decision loop. Paid social advertising campaigns could be evaluated for the demand they created, search could be planned with the reality of that demand in mind, and budgets could move with confidence instead of politics. Once the organization accepted that “searches originate from somewhere,” the entire system got easier to manage, echoing the same industry framing seen in coverage of Meta’s search lift approach in Campaign Live’s write-up.

The Meridian Moment for Budget Decisions

It starts with a familiar kind of panic: performance looks fine inside each platform, but the business result doesn’t match the story the dashboards are telling. The paid social advertising team is scaling spend because ROAS “looks strong,” yet finance is seeing uneven revenue and weaker profitability. Everyone feels like they’re being gaslit by metrics that used to line up.

Backstory: privacy changes, modeled conversions, and cross-device behavior have made user-level certainty harder to achieve at scale. Many teams responded by adding more attribution tools and more dashboards, hoping the “right view” would appear. But more views often just meant more contradictions, because the underlying data is still incomplete.

The wall is when leadership asks a simple question that no one can answer cleanly: “How much budget should we move from channel A to channel B next quarter?” If your only answer is last-click trends or platform-reported ROAS, you’re making a strategic bet on the least reliable type of evidence. That’s where teams realize they need a measurement layer built for allocation, not just reporting.

The epiphany is that allocation questions need a different kind of model, which is why open MMM has been getting attention. Google’s launch of Meridian made it easier for teams to run a modern marketing mix model designed for today’s fragmented journeys, and Google’s thinking on how it fits into strategic planning is spelled out in its Meridian overview. The key shift is psychological as much as technical: you stop demanding impossible certainty from user-level attribution and start optimizing with a model built for reality.

The journey looks like grown-up measurement work. Teams connect spend and outcome data, test model assumptions, and then calibrate the model using experiments so it doesn’t drift into fantasy. Lift tools become the guardrails: TikTok frames this causal question directly in its Conversion Lift Study documentation, and Meta pushes similar discipline through Conversion Lift methodology.

The final conflict is internal: people want MMM to spit out a single “true ROAS” number that ends all debate. It won’t. MMM is a decision tool, not a moral authority, and it still needs human judgment about constraints like capacity, margins, and brand risk. The teams that win are the ones that treat measurement as a system that improves over time, not a one-time software install.

The dream outcome is strategic calm. Paid social advertising decisions stop being whiplash reactions to weekly attribution changes and become deliberate quarterly bets backed by modeled insights and causal validation. Instead of endlessly fighting over credit, the organization focuses on what matters: which mix produces incremental growth at sustainable unit economics.

Map Your Signal Chain Before You Buy Anything

Start with the end: what outcome matters most, and where does it live? If it’s a purchase, it might live in Shopify or your payment processor. If it’s pipeline, it might live in a CRM. If it’s an app subscription, it might live in your billing system. Once you know where the truth lives, you can decide how to pipe that truth into paid social advertising platforms so optimization is learning from real outcomes, not proxy events.

Choose One Attribution View for Steering

You can still look at platform dashboards, but you need a consistent attribution lens for week-to-week decisions. GA4 explains the basics of attribution models and how credit is assigned in its attribution documentation. The goal isn’t to find a “perfect” model; it’s to pick a consistent one that helps you steer while you validate impact elsewhere.

Harden Tracking With Server-Side Where It Matters

If paid social advertising is a core growth driver, server-side isn’t a buzzword—it’s reliability. Google’s documentation on server-side tagging lays out the concept and why it changes what you can control. Meta’s Conversions API shows the parallel approach for sending events directly from servers so your optimization signals aren’t entirely dependent on browser behavior.

Implement server-side where it has real leverage: lead qualification, purchase events, subscription starts, offline conversions, and any “high-value” event that tends to break when browsers update.

Add Incrementality on a Cadence

The most common mistake is treating incrementality as a rescue mission you only run when performance drops. Lift testing is most valuable when it’s routine, because you can see how incrementality changes across seasons, creative strategies, and audience saturation.

- Meta outlines lift study discipline in its Conversion Lift best practices.

- TikTok’s framework is clear in its Conversion Lift Study documentation.

- AppsFlyer provides concrete guidance for structuring incrementality experiments, such as remarketing tests and updated UA incrementality guidance.

Once you have incrementality results, use them as calibration points for attribution and MMM rather than letting every dashboard compete for the last word.
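
One simple way to use lift results as a calibration point is an incrementality factor: the share of platform-attributed conversions that a lift test found to be incremental. The sketch below assumes both numbers cover the same campaigns and time window, and that incremental and attributed conversions are worth roughly the same revenue; the figures are hypothetical.

```python
def incrementality_factor(incremental_conversions: float,
                          attributed_conversions: float) -> float:
    """Share of platform-attributed conversions that were truly incremental."""
    if attributed_conversions <= 0:
        raise ValueError("attributed_conversions must be positive")
    return incremental_conversions / attributed_conversions


def calibrated_roas(platform_roas: float, factor: float) -> float:
    """Scale platform-reported ROAS by the incrementality factor."""
    return platform_roas * factor


# Hypothetical quarter: the lift test found 600 incremental conversions,
# while the platform attributed 1,000 over the same window.
factor = incrementality_factor(600, 1_000)
print(f"{factor:.2f}")                        # 0.60
print(f"{calibrated_roas(4.0, factor):.2f}")  # 2.40
```

The factor will drift with season, creative, and audience saturation, which is another reason to re-run lift tests on a cadence rather than reuse one number indefinitely.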

Build a Decision Dashboard, Not a Vanity Dashboard

A decision dashboard answers: what changed, why it likely changed, and what we’re going to do next. It combines spend, creative coverage (what’s live and how fresh it is), conversion rate health, and a few business outcomes that reflect quality. When your stack supports those decisions, paid social advertising becomes a repeatable operating system instead of a constant emergency.

Keep the Stack Small and Auditable

The more tools you add, the more chances you create for mismatched definitions and silent data loss. A strong default stack for many teams is:

- Execution: native platform ad managers

- Collection: server-side tagging and/or direct event APIs like Meta Conversions API

- Steering view: one analytics and attribution layer guided by GA4 attribution concepts

- Proof layer: lift testing via TikTok, Meta, and/or structured platforms like AppsFlyer

- Strategy: MMM where needed, with tools like Meridian

That stack won’t win awards for novelty, but it will do something far more valuable: it will keep your paid social advertising decisions grounded in reliable signals, causal checks, and business outcomes you can trust.

Step By Step Implementation

The fastest way to make paid social advertising feel “unpredictable” is to launch with loose inputs and then try to optimize your way out of the chaos. A cleaner approach is to build the foundation once, then run a repeatable loop where the platform can learn from stable signals and you can learn from stable reporting.

1) Define the win condition in business terms

Start with a sentence your finance partner would recognize, such as “Acquire first-time customers at a margin-positive CAC,” “Generate qualified pipeline that converts to closed-won,” or “Drive incremental bookings during a seasonal window.” This matters because the platforms will happily optimize for whatever you ask for, even if the requested outcome is a proxy that doesn’t map to profit.

2) Pick one primary conversion event that reflects real value

If the business goal is purchases, optimize for purchases. If it is leads, don’t stop at form submits when lead quality is the real constraint; plan how qualified leads (or offline outcomes) will flow back into your measurement and optimization setup. When the conversion event drifts away from real value, paid social advertising starts “improving” while your business results stay flat.

3) Set up measurement you can defend

Use an analytics view to understand on-site behavior and a platform view to diagnose delivery, but don’t confuse either with causality. If you’re using GA4, its attribution documentation explains how credit is distributed across touchpoints. Choosing one model and sticking with it keeps reporting consistent week to week, so you steer decisions in one direction instead of five at once (GA4 attribution documentation).

For higher-stakes decisions, plan incrementality early. Meta supports lift studies for causal measurement through its Marketing API guidance (Lift studies on Meta), and it also explains lift and holdouts in its business help materials (lift and holdouts overview).

4) Build a simple campaign architecture

A practical default for paid social advertising is to separate campaigns by job-to-be-done, not by personal preference. In practice, that usually means one prospecting campaign that can learn, one engaged retargeting campaign that supports decision-making, and one customer expansion campaign if you have meaningful repeat behavior. Complexity should be earned by clear constraints, not by habit.

5) Create a creative test plan before you launch

If you launch without a plan for creative velocity, you’re basically promising future-you a crisis. Decide what “new” means (new hook, new proof, new format), how many new assets ship each week, and how you’ll decide winners. Paid social advertising runs on learning, and learning slows down when creative refresh becomes an occasional event instead of a routine.

6) Set budgets to support learning, not just to limit risk

Budgets should be large enough to generate signal. If your conversion volume is low, micro-budget tests tend to create false confidence because the system can’t learn. This is also why many teams treat budget as an input to measurement maturity: the U.S. digital ad market hit $258.6B in 2024, and the brands that scale spend responsibly tend to pair spending growth with stronger measurement discipline.
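
A quick way to sanity-check whether a budget can generate signal is to work backward from your target CPA. The sketch below assumes you pick a minimum number of weekly conversions you want the system to learn from. The threshold of 50 used in the example is a hypothetical planning input, not a platform guarantee, and the function names are illustrative.

```python
import math


def minimum_weekly_budget(target_cpa: float, min_weekly_conversions: int) -> float:
    """Budget needed to expect the chosen number of conversions per week at the target CPA."""
    return target_cpa * min_weekly_conversions


def weeks_to_signal(weekly_budget: float, target_cpa: float, conversions_needed: int) -> int:
    """Whole weeks needed to accumulate the desired conversions at a given weekly budget."""
    if weekly_budget <= 0 or target_cpa <= 0:
        raise ValueError("weekly_budget and target_cpa must be positive")
    expected_weekly_conversions = weekly_budget / target_cpa
    return math.ceil(conversions_needed / expected_weekly_conversions)


# Hypothetical inputs: $40 target CPA, 50 conversions wanted per week.
print(minimum_weekly_budget(40, 50))
# The same goal on a $500 weekly micro-budget takes several weeks to reach.
print(weeks_to_signal(500, 40, 50))
```

If the second number comes out at a month or more, the test is likely too small to tell you much within a normal decision cycle.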

7) Run a preflight checklist

Before you hit publish, confirm the basics that often cause “mystery performance issues”: the conversion event fires cleanly, landing pages load quickly, UTMs are consistent, exclusions prevent wasted overlap, and reporting definitions match what your team will use in meetings. This checklist doesn’t feel exciting, but it’s where professional paid social advertising separates itself from frantic trial-and-error.
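
Parts of this checklist can be automated. As a minimal sketch, the snippet below checks landing page URLs for consistent UTM parameters using only the Python standard library. The required keys and the lowercase rule are an assumed team standard, and `utm_problems` is a hypothetical helper name.

```python
from urllib.parse import urlparse, parse_qs

# Assumed team standard: these three parameters must be present on every ad URL.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def utm_problems(url: str) -> list[str]:
    """Return a list of UTM issues found in the URL (empty list means it passes)."""
    params = parse_qs(urlparse(url).query)
    problems = []
    for key in REQUIRED_UTMS:
        values = params.get(key)
        if not values:
            # parse_qs drops blank values by default, so "utm_source=" also lands here.
            problems.append(f"missing {key}")
        elif values[0] != values[0].strip().lower():
            problems.append(f"{key} is not lowercase/trimmed: {values[0]!r}")
    return problems


for url in (
    "https://example.com/?utm_source=meta&utm_medium=paid_social&utm_campaign=spring_sale",
    "https://example.com/?utm_source=Meta&utm_medium=paid_social",
):
    print(url, "->", utm_problems(url) or "ok")
```

Running a check like this against every destination URL before launch catches the casing and missing-parameter mistakes that later split one campaign into several rows in reporting.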

Execution Layers

Paid social advertising works best when execution is layered. Each layer has its own job, and if one layer is weak, the others have to compensate in expensive ways.

Layer 1: Strategy and offer

This is where you decide what you’re asking people to do and why they should care. If the offer is vague or the positioning sounds like everyone else, your CPM becomes the tax you pay for being ignorable.

Layer 2: Creative and messaging

Creative is where your targeting becomes real. It’s also where you can control differentiation. A strong paid social advertising operation builds repeatable creative angles (pain, aspiration, proof, comparison, behind-the-scenes), then adapts them into native formats that match how people actually consume content.

Layer 3: Media and delivery controls

This is the engineering of distribution: objective selection, placement strategy, pacing, and guardrails that prevent the system from optimizing toward low-quality outcomes. You’re not trying to outsmart the algorithm; you’re trying to feed it clear, consistent inputs so it can learn efficiently.

Layer 4: Conversion experience

Landing pages, forms, checkout, and onboarding are part of paid social advertising even when teams pretend they’re not. When conversion friction is high, the platform compensates by finding cheaper clicks instead of better buyers, and you end up chasing volume that doesn’t translate into revenue.

Layer 5: Data and measurement

This layer is what keeps you from “optimizing a hallucination.” Use analytics to spot behavior patterns, use platform diagnostics to manage delivery, and use experiments when you need causal confidence. If you operate at meaningful scale, lift testing and holdouts are how you keep decision-making grounded (Meta lift and holdouts).

Layer 6: Governance and safety

Account access, domain verification, and policy hygiene are not admin chores. They’re risk controls. Paid social advertising is an operating asset, and disruptions here can pause growth just as effectively as a bad creative streak.

Optimization Process

Optimization is where paid social advertising either compounds or collapses into constant fire drills. The trick is to optimize in the same order every time so you don’t waste weeks “fixing” a symptom while the real problem stays untouched.

1) Validate tracking before you touch campaigns

If conversion events are broken or inconsistent, every other optimization is just guesswork. Confirm that your primary conversion event is firing cleanly and that reporting definitions haven’t drifted. If you’re using lift tests, keep the basics in mind: Meta’s lift study guidance is built around controlled experiments designed to measure incremental impact, not just attribution credit (Lift studies on Meta).

2) Diagnose creative fatigue before you blame targeting

Many performance drops that feel like “the audience stopped working” are creative saturation in disguise. Look for declining hold rates on video, falling click quality, and rising frequency without conversion improvement. Then refresh the message, not just the audience settings.
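
The fatigue pattern described here can be expressed as a simple week-over-week rule. The sketch below is one possible heuristic, assuming you export frequency, CTR, and conversion rate per creative. The 10% change threshold and the names `WeeklyCreativeStats` and `looks_fatigued` are hypothetical and should be tuned to your own account.

```python
from dataclasses import dataclass


@dataclass
class WeeklyCreativeStats:
    frequency: float        # average impressions per person reached
    ctr: float              # clicks / impressions
    conversion_rate: float  # conversions / clicks


def looks_fatigued(previous: WeeklyCreativeStats,
                   current: WeeklyCreativeStats,
                   min_change: float = 0.10) -> bool:
    """Flag a creative when frequency rises and CTR falls by at least
    min_change week over week, without any gain in conversion rate."""
    frequency_up = current.frequency >= previous.frequency * (1 + min_change)
    ctr_down = current.ctr <= previous.ctr * (1 - min_change)
    conversion_not_improving = current.conversion_rate <= previous.conversion_rate
    return frequency_up and ctr_down and conversion_not_improving


last_week = WeeklyCreativeStats(frequency=2.0, ctr=0.012, conversion_rate=0.030)
this_week = WeeklyCreativeStats(frequency=2.6, ctr=0.009, conversion_rate=0.028)
print(looks_fatigued(last_week, this_week))
```

A flag from a rule like this is a prompt to review the creative, not proof of fatigue; seasonality or an auction shift can produce the same pattern.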

3) Improve conversion friction before you scale spend

Scaling a leaky funnel makes the leak more expensive. Use your analytics view to identify where users hesitate or abandon, and make fixes that remove friction. GA4’s attribution documentation is useful here because it helps you keep your steering view consistent while you focus on the actual user experience that converts (GA4 attribution documentation).

4) Scale with constraints, not optimism

When performance is strong, scale in a way that preserves learning. Increase budgets gradually, avoid constant structural changes, and keep your conversion event stable so the system can continue optimizing. At platform scale, the economic incentive for optimization is enormous—Meta reported $200.97B in 2025 revenue, so its delivery systems are built to maximize outcomes within your constraints, not to protect you from messy inputs.
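
"Gradually" is easier to follow when it is written down as a schedule. The sketch below generates a stepped budget ramp, assuming a maximum increase per step. The 20% default is a hypothetical guardrail chosen for illustration, not a platform rule, and `budget_ramp` is an illustrative name.

```python
def budget_ramp(current: float, target: float, max_step: float = 0.20) -> list[float]:
    """Return the sequence of budgets from current to target, never raising
    the budget by more than max_step (as a fraction) in a single step."""
    if current <= 0 or target < current or max_step <= 0:
        raise ValueError("need 0 < current <= target and max_step > 0")
    steps = []
    budget = current
    while budget < target:
        budget = min(budget * (1 + max_step), target)
        steps.append(round(budget, 2))
    return steps


# Hypothetical example: doubling a $1,000 daily budget in capped steps.
print(budget_ramp(1000, 2000))
```

Each step can then be tied to a check-in: move to the next budget only if the primary conversion event and CPA held steady at the previous one.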

5) Use experiments to settle arguments

When teams argue about whether paid social advertising is “incremental” or “just retargeting people who would buy anyway,” stop debating and test. Lift studies and holdouts are designed for this exact situation (Meta lift and holdouts). Once you have results, you can calibrate your reporting and budget decisions around what actually changes outcomes.
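
To show what a lift readout contains, here is a simplified sketch of the arithmetic behind a test-versus-control comparison. It uses a standard two-proportion z-test and assumes randomly assigned groups. Platform lift studies apply their own methodology, so treat this as an approximation for intuition, with hypothetical numbers and function names.

```python
import math


def conversion_lift(test_users: int, test_conversions: int,
                    control_users: int, control_conversions: int) -> dict:
    """Compare conversion rates between exposed (test) and held-out (control) groups."""
    if control_conversions <= 0:
        raise ValueError("control group needs at least one conversion")
    p_test = test_conversions / test_users
    p_control = control_conversions / control_users
    # Pooled rate under the null hypothesis that ads made no difference.
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    std_error = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (p_test - p_control) / std_error
    return {
        "relative_lift": (p_test - p_control) / p_control,
        "incremental_conversions": (p_test - p_control) * test_users,
        "z_score": z,
        # Two-sided p-value from the standard normal distribution.
        "p_value": math.erfc(abs(z) / math.sqrt(2)),
    }


result = conversion_lift(test_users=100_000, test_conversions=1_200,
                         control_users=100_000, control_conversions=1_000)
print(f"relative lift: {result['relative_lift']:.1%}")
print(f"incremental conversions: {result['incremental_conversions']:.0f}")
print(f"p-value: {result['p_value']:.5f}")
```

The incremental conversions figure, not the attributed total, is the number to carry into budget discussions.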

6) Zoom out with MMM when channel interactions get messy

As spend grows across platforms, attribution gets noisier and channel interactions get stronger. MMM becomes useful because it’s built for allocation decisions even when user-level tracking is incomplete. Google’s open-source MMM, Meridian, is positioned specifically to help advertisers measure outcomes across channels and allocate budgets with more confidence.

Implementation Stories

A good paid social advertising implementation doesn’t just produce metrics. It changes behavior inside a business: how teams decide, how they tell stories, and how they turn attention into outcomes. One of the cleanest real-world examples comes from travel, where seasonal pressure turns weak execution into immediate pain.

Turkish Airlines Turning Black Friday Into Demand

It started with a familiar kind of dread: Black Friday was approaching, competition was loud, and the easiest move—discounts—risked making the brand feel interchangeable. Every day that passed meant more travelers locking in plans, and the window to influence decisions was shrinking. The team didn’t need “more ads”; they needed a way to break through without racing to the bottom.

Backstory: Turkish Airlines partnered with Skyscanner with a clear intention to stand out in a crowded moment without relying on price cuts. The goal was to build an emotional connection and spark interest for Istanbul among UK and US travelers inside Skyscanner’s ecosystem (Skyscanner’s case study). The plan leaned into storytelling, high-impact placements, and a format that could hold attention long enough to create real intent.

The wall showed up in the obvious place: the internet scroll. People were bombarded with deals, and “another travel ad” was the easiest thing to ignore. Even if someone felt a spark of interest, it could fade instantly if there wasn’t a reason to engage. Without a hook strong enough to pull people into a moment, paid social advertising would end up as background noise.

The epiphany was simple: make the campaign an experience, not a banner. Skyscanner built a bespoke landing page and wrapped it around an easy, one-question quiz with a high-value prize—two return flights to Istanbul—so engagement felt like play, not a hard sell (campaign description). Instead of trying to outbid competitors, the team tried to out-story them, using culture and curiosity as the entry point.

The journey became a coordinated multi-format rollout that surrounded travelers with consistent messaging: homepage hero banners, display placements, paid social content, affiliate promotions, and targeted emails were orchestrated to feel like one cohesive narrative rather than scattered tactics (multi-format strategy). The campaign ran for three weeks, timed to the peak seasonal booking surge, with the goal of turning attention into searches and searches into bookings. Even the content itself did heavy lifting, using a cultural article to make Istanbul feel vivid and specific rather than generic and “touristy.”

Then the final conflict arrived, because short campaigns have a cruel flaw: momentum dies the moment the spend stops. Interest is fragile, and many campaigns waste it by ending without a follow-through plan. The team addressed that risk with post-campaign re-engagement using exclusive promo codes to convert the initial excitement into bookings (re-engagement approach).

The dream outcome was the kind that changes how teams think about paid social advertising. The case study reports a 38% year-over-year increase in searches for Istanbul, nearly three million travelers reached, and 4.6 million impressions, plus performance signals like a 500% higher click-through rate compared to benchmarks (reported results). More importantly, the campaign demonstrated a repeatable principle: when you combine a strong hook, native storytelling, and a coordinated distribution plan, you can create demand in a short window and still capture it after the peak moment passes.

Run a weekly cadence that ships creative and learns fast

Pick one day for creative shipping, one day for analysis, and one day for adjustments. This keeps execution steady and prevents the common failure mode where teams tweak campaigns daily while creative stays unchanged for weeks. Your goal is to make learning predictable, not to chase every fluctuation.

Maintain one source of truth for decision-making

Choose one analytics view for steering so your team speaks the same language in meetings. GA4’s documentation on attribution is helpful here because it clarifies how different models distribute credit, which reduces confusion when results shift across reporting views (GA4 attribution documentation).

Treat experimentation as routine, not emergency response

If the budget is meaningful, incrementality testing should be scheduled, not improvised. Lift tests and holdouts exist to answer the “did this cause lift?” question in a way attribution can’t (Meta lift studies). Once the organization trusts the proof layer, paid social advertising becomes calmer because fewer decisions are based on fragile assumptions.

Document standards and protect the account

Write down naming conventions, conversion definitions, and who can change what. This sounds basic, but it prevents silent drift that makes performance feel mysterious. The biggest performance wins often come from removing self-inflicted chaos so the platform can learn and your team can iterate with clarity.
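
A written naming convention is easier to enforce when it can be checked automatically. The sketch below validates campaign names against one possible pattern, `platform_job_audience_YYYYMM`. That pattern, the allowed values, and the function name are all hypothetical; substitute whatever standard your team documents.

```python
import re

# Hypothetical convention: platform_job_audience_YYYYMM, all lowercase.
CAMPAIGN_NAME = re.compile(
    r"(meta|tiktok|pinterest|linkedin|snap)"   # platform
    r"_(prospecting|retargeting|expansion)"    # job-to-be-done
    r"_[a-z0-9-]+"                             # audience label
    r"_\d{6}"                                  # launch month as YYYYMM
)


def is_valid_campaign_name(name: str) -> bool:
    """True when the whole name matches the documented convention."""
    return CAMPAIGN_NAME.fullmatch(name) is not None


for name in ("meta_prospecting_us-broad_202501", "Meta Prospecting Jan"):
    print(name, "->", is_valid_campaign_name(name))
```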

Know when to use MMM to guide allocation

When you’re running multiple platforms and performance depends on interactions across channels, MMM can help you allocate budgets without relying on perfect user-level tracking. Meridian is a good example of the direction measurement is moving: a privacy-safe, open approach designed to support smarter allocation decisions (Meridian). Used well, it complements lift tests and keeps strategic planning grounded.

Statistics And Data

Paid social advertising is one of those channels where it’s easy to feel confident and still be wrong. The dashboards are rich, the numbers update hourly, and the platforms are happy to show you a clean story. The job of analytics is to turn that story into decisions you can defend when the spend gets real.

Start with the scale you’re operating in. U.S. digital advertising hit a record $258.6B in 2024, and social media advertising inside that total reached $88.8B. At the same time, the addressable audience is enormous: Kepios’ tracking of “user identities” shows 5.66B social media user identities globally as of October 2025, which is why paid social advertising keeps pulling budget even when measurement gets harder.

What these macro numbers really tell you is this: paid social advertising is not a side channel anymore. It’s a core budget line that needs the same level of measurement rigor you’d expect from any major investment.

Performance Benchmarks

Benchmarks are useful when you treat them like context, not like targets. A “good” CTR or CPM doesn’t exist in isolation; it changes with creative quality, audience saturation, auction competition, seasonality, and what the platform is optimizing for.

Auction pricing trends worth watching

One of the cleanest benchmark signals is pricing movement over time. Tinuiti’s Q4 2024 Digital Ads Benchmark Report shows that across Meta properties, CPM rose 5% year over year in Q4 2024 while overall Meta spend grew 15% year over year in the same period. Inside that mix, Tinuiti reports Instagram CPM up 15% year over year in Q4 2024, a reminder that “efficient” can flip quickly when competition intensifies around high-performing inventory.

Placement mix as a benchmark for creative reality

When placement mix shifts, your creative requirements shift too. Tinuiti’s Q4 2024 report shows Instagram Stories reaching 47% of ad impressions in Q4 2024, with Instagram Feed down to 29% and Reels at 18%. If your best assets are still static feed-first layouts, that distribution is a quiet warning that your creative system is fighting the platform, not flowing with it.

Platform mix benchmarks for budget allocation

Spend share can be a helpful sanity check when you’re trying to understand where the auction pressure really is. In Tinuiti’s Q4 2024 Meta breakdown, Facebook accounted for 64% of spend and Instagram for 35%. That doesn’t tell you what you “should” do, but it does tell you where many performance advertisers are placing their bets at scale.

Content-format benchmarks that influence paid performance

Even when you’re focused on paid social advertising, organic format shifts often show where audiences are spending attention. Emplifi’s 2025 Social Media Benchmarks report (covering 2023–2024 activity) shows TikTok leading brand account growth with 21% median monthly follower growth, while Instagram maintained 6% monthly growth. The same report notes that Reels became the most-used Instagram post type by year’s end, reaching 38% of brand posts, which is a useful proxy for where short-form video skills are becoming table stakes.

Analytics Interpretation

Analytics interpretation is the difference between “reporting” and “running a system.” Paid social advertising generates a lot of numbers, but only a few of them are decision-grade at any given moment.

Stop chasing single metrics and start reading relationships

A rising CPM isn’t automatically bad; it can be the cost of reaching a better audience or winning more competitive placement. A falling CPC isn’t automatically good; it can be the platform finding cheaper clicks that don’t convert. The real signal is how CPM, CTR, conversion rate, and average order value move together, because that combination tells you whether the system is buying attention that turns into outcomes.
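
The relationship between these metrics is plain arithmetic: CPC is CPM divided by clicks per thousand impressions, CPA is CPC divided by conversion rate, and ROAS is average order value divided by CPA. The sketch below works through two hypothetical scenarios to show how a higher CPM can still produce a better outcome. The function name and all figures are illustrative.

```python
def funnel_economics(cpm: float, ctr: float, conversion_rate: float, aov: float) -> dict:
    """Derive CPC, CPA, and ROAS from CPM, CTR, conversion rate, and average order value."""
    cpc = cpm / (1000 * ctr)      # cost per click
    cpa = cpc / conversion_rate   # cost per conversion
    roas = aov / cpa              # revenue per unit of ad spend
    return {"cpc": cpc, "cpa": cpa, "roas": roas}


# Scenario A: cheaper impressions, weaker engagement and conversion.
cheap = funnel_economics(cpm=10.0, ctr=0.010, conversion_rate=0.020, aov=80.0)
# Scenario B: 40% higher CPM, but better CTR and conversion rate.
pricey = funnel_economics(cpm=14.0, ctr=0.012, conversion_rate=0.035, aov=80.0)

for label, result in (("A", cheap), ("B", pricey)):
    print(f"{label}: CPC={result['cpc']:.2f} CPA={result['cpa']:.2f} ROAS={result['roas']:.2f}")
```

In this example, scenario B pays more per impression and per click yet ends with a lower CPA and higher ROAS, which is why reading any one of these metrics alone can mislead.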

Separate delivery problems from conversion problems

Delivery problems show up as instability in reach, frequency, or learning. Conversion problems show up after the click: bounce rates, form abandonment, checkout errors, or low lead quality. When you treat both problems the same, you end up “optimizing” paid social advertising inside the platform while the real leak lives on the landing page or in the sales process.

Triangulate attribution with proof when the stakes rise

Attribution is a steering wheel, not a courtroom verdict. GA4 lays out how attribution models distribute credit across touchpoints in its attribution documentation, which helps you keep internal reporting consistent. When you need causal confidence, use experiments designed for incrementality; Meta’s lift studies are explicitly built around test and control groups to estimate incremental impact in its lift study guide.

Use market signals as guardrails, not excuses

Sometimes performance shifts because the auction shifts, not because your campaign got worse. Meta’s 2025 earnings release shows that its average price per ad increased year over year in both Q4 and the full year. That doesn’t mean you accept worse results; it means you interpret changes with the reality that pricing is moving and your advantage needs to come from creative, conversion rate, and measurement discipline.

Case Stories

Real analytics stories in paid social advertising rarely feel tidy while they’re happening. They feel like pressure, confusion, and competing narratives—right up until a team finds the signal that makes the chaos legible.

QUAY’s Moment of Truth With a Conversion Lift Study

It started with a gut punch that didn’t show up in the dashboard. Spend was rising, creative was shipping, and the platform numbers looked fine, but the team still felt the fear that they were paying for activity instead of paying for growth. The worst part was how plausible both stories sounded: “the ads are working” and “the ads are just claiming credit.” In paid social advertising, that’s the moment where teams either double down blindly or demand proof.

Backstory: QUAY is a sunglasses brand that has to win in a market where people can browse endlessly, hesitate, and buy later from somewhere else. TikTok offered attention at scale, but attention is not the same as incremental revenue. The brand partnered with TikTok and ran campaigns with the intention of measuring impact beyond clicks, not just reporting it (TikTok’s QUAY conversion lift case study).

The wall hit when the team realized that the usual reporting language couldn’t settle the internal tension. If someone clicked and purchased, the platform claimed a win, but that didn’t answer the real question: would they have purchased anyway? Without a causal method, every strong week could be a mirage, and every weak week could be a false panic. That ambiguity is expensive, because it makes budgeting feel like gambling.

The epiphany was to stop arguing about attribution models and run a structured test. TikTok describes the setup directly: QUAY’s audience was split into test and control groups through a Conversion Lift Study so the brand could measure incremental impact on behaviors like search, add-to-cart, and purchases (study design details). Instead of asking the dashboard to “be honest,” they built a measurement environment that could answer the causal question.

The journey that followed looked like grown-up paid social advertising work, not a creative brainstorm. The team used the experiment to interpret what the campaigns were truly doing across the funnel, rather than treating a single metric as the full story. They focused on what changed between exposed and unexposed groups, which is the only comparison that matters when you’re trying to understand incremental value. When you run the test this way, the output becomes a budgeting tool, not a vanity chart.

The final conflict is the part most teams don’t anticipate: once you have lift results, you have to live with them. If the lift is smaller than expected, you can’t hide behind “but ROAS looked good.” If the lift is strong, you have to resist the temptation to scale without improving the system that converts demand into revenue. The discipline is to treat lift as guidance for where to fix the machine—creative, offer, landing experience, or targeting constraints—before you pour in more fuel.

The dream outcome is clarity that changes behavior. TikTok reports lift results from the study, framing the impact across lower-funnel actions and purchases in its published QUAY case study (reported results). More importantly, the team gets a new operating posture: paid social advertising stops being a debate club and becomes a decision system backed by experimentation.

Professional Promotion

When people hear “promotion,” they often think of hype. In professional paid social advertising, promotion is the opposite: it’s the skill of presenting performance in a way that builds trust, earns budget, and sets expectations that won’t collapse next month.

Promote through clarity, not cherry-picked wins

The cleanest way to “sell” paid social advertising internally is to make the numbers harder to misread. Use a consistent reporting lens for week-to-week steering, like GA4 attribution concepts (GA4 attribution documentation), then show where causal proof exists and where it doesn’t. When you separate directional reporting from incrementality, your stakeholders stop feeling like they’re being marketed to.

Connect results to market context

If costs rise, show whether that’s an internal problem or an auction reality. Meta’s earnings disclosures show year-over-year movement in ad pricing and scale, including the increase in average price per ad reported in its 2025 results. Tinuiti’s benchmark reporting also shows quarter-specific CPM changes, like the Meta CPM increase in Q4 2024, which helps stakeholders understand that pricing pressure is not always a creative failure.

Promote the system, not the campaign

One campaign can win because of timing or novelty. A system wins because it compounds. When you present paid social advertising performance, show the operating cadence: how many new creative assets shipped, what experiments ran, what changed in conversion friction, and what lift evidence exists. Teams that do this consistently get more budget not because they “promise” more, but because they demonstrate control.

Use macro numbers to justify rigor

When leadership questions why measurement and experimentation matter, bring it back to the scale of the channel. U.S. social media advertising reached $88.8B in 2024, and the broader U.S. digital market hit $258.6B. In a market this large, sloppy measurement doesn’t just create confusion—it creates expensive, repeatable mistakes.

Advanced Strategies

Once the basics are stable, paid social advertising stops being a “campaign” and starts behaving like a growth engine. The difference is that you’re no longer trying to get one set of ads to work; you’re building advantages that compound: better signals, faster creative learning, smarter sequencing, and measurement that doesn’t collapse the moment attribution gets noisy.

The most reliable advanced strategies tend to share one trait: they make the platform’s automation work harder for you without handing it the steering wheel. That balance matters more than ever because pricing pressure moves quickly: Meta disclosed that its average price per ad increased in 2025, even as overall U.S. digital ad revenue keeps expanding, reaching $258.6B in 2024.

Build a creative system that feeds automation

Automation doesn’t save weak creative. It just finds more people who ignore it. The scalable move in paid social advertising is to create an assembly line of angles and formats so you can keep learning while costs fluctuate and placements shift.

- Angle libraries: proof, comparison, objections, “behind the scenes,” and category education, each written as distinct hooks that can stand alone.

- Format families: short-form video, UGC-style explainers, product demos, creator-led storytelling, and static assets that support retargeting and offer clarity.

- Proof modules: reviews, guarantees, expert credibility, product outcomes, and third-party validation that can be swapped into different concepts without rebuilding the whole ad.

When you run creative like this, scaling doesn’t require “finding a unicorn ad.” It requires shipping enough high-quality iterations that the platform can keep learning without your account getting stuck in fatigue.

Sequence demand, then capture it deliberately

Paid social advertising is at its best when it shapes intent first, then captures it with a tighter message. The mistake is trying to force cold audiences straight into a decision without building belief. A more scalable approach is sequencing: introduce the why, reinforce with proof, then present the offer when the person is warmed up.

If you want one practical signal that sequencing matters, look at how social exposure can influence downstream search behavior. iProspect (dentsu) summarized Search Lift learnings showing Meta exposure associated with a 10.4% average increase in search volume, which is exactly the kind of “invisible” impact that sequencing is designed to create and then harvest.

Improve signal quality with feedback loops, not guesswork

Scaling paid social advertising usually fails when optimization signals drift away from real value. If your business cares about qualified opportunities, not just leads, the platform needs to learn from qualified outcomes. That’s why teams increasingly invest in better measurement infrastructure and causal validation instead of relying on last-click reporting alone.

- Attribution consistency: keep a single steering view for weekly decisions, using concepts explained in GA4’s attribution documentation.

- Causal validation: use controlled experiments like Meta’s lift studies when budget decisions need proof, not vibes.

- Strategic allocation: add MMM when cross-channel interactions make user-level certainty unrealistic, with modern approaches like Google’s Meridian designed for privacy-safe modeling.

Scaling Framework

Scaling paid social advertising is not “turning up the budget.” It’s preserving what made performance work in the first place while expanding reach and volume. A simple framework keeps scaling decisions disciplined, especially when the auction gets more expensive and stakeholders expect predictable outcomes.

1) Verify headroom before you scale

Headroom means there are still people to reach, creative that can stay fresh, and conversion capacity that won’t break. If frequency is rising and marginal results are slipping, you don’t have headroom. If conversion rate is improving and creative is still producing new winners, you likely do.
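
One way to test for slipping marginal results is to compare the cost of the extra conversions against your target, instead of looking at the blended average. The sketch below assumes you have spend and conversions before and after a budget increase. The function names, target, and figures are hypothetical.

```python
import math


def marginal_cpa(spend_before: float, conversions_before: float,
                 spend_after: float, conversions_after: float) -> float:
    """Cost of each additional conversion gained from the additional spend."""
    extra_spend = spend_after - spend_before
    extra_conversions = conversions_after - conversions_before
    if extra_spend <= 0:
        raise ValueError("spend_after must exceed spend_before")
    if extra_conversions <= 0:
        return math.inf  # more spend bought no extra conversions
    return extra_spend / extra_conversions


def has_headroom(marginal: float, target_cpa: float) -> bool:
    """Headroom exists while the marginal CPA stays at or under the target."""
    return marginal <= target_cpa


# Hypothetical weeks: spend rose from $10,000 to $12,000, conversions from 250 to 280.
marginal = marginal_cpa(10_000, 250, 12_000, 280)
print(f"average CPA after scaling: {12_000 / 280:.2f}")
print(f"marginal CPA: {marginal:.2f}")
print(f"headroom at a $50 target: {has_headroom(marginal, 50)}")
```

Here the blended CPA still looks healthy while the marginal CPA has already passed the target, which is the situation the headroom check is meant to catch.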

2) Scale the system, not the one winning ad

One winning creative can carry an account for a moment, then crash under saturation. A scalable paid social advertising system spreads risk across multiple creative concepts, multiple proof points, and multiple audience entry paths. This is also why benchmark reports often show continued spend growth even as auction pressure increases; Tinuiti’s Q4 2024 benchmark report shows Meta spend growing 15% year over year in Q4 2024 even as CPM across Meta properties rose 5%.

3) Expand in three directions

- Creative expansion: new angles and formats that reach new pockets of attention without sacrificing message clarity.

- Audience expansion: broader targeting with clear guardrails, plus segment-based messaging that improves relevance without fragmenting learning.

- Offer expansion: bundles, entry offers, financing, trials, or guarantees that raise conversion rate without cheapening the brand.

If you only expand budgets, you’re relying on the platform to “find more of the same” in a market that’s already competitive. If you expand the inputs, you create new ways to win the auction.

4) Protect measurement as spend rises

The bigger the budget, the more dangerous it becomes to trust a single reporting view. Keep one consistent attribution lens for weekly steering, then validate major decisions with experiments. Meta explicitly frames lift studies as a way to estimate incremental impact through controlled tests in its lift study guide, which is exactly the kind of method you want when you’re making scaling bets.

Growth Optimization

At scale, “optimization” is less about tweaking bids and more about removing constraints that limit learning. The goal is to increase the amount of truth flowing through your system so the platform can optimize delivery and you can optimize decisions.

Use creative velocity as a performance lever

If performance declines and creative hasn’t changed in weeks, the diagnosis is usually obvious. Creative velocity is a controllable input that often matters more than minor targeting tweaks. It also aligns with real platform consumption patterns; Emplifi’s benchmark report highlights how short-form video gained ground during 2024, including Reels becoming the most-used Instagram post type among brands by year’s end (Emplifi Social Media Benchmarks Report 2025).

Make conversion rate the scaling key

Scaling paid social advertising gets dramatically easier when conversion rate improves, because you can buy more traffic without buying more waste. This is why the best growth teams treat landing pages, product pages, and checkout flow as part of the paid program. When conversion friction drops, the algorithm has better outcomes to learn from and you can scale with less efficiency decay.

Use automation with human guardrails

Automation performs best when it’s paired with a human-led creative and measurement system. Pinterest’s Performance+ positioning is built around AI-driven efficiency and scale (Pinterest Performance+), but the brands that win with automation still control the levers that matter most: message, proof, and conversion experience. Think of automation as a powerful engine that still needs a skilled driver.

Budget with experiments and models, not only dashboards

At a certain point, channel interactions become too complex for last-click thinking. That’s where incrementality tests and MMM earn their place. Meridian’s release as an open MMM option reflects where measurement is heading, toward privacy-safe allocation decisions (Meridian overview), and it becomes much more valuable when you calibrate it with real experiments instead of trusting the model blindly.

Scaling Stories

Scaling stories in paid social advertising are rarely about a “brilliant hack.” They’re about teams hitting a wall, finding the real constraint, and then rebuilding their system so growth becomes repeatable.

Castlery’s Scaling Shift: Automation That Didn’t Kill Efficiency

It started with a quiet fear that looked like a normal optimization problem. The campaigns were working, but scaling felt like stepping onto thin ice, where every extra dollar could trigger diminishing returns. The team could already see the warning signs: the kind of creeping inefficiency that turns a growth channel into a cost center.

Backstory: Castlery sells furniture, which means paid social advertising has to do more than generate clicks. It has to create enough intent for people to commit to a high-consideration purchase, often after multiple sessions and comparisons. The brand tested Pinterest Performance+ with the goal of improving lower-funnel efficiency while still unlocking scale, detailed in Pinterest’s Castlery success story.

The wall was the classic scaling paradox. Manual structures can feel “controlled,” but that control often comes at the cost of slower learning and less efficient delivery when the platform could be doing more heavy lifting. At the same time, handing everything to automation can feel risky, because you worry it will chase cheap volume and erode profitability.

The epiphany came from treating automation like a tool, not a religion. Castlery ran a structured test comparing its existing manual setup against the full Pinterest Performance+ suite, using the platform’s automation to improve efficiency while maintaining business intent. The results were clear enough to change behavior: the Performance+ test produced a 2.3x increase in ROAS and a 14% decrease in CPA compared to the manual setup.

The journey wasn’t just “turn it on and hope.” The team approached it as an operating change: they validated performance under test conditions, then used the learnings to make scaling decisions with less fear and more evidence. They aligned creative and product intent so the automation had quality inputs to learn from, rather than expecting the system to solve messaging problems. They also used the comparison to clarify what mattered most for their paid social advertising program: not clicks, but profitable outcomes tied to purchase behavior.

Then the final conflict showed up in the form that catches many teams off guard: success creates pressure. When results improve, stakeholders want scale immediately, and that’s when sloppy decisions can destroy what you just fixed. The team had to resist “panic scaling” and keep the discipline of testing and guardrails so efficiency didn’t collapse under volume.

The dream outcome was the kind of scaling story growth teams want to repeat. The test didn’t just improve efficiency; it created confidence that the system could scale without trading away profitability. And that’s the real win in paid social advertising: not one good month, but a pathway to grow spend while protecting the business case.

Promote the method, not the moment

Stakeholders don’t fund vibes for long. They fund repeatability. When you present scaling progress, show the operating method: creative velocity, conversion improvements, measurement validation, and how decisions are made when results fluctuate.

Use proof for big bets and direction for small ones

Weekly steering can rely on a consistent attribution lens, grounded in definitions like those explained in GA4’s attribution documentation. Bigger budget moves should be defended with causal methods, like Meta’s lift studies, so your scaling decisions are backed by evidence that the spend is creating incremental outcomes.

Frame cost changes with market context

If costs rise while results hold, that can be a sign of stronger competition rather than weaker execution. Tinuiti’s benchmark reporting shows real auction movement that can inform expectations, including Meta CPM increasing year over year in Q4 2024. Meta’s own reporting also reflects ad pricing shifts, noting average price per ad increased in 2025, which helps you explain why creative and conversion improvements often need to offset market pricing pressure.

Anchor everything to business reality

As budgets scale, connect paid social advertising performance to the numbers the business actually cares about: margin, payback periods, lead quality, and retention. The channel is big enough that this rigor is justified; U.S. digital ad revenue reached $258.6B in 2024, and social media alone accounted for $88.8B. In a market of that scale, the most “professional” thing you can do is make your growth story auditable and your scaling decisions defensible.

Future Trends

Paid social advertising is moving into a new era where the platforms do more of the “how,” and marketers are responsible for the “why.” If you can’t clearly define what success looks like, what signal represents real value, and what creative proof makes people believe, automation will optimize your spend toward outcomes that look good inside a dashboard and disappointing inside your bank account.

AI is the obvious accelerant. Meta is pushing deeper into AI-driven ad delivery and retrieval infrastructure, including its Andromeda ads retrieval work that’s designed to improve performance and efficiency at scale (Meta Engineering on Andromeda). On the product side, Meta’s Advantage+ positioning makes the direction unmistakable: more automation across targeting, budget allocation, and campaign optimization (Meta Advantage+).

TikTok is building in the same direction, but with an emphasis on creative throughput and full-funnel behavior. Its TikTok World updates highlight AI-powered tools, stronger search capabilities, and automation intended to improve performance across the funnel (TikTok World 2025). TikTok Symphony’s generative features are also being positioned as a way to scale native video production faster without needing a full studio workflow (TikTok Symphony AI tools).

Privacy and regulation continue to reshape measurement, but not in the simple “cookies disappear” storyline many people expected. Google publicly stepped back from introducing a standalone third-party cookie prompt in Chrome, choosing to keep controls in existing settings while continuing work on Privacy Sandbox APIs (Reuters on Chrome third-party cookie prompt decision). Google’s Privacy Sandbox communications also reflect that parts of the initiative are being phased out or adjusted over time (Privacy Sandbox cookies update).

For mobile advertisers, privacy-safe attribution frameworks are now part of the baseline. Apple’s SKAdNetwork documentation frames the system as a privacy-preserving approach to measuring campaign success without user-level tracking (Apple SKAdNetwork), and SKAdNetwork 4 guidance clarifies how the newer version works and what’s required for postbacks (SKAdNetwork 4 release notes).

All of this pressure is pushing brands toward measurement methods that don’t rely on fragile user-level certainty. That’s one reason MMM is resurging, especially in forms that can be calibrated with experiments. Google’s Meridian is positioned as an open-source MMM built for today’s consumer journeys and cross-channel decision-making (Meridian release), and Google has also emphasized that it can learn from real-world experiment results to improve confidence in budget recommendations (Meridian measurement overview).

Finally, attention is consolidating around social video ecosystems that behave like entertainment platforms. Deloitte’s 2025 Digital Media Trends describes social video platforms as a new “center of gravity” for media time and ad spending (Deloitte Digital Media Trends 2025). That shift matters because it changes the performance playbook: creative has to earn attention like content, not like a banner, and measurement has to account for delayed, multi-touch decision-making.

Strategic Framework Recap

If you want paid social advertising to feel predictable, the goal isn’t to control everything. It’s to control the few inputs that shape everything: outcome clarity, creative quality, signal integrity, and a repeatable learning cadence.

- Start with a win condition: define success in business terms so optimization doesn’t drift into proxy metrics that look impressive and pay poorly.

- Build creative as a system: ship angles, proof, and formats consistently so learning compounds instead of stalling in fatigue.

- Protect signal quality: clean conversion events and stable definitions give platforms something real to learn from, and give you reporting you can defend.

- Use attribution for steering, experiments for proof: GA4 attribution helps you stay consistent (GA4 attribution documentation), while causal tools like lift studies exist to measure incremental impact when budget decisions get serious (Meta lift studies).

- Scale the system, not the lucky win: expand through creative, audience, and offer improvements so higher spend doesn’t simply accelerate diminishing returns.

- Modernize measurement for reality: MMM is becoming more practical as open approaches mature, with Meridian positioned as a modern option designed for cross-channel allocation decisions (Meridian).

Once you run the loop consistently, paid social advertising stops feeling like a slot machine. It becomes a craft: you ship, you learn, you validate, and you scale with discipline.

FAQ – Built For The Complete Guide

What is paid social advertising, in plain English?

Paid social advertising is when you buy distribution on social platforms so the right people see your message, and you can measure what that exposure changes. It’s not just “running ads.” It’s a system that ties creative, targeting constraints, conversion signals, and measurement into one feedback loop.

Which platform should I start with?

Start where you can get enough volume to learn and where your audience naturally spends time. Many teams start with Meta because of scale and mature optimization tools (Meta Advantage+). Others start with TikTok when short-form video is their strength and discovery behavior is central (TikTok World 2025).

How much budget do I need for paid social advertising to work?

You need enough budget to generate learning signals. If your conversion volume is low, tiny daily budgets often create misleading results because the platform can’t learn. The practical approach is to start with one core conversion event, a focused campaign structure, and creative testing discipline, then increase spend once performance is stable.

What metrics matter most?

The metric depends on the business outcome. Early on, watch relationships: CPM, CTR, conversion rate, and cost per conversion moving together tells you whether you’re buying attention that turns into outcomes. For higher-stakes decisions, validate causality with experiments like lift studies rather than relying only on attribution (Meta lift studies).
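One way to read those relationships is to write cost per conversion as a product of its parts. The sketch below assumes a simple funnel where cost per conversion = (CPM / 1000) / (CTR × conversion rate), and splits a week-over-week change into the share driven by each input. The function names and the weekly snapshots are hypothetical, not taken from any platform’s reporting.

```python
import math


def cost_per_conversion(cpm: float, ctr: float, cvr: float) -> float:
    """Cost per conversion implied by CPM (cost per 1,000 impressions), CTR, and CVR."""
    if ctr <= 0 or cvr <= 0:
        raise ValueError("ctr and cvr must be positive")
    cost_per_impression = cpm / 1000.0
    conversions_per_impression = ctr * cvr
    return cost_per_impression / conversions_per_impression


def change_drivers(before: dict, after: dict) -> dict:
    """Split the log change in cost per conversion into CPM, CTR, and CVR parts.

    A positive value means that input pushed cost per conversion up.
    The three parts sum to the log of (cost after / cost before).
    """
    return {
        "cpm": math.log(after["cpm"] / before["cpm"]),
        "ctr": -math.log(after["ctr"] / before["ctr"]),
        "cvr": -math.log(after["cvr"] / before["cvr"]),
    }


# Hypothetical weekly snapshots: only CPM moved.
last_week = {"cpm": 10.0, "ctr": 0.010, "cvr": 0.020}
this_week = {"cpm": 12.0, "ctr": 0.010, "cvr": 0.020}

drivers = change_drivers(last_week, this_week)
main_driver = max(drivers, key=lambda name: abs(drivers[name]))

print(f"Cost per conversion last week: {cost_per_conversion(**last_week):.2f}")
print(f"Cost per conversion this week: {cost_per_conversion(**this_week):.2f}")
print(f"Largest driver of the change: {main_driver}")
```

If CPM is the main driver while CTR and conversion rate hold, the change points toward auction pricing rather than creative or landing page problems.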

Why do platform dashboards disagree with my analytics?

They’re measuring different things with different attribution rules and incomplete data. GA4 explains how attribution models assign credit across touchpoints (GA4 attribution documentation). Platforms also use modeled reporting and their own conversion windows, so the numbers won’t always line up perfectly.

How do I know if paid social advertising is truly incremental?

You test it. Incrementality is a causal question, and the cleanest way to answer it is with controlled experiments like lift studies and holdouts (lift study methodology). Attribution is useful for steering, but it isn’t proof.
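As a rough illustration of what a holdout comparison computes, the sketch below compares conversion rates between an exposed group and a randomly held-out control group. It is a simplified calculation under assumed inputs, not the methodology of any platform’s lift product: the function name, group sizes, and spend are hypothetical, and a real study would also test whether the difference is statistically significant.

```python
def holdout_lift(
    exposed_conversions: int,
    exposed_users: int,
    control_conversions: int,
    control_users: int,
) -> dict:
    """Estimate incremental conversions and relative lift from a holdout test."""
    if exposed_users <= 0 or control_users <= 0:
        raise ValueError("group sizes must be positive")
    exposed_rate = exposed_conversions / exposed_users
    control_rate = control_conversions / control_users
    if control_rate == 0:
        raise ValueError("control group has no conversions; relative lift is undefined")
    # Conversions the exposed group would not have produced without the ads.
    incremental = (exposed_rate - control_rate) * exposed_users
    return {
        "incremental_conversions": incremental,
        "relative_lift": (exposed_rate - control_rate) / control_rate,
    }


# Hypothetical test: 100,000 exposed users, 50,000 held out.
result = holdout_lift(
    exposed_conversions=1200,
    exposed_users=100_000,
    control_conversions=500,
    control_users=50_000,
)
spend = 10_000.0  # hypothetical spend on the exposed group
cost_per_incremental = spend / result["incremental_conversions"]

print(f"Incremental conversions: {result['incremental_conversions']:.0f}")
print(f"Relative lift: {result['relative_lift']:.1%}")
print(f"Cost per incremental conversion: ${cost_per_incremental:.2f}")
```

Cost per incremental conversion is usually higher than attributed cost per conversion, because attribution also credits conversions that would have happened without the ads.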

What is MMM, and when do I need it?

Marketing mix modeling helps you allocate budget across channels and time when user-level tracking is incomplete. It’s most helpful when you’re running multiple channels and attribution becomes noisy. Google’s Meridian is positioned as an open-source MMM built for modern consumer journeys and cross-channel outcomes (Meridian release).

What changed with privacy, cookies, and measurement?

The landscape shifted in a more complicated way than “cookies disappear.” Google decided not to roll out a standalone third-party cookie prompt in Chrome and kept controls in existing settings (Reuters coverage). At the same time, Privacy Sandbox messaging reflects ongoing changes and phased adjustments (Privacy Sandbox cookies page), so measurement strategies increasingly rely on first-party signals and experiments.

How do mobile apps measure paid social advertising performance now?

Many rely on privacy-safe frameworks like SKAdNetwork, which Apple describes as a way to measure campaign success while maintaining user privacy (Apple SKAdNetwork documentation). SKAdNetwork 4 guidance adds details about how version 4 postbacks work and what conditions are required (SKAdNetwork 4 release notes).

What do I do when performance drops suddenly?

Follow a consistent diagnostic order: confirm tracking integrity, check for creative fatigue, review conversion friction, then evaluate audience saturation and market pricing pressure. When the auction gets more competitive, costs can rise even when execution is solid, which is why stakeholders benefit from context on market dynamics and platform evolution (Meta discusses ongoing AI-driven improvements in its ads systems through work like Andromeda).

How is AI changing paid social advertising right now?