Social media used to be the “nice-to-have” channel you posted on when you had time. Now it’s where attention is traded, trust is earned (or lost), and buyers quietly decide whether you’re worth a click, a follow, or a contract.

This guide is built for people who want repeatable results, not random bursts of reach. You’ll get a clear definition of what social media marketing digital really includes, why it matters in 2026, and a framework you can use to plan, produce, distribute, and measure work like a professional.

Article Outline

- What Is Social Media Marketing Digital?

- Why It Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is Social Media Marketing Digital?

Social media marketing digital is the disciplined use of social platforms to create demand, capture demand, and strengthen customer relationships across the entire buying journey. It’s not just posting content. It’s the combined system of positioning, creative, distribution, community, paid amplification, and measurement—connected to real business outcomes.

It also matters that “social” is no longer only a top-of-funnel channel. People use platforms as discovery engines, customer support desks, review hubs, and cultural barometers. When global social media user identities reached 5.24 billion, as reported in the Digital 2025 global overview, it wasn’t just an interesting milestone. It was a signal that your future customers are already there, forming preferences long before they ever visit your website. The same report reinforces how quickly social behavior scales once it becomes a default daily habit.

In practice, social media marketing digital includes five things working together:

- Strategy that defines who you’re for, what you stand for, and what “success” means beyond vanity metrics.

- Creative systems that produce platform-native content at the pace the market expects.

- Distribution that mixes organic reach, creator partnerships, and paid media so results don’t depend on luck.

- Community and trust through conversations, replies, and visible proof that real humans are behind the brand.

- Measurement that ties attention to outcomes—leads, pipeline, sales, retention, and lifetime value.

When these pieces aren’t connected, social becomes noisy and exhausting. When they are connected, social becomes one of the most efficient ways to build preference at scale, then convert that preference when timing is right.

Why It Matters

Social matters because the economics of attention have shifted. Buyers don’t wait for you to show up in their inbox—they learn your point of view through your feed. They watch how you respond to questions. They judge your credibility by the clarity of your message and the consistency of your proof.

There’s also a budget reality behind the strategy. Digital advertising keeps taking a bigger share of global ad spend, and social is a major driver inside that shift. When marketers spent “close to a quarter of a trillion” dollars on social ads in 2024, and social placements represented “more than 3 in every 10” digital ad dollars, that wasn’t hype—it was the market voting with its wallet. Those figures are summarized in Kepios’ Digital 2025 advertising trends section, and the broader ad-spend milestone is also echoed in GroupM’s global forecast coverage and WARC’s reporting on ad spend passing $1T.

But “more spending” doesn’t automatically mean “easy wins.” Social is crowded, algorithms are unpredictable, and brand trust is fragile. Even platform scale comes with complexity: Meta’s own reporting shows how massive the ecosystem is, with Family daily active people at 3.58 billion (December 2025 average) and full-year revenue of $200.97 billion in 2025. That scale creates opportunity—but also competition, rising creative standards, and a stronger need for measurement discipline.

So the real reason social media marketing digital matters is simple: it’s where demand is shaped. If you’re absent, you’re not neutral—you’re invisible. And if you show up without a system, you’ll spend time and money without learning what’s working.

Framework Overview

A professional social system needs to be tight enough to execute every week, but flexible enough to adapt when platforms change. The framework below is designed to do exactly that. It treats social as a loop you can run continuously—so your work compounds instead of resetting every time you post.

Think of it as five connected phases:

- Positioning: your promise, your audience, your category, and your “why you.”

- Content engine: repeatable formats, a realistic production cadence, and creative direction that fits each platform.

- Distribution and amplification: organic reach plus deliberate boosts through paid, partners, and creators.

- Conversion paths: what happens after attention—landing pages, offers, lead capture, and sales enablement.

- Measurement and iteration: a testing rhythm that turns data into better creative and better business outcomes.

This isn’t theoretical. It matches how modern teams actually operate when they’re serious about performance: they align on goals, run a content pipeline, distribute intelligently, and measure continuously. Broad industry measurement work points in the same direction. Nielsen’s annual marketing research for 2024 emphasizes that better outcomes come from tighter alignment between data, planning, and execution, and its 2025 report release follows up on how marketers are adapting.

Core Components

Most brands don’t fail because they “need better content.” They fail because core components are missing, and the system can’t hold together under real-world pressure—tight timelines, shifting priorities, and changing algorithms.

Here are the components that keep social media marketing digital stable:

1) Strategy That Can Survive the Feed

A workable strategy is specific enough to guide decisions when you’re moving fast. It answers: who you’re for, what problem you own, what you want to be known for, and what people should do after they trust you.

If your strategy can’t be explained in two sentences, your content will wander. If your strategy doesn’t include a clear outcome (pipeline, retention, hiring, brand preference), your reporting will drift into vanity metrics.

2) A Creative System, Not Just Ideas

Ideas are unreliable. Systems scale. A creative system is a library of repeatable content formats—each designed to do one job well: educate, persuade, demonstrate proof, or convert.

That’s also why social video keeps growing as a budget focus. Digital video spend growth has been projected in the double digits, with details captured in IAB’s 2024 digital video ad spend and strategy research. Even if your niche isn’t “video-first,” the market’s creative expectations are moving that way—shorter, clearer, more visual, more native to the platform.

3) Distribution That Doesn’t Depend on Algorithms Being Nice

Organic reach is valuable, but it’s not a plan by itself. Professional distribution uses three levers:

- Owned distribution: your own profiles, email list, community spaces, and retargeting audiences.

- Earned distribution: shares, mentions, partnerships, and creator collaborations.

- Paid distribution: boosting what already works to reach the right people consistently.

When you treat distribution as a lever—not a hope—you can scale winners without burning out the team.

4) Trust, Community, and Brand Safety

Social is public. That’s a feature and a risk. Trust is built in comments, replies, and visible consistency, but it can be damaged quickly if your content sits next to low-quality inventory or if moderation failures dominate the narrative.

If you run paid campaigns at scale, brand safety and placement decisions are part of performance, not a separate “PR concern.” It’s another reason measurement matters: you want growth, but you also want it in an environment that strengthens your brand.

5) Measurement That Connects to Revenue

Social measurement isn’t just counting engagement. The best teams set up a small set of business-linked signals and review them on a weekly rhythm. That could mean lead quality, demo requests, assisted conversions, or churn reduction tied to social support.

For wider industry context, the scale of the digital ad market makes measurement discipline unavoidable. U.S. digital advertising revenue reached $258.6 billion in 2024, according to IAB’s annual Internet Advertising Revenue Report, which is summarized on IAB’s release page and covered in mainstream finance reporting such as Yahoo Finance’s write-up. When budgets are that large, teams that can’t measure impact don’t just waste money; they lose budget to teams that can.

Professional Implementation

At a professional level, execution is less about heroic effort and more about operational design. The goal is a workflow that produces quality consistently, without relying on last-minute scrambling.

In practical terms, professional implementation means:

- A weekly planning rhythm that turns business priorities into content themes and campaign angles.

- A production pipeline where writing, design, video, and approvals move predictably from draft to publish.

- A testing cadence that treats creative, hooks, and offers as experiments—not one-off bets.

- A reporting routine that answers “what should we do more of, what should we stop, and what should we change next week?”

- Clear roles so content doesn’t stall: strategy, creator/editor, community, paid, analytics.

The rest of this series will get specific about how to set up that system, including how to choose a realistic cadence, how to build content pillars that don’t get repetitive, and how to measure performance without drowning in dashboards.

Tools Supporting The Framework

A strong social media marketing digital framework is simple on paper, but it gets messy fast once real work starts: multiple platforms, multiple stakeholders, constant creative iteration, and a never-ending stream of comments, DMs, and edge-case customer issues. Tools exist for one reason—to protect the system from chaos.

The best stacks don’t try to “do everything.” They reduce friction at the exact points where teams typically break: planning and approvals, publishing and community, paid amplification, measurement, and cross-channel attribution. That matters even more as platforms scale. When Meta reported 3.58 billion Family daily active people in December 2025, it was a reminder that you’re not competing with a handful of brands. You’re competing with the entire internet for attention, and you’re judged against the response-time expectations it has set.

Tools also exist to keep your decisions honest. Social budgets are large and getting larger; the US social media ad category alone reached $88.8 billion in 2024. When money moves at that scale, “we think it’s working” stops being acceptable, and the stack has to make performance measurable without turning reporting into a full-time job.

Tool Categories

Instead of thinking in brand names first, it’s cleaner to think in capabilities. Most social media marketing digital stacks are built from five to eight categories, depending on maturity and risk tolerance.

- Social suite (publish, engage, manage): Scheduling, inbox, approvals, asset reuse, basic reporting, and sometimes paid workflows. This is the operational backbone for day-to-day execution.

- Social listening and consumer intelligence: Trend detection, sentiment signals, emerging issues, competitive monitoring, and audience language. Listening becomes most valuable when it feeds creative and customer care decisions, not just dashboards. Meltwater’s 2025 global research found 77% of teams using social listening rated it most useful for monitoring brand reputation, and the same report highlights other common use cases like competitive tracking.

- Creative production and asset management: Design, video editing, templates, brand kits, and a place to store and retrieve “approved” assets quickly. Without this, teams rebuild the same formats repeatedly and burn out.

- Creator and influencer workflows: Discovery, outreach, contracts, content approvals, and tracking. This category matters because creator partnerships are no longer a side experiment; creator ad spending in the US was projected to hit $37 billion in 2025, which changes how serious brands must be about governance and measurement.

- Paid social execution: Platform-native ad managers plus creative testing workflows, comment moderation, and conversion tracking. In most cases, native tools remain the source of truth for delivery settings and real-time troubleshooting.

- Web analytics and attribution: GA4, server-side tracking, link tagging conventions, and dashboards that combine paid + organic. This is where social stops being “content” and becomes revenue analysis.

- CRM and customer support integration: Routing, ticketing, escalation paths, and shared history. Social customer care is operationally different from marketing, and it needs guardrails.

- Compliance, access control, and archiving: Permissions, audit trails, retention, and policy enforcement. This becomes non-negotiable in regulated industries and for any team managing many accounts.

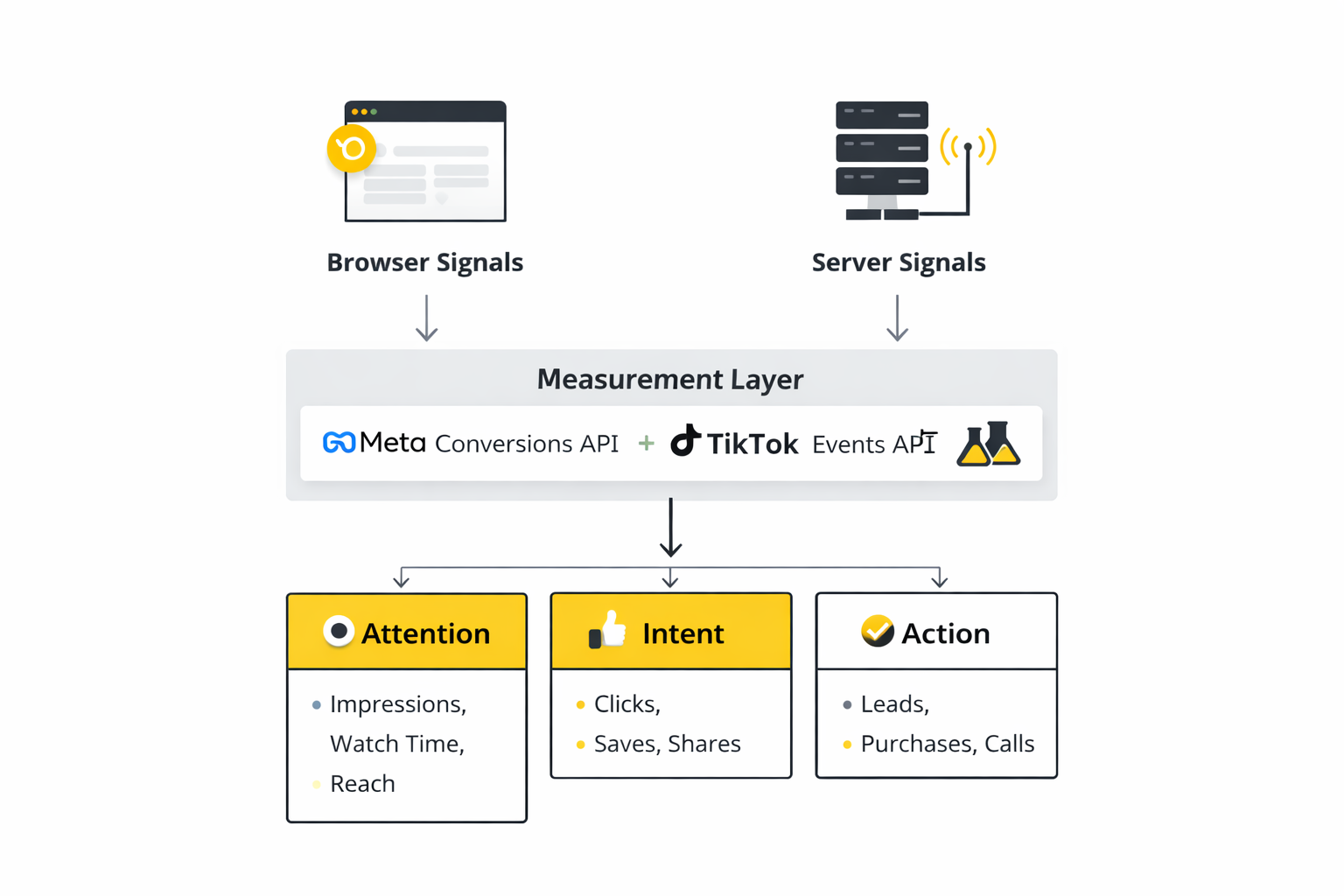

One practical note: conversion tracking is no longer just pixel-based. Server-side connections are increasingly a baseline expectation. Meta describes the Conversions API as a way to connect your marketing data directly to Meta’s optimization systems, which is why serious measurement stacks treat it as infrastructure, not a “nice-to-have plugin.”

Tool Comparison

Most tool comparisons fail because they judge features in isolation. The smarter comparison is: what kind of operating model are you running, and what failure are you trying to prevent?

Comparison By Team Stage

- Solo creator or freelancer: You usually need a lightweight scheduler, a simple content calendar, and analytics that won’t distract you. Your risk is overbuilding—buying an “enterprise” tool before you have consistent offers, content formats, and a repeatable lead path.

- Small team (2–8 people): You need shared planning, approval steps, and a unified inbox so community doesn’t fall through cracks. Your risk is fragmentation—five tools that don’t talk to each other, producing inconsistent reporting and duplicated work.

- Mid-market (multi-brand or multi-region): You need governance, role-based access, audit trails, and standardized reporting. Your risk is inconsistency—every region “does social” differently, so learnings don’t compound.

- Enterprise (high volume + customer care): You need case management, routing, multilingual workflows, and strict compliance controls. Your risk is operational collapse—response times explode during spikes, and brand trust takes the hit.

Comparison By The Job To Be Done

- If your biggest pain is speed: Prioritize a unified inbox, saved replies, routing, and mobile workflows. Response-time expectations are now part of brand perception, not just “support metrics.”

- If your biggest pain is creative output: Prioritize templates, approvals, and asset reuse. A tool that reduces time-to-publish often beats a tool with “more analytics.”

- If your biggest pain is proving ROI: Prioritize clean link tagging, dashboards that blend platform + web analytics, and server-side conversion signals. TikTok’s measurement research with WARC frames the modern problem well: attribution is fragmented and customer journeys are non-linear, so systems must be adaptable instead of pretending one model will answer everything.

- If your biggest pain is brand safety and compliance: Prioritize permissions, archiving, and audit trails first. Governance is becoming more formal across platforms and regulators, reflected in work like the OECD’s 2024 social media governance project summary and emerging audit-focused research.

A Simple Rule Of Thumb

Start with the smallest stack that protects your workflow. Expand only when you can name the exact constraint you’re solving. If you can’t clearly describe the bottleneck, new tooling usually adds complexity rather than performance.

When you do evaluate suites, be careful with headline ROI claims. Commissioned studies can still be useful, but they describe modeled outcomes for specific composites—not guarantees. For example, a Forrester Total Economic Impact study commissioned by Sprout Social modeled a composite customer and reported 268% ROI over three years. Use that kind of research as directional input, then validate with your own pilot and baseline metrics.
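Validating a headline ROI against your own pilot is mostly arithmetic. The sketch below uses the simple definition ROI = (benefits − costs) / costs and a hypothetical benefit model (hours saved times an hourly rate, plus any revenue you can attribute). All inputs are made up, and it skips the present-value discounting and risk adjustments that commissioned studies typically layer on.

```python
def roi_percent(benefits: float, costs: float) -> float:
    """Simple ROI: (benefits - costs) / costs, expressed as a percentage."""
    if costs <= 0:
        raise ValueError("costs must be positive")
    return (benefits - costs) * 100 / costs


def pilot_benefit(hours_saved_per_week: float, hourly_rate: float, weeks: int,
                  attributed_revenue: float = 0.0) -> float:
    """Hypothetical benefit model: time savings plus any revenue you can attribute."""
    return hours_saved_per_week * hourly_rate * weeks + attributed_revenue


# Made-up numbers for a 12-week pilot.
benefits = pilot_benefit(hours_saved_per_week=10, hourly_rate=50, weeks=12)
costs = 3000.0  # hypothetical license and setup cost for the pilot period
print(f"Pilot ROI: {roi_percent(benefits, costs):.0f}%")
```

Under this simple definition, a 268% ROI would mean benefits of roughly 3.68 times costs, which is a useful sanity check when you compare a vendor study against your own baseline.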

Real Tool Stack Stories

The most useful way to think about stacks is to see them under stress—when volume spikes, reputations are on the line, and “good enough” processes fall apart. These two stories are not about perfect tooling. They’re about what happens when the system either fails or finally becomes operational.

AJet’s Customer Conversations Spiked, And The Old System Couldn’t Keep Up

It started with a brand transformation that was supposed to feel exciting. AJet was rebranding from AnadoluJet and pushing hard into global markets, and the pressure to look “digital-first” was immediate. Then the messages hit like a wave—customers pouring into social channels with questions, changes, and complaints, and the team suddenly realized they were operating without a scalable model. The moment the brand promise met reality, the cracks showed.

The backstory mattered because the growth wasn’t random. AJet launched ticket sales through a new official website and leaned into a more elevated experience, which naturally drove more engagement and more inbound conversations across social. The ambition was global, and that meant languages, time zones, and expectations multiplied. The team wasn’t just managing comments anymore; they were managing a public-facing service operation across multiple channels and nine languages. The same move that modernized the brand also made the workload explode.

The wall came fast, and it wasn’t a “work harder” problem. Fragmented workflows and older systems were built for smaller volumes, not surges. When messages pile up, response time becomes reputation, and the team risked being seen as indifferent or incompetent. In their own write-up, the company describes how the surge “far outpaced existing processes and technology,” forcing a rethink of how they engaged at scale. That’s the moment most teams feel trapped: the work is visible, the backlog is public, and every delay becomes part of the brand story.

The epiphany wasn’t “we need a new dashboard.” It was realizing the operating unit had to change from individual messages to managed cases, with routing and closure like a real service desk. AJet partnered with Adba Analytics and implemented Sprinklr capabilities to move to a case-based approach, adding rule-based routing and queueing so requests reached the right people quickly. That shift changed the problem from “too many messages” to “a manageable flow of cases.” The system finally had a structure that could absorb spikes.
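Rule-based routing is easier to reason about with a toy model. The Python sketch below is not Sprinklr’s API or AJet’s configuration. It is a hypothetical illustration, assuming each incoming message already has a language and a detected topic, of how ordered rules turn a message stream into cases placed on named queues.

```python
from collections import deque
from dataclasses import dataclass, field
from itertools import count

_case_ids = count(1)


@dataclass
class Case:
    channel: str
    language: str
    topic: str
    text: str
    case_id: int = field(default_factory=lambda: next(_case_ids))


# Ordered rules: the first matching predicate decides the queue.
# Topics and queue names are hypothetical examples.
RULES = [
    (lambda c: c.topic == "refund", "refunds"),
    (lambda c: c.topic == "booking_change", "bookings-{lang}"),
    (lambda c: True, "general-{lang}"),  # fallback so no case goes unrouted
]


def route(case: Case, queues: dict[str, deque]) -> str:
    """Place a case on the first matching queue and return the queue name."""
    for predicate, template in RULES:
        if predicate(case):
            name = template.format(lang=case.language)
            queues.setdefault(name, deque()).append(case)
            return name
    raise RuntimeError("no rule matched")  # unreachable while the fallback exists


queues: dict[str, deque] = {}
print(route(Case("x", "tr", "booking_change", "Can I change my flight?"), queues))
print(route(Case("instagram", "en", "refund", "Where is my refund?"), queues))
```

Keeping a catch-all rule last is the important design choice: every message becomes a case somewhere, so nothing silently falls out of the workflow.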

The journey was operational, not magical. They redesigned processes, consolidated work into a unified platform, and standardized routing so multilingual conversations could be handled consistently across channels. They tightened targets too—moving from a 90% within-40-minute goal to a 90% within-20-minute goal, which is a bold move when you’re already feeling overwhelmed. The key was building workflows that could scale, not relying on heroics. In their published story, AJet reports that within the first month they were consistently above 90% for within-20-minute responses and cut average response time from 7 hours to 17 minutes.

The final conflict was that volume didn’t politely slow down after launch. The conversations kept surging as growth continued, and the system had to prove it could hold under sustained pressure, not just a short burst. AJet reports conversation volume reaching 36,795 per month, described as an 875% increase versus pre-transformation levels. That kind of sustained load is where most “process improvements” collapse. It didn’t collapse here because the operating model had changed, not just the tools.

The dream outcome wasn’t a viral post or a nicer report—it was control. The team stabilized service delivery even as volume stayed high, and they framed the result as making customer experience sustainable again, aligned with their promise of maximum satisfaction. The measurable outcomes are laid out directly in the customer story: 875% increase in conversation volume, a 96% reduction in average response time, and response rates above 90% within 20 minutes. That’s what a real stack is supposed to do: keep the brand credible when demand spikes.
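The targets in this story are straightforward to compute once first-response times are logged per case. A minimal sketch, assuming you can export each case’s response time in minutes (the sample list below is made up); the second function checks the reported drop in average response time from 7 hours (420 minutes) to 17 minutes.

```python
def sla_attainment(response_minutes: list[float], threshold: float = 20.0) -> float:
    """Share of cases answered within `threshold` minutes, as a percentage.

    Assumes "within 20 minutes" is inclusive (<= threshold).
    """
    if not response_minutes:
        raise ValueError("no responses to measure")
    within = sum(1 for m in response_minutes if m <= threshold)
    return 100 * within / len(response_minutes)


def percent_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a percentage."""
    return (after - before) * 100 / before


# Hypothetical first-response times (minutes) for ten cases.
sample = [4, 9, 12, 15, 18, 19, 20, 22, 6, 11]
print(f"Within 20 min: {sla_attainment(sample):.0f}%")

# Reported averages: 7 hours (420 minutes) down to 17 minutes.
print(f"Change in average response time: {percent_change(420, 17):.1f}%")
```

The second line works out to about a 96% reduction, consistent with the figure in the customer story.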

Lucky Mobile Built A Self-Service Layer To Stop The Support Queue From Swallowing The Team

Some breakdowns are quieter, but just as dangerous. Lucky Mobile’s support demand wasn’t a single dramatic crisis—it was the slow grind of customers needing answers at all hours, on channels that never sleep. Each unanswered thread created more frustration, more repeat questions, and more people abandoning self-service to call or chat instead. The support queue becomes a black hole if you don’t build an escape route.

The backstory is that telecom support is uniquely unforgiving. Customers don’t just want information; they want certainty, quickly, because service issues feel urgent. The more your customer base grows, the more repetitive questions stack up, and agents spend time solving the same problem again and again. That repetition quietly drains morale and inflates costs. Without a better model, growth makes service worse.

The wall arrives when “being responsive” turns into an impossible promise. Teams try to keep up by adding scripts, extending hours, and working faster, but the demand curve usually wins. If customers don’t get answers, they escalate to higher-cost channels, and the business pays twice: once in staffing and again in reputational damage. At that point, the real enemy isn’t a single complaint—it’s the system design that forces every question into the same queue.

The epiphany is realizing that community and automation can be a pressure-release valve, not a vanity project. Instead of treating community as a marketing add-on, Lucky Mobile treated it as a support layer where customers could resolve issues without waiting for an agent. They used a 24/7 support approach and community-driven self-service as the first line of defense. The point was not to avoid customers; it was to meet them faster, at scale, in the channel they were already using.

The journey is reflected in the performance they publicly shared through the Khoros Kudos program. Lucky Mobile reported a community resolution rate and measurable movement in first-response timing, alongside rising solution views—signals that more customers were actually finding answers without escalating. They also framed the outcome in operational terms: contact deflection savings tied to fewer customers needing to call or use live chat after visiting community first. Those are the numbers that matter when you’re proving a stack pays for itself.

The final conflict is that communities don’t magically succeed just because you launch them. If content isn’t organized, if moderation is inconsistent, or if answers aren’t easy to find, the community becomes another place people complain. Maintaining trust requires steady participation, clear escalation rules, and continuous updates as products change. It also requires leadership to treat community outcomes as service outcomes, not “engagement.” Without that cultural shift, the tool fails even if the platform is strong.

The dream outcome is a support system that breathes. Lucky Mobile shared that their community drove contact deflection savings of $327K in 2024 YTD, along with progress on first-response timing and growing solution views. That’s what makes the story useful for social media marketing digital teams: a stack can reduce cost and improve customer experience at the same time, but only when it’s built around a clear operating model—self-service first, escalation second, and measurable outcomes throughout.
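Contact deflection savings are estimated rather than observed directly. One simple way to estimate them, sketched below, multiplies the community sessions that ended without a follow-up call or chat by a blended cost per contact. Lucky Mobile’s actual methodology isn’t described in the source, so the formula, parameter names, and numbers here are hypothetical.

```python
def deflection_savings(resolved_sessions: int, follow_up_rate: float,
                       cost_per_contact: dict[str, float],
                       channel_mix: dict[str, float]) -> float:
    """Estimate savings from contacts avoided because the community answered.

    resolved_sessions: community sessions where a solution was viewed (hypothetical metric).
    follow_up_rate: share of those sessions that still led to a call or chat.
    channel_mix: share of avoided contacts that would have gone to each channel.
    cost_per_contact: fully loaded cost of one contact in each channel.
    """
    if abs(sum(channel_mix.values()) - 1.0) > 1e-9:
        raise ValueError("channel_mix shares must sum to 1")
    avoided = resolved_sessions * (1 - follow_up_rate)
    blended_cost = sum(share * cost_per_contact[ch] for ch, share in channel_mix.items())
    return avoided * blended_cost


# Made-up inputs for illustration only.
estimate = deflection_savings(
    resolved_sessions=10_000,
    follow_up_rate=0.2,
    cost_per_contact={"phone": 8.0, "chat": 4.0},
    channel_mix={"phone": 0.5, "chat": 0.5},
)
print(f"Estimated savings: ${estimate:,.0f}")
```

The follow-up rate is the input to scrutinize: if people who read a solution still call anyway, counting every solution view as a deflected contact overstates the savings.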

Implementation Foundations That Make Tools Work

- Clear ownership: One person owns publishing integrity, one owns community/service routing, and one owns measurement. When “everyone owns it,” nobody fixes it.

- Naming conventions: Campaign names, UTMs, creative labels, and versioning. This sounds boring until you try to compare creative tests and realize nothing is traceable.

- Access control and governance: Role-based permissions, approval flows, and audit trails. This becomes more important as governance expectations evolve, reinforced by public policy work like the OECD’s reporting on social media governance.

- Measurement infrastructure: Pixel plus server-side connections where relevant. Meta’s documentation frames the Conversions API as a direct connection between marketing data and optimization systems, and the developer guide shows how to implement it in practice.

- A single source of reporting truth: Platform metrics are useful, but they must connect to web analytics and outcomes. Otherwise teams optimize for what’s easiest to measure, not what drives growth.
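Naming conventions only help if they are enforced at the moment links are created. A minimal sketch, assuming a house convention of lowercase, hyphen-separated values (the convention, helper names, and example URL are hypothetical). It writes the standard `utm_source`, `utm_medium`, `utm_campaign`, and `utm_content` parameters that Google Analytics reads, using only Python’s standard library.

```python
import re
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical house rule: lowercase letters, digits, and single hyphens only.
SLUG = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")


def slugify(value: str) -> str:
    """Lowercase and collapse anything that isn't a letter or digit into hyphens."""
    return re.sub(r"[^a-z0-9]+", "-", value.strip().lower()).strip("-")


def tag_url(url: str, source: str, medium: str, campaign: str, content: str) -> str:
    params = {
        "utm_source": slugify(source),
        "utm_medium": slugify(medium),
        "utm_campaign": slugify(campaign),
        "utm_content": slugify(content),
    }
    for key, value in params.items():
        if not SLUG.match(value):
            raise ValueError(f"{key} is empty or breaks the naming convention: {value!r}")
    parts = urlsplit(url)
    # Keep non-UTM query parameters; drop old UTM tags so the new ones are the only source of truth.
    existing = [(k, v) for k, v in parse_qsl(parts.query, keep_blank_values=True)
                if not k.startswith("utm_")]
    query = existing + list(params.items())
    return urlunsplit(parts._replace(query=urlencode(query)))


print(tag_url("https://example.com/webinar?ref=bio", "LinkedIn", "Paid Social",
              "Q3 Webinar Launch", "Hook A / Video"))
```

Normalizing inputs (rather than only rejecting them) means “Paid Social” and “paid social” end up as the same `paid-social` value, which is exactly the inconsistency that breaks week-to-week comparisons.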

A Weekly Rhythm For Using The Stack

- Monday: Pull learning from last week’s performance, plus a quick listening scan to catch emerging issues and opportunities.

- Tuesday–Wednesday: Produce and approve content in batches, using templates and asset libraries to protect speed without sacrificing quality.

- Daily: Work the inbox with routing rules, escalation paths, and saved replies so community care is consistent and fast.

- Thursday: Run creative tests for paid amplification and document what changed (hook, format, audience, offer).

- Friday: Ship a short decision memo: what to repeat, what to stop, and the next hypothesis to test. This keeps social media marketing digital work compounding instead of resetting.

Red Flags That Signal Your Tools Are Failing You

- “We can’t compare performance week to week.” That’s usually a tagging and reporting consistency problem, not an “analytics tool” problem.

- “Approvals take longer than production.” That’s a workflow design problem, often fixed by clearer roles and fewer gatekeepers.

- “Community feels overwhelming.” That’s usually missing routing rules, saved replies, and escalation standards, not a lack of effort.

- “Paid works, organic doesn’t.” That’s often a creative system problem: the team hasn’t built repeatable formats that match how people actually consume content on each platform.

In the next part, the focus shifts from tool categories to how the ecosystem connects—how data, creative, community, and paid media should flow together so the stack acts like one machine instead of a pile of subscriptions.

Step By Step Implementation

It’s easy to talk about social media marketing digital as if it’s one big thing. In real life, it’s a set of small decisions that either stack into momentum or collapse into chaos. The goal of implementation is simple: build a system you can run every week, even when the team is busy, leadership changes priorities, or platforms shift features.

This step-by-step plan is designed to be practical. It focuses on the handful of moves that create stability first, then adds performance layers once the basics are reliable.

Step 1: Define Outcomes You Can Actually Prove

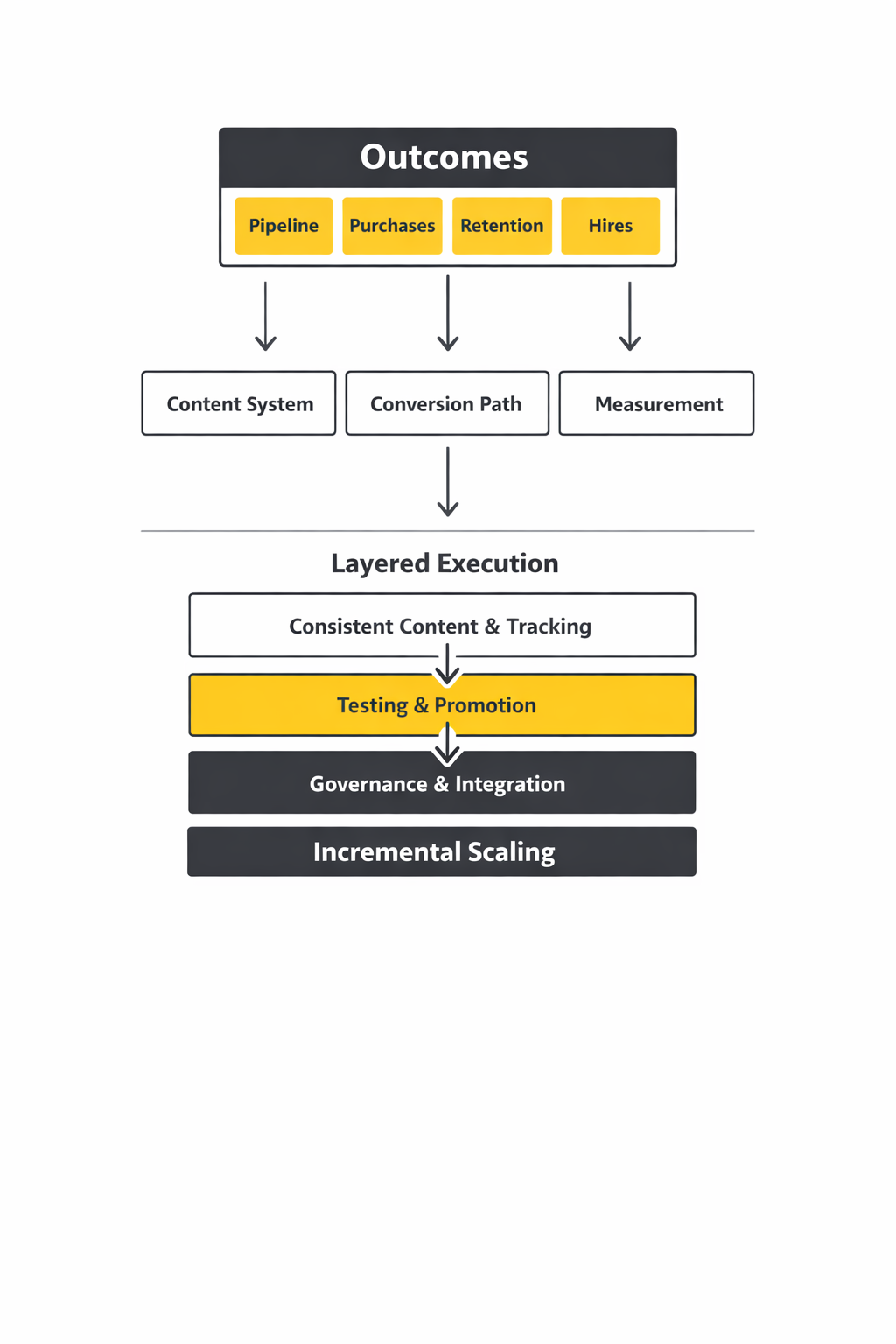

Start by deciding what “winning” looks like in language the business understands. For most teams, that means one primary outcome (pipeline, purchases, retention, hires) and two supporting outcomes (lead quality, conversion rate, customer resolution speed, assisted conversions).

To keep it honest, write down what data will prove it. If the outcome is revenue, your measurement has to connect social activity to web or CRM data, not just likes.

Step 2: Map The Audience And The Context They Live In

Audience research is not a persona PDF. It’s the vocabulary people use, the objections they repeat, and the situations that trigger buying decisions.

Use a mix of first-party inputs (sales calls, support tickets, reviews) and platform behavior. For a reality check on platform mix, recent usage snapshots such as Pew Research Center’s 2025 social media use report are easy to reference.

Step 3: Build A Content System Before You Build A Content Calendar

Calendars don’t create consistency. Systems do. Choose 4–6 repeatable content formats that match how you sell and how people buy: quick “how-to” posts, teardown posts, short myth-busting videos, proof posts, founder POV, and customer stories.

Then assign each format a clear job. When every piece of content has a purpose, your creative becomes easier to brief, easier to review, and far easier to improve.

Step 4: Design A Conversion Path That Doesn’t Break

Attention is not the finish line. Every platform click should land somewhere that continues the story: a focused landing page, a simple lead magnet, a webinar registration, a product page, or a “book a call” flow that feels natural.

This is also where tracking discipline begins. If you can’t tell which post or campaign drove the session, you can’t learn. Google’s own guidance on how Analytics processes campaign data is a useful baseline for building consistent tracking rules, outlined in Google Analytics campaign and traffic-source documentation.
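A companion habit is auditing landing-page URLs for missing or inconsistent tags before a campaign goes live. The sketch below assumes a hypothetical lowercase-hyphen convention and treats `utm_source`, `utm_medium`, and `utm_campaign` as required. It checks the URL only; for how Analytics actually maps these values into its source, medium, and campaign dimensions, rely on Google’s campaign documentation.

```python
import re
from urllib.parse import parse_qs, urlsplit

REQUIRED = ("utm_source", "utm_medium", "utm_campaign")
CONVENTION = re.compile(r"^[a-z0-9]+(?:-[a-z0-9]+)*$")  # hypothetical house rule


def audit_campaign_url(url: str) -> list[str]:
    """Return a list of problems with a URL's campaign tags (empty list means clean)."""
    params = parse_qs(urlsplit(url).query)  # blank values are dropped, so they count as missing
    problems = []
    for key in REQUIRED:
        values = params.get(key)
        if not values:
            problems.append(f"missing {key}")
        elif len(values) > 1:
            problems.append(f"duplicate {key}")
        elif not CONVENTION.match(values[0]):
            problems.append(f"{key}={values[0]!r} breaks naming convention")
    return problems


for problem in audit_campaign_url("https://example.com/?utm_source=LinkedIn&utm_campaign=q3-launch"):
    print(problem)
```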

Step 5: Implement Measurement Signals That Survive Real-World Constraints

Modern measurement is messy: privacy controls, cookie limitations, app environments, and multiple devices. The practical move is to build redundancy. Don’t rely on a single pixel event and hope for clean attribution.

For paid social, this usually means combining browser-based signals with server-to-server signals where appropriate. Meta’s technical overview of sending server events is laid out in Meta’s Conversions API documentation, and TikTok describes its server approach in its Events API overview.
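Combining browser and server signals raises an obvious question: what stops one purchase from being counted twice? Meta’s documentation describes deduplication based on a shared event name and event ID sent from both sources, and TikTok documents a similar event ID mechanism. The sketch below is a simplified, hypothetical model of that idea for your own reporting, not either platform’s internal logic: it keeps one record per (event name, event ID) pair and prefers the server copy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    name: str
    event_id: str
    source: str  # "browser" or "server"
    value: float


def deduplicate(events: list[Event]) -> list[Event]:
    """Keep one event per (name, event_id), preferring the server-side copy."""
    kept: dict[tuple[str, str], Event] = {}
    for event in events:
        key = (event.name, event.event_id)
        current = kept.get(key)
        if current is None or (current.source != "server" and event.source == "server"):
            kept[key] = event
    return list(kept.values())


events = [
    Event("Purchase", "order-1001", "browser", 49.0),
    Event("Purchase", "order-1001", "server", 49.0),  # same purchase, server copy
    Event("Purchase", "order-1002", "server", 20.0),  # pixel blocked, server only
]
unique = deduplicate(events)
print(len(unique), sum(e.value for e in unique))
```

This is the redundancy the step describes: the server copy fills the gap when a browser signal is blocked, and the shared ID keeps the overlap from inflating results.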

Step 6: Set A Weekly Operating Rhythm That Forces Learning

The difference between “posting” and social media marketing digital is a rhythm that produces learning. A simple weekly cadence works:

- Plan: pick one theme, one offer, and one hypothesis to test.

- Produce: batch-create assets in a way that protects quality and speed.

- Distribute: publish, amplify what’s working, and ensure community coverage.

- Review: decide what to repeat, what to stop, and what to test next.

When the rhythm is consistent, performance compounds. When it isn’t, every week becomes a restart.

Execution Layers

Implementation gets easier when you treat execution as layers. You don’t need everything at once. You need the right layer at the right time, so the system stays stable while it grows.

Layer 1: Foundation

This layer is about reliability. It’s your content formats, publishing cadence, basic community coverage, and tracking conventions. Foundation work isn’t glamorous, but it’s what prevents random results.

- Content: 4–6 formats, a realistic cadence, and a repeatable creation process.

- Community: response rules, escalation paths, and a consistent voice.

- Tracking: link tagging rules and campaign naming that stays consistent.

Layer 2: Performance

Once the foundation is stable, you add performance: structured testing, smarter distribution, and paid amplification tied to outcomes. This is where “creative” becomes a discipline, not an art project.

- Testing: controlled changes to hooks, formats, offers, and audiences.

- Amplification: boosting proven posts rather than guessing what might work.

- Conversion: refining landing pages, lead flows, and sales handoffs.

Layer 3: Scale

Scale is what happens when your system keeps working under pressure. That requires stronger governance, deeper measurement, and clearer role ownership.

- Governance: permissions, approvals, and audit trails.

- Integration: social data connected to analytics and CRM outcomes.

- Operations: playbooks so the system doesn’t depend on one person.

If you operate in the EU or work with regulated brands, scale also means transparency and compliance awareness. For context, the European Commission’s explainer on how the DSA drives transparency is a useful check. Industry groups such as IAB Europe discuss advertising-specific implementation in their DSA transparency guidelines update.

Optimization Process

Optimization is not “checking metrics.” It’s a repeatable process that turns signals into better creative and better business results. The simplest mistake is changing too many variables at once and then pretending you learned something.

1) Set One Clear Hypothesis

Choose one change you believe will improve performance. Make it specific and testable, like “a clearer promise in the first sentence will increase saves and click-through,” or “a proof-focused creative will reduce objection friction on the landing page.”

2) Control The Variables

Change one variable at a time: the hook, the format, the offer, or the audience. Keep everything else stable so you can attribute results to the change you made.
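Controlling variables pays off when you can also tell whether a difference is bigger than noise. A two-proportion z-test offers a rough way to compare the click-through rates of a control and a variant. This assumes both ran at the same time against comparable audiences, for example through a platform's split-test feature. Treat the p-value as a guide rather than proof, because repeated impressions to the same person are not fully independent. The numbers in the usage line are invented.

```python
from math import erfc, sqrt


def two_proportion_z_test(clicks_a: int, views_a: int, clicks_b: int, views_b: int):
    """Compare click-through rates of control (A) and variant (B).

    Returns (relative lift, z statistic, two-sided p-value) using the
    pooled-proportion z-test.
    """
    rate_a = clicks_a / views_a
    rate_b = clicks_b / views_b
    pooled = (clicks_a + clicks_b) / (views_a + views_b)
    se = sqrt(pooled * (1 - pooled) * (1 / views_a + 1 / views_b))
    z = (rate_b - rate_a) / se
    p_value = erfc(abs(z) / sqrt(2))  # two-sided tail probability of a standard normal
    lift = (rate_b - rate_a) / rate_a
    return lift, z, p_value


# Invented example: same audience and budget, only the hook changed.
lift, z, p = two_proportion_z_test(clicks_a=120, views_a=10_000, clicks_b=165, views_b=10_000)
print(f"lift {lift:.1%}, z = {z:.2f}, p = {p:.4f}")
```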

3) Define Leading And Lagging Signals

Leading signals tell you if the content is resonating (watch time, saves, comments that show intent). Lagging signals tell you if it created business value (leads, pipeline, purchases, retention).

When your lagging signals depend on ad platforms, make sure your conversion tracking is implemented correctly. LinkedIn’s measurement flow is described in LinkedIn’s conversion tracking overview and the technical mechanics are documented in the Insight Tag conversion tracking documentation.

4) Review Weekly, Not Randomly

Daily checking invites anxiety and reactive decisions. A weekly review gives results time to settle, which leads to better decisions. Use a short weekly review to answer three questions: what worked, why it worked, and what we’re testing next.

5) Document So Learning Compounds

Write down the test, the result, and the next move. That simple habit turns social media marketing digital from a stream of posts into a system that gets smarter every month.

Implementation Stories

Implementation looks clean in a framework and brutal in real life. These stories are useful because they show what happens when a system is forced to perform under pressure: high volume, high expectations, and real operational consequences.

Autopass Hit A Public-Service Wall, Then Rebuilt Social Care As A System

The crisis didn’t start as a campaign problem. It started as a customer experience problem that played out in public, in real time, with people demanding answers. Every delay was visible, every unanswered mention felt like neglect, and the brand’s credibility was on the line. When the workload spikes in social, you don’t get time to “prepare later.”

Autopass operates transportation technology in Brazil, and that kind of service is unforgiving when something goes wrong. Millions of passengers depend on smooth operations, and any disruption creates immediate online conversation. The company described the stakes clearly in its own story: social mentions can escalate quickly and even become headline news, which turns customer care into reputation management. That context is laid out in Sprinklr’s Autopass customer story.

The wall was structural. Autopass had separate teams using separate tools, which sounds manageable until volume surges and nobody has the full context of the conversation. Mentions had to be turned into cases through manual steps, which slowed resolution and created backlog pressure. The bottleneck became more than speed; it became the risk of missing critical signals entirely.

The epiphany was realizing that social media marketing digital wasn’t only about publishing. It also had to include a serious social care operating model: one unified view of conversations, one place to route issues, and one process for resolving them. Autopass chose to unify teams and tools onto a single platform and build a command-center model for social care. That shift turned “chaos across channels” into “cases that can be managed.”

The journey was operational and disciplined. They consolidated interactions across major channels into one environment, added automation for turning mentions into cases, and used structured responses for common issues. They also treated listening as an early warning system, so emerging problems could be handled before they grew. The story describes how the team moved from manual ticket creation toward automatic case generation and faster handling.

The final conflict was that operational pressure doesn’t politely disappear once a tool is installed. The system still has to hold when conversations surge, when teams are lean, and when the public expects immediate answers. The risk is that automation creates new failure modes: misrouted cases, wrong canned replies, or issues stuck without escalation. That’s why the operating model matters as much as the platform.

The dream outcome is stability and control. Autopass describes the results as a transformed social care operation, with faster resolution and tighter service standards, and it shares the details directly in the published outcome section of the customer story. What makes the example valuable is not the tool name—it’s the implementation lesson: unify first, automate second, then scale.

Ray-Ban Turned Performance Social From Manual Work Into An Automated System

The pressure came from a familiar performance marketing fear: “we’re spending, but the results aren’t predictable.” When campaigns demand constant manual tweaks, teams burn time on operations instead of learning and creative improvement. That’s the trap where social feels expensive even before you look at ad spend. And when peak season hits, the workload intensifies at the exact moment you need clarity.

Ray-Ban wanted to connect with modern audiences and drive online sales for best-selling sunglasses during peak summer months in the US. The challenge wasn’t only reach; it was delivering relevant ads efficiently while maintaining performance. Their case study frames the problem as needing a full-funnel approach with better targeting, optimization, and creative freshness. That context is described in TikTok’s Ray-Ban Smart+ Catalog Ads customer story.

The wall was the classic performance ceiling: manual campaign management can’t scale the way demand scales. You can adjust audiences, rotate creatives, and refine setups, but the process becomes fragile and time-consuming. Even when you do everything “right,” ad fatigue and signal gaps can erode results. At that point, teams often feel like they’re operating the platform instead of running a strategy.

The epiphany was recognizing that automation only works if the signal foundation is strong. Ray-Ban leaned into TikTok’s automation approach with Smart+ Catalog Ads, and the story highlights the importance of implementing TikTok Pixel and Events API with Advanced Matching to enrich signals. That’s not a small technical detail; it’s the difference between optimization systems guessing and optimization systems learning. TikTok explains how server signals work in its Events API help documentation.

The journey was about reducing operational drag while improving creative performance. The case study describes a simplified setup where automation handled targeting and optimization, and creative was optimized quickly to reduce fatigue. Instead of treating creative refresh as a constant emergency, the system shifted budgets toward what performed best. That freed time to focus on what actually moves performance: better inputs, not more manual adjustments.

The final conflict is that automation can tempt teams into complacency. When campaigns “run themselves,” it’s easy to stop asking hard questions about creative direction, offer strength, and measurement quality. It’s also easy to lose clarity on what caused improvement if you don’t document changes and hold out control groups. A professional implementation keeps a learning loop running even when automation is doing heavy lifting.

The dream outcome is a performance system that’s both efficient and scalable. TikTok publishes the results and the implementation details directly in the Ray-Ban case study, and the lesson is portable: when you pair strong event signals with a structured testing rhythm, social media marketing digital stops feeling like constant firefighting and starts feeling like an engine.

A Practical Playbook You Can Run Every Week

- One page strategy: audience, promise, proof, and one primary outcome.

- Content formats library: a small set of repeatable formats with clear jobs.

- Tracking standards: naming conventions and link tagging rules that never change.

- Signal redundancy: pixel plus server-side where appropriate, aligned with platform guidance like Meta’s Conversions API documentation and TikTok’s Events API overview.

- Weekly learning loop: one hypothesis, one controlled test, one documented insight.

Governance That Keeps Social Stable As You Scale

When your brand grows, so do the risks: miscommunication, inconsistent voice, compliance mistakes, and measurement drift. Governance isn’t bureaucracy when it prevents expensive errors.

- Access control: role-based permissions and clear ownership.

- Approval flow: fast enough to ship, strict enough to protect the brand.

- Compliance awareness: transparency expectations, especially in regulated markets, informed by resources like the EU’s DSA transparency overview.

What To Fix First If Your Implementation Feels Messy

If you’re overwhelmed, don’t add more channels, more tools, or more meetings. Fix the bottleneck that’s actually breaking the system.

- If reporting feels unreliable: standardize tracking and conversion signals first.

- If content feels inconsistent: lock a formats library and a simple weekly cadence.

- If community feels chaotic: define escalation rules and response ownership.

Once those are stable, the system becomes easier to optimize. That’s when social media marketing digital turns into a long-term advantage instead of a constant scramble.

Statistics And Data

If social media marketing digital feels overwhelming, data is what turns it back into something you can steer. Not “more metrics,” but a small set of numbers that answer real questions: Is attention growing? Is trust forming? Is intent rising? Are conversions happening? And if conversions are happening, are they incremental?

Here are the few market-level signals worth knowing because they explain why measurement discipline matters more every year:

- Social is where the audience is. Global social media user identities reached 5.24 billion, a figure reported in Kepios’ social media data and summarized in We Are Social’s 2025 guide.

- Digital budgets keep climbing. The US digital ad market hit $258.6 billion in 2024, echoed on IAB’s release page and in coverage that references the same report like MediaBrief’s summary.

- Social media is a major slice of that spend. Social media ad revenue reached $88.8 billion in 2024, according to IAB’s official announcement. Outlets summarizing the report’s findings, such as Yahoo Finance, widely cite the same figure.

- Creators are no longer a “side budget.” US creator ad spend was projected to reach $37 billion in 2025, with the same figure discussed in Business Insider’s reporting and additional context in Forbes’ coverage.

- Global ad spend crossed a psychological threshold. GroupM’s forecast pointed to global advertising revenues surpassing $1 trillion in 2024, also covered by Reuters and Bloomberg.

None of these numbers tell you what to post tomorrow. What they do tell you is why serious analytics is non-negotiable: when competition and spend both rise, guessing gets expensive.

Performance Benchmarks

Benchmarks are helpful when you treat them like guardrails, not goals. A “good” click-through rate or engagement rate changes wildly by platform, industry, creative format, audience maturity, and whether you’re measuring paid, organic, or blended performance.

So instead of copying someone else’s benchmark, build a benchmark system that fits your social media marketing digital reality:

- Baseline first, ambition second. Capture 4–8 weeks of steady performance before you set targets. If you change everything while you measure, you’ll never know what “normal” looks like.

- Separate brand signals from demand signals. Brand signals are attention and sentiment shifts. Demand signals are site sessions, product views, lead submissions, purchases, and qualified conversations.

- Hold paid and organic to different standards. Organic is your consistency and trust engine. Paid is your distribution accelerator. They work together, but they shouldn’t be judged the same way.

- Benchmark the funnel, not a single metric. A post can “underperform” on likes but overperform on saves, clicks, or assisted conversions. What matters is whether it pushes people forward.
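One way to turn "baseline first" into a rule is to compute a normal range from 4–8 weeks of a metric and then label each new week against it. The Python sketch below does this. The two-standard-deviation band is an assumption, not an industry standard. A wider band raises fewer false alarms but misses more real changes.

```python
from statistics import mean, stdev


def baseline_band(weekly_values: list[float], k: float = 2.0) -> tuple[float, float]:
    """Return a (low, high) normal range from baseline weeks: mean +/- k standard deviations."""
    if not 4 <= len(weekly_values) <= 8:
        raise ValueError("use 4-8 weeks of steady baseline data")
    center = mean(weekly_values)
    spread = stdev(weekly_values)
    return center - k * spread, center + k * spread


def classify(value: float, band: tuple[float, float]) -> str:
    """Label a new week's value relative to the baseline band."""
    low, high = band
    if value < low:
        return "below normal"
    if value > high:
        return "above normal"
    return "normal"


baseline = [410, 395, 430, 405, 420, 398]  # six weeks of link clicks (invented)
band = baseline_band(baseline)
print(classify(470, band))  # above normal
```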

If you need one simple benchmark to start with, use business reality: “How fast are we learning?” When budgets are rising at the category level, like the $258.6 billion US digital ad market, teams that learn faster win because they waste less and scale what’s proven.

Analytics Interpretation

Most dashboards look intimidating because they mix signals from different stages of the customer journey. Once you separate them, the story gets clearer.

Attention: Are People Stopping?

Attention metrics are reach, impressions, video views, watch time, and repeat exposure. They answer one thing: did you earn a moment of focus?

Attention is not vanity if you connect it to the next stage. If attention rises but clicks don’t, your message may be interesting but not specific. If attention rises and clicks rise but conversions don’t, your offer or landing page is the bottleneck.
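The reading above can be written as a simple rule that compares two periods. This is a hypothetical Python sketch. The metric names and the 10% threshold for "rising" are assumptions. A real review would also consider watch time, saves, and the other attention signals listed earlier.

```python
def diagnose(prev: dict[str, int], curr: dict[str, int], threshold: float = 0.10) -> str:
    """Apply the attention -> click -> conversion reading to two periods.

    `threshold` is a hypothetical cutoff for what counts as "rising" (10% by default).
    """
    def rising(metric: str) -> bool:
        return curr[metric] >= prev[metric] * (1 + threshold)

    if rising("impressions") and not rising("clicks"):
        return "message: attention without clicks, make the promise more specific"
    if rising("impressions") and rising("clicks") and not rising("conversions"):
        return "offer or landing page: clicks are not turning into conversions"
    return "no single bottleneck flagged by this rule"


last_week = {"impressions": 50_000, "clicks": 600, "conversions": 30}
this_week = {"impressions": 70_000, "clicks": 800, "conversions": 30}
print(diagnose(last_week, this_week))
```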

Intent: Are People Leaning In?

Intent shows up in saves, shares, long comments, DMs, profile visits, and clicks to deeper pages. Intent is the bridge between “I saw it” and “I might buy.”

This is where you stop treating social as a broadcast channel and start treating it like a conversation channel. In many industries, intent is also where creators influence outcomes, which is why the projected $37 billion creator spend in 2025 matters: intent is often built through human voice, not brand voice.

Action: Did It Convert, And Was It Incremental?

Action metrics are leads, purchases, subscriptions, calls booked, and qualified chats. This is where measurement discipline matters most, because platform attribution is rarely the full story.

Two practical moves make action measurement stronger:

- Use redundant signals. Browser tracking alone is fragile. Server-side signals help stabilize attribution and optimization when cookies and devices get in the way. Meta documents how server events connect directly into optimization in its Conversions API guide, and TikTok outlines the same principle in its Events API overview.

- Validate incrementality when it matters. If you’re making big decisions, conversion lift tests and holdout designs can answer the question “Would this have happened anyway?” A recent industry example is highlighted in Adweek’s overview of the 2025 Meta Agency Awards, where a DSW campaign included a conversion lift test with a 30% holdout group to confirm results were incremental.
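The arithmetic behind a holdout-based lift test is short. Assume users were randomly split before the campaign and the holdout group was kept from seeing the ads. The holdout's conversion rate then estimates what the exposed group would have done anyway. The Python sketch below uses invented numbers with a 30% holdout, echoing the DSW design. It computes the point estimate only, so pair it with a significance check or the platform's own lift report before acting on it.

```python
from dataclasses import dataclass


@dataclass
class LiftResult:
    test_rate: float
    control_rate: float
    incremental_conversions: float
    relative_lift: float


def conversion_lift(test_users: int, test_conv: int,
                    control_users: int, control_conv: int) -> LiftResult:
    """Estimate incremental conversions from a randomized test/holdout split."""
    test_rate = test_conv / test_users
    control_rate = control_conv / control_users
    expected_without_ads = control_rate * test_users  # counterfactual for the test group
    incremental = test_conv - expected_without_ads
    return LiftResult(test_rate, control_rate, incremental, incremental / expected_without_ads)


# Invented example: 100,000 eligible users, 30% held out.
result = conversion_lift(test_users=70_000, test_conv=1_400,
                         control_users=30_000, control_conv=450)
print(f"{result.incremental_conversions:.0f} incremental conversions "
      f"({result.relative_lift:.1%} lift)")
```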

The best interpretation habit is brutally simple: every metric should point to a decision. If it doesn’t change what you do next week, it’s noise.

Case Stories

These stories matter because they show analytics as a turning point, not a reporting exercise. In both cases, the “win” came from connecting measurement to execution decisions, then using those signals to scale.

Naughty% Went All-In, Then Had To Prove The Spend Was Real

It looked like a breakout moment, but it was also a risk. Naughty% devoted its entire advertising budget to TikTok Ads Manager for a campaign window that ran from January to March 2024, and the pressure to justify that decision was immediate. If the results were muddy, leadership wouldn’t see “learning”; they’d see waste. And because the campaign was performance-focused, every day without clarity made the risk feel bigger.

The backstory is that Naughty% had already tested other advertising platforms and saw potential in leaning into trend-driven, platform-native creative. The team believed content built for a video-first environment would outperform recycled assets designed for “every platform at once.” That meant committing not only to ads, but to a different creative rhythm. It also meant committing to measurement strong enough to support the decision if performance spiked or dipped.

The wall appeared in the most common place: attribution confidence. When results look good, everyone celebrates; when they look inconsistent, the first question becomes “can we trust the numbers?” Naughty% wanted accurate web attribution and clearer optimization signals so they could confidently target people most likely to purchase. Without that, scaling would be guessing, and guessing with your full budget is how brands get burned.

The epiphany was treating measurement as infrastructure rather than a checkbox. With a Cafe24-supported webstore, they implemented TikTok’s Events API integration to send server-to-server signals, tightening the connection between site behavior and the ad platform’s reporting and optimization. That wasn’t just for cleaner dashboards; it was to feed better signals into targeting and bidding so the system could learn faster. The case study describes this as building “best-in-class web attribution” and improving how results were measured inside Ads Manager.

The journey was a mix of signal work and creative discipline. They ran placements across TikTok and Pangle, used a “Maximum Delivery” bidding strategy to prioritize conversions, and built videos with strong hooks in the first three seconds to earn attention. As performance data came in, the campaign could be optimized with more confidence because measurement wasn’t drifting. That’s the hidden advantage of good analytics: it accelerates iteration without second-guessing.

The final conflict is that scaling amplifies every weakness. If creative fatigue hits, ROAS drops. If attribution gets noisy, the team hesitates. If the landing experience lags, clicks don’t convert. Naughty% had to keep creative fresh while validating outcomes fast enough to maintain confidence in the strategy.

The dream outcome was clear, published, and specific. TikTok’s case study reports 900% growth in sales attributed to TikTok and Pangle ads from January to March 2024, alongside 1.1M impressions, more than 76K clicks, and over 670 conversions, with ROAS figures listed for both placements. The lesson isn’t “use TikTok.” The lesson is: signal quality plus a structured creative approach makes scaling feel grounded instead of reckless.

DSW Found The Missing Revenue, Then Used Measurement To Change The Strategy

The problem didn’t show up as a dramatic crash. It showed up as something more dangerous: campaigns that looked fine, but never felt fully trustworthy. DSW’s digital efforts weren’t accurately accounting for revenue happening in-store, which meant performance reporting was quietly undercounting what mattered most. When your analytics is incomplete, you don’t just lose insight—you risk making the wrong budget decisions for months.

The backstory is that DSW is an omnichannel retailer, and real shopping behavior doesn’t stay inside one channel. People browse online, see products in social ads, then purchase in-store, and the journey doesn’t always leave clean fingerprints. If your model only credits ecommerce, the team can start optimizing for what’s easiest to track, not what actually drives total revenue. Over time, that creates a strategy that looks “data-driven” while drifting away from reality.

The wall was attribution blindness. If in-store revenue isn’t connected, you can’t measure true return, you can’t optimize confidently, and you can’t defend your strategy internally when budgets get questioned. The campaign might be working, but the dashboard can’t prove it. That gap forces teams into awkward arguments: marketing says “it’s helping,” finance says “show me.”

The epiphany was deciding to connect online and offline signals so the business could see one performance picture. Adweek’s write-up on the 2025 Meta Agency Awards describes how Tinuiti and DSW incorporated offline sales data into campaign tracking to build a unified view connecting digital efforts with in-store results. It also highlights the use of a conversion lift test design, including a 30% holdout group, to validate incrementality.

The journey was operational work that many teams avoid because it isn’t glamorous. It required aligning data sources, agreeing on how offline sales would be ingested, and standardizing how results would be evaluated. The campaign execution then had a measurement foundation solid enough to support real optimization, rather than optimization-by-feel. This is what “professional” looks like in social media marketing digital: not better opinions, better evidence.

The final conflict is that omnichannel measurement can create new complexity. If the team can’t maintain data quality, models drift again. If stakeholders don’t agree on what “success” means, reporting becomes political. And if lift testing isn’t designed carefully, it can be misread or dismissed. The work only pays off when the process becomes repeatable.

The dream outcome is a strategy the business can trust. Not every number is public, but the campaign’s inclusion as a performance award winner is still meaningful. The award is explicitly tied to the measurement problem being solved: connecting offline revenue to digital optimization and validating incrementality, as described in Adweek’s campaign overview. The real win is that the team’s next decision is based on a fuller picture of revenue, not a partial one.

Professional Promotion

“Promotion” becomes professional when it’s driven by evidence, not impulses. The goal is not to boost everything. The goal is to promote the right creative, to the right people, at the right time, with measurement strong enough to learn what actually changed.

What To Amplify

- Proof posts that already earned intent. If a post is getting saves, shares, thoughtful comments, or DMs, it’s telling you the message landed. That’s the kind of creative worth amplifying.

- Offers that match the moment. If your audience is discovering you, lead with clarity and credibility. If they already know you, lead with conversion assets.

- Creator content that reduces friction. When creator partnerships are a growing budget line, reflected in the projected $37 billion US creator spend, amplification should prioritize content that feels human and answers real objections.

How To Measure Promotion Without Fooling Yourself

- Keep tracking consistent. If UTMs and naming conventions change every week, you lose the thread and end up debating opinions again. Google’s guidance on campaign data handling in Analytics documentation is a practical reference for keeping this clean.

- Use server-side signals when you rely on conversion optimization. Meta and TikTok both explain how server event sharing supports more stable measurement and optimization in Meta’s Conversions API docs and TikTok’s Events API overview.

- Validate incrementality when budgets get serious. Lift tests and holdouts are not “enterprise-only.” They’re what you use when you need to know what your ads truly caused, illustrated by examples like the holdout-based lift validation described in the DSW campaign overview.

Professional promotion is really a promise: every euro you put behind a post should produce learning, not just spend. When your analytics setup supports that promise, social media marketing digital stops feeling like noise and starts behaving like a growth system you can confidently scale.

Advanced Strategies

Once your social media marketing digital foundation is steady, “advanced” stops meaning complicated and starts meaning deliberate. You’re no longer trying to prove that social can work. You’re trying to make results repeatable at higher volume without losing efficiency, trust, or clarity.

The biggest shift at this stage is moving from single-post thinking to system thinking: portfolio creative, audience orchestration, signal quality, and a measurement approach that can survive privacy limits and multi-device journeys.

Build A Creative Portfolio, Not A Single Winner

Scaling breaks “one-hit” strategies. A single winning ad or format will fatigue, and when it does, the whole program wobbles. A creative portfolio spreads risk across multiple angles: problem-led, proof-led, objection-led, comparison-led, and identity-led content.

Short-form video is usually where this portfolio expands fastest because it supports many angles quickly. The shift toward short-form shows up in benchmark work such as Emplifi’s 2025 benchmarks, which track Reels’ reach engagement rate and year-over-year changes in video engagement. Video is also accelerating on professional networks: LinkedIn video views grew 36% year over year, and uploads rose more than 20%.

Portfolio thinking also makes your learnings portable. Instead of “this post worked,” you learn “this angle works,” which can be applied across formats, creators, and campaigns.

Engineer Signals So Optimization Has Something Real To Learn From

At small scale, platforms can still deliver results even with imperfect tracking. At larger scale, messy signals become expensive because the systems optimize toward incomplete data. That’s why advanced social media marketing digital teams treat signal quality as infrastructure.

For paid social, this typically means pairing browser signals with server-to-server signals where appropriate, using documented integrations like Meta’s Conversions API and TikTok’s Events API. The goal isn’t “more tracking.” The goal is more reliable conversion signals so delivery systems can learn faster and your reporting can be trusted when decisions get political.

Move From Attribution Debates To Incrementality Proof

As spend rises, attribution debates become a tax on progress. Teams waste energy arguing about which touchpoint “deserves” credit instead of learning what actually caused incremental revenue. Incrementality methods solve the more important question: what happened because of the ads that would not have happened otherwise.

Conversion lift and holdout approaches are increasingly standard in platform measurement, as Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study explainer both describe. When you validate incrementality, promotion becomes easier to scale because you’re buying proven impact, not just reported clicks.
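Platform lift studies assign users to test and control groups for you. Suppose you run your own holdout on an owned audience, such as a CRM list you upload for retargeting. The split then needs to be random and stable for the whole test. A common technique, sketched here in Python under that assumption, hashes a user ID together with an experiment name into a bucket between 0 and 1. The function name, experiment label, and 30% default are hypothetical.

```python
import hashlib


def in_holdout(user_id: str, experiment: str, holdout_share: float = 0.30) -> bool:
    """Deterministically assign a user to the holdout group.

    Hashing (experiment, user_id) gives each user a stable pseudo-random bucket,
    so the same person stays in the same group for the whole test, and a new
    experiment name reshuffles everyone.
    """
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2**32  # first 32 bits mapped to [0, 1)
    return bucket < holdout_share


users = [f"user-{i}" for i in range(10_000)]
held_out = [u for u in users if in_holdout(u, "q3-retargeting")]
print(f"{len(held_out) / len(users):.1%} held out")  # close to 30%, not exactly
```

Users in the holdout are excluded from the uploaded audience. Everyone else receives ads as usual, and conversions for both groups are compared afterward.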

Scale Distribution Through Creators Without Losing Control

Creators scale faster than brand accounts because they scale trust. The challenge is governance: approvals, usage rights, paid amplification rules, brand safety, and performance measurement that doesn’t collapse into vanity metrics.

Creator investment is now large enough to behave like a formal channel rather than an experiment. US creator ad spend was projected to reach $37 billion in 2025, a figure also covered in mainstream reporting by Business Insider and Forbes. At this level, the best teams treat creator content like a library of assets: performance-tagged, reusable, and connected to outcomes.

Scaling Framework

Scaling social media marketing digital is not “posting more.” It’s expanding output, spend, and impact while protecting efficiency. The framework below keeps scale sane by enforcing constraints that prevent the most common failures: creative fatigue, measurement drift, and operational overload.

1) Governance That Moves Fast Without Breaking

Scaling increases risk. More people touch the brand voice, more accounts exist, more paid spend runs, and more public conversations can spiral. The fix is not bureaucracy; it’s lightweight governance with clear ownership, access control, and escalation rules.

In regulated markets, transparency expectations continue to formalize, and practical orientation materials like the EU’s DSA transparency overview help teams think ahead rather than scramble after something goes wrong.

2) Cadence That Protects Quality

At scale, the calendar becomes a production line. If quality drops, performance drops, and spend becomes less efficient. The answer is batching and templating: repeatable formats, reusable briefs, and creative direction that stays consistent while angles rotate.

Benchmarks can help you pick a realistic pace by showing how formats are shifting across brands, like the format and engagement trends described in Emplifi’s 2025 report and the platform engagement movement discussed in Sprout Social’s 2025 benchmarks by industry.

3) Portfolio Budgeting Instead Of Single-Campaign Bets

Scaling works best when you split investment into a portfolio:

- Always-on proof that builds trust and captures demand steadily.

- Offers and launches that create urgency and conversion spikes.

- Experiments that search for the next scalable angle or audience.
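Portfolio splits are easy to state but easy to get wrong in a spreadsheet, where rounding can leave a few cents unallocated or overspent. The Python sketch below allocates a budget in whole cents using the largest-remainder method, so the buckets always sum exactly to the total. The bucket names and the 60/25/15 weights are hypothetical, not a recommendation.

```python
def split_budget(total_cents: int, weights: dict[str, float]) -> dict[str, int]:
    """Split a budget across portfolio buckets so the parts sum exactly to the total.

    Uses the largest-remainder method on whole cents to avoid rounding drift.
    """
    weight_sum = sum(weights.values())
    raw = {name: total_cents * w / weight_sum for name, w in weights.items()}
    alloc = {name: int(amount) for name, amount in raw.items()}  # floor each share
    leftover = total_cents - sum(alloc.values())
    # Hand the remaining cents to the buckets with the largest fractional parts.
    for name in sorted(raw, key=lambda n: raw[n] - alloc[n], reverse=True)[:leftover]:
        alloc[name] += 1
    return alloc


# Hypothetical weights for a 10,000.00 monthly budget, expressed in cents.
plan = split_budget(1_000_000, {"always_on": 0.60, "offers": 0.25, "experiments": 0.15})
print(plan)
```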

This protects performance when one segment slows down. It also keeps learning continuous, which matters in an environment where budgets are rising and competition is intense, reflected in the $258.6 billion US digital advertising revenue figure for 2024.

4) Measurement That Stays Stable As Volume Grows

Scaling breaks fragile measurement. UTMs drift, naming conventions degrade, pixels misfire, and teams start reporting different truths. Prevent this by locking a measurement standard: one tagging system, one dashboard definition, and a routine check that your conversion events are still firing properly.
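The routine check that conversion events are still firing can be automated with a simple comparison of the latest day's event count against a trailing average. The Python sketch below assumes you can export daily counts per event. The 7-day window and the 50% drop threshold are hypothetical defaults.

```python
from statistics import mean


def event_health(daily_counts: list[int], drop_threshold: float = 0.5) -> str:
    """Flag a conversion event whose latest daily count falls far below its recent average.

    `daily_counts` runs oldest to newest; the last value is the latest full day.
    `drop_threshold` (hypothetical default: 50%) is how far below the trailing
    7-day average the latest day must fall before raising an alert.
    """
    if len(daily_counts) < 8:
        raise ValueError("need at least 7 days of history plus the latest day")
    trailing = mean(daily_counts[-8:-1])
    latest = daily_counts[-1]
    if latest == 0:
        return "alert: no events recorded, check that the tag or server integration is firing"
    if latest < trailing * (1 - drop_threshold):
        return f"alert: {latest} events vs trailing average {trailing:.0f}"
    return "ok"


purchases = [120, 115, 130, 125, 118, 122, 127, 40]  # invented daily counts
print(event_health(purchases))
```

Conversion volume often follows a weekly pattern, so a sturdier version might compare each day with the same weekday in previous weeks.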

For web analytics consistency, practical documentation like Google Analytics campaign and traffic source guidance is useful as a baseline. For platform optimization stability, build redundancy using integrations described in Meta’s Conversions API documentation and TikTok’s Events API overview.

Growth Optimization

Optimization at scale is not “more tweaks.” It’s choosing the smallest set of levers that reliably move outcomes, then running them as a weekly system. The discipline is in deciding what not to change.

Creative Iteration As A Weekly Process

At small budgets, you can sometimes get away with “pretty good” creative. At scale, creative becomes your primary efficiency lever, because audience saturation arrives faster and fatigue arrives harder. Your goal is to rotate angles while keeping the message consistent.

Use a simple weekly loop: ship new hooks, refresh proof assets, and repurpose the best-performing creator content into new cuts. Keep a record of what changed so you learn patterns rather than celebrating random wins.

Audience Orchestration Across The Journey

Scaling means you stop treating “the audience” as one group. You build layers: cold discovery, warm consideration, hot retargeting, and post-purchase retention. Each layer needs a different message and a different measure of success.

When you do this well, organic content builds trust and paid promotion accelerates reach to the right segment at the right time. It also makes your reporting clearer because performance is evaluated against the job each layer is meant to do.

Incrementality As A Routine, Not A One-Off

At scale, you need a regular way to confirm that growth is real. That’s where lift testing or holdout designs become more than a “special project.” They become a periodic health check.

Platform measurement ecosystems increasingly point in this direction, as reflected in Meta’s Conversion Lift materials and in TikTok’s Conversion Lift Study explainer, which emphasizes incrementality experimentation. When your team can say “this is incremental,” scaling decisions get easier and internal skepticism fades.
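The core arithmetic of a holdout test is simple. You compare the conversion rate of people who could see ads (test) with people deliberately held out (control). Relative lift is the test rate minus the control rate, divided by the control rate. The Python sketch below computes that lift. It also runs a pooled two-proportion z-test as a basic check that the difference is unlikely to be noise. This is a simplified stand-in, since Meta's and TikTok's lift studies use their own methodologies. The counts in the example are invented for illustration.

```python
import math
from typing import Tuple


def conversion_lift(test_conv: int, test_n: int,
                    control_conv: int, control_n: int) -> Tuple[float, float, float]:
    """Return (relative lift, z-score, two-sided p-value) for a test/control holdout."""
    p_test = test_conv / test_n
    p_control = control_conv / control_n
    lift = (p_test - p_control) / p_control
    # Pooled two-proportion z-test: assumes people were randomly assigned to groups.
    p_pool = (test_conv + control_conv) / (test_n + control_n)
    se = math.sqrt(p_pool * (1 - p_pool) * (1 / test_n + 1 / control_n))
    z = (p_test - p_control) / se
    # Two-sided p-value for a standard normal: 2 * (1 - Phi(|z|)) == erfc(|z| / sqrt(2)).
    p_value = math.erfc(abs(z) / math.sqrt(2))
    return lift, z, p_value


lift, z, p = conversion_lift(1150, 100_000, 1000, 100_000)
print(f"lift={lift:.1%} z={z:.2f} p={p:.4f}")
```

With these made-up counts, the test group converts about 15% more often, with a z-score near 3.25. That is the kind of result you can defend in a budget meeting. A similar lift on much smaller groups might not clear the same bar.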

Scaling Stories

Scaling stories are useful when they show what actually changes when a program grows: the pressure, the constraints, the mistakes, and the moment measurement turns chaos into confidence. This one is grounded in publicly shared campaign documentation and focuses on the implementation lesson, not the hype.

Domino’s Spain Tried To Prove TikTok Was Incremental, Not Just Loud

The pressure hit when the campaign wasn’t just “another test.” It was tied to a major moment, and the business needed proof, not vibes. The team couldn’t afford a story that sounded good while the numbers stayed ambiguous. If performance was questioned later, they needed an answer stronger than “TikTok drove awareness.”

The backstory was already ambitious. Domino’s Spain wanted to push app purchases with a strong promotional offer and make the app the default place people ordered, not just a backup option. They also wanted to understand the role TikTok played across the funnel, not only in last-click reporting, as described in TikTok’s Domino’s Spain campaign overview.

The wall showed up where most scaling programs get stuck: measurement credibility. If you can’t isolate what ads caused, every stakeholder reads the same dashboard differently and decisions slow down. The bigger the budget, the more dangerous that becomes because uncertainty turns into hesitation or politics.

The epiphany was choosing a method designed to answer the real question. Instead of arguing about attribution models, they ran an incrementality experiment using a conversion lift design with test and control groups to measure what changed because of exposure. TikTok frames this methodology directly in its conversion lift materials, including the brand-specific write-up in the Domino’s conversion lift study page and the broader explanation in TikTok’s Conversion Lift Study overview.

The journey was about building confidence through structure. They treated measurement like part of the strategy, not a report at the end, and used the lift framework to connect exposure to outcomes across search behavior, web, and app actions. They also approached it as a full-funnel effort tied to a major cultural moment, documented in the Euro 2024 conversion lift case summary.

The final conflict was that scaling always tempts teams into oversimplifying results. If one metric moves, people want to declare victory and move on, even if other signals don’t align yet. A lift study can also create friction if teams aren’t aligned on what “success” means before the test begins. The process only works when the organization commits to accepting the outcome and using it to make the next decision.

The dream outcome was clarity strong enough to scale. TikTok’s published results highlight lift across multiple outcomes, including a +6% lift in brand-related search conversion rate, a +14% lift in app installs, and a +9% lift in web and app complete payments. The bigger lesson for social media marketing digital isn’t “copy this campaign”; it’s “scale what you can prove is incremental,” because proof turns promotion into a confident business decision instead of a leap of faith.

What To Promote When You’re Scaling

- Content that already earned intent. When a post triggers saves, shares, thoughtful comments, or qualified DMs, it’s showing you the message is landing. Promotion turns that signal into reach.

- Proof that reduces friction. Case studies, demos, before-and-after explanations, and creator content that answers objections scale better than generic brand claims.

- Offers with clean conversion paths. Scaling exposes weak landing pages and confusing handoffs instantly. Promote only what converts reliably under pressure.

How To Spend Without Losing Efficiency

Use a portfolio approach, not one big bet. Keep an always-on layer for steady demand capture, a seasonal layer for moments that spike intent, and an experimentation layer that hunts for new winners. This helps you avoid the “everything depends on one campaign” trap.
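A portfolio split is easier to hold to when it is written down as explicit shares. The Python sketch below allocates a budget across the three layers described above. The 70/20/10 split and the layer names are hypothetical placeholders, not a recommendation from this guide's sources.

```python
import math
from typing import Dict, Optional

# Hypothetical shares; tune them to your own seasonality and risk appetite.
DEFAULT_SHARES = {"always_on": 0.70, "seasonal": 0.20, "experimentation": 0.10}


def allocate(total_budget: float,
             shares: Optional[Dict[str, float]] = None) -> Dict[str, float]:
    """Split a budget across portfolio layers; shares must sum to 1."""
    shares = DEFAULT_SHARES if shares is None else shares
    if not math.isclose(sum(shares.values()), 1.0):
        raise ValueError("layer shares must sum to 1")
    return {layer: round(total_budget * share, 2) for layer, share in shares.items()}


print(allocate(10_000))  # {'always_on': 7000.0, 'seasonal': 2000.0, 'experimentation': 1000.0}
```

Making the experimentation layer an explicit share keeps the search for new winners visible. It is less likely to be quietly absorbed into whichever campaign is performing best this week.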

It also reflects a broader market trend: marketers keep increasing their investment in social. Nielsen’s survey results show 29% of global marketers planned to increase social media spend by more than 50% in the next 12 months, which makes efficiency and proof even more important as competition rises.

How To Measure Promotion Like A Professional

- Lock your tracking standards. Consistent UTMs and naming conventions keep learning intact. If your definitions drift, your reporting becomes a debate.

- Protect signal quality. Build redundancy using documented platform approaches like Meta’s Conversions API and TikTok’s Events API so optimization and reporting stay stable as spend grows.

- Validate incrementality on a schedule. When budgets become meaningful, periodic lift testing prevents false confidence and helps defend scaling decisions, as reflected in the lift-based measurement approaches described in TikTok’s incrementality explainer and Meta’s Conversion Lift overview.

Professional promotion is where social media marketing digital becomes a real growth engine. When you promote what already proves intent, measure what’s incremental, and keep the system stable, scaling stops feeling like gambling and starts feeling like compounding.

Future Trends

The next wave of social media marketing digital will feel less like “posting on platforms” and more like operating inside an ecosystem that blends search behavior, creators, commerce, AI, and measurement constraints. The teams that win won’t be the loudest. They’ll be the most adaptable, because they’ll build systems that still work when distribution shifts.

These trends are shaping what that ecosystem looks like right now:

- Social discovery becomes a default search behavior. People are increasingly using social feeds to discover products, ideas, and “how-to” answers in the moment, and platforms are leaning into that behavior. TikTok frames this shift directly in its 2026 trend forecast for marketers, while broader industry reporting continues to track how discovery patterns are changing.

- Authenticity beats polish, but only when it’s operationalized. “Real” content is not a vibe; it’s a production decision. TikTok’s Next 2026 trend hub and the downloadable TikTok Next 2026 Trend Report both emphasize process, behind-the-scenes, and unfiltered storytelling as a driver of resonance.

- Short-form video keeps expanding into serious business contexts. LinkedIn’s momentum is a signal that video is no longer “only for entertainment,” highlighted by reporting that LinkedIn video views grew 36% year over year as the platform pushes deeper into video and creator ecosystems.

- Creator media becomes a planning line item, not an experiment. Paid creator investment is now large enough to shape channel strategy. The IAB’s 2025 annual reporting references $37B in creator-economy spend projections, and the dedicated IAB creator ad spend report reinforces the point.

- Measurement becomes privacy-safe by design, not by patchwork. Attribution will continue to be noisy. The advantage goes to teams that build stable signal flows and validate incrementality. Meta’s product direction is visible in its Conversions API overview, including the note that Offline Conversions API was scheduled for discontinuation in May 2025, nudging teams toward cleaner, modern measurement patterns.

- Remote work expands the talent market for both companies and freelancers. For freelancers, this matters because the supply of remote marketing work is massive and easy to access if you have proof and a tight offer. Job-market snapshots show scale at a glance, like FlexJobs listing 18,870 remote marketing jobs (Feb 2026) and LinkedIn showing 54,000+ remote marketing jobs in the US.

Future-proofing social media marketing digital doesn’t require predicting every platform move. It requires building a system that can absorb change: portfolio creative, resilient measurement, and a distribution plan that doesn’t depend on a single channel.

Strategic Framework Recap

This guide has treated social media marketing digital as an operating system, not a content calendar. Here’s the recap in plain language:

- Start with outcomes. Define what the business needs (pipeline, purchases, retention, hires) and how you’ll prove it with clean measurement rules.

- Build a repeatable content system. Use a small set of formats with clear jobs, so quality stays high even when the team is busy.

- Design a conversion path that doesn’t break. Every post should lead somewhere meaningful, and your tracking should stay consistent so you can learn.

- Layer execution. Foundation first (consistency and tracking), then performance (testing and promotion), then scale (governance, integration, stability).

- Optimize with discipline. One hypothesis at a time, weekly reviews, documented learnings that compound.

- Scale what you can prove. When budgets rise and competition intensifies, incrementality validation becomes the difference between confident growth and expensive guessing.

If you implement nothing else, implement this: a weekly learning loop that forces decisions. It’s the simplest habit that turns effort into compounding results.

FAQ – Built For A Complete Guide

What does “social media marketing digital” actually mean?

It’s the full system of how you use social platforms to create demand and capture demand, using content, community, creators, paid distribution, and measurement. The “digital” part matters because success depends on tracking, conversion paths, and data quality—not just publishing.

What metrics matter most if I want business outcomes, not vanity metrics?

Use a small stack: attention (watch time, reach), intent (saves, shares, qualified comments, clicks), and action (leads, purchases, qualified calls). The key is tying action to measurement infrastructure you can trust, using standards like clean campaign tagging in Google Analytics and stable conversion signaling where relevant.

How often should I post to see results?

Post as often as you can maintain quality and consistency. A reliable cadence beats bursts. Many teams win by choosing 4–6 repeatable formats and publishing a sustainable weekly schedule rather than chasing daily output.

Should I focus on organic content or paid promotion?

Use both, but give them different jobs. Organic builds trust and creates a steady learning stream. Paid accelerates distribution for what already shows intent. The professional move is promoting proven creative instead of guessing what might work.

How do I choose which platforms to prioritize?

Choose based on audience behavior and format fit. If your audience uses a platform to discover and research, you need a presence there. If the platform is increasingly video-led in your category, prioritize video formats. Trend signals like LinkedIn’s accelerating video growth can help you validate where attention is moving.

Why does social tracking feel unreliable compared to other channels?

Because people switch devices, platforms restrict data, and browser tracking gets blocked. The practical solution is redundancy: consistent campaign tagging plus stronger event signaling where needed. Meta’s Conversions API overview explains how server-side event sharing supports measurement and optimization when browser signals degrade.

What is incrementality, and why should I care?

Incrementality asks: what happened because of your marketing that wouldn’t have happened otherwise? It matters when budgets scale and leadership wants proof. Lift and holdout approaches exist specifically to answer that question, described in Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study explainer.

How do creators fit into a serious growth strategy?

Creators help scale trust, not just reach. The best approach is treating creator content like a performance asset library: tag what works, reuse it, and amplify it responsibly. Creator media’s importance is reflected in market-level reporting like the IAB creator ad spend report.

What’s the fastest way to improve performance without blowing up the whole strategy?

Pick one bottleneck and fix it. If content earns attention but not clicks, your messaging is unclear. If clicks happen but conversions don’t, your landing page or offer is the bottleneck. If conversions happen but reporting is messy, your tracking and signal setup needs work.
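The diagnosis above is an ordered check, which the Python sketch below encodes. It tests click-through rate first, then the click-to-conversion rate, then whether two sources agree on conversion counts. The thresholds (1% CTR, 2% conversion rate, 20% tracking gap) are hypothetical benchmarks for illustration, and so is the comparison of backend-confirmed conversions against analytics-reported ones. Set both from your own historical data.

```python
def find_bottleneck(impressions: int, clicks: int, conversions: int,
                    tracked_conversions: int, min_ctr: float = 0.01,
                    min_cvr: float = 0.02, max_tracking_gap: float = 0.20) -> str:
    """Return the first stage that falls below its (hypothetical) benchmark.

    conversions: conversions confirmed in your backend or CRM.
    tracked_conversions: conversions your analytics or ad platform reported.
    """
    ctr = clicks / impressions if impressions else 0.0
    if ctr < min_ctr:
        return "messaging: attention is not turning into clicks"
    cvr = conversions / clicks if clicks else 0.0
    if cvr < min_cvr:
        return "landing page or offer: clicks are not converting"
    gap = abs(conversions - tracked_conversions) / conversions if conversions else 0.0
    if gap > max_tracking_gap:
        return "tracking: sources disagree on how many conversions happened"
    return "no bottleneck at these thresholds"


print(find_bottleneck(impressions=100_000, clicks=2_000, conversions=100, tracked_conversions=60))
```

The order matters. There is little point in tuning a landing page for clicks that never arrive, so the check stops at the first stage that fails.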

If I’m a freelancer, what proof matters most to win clients?

One tight case study beats a long resume. Show the baseline, what you changed, and what moved. Keep it simple and visual. Freelancers who connect their work to outcomes are easier to hire because the risk feels lower. For market context on freelance demand, see Upwork’s 2026 in-demand careers resource and the broader trend framing in Upwork’s 2026 in-demand skills report.

How do I stay ahead as trends change in 2026?

Build adaptability into the system: portfolio creative, weekly testing, and stable measurement. Trend work like the TikTok Next 2026 Trend Report is useful for direction, but your advantage comes from executing consistently and learning faster than competitors.

Work With Professionals

There’s a frustrating moment almost every marketer hits: you know what to do, you can see the strategy clearly, and you still feel stuck because the next client doesn’t show up fast enough. You’re not short on skill. You’re short on a clean pipeline that brings the right opportunities without endless pitching.