Most teams don’t fail at social because they “didn’t post enough.” They fail because social quietly becomes five jobs at once: brand, performance, community, creator partnerships, and customer care.

That’s why social media marketing companies have become less like “a vendor who posts” and more like an operating partner: they bring a system, specialist talent, and measurement discipline that’s hard to maintain in-house when priorities shift every quarter.

This series breaks down what these companies actually do, why they matter now (not just “because TikTok”), and a framework you can use to choose, brief, and manage them without wasting months on misalignment.

Article Outline

- What Are Social Media Marketing Companies?

- Why Social Media Marketing Companies Matter

- Framework Overview

- Core Components

- Professional Implementation

What Are Social Media Marketing Companies?

Social media marketing companies are specialist teams that plan, produce, distribute, and optimize social media work across both organic and paid channels. In practice, they sit somewhere between a creative studio, a media buying function, and a customer experience layer.

The best ones don’t treat “social” as a single skill. They split it into distinct capabilities: creative strategy and production (what you say and how it looks), distribution (where and to whom it’s shown), community and customer care (how you respond and build trust), and measurement (what actually drove outcomes).

This distinction matters because the economics of attention keep moving. Global social media usage continues to grow, and the amount of inventory being bought and sold through social platforms keeps expanding, which changes what brands expect from partners and what partners must be good at to deliver results. Kepios’ Digital 2025 report highlights the scale of global social media use, but that scale only helps if a partner can translate it into focused reach for your audience. Kepios’ running global social media statistics also show how fast the baseline keeps moving.

In other words: social media marketing companies aren’t “posting agencies.” They’re teams that build a repeatable system for attention, trust, and conversion inside environments that change every few months.

Why Social Media Marketing Companies Matter

Social has become one of the largest pipes in the modern advertising economy, and that changes the stakes. Multiple independent forecasts point to global ad spend pushing past the trillion-dollar mark in 2025, with digital taking an increasingly dominant share. WPP Media’s December 2025 forecast projects global advertising revenue reaching about $1.14T for 2025, while Insider Intelligence (eMarketer) also frames 2025 as a “$1T+” year with digital exceeding 75% share. Even when projections differ due to economic assumptions, the direction is consistent: more budget is flowing into measurable, auction-based media where execution quality directly impacts cost and outcomes.

Within that, social is a major growth engine. WARC’s 2025 outlook pegs social media ad spend in the hundreds of billions and growing at double-digit rates. WARC has projected social ad spend rising to roughly $306B in 2025, and their broader social media “big picture” view also expects spend to exceed $300B in 2026. When that much money is in motion, small operational advantages compound quickly.

Then there’s the expectation gap. Brands aren’t just competing on creative anymore; they’re competing on responsiveness and trust. Consumer research keeps landing on the same pressure point: if you’re slow to respond on social, people move on. Sprout Social’s Index-based guidance notes that a large share of consumers expect a response within 24 hours, and the “speed” threshold shows up again in other datasets: Emplifi’s 2025 consumer-brand survey frames 24 hours as the outer limit for acceptable replies, while MarketingCharts’ coverage of social response expectations shows many frequent users want replies in hours, not days.

Finally, the work itself is becoming more technical. Platform automation and AI-driven optimization are accelerating, but that doesn’t remove the need for experts; it shifts where experts create leverage. Meta’s own roadmap and earnings communications underline how heavily its ad systems are leaning into AI and automation. Meta’s full-year 2025 results (released January 28, 2026) emphasize continued investment in AI and core advertising performance, and Meta’s Advantage+ product positioning highlights automation as a central performance lever. The practical takeaway: partners need to understand what the machines do well, where they fail, and how to feed them better inputs (creative, audiences, conversion signals, and clean measurement).

That’s the “why now.” Social media marketing companies matter because they sit at the intersection of budget scale, customer expectations, and increasingly automated platforms. They’re there to keep you from paying the “complexity tax” in wasted spend, inconsistent creative, slow response times, and unclear attribution.

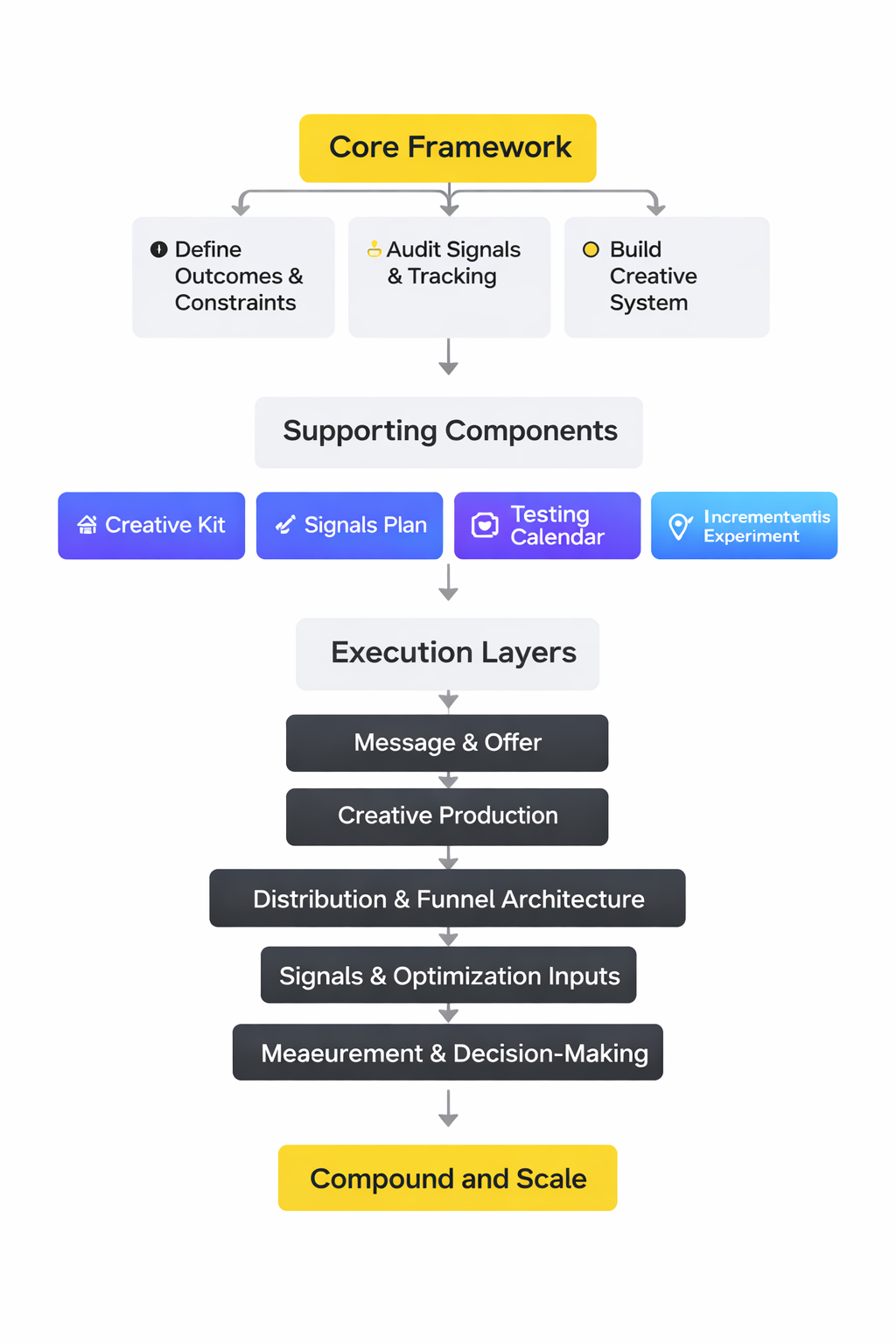

Framework Overview

This framework is designed to help you evaluate social media marketing companies the way you’d evaluate a high-impact internal function: by system design, not vibes. It’s also built to prevent a common failure mode where a partner looks great in the pitch, then turns into a “posting calendar” once the contract starts.

Think of the framework as five connected layers:

- Strategy and positioning: the narrative and POV you can consistently defend in-market, not just campaign slogans.

- Creative operating system: how ideas become high-volume, platform-native assets fast enough to keep pace with content cycles.

- Distribution and experimentation: paid and organic working together, with clear hypotheses and controlled tests.

- Community and trust: response standards, moderation, escalation paths, and how social becomes a CX advantage.

- Measurement and learning: the analytics spine that turns performance into decisions, not dashboards.

Why these layers? Because marketing budgets are under pressure, and teams are prioritizing productivity and paid media efficiency. Gartner’s 2024 CMO survey notes marketing budgets at 7.7% of company revenue and paid media taking a larger share of budget, while Gartner’s 2025 update emphasizes budgets staying flat with many CMOs reporting insufficient funds to execute strategy. In that environment, a partner must prove they can run a tight system across creative, distribution, and measurement.

Core Components

Before you compare proposals, it helps to know what “good” must include. The core components below are what separate social media marketing companies that reliably create business outcomes from those that mainly produce activity.

1) Strategy That Survives the Content Calendar

A practical strategy is built around decisions: who you are for, what you will be known for, and what you will not chase. This is increasingly important because attention is being fragmented across formats and platforms, and “more content” is not the same as “more clarity.” HubSpot’s State of Marketing positioning for 2025 highlights how distinct brand POV and format choices shape results, and Salesforce’s 2026 State of Marketing commentary reinforces that AI and operational execution are now inseparable from strategy.

2) A Creative System Built for Speed and Variation

On modern social platforms, performance is often a function of how quickly you can test creative variations without degrading the brand. That’s why top partners operate like a lab: modular concepts, consistent formats, rapid production, and clear learning loops. Hootsuite’s trends work for 2026 focuses heavily on agility as a competitive advantage, and their earlier research shows the same operational reality: teams that can move quickly and learn are the ones that win. The 2025 Hootsuite Social Trends report explains its marketer sample and reinforces the shift toward insight-led execution.

Platform-native creative matters too. TikTok’s own research focuses on first impressions, early branding, and emotional impact as drivers of creative effectiveness. TikTok for Business highlights creative factors tied to stronger “return on creative” outcomes, and their measurement guidance emphasizes learning systems over one-off attribution takes. TikTok’s marketing science perspective frames measurement as an ongoing learning journey.

3) Distribution That Connects Organic, Paid, and Influence

Distribution is where many engagements die: great content with no reach plan, or paid spend with weak creative inputs. A strong partner designs distribution as a portfolio: organic for signal and trust, paid for scale and control, and creators/influencers for cultural adjacency and credibility.

Influencer spend is a useful proxy for how much brands value creator distribution, but the headline numbers vary by methodology, so treat them as directional rather than precise. Still, multiple trackers show the market is large and growing. Influencer Marketing Hub’s 2025 benchmark report estimates the influencer marketing market in the $30B+ range for 2025, while HypeAuditor’s 2025 state-of-influencer-marketing view presents a smaller estimate with its own assumptions. The key takeaway isn’t the exact dollar figure; it’s that creator distribution has become mainstream enough that partners need a real methodology for selecting, briefing, and measuring creator work.

For B2B, distribution increasingly includes professional video and thought leadership ecosystems, not just ads. Reuters’ August 25, 2025 reporting on LinkedIn’s video ad push shows how quickly professional platforms are building creator-style inventory, and LinkedIn’s 2024 B2B Marketing Benchmark report reflects the pressure for agility and data-driven execution in B2B social.

4) Community and Customer Care as a Growth Lever

Community is not “replying when you have time.” It’s a set of response standards, moderation rules, and escalation paths that protect trust. This matters because customer expectations are moving toward immediacy and personalization. Sprout Social’s customer service statistics summarize Index-based expectations for fast responses, and Emplifi’s 2025 survey connects slow responses with churn risk.

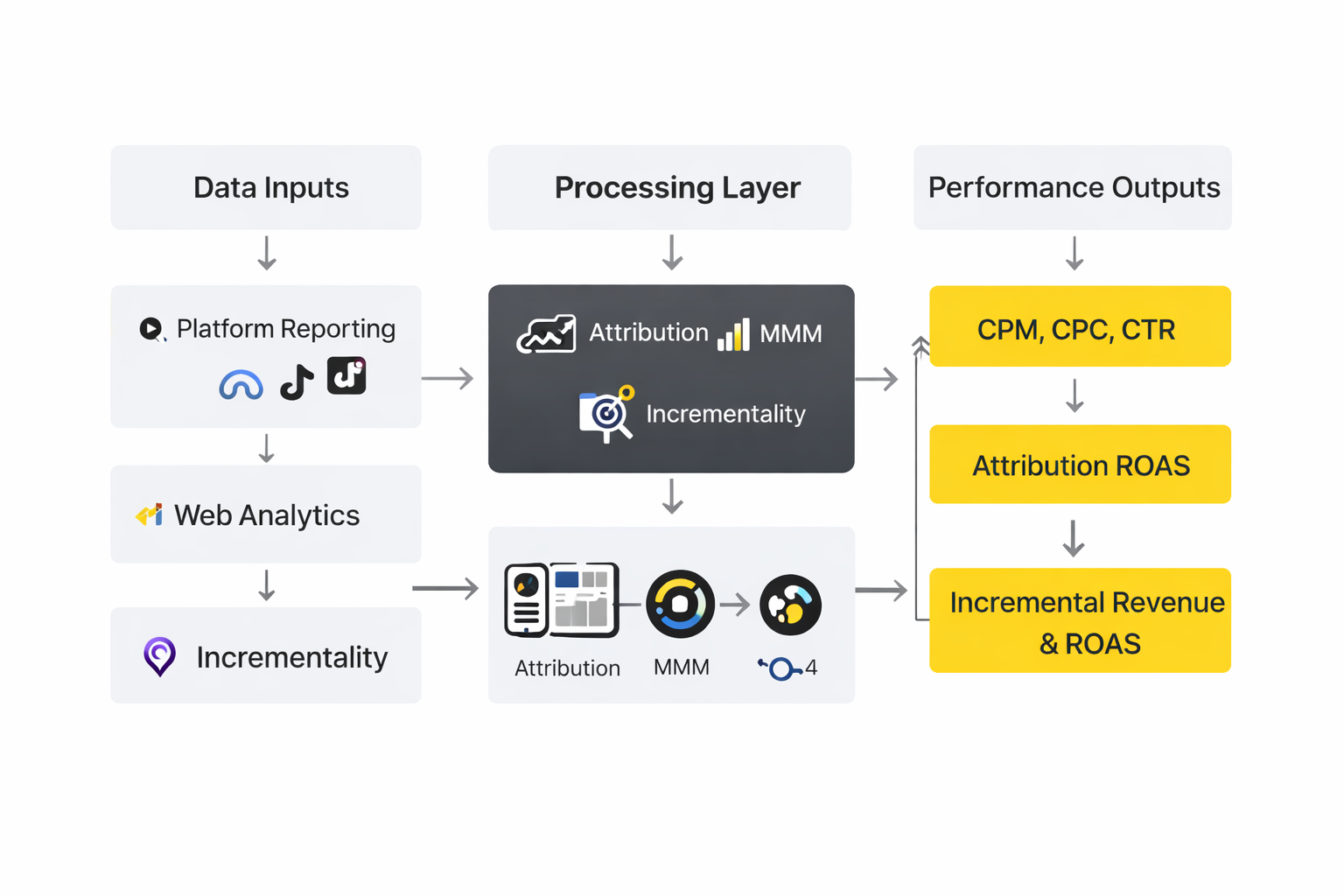

5) Measurement That Matches the Real Buying Journey

Social rarely works in a single-click universe anymore. People watch, save, return, ask friends, search, and only then convert. Measurement needs to acknowledge that messy reality while still enabling clear decisions.

One way to sanity-check a partner is to ask how they triangulate performance across platform reporting, on-site analytics, and incrementality signals. Industry measurement bodies continue to emphasize that digital and social are major revenue categories, which raises the bar for accountability. The IAB/PwC full-year 2024 internet ad revenue report highlights social as a major category with substantial year-over-year change, and broader research on influence argues that video and social touchpoints shape demand well beyond the last click. Google’s research with BCG on “influence” reframes how touchpoints affect demand across the journey.

Professional Implementation

Even a great framework fails if execution is fuzzy. Professional implementation is where you convert “we hired one of the best social media marketing companies” into a working operating rhythm.

At a minimum, strong partners make four things explicit from day one:

- Decision rights: who approves what, how fast, and what happens if approvals stall.

- Cadence: weekly performance reviews, monthly creative learning, quarterly strategy resets.

- Inputs and outputs: what the client must provide (offers, priorities, constraints) and what the partner must deliver (creative volume, test plan, measurement plan).

- Quality controls: brand safety, claims review, comment moderation rules, and escalation paths.

This matters because marketing leaders are being pushed to do more with budgets that are not growing. The CMO Survey’s 2025 highlights show ongoing pressure on marketing spend and digital investment, and Duke Fuqua’s summary of CMO Survey insights notes how spending mix and scrutiny are evolving. Under that scrutiny, the “implementation layer” is what prevents social from becoming a pile of disconnected tasks.

In Part 2, we’ll use the framework to map the service landscape: the main types of social media marketing companies, what each is best at, and the tradeoffs that matter before you sign anything.

Step-by-Step Implementation

The fastest way to spot experienced social media marketing companies is to watch how they start. Not with “let’s post more,” but with a disciplined implementation sequence that makes creative, targeting, and measurement work together without constant firefighting.

Below is a step-by-step rollout that fits most brands, whether the goal is membership growth, lead generation, or ecommerce. The steps look simple, but the order is the difference between a campaign that learns and a campaign that just spends.

Step 1: Define Outcomes and Constraints

Begin with a short “decision brief,” not a long deck. One page is enough: the business outcome, the primary audience, the offer, the non-negotiables (brand, legal, privacy), and what you’re willing to test.

This matters because the platforms can optimize almost anything, but they can’t fix vague goals. When Basic-Fit partnered with TikTok, the brief wasn’t only “drive subscriptions.” It also included a brand perception goal tied to well-being, which shaped the full-funnel build. Basic-Fit’s TikTok case study spells out both the membership and perception objectives.

Step 2: Audit Signals and Tracking

Before creative production ramps up, validate the conversion path end-to-end. That means confirming what counts as a conversion, where it fires, whether it dedupes properly, and whether each platform receives consistent event data.

For TikTok, teams often combine browser-side and server-side signals so the platform can see more of the journey. Basic-Fit implemented both the Pixel and Events API as part of its setup, explicitly positioning it as a way to share marketing data reliably and enable better optimization. Basic-Fit’s case study describes integrating Events API and TikTok Pixel for more precise targeting and optimization.

On Meta, server-side event passing is formalized through Conversions API, which is designed to connect advertiser marketing data with Meta for measurement and optimization. Meta’s Conversions API documentation describes the data connection purpose and supported event types.
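To make the "does it dedupe properly" check concrete, here is a minimal sketch. It assumes every conversion is sent with an event name and a shared event ID by both the browser-side and server-side integrations. The field names (`event_name`, `event_id`, `source`), the sample data, and both helper functions are hypothetical and are not either platform's actual payload schema.

```python
from collections import Counter


def dedupe_events(events):
    """Keep the first event seen for each (event_name, event_id) pair."""
    seen = set()
    unique = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key in seen:
            continue  # same conversion already reported by the other channel
        seen.add(key)
        unique.append(event)
    return unique


def source_overlap(events):
    """Count conversions that arrived via browser only, server only, or both."""
    sources_by_key = {}
    for event in events:
        key = (event["event_name"], event["event_id"])
        sources_by_key.setdefault(key, set()).add(event["source"])
    return Counter("+".join(sorted(s)) for s in sources_by_key.values())


# Hypothetical audit sample: one purchase was reported by both channels.
sample_events = [
    {"event_name": "Purchase", "event_id": "order-1001", "source": "browser"},
    {"event_name": "Purchase", "event_id": "order-1001", "source": "server"},
    {"event_name": "Purchase", "event_id": "order-1002", "source": "server"},
    {"event_name": "Lead", "event_id": "form-77", "source": "browser"},
]

unique_events = dedupe_events(sample_events)
overlap = source_overlap(sample_events)
print(len(unique_events), dict(overlap))
```

In an audit, a large "browser only" or "server only" share is usually the thing to investigate, since it suggests one of the two integrations is missing events or sending mismatched IDs.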

Step 3: Build a Creative System Before You Build a Creative Calendar

Social media marketing companies that scale performance don’t start by filling dates. They start by defining creative building blocks: a small set of repeatable formats, hooks, proof points, and CTAs that can be mixed into many variations without losing the brand.

In practice, this looks like a “creative kit” your team and the partner can use together: brand-safe claims, product shots, UGC guidelines, voice rules, and a workflow for approvals. The goal is to make iteration easy, because the learning rate is the whole game.

Basic-Fit leaned into TikTok-first creative by using TikTok Creative Exchange to create authentic videos alongside official campaign assets, which made variation part of the plan rather than an afterthought. Basic-Fit’s case study describes using TikTok Creative Exchange to produce TikTok-first videos.

Step 4: Launch in Controlled Waves

Instead of “launch everything,” start in waves: one audience cluster, one creative set, one conversion event, and one measurement plan. Wave one is for validation, not scale.

This approach reduces the risk of misattribution and lets the team find obvious breakpoints early. It also creates a shared language between stakeholders: what’s working, what’s uncertain, and what needs a clearer test.

Step 5: Measure Incrementality, Not Just Clicks

As soon as the campaign is stable, plan at least one incrementality experiment. Click-based reporting is useful, but it can’t tell you what would have happened without the ads.

TikTok frames Conversion Lift as the “gold standard” for understanding true impact through experimentation, which is why it’s often used to validate whether a full-funnel approach is actually driving new outcomes. TikTok’s Conversion Lift Study overview focuses on incrementality through experimentation.

Research in marketing measurement increasingly recommends triangulating multiple methods (attribution, MMM, and incrementality) to reduce blind spots in any single model. A 2025 study in the Journal of Digital & Social Media Marketing argues for a triangulation approach combining attribution, MMM, and incrementality.
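The arithmetic behind a lift experiment is simple enough to sketch. The example below assumes a randomized split into an exposed (test) group and a holdout (control) group, and uses a standard two-proportion z-test as the significance check. The function name and all numbers are hypothetical, and this is not a description of how any platform's lift product computes its results.

```python
import math


def lift_summary(test_users, test_conversions, control_users, control_conversions):
    """Compare an exposed (test) group with a holdout (control) group."""
    if control_conversions == 0:
        raise ValueError("relative lift is undefined with zero control conversions")

    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users

    # Conversions the test group would not have produced at the control rate.
    incremental = (test_rate - control_rate) * test_users
    relative_lift = (test_rate - control_rate) / control_rate

    # Two-proportion z-test with a pooled rate (normal approximation).
    pooled = (test_conversions + control_conversions) / (test_users + control_users)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / test_users + 1 / control_users))
    z = (test_rate - control_rate) / std_err
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided

    return {
        "relative_lift": relative_lift,
        "incremental_conversions": incremental,
        "p_value": p_value,
    }


# Hypothetical experiment: 100,000 users per group.
summary = lift_summary(
    test_users=100_000, test_conversions=1_200,
    control_users=100_000, control_conversions=1_000,
)
print(summary)
```

The useful contrast is with click-based reporting: attribution might credit all 1,200 conversions in the exposed group to ads, while the holdout suggests only about 200 of them were incremental.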

Execution Layers

Once implementation is underway, execution becomes a layered system. Strong social media marketing companies operate these layers in parallel, because a campaign can look “busy” while still failing at one critical layer.

Layer 1: Message and Offer

This is where clarity lives: what problem you solve, why now, and what action you want. If the offer is fuzzy, creative becomes entertainment instead of persuasion. If the message is inconsistent, the platform can optimize delivery, but it can’t optimize trust.

Layer 2: Creative Production

This layer is speed plus quality. The goal is to ship enough variations to learn, while keeping the brand recognizable and the claims defensible.

Basic-Fit’s build shows what “layered creative” can look like: official campaign assets plus TikTok-first content designed to feel native to the platform. Basic-Fit’s case study describes pairing official videos with TikTok-first creative created through TikTok Creative Exchange.

Layer 3: Distribution and Funnel Architecture

Distribution is how you sequence attention into action. That usually means top-of-funnel reach, mid-funnel education, and bottom-funnel retargeting that feels like a continuation of the conversation, not a sudden hard sell.

LinkedIn’s Innodisk story outlines a clean version of this: broad awareness with thought leadership content, mid-funnel website-driving content guided by demographics insights, then retargeting with the Insight Tag for bottom-funnel actions. LinkedIn’s Innodisk customer story lays out the three-stage full-funnel roadmap and Insight Tag role.

Layer 4: Signals and Optimization Inputs

This layer is unglamorous but decisive. Platforms learn from the signals you send them: events, match keys, audiences, and conversion definitions. If the signals are incomplete or inconsistent, optimization becomes noisy and fragile.

Meta’s Conversions API exists precisely to create a direct connection between marketing data and Meta systems, which is why teams pair it with browser-side signals and deduplication strategies. Meta’s Conversions API documentation explains the purpose of the server-side connection.

Layer 5: Measurement and Decision-Making

Measurement is only valuable if it ends in decisions. This layer defines what gets reviewed weekly, what gets reviewed monthly, and which numbers are used for learning versus reporting.

Optimization Process

Optimization isn’t “tweak targeting until it works.” It’s a disciplined loop that protects learning. The loop below is how many high-performing social media marketing companies keep progress steady even when the platform algorithms shift.

The Weekly Optimization Loop

- Monday: Review performance by creative theme and funnel stage, not just by campaign name. Decide what to pause, what to scale, and what needs a cleaner test.

- Tuesday–Wednesday: Produce and ship the next creative batch with a clear hypothesis (new hook, new proof point, new format, new CTA).

- Thursday: Validate signals and conversion integrity. If tracking drifted, fix it before drawing conclusions.

- Friday: Summarize learnings in plain language and update the next week’s test plan.
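The Monday review depends on being able to regroup ad-level data by creative theme and funnel stage rather than by campaign name. A minimal sketch of that rollup follows, assuming each ad row has already been tagged with a theme and a stage. The row fields, theme labels, and numbers are hypothetical.

```python
from collections import defaultdict


def rollup_by_theme(rows):
    """Sum spend and conversions per (theme, stage) and derive cost per acquisition."""
    totals = defaultdict(lambda: {"spend": 0.0, "conversions": 0})
    for row in rows:
        key = (row["theme"], row["stage"])
        totals[key]["spend"] += row["spend"]
        totals[key]["conversions"] += row["conversions"]

    report = {}
    for key, t in totals.items():
        # CPA is undefined when a theme has no conversions yet.
        cpa = t["spend"] / t["conversions"] if t["conversions"] else None
        report[key] = {"spend": t["spend"], "conversions": t["conversions"], "cpa": cpa}
    return report


# Hypothetical ad-level export, tagged by creative theme and funnel stage.
ad_rows = [
    {"ad": "A1", "theme": "social-proof", "stage": "bottom", "spend": 300.0, "conversions": 20},
    {"ad": "A2", "theme": "social-proof", "stage": "bottom", "spend": 200.0, "conversions": 5},
    {"ad": "B1", "theme": "how-it-works", "stage": "mid", "spend": 400.0, "conversions": 0},
]

report = rollup_by_theme(ad_rows)
for (theme, stage), stats in report.items():
    print(theme, stage, stats)
```

A theme with spend but no conversions is not automatically a pause candidate. For a mid-funnel theme, it may simply need a different success metric, which is the kind of call the Monday review exists to make.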

Testing Guardrails That Keep Results Trustworthy

Two pitfalls ruin optimization: running too many uncontrolled changes at once, and confusing A/B setup with causal impact. Platform delivery algorithms can create misleading outcomes if variants end up reaching different sub-audiences, even when the test looks clean on the surface.

A 2025 paper on experimentation tools in digital advertising highlights how A/B tests can suffer from “divergent delivery” when algorithms deliver variants to different audience segments, which can distort conclusions if you assume exposure is comparable. A 2025 arXiv paper explains divergent delivery risks in platform A/B testing.

That’s why incrementality experiments matter. TikTok’s Conversion Lift framing emphasizes experimentation to understand true impact, and it’s a practical complement to day-to-day A/B testing. TikTok’s Conversion Lift Study overview describes incrementality through experimentation.

Creative as the Main Lever

When tracking is stable and the funnel is coherent, creative becomes the strongest lever you control. Your partner should be able to explain what they learned from creative performance in a way that changes what gets produced next week, not just how budgets are moved.

Basic-Fit’s campaign intentionally combined multiple ad formats, creative variations, and measurement tools to strengthen performance across the funnel rather than relying on a single tactic. Basic-Fit’s case study describes bundling formats, TikTok-first creative, and signals solutions to maximize performance.

Implementation Stories

Implementation is where strategy gets tested by reality: deadlines, messy data, stakeholder approvals, and the uncomfortable fact that audiences don’t react the way you hoped. The stories below are built from published platform case studies, keeping the details grounded in what those companies publicly described.

Basic-Fit: Building a Full-Funnel System That Could Prove It Worked

The pressure wasn’t subtle. Membership growth needed acceleration, and the brand wanted to shift how people felt about it, not just how many people clicked. Every week that passed without momentum felt like the market choosing someone else.

Basic-Fit was already a major fitness brand in the Netherlands, known for affordability and 24/7 access. The ambition was bigger than a quick spike in sign-ups; it wanted long-term loyalty and a stronger association with well-being. That’s why TikTok wasn’t treated as a side channel; it became the stage for a “full-funnel” effort. Basic-Fit’s TikTok case study describes the membership goal and the well-being perception goal.

The wall showed up in the usual place: execution complexity. Full-funnel campaigns can collapse when the creative doesn’t feel native, when formats don’t work together, or when measurement can’t separate noise from true impact. Without a system, “more spend” just magnifies the uncertainty.

The epiphany was that the campaign needed three things working together: TikTok-first creative, a format mix that matched the funnel, and reliable signals for optimization. Basic-Fit didn’t try to solve this with one ad type or one video. It built a stack of tactics designed to reinforce each other.

The journey started with creative production that could actually live on TikTok. Through TikTok Creative Exchange, the team created fun, authentic brand videos alongside official campaign assets focused on membership promotions. That meant variation wasn’t a last-minute scramble; it was baked into the plan. Basic-Fit’s case study details the TikTok Creative Exchange approach and creative mix.

Then the final conflict arrived: proving performance without fooling themselves. Basic-Fit integrated both Events API and TikTok Pixel so marketing data could be shared in a reliable way for more precise targeting and optimization, but measurement still needed a credibility check. The campaign’s conversion impact was verified through a conversion lift study, which is exactly the kind of validation teams reach for when they want causality, not just clicks. Basic-Fit’s case study describes using Events API and Pixel and verifying conversion impact with a conversion lift study.

The dream outcome was a campaign that delivered both business and brand movement, without guesswork. The case study reports lifts in ad recall and brand preference, with conversions improving as well. Just as importantly, the story shows how a structured implementation can make “full funnel” feel like a controllable system instead of a buzzword. Basic-Fit’s reported results include a 15% lift in ad recall, 5.9% lift in brand preference, and an 8.2% increase in conversions.

Innodisk: Turning Full-Funnel Structure Into New Visitor Growth

At first, the results looked like effort without momentum. Campaigns ran at different funnel stages, but the journey between them didn’t feel connected. When that happens, the audience experiences your brand as fragments instead of a story.

Innodisk markets globally to a niche audience, which makes wasted impressions painfully expensive. The company needed a way to nurture the right professionals from awareness to action without losing them in the middle. LinkedIn’s own customer story frames this as a shift from stage-by-stage campaigns to a repeatable full-funnel approach. LinkedIn’s Innodisk story describes the move to a full-funnel strategy and why it became repeatable.

The wall was translating top-of-funnel attention into bottom-of-funnel leads without guessing who was actually ready. Broad targeting can build awareness, but it can also create a fog where it’s hard to see intent. Without a clean way to identify and retarget high-intent audiences, lead generation becomes a patience game you can’t reliably win.

The epiphany was to treat the funnel as a sequence with explicit handoffs. Broad awareness would stay broad, but it would feed a mid-funnel layer shaped by real demographic response data. Then conversion efforts would focus on the audiences that showed the strongest intent signals.

The journey followed a simple roadmap: top-of-funnel Sponsored Content to build thought leadership, mid-funnel content to drive website visits using Campaign Demographics insights, and a conversion layer powered by retargeting once the Insight Tag was installed. Instead of treating the Insight Tag as “just tracking,” it became the tool that made bottom-funnel targeting more precise. This is described directly in LinkedIn’s roadmap section for the campaign. LinkedIn’s Innodisk story explains the three-stage roadmap and the Insight Tag role in conversion-stage retargeting.

The final conflict was sustaining performance without reverting to siloed campaigns. Full-funnel systems can drift if the team stops learning from each stage and starts optimizing only the last step. Innodisk avoided that by using campaign insights and learnings from each experience to strengthen the next, which is how “one successful campaign” becomes an operating model. LinkedIn’s Innodisk story notes the company uses insights and learnings to bolster the next full-funnel campaign.

The dream outcome was measurable growth in the audience that actually matters: net-new visitors who hadn’t been on the site before. The customer story reports a 143% increase in website visitors and 88% new visitor traffic from the first full-funnel campaign. That kind of result is why social media marketing companies push for structure: structure is what makes improvement repeatable. LinkedIn’s Innodisk story reports a 143% increase in website visitors and 88% new visitor traffic.

Implementation Non-Negotiables

- One source of truth for conversions: a shared definition of what counts, where it fires, and how it’s validated across platforms.

- A documented signals plan: which events are sent, how deduplication works, and who owns fixes when something breaks.

- A creative throughput target: how many variations ship weekly, and what “good variation” means for your brand.

- A testing calendar: a rolling plan with hypotheses, not random experiments based on vibes.

- An incrementality checkpoint: at least one lift experiment per quarter for major initiatives to confirm causal impact. TikTok’s Conversion Lift Study framing is a useful reference for why experimentation matters.

What to Ask Your Partner in Week One

- “Show me the first two weeks of outputs.” You’re looking for a concrete sequence: tracking validation, creative kit, first wave launch, first learning review.

- “How do you prevent misleading test results?” A thoughtful answer will mention controlled changes and the risk of algorithmic delivery differences in A/B tests. The divergent delivery problem in platform A/B tests is described in a 2025 arXiv paper.

- “How do you reconcile platform reporting with reality?” The best answers will reference triangulation across multiple methods rather than promising a single perfect number. A 2025 triangulation framework argues for combining attribution, MMM, and incrementality to mitigate weaknesses in any one approach.

Part 4 will zoom in on analytics and the decision layer: what to measure, how to interpret it without self-deception, and how the best teams turn performance data into a creative advantage.

Statistics and Data

Analytics is the quiet line between social that “feels busy” and social that actually compounds. The strongest social media marketing companies treat data as a steering wheel, not a scoreboard. They use it to answer simple, expensive questions: Are we buying attention efficiently? Are we earning trust? Are we creating incremental demand, or just harvesting people who were already going to buy?

It also helps to zoom out and remember what kind of machine you’re operating inside. Meta’s 2025 results show ad impressions grew while average price per ad increased year over year, which is a polite way of saying the auction is competitive and getting more expensive for sloppy execution. Meta’s full-year 2025 results summarize impression growth and average price per ad increases.

At the market level, digital budgets are still expanding, which means you’re not only competing with your direct competitors. You’re competing with everyone who can profitably buy attention. In the U.S., digital advertising revenue hit $259B in 2024, up 15% year over year. The IAB/PwC full-year 2024 Internet Ad Revenue Report provides the $259B figure and growth rate.

So the question becomes: what should you track day-to-day so your partner can keep performance stable, improve week over week, and prove real impact?

Performance Benchmarks

Benchmarks are helpful only when you use them the right way. They’re not targets, and they’re not a verdict on your team. They’re a quick “sanity check” that tells social media marketing companies whether they should investigate creative, targeting, offer, or tracking before spending another month guessing.

The Safe Way to Use Benchmarks

- Use medians and ranges, not a single “average.” Averages hide outliers, and paid social performance is full of outliers.

- Compare within the same objective and placement mix. A CTR benchmark for traffic campaigns won’t help you judge lead gen forms.

- Benchmark at the creative theme level. When one theme is healthy and another is weak, the fix is usually creative clarity, not budget shuffling.

- Benchmarks trigger questions, not conclusions. If you’re below range, ask “why” before you change everything at once.
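The first rule, medians and ranges rather than a single average, is easy to see with a few numbers. The sketch below uses Python's standard `statistics` module and takes the interquartile range as the "range", which is one reasonable choice among several. The CTR values and helper names are hypothetical.

```python
import statistics


def benchmark_range(values):
    """Summarize a metric with its mean, median, and interquartile range."""
    q1, median, q3 = statistics.quantiles(values, n=4)
    return {
        "mean": statistics.mean(values),
        "median": median,
        "low": q1,
        "high": q3,
    }


def position_in_range(value, low, high):
    """Say where a value sits relative to the benchmark range."""
    if value < low:
        return "below"
    if value > high:
        return "above"
    return "within"


# Hypothetical CTRs (in percent) for nine ads; one viral outlier at 6.0.
ctr_percent = [0.6, 0.7, 0.8, 0.9, 1.0, 1.1, 1.2, 1.3, 6.0]

bench = benchmark_range(ctr_percent)
status = position_in_range(0.5, bench["low"], bench["high"])
print(bench, status)
```

With this sample, the single outlier pulls the mean above the upper quartile, so a "beat the average" target would label nearly every ad as underperforming. The median and range describe the typical ad far better.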

Paid Social Signal Checks

When you’re working with social media marketing companies, a simple set of “signal checks” keeps everyone honest:

- CPM trend: rising CPM often means competition, weak relevance, or an audience that’s too narrow for the spend level.

- CPC trend: rising CPC often points to creative fatigue, unclear offers, or landing page friction.

- CTR trend: falling CTR usually means the message isn’t resonating or the format is wrong for the placement.

- Conversion rate trend: falling conversion rate is frequently a landing page, tracking, or offer issue—not an ad issue.
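
The four signal checks above can be computed from raw weekly totals. The sketch below uses the standard definitions (CPM as spend per 1,000 impressions, CPC as spend per click, CTR as clicks per impression, conversion rate as conversions per click). The `WeekStats` class, the function names, and the numbers are assumptions for illustration, and the code assumes non-zero impressions and clicks.

```python
from dataclasses import dataclass


@dataclass
class WeekStats:
    spend: float
    impressions: int
    clicks: int
    conversions: int


def signals(week):
    """Compute CPM, CPC, CTR, and conversion rate from raw weekly totals."""
    return {
        "cpm": week.spend / week.impressions * 1000,
        "cpc": week.spend / week.clicks,
        "ctr": week.clicks / week.impressions,
        "cvr": week.conversions / week.clicks,
    }


def week_over_week(prev, curr):
    """Return the relative change of each signal from prev to curr."""
    before, after = signals(prev), signals(curr)
    return {name: (after[name] - before[name]) / before[name] for name in before}


# Hypothetical totals for two consecutive weeks at the same spend.
last_week = WeekStats(spend=1000.0, impressions=100_000, clicks=1_500, conversions=60)
this_week = WeekStats(spend=1000.0, impressions=90_000, clicks=1_200, conversions=48)

for name, change in week_over_week(last_week, this_week).items():
    print(f"{name}: {change:+.1%}")
```

With these invented numbers, CPM and CPC rise, CTR falls, and conversion rate is flat, which would point the investigation toward auction pressure and creative rather than the landing page.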

For Meta properties, multiple benchmark sources converge on a fairly consistent picture: Facebook CPC commonly sits in the low single-digit dollars for many verticals, while Instagram Feed CPC is often higher and Stories can be more cost-efficient. WebFX’s Meta benchmarks summarize CPM and CPC ranges across Facebook and Instagram placements. Similar directional patterns appear in other industry benchmark summaries, including Facebook/Instagram CPC figures compiled from multi-source benchmark research. WordStream’s 2025 Facebook ads benchmarks discuss year-over-year CTR and CPC movement by objective. Account-level paid-social data also shows how performance differs by platform and spend mix, with Facebook typically showing stronger CTR and lower CPC than some peers in late 2024. Emplifi’s 2025 Social Media Benchmarks Report describes Facebook’s relative strength in CTR and CPC in Q4 2024 based on thousands of ad accounts.

Organic Engagement Reality Check

Organic benchmarks are even more sensitive to industry, posting cadence, and creative style. Still, they can be useful for checking whether your content is earning attention or merely being tolerated.

A 2026 dataset summary shows TikTok engagement rates far exceeding Instagram and Facebook for many brands, while Instagram engagement stayed relatively flat and Facebook remained low on average. Socialinsider’s 2026 social media benchmarks summarize engagement rates by platform. The same findings are echoed in industry coverage that cites the same underlying dataset, which makes the broad pattern easier to share internally, though it is a restatement rather than independent confirmation. Social Media Today’s 2026 benchmarks infographic highlights the same platform engagement gaps. And for teams that need industry-specific context, benchmark guidance is often broken down by sector and channel so you can compare against similar pages instead of a global average. Hootsuite’s 2025 benchmark guidance explains how to interpret benchmarks by industry and goal.

Analytics Interpretation

The biggest analytics mistake is treating every metric as equally meaningful. Social media marketing companies that perform well are opinionated about interpretation. They separate metrics into three layers: attention, intent, and impact. The layer tells you what decision the metric can support.

Layer 1: Attention Metrics

Attention metrics tell you if you’re earning the right to keep speaking. They include reach, video views, view-through rate, watch time patterns, and engagement signals. They’re useful because weak attention makes everything else expensive.

But attention metrics can’t prove business impact by themselves. They’re an early-warning system: creative fatigue, audience mismatch, or messaging that doesn’t land.

Layer 2: Intent Metrics

Intent metrics are the bridge between “people noticed” and “people moved.” Think clicks to meaningful pages, lead form starts and completions, add-to-cart events, and high-intent site actions.

This is where interpretation gets nuanced. If CTR is strong but conversion rate is weak, the ad is probably doing its job and the landing experience, offer, or tracking deserves the first look. If CTR is weak but conversion rate is strong, you likely have a great offer but a weak hook, or you’re over-targeting warm audiences and flattering yourself with easy conversions.
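
The CTR-versus-conversion-rate reading can be written down as a small decision rule. This is a sketch only: the `diagnose` function and the floor values are hypothetical, and the floors should come from your own account history rather than from this example.

```python
def diagnose(ctr, cvr, ctr_floor, cvr_floor):
    """Suggest where to look first, given CTR and conversion rate.

    ctr_floor and cvr_floor are hypothetical "healthy" thresholds that a
    team would set from its own historical ranges.
    """
    ctr_ok = ctr >= ctr_floor
    cvr_ok = cvr >= cvr_floor
    if ctr_ok and cvr_ok:
        return "healthy: keep testing new creative before fatigue sets in"
    if ctr_ok:
        return "strong CTR, weak CVR: review landing page, offer, and tracking"
    if cvr_ok:
        return "weak CTR, strong CVR: test new hooks and check warm-audience share"
    return "both weak: revisit audience and message before touching budget"


# Hypothetical account: 1.8% CTR against a 1.0% floor, 0.9% CVR against a 2.0% floor.
print(diagnose(ctr=0.018, cvr=0.009, ctr_floor=0.010, cvr_floor=0.020))
```

The output is a prompt for investigation, not a verdict, which matches how benchmarks are meant to be used throughout this section.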

Layer 3: Impact Metrics

Impact metrics answer the only question that ultimately matters: did this create incremental results? For that, you need experiments or triangulation, because attribution alone will lie to you in predictable ways—especially in longer journeys.

TikTok positions Conversion Lift Studies as a way to measure true incremental impact by comparing groups that saw ads versus groups that didn’t. TikTok’s Conversion Lift Study overview describes incrementality and its use across objectives. TikTok’s help documentation reinforces that the purpose is to answer whether ads produced incremental growth. TikTok’s help center page explains what Conversion Lift Study is designed to measure.
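
For intuition, the arithmetic behind an exposed-versus-control comparison looks roughly like the sketch below. It is generic lift math with a standard two-proportion z-test, not TikTok's actual methodology, and the function name and group sizes are assumptions made up for illustration.

```python
from math import erfc, sqrt


def lift_summary(exposed_users, exposed_conv, control_users, control_conv):
    """Compare conversion rates of an exposed group and a control group."""
    rate_exposed = exposed_conv / exposed_users
    rate_control = control_conv / control_users
    absolute_lift = rate_exposed - rate_control

    # Two-proportion z-test using the pooled conversion rate.
    pooled = (exposed_conv + control_conv) / (exposed_users + control_users)
    std_err = sqrt(pooled * (1 - pooled) * (1 / exposed_users + 1 / control_users))
    z = absolute_lift / std_err

    return {
        "relative_lift": absolute_lift / rate_control,
        "incremental_conversions": absolute_lift * exposed_users,
        "p_value": erfc(abs(z) / sqrt(2)),  # two-sided
    }


# Hypothetical study: 100,000 users per group.
result = lift_summary(
    exposed_users=100_000, exposed_conv=2_300,
    control_users=100_000, control_conv=2_000,
)
print(f"relative lift: {result['relative_lift']:.1%}")
print(f"incremental conversions: {result['incremental_conversions']:.0f}")
print(f"p-value: {result['p_value']:.6f}")
```

The point of the control group is the subtraction: only the conversions above the control rate count as incremental, however many the platform's attribution view reports in total.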

On the broader measurement side, academic work increasingly recommends not betting your decisions on a single model. A 2025 study argues for combining attribution, marketing mix modeling, and incrementality approaches so each method covers the others’ blind spots. A 2025 Journal of Digital & Social Media Marketing paper outlines a triangulation approach using attribution, MMM, and incrementality.

Case Stories

Benchmarks and dashboards become real when you watch how teams use them under pressure. The stories below are based on published case studies and focus on the analytics choices that made results believable and repeatable.

Domino’s Spain: When the Only Way to Trust the Result Was to Prove Incrementality

The campaign pressure didn’t feel like “marketing.” It felt like a deadline with consequences: spend was going out, creative was live, and leadership needed to know whether TikTok was actually driving completed payments or just collecting credit. When every platform can report conversions, the real fear is paying for overlap you can’t see.

The backstory was a classic performance ambition: Domino’s Spain wanted to drive tangible actions—web conversions and app installs—using TikTok In-Feed Ads while optimizing toward outcomes that matter (like completed payment). That’s a harder bar than views or engagement, because it forces the funnel to work end-to-end. Domino’s Spain’s TikTok case study describes optimizing toward web conversions (complete payment) and app installs with In-Feed Ads.

The wall showed up exactly where it usually does: measurement credibility. If the platform reports success, skeptics can still say the conversions would have happened anyway. If the platform reports weakness, optimists can say “we didn’t give it enough time.” Without a proof method, the conversation never ends.

The epiphany was to stop arguing about attribution and test for incrementality instead. The team used a Conversion Lift Study design that compares a group exposed to ads with a group that wasn’t, so the measurement is anchored in a controlled comparison. That turns “we think” into “we can demonstrate.” Domino’s Spain’s case study explains using a Conversion Lift Study that compared exposed and unexposed groups.

The journey then becomes a disciplined analytics loop: optimize toward the conversion event, validate that the event is meaningful, and use the lift study to confirm whether the platform is generating incremental growth. This isn’t a glamorous process, but it’s the kind of system social media marketing companies build when they’re protecting budget trust as well as performance.

Then the final conflict appears: a lift study can prove impact, but it can also surface uncomfortable truths. If the lift is smaller than expected, you need the humility to change creative, offers, or audience strategy instead of defending the plan. If the lift is strong, you still need to scale carefully so you don’t inflate costs and lose the advantage you just validated.

The dream outcome is not just “a good result,” but a result that leadership will fund again because it’s credible. Domino’s Spain’s story is valuable because it shows the measurement move that de-risks growth: proving incrementality with an exposed vs. control design, rather than relying on one platform’s attribution view. TikTok’s help documentation reinforces the intent of Conversion Lift Study as measuring incremental growth.

Sprout Social: When Response Time Became a Measurable Brand Asset

When message volume spikes, brands don’t just lose time—they lose trust in public. A few hours of silence can turn a small issue into a visible thread, and once the thread grows, the story stops being yours. That’s the kind of pressure that makes teams realize social isn’t only marketing anymore.

The backstory is straightforward: social inboxes can become unmanageable when multiple teams handle DMs, comments, and mentions across platforms. Without a system, you can’t reliably route issues, maintain tone, or track whether customers are being helped. What starts as “we’ll respond when we can” becomes a reputation risk.

The wall was operational: high message volume plus inconsistent workflows created slow response times and unpredictable customer experiences. Even if individual responders were great, the overall system couldn’t guarantee speed. And speed is exactly what consumers increasingly expect from brands.

The epiphany was to treat social response as a workflow problem with measurable outcomes. A unified inbox, tagging, routing, and visibility into performance allowed the team to manage social customer care like a real service channel—not a pile of notifications.

The journey is documented with hard operational results: the case study describes managing 36,000 messages and reducing average response time by 55%, which is the kind of metric social media marketing companies can use to prove value beyond ads.

The final conflict is the part most teams don’t anticipate: once response improves, expectations rise. Faster replies invite more conversation, and more conversation requires stronger tone guidelines, escalation paths, and staffing discipline. If you don’t plan for that, the system you built to win trust can become the system that overwhelms you.

The dream outcome is a customer care channel that protects the brand while building loyalty. Consumer research continues to reinforce why this matters: people want fast, genuine engagement from brands on social, and delays erode trust. Emplifi’s 2025 Social Pulse survey focuses on trust and expectations for brand engagement. And social leaders are increasingly expected to prove that this kind of operational improvement affects the broader customer journey. Sprout Social’s 2025 Impact of Social Media report frames social as influencing the full journey even when measurement is challenging.

Professional Promotion

This section isn’t about “promoting your posts.” It’s about promoting your work internally and externally in a way that earns budget, trust, and patience. Social media marketing companies that keep accounts long-term are usually the ones that communicate analytics like adults: clear claims, clear caveats, and clear next steps.

The Analytics Story That Wins Support

- Start with the business question: “Did this produce incremental revenue/leads/memberships?” not “did engagement rise?”

- Show the funnel narrative: attention moved first, intent followed, impact was validated (or still being validated).

- Separate what you know from what you’re testing: this is where credibility is built.

- End with a decision request: scale, pause, or fund the next test.

Credibility Guardrails

If you want your reporting to survive scrutiny, build these guardrails into every performance summary:

- Avoid single-source “truth.” Pair platform reporting with your web analytics and, when possible, an incrementality method.

- Watch for algorithmic testing traps. Platform delivery can create misleading A/B outcomes if variants are delivered to different sub-audiences, which is one reason controlled interpretation matters. A 2025 paper explains “divergent delivery” risks in platform A/B testing.

- Benchmark responsibly. Use benchmarks as investigation triggers, not promises to executives.

A Simple Reporting Template Social Media Marketing Companies Can Use

Here’s a structure that keeps reporting short and decision-friendly:

- What changed: one sentence on performance movement (up/down/stable) and what moved it.

- What we learned: one sentence on creative/audience/offer insight.

- What we’ll do next: one sentence on the next test or scale decision.

- What we need from you: approvals, landing page changes, offer decisions, or budget confirmation.
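
If you want the template to stay consistent from week to week, it can be captured as a small data structure. The sketch below is one possible shape. The `WeeklyReport` class, its field names, and the sample text are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class WeeklyReport:
    what_changed: str
    what_we_learned: str
    next_step: str
    needs_from_client: str

    def render(self) -> str:
        """Render the four-part summary as a short bulleted list."""
        lines = [
            f"What changed: {self.what_changed}",
            f"What we learned: {self.what_we_learned}",
            f"What we'll do next: {self.next_step}",
            f"What we need from you: {self.needs_from_client}",
        ]
        return "\n".join(f"- {line}" for line in lines)


# Hypothetical example entry.
report = WeeklyReport(
    what_changed="CPC rose week over week while conversion rate held steady.",
    what_we_learned="The testimonial theme is fatiguing faster than the demo theme.",
    next_step="Launch three new demo-theme variants and pause the weakest testimonial ad.",
    needs_from_client="Approval of the new demo scripts.",
)
print(report.render())
```

Forcing each field to a single sentence is the useful constraint here: it keeps the report short enough that the decision request at the end actually gets read.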

In Part 5, we’ll zoom out to the ecosystem: how social media marketing companies fit alongside creators, in-house teams, agencies, and platforms—and how to design collaboration so execution stays fast without losing control.

Future Trends

The next wave of change will feel less like “new platforms” and more like a rewrite of how distribution works. Social media marketing companies are already adapting to feeds that behave like search engines, ads that look increasingly auto-generated, and audiences that can smell synthetic content from a mile away.

AI-driven media buying becomes the default, and creative becomes the steering wheel. Meta’s plan to push toward fully automated ad creation and targeting by the end of 2026 has been widely reported, and the implication is simple: manual knobs matter less, while inputs (creative, offers, signals) matter more. Reuters’ report on Meta’s AI automation ambitions is a useful reality check for how quickly “campaign management” is turning into “creative and data management.”

Authenticity becomes a measurable competitive advantage. As AI content spreads, brands that overdo it risk being labeled as “slop,” which can quietly erode trust even when performance looks fine short-term. The recent coverage of AI-heavy brand ads shows how fast audiences spot inconsistencies and how inconsistent disclosure still is across platforms. The Verge’s reporting on AI-generated ads and disclosure gaps captures why the best social media marketing companies will treat authenticity as a strategy, not a vibe.

Culture moves faster, but communities decide what sticks. TikTok’s own 2026 trend work leans into AI-assisted creativity shaped by human instincts, with participation and community-driven formats continuing to dominate attention. TikTok’s Next 2026 Trend Report is one of the clearest signals that “passive consumption” is losing ground to content people can join, remix, or respond to.

Social video becomes a major layer of live sports and event distribution. FIFA selecting TikTok as a preferred platform for video content at the 2026 Men’s World Cup is a loud indicator of where audience attention is moving and how creators will be pulled deeper into official ecosystems. AP’s coverage of FIFA’s TikTok partnership for the 2026 World Cup shows how platform partnerships can reshape what “real-time marketing” looks like.

Ad spend keeps rising, so weak systems get punished. When the market expands, competition expands with it. Statista projections referenced in a Digital 2026 overview point to global social media ad spend growth continuing into 2025, which increases pressure on teams to build measurement credibility and creative velocity rather than relying on easy targeting hacks. Digital 2026 overview commentary on social media ad spend growth.

The companies that win won’t be the ones with the most tools. They’ll be the ones that can ship real creative quickly, protect measurement trust, and stay close enough to communities that they don’t drift into “content that looks like ads.”

Strategic Framework Recap

By now, the pattern should feel clear: great outcomes aren’t the result of a clever campaign. They’re the result of a system that learns faster than the auction changes.

Here’s the framework you can reuse whenever you evaluate social media marketing companies, hire in-house, or rebuild your own operating model:

- Define the outcome clearly: one business goal, one primary audience, and constraints that protect the brand.

- Make signal quality non-negotiable: conversion definitions, event integrity, and consistent measurement rules across platforms.

- Build a creative system, not a content calendar: repeatable themes and formats that produce variation without breaking the brand.

- Launch in controlled waves: validate, then expand—so you can always explain what changed and why.

- Optimize with discipline: creative is the main lever, but only when tracking and offers are coherent.

- Prove impact at the right level: attribution for direction, experiments for truth when budget stakes rise.

- Scale by compounding: faster learning, better creative throughput, and clearer decision-making.

If you remember one thing, make it this: social media marketing companies don’t “manage social.” They run a learning engine that turns attention into measurable business impact, and then protects that impact with credible measurement.

FAQ – Built for the Complete Guide to Social Media Marketing Companies

How do social media marketing companies actually make money for a business?

The best partners create a repeatable system that attracts attention, builds trust, and drives actions you can measure. That can mean lead generation, ecommerce purchases, memberships, booked calls, or qualified pipeline. Their real value is not a single “winning ad,” but a process that keeps producing learnings and improvements week after week.

What should be included in a proposal from social media marketing companies?

A strong proposal is specific about outcomes, scope, roles, and measurement. Look for: defined goals, platform mix, creative production plan, tracking responsibilities, reporting cadence, and what “success” means in practical terms. If it’s mostly buzzwords and generic posting promises, it’s not a serious operating plan.

How quickly should we expect results?

Timelines depend on funnel complexity, budget, and creative readiness. In many cases, you can validate early signal health (CTR, cost trends, conversion tracking integrity) within weeks, while stable efficiency and confident scaling typically take longer because the system needs iteration. What matters most is whether the team can explain what it’s learning and what it will change next.

Do we need to run paid ads, or can organic be enough?

Organic can work when you already have distribution leverage (strong brand awareness, creators, community, or a product people naturally share). Paid becomes important when you want predictable reach, faster testing, and controllable scaling. Many high-performing teams do both: organic to build trust and relevance, paid to accelerate learning and outcomes.

How do we compare agency reporting to what we see in GA4 or our CRM?

Expect differences. Platforms measure what happens inside their ecosystems and what they can attribute to impressions or clicks, while GA4 and CRMs often represent more conservative views. The healthiest approach is triangulation: use platform data for optimization signals, business analytics for reality checks, and incrementality methods when spend stakes rise. A 2025 triangulation framework combining attribution, MMM, and incrementality.

What’s the biggest red flag when hiring social media marketing companies?

Overconfidence without a measurement plan. If a team promises specific ROAS outcomes without verifying tracking, conversion definitions, and offer quality, they’re selling certainty that doesn’t exist. Another red flag is refusing to document learnings or explain why performance changed.

How many creatives should we produce each month?

There’s no universal number, but there is a universal truth: creative fatigue is predictable at scale, so you need a steady pipeline of new angles and formats. Some brands refresh aggressively (for example, a TikTok case study describes refreshing creative every 7–14 days as part of a performance approach). Quay’s TikTok case study referencing a 7–14 day creative refresh cadence.

Should we trust A/B tests inside ad platforms?

A/B tests can be useful, but interpretation needs caution because delivery systems can route variants to different audience pockets, which can distort conclusions. That’s why many teams treat A/B tests as directional and use lift studies or controlled experiments when they need causal proof. Research discussing divergent delivery risk in platform experimentation.

What’s the difference between social media marketing companies and freelance specialists?

Companies typically provide a broader team (strategy, creative, media buying, analytics, community) and standardized processes. Freelancers can be faster and more specialized, often working best when the scope is clear and the business already knows what it needs. Many brands build hybrid models: a freelancer for paid social, another for content, and internal ownership for approvals and brand direction.

How do we protect brand safety when scaling social?

Brand safety comes from structure: approval workflows, documented claims and tone rules, escalation paths for community management, and careful creative review—especially when AI tools are used. As AI-generated content increases, brands that ignore authenticity risks can face visible backlash and subtle trust erosion. A recent example of audiences reacting to AI-heavy ad content.

Which trend will matter most in the next 12 months?

Automation plus authenticity. Ads will become easier to launch and harder to differentiate. The teams that win will be the ones who can supply genuinely human creative, protect measurement integrity, and keep their strategy close to real community behavior. Meta’s push toward deeper AI automation and TikTok’s emphasis on participatory culture are two strong signals of where the market is heading. Meta automation direction and TikTok’s 2026 trend framing.

What’s the simplest way to audit whether our current partner is doing a good job?

Ask for three things: (1) the conversion definitions and tracking ownership map, (2) the last 30 days of creative learnings summarized in plain language, and (3) the next 30 days of planned tests with hypotheses. Strong social media marketing companies can answer those without defensiveness or chaos.

Work With Professionals

There’s a moment every marketer hits where skill isn’t the problem anymore. You know how to run campaigns. You know how to write, design, or optimize. The problem is the pipeline. Great work can’t compound if you’re spending your best hours chasing the next client.

That’s where a focused marketplace can change your week. Markework is built around one simple idea: marketing work and marketing talent should match faster, with fewer gates and fewer fees. The platform positions itself as “no middleman” and “no project fees,” so deals happen directly between companies and specialists. Markework’s “Why Us” page explains the direct communication approach and no project fees model.

If you want momentum, the difference is seeing real opportunities without begging for introductions. Markework lists active jobs and projects, and at the time of writing its work page showed 1007 active listings. Markework’s active listings view showing 1007 active listings. Pricing is subscription-based and emphasizes access to “thousands of job listings,” plus direct communication, without commission-based deductions on what you earn. Markework’s pricing page describes access to thousands of job listings and no project fees, and Markework’s FAQ confirms no commissions or transaction charges.

Imagine what changes when you’re not negotiating through a platform that inserts itself into every conversation. You build a profile that shows your rates, your proof, and your specialty. You apply to work that actually matches your lane—paid social, lifecycle, SEO, content, analytics—and you message directly to close faster. Markework’s homepage highlights marketing specialties and the direct-connect workflow.

For freelancers and small teams who want to grow, the appeal is emotional as much as practical: you keep control, you keep the relationship, and you keep the upside. You’re not paying a tax on every invoice. You’re building a client roster you actually own, while your pipeline stays warm in the background.

If that’s the kind of work-life tradeoff you want—less chasing, more doing—start where the marketplace is designed for your exact skills.