Most brands don’t fail on social because they lack ideas. They fail because the ideas never become a real social media campaign—one with a clear audience, a tight promise, a production plan, and a way to prove what worked.

If you’ve ever shipped a week of posts, watched the numbers bounce around, and still felt unsure what to do next, you’re not alone. Social platforms change fast, attention is expensive, and even executives struggle to connect social activity to business outcomes.

In this guide, you’ll build a social media campaign the way a professional team would: anchored in a single goal, designed around the audience’s behavior, and engineered to learn quickly—without burning your budget or your creative team.

Article Outline

- What Is a Social Media Campaign?

- Why a Social Media Campaign Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is a Social Media Campaign?

A social media campaign is a coordinated set of messages and experiences designed to move one specific audience toward one measurable outcome—within a defined time window. It’s not “posting consistently,” and it’s not “trying a few ads.” It’s a planned arc: a promise, a reason to believe, creative that repeats that promise in different ways, and measurement that tells you whether the promise landed.

That time window can be short (a two-week launch) or long (a quarter-long demand push). What matters is that the campaign has a beginning, a middle, and an end. Your audience should feel the momentum: the first touch makes them notice, the next makes them care, and the next makes them act.

Good campaigns also respect how people actually use platforms. For example, platform reach and habits differ sharply by channel and demographic, which is why “same post everywhere” usually underperforms. If your audience mix includes the U.S., Pew’s latest platform usage breakdown is a useful reminder that people cluster by platform in predictable ways, and your plan should reflect that behavior rather than fight it: recent social platform usage data.

Finally, a campaign isn’t only marketing. On many platforms, social is also where customers expect service and reassurance. If you’re running a campaign while leaving replies unanswered, you’re spending money to create conversations you refuse to join. That’s a quiet way to sabotage performance—especially when consumer expectations for brand responsiveness keep rising in major surveys like Sprout Social’s annual impact research: social media impact findings.

Why a Social Media Campaign Matters

A campaign matters because social has become a business system, not a side channel. Customers discover products, compare alternatives, ask questions, and form opinions before they ever hit your website. When you treat social as “content output,” you miss the part where it shapes demand.

The money following attention is a blunt signal here. Global advertising revenue has been projected to reach about $1.14 trillion in 2025 in WPP Media’s forecast, which reflects how aggressively brands are investing in paid distribution, creator partnerships, and performance measurement across digital media: WPP Media global ad forecast.

At the platform level, the growth is even harder to ignore. Meta’s own investor reporting shows how central advertising demand remains to its business, and how much optimization is now driven by machine learning systems that reward structured creative testing rather than one-off “hero posts”: Meta quarterly results.

Campaign thinking also protects you from the most expensive mistake in social: confusing activity for progress. A campaign forces you to decide what success looks like before you publish, and it gives you a repeatable learning loop. Even when the first version underperforms, you come away with evidence—what message got attention, what format held it, and what offer actually converted.

And when social intersects with commerce, a campaign becomes the difference between “people liked it” and “people bought it.” Surveys and market reporting from major logistics and commerce organizations show how normal it has become for shoppers to buy directly through social experiences, not just discover there: global social commerce trends.

Framework Overview

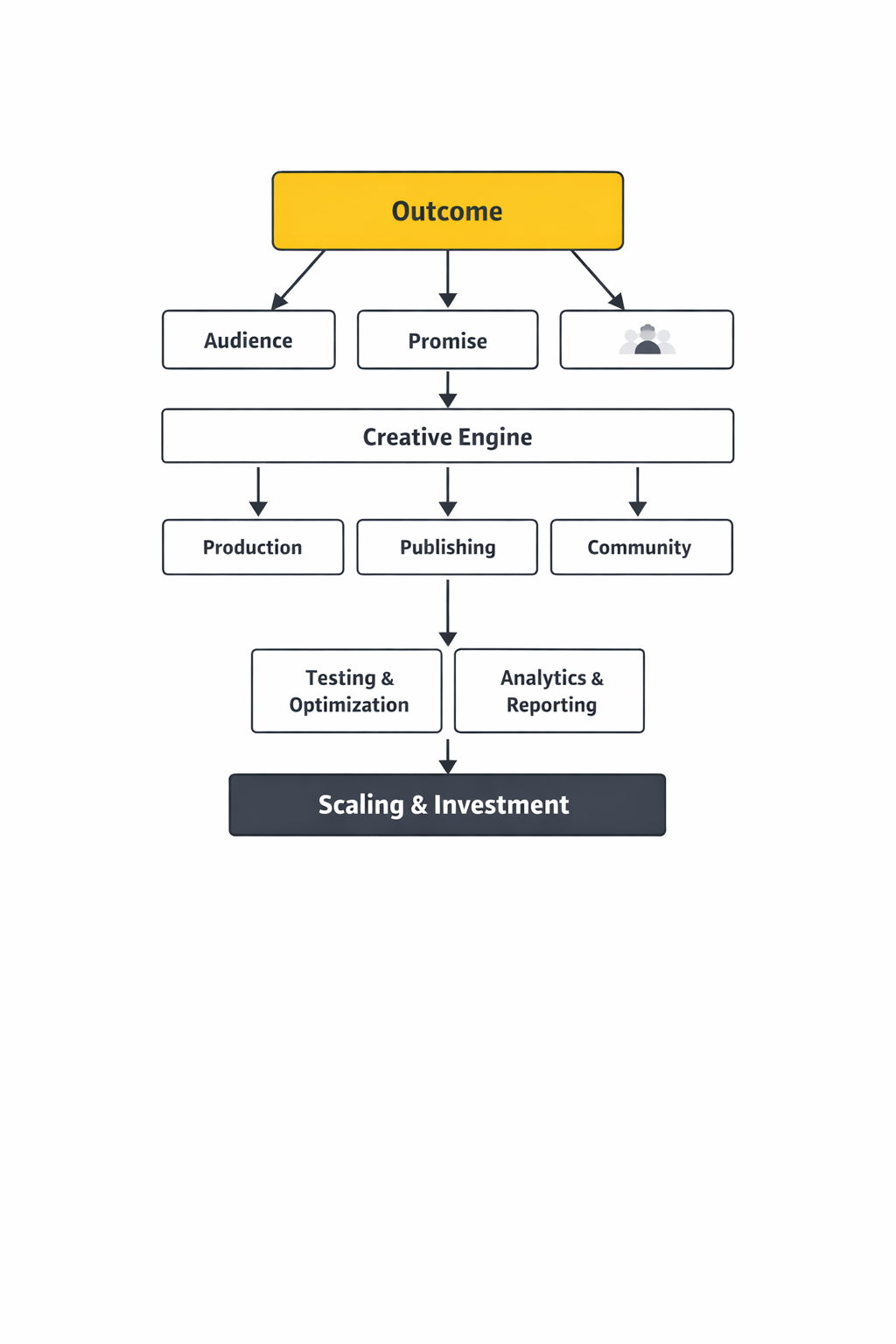

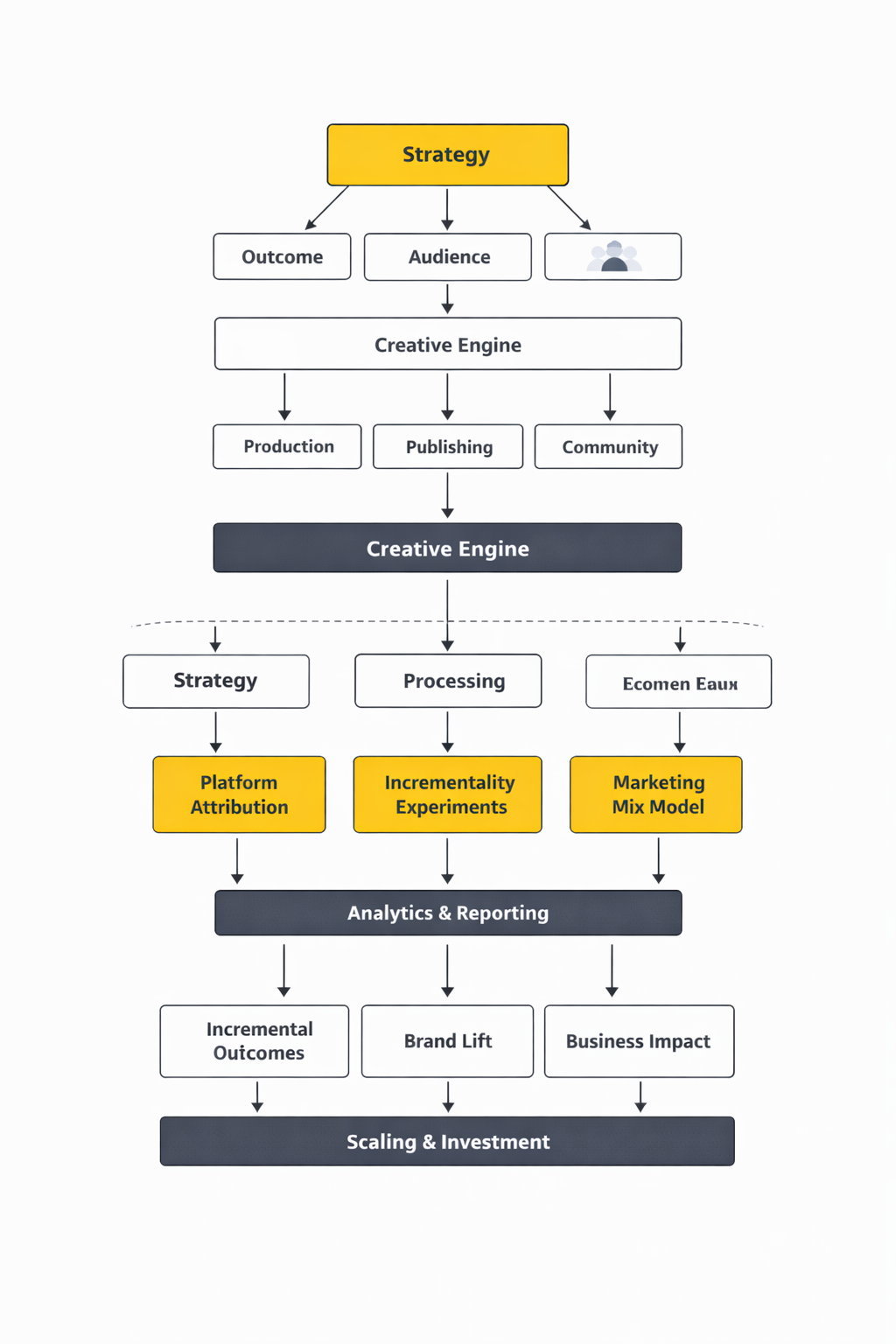

To make a social media campaign predictable, you need a framework that turns “ideas” into decisions. The one in this article is built around five moves that professional teams repeat, regardless of platform:

- Outcome: choose one measurable goal and the metric that proves it.

- Audience: define one priority segment and the moment they’re most persuadable.

- Promise: craft a single, specific value proposition that’s easy to repeat.

- Creative system: build a small set of formats you can test and scale.

- Measurement loop: instrument tracking and run experiments that tell you what caused the lift.

This approach is practical because it matches how platforms themselves encourage advertisers to operate: iterate quickly, run multiple creatives, and measure incrementality when you can. TikTok’s own guidance increasingly emphasizes experimentation and lift-based measurement to understand what ads truly drive, not just what they touched: incrementality testing overview.

In other words, the framework isn’t a “content calendar with nicer labels.” It’s a decision engine. It helps you avoid vague objectives, messy targeting, and creative that’s impossible to learn from.

Core Components

Every high-performing social media campaign is made from the same few building blocks. The difference is how intentionally you assemble them.

1) A Single Objective That Can’t Hide

Pick one primary outcome: pipeline, trials, purchases, registrations, qualified leads, or retention. If you choose three, you’ll design for none. A clean objective also helps you defend the campaign internally, especially when leadership asks whether social is “really driving growth.” Research such as Deloitte’s annual Digital Media Trends survey is useful here because it shows how consumption habits continue shifting toward social video and creator-led formats, which changes what “effective” looks like: digital media trends research.

2) One Priority Audience, Not Everyone

Campaign targeting works best when it’s based on a real moment of intent: a pain point, a purchase window, or a change in situation. You can always broaden later. Starting narrow makes your message sharper and your learning faster.

3) A Promise People Can Repeat Back to You

If someone can’t summarize your campaign in one sentence, the campaign isn’t ready. The promise should be specific enough to feel true and broad enough to support multiple pieces of creative. Think of it as a headline you can remix across formats without losing the meaning.

4) A Creative System Built for Variation

One “perfect” video is a fragile plan. A creative system gives you repeatable formats: short demo cuts, objection-handling clips, creator-style testimonials, carousels that teach, and simple offer reminders. Variation is not clutter—it’s how algorithms and audiences find what resonates.

5) Measurement That Separates Signal From Noise

Social metrics are easy to collect and easy to misread. Reach can rise while sales stall. Clicks can spike while customer quality drops. Your measurement needs at least two layers: platform signals (engagement, view-through behavior, CTR) and business signals (conversions, revenue, retention, cost per qualified action). When possible, use experimentation methods that estimate what the campaign caused, not just what it correlated with—especially for upper-funnel work.
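To make the two layers concrete, here is a minimal Python sketch that reports platform signals and business signals side by side from the same campaign totals. The field names, the example numbers, and the `two_layer_report` helper are all hypothetical; your own stack will define conversions and revenue differently.

```python
from dataclasses import dataclass


@dataclass
class CampaignTotals:
    # Hypothetical totals exported from an ad platform and an analytics tool.
    impressions: int
    clicks: int
    spend: float
    conversions: int
    revenue: float


def safe_div(numerator: float, denominator: float) -> float:
    """Return 0.0 instead of raising when the denominator is zero."""
    return numerator / denominator if denominator else 0.0


def two_layer_report(t: CampaignTotals) -> dict:
    """Group metrics into a platform layer and a business layer."""
    return {
        "platform": {
            "ctr": safe_div(t.clicks, t.impressions),
            "cost_per_click": safe_div(t.spend, t.clicks),
        },
        "business": {
            "conversion_rate": safe_div(t.conversions, t.clicks),
            "cost_per_conversion": safe_div(t.spend, t.conversions),
            "roas": safe_div(t.revenue, t.spend),
        },
    }


# Illustrative numbers only, not real campaign data.
report = two_layer_report(
    CampaignTotals(impressions=200_000, clicks=4_000, spend=2_000.0,
                   conversions=100, revenue=6_000.0)
)
print(report)
```

Keeping the layers in separate keys makes it harder to celebrate a rising click-through rate while cost per conversion quietly gets worse.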

Professional Implementation

Frameworks feel great until you have to ship. Professional execution is where most campaigns quietly break: the brief is vague, production drags, approvals multiply, tracking is incomplete, and the team “tests” by swapping random elements without a hypothesis.

To prevent that, implementation should run like an operating system: a written brief that locks the objective and audience, a production plan that creates multiple creative variations quickly, and a measurement plan that’s set up before launch day. If your campaign includes paid spend, your tracking and conversions configuration should be treated as production-critical—not something you “fix later.”

In the next parts of this guide, you’ll see how to translate the framework into a campaign brief, a creative testing plan, and a reporting cadence that makes results legible to stakeholders—so your social media campaign doesn’t just look busy, it gets better every week.

Tools Supporting the Framework

A social media campaign looks simple from the outside: publish content, reply to comments, run a few ads, report results. The reality is messier. A single week can involve half a dozen stakeholders, multiple platforms that disagree with each other’s metrics, and a tracking setup that breaks the moment browsers tighten privacy rules.

That’s why “tools” isn’t a shopping list—it’s the operating system behind your framework. The right stack makes your decisions faster, your workflows calmer, and your measurement more defensible when someone asks, “Are we sure this worked?” The wrong stack turns every campaign into a fire drill where people argue over screenshots.

When your social media campaign is run professionally, tools do three jobs at once: they keep execution moving (publishing + approvals), they keep signal clean (tracking + attribution), and they keep learning compounding (analytics + insight pipelines).

Tool Categories

Most teams buy tools in the order they feel pain. That’s normal, but it can create a “Frankenstack” where nothing talks to anything. It helps to map tools to the decisions your social media campaign needs to make each day.

Planning, approvals, and publishing

- Editorial planning: content calendars, briefs, versioning, and approval trails so posts don’t get stuck in Slack purgatory.

- Publishing and scheduling: native schedulers or social management platforms that support role-based approvals and governance for multiple brands.

- Asset readiness: workflows that tie creative files to the post they belong to (so the “final_final_v7” problem dies).

Creative production and content velocity

- Design and editing: tools that keep templates consistent across formats and platforms.

- UGC and creator pipelines: systems that manage creator briefs, usage rights, deliverables, and content handoff.

- Brand safety checks: governance layers that reduce risk when many people publish under one brand.

Community management and social care

- Unified inbox: one place to triage comments and DMs across channels, assign owners, and track status.

- Routing and case creation: turning a messy DM into a structured ticket when it needs escalation.

- Response quality: saved replies, knowledge base links, and coaching workflows that keep voice consistent.

Social listening and insight discovery

- Trend and sentiment monitoring: catching demand signals, creator momentum, and emerging issues early.

- Audience language mining: pulling real phrasing from the market so your campaign copy sounds like customers, not a board meeting.

- Competitive intelligence: understanding what’s getting traction (and why) without copying blindly.

Paid social activation and optimization

- Campaign build and QA: structured naming, creative testing discipline, and audience logic that stays readable weeks later.

- Experimentation systems: lift studies and controlled tests so “it worked” isn’t just a vibe.

- Creative performance feedback loops: getting learnings back to the creative team fast enough to matter.

Tracking, attribution, and data plumbing

- Pixels and events: clean event taxonomies that align with your framework goals.

- Server-side options: when browser tracking drops signals, server-to-server approaches become the reliability layer for a social media campaign. Meta’s Conversions API is one common path, and TikTok’s Events API is another.

- Enterprise routing: setups that forward events through trusted hubs, like Adobe Experience Platform’s TikTok Web Events API extension, when teams need governance and scale.

Analytics, reporting, and decision support

- Campaign reporting: dashboards that reduce “manual spreadsheet archaeology.”

- Stakeholder-ready narratives: reporting that ties back to the framework goals and explains tradeoffs.

- ROI models and productivity impact: when leadership asks whether the stack is worth it, studies like Forrester’s commissioned Total Economic Impact™ of Sprout Social show how teams try to quantify operational value.

Tool Comparison

You don’t pick tools in a vacuum; you pick tradeoffs. The cleanest way to compare is to decide what you want to optimize for: speed, control, measurement reliability, or depth of insight. Most mature social media campaign stacks choose “two and a half” of those four (two priorities in full, a third in part) and build processes to cover the rest.

All-in-one suite vs best-of-breed stack

- All-in-one suites: smoother workflows, fewer logins, faster onboarding, cleaner governance. The risk is shallow depth in specialized areas (advanced listening, complex attribution, enterprise data routing).

- Best-of-breed: stronger capabilities per function, especially for listening and measurement. The risk is integration drift—if ownership isn’t clear, the stack slowly stops behaving like a system.

Native platform tools vs third-party layers

- Native tools: great for first-party signal and fastest access to new features. The risk is fragmentation—every platform is its own universe with its own truth.

- Third-party layers: better for governance, cross-channel workflows, and consistent reporting. The risk is dependency—if a platform changes an API or metric definition, you need a plan B.

What to evaluate before you commit

- Governance: roles, permissions, approvals, audit trails, and brand safety controls.

- Integration realism: whether “integrates with X” means “a button exists” or “data actually flows where your team works,” like the Sprout Social + Salesforce ecosystem described in Sprout’s Salesforce collaboration update.

- Measurement durability: whether you can keep conversion signals stable when browsers, consent rules, and ad blockers shift—often where APIs like Meta Conversions API and TikTok’s Events API matter.

- Time-to-truth: how quickly you can answer basic questions (what worked, what didn’t, what to change) without manual stitching.

- Vendor transparency: whether metric definitions and documentation are stable and accessible.

Real Tool Stack Stories

The best way to understand stacks is to watch what happens when a brand hits pressure—when volume spikes, timing matters, and the campaign can’t afford slow approvals or fuzzy measurement. Here are two real-world stories where tooling wasn’t a “nice to have,” but the thing that made the work survivable.

Lemonade: making an “un-social” category feel human

The comments were sharp, the attention span was brutal, and every post carried the same unspoken dare: “Say something that doesn’t sound like an insurance company.” The Lemonade team could feel the gap between what they wanted social to be and what the category usually delivers. When content landed flat, it didn’t just hurt engagement—it reinforced the idea that insurance brands are background noise.

The backstory is simple and uncomfortable. Lemonade sells insurance, a product most people only want to think about when something goes wrong. They also built their business around a tech-forward experience, so the brand promise depends on speed, clarity, and trust. Social had to communicate all of that while still being entertaining enough to earn attention in the feed.

The wall showed up in two places at once. First, “creative bravery” without structure can turn into chaos: you try bold ideas, but you can’t reliably learn what’s working fast enough to double down. Second, community management becomes a reputational risk when message volume rises and response consistency slips. If social is where trust is built in public, slow or scattered handling becomes its own kind of campaign failure.

The shift came when they treated tooling like a lab bench, not a megaphone. In Lemonade’s case study, their team describes using Sprout Social as the hub for publishing, reporting, and community management, with tagging and sentiment features helping them turn day-to-day interactions into usable insight. That’s the moment the work stops being “posting” and starts becoming a repeatable social media campaign system. You can trace the logic in Sprout Social’s Lemonade case study.

From there, the journey is less glamorous than people expect—and that’s the point. They built a workflow where content performance could be checked across channels without hunting through multiple dashboards. They organized incoming messages so patterns weren’t lost in the noise, and they used insight to guide what kinds of creative risks were worth repeating. Over time, social becomes a feedback loop: the audience reacts, the system captures it, the strategy adjusts, and the next wave of content gets smarter.

Then the final conflict: social never stays still long enough to reward perfect planning. A campaign idea can start strong and suddenly collide with an unexpected news moment, a platform algorithm wobble, or a burst of customer questions that hijacks the inbox. Without a unified workflow, those moments become the week you “almost” shipped the campaign you designed. With a system, you can absorb the hit and keep moving.

The dream outcome isn’t just better posts. It’s a brand that can sound like itself, move quickly, and keep learning without burning out the team. Lemonade’s story matters because it shows what a mature social media campaign looks like in a hard category: creative risks informed by data, and community engagement treated like a trust-building product, not an afterthought. For a public-company view of how Lemonade describes its business and ongoing strategy, their investor materials and filings offer context, including their Q4 2025 shareholder letter.

ScottsMiracle-Gro: when social care volume turns into operational strain

Peak season hits, the DMs surge, and suddenly social isn’t a marketing channel—it’s a customer operations channel with a public front door. The ScottsMiracle-Gro team felt that pressure in the form of rising message volumes and a growing expectation that brands respond quickly. When response time stretches, customers don’t see “workflow issues”; they see a brand that isn’t listening.

ScottsMiracle-Gro’s backstory is the kind many established brands share. They have a long-standing reputation in their category, a portfolio of well-known products, and a customer base that spans generations. At the same time, they needed to connect with younger audiences while keeping service quality high during seasonal spikes. That combination forces social teams to become bilingual: part brand voice, part support desk.

The wall was operational complexity. Their case study describes agents juggling multiple systems, which creates friction, slows resolution, and makes training harder than it needs to be. When a team’s workflow requires constant context switching, the campaign side of social gets squeezed by the care side. The worst part is that the strain is invisible until it becomes a reputation problem.

The epiphany was integrating where people already work. ScottsMiracle-Gro’s story centers on using Sprout Social’s integration with Salesforce Service Cloud so social interactions could flow into a customer service environment. That’s a different mindset than “let’s buy a tool for social”—it’s “let’s connect social to the system that runs service.” The mechanics are described in Sprout Social’s ScottsMiracle-Gro case study, and the broader direction of the Sprout + Salesforce relationship is outlined in Sprout’s update on expanding collaboration with Salesforce.

The journey from there is what professional implementation looks like in practice. You reduce tool sprawl so agents don’t have to jump between tabs just to answer a simple question. You standardize routing and escalation so social care doesn’t depend on who happens to be online at the moment. And you build reporting that can show what’s happening, not weeks later, but while the season is still in motion.

The final conflict is that systems always get tested by the unexpected. A viral moment can spike message volume beyond forecasts, or a product issue can trigger a wave of similar questions that need consistent answers. If your stack can’t absorb that shock, the campaign calendar gets derailed and trust erodes publicly. If your stack can absorb it, you protect the brand while still keeping marketing moving.

The dream outcome is a social media campaign environment where service and marketing stop fighting for oxygen. Social care becomes faster, training becomes simpler, and teams can actually use social insight to improve messaging and product communication. It’s not “better software”—it’s a calmer operation that leaves room for strategy.

Professional Implementation With Tools

Tools only become leverage when you implement them like a system. The jump from “we have tools” to “we run a reliable social media campaign engine” usually comes down to five implementation habits.

1) Assign tool ownership like it’s a product

Every platform needs an owner who is accountable for outcomes: governance, naming conventions, integration health, and reporting quality. Without this, stacks decay slowly—until a critical campaign arrives and the data doesn’t match. Tool ownership is also what prevents “shadow workflows” from becoming the real workflow.

2) Standardize taxonomy across the stack

If a campaign name means one thing in the planner, another in the ad account, and a third in analytics, reporting becomes a debate. Build a naming structure once, document it, and enforce it. It’s boring work that pays back every single week.
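One way to enforce a naming structure is to generate and parse names in code instead of typing them by hand. The sketch below assumes a hypothetical five-part convention (objective, audience, platform, format, variant) joined by underscores; the fields and their order are illustrative, not a standard.

```python
import re

# Hypothetical field order for this example; document your own and keep it fixed.
FIELDS = ("objective", "audience", "platform", "format", "variant")
TOKEN = re.compile(r"^[a-z0-9]+$")  # lowercase letters and digits only


def build_name(**parts: str) -> str:
    """Build a campaign name, rejecting missing or malformed fields."""
    missing = [f for f in FIELDS if f not in parts]
    if missing:
        raise ValueError(f"missing fields: {missing}")
    tokens = []
    for field in FIELDS:
        token = str(parts[field]).strip().lower()
        if not TOKEN.match(token):
            raise ValueError(f"invalid value for {field}: {parts[field]!r}")
        tokens.append(token)
    return "_".join(tokens)


def parse_name(name: str) -> dict:
    """Split a name back into its fields, or raise if it breaks the convention."""
    tokens = name.split("_")
    if len(tokens) != len(FIELDS) or not all(TOKEN.match(t) for t in tokens):
        raise ValueError(f"name does not follow the convention: {name!r}")
    return dict(zip(FIELDS, tokens))


name = build_name(objective="trials", audience="smbowners",
                  platform="tiktok", format="video", variant="hook2")
print(name)
print(parse_name(name))
```

Because the same parser can run against names exported from the planner, the ad account, and analytics, a mismatch shows up as an error instead of a reporting debate.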

3) Design your data flow before you buy more tools

Start with one question: “Where will campaign truth live?” For some teams, it’s a BI layer; for others, it’s the social suite plus a web analytics system. Then choose integrations that protect measurement durability, including server-side options when needed via Meta Conversions API and TikTok’s Events API, or managed enterprise routes like Adobe’s TikTok Web Events API extension.

4) Build workflows that match real-life pressure

Create an approval path that can move fast during launches and still stay safe. Create escalation rules for crises and spikes, so community management doesn’t rely on heroics. Then rehearse those workflows before you need them.

5) Run a post-campaign tool debrief

After every major social media campaign, review the stack like you’d review creative. What slowed you down? What data was missing? Where did manual work creep back in? That’s how tools become compounding advantage instead of recurring subscription costs.

Step-by-Step Implementation

A social media campaign becomes “real” the moment you turn strategy into a sequence of decisions that can survive busy calendars, approvals, and platform chaos. This implementation flow is built to keep momentum high while protecting measurement quality, so you don’t end the campaign with a folder of content and a pile of questions.

Step 1: Lock the campaign brief before you build anything

Start with a one-page brief that everyone can repeat back to you. The goal is to prevent hidden assumptions from showing up mid-launch, when fixes are slow and expensive.

- One outcome: the primary action you want people to take and how you’ll recognize it in your analytics.

- One audience: a tight segment and the moment they’re most persuadable.

- One promise: a sentence that explains why this is worth their attention right now.

- One constraint: what you won’t do (for example: “no new landing pages,” “no discounting,” or “must include customer care messaging”).

Step 2: Build measurement like it’s part of production

Measurement breaks when it’s treated as a “tracking check” the day before launch. Treat it like a build phase: define events, verify they fire consistently, and make sure your team agrees on what success will look like.

If you’re relying on paid social, plan for signal loss and build redundancy. Many teams now use server-side options to stabilize conversion signals, which is why platforms like Meta document their Conversions API and TikTok documents its Events API as core building blocks rather than niche upgrades.
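As a rough illustration of treating measurement as a build phase, the sketch below checks events against an agreed taxonomy and removes duplicates when the same action is reported by both a browser pixel and a server-side sender. The event names, the `event_id` field, and the `source` field are assumptions for this example; it does not reproduce any platform's actual payload format, so check each platform's documentation for the real fields.

```python
# Hypothetical event taxonomy agreed on in the campaign brief.
ALLOWED_EVENTS = {"view_content", "add_to_cart", "purchase"}


def validate(event: dict) -> list:
    """Return a list of problems found in a single event (empty means OK)."""
    problems = []
    if event.get("event_name") not in ALLOWED_EVENTS:
        problems.append(f"unknown event_name: {event.get('event_name')!r}")
    if not event.get("event_id"):
        problems.append("missing event_id")
    return problems


def dedupe(events: list) -> list:
    """Keep the first event seen for each (event_name, event_id) pair."""
    seen = set()
    unique = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key in seen:
            continue
        seen.add(key)
        unique.append(event)
    return unique


# The same purchase arrives twice: once from the browser, once from the server.
incoming = [
    {"event_name": "purchase", "event_id": "o-1001", "source": "browser"},
    {"event_name": "purchase", "event_id": "o-1001", "source": "server"},
    {"event_name": "add_to_cart", "event_id": "c-77", "source": "server"},
]
clean = dedupe(incoming)
print(len(clean), [e["source"] for e in clean])
```

The design choice worth copying is the shared identifier: if both senders attach the same ID to the same action, redundancy adds reliability without inflating conversion counts.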

Step 3: Create a creative system, not a single “hero” asset

One polished video can be a beautiful failure if it teaches you nothing. A professional social media campaign ships with planned variation so you can learn fast and scale what works.

- Build 3–5 message angles: each angle is a different way to explain the same promise.

- Build 2–3 formats per angle: short video, carousel, static, creator-style, or product demo, depending on platform fit.

- Build 2–3 hooks per format: the first seconds and first lines do most of the work.
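The arithmetic behind that list is worth seeing: at the low end, 3 angles × 2 formats × 2 hooks is 12 variants, and at the high end, 5 × 3 × 3 is 45. A small Python sketch, with placeholder labels standing in for your real angles, formats, and hooks, can enumerate the matrix so nothing is produced by accident or forgotten.

```python
from itertools import product

# Placeholder labels; replace with your campaign's real angles, formats, hooks.
angles = ["save_time", "cut_cost", "social_proof"]
formats = ["short_video", "carousel"]
hooks = ["question", "bold_claim"]


def build_matrix(angles: list, formats: list, hooks: list) -> list:
    """Return every angle/format/hook combination as a dict."""
    return [
        {"angle": a, "format": f, "hook": h}
        for a, f, h in product(angles, formats, hooks)
    ]


variants = build_matrix(angles, formats, hooks)
print(len(variants))  # 3 * 2 * 2 = 12
print(variants[0])
```

You rarely need to produce every cell. The list is mainly a way to choose deliberately which combinations to ship first and which to hold for the next wave.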

On Meta, creative variation can be amplified through tools designed to generate and test versions, which is why Meta describes Advantage+ creative as a way to automatically optimize creative elements for different audiences and placements.

Step 4: Plan channel roles so platforms stop competing with each other

Instead of pushing the same content everywhere, assign each platform a job. This reduces creative sprawl and makes results easier to interpret.

- TikTok and Reels: fast attention, strong hooks, creator-style credibility, and cheap learning.

- Instagram feed and carousels: clarity, proof, and “save-worthy” education.

- LinkedIn: trust-building for B2B, POV content, proof points, and decision-maker reach.

- YouTube Shorts: repeatable discovery and longer shelf life for strong concepts.

If your campaign includes LinkedIn, it helps to align creative with what the platform is rewarding. LinkedIn’s own product leadership has said that video is being watched more year over year and that video creation is growing faster than other formats, which matters when you’re deciding what to produce first: LinkedIn’s video trend update.

Step 5: Launch with a controlled test window

Launch isn’t a single moment. It’s a controlled window where you protect learning. Keep budgets stable long enough to read results, avoid changing five variables at once, and document what you’re testing so your team can explain decisions later.

If you plan to run incrementality testing, schedule it early. Platforms increasingly position lift studies as the clean way to answer “did this campaign cause the result,” which is why Meta centers Conversion Lift as an experimentation methodology and TikTok frames Conversion Lift Study as a way to measure true impact on business outcomes.

Execution Layers

Execution is easier when you think in layers. A social media campaign isn’t one stream of work; it’s several layers running in parallel, each with its own cadence and its own failure modes. When you name the layers, you can staff them, schedule them, and keep them from stepping on each other.

Layer 1: Strategy and governance

This layer protects clarity. It owns the brief, brand voice decisions, risk checks, and the “no surprises” rule for leadership. When it’s weak, you see last-minute rewrites, unclear approvals, and content that tries to please everyone.

Layer 2: Creative production and iteration

This layer protects speed. It’s responsible for templated formats, creator collaboration, and turning performance learnings into the next wave of assets. When it’s weak, the team ships one batch, waits too long, and the campaign gets stale before it gets smart.

Layer 3: Distribution across organic and paid

This layer protects reach and timing. Organic establishes rhythm and narrative. Paid expands reach, scales winners, and stabilizes delivery when algorithms shift. When it’s weak, you get uneven exposure: great content that nobody sees, or heavy spend behind weak creative.

Layer 4: Community management and social care

This layer protects trust. A social media campaign creates conversations; this layer decides whether those conversations turn into credibility or frustration. Case studies like Lemonade’s emphasis on using a unified social platform for engagement and insight show how much this layer can shape brand perception when it’s treated as core work: Lemonade’s social management case study.

Layer 5: Data integrity and insight

This layer protects truth. It owns tracking health, naming conventions, and the reporting narrative that ties outcomes back to the campaign promise. When it’s weak, teams argue over mismatched dashboards and end up “optimizing” based on partial or inconsistent signals.

Optimization Process

Optimization is where most campaigns quietly waste effort. Teams change too much at once, chase short-term metrics, and mistake platform volatility for meaningful insight. A good optimization process feels slower in the moment and faster over the life of the campaign, because every change teaches you something you can reuse.

1) Turn changes into hypotheses

Every tweak should have a reason. Instead of “let’s refresh creative,” write a hypothesis like: “If we lead with the outcome in the first two seconds, more qualified people will watch long enough to understand the offer.” That single sentence makes your results interpretable.

2) Isolate one variable per test cycle

When you change creative, audience, landing page, and offer at the same time, you can’t learn. Pick the highest-leverage variable for that week, and keep the rest stable long enough to read the signal.
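A simple pre-launch check can enforce this rule. The sketch below compares a control setup with a challenger and flags the test as unreadable unless exactly one variable differs. The keys (`creative`, `audience`, `landing_page`, `offer`) are hypothetical labels for this example.

```python
def changed_variables(control: dict, challenger: dict) -> list:
    """List the keys whose values differ between the two setups."""
    keys = sorted(set(control) | set(challenger))
    return [k for k in keys if control.get(k) != challenger.get(k)]


def is_clean_test(control: dict, challenger: dict) -> bool:
    """A test is readable only when exactly one variable changed."""
    return len(changed_variables(control, challenger)) == 1


control = {
    "creative": "demo_cut_v1",
    "audience": "smb_owners",
    "landing_page": "/trial",
    "offer": "14_day_trial",
}
good_challenger = {**control, "creative": "demo_cut_v2"}
messy_challenger = {**control, "creative": "demo_cut_v2", "offer": "30_day_trial"}

print(is_clean_test(control, good_challenger))       # one variable changed
print(changed_variables(control, messy_challenger))  # two variables changed
```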

3) Build a creative feedback loop that runs weekly

Creative teams need clarity, not confusion. Your report should feed them specific learnings: which hooks held attention, which messages sparked saves or shares, which objections appeared in comments, and which formats triggered meaningful clicks.

If you’re using automated creative tools, keep human judgment in the loop. Features like Advantage+ creative can create useful versions, but your team still needs to decide which message the brand wants to be known for.

4) Validate impact with experimentation when the stakes are high

Attribution can flatter campaigns that would have won anyway. When budgets are meaningful or leadership scrutiny is high, lift studies help answer the only question that matters: did the campaign cause incremental outcomes?

Meta’s documentation on Conversion Lift testing and TikTok’s documentation on Conversion Lift Study describe the same core idea: randomly hold out a control group that is not shown the ads, compare its outcomes with those of the group that was eligible to see them, and measure the difference. That’s the logic that turns “performance reporting” into decision-grade evidence.
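The comparison itself is simple arithmetic. The sketch below is a simplified textbook calculation, not the methodology either platform uses internally: it computes relative lift, incremental conversions, and a two-proportion z-test from group sizes and conversion counts. All numbers are illustrative.

```python
from dataclasses import dataclass
from math import erfc, sqrt


@dataclass
class Group:
    users: int
    conversions: int

    @property
    def rate(self) -> float:
        return self.conversions / self.users


def lift_summary(test: Group, control: Group) -> dict:
    """Compare an exposed (test) group with a holdout (control) group."""
    diff = test.rate - control.rate
    # Relative lift is undefined when the control group has no conversions.
    relative_lift = diff / control.rate if control.rate else None
    # Conversions the test group would not have produced at the control rate.
    incremental = diff * test.users
    # Two-proportion z-test with a pooled conversion rate.
    pooled = (test.conversions + control.conversions) / (test.users + control.users)
    se = sqrt(pooled * (1 - pooled) * (1 / test.users + 1 / control.users))
    z = diff / se if se else 0.0
    p_value = erfc(abs(z) / sqrt(2))  # two-sided
    return {
        "relative_lift": relative_lift,
        "incremental_conversions": incremental,
        "z": z,
        "p_value": p_value,
    }


# Illustrative numbers only: 1.2% conversion in test versus 1.0% in control.
summary = lift_summary(Group(users=100_000, conversions=1_200),
                       Group(users=100_000, conversions=1_000))
print(summary)
```

With these example inputs, the relative lift is 20% and the incremental conversions come to about 200. That is a different and usually smaller number than the 1,200 conversions an attribution report might credit to the campaign.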

5) Use guardrails so the campaign doesn’t optimize itself into a corner

Short-term efficiency can destroy long-term growth if you let one metric dominate. Keep guardrails like brand safety checks, frequency limits, creative fatigue monitoring, and audience expansion rules. A social media campaign should get more effective over time, not narrower and more repetitive.
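Two of those guardrails are easy to automate. The sketch below flags high average frequency (impressions divided by reach) and a drop in click-through rate against a baseline as a rough proxy for creative fatigue. The thresholds of 4.0 and 30% are placeholder assumptions for this example, not recommended values.

```python
def guardrail_alerts(impressions: int, reach: int,
                     ctr_now: float, ctr_baseline: float,
                     max_frequency: float = 4.0,
                     max_ctr_drop: float = 0.30) -> list:
    """Return the names of any guardrails the campaign has crossed."""
    alerts = []
    # Average number of times each reached person saw the campaign.
    frequency = impressions / reach if reach else 0.0
    if frequency > max_frequency:
        alerts.append("frequency")
    # Relative CTR decline versus the baseline period.
    if ctr_baseline > 0 and (ctr_baseline - ctr_now) / ctr_baseline > max_ctr_drop:
        alerts.append("creative_fatigue")
    return alerts


# Illustrative inputs: frequency of 5.0 and a 40% CTR decline.
print(guardrail_alerts(impressions=500_000, reach=100_000,
                       ctr_now=0.006, ctr_baseline=0.010))
```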

Implementation Stories

Stories are where implementation becomes real, because campaigns don’t fail in theory. They fail on Tuesdays, when approvals stall, tracking breaks, and the team has to make decisions anyway. These are real examples where teams used disciplined execution and measurement to keep a social media campaign moving under pressure.

Quay: when “TikTok is great for awareness” wasn’t good enough

The campaign looked like it was working, but the team couldn’t prove it in a way that would survive budget conversations. Views came in waves, comments were enthusiastic, and the creative felt aligned with the brand. Then the uncomfortable question landed: if the paid spend stopped tomorrow, would sales actually change, or were they just riding the tide?

The backstory was a familiar tension for consumer brands. Quay was building attention where people spend time, but the internal story still depended on attribution models that don’t always capture what social truly influences. When a platform drives discovery and intent indirectly, last-click reporting can make the work look smaller than it is.

The wall was measurement credibility. If a social media campaign can’t demonstrate incremental impact, it becomes vulnerable to the next planning cycle. The team could feel the disconnect: TikTok was clearly shaping customer behavior, but the reporting wasn’t telling a story strong enough to protect investment.

The epiphany was to stop arguing with attribution and run an experiment instead. TikTok positions Conversion Lift Study as a way to measure true business impact, and Quay leaned into that methodology rather than trying to “fix” attribution from the outside. Their case study frames the work as proving incrementality across the journey, not just chasing clicks: Quay’s conversion lift case study.

The journey became more structured than most people expect. They treated the campaign like a testable system: keep the message consistent, control what changes, and let the experiment reveal what the ads caused. Instead of optimizing based on instinct, they used the lift framework to translate creative performance into a more defensible business narrative.

The final conflict is that experiments can be politically and operationally messy. You have to accept holdouts, align teams on what “success” means, and keep campaign variables stable enough to trust the read. That’s hard when everyone wants to tweak creative daily, especially if a post underperforms in the first hours.

The dream outcome was clarity that could travel. When you can explain impact through experimentation, the campaign becomes easier to scale because the team isn’t guessing what’s real. Even Quay team members highlighted publicly that they ran a lift study in partnership with TikTok as a way to connect platform engagement to business outcomes: Quay’s lift study reflection.

Turkish Airlines: when clicks weren’t the point, but demand still had to be proven

Peak season pressure doesn’t feel like a marketing problem at first. It feels like a timing problem: crowded markets, expensive attention, and a limited window to influence travelers before they lock plans. The campaign could not rely on cheap clicks, because the real win was demand—people searching, comparing, and choosing Turkish Airlines on purpose.

The backstory is that airlines compete in a category where intent is fragile. A traveler might notice an ad today, search tomorrow, and book next week after comparing multiple options. That gap makes it easy for social to be underestimated if the team only looks at immediate click paths.

The wall was proving influence beyond the platform. If the campaign’s effect shows up as search activity and delayed conversion behavior, last-click reporting can mislead decision-makers. The team needed a measurement approach that could capture what the ads caused, not just what they were adjacent to.

The epiphany was treating search behavior as an outcome worth measuring directly. Meta highlights Conversion Lift testing as a way to measure incremental impact, and its training materials describe how search lift methodology can connect ad exposure to search activity. Turkish Airlines’ Meta case study describes measuring search traffic driven by exposure to Meta ads during a defined campaign window using this approach: Turkish Airlines search lift case study.

The journey became a measurement-and-creative partnership. Rather than optimizing only for in-platform actions, the team built a social media campaign designed to influence intent, then used lift measurement to understand whether that intent was actually moving. That kind of setup changes what you create: you start writing hooks and narratives that people will remember when they later open a search bar.

The final conflict is that cross-channel measurement invites skepticism. Stakeholders will ask whether the lift is “real,” whether it would have happened anyway, and whether the methodology is trustworthy. That’s why teams often reinforce the story with multiple views, including agency perspectives on how search lift fits into broader measurement and budget decisions: a practical breakdown of Meta search lift use.

The dream outcome is a campaign you can defend and repeat. When you can show that social influences demand, you stop treating social as a content channel and start treating it as an intent engine. Turkish Airlines’ work has also been discussed in partner case-study ecosystems that focus on search behavior shifts during peak moments, reinforcing the idea that social can push people into active consideration: a partner case study on peak-season demand.

The implementation checklist that keeps campaigns sane

- Brief discipline: one outcome, one audience, one promise, written in plain language.

- Tracking readiness: events verified, naming standardized, dashboards aligned with the brief.

- Creative velocity: scheduled production cycles so the campaign can refresh without panic.

- Community coverage: response ownership and escalation routes so trust doesn’t break publicly.

- Optimization rhythm: weekly hypothesis reviews, one-variable testing, documented learnings.

- Evidence standards: when decisions are big, use experimentation frameworks like Meta Conversion Lift or TikTok Conversion Lift Study to reduce guesswork.
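The "one-variable testing" item in the checklist is easy to agree with and easy to break in practice. A minimal sketch of a pre-launch check, assuming each variant is described as a plain dictionary of settings (the field names and values here are hypothetical):

```python
def changed_fields(control, variant):
    """Return the names of settings that differ between two ad variants."""
    keys = control.keys() | variant.keys()
    return sorted(k for k in keys if control.get(k) != variant.get(k))


def is_one_variable_test(control, variant):
    """True when exactly one setting differs, so the result can be attributed to it."""
    return len(changed_fields(control, variant)) == 1


# Hypothetical variants for illustration.
control = {"hook": "problem-first", "audience": "anchor", "landing_page": "/offer"}
variant_a = {**control, "hook": "testimonial-first"}
variant_b = {**control, "hook": "testimonial-first", "audience": "lookalike"}
```

Here `variant_a` is a clean test of the hook, while `variant_b` changes the hook and the audience at once, so any difference in performance could not be pinned on either.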

If you build your campaign this way, execution stops feeling like constant improvisation. The team knows what “good” looks like, measurement stays credible, and every week produces insight you can apply to the next social media campaign without starting from zero.

Statistics and Data

A social media campaign gets easier to manage the moment you stop treating metrics like “scores” and start treating them like clues. Your dashboard isn’t there to impress anyone. It’s there to answer a handful of uncomfortable questions: did people pay attention, did they understand the promise, did they act, and did the campaign cause anything new to happen?

Two forces are shaping what “good” measurement looks like right now. First, spend keeps flowing into digital. The U.S. digital ad market hit a record level in 2024, reaching $259B in revenue, and the same figure is reinforced in both the IAB/PwC full report PDF and coverage summarizing the findings at launch, including PwC’s reported results.

Second, the “creator economy” is no longer a side tactic inside a social media campaign; it’s a budget line. U.S. creator ad spend was projected to reach $37B in 2025, a figure repeated across IAB’s 2025 report PDF and IAB’s official release.

And while the marketing team is chasing efficiency, customers are judging the campaign by something simpler: whether the brand shows up when someone asks a question. Social care expectations have stayed stubbornly high, with Sprout highlighting that 73% of social users expect a brand response within 24 hours. The same expectation appears in related Sprout Index summaries like customer service statistics and their guidance on response time expectations.

Performance Benchmarks

Benchmarks are useful, but they’re also dangerous. The fastest way to wreck a social media campaign is to chase a generic “average CTR” while ignoring whether the clicks are qualified, whether the creative is building memory, or whether the platform’s reporting is over-crediting itself.

The benchmarks that actually help are the ones that set expectations for the campaign system, not just for a single metric.

Benchmark 1: Budget behavior is shifting toward digital and social

When leadership asks “why invest here,” it helps to anchor the conversation in how the market is behaving. WPP Media’s latest outlook projected global ad revenue to reach $1.14T in 2025, a figure reiterated in their alternate regional posting and summarized by independent trade coverage like Marketing Brew’s breakdown.

Benchmark 2: Social ROI can be strong, but variance is the real story

Social can outperform, but not automatically. Nielsen’s 2024 report notes that over the past three years, the average ROI of social media spend was 36% higher than the average ROI across all media. The same figure appears in Nielsen’s own release announcing the report in their news center update, and in practical summaries that quote it when discussing channel allocation, such as industry recaps referencing Nielsen’s findings.

The practical benchmark here is not “beat the average.” It’s “build a campaign process that reduces variance”: clean tracking, repeatable creative testing, and a measurement plan strong enough to justify scaling when performance improves.

Benchmark 3: Response expectations are now part of campaign performance

If your social media campaign creates demand but the brand responds slowly (or not at all), you’re paying to produce dissatisfaction. A useful operational benchmark is to staff coverage so the team can meet the expectation Sprout highlights: respond within 24 hours. That expectation shows up in multiple Sprout Index-based summaries, including their customer service stats and their response-time guidance, which is a good signal that this isn’t a passing trend.
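To turn that expectation into a number you can track weekly, a minimal sketch like the one below can help. It assumes you can export inbound messages as pairs of received and replied timestamps; the function name and the sample inbox are hypothetical.

```python
from datetime import datetime, timedelta


def response_sla_rate(messages, sla=timedelta(hours=24)):
    """Share of inbound messages answered within the SLA.

    `messages` is a list of (received_at, replied_at) pairs. replied_at is
    None when nobody has answered yet, which counts as a miss.
    """
    if not messages:
        return 0.0
    met = sum(
        1
        for received_at, replied_at in messages
        if replied_at is not None and replied_at - received_at <= sla
    )
    return met / len(messages)


# Hypothetical inbox export for illustration.
inbox = [
    (datetime(2025, 3, 3, 9, 0), datetime(2025, 3, 3, 11, 30)),  # 2.5 hours
    (datetime(2025, 3, 3, 14, 0), datetime(2025, 3, 4, 13, 0)),  # 23 hours
    (datetime(2025, 3, 4, 8, 0), datetime(2025, 3, 5, 18, 0)),   # 34 hours, late
    (datetime(2025, 3, 5, 10, 0), None),                         # unanswered
]
rate = response_sla_rate(inbox)
```

In this sample, two of the four messages were answered inside 24 hours, so the rate is 0.5. Counting unanswered messages as misses is a deliberate choice: it keeps the metric from looking better simply because the hardest messages were ignored.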

Benchmark 4: When you need “proof,” use lift, not vibes

Attribution can flatter channels that were already close to the sale. Lift studies are a benchmark for seriousness: they show whether a campaign caused incremental outcomes. TikTok explains how Conversion Lift Study is designed to answer that incrementality question, and Meta similarly positions Conversion Lift as the methodology for understanding true impact beyond standard reporting.

Analytics Interpretation

Most reporting fails because it tries to do everything at once: prove ROI, diagnose creative, justify spend, and predict next month. A better approach is to read analytics in layers, so each metric has a job and you don’t confuse short-term movement with long-term progress.

Layer 1: Attention quality

Start with signals that show whether the campaign earned attention from the right people. Video watch behavior, completion patterns, and saves can be more informative than raw views, especially when your campaign depends on understanding rather than impulse. This is also where creative fatigue appears first: watch time drops, hook performance decays, and comments become repetitive.
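One way to make "hook performance decays" concrete is to compare a recent window of hook rates against an early baseline. The sketch below assumes hook rate means three-second views divided by impressions, and that a 25% drop is worth flagging; both the definition and the threshold are assumptions you should tune to your own data.

```python
from statistics import mean


def hook_rate(three_second_views, impressions):
    """Assumed definition: share of impressions that watched at least 3 seconds."""
    return three_second_views / impressions


def is_fatigued(daily_hook_rates, window=3, drop_threshold=0.25):
    """Flag a creative when its recent hook rate fell well below its early baseline."""
    if len(daily_hook_rates) < 2 * window:
        return False  # not enough history to compare two separate windows
    baseline = mean(daily_hook_rates[:window])
    recent = mean(daily_hook_rates[-window:])
    return (baseline - recent) / baseline >= drop_threshold


# Made-up daily hook rates for two creatives.
fresh = [0.40, 0.41, 0.39, 0.40, 0.41, 0.40]
tired = [0.40, 0.42, 0.41, 0.36, 0.30, 0.28, 0.26]
```

The `fresh` series stays near its baseline and is not flagged. The `tired` series falls from roughly 0.41 to 0.28, a drop of about a third, so it is flagged for a refresh.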

Layer 2: Meaningful intent

Intent signals are where a social media campaign starts to feel “real.” These include high-quality clicks, searches for the brand or product, add-to-cart behavior, and lead form completion. They matter because they’re closer to business outcomes, but they still don’t prove causality by themselves.

Layer 3: Business outcomes with integrity

At this layer, you care about purchases, qualified leads, pipeline, retention, or whatever your campaign outcome is. The trap is taking platform-reported conversions as “truth” without asking what would have happened anyway. This is where incrementality frameworks matter, and why teams lean on controlled testing approaches described in guidance like Google’s overview of incrementality testing, alongside platform-specific lift tools like TikTok’s Conversion Lift Study and Meta Conversion Lift.

Layer 4: Operational reality

Finally, interpret data through the lens of operations. Did response times slip as comments increased? Did approvals slow down iteration? Did tracking break on a key landing page? If you can’t run the campaign smoothly, performance will eventually reflect it—even if the creative is strong.

Case Stories

Numbers become useful when you can see the decisions behind them. Here’s a real example where analytics weren’t just “reporting,” but the mechanism that let a team scale confidently.

Bolt: the moment a full-funnel social media campaign had to prove it was real

The campaign was running, spend was flowing, and the team still faced the hardest question in performance marketing: what if the results would have happened anyway? Bolt wasn’t trying to win an argument over dashboards; they needed a measurement approach that could survive scrutiny. Without that, scaling the campaign would feel like gambling with budget.

The backstory is bigger than one market. Bolt operates a large mobility platform, and its own materials have described the company as serving over 100 million customers across dozens of countries, a scale echoed across external profiles like the World Economic Forum’s organization page and Bolt’s own public communications such as company updates referencing scale. Growth at that level doesn’t come from one viral post; it comes from repeatable systems that can be rolled out with confidence.

The wall showed up in measurement. A full-funnel social media campaign can lift perception and still drive conversions, but traditional attribution often fractures that story into competing narratives. Bolt had already tested incrementality, and they wanted deeper proof of how brand and performance together influenced behavior, not just what last-click reporting happened to credit.

The epiphany was to treat measurement as an experiment, not an afterthought. Bolt ran a Unified Lift Study in Romania to measure the combined impact of brand and performance activity, aligning questions and windows with the real conversion cycle. TikTok’s success story describes how they adjusted the intent question to match the user journey and attribution window, rather than forcing a metric that looked neat on a slide.

The journey became disciplined and specific. The campaign emphasized safety messaging and used a unified audience exposed to both brand and performance campaigns so the test reflected real-world exposure. The measurement didn’t just validate the campaign direction; it helped identify which messaging elements were actually moving intent.

Then the final conflict: lift studies are unforgiving. If the campaign structure is sloppy, there is no flattering attribution to hide behind. Bolt still had to execute cleanly enough for the experiment to mean something, and to keep creative aligned while the test ran.

The dream outcome was evidence that could travel. TikTok reports the study delivered a 15.65% relative lift in purchases, alongside a 9.2% uplift in ad recall and a 3.7% uplift in intent, turning the campaign from “it seems to be working” into something a team can scale without guessing.

Professional Promotion

Promotion is where many social media campaigns accidentally become noisy. The fix isn’t “spend more.” The fix is promoting with intention: pick the moments where paid support multiplies what’s already working, and use measurement that can tell you whether you created lift or just collected credit.

Promote across the journey, not just the final click

If you only promote bottom-funnel ads, you’ll often hit the same small pool of ready buyers until the campaign exhausts itself. Full-funnel promotion keeps the top of the funnel fresh while performance units harvest demand. Bolt’s Unified Lift example shows why this matters: it measured brand and conversion impact together, rather than forcing the team to choose one story.

Use creators as a distribution strategy, not a novelty

Creators are no longer a “nice extra” you add after creative is done. Budgets have moved there for a reason, with IAB projecting U.S. creator ad spend at $37B in 2025, backed by the full report and IAB’s release. In practice, that means designing briefs so creators can deliver multiple hooks and angles you can test, then promoting the winners like you would any other high-performing asset.

When budget decisions are big, require incrementality evidence

Professional promotion has a standard: the ability to answer “what did this change?” TikTok’s Conversion Lift Study and Meta’s Conversion Lift exist for exactly this reason. They’re not just measurement features; they’re tools for making the next budget decision with fewer assumptions.

Protect the campaign experience as you scale

Promotion increases volume, which increases pressure. If your campaign drives more comments and DMs, treat responsiveness like part of performance, not a separate department’s problem. The expectation that brands respond within a day shows up repeatedly in Sprout’s Index-based guidance, including their 24-hour response stat, and it’s an easy place for a high-spend campaign to break trust if staffing doesn’t keep up.

Advanced Strategies

Once your social media campaign is consistently shipping and learning, the game shifts. You’re no longer trying to “make it work.” You’re trying to make it scale without turning fragile—without the team burning out, without measurement drifting into fantasy, and without creative turning into a repetitive loop that audiences learn to ignore.

The strategies below are the ones that show up in mature teams because they solve the real scaling problems: proving incrementality, protecting signal quality, and increasing creative output without losing the plot.

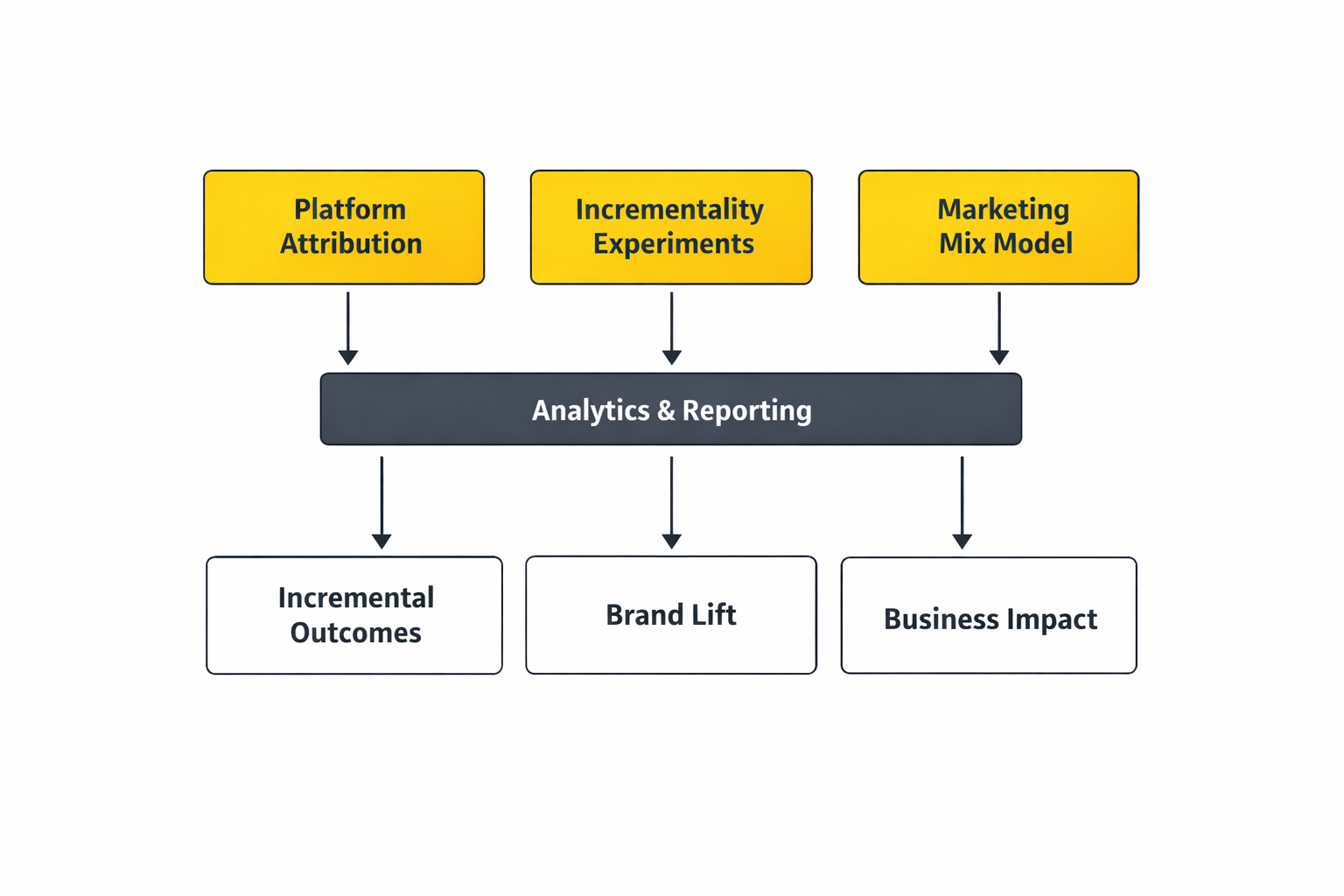

Triangulate measurement instead of trusting one dashboard

Scaling breaks when one platform’s reporting becomes the single source of truth. A professional approach uses three lenses at once: platform attribution, incrementality experiments, and Marketing Mix Models (MMMs). Google’s own guidance frames this as a more durable measurement toolkit, combining incrementality experiments, MMMs, and attribution together so you can make decisions with fewer blind spots.

When leadership asks for confidence, it’s easier to answer with evidence from multiple methods than to defend one number that could be inflated by view-through credit, cross-device gaps, or shifting privacy rules.
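A simple way to connect two of those lenses is to compare what the platform credited with what an experiment measured over the same window. The sketch below is one common calibration approach, not a method described in Google's guidance; the function names and numbers are hypothetical.

```python
def incrementality_factor(experiment_incremental, platform_attributed):
    """Share of platform-attributed conversions that the experiment found to be incremental."""
    return experiment_incremental / platform_attributed


def calibrated_cpa(spend, platform_attributed, factor):
    """Cost per incremental conversion, after discounting attributed conversions."""
    return spend / (platform_attributed * factor)


# Made-up numbers: the platform credits 500 conversions, the lift test finds 300.
factor = incrementality_factor(experiment_incremental=300, platform_attributed=500)
reported_cpa = 10_000 / 500
adjusted_cpa = calibrated_cpa(10_000, 500, factor)
```

In this example the dashboard shows a cost per conversion of 20, while the cost per incremental conversion is closer to 33. Neither number is wrong. They answer different questions, and the gap between them is what a single-dashboard view hides.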

Make incrementality the default for big decisions

A social media campaign can look “efficient” while mostly harvesting demand created elsewhere. That’s why teams lean on lift studies when the budget is meaningful or the category is competitive. TikTok positions its Conversion Lift Study as a way to measure what ads truly drove, and Meta frames Conversion Lift in the same spirit: prove what changed, not what got credit.

Incrementality is also the cleanest way to protect your campaign from internal politics. When someone wants to cut social spend, the conversation becomes about controlled evidence, not opinions.

Use MMM to manage scale, not to “audit” the past

MMMs are most useful when they inform what to do next—how much to invest, where diminishing returns start, and how channels interact over time. Think with Google’s Marketing Mix Modeling Guidebook is helpful here because it treats MMM as an integrated decision system rather than a retrospective scorecard.

When your social media campaign includes creators, paid social, and search effects, MMM is often where those interactions become visible enough to plan around.

Scale creative diversity, not just spend

At small spend levels, one great asset can carry a campaign. At scale, creative fatigue becomes the bottleneck. Teams that scale well treat creative output like a product pipeline: multiple angles, multiple formats, and clear rules for what counts as “meaningfully different.” Meta’s documentation on creative testing reflects this mindset: structured testing helps you keep delivery learnings while bringing in new creative.

The practical move is simple: when you find a winning angle, don’t just make “more versions.” Build a family of distinct executions that still deliver the same promise, so the platform can expand reach without showing the same idea to the same people until they’re tired of you.

Engineer demand that shows up outside the platform

As you scale, you want your social media campaign to create demand that travels—brand searches, direct traffic, and word-of-mouth that keeps working after the ad stops running. That’s one reason search-lift style measurement and cross-channel thinking keep showing up in modern playbooks, including Google’s broader emphasis on measurement and durable growth planning.

This also changes what you produce: you start building memorable hooks and repeatable phrases people will recall when they’re not scrolling anymore.

Scaling Framework

Scaling a social media campaign isn’t “turn the budget up.” It’s widening distribution while keeping the system stable. The easiest way to do that is to scale in layers, so you always know what you changed and why performance moved.

Layer 1: Scale what already works before you expand anything else

Start by scaling the winners inside the same campaign logic: same promise, same audience definition, same landing path. The goal is to increase volume while keeping variables stable enough to read the signal.

- Increase budget gradually: let delivery stabilize so you don’t confuse learning resets with real performance drops.

- Expand placements thoughtfully: widen reach without forcing the same creative into formats it wasn’t built for.

- Scale creative supply: if your output can’t keep up, performance will decay even with perfect targeting.
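"Increase budget gradually" is easier to follow when the steps are written down in advance. A minimal sketch of a ramp schedule, assuming a maximum increase of 20% per step; that percentage is an illustrative assumption, not a platform rule, and a "step" can be whatever stabilization period your team uses:

```python
def budget_ramp(current, target, max_step=0.20):
    """List of budgets from `current` to `target`, rising at most `max_step` per step."""
    if current <= 0 or max_step <= 0:
        raise ValueError("current budget and max_step must be positive")
    schedule = [current]
    while schedule[-1] < target:
        nxt = round(schedule[-1] * (1 + max_step), 2)
        if nxt <= schedule[-1] or nxt > target:
            # Finish the ramp at the target. The first condition also avoids
            # stalling when rounding leaves a very small budget unchanged.
            nxt = target
        schedule.append(nxt)
    return schedule


# Hypothetical example: doubling a daily budget of 100.
schedule = budget_ramp(100, 200)
```

Doubling the budget this way takes four increases (100, 120, 144, 172.8, 200) instead of one jump, which gives you four points at which to check whether performance held before committing more spend.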

Layer 2: Expand audiences with guardrails

Once the campaign is stable at higher spend, expand audiences in a controlled way. This is where a lot of teams accidentally erase what they learned by changing everything at once.

- Broaden in steps: adjacent segments first, then lookalikes and broader interest pools.

- Keep one anchor audience: maintain a stable segment so you can tell whether creative improvements are real.

- Watch frequency and fatigue: scaling should increase reach faster than it increases repetition.
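The last guardrail can be checked with two numbers most platforms report: impressions and reach. The sketch below assumes average frequency is impressions divided by reach, and treats scaling as healthy when reach grew proportionally more than frequency. The dictionary keys and figures are hypothetical.

```python
def frequency(impressions, reach):
    """Average number of times each reached person saw the campaign."""
    return impressions / reach


def scaling_is_healthy(before, after):
    """True when reach grew proportionally more than average frequency."""
    reach_growth = after["reach"] / before["reach"] - 1
    frequency_growth = (
        frequency(after["impressions"], after["reach"])
        / frequency(before["impressions"], before["reach"])
        - 1
    )
    return reach_growth > frequency_growth


# Made-up snapshots before and after a budget increase.
before = {"impressions": 200_000, "reach": 100_000}          # frequency 2.0
wider = {"impressions": 390_000, "reach": 150_000}           # frequency 2.6
more_repetitive = {"impressions": 450_000, "reach": 125_000}  # frequency 3.6
```

In the `wider` case, reach grew 50% while frequency grew 30%, so the extra spend mostly found new people. In the `more_repetitive` case, reach grew 25% while frequency grew 80%, which suggests the budget is being spent on showing the same people the same ads.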

Layer 3: Add channels only when you have a repeatable creative machine

Cross-platform expansion looks exciting but often underdelivers when creative operations aren’t ready. The campaign needs a reliable way to produce platform-native assets, not just resize the same post. LinkedIn’s own marketing guidance stresses platform fit and a steady content pipeline for brands that run across multiple networks, and its curated playbooks keep returning to the same theme: consistency is a production system, not a motivational quote.

Layer 4: Require measurement rigor to “unlock” bigger spend

Create a rule that protects your budget decisions. For example: when a campaign crosses a certain spend threshold, it must run an incrementality test. That pushes the team toward evidence instead of habit, using tools like TikTok’s Conversion Lift Study or Meta Conversion Lift.
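A rule like this works best when it is explicit enough to be written as a check. A minimal sketch, assuming a single spend threshold and a yes/no flag for whether a recent lift study supports the increase (the function name and amounts are hypothetical):

```python
def approved_budget(requested, threshold, has_recent_lift_study):
    """Cap spend at the threshold until an incrementality test backs the increase."""
    if requested <= threshold or has_recent_lift_study:
        return requested
    return threshold


# Made-up amounts for illustration.
without_evidence = approved_budget(80_000, 50_000, has_recent_lift_study=False)
with_evidence = approved_budget(80_000, 50_000, has_recent_lift_study=True)
```

Without a study the request is held at 50,000, and with one the full 80,000 is released. What counts as "recent" and where the threshold sits are decisions for your team, not something the code can settle.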

Growth Optimization

Optimization at scale is less about “tweaks” and more about preventing three slow leaks: wasted spend from non-incremental credit, creative fatigue, and operational drag that delays iteration. The best growth optimization feels like maintenance—quiet, consistent, and relentless.

Build an experimentation roadmap, not a random testing habit

When your social media campaign is small, you can test impulsively. At scale, random testing becomes expensive. Pick a 90-day roadmap with clear themes: hook strategy, offer framing, creator formats, landing page friction, and audience expansion. Use a consistent testing method so learnings compound instead of getting lost.

Guidance built around multiple measurement methods helps here, especially the approach of combining incrementality, MMM, and attribution described in Google’s measurement overview.

Turn creative into an operating system

Scaling usually fails because creative production can’t keep up with what the platform needs. A campaign that scales well has a predictable rhythm:

- Weekly: ship new hooks and iterations on winning angles.

- Biweekly: introduce new angles based on audience language and objections.

- Monthly: refresh your “proof assets” (testimonials, demos, comparisons) so the campaign doesn’t rely on the same claims forever.

Meta’s creative testing guidance supports this kind of structured approach because it’s built around introducing new creative without throwing away what the delivery system has learned.

Protect conversion signals as you grow

As spend rises, tracking problems become more expensive. Build redundancy into measurement so the campaign doesn’t “go blind” when privacy settings shift or a tag breaks. Server-side options like Meta Conversions API and TikTok’s Events API exist because teams needed more durable event capture than browser-only tracking can provide.
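Redundancy only helps if the same conversion is not counted twice when both paths report it. The sketch below shows the general idea of merging a browser stream and a server stream on a shared event ID. It is a generic illustration with hypothetical field names, not the payload format or deduplication logic of Meta Conversions API or TikTok's Events API.

```python
def merge_events(browser_events, server_events):
    """Union of two event streams, counting each (name, event_id) pair once."""
    merged = {}
    for source, events in (("browser", browser_events), ("server", server_events)):
        for event in events:
            key = (event["name"], event["event_id"])
            # Keep the first copy seen and record every source that reported it.
            record = merged.setdefault(key, {**event, "sources": set()})
            record["sources"].add(source)
    return merged


# Hypothetical events: the browser tag missed order a3, the server missed a1.
browser = [
    {"name": "purchase", "event_id": "a1"},
    {"name": "purchase", "event_id": "a2"},
]
server = [
    {"name": "purchase", "event_id": "a2"},
    {"name": "purchase", "event_id": "a3"},
]
merged = merge_events(browser, server)
```

The merged view contains three purchases rather than four, and the `sources` field shows which path caught each one. Events seen only by the server are a rough indicator of how much the browser tag is missing.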

Look for demand effects, not just direct response

At scale, your social media campaign often creates demand that shows up outside the platform: brand searches, direct traffic, and higher conversion rates in other channels. This is where MMM and incrementality become especially valuable, and why modern strategy discussions keep circling back to measurement that can survive real-world complexity, like the emphasis on measurement readiness in Google’s 2025 marketing strategy guidance.

Scaling Stories

Scaling stories are rarely about a clever trick. They’re about what a team did when the campaign started working and the stakes got higher. These are real examples where the “how” mattered as much as the “what.”

Tesco: scaling a campaign when the pressure was cultural, not just commercial

The pressure wasn’t subtle. The campaign window was fixed, the audience expectations were intense, and every creative decision carried the risk of feeling performative. The team could feel it: if they got it wrong, the campaign wouldn’t just underperform—it would damage trust.

The backstory is that scaling isn’t always about budget. Sometimes it’s about scaling relevance, and doing it publicly, under scrutiny, while trying to connect with communities that are tired of shallow messaging. Tesco’s marketing team has spoken about leaning into “show up and learn” rather than waiting for perfect certainty, which captures what scaling often feels like in the real world: imperfect action with strong intent.

The wall arrived when the team had to balance speed with care. A fast social media campaign can miss nuance; a slow one can miss the moment entirely. They needed a process that could move quickly while still protecting meaning and credibility.

The epiphany was treating measurement as reassurance, not just reporting. Instead of relying on vibes, they used brand impact signals to understand whether the work was landing with the audience they hoped to reach. Google’s write-up on the campaign highlights the importance of measurement and brand lift in modern planning, and includes Tesco’s own perspective on the mindset behind the approach: Google’s 2025 marketing strategy piece featuring Tesco.

The journey became a disciplined loop: plan, launch, listen, and adjust without losing the campaign’s core promise. They treated feedback as part of the work rather than a distraction from the work. That’s the kind of scaling that keeps a social media campaign human while it grows.

The final conflict is that cultural moments don’t wait for marketing operations. Teams still have to ship creative, moderate conversation, and maintain consistency while the internet does what it always does: interpret everything in public. Without a strong system, scaling collapses into reactive messaging and fragmented decision-making.

The dream outcome is a campaign that scales trust, not just reach. When the work is measured thoughtfully and adjusted responsibly, it earns something more durable than short-term clicks: credibility that makes the next campaign easier, because the audience believes you’re showing up for real.

Duolingo: scaling a social media campaign without letting virality become the job description

The moment you “become the brand that goes viral,” the pressure changes overnight. Every post is judged against the last hit, and the internet starts demanding a constant stream of escalation. That’s when a social media campaign can quietly turn into a burnout machine.

The backstory is that Duolingo didn’t just stumble into a tone of voice; it built a recognizable character and a format that could travel across trends. Over time, the brand’s TikTok presence became large enough that it shaped public perception of the company far beyond language learning features. That kind of reach is an asset, but it also creates a new kind of risk: the audience expects entertainment on demand.

The wall was sustainability. When a team is pushed to chase virality, the work becomes reactive, and the emotional cost climbs fast. That tension is captured in reporting on the team’s experience and the mental-health reality behind high-visibility social roles, including the description of a “writer’s room” model and the pressure of constant performance in The Wall Street Journal’s interview with Duolingo’s departing social media manager.

The epiphany was treating content as a system, not a stunt. A writer’s room approach creates repeatability: you can generate ideas, workshop them, and keep quality high without relying on one person’s nervous system to power the entire engine. That’s a scaling move that protects the brand and the team at the same time.

The journey becomes operational: set boundaries, define what the brand will and won’t do, and build workflows that make consistency possible. When a social media campaign is run this way, you can still catch cultural moments, but you’re not held hostage by them. The system keeps producing even when one trend fades.

The final conflict is that audiences don’t care about your internal process. They care about the output. If the team slows down to protect itself, the feed can punish you; if the team speeds up to chase attention, the work can become sloppy and risky. Scaling demands a balance that’s hard to find and easy to lose.

The dream outcome is a brand voice that stays sharp without consuming the people who maintain it. When your campaign is system-driven, you can be creative, fast, and culturally relevant—without turning “going viral” into the only measure of success.

Set budget guardrails that prevent self-deception

Create rules that keep spend tied to evidence. For example: if the campaign is scaling beyond a threshold, run a lift study to validate incrementality using tools like TikTok’s Conversion Lift Study or Meta Conversion Lift. When the stakes are high, this turns promotion into a controlled decision instead of a hopeful bet.

Use creators as a distribution layer you can scale responsibly

Creator work scales best when it’s treated like performance creative: clear briefs, multiple hooks, and structured iteration. This is where market direction matters. U.S. creator ad spend was projected to reach $37B in 2025, supported by the full IAB report and IAB’s official release. That shift is one reason professional promotion increasingly includes creator pipelines as a core scaling tactic, not a side experiment.

Promote with tracking durability in mind

Scaling spend without stable signal is how teams end up optimizing to noise. Protect the integrity of the campaign by ensuring conversion events are captured reliably through approaches like Meta Conversions API and TikTok’s Events API, then connect those signals to your broader measurement system so you can compare what platforms report against what your business actually experiences.

Scale the operational layer, not just the ads

Promotion increases message volume and public scrutiny. If your social media campaign creates more comments, more DMs, and more customer questions, plan staffing to match. Otherwise the campaign grows demand while the brand experience degrades in public—an avoidable way to lose trust right when you’re paying the most for attention.

Future Trends

The next wave of social media campaign performance won’t come from louder creative or bigger budgets. It’ll come from teams that can earn trust faster, ship content faster, and measure impact with less guesswork—while platforms and audiences keep changing the rules mid-game.

Here are the shifts worth planning for now, so your next campaign doesn’t feel like it’s fighting the internet.

Social search becomes the new front door

People are already using TikTok, Instagram, and YouTube as discovery engines, especially for “what should I buy,” “what should I do,” and “what does this mean” queries. That changes what a social media campaign needs to produce: content that answers questions, shows proof, and earns saves, not just content that looks good in a deck. You can see how this is changing marketing playbooks in discussions like Search Engine Land’s social search visibility analysis and broader platform guidance on optimizing for social media search behavior.

AI content forces a trust premium

As AI-generated media floods feeds, audiences will increasingly reward brands that feel real: clear disclosure, credible proof, and consistency between what you show and what you deliver. Meta has already described efforts to label AI-generated images across Facebook, Instagram, and Threads. Ongoing reporting, such as coverage of C2PA labeling gaps and “AI slop” pressure, shows how messy provenance can be in practice and why trust will become a competitive advantage, not just a compliance issue.

Platform growth shifts the opportunity map

Most teams treat channel strategy like a habit. The smarter move is to treat it like a portfolio. In 2026 reporting, TikTok, LinkedIn, and Instagram were highlighted as the fastest-growing major platforms in 2025, which matters if your social media campaign depends on reaching new people rather than recycling the same audience: Hootsuite’s 2026 social media statistics roundup.

At the macro level, the ecosystem keeps expanding and fragmenting, which is why reports like Digital 2026 from We Are Social and Kepios remain useful for sanity-checking audience reach, device behavior, and where attention is actually moving.

Social commerce becomes normal, not experimental

If your social media campaign sells anything—even indirectly—you’ll be planning around in-platform shopping behaviors, creator-led recommendations, and purchase journeys that don’t start on your website. DHL’s global research frames this shift clearly, noting that 7 in 10 global shoppers buy on social media, and many expect social to become a primary shopping channel over time.

Measurement swings back toward incrementality and MMM

The era of “ROAS solves everything” is fading because privacy changes and cross-device journeys make simple attribution less trustworthy. Marketers are rebuilding measurement stacks around incrementality experiments and Marketing Mix Models, which is why Google has emphasized the resurgence of MMM and measurement effectiveness in 2025. The teams that win will be the ones who can explain what changed in the business, not just what happened in the platform dashboard.

Strategic Framework Recap

A social media campaign works when it’s treated like a system: one outcome, one audience, one promise, a creative machine built for variation, and measurement that can prove impact without relying on fragile assumptions.

If you want a practical way to remember the full approach, keep it in four layers that fit together like an ecosystem:

- Strategy: the brief that locks the outcome, audience, and promise.

- Execution: production + publishing + community, run with consistency.

- Optimization: hypothesis-led testing, creative iteration, and budget decisions tied to evidence.

- Measurement: platform reporting plus incrementality and MMM so you can defend decisions at scale.

This is what makes a campaign feel professional: the learning keeps compounding, even when platforms change, even when audience behavior shifts, and even when the team is under pressure.

FAQ – Built for the Complete Guide

What makes something a real social media campaign instead of “just posting”?

A real social media campaign has a defined time window, one primary outcome, and a coordinated set of messages designed to move a specific audience. “Just posting” can be valuable, but it usually lacks a measurable objective and a learning loop, so it’s harder to improve week to week.

How long should a social media campaign run?

Long enough to learn, short enough to stay sharp. Many campaigns run 2–6 weeks for launches or offers, while always-on programs run in monthly cycles with clear “waves” of new creative. The right answer depends on your buying cycle and how quickly you can ship new variations.

What metrics matter most in a social media campaign?

The best metrics are the ones tied to your objective. Attention signals (watch time, saves) tell you if the message landed. Intent signals (high-quality clicks, searches, sign-ups) tell you if people cared enough to act. Outcome signals (purchases, qualified leads, pipeline) tell you if the campaign moved the business.

Should I use industry benchmarks to judge performance?

Benchmarks are useful for setting expectations, but they’re a weak reason to change strategy. A campaign can beat generic benchmarks and still fail to drive qualified outcomes. Use benchmarks for context, then make decisions based on your own baselines and what changes after you run tests.

How do I optimize a social media campaign for social search?

Start by publishing content that answers real questions in the language your audience uses. Use clear on-screen text, straightforward captions, and consistent naming for products, categories, and use cases. Social search behavior is becoming more important, which is why guides like Sprout’s social media search overview are worth baking into your planning.

How do I know if creative fatigue is hurting the campaign?

Fatigue usually shows up as declining watch time, weaker hook performance, rising frequency, and comments that feel repetitive or more negative. The fix isn’t “new content” in general—it’s new angles, new hooks, and new proof, while keeping the core promise consistent enough to build memory.
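Two of these signals, declining watch time and rising frequency, are easy to check with a few lines of code. This is a rough sketch only: the function names and the thresholds (a 15% watch-time drop, a 25% frequency rise) are assumptions to tune against your own baselines, and it compares just the first and last values of each series.

```python
def pct_change(series):
    """Relative change from the first to the last value in a series."""
    first, last = series[0], series[-1]
    if first == 0:
        raise ValueError("first value must be non-zero")
    return (last - first) / first


def fatigue_signals(avg_watch_seconds, frequency, watch_drop=-0.15, freq_rise=0.25):
    """Return the names of fatigue signals present in two weekly series.

    avg_watch_seconds and frequency are lists ordered oldest to newest.
    Threshold defaults are illustrative assumptions, not benchmarks.
    """
    signals = []
    if pct_change(avg_watch_seconds) <= watch_drop:
        signals.append("watch_time_declining")
    if pct_change(frequency) >= freq_rise:
        signals.append("frequency_rising")
    return signals


# Made-up example: watch time falls 20% while frequency rises 40%.
signals = fatigue_signals([10.0, 9.0, 8.0], [1.5, 1.8, 2.1])
```

When both signals fire together, that is a reasonable cue to rotate in new angles and hooks. Comment sentiment still needs a human read.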

When should I use incrementality testing?

Use incrementality testing when decisions are expensive: scaling budgets, expanding audiences, or defending investment to stakeholders. Lift studies exist to answer whether ads caused incremental outcomes, not just whether they were present near conversions, which is the core promise of tools like Meta Conversion Lift and TikTok’s Conversion Lift Study.
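The arithmetic behind a lift result is straightforward: compare the conversion rate of a group that could see the ads with a held-out control group that could not. The sketch below shows that generic calculation with made-up numbers. It is not how Meta or TikTok compute their reports, the function and key names are hypothetical, and it omits the significance testing a real study would include.

```python
def conversion_lift(test_users, test_conversions, control_users, control_conversions):
    """Compute basic lift figures from a test group and a held-out control group."""
    if test_users <= 0 or control_users <= 0 or control_conversions <= 0:
        raise ValueError("group sizes and control conversions must be positive")
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        # Conversions in the test group beyond what the control rate predicts.
        "incremental_conversions": (test_rate - control_rate) * test_users,
        # Lift relative to the control group's baseline rate.
        "relative_lift": (test_rate - control_rate) / control_rate,
    }


# Made-up example: 1.2% conversion in test against 1.0% in control.
result = conversion_lift(100_000, 1_200, 100_000, 1_000)
```

In this example the platform might attribute all 1,200 test-group conversions to ads, while the lift view suggests roughly 200 of them would not have happened otherwise. That difference is exactly what the study exists to surface.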

Is Marketing Mix Modeling only for big brands?

MMM used to be expensive and slow, which pushed it toward large advertisers. That’s changing. Many teams now revisit MMM because privacy changes and media fragmentation make it harder to rely on user-level attribution alone, a shift discussed in Google’s measurement focus on MMM’s resurgence.

Do I need to disclose AI use in campaign creative?

If AI materially shapes the content—especially realistic imagery, audio, or video—disclosure protects trust and reduces risk. Platforms are moving toward clearer labeling standards, including Meta’s efforts to label AI-generated images, and broader coverage shows why the trust problem is growing, not shrinking: reporting on provenance and “AI slop” dynamics.

How important is social commerce for campaign planning now?

It’s increasingly hard to ignore. Even if you don’t sell directly in-app, shoppers often discover and validate products on social before buying elsewhere. DHL’s research highlights how common social purchasing already is, including the finding that 7 in 10 global shoppers buy on social media.

Work With Professionals

If you’re serious about running a social media campaign that doesn’t just “look active,” you eventually hit a point where effort stops being the bottleneck. Systems become the bottleneck. You need cleaner workflows, better proof assets, faster iteration, and a steadier stream of opportunities that match what you’re actually good at.

That’s the frustrating part about freelancing in marketing: you can be excellent at campaign strategy, creative testing, and measurement—and still waste weeks chasing leads that aren’t a fit. The momentum-killer isn’t your skill. It’s the noise.

MARKEWORK.com is built to reduce that noise. It’s a focused marketplace for marketing work where you build a profile, browse opportunities, and connect directly—without commissions or per-project fees. The platform describes this clearly: no middleman and no project fees, with plans that include access to thousands of job listings and direct communication so you can negotiate scope and pay like a professional.

If you want more stability, the best move is simple: pick a campaign outcome you can own, package it into a repeatable offer, and then put it in front of the right clients consistently. Markework’s model supports that approach because you’re not paying a cut of your earnings. Instead, you’re building a pipeline you control, on a platform that emphasizes direct communication and states that it charges no project fees, commissions, or transaction charges.