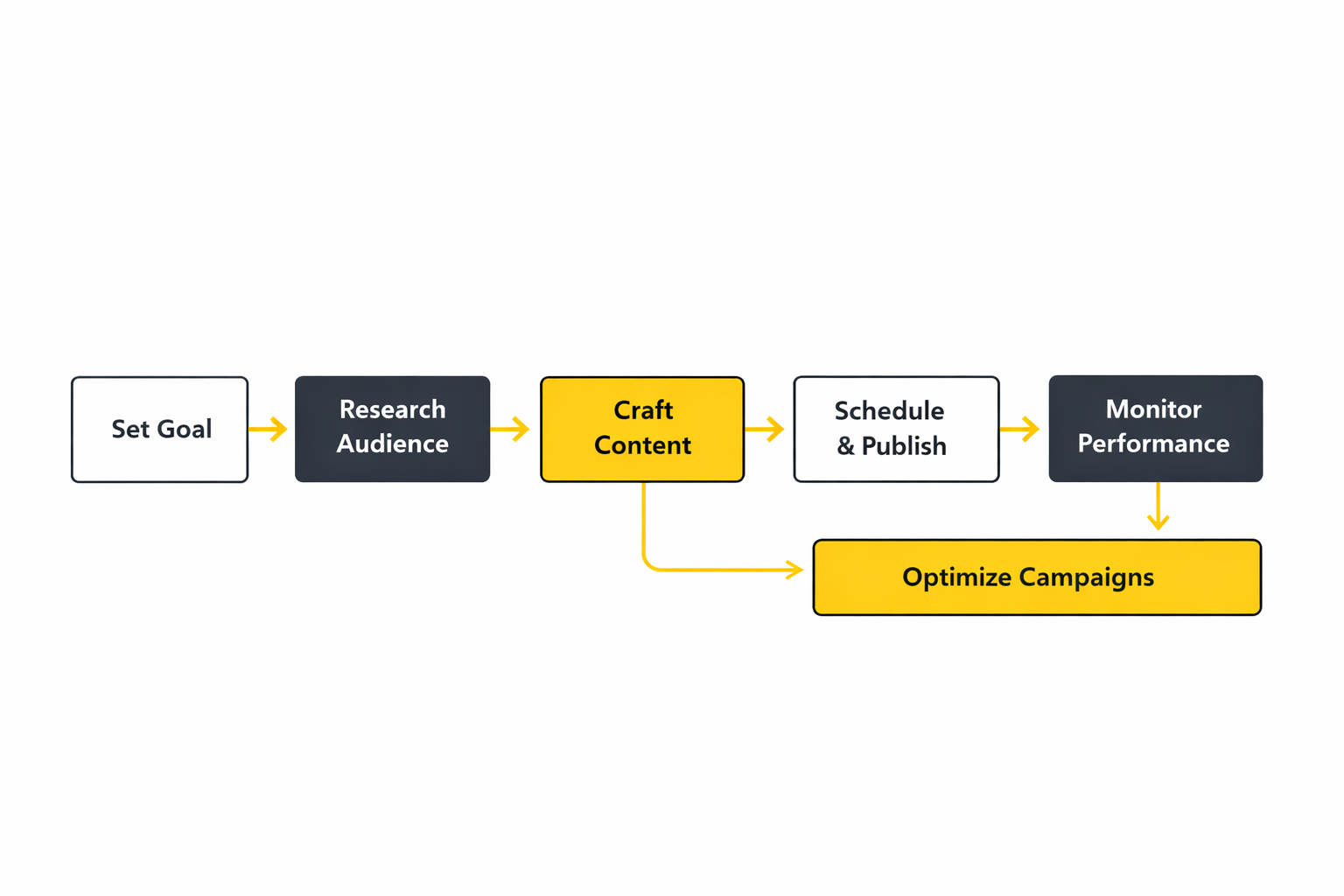

Step-by-Step Implementation

A social marketing business doesn’t become consistent by “trying harder.” It becomes consistent by turning intention into a repeatable system: the same inputs, the same checkpoints, the same definitions, and the same weekly rhythm—so the work holds up even when the team is busy, the client is anxious, or the platform changes something overnight.

This implementation path is designed to get you out of the motivational cycle (big plan, messy execution, rushed reporting) and into an operating cadence where quality is normal and results are explainable.

Step 1: Lock the business outcome and the proof you’ll accept

Before you touch creative or tools, decide what “worked” means in plain language. Is it qualified leads, booked calls, trial activations, in-store sales, or incremental revenue? Then decide what evidence you’ll accept to prove it, because platform dashboards alone can be misleading when attribution shifts.

If you’re running paid social, consider measurement approaches that isolate incremental impact, like Meta’s Conversion Lift methodology or TikTok’s Conversion Lift Study, especially when you need stakeholder trust.

- Outcome: one primary result (the “why we exist” metric) and two supporting metrics (the “how we’re getting there” signals).

- Proof: the measurement method you’ll use when someone asks, “But did this actually cause the result?”

- Time horizon: what can realistically move in 30 days vs 90 days.

Step 2: Build a content engine, not a posting plan

A posting plan is a calendar. A content engine is a pipeline that keeps producing even when inspiration runs out. The simplest engine is built from three repeating content “lanes” that map to real buying behavior: trust-building, problem-solving, and conversion-support.

The goal is to remove decision fatigue. Your team shouldn’t have to reinvent the purpose of every post. They should be choosing from proven lanes that match the stage of the audience.

- Trust lane: credibility, behind-the-scenes, customer proof, leadership POV.

- Problem lane: education, objections, myth-busting, “here’s how to think about this.”

- Conversion-support lane: offers, demos, booking prompts, product framing, retargeting assets.

Step 3: Put approvals on rails so speed doesn’t kill quality

Most teams don’t fail because they lack ideas. They fail because approvals turn every post into a negotiation. Set a workflow where approvals are predictable, with clear “needs review” categories and a default time limit for feedback.

If you’ve ever had a launch delayed because feedback arrived too late, you’re not alone. Approval systems exist to protect brands, but they can also quietly destroy momentum. A practical guide to building approval workflows (and choosing the right model for your team) is laid out in Sprout Social’s approval workflow guidance.

- Pre-approved templates: formats that can ship without legal/brand review every time.

- Escalation posts: anything sensitive gets routed automatically.

- Review SLA: a clear expectation for turnaround time and what happens if it’s missed.
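One way to picture these three rails is as a small routing rule plus a deadline check. The sketch below is only an illustration: the template names, the sensitive-topic list, and the 24-hour SLA are hypothetical values, not recommendations from any platform or tool.

```python
from datetime import datetime, timedelta

# All names and values here are illustrative assumptions, not a standard.
PRE_APPROVED_TEMPLATES = {"customer_quote", "weekly_tip"}
SENSITIVE_TOPICS = {"pricing", "legal", "health_claim"}
REVIEW_SLA = timedelta(hours=24)


def route_post(template: str, topics: set[str]) -> str:
    """Pick a review path; sensitive topics always escalate."""
    if topics & SENSITIVE_TOPICS:
        return "escalate"
    if template in PRE_APPROVED_TEMPLATES:
        return "ship"
    return "standard_review"


def is_overdue(submitted_at: datetime, now: datetime) -> bool:
    """True once feedback has taken longer than the agreed SLA."""
    return now - submitted_at > REVIEW_SLA


print(route_post("weekly_tip", set()))        # pre-approved format
print(route_post("weekly_tip", {"pricing"}))  # sensitive topic wins
print(is_overdue(datetime(2025, 3, 3, 9), datetime(2025, 3, 4, 10)))
```

The design choice worth noticing is the order of the checks: a sensitive topic escalates even when the format itself is pre-approved, so speed never overrides risk.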

Step 4: Fix measurement early, before you “optimize” the wrong thing

Optimization is only as good as the signal you feed it. If your tracking is fragile, you’ll end up “improving” surface metrics while the business stays flat. Start with a measurement foundation that can survive privacy changes and platform shifts.

For Meta ecosystems, that often means building a more direct data connection via Conversions API so the platform receives stronger signals for optimization. For site analytics, ensure your event framework is coherent inside Google Analytics 4, so you’re not reporting different “truths” in different dashboards.

- Define conversions: what actions matter and how they map to revenue or pipeline.

- Standardize UTMs: so reporting isn’t a weekly archaeology project.

- Validate events: confirm they fire correctly and match what the team thinks they mean.
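As a rough sketch of what "standardize UTMs" can look like in practice, the Python below builds tagged links with one naming rule and flags links that are missing required parameters. The lowercase-with-underscores convention and the helper names are assumptions made for illustration; your own convention may differ.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Hypothetical house rule: these three parameters are always required.
REQUIRED_UTMS = ("utm_source", "utm_medium", "utm_campaign")


def normalize(value: str) -> str:
    """Lowercase and replace spaces so 'Paid Social' and 'paid social' match."""
    return value.strip().lower().replace(" ", "_")


def tag_url(url: str, source: str, medium: str, campaign: str) -> str:
    """Append normalized UTM parameters, replacing any existing UTM values."""
    parts = urlsplit(url)
    params = [(k, v) for k, v in parse_qsl(parts.query) if k not in REQUIRED_UTMS]
    params += [
        ("utm_source", normalize(source)),
        ("utm_medium", normalize(medium)),
        ("utm_campaign", normalize(campaign)),
    ]
    return urlunsplit(parts._replace(query=urlencode(params)))


def missing_utms(url: str) -> list[str]:
    """List required UTM parameters that are absent from a URL."""
    present = {k for k, _ in parse_qsl(urlsplit(url).query)}
    return [name for name in REQUIRED_UTMS if name not in present]


print(tag_url("https://example.com/demo?ref=home", "Instagram", "Paid Social", "Spring Launch"))
print(missing_utms("https://example.com/?utm_source=instagram"))
```

Running every outbound link through one function like this is what keeps "Instagram", "instagram", and "IG" from showing up as three separate sources in reporting.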

Step 5: Launch in controlled cycles, not chaotic bursts

A professional social marketing business launches in cycles: publish, measure, learn, adjust. That keeps you from “changing everything at once” and never knowing what caused the result. Even in fast-moving platforms, discipline is what gives you speed that compounds instead of speed that burns you out.

- Weekly: content performance review, community themes, top questions, creative winners and losers.

- Biweekly: structured experiments (one variable at a time).

- Monthly: outcome review tied to the business metric, not just engagement.
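The "one variable at a time" rule is easy to state and easy to break under pressure. A minimal sketch of a pre-launch check, assuming each test setup is described as a simple dictionary (the keys and values below are hypothetical):

```python
def changed_variables(control: dict, variant: dict) -> list[str]:
    """Return the setup keys whose values differ between control and variant."""
    keys = sorted(set(control) | set(variant))
    return [k for k in keys if control.get(k) != variant.get(k)]


def is_clean_test(control: dict, variant: dict) -> bool:
    """A test counts as clean here when exactly one variable differs."""
    return len(changed_variables(control, variant)) == 1


control = {"creative": "video_a", "audience": "lookalike_1", "offer": "free_trial"}
variant = {**control, "creative": "video_b"}
messy = {**control, "creative": "video_b", "audience": "interest_2"}

print(changed_variables(control, variant), is_clean_test(control, variant))
print(changed_variables(control, messy), is_clean_test(control, messy))
```

If the check reports more than one changed key, the result of the experiment cannot be attributed to any single change, which is the failure mode the biweekly cadence is meant to prevent.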

Execution Layers

Think of execution as layers that stack. If an upper layer is strong but the layer beneath it is weak, results look random. A social marketing business that performs consistently usually has all layers working together—even if they’re not “fancy.”

Layer 1: Foundation

This is where the rules live: brand voice, response standards, tracking definitions, and who owns what. When foundation is unclear, teams argue about basic decisions every week, and execution slows down without anyone noticing why.

- Voice and boundaries: what you will say, what you won’t, and how you handle risk.

- Measurement map: the conversion events and the reporting view everyone agrees on.

- Cadence: the weekly meeting rhythm that keeps learning alive.

Layer 2: Production

This is the pipeline that turns insight into content. It includes ideation, scripting, design, editing, approvals, and scheduling. Most teams over-focus on creativity and under-focus on throughput. The professionals protect throughput so the creative actually ships.

- Content lanes: trust, problem-solving, conversion-support.

- Asset system: templates, reusable frameworks, organized libraries.

- Approval rails: predictable review so publishing doesn’t stall.

Layer 3: Distribution

Distribution is not “post and pray.” It’s deliberate packaging (hooks, formats, timing), plus paid support where needed. Many brands create great content that never reaches the right people because distribution is treated as an afterthought.

- Channel roles: what each platform is for in your system.

- Paid support: boosting winners, building retargeting pools, proving incrementality when needed.

- Partnership leverage: creators, employees, and community members as trusted distributors.

Layer 4: Community and customer experience

Community is where trust becomes tangible. It’s also where brands either build loyalty or quietly lose it—one ignored DM at a time. If you want social to drive business outcomes, the customer experience layer can’t be “whenever we have time.”

Timely replies are no longer a “nice-to-have.” Consumer expectations for response times have been tracked repeatedly, including findings summarized in Sprout Social’s 2025 Index discussion of response expectations.

- Triage: what gets answered fast, what gets escalated, what gets documented.

- Tagging: consistent labels so conversations become insight.

- Feedback loops: recurring themes sent to product, sales, or support.
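Consistent tagging pays off when the labels can be counted. The sketch below assumes conversations are exported as a list of records with a `tags` field; that record shape and the tag names are hypothetical, and a real inbox tool will have its own export format.

```python
from collections import Counter


def recurring_themes(conversations: list[dict], min_count: int = 2) -> list[tuple[str, int]]:
    """Count tags across conversations and keep those seen at least min_count times."""
    counts = Counter(tag for convo in conversations for tag in convo["tags"])
    return [(tag, n) for tag, n in counts.most_common() if n >= min_count]


conversations = [
    {"id": 1, "tags": ["shipping_delay", "pricing"]},
    {"id": 2, "tags": ["shipping_delay"]},
    {"id": 3, "tags": ["sizing"]},
]

print(recurring_themes(conversations))  # [('shipping_delay', 2)]
```

A short list like this is the kind of summary that can be forwarded to product, sales, or support each week without anyone rereading the raw conversations.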

Layer 5: Measurement and learning

This layer is what turns activity into progress. It includes reporting that leadership trusts, experimentation that isolates variables, and measurement methods that can handle messy attribution.

- Scorecards: a single weekly view tied to the business outcome.

- Experiments: structured tests that change one variable at a time.

- Incrementality tools: lift studies and controlled tests when stakeholders need causality.

Optimization Process

Optimization isn’t “tweaking until something works.” It’s a loop that protects what’s already winning and systematically improves what’s uncertain. The fastest teams aren’t the ones who change the most. They’re the ones who learn the fastest with the least chaos.

The loop: Diagnose, decide, deploy, validate

Diagnose: start with the simplest explanation. Is performance down because of creative fatigue, audience mismatch, weak offer framing, or broken tracking? Don’t jump to solutions until the diagnosis is grounded.

Decide: choose one lever to pull. A professional social marketing business resists the urge to “change everything,” because that destroys learning.

Deploy: ship the change in a controlled way. If you’re testing creative, keep the audience stable. If you’re testing audiences, keep the creative stable.

Validate: check the result using the proof method you agreed to. For paid social, experimentation and incrementality methods like Meta Conversion Lift exist specifically to answer, “Did this cause incremental outcomes?”

What to optimize first when time is limited

When teams are busy, optimization should follow the path of highest leverage. These priorities usually outperform random tinkering:

- Offer clarity: if people don’t understand the “why,” no creative will rescue you.

- Creative packaging: hook, format, pacing, and the first seconds of attention.

- Signal quality: ensure conversions and events are tracked reliably, including using options like Conversions API where relevant.

- Retargeting experience: your warm audience should not see the same message as cold prospects.

When lift studies are worth the effort

If you’re trying to convince a CFO, defend a budget, or scale a channel beyond “it seems to be working,” lift studies can be the difference between opinion and evidence. Meta provides guidance on how conversion lift measures incremental effect, and TikTok frames lift as a “gold standard” approach in its Conversion Lift Study overview.

On the upper-funnel and shopping intent side, platforms have also been publishing aggregate lift findings. For example, Pinterest described a meta-analysis of UK offline sales lift studies in which Pinterest campaigns achieved statistically significant sales lift in 70% of cases, against a benchmark of 55% in the same context, as presented in Pinterest’s sales lift write-up.
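To make the arithmetic behind a lift study concrete, here is a minimal Python sketch that compares a group exposed to ads with a randomly held-out control group. It is a simplified illustration, not Meta's or TikTok's actual methodology: the function name and the numbers are made up, and a real study also reports statistical significance, which this sketch omits.

```python
def lift_summary(test_conv: int, test_users: int, control_conv: int, control_users: int) -> dict:
    """Compare conversion rates between an exposed group and a held-out control."""
    test_rate = test_conv / test_users
    control_rate = control_conv / control_users
    rate_gap = test_rate - control_rate
    return {
        "test_rate": test_rate,
        "control_rate": control_rate,
        # Conversions the exposed group would not have produced without ads.
        "incremental_conversions": rate_gap * test_users,
        # Relative lift is undefined when the control group has no conversions.
        "relative_lift": rate_gap / control_rate if control_rate > 0 else None,
    }


# Illustrative numbers only: 600 of 50,000 exposed users converted,
# versus 500 of 50,000 in the holdout.
summary = lift_summary(600, 50_000, 500, 50_000)
print(round(summary["incremental_conversions"]), round(summary["relative_lift"], 2))
```

With these made-up inputs the exposed group converts at 1.2% against 1.0% for the holdout, which works out to roughly 100 incremental conversions and a relative lift of about 20%. The other 500 conversions would likely have happened anyway, which is exactly the portion that attribution dashboards tend to claim.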

Implementation Stories

The hardest part of implementation isn’t knowing what to do. It’s doing it while the business keeps moving. Real social marketing business execution usually changes because something breaks: reporting becomes untrustworthy, scaling becomes risky, or a campaign is too important to “guess” your way through.

Guerlain: When performance data stopped being believable

The tension built quietly at first, then all at once. Campaigns were running, budgets were being spent, and yet the numbers started to feel like they belonged to someone else’s reality. When reporting loses credibility, every meeting becomes defensive because nobody trusts the scoreboard. The danger wasn’t just wasted spend—it was making decisions from shaky data and scaling the wrong thing.

Guerlain wasn’t new to performance marketing, and it wasn’t lacking creative firepower. But the ecosystem had changed: browser-side limitations and shifting data collection made it harder to reconcile what happened online with what the business needed to plan next. They needed a way to strengthen signal resilience and make their first-party data more useful, not just more “collected.” The context and challenge are laid out in fifty-five’s Guerlain case study.

The wall was trust. When your conversion numbers wobble, optimization becomes guesswork and stakeholders start questioning every recommendation. It becomes nearly impossible to answer simple questions like “which campaigns actually drove purchases?” without adding a dozen caveats. Even worse, the platform algorithms that rely on signals for optimization become less reliable when the signal is weak. The team needed stability before they could even talk about growth.

The epiphany was practical: stop relying on fragile collection paths and create a more direct connection for marketing data. Conversions API wasn’t just a technical upgrade—it was a way to regain confidence in performance reporting and feed richer signals back into optimization. That’s the core intent of Meta’s Conversions API design, and it aligned with what the team needed. The conversation shifted from “why did reporting change?” to “how do we make our signals durable?”

The journey combined implementation and discipline. They worked to stabilize data over time, improve matching quality, and use the stronger signal to monitor and refine media planning. The case study reports measurable outcomes: +18% newly collected purchase conversions, 2x ROAS on incremental sales, and an event matching quality score of 6/10. The point wasn’t the number; it was getting back a scoreboard the team could trust.

The final conflict came from reality: implementation doesn’t magically fix everything. Signal improvements still need clean event definitions, consistent naming, and teams that don’t “change ten things at once” when performance wobbles. There’s also the organizational friction of aligning tech, marketing, and partners around a shared measurement truth. In most companies, that alignment is harder than the code.

The dream outcome was confidence that compounds. With more resilient signals, the team could optimize with fewer blind spots, defend decisions with clearer evidence, and scale what was working without feeling like they were gambling. That’s what a professional social marketing business looks like at a serious brand: fewer arguments about the data, and more energy spent improving outcomes, grounded in the case study’s documented shift toward stable reporting and optimization signals.

Gruvi: Proving impact when “it looks good” isn’t enough

Holiday season pressure doesn’t politely wait for perfect measurement. The campaigns had to launch, the creative had to ship, and the team had to make calls quickly while competitors fought for the same attention. But there was a deeper tension: performance marketing can produce beautiful dashboards that don’t actually prove causality. When budgets get bigger, “it looks like it worked” stops being acceptable.

Gruvi wanted incremental holiday sales, not just inflated attribution. They leaned into Meta’s tooling stack and structured the work around measurement that could separate real impact from noise. The campaign period and measurement approach are documented in Meta’s Gruvi success story. This was less about chasing a metric and more about defending a decision: what should we scale, and why?

The wall was uncertainty. If you can’t clearly answer whether ads caused incremental outcomes, scaling becomes risky because you might just be paying for conversions that would have happened anyway. Stakeholders feel that uncertainty immediately, especially when budgets rise. The team needed a way to validate impact with more rigor than last-click reporting.

The epiphany was to measure like a scientist instead of a commentator. Instead of treating attribution as truth, they used a structured incrementality approach through a Conversion Lift study with a search lift methodology, described in the official case study. This creates a clearer line between exposure and outcome, which is the entire point of Meta’s conversion lift framework.

The journey forced operational discipline. Measurement like this requires clean setup, stable definitions, and the patience to let a test run without constantly changing variables. It also forces teams to think in terms of hypotheses: what do we believe will happen, why, and what would prove us wrong? That mindset makes optimization calmer because you’re learning, not panicking.

The final conflict is that “rigor” can collide with speed. Holiday campaigns move fast, creative swaps happen daily, and teams feel the urge to react to every fluctuation. The risk is contaminating learning by changing too many things at once. That’s why professional implementation is less about intelligence and more about restraint.

The dream outcome is clarity that earns budget. When a social marketing business can demonstrate incremental impact, it becomes easier to scale winners, kill losers, and speak to leadership in language they respect. Gruvi’s story matters because it shows the maturity shift: from reporting performance to proving impact, supported by the documented measurement approach and the broader logic of conversion lift methodology.

Professional Implementation

The difference between “posting consistently” and running a real social marketing business is the system behind the work. Professional implementation is what makes results repeatable across clients, campaigns, and quarters—not just when one talented person is having a great month.

Standardize the basics so you stop re-deciding them

- Definitions: what counts as a lead, what counts as a qualified lead, what counts as success.

- Tracking map: conversion events, UTMs, naming conventions, and where reporting lives.

- Content lanes: the repeating categories that keep output steady and purposeful.

Build quality control that doesn’t slow you down

Quality control should be lightweight and consistent, not a bureaucratic event. The goal is fewer “oops” moments without turning publishing into a monthly ritual. Approval workflows exist for a reason, and a practical blueprint for building them (including choosing the right model for your team) is laid out in this approval process guide.

- Pre-approved formats: templates and series that can ship with minimal review.

- Sensitive content checklist: what triggers escalation automatically.

- Feedback rules: one place for feedback, one deadline, and a clear owner.

Use measurement that matches the decision you need to make

If the decision is “what content should we make more of?” you can often use platform and analytics signals. If the decision is “should we invest more budget here?” you’ll eventually need stronger proof. That’s why incrementality tools and lift studies matter, including Meta’s Conversion Lift approach and TikTok’s Conversion Lift Study framing.

- Weekly: performance patterns and audience questions.

- Monthly: business outcome movement and what likely drove it.

- Quarterly: deeper tests when budgets or strategy direction are on the line.

Protect a rhythm that keeps learning alive

The fastest-growing teams aren’t the ones who work the most hours. They’re the ones who keep the learning loop running without drama. When the cadence is consistent, optimization becomes calmer, reporting becomes clearer, and your social marketing business stops feeling like it’s reinventing itself every week.

Statistics and Data

In a social marketing business, data is the difference between “we feel busy” and “we can explain why this works.” The trick is that data becomes dangerous the moment it turns into decoration: dashboards that look impressive, metrics that move without meaning, and reports that are technically correct but strategically useless.

The easiest way to keep analytics honest is to anchor everything to a business question. What’s the one decision this number should help you make? If a metric doesn’t change a decision, it’s not a KPI—it’s background noise.

It also helps to zoom out once in a while. The bigger market signals show why measurement is getting more serious: global ad revenue forecasts have been clustering around the same magnitude, with WPP Media’s 2025 projection of $1.14 trillion echoed in The Wall Street Journal’s write-up of the same forecast and in Marketing Brew’s summary.

On the platform side, even “inside the walls” signals are shifting. Meta’s latest results noted that the average price per ad increased 9% year over year for full-year 2025, and Meta’s Form 10-K for 2025 describes how ad pricing and impressions together drive advertising revenue in its core business model.

That context matters because a social marketing business lives inside moving systems. If you don’t build measurement that can survive change, you’ll spend more time debating numbers than improving results.

Performance Benchmarks

Benchmarks are useful when they keep your team grounded. They become harmful when they make you chase averages you don’t actually want. The best way to use benchmarks is to treat them like guardrails: they tell you when something is unusually strong, unusually weak, or simply normal for your category.

Benchmarks start with the market reality, not your dashboard

If you want one simple signal for why brands are obsessing over performance and measurement, look at the scale of spend. U.S. internet advertising revenue hit $259 billion in 2024, a number that is repeated across coverage such as Yahoo Finance’s summary of the IAB/PwC findings and Adweek’s breakdown.

Inside that growth, social advertising rebounded aggressively. The IAB/PwC report shows social media advertising reaching $88.8 billion in 2024, with the same growth pattern echoed in Adweek’s reporting and Search Engine Land’s summary.

This matters for your social marketing business because rising spend tends to create two side effects: fiercer competition for attention and less tolerance for reporting that can’t connect activity to outcomes.

Organic engagement is trending downward for many brands, so “good” needs context

Teams often panic when engagement drops, but the broader pattern has been volatile and, in many cases, downward. Rival IQ’s 2025 benchmarking found engagement rates falling across major networks, including platform-level drops like Facebook down 36% and TikTok down 34% year over year.

That doesn’t mean organic is dead. It means the social marketing business has to be more intentional about formats and distribution. One example: Emplifi’s large-scale benchmarking noted that Instagram Reels became the most-used brand format, with Reels reaching 38% of brand posts by the end of 2024. If your content mix is stuck in an older rhythm, your performance will often look “mysteriously worse” even when your strategy is fine.

What to benchmark in a practical way

- Output benchmarks: are you publishing enough to learn, or just enough to stay visible?

- Engagement benchmarks: are you earning reactions, replies, and shares that show genuine attention?

- Responsiveness benchmarks: do people get answers fast enough to trust you?

- Business outcome benchmarks: are leads, trials, purchases, or bookings moving in a way you can defend?

To ground these benchmarks in real-world datasets, Sprout Social’s 2025 content benchmarking analyzed more than 3 billion messages from over 1 million public profiles, which is helpful when you need to sanity-check your volume, inbound engagement, and outbound engagement patterns by industry.

Analytics Interpretation

Interpretation is where most teams get stuck. A social marketing business doesn’t win by collecting more data; it wins by knowing what the data is trying to say. The same number can be a warning sign or a success signal depending on what layer of the system you’re looking at.

Read metrics in layers, not in isolation

- Attention layer: reach, impressions, view rates, and watch time answer “did anyone even notice?”

- Trust layer: saves, shares, replies, and meaningful comments answer “did this feel useful or believable?”

- Action layer: clicks, landing page views, signups, and purchases answer “did people move?”

- Profit layer: qualified leads, pipeline, and revenue answer “did this matter to the business?”

When the layers don’t line up, that’s your clue. High reach with low action often signals a message mismatch. High clicks with low conversions often signals a landing page or offer problem. Strong organic engagement with weak business impact can mean your content is entertaining but not positioned toward buying behavior.
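The mismatch rules above can be written down as a small checklist so the weekly review applies them the same way every time. This is a sketch under stated assumptions: the signal names are hypothetical, and each signal is rated "high" or "low" by the team against its own baseline rather than by any fixed threshold.

```python
def diagnose(levels: dict[str, str]) -> list[str]:
    """Flag layer mismatches from 'high'/'low' ratings of each signal."""
    findings = []
    if levels["reach"] == "high" and levels["clicks"] == "low":
        findings.append("possible message mismatch")
    if levels["clicks"] == "high" and levels["conversions"] == "low":
        findings.append("possible landing page or offer problem")
    if levels["engagement"] == "high" and levels["revenue"] == "low":
        findings.append("content may entertain without supporting buying behavior")
    return findings


week = {
    "reach": "high",
    "engagement": "low",
    "clicks": "low",
    "conversions": "low",
    "revenue": "low",
}
print(diagnose(week))  # ['possible message mismatch']
```

Each finding is phrased as a possibility on purpose. The rules point to where to look first; they do not replace checking the creative, the landing page, or the tracking itself.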

Be careful with attribution, especially when budgets rise

As ad pricing and competition evolve, attribution debates tend to get louder. A clean way to reduce those arguments is to separate “platform-reported performance” from “incremental impact.” Incrementality tools exist because teams keep asking the same question: did this cause extra outcomes, or did we just take credit for them?

TikTok describes Conversion Lift Studies as a randomized controlled trial style approach in its Conversion Lift overview, with implementation guidance in the TikTok help center documentation. Even if you don’t run lift studies every month, understanding the logic behind them makes your analytics interpretations far more defensible.

Turn reporting into decisions, not summaries

A weekly report should feel like a decision document. It should answer: what’s working, what isn’t, what we’re testing next, and what we’re stopping. If your reporting doesn’t clearly lead to those four outputs, it’s usually too descriptive and not strategic enough.

The fastest teams keep their “core reporting” boring and consistent, and reserve deep dives for moments that matter: big launches, budget increases, or performance that changes direction. That rhythm protects focus and prevents analytics from becoming a weekly argument club.

Case Stories

Analytics gets real when there’s pressure. A social marketing business doesn’t adopt better measurement because it’s fashionable; it does it because someone needs to make a call that could waste money, miss a moment, or risk a quarter.

Aerie: When “search intent” showed up on TikTok and the budget had to follow

The campaign pressure didn’t come from a spreadsheet. It came from the feeling every retail team knows too well: the season is moving, competitors are loud, and your current mix is close to plateauing. The creative was shipping, the feed was active, and yet there was still a gap between attention and purchases that made the next budget decision feel risky. If they scaled the wrong thing, they wouldn’t just lose efficiency—they’d lose momentum during a period where momentum is the entire game.

Behind the scenes, the audience behavior had already shifted. People weren’t only browsing TikTok for entertainment; they were using it like a discovery engine, searching for ideas, opinions, and products with intent. TikTok’s Search Ads Campaign article spells out how search behavior is changing and why search placements inside TikTok matter. When audience behavior changes, a social marketing business has to either adapt quickly or keep optimizing yesterday’s funnel.

The wall was attribution confidence. Plenty of teams can drive engagement, but the hard question is whether those touches are creating incremental outcomes or just looking good inside platform reporting. Without a clear measurement method, a “new budget split” becomes an opinion fight. Retail teams don’t have time for opinion fights in-season.

The epiphany was to treat measurement like a product requirement, not a reporting afterthought. Instead of only watching downstream numbers, they leaned into a lift-based approach designed to separate causality from coincidence. TikTok describes Conversion Lift Studies as incrementality measurement via experimentation in its Conversion Lift methodology overview, which is exactly the logic needed when you’re deciding whether a new placement deserves budget.

The journey looked like a controlled rollout rather than a chaotic pivot. They combined a search component with in-feed delivery, kept the structure stable enough to learn, and used the measurement framework to understand whether the search layer was adding something genuinely new. TikTok’s own narrative of the Aerie example sits inside the Search Ads Campaign story, framed around incremental evaluation rather than vanity metrics. The point wasn’t to chase a novelty format—it was to match the campaign design to how people were actually behaving.

The final conflict was operational: once a new lever shows promise, everyone wants to pull it harder, faster, and in ten different ways at once. That’s where teams accidentally destroy learning by changing too many variables. A professional social marketing business protects the test long enough to get a trustworthy read. That restraint is part of what makes the result scalable instead of fragile.

The dream outcome is the kind of clarity that changes how teams plan. When measurement can answer “did this create incremental impact?” budget decisions stop being emotional and start being strategic. The best part is that this clarity doesn’t only help one campaign; it builds a repeatable pattern for how you test, validate, and scale across future launches, rooted in the measurement logic explained in TikTok’s Conversion Lift Study documentation.

Professional Promotion

“Promotion” sounds like spending money, but in a social marketing business, professional promotion really means something deeper: knowing when to amplify, what to amplify, and how to prove the amplification was worth it.

Promote when you have proof of resonance

Throwing budget behind weak creative is one of the fastest ways to burn trust. A more professional rhythm is to let organic performance act like a screening tool, then promote the content that already earned attention. When you do this, paid media stops being a rescue mission and becomes a multiplier.

Measure promotion in business terms, not platform terms

Platform metrics are helpful, but leadership usually cares about outcomes. The wider market signals show why this matters: social advertising surged to $88.8 billion in the U.S. in 2024, and that same rebound is reinforced in reporting like Adweek’s industry breakdown. When spend grows like that, scrutiny grows with it.

Professional promotion keeps a tight chain between spend and intent: who did we reach, what did we want them to do, and what did they do next? When you can answer those three questions cleanly, your promotion stops feeling like gambling and starts feeling like controlled investment.

Raise the bar with incrementality when the stakes rise

As budgets increase, teams eventually need a stronger standard than attribution alone. That’s where lift studies and experimental measurement become a serious advantage, because they’re built to answer the uncomfortable question: did this create additional outcomes, or did we simply take credit?

TikTok frames this approach directly through its Conversion Lift methodology, and the platform’s help center documentation clarifies how the study is used to evaluate true impact. You don’t need to run experiments constantly, but having the capability changes the tone of every performance conversation.

Future Trends

The next phase of any social marketing business will feel less like “posting on platforms” and more like running a real-time demand and insight engine. The winners won’t be the teams with the flashiest tactics. They’ll be the teams that can adapt faster than the feed changes.

Three shifts are already shaping how serious teams operate.

Social search becomes a buying pathway

People are using social platforms to research, compare, and validate decisions. That means your content isn’t only competing with other creators—it’s competing with the buyer’s own question: “Can I trust this?” If you’re not intentionally building content that answers real objections, you’ll get attention that never turns into action.

The practical move for a social marketing business is to build “searchable” content: direct answers, clear naming, and formats that make your expertise easy to scan and easy to remember.

Proof starts to matter more than attribution

As platform measurement shifts and competition intensifies, more stakeholders want to know what’s truly incremental. That’s why lift-style measurement frameworks keep showing up in serious conversations. It’s not about being academic—it’s about being able to defend a scaling decision when budgets grow and pressure rises.

Specialized marketplaces win over general-purpose noise

Freelancers and companies are both overloaded. Companies don’t want to sift through unrelated profiles, and marketers don’t want to fight for attention in crowded, generic directories. That’s why focused platforms are gaining momentum—clearer profiles, clearer listings, and direct communication that reduces friction.

MARKEWORK.com positions itself as a marketing-only marketplace built around direct communication and “no project fees,” a message it states on its “Why Us” page and repeats on its pricing page.

Strategic Framework Recap

If you want one clean takeaway from this guide, it’s this: a social marketing business grows when it stops treating social like a channel and starts treating it like a system.

- Strategy: one outcome, clear proof, and a content engine that maps to buying behavior.

- Execution: workflows that remove bottlenecks, plus creative systems that scale without losing identity.

- Measurement: dashboards that lead to decisions, and stronger proof methods when scaling matters.

- Scaling: solve constraints first (creative, approvals, signal), then scale inputs with control.

The ecosystem view matters because the parts reinforce each other. When you treat social as a system, you stop “starting over” every month, and you start compounding learning.

FAQ – Built for the Complete Guide

What does “social marketing business” actually mean?

It’s a way of running social like a business function, not a posting habit. You still publish content, but you also build workflows, measurement, and repeatable systems so social contributes to outcomes you can explain and scale.

How long does it take to see results?

It depends on what result you mean. Early signals (attention and engagement) can shift within weeks, but business outcomes typically need a longer cycle because trust takes time. The fastest progress usually comes from building a steady cadence and learning loop instead of chasing viral spikes.

Which metrics matter most for a social marketing business?

The metrics that change decisions. If your business goal is qualified leads, your measurement should connect social activity to lead quality, not just views. Keep a small set of outcome metrics, then use supporting metrics to diagnose what’s working or failing.

Should I focus on organic social or paid social?

Most strong programs use both. Organic builds trust, gathers insight, and surfaces winning messages. Paid lets you scale the messages that already resonate and reach the right people consistently. If you can only choose one at the start, choose the one that fits your offer and sales cycle—then add the other as a multiplier.

Why does engagement sometimes drop even when content quality improves?

Because the environment changes. Platforms shift distribution, audiences change habits, and competition rises. That’s why benchmarks matter as guardrails, and why content systems should evolve with formats and behavior instead of staying locked in last year’s playbook.

How many posts per week is “enough”?

Enough to learn. If you’re publishing so little that you can’t spot patterns, you’re flying blind. A practical approach is to pick a cadence you can sustain without burning out, then improve quality and consistency over time rather than trying to sprint forever.

How do I stop running out of content ideas?

Stop relying on inspiration and build a pipeline. Capture customer questions, objections from sales calls, themes from comments and DMs, and topics your audience searches for. Then convert them into repeatable formats so your team isn’t reinventing the wheel every week.

What tools do I actually need to run this professionally?

You need tools that remove friction where you feel it most: publishing and approvals, community inbox, analytics, and a clean way to organize assets. The “right stack” isn’t the biggest stack—it’s the one that makes your workflow faster and your reporting more trustworthy.

Is it worth using a marketplace to find clients or talent?

It can be, especially when the marketplace is focused and reduces noise. Specialized platforms can shorten the distance between businesses that are actively hiring and marketers who have clear proof, pricing, and positioning.

What’s the fastest way for marketing freelancers to get new clients?

Position yourself where demand already exists, then make it easy to evaluate you. That means a profile with clear skills, proof, and a realistic offer—and a consistent routine for applying, following up, and improving your positioning based on what gets replies.

Is it true there are 10K+ remote marketing opportunities out there?

Yes—if you look at the broader market. Upwork shows 12,102 open marketing jobs, and LinkedIn displays 12,000+ digital marketing remote jobs in the United States at the time of publication. The opportunity is real; the challenge is being visible and moving fast when a good listing appears.

Work With Professionals

If you’ve read this far, you already know the uncomfortable truth: most social programs don’t fail because people aren’t smart. They fail because the system isn’t built. The workflow breaks. The reporting gets fuzzy. The pressure rises. And then you’re back to “posting more” instead of building momentum.

Now imagine the opposite. Imagine your social marketing business running like an engine: clear positioning, consistent output, defensible proof, and a steady stream of real opportunities—without begging for attention in crowded directories.

This is exactly where a focused marketplace changes the game. MARKEWORK.com is built around direct communication and “no project fees,” so you’re not giving up a cut of every contract to a platform middle layer; the MARKEWORK.com FAQ spells this out. The platform’s pricing page also highlights “access to thousands of job listings” for marketers.

And the demand isn’t theoretical. The marketplace’s active listings page shows a live feed and notes 1,007 active listings, with limited visibility unless you unlock the full view. When you combine that kind of focused pipeline with the broader reality of 12K+ open marketing jobs and 12K+ remote digital marketing roles, the question becomes less “is there work?” and more “are you positioned where companies can actually find you?”

If you’re a marketing freelancer who wants more clients without paying commissions, and you want a place where your skills are evaluated in a marketing-only context, build your profile, publish your proof, and start applying with momentum.