Most teams don’t struggle because they “need more content.” They struggle because their digital and social activity isn’t connected to a clear business outcome, a repeatable creative system, and measurement they actually trust.

This guide treats marketing digital social media as one connected discipline: how you earn attention, convert it into demand, and protect your brand while platforms, algorithms, and regulation keep moving. Part 1 sets the foundation and the working framework you’ll use in the rest of the series.

Article Outline

- What “Marketing Digital Social Media” Means

- Why It Matters

- Framework Overview

- Core Components

- Professional Implementation

What “Marketing Digital Social Media” Means

In plain terms, marketing digital social media is the practice of using social platforms (and the paid media systems around them) to create demand and capture it. That includes organic publishing, community and creator partnerships, paid distribution, landing experiences, and the measurement layer that proves what worked.

It’s not the same as “posting on social.” Social posting is a tactic. Digital and social media marketing is a system that decides what you say, who sees it, how you convert interest into leads or sales, and how you learn fast enough to keep improving.

The audience scale is also why this discipline can’t be treated as an add-on. Recent global reporting treats social platforms as a mainstream channel: Digital 2026’s global overview, the official release summary, and We Are Social’s companion analysis all point to the same conclusion, that social media use is now a “supermajority” behavior worldwide.

But reach alone isn’t the point. The point is how you translate attention into outcomes without relying on luck, viral spikes, or one platform’s temporary advantage.

Why It Matters

Social platforms have become one of the fastest feedback loops in business. You can test positioning, creative angles, offers, and audience segments in days, not quarters. When you build a disciplined system, you stop guessing and start learning.

They’ve also become a major piece of the overall advertising economy. In the U.S., the latest IAB/PwC Internet Advertising Revenue Report (Full Year 2024) reports social media advertising revenue as its own line, and the full PDF report makes clear that social is not a side channel: it’s a core budget line for performance and brand teams.

At the same time, the environment is harsher than it looks from the outside. Platforms are flooded with content, auctions are more competitive, and trust is harder to earn. Even the platforms and regulators are signaling that integrity and transparency are now inseparable from growth: the EU’s Digital Services Act transparency approach and DSA updates on ad transparency and targeting limits sit alongside ecosystem enforcement reporting like Google’s 2024 Ads Safety Report.

So “why it matters” comes down to this: digital and social can be your most efficient growth engine, but only if you treat it like an operating system—creative, distribution, conversion, and measurement—rather than a stream of posts.

Framework Overview

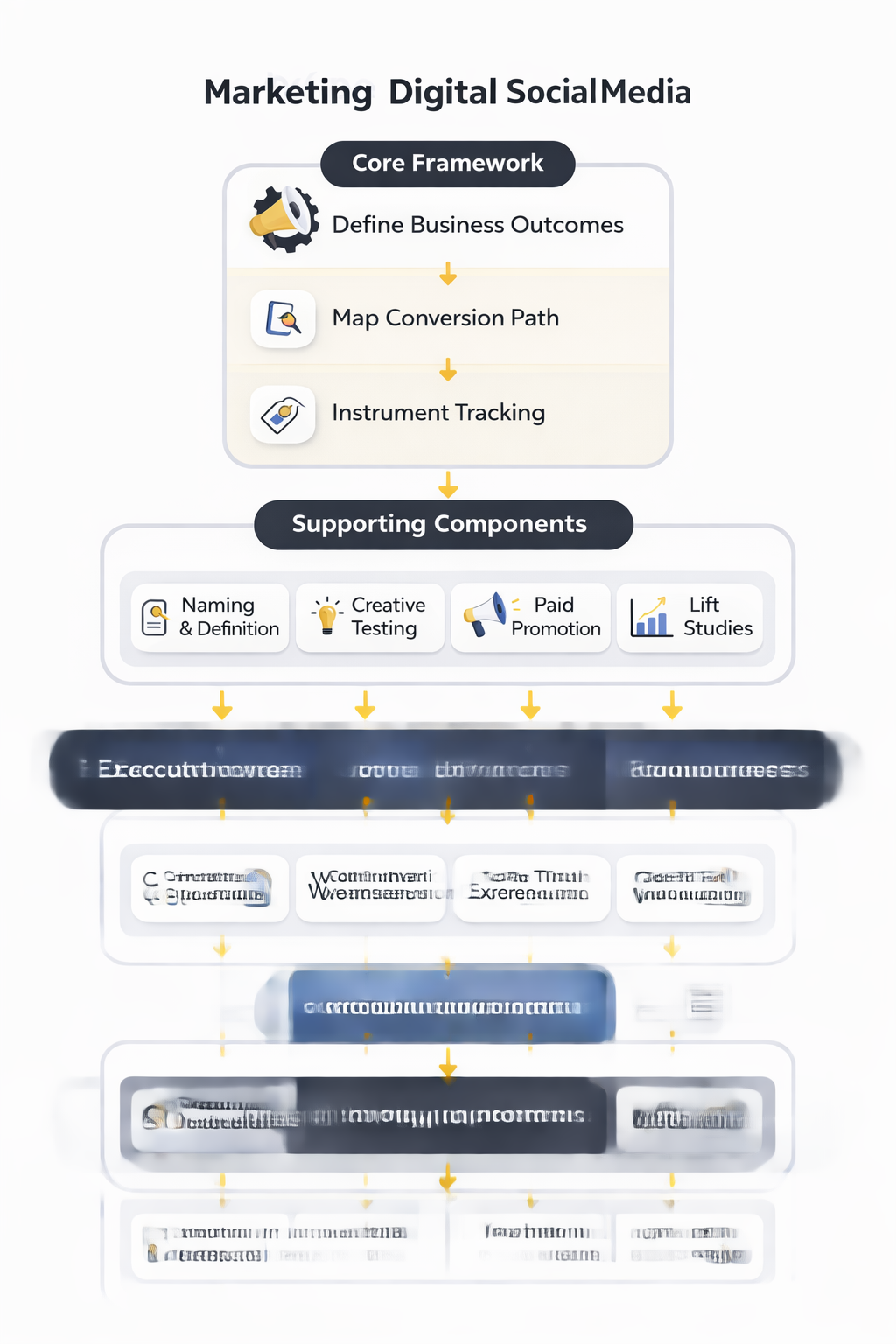

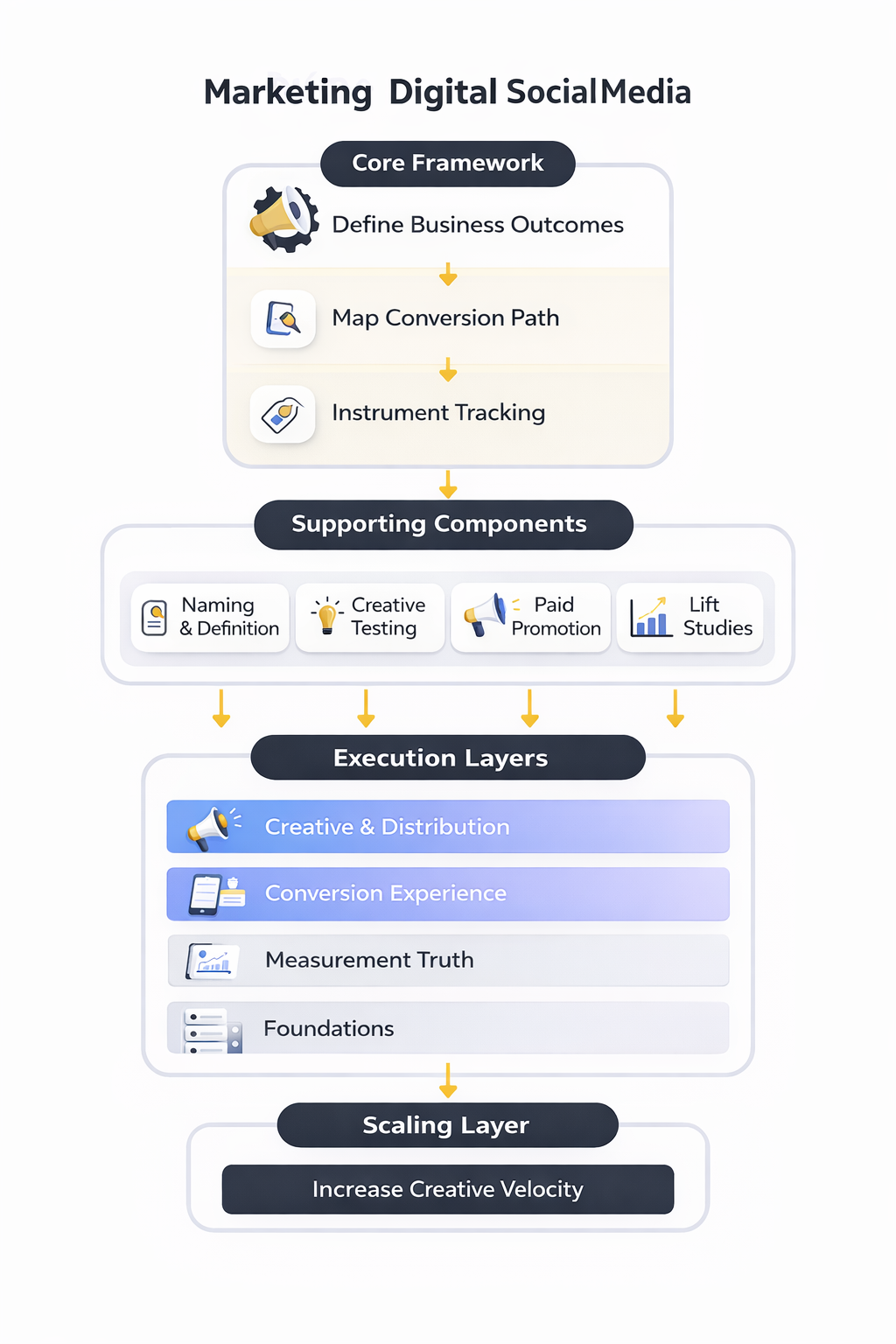

The framework in this series is built to answer five questions every serious social program must answer:

- Who are we trying to move? Not “everyone who might like us,” but the specific buyers, users, and influencers who change revenue outcomes.

- What do they need to believe? A clear message that earns attention and reduces uncertainty, not a list of features.

- How will we repeatedly earn attention? A creative system that produces consistent patterns, not random one-off ideas.

- Where does conversion happen? A designed path from platform attention to an owned action (lead, signup, purchase), not “link in bio and hope.”

- How do we prove impact? Measurement that matches reality, including the limits created by privacy, platform attribution, and multi-touch journeys.

That structure keeps you from over-optimizing one layer while the rest quietly breaks. For example, you can have great creative—but if the landing experience is slow or mismatched, your results will look “mysteriously” weak. Or you can have great targeting—but if your message is generic, the auction will punish you with rising costs.

In later parts, each layer becomes a working playbook: from strategy and creative production to professional analytics and ecosystem governance.

Core Components

When marketing digital social media works consistently, you can usually trace it back to five components that are intentionally designed to reinforce one another.

1) Strategy that’s anchored to a real business constraint

Start with one constraint you must solve: pipeline volume, close rate, retention, recruiting, regional expansion, or category credibility. The constraint determines the channel mix, the content cadence, and the KPI design. Without that anchor, teams chase engagement because it’s available—even when it doesn’t compound.

2) Audience definition that’s more specific than demographics

Good audience work includes the context that shapes decisions: what triggers the need, what risks the buyer fears, what alternatives they compare, and what “proof” they trust. This is why platform research matters; for example, platform usage patterns and reach differ meaningfully by market and age, and datasets like Pew Research Center’s detailed platform use reporting help teams avoid assumptions when building channel priorities.

3) Creative built for how feeds actually work

Feeds reward clarity and relevance fast. Strong creative systems typically include a small set of repeatable formats (series, templates, POV structures), a clear visual language, and a testing rhythm that treats each new asset as a learning unit—not a precious “campaign.”

4) Distribution that combines organic, paid, and creator leverage

Organic gives you compounding reach when you earn it, paid gives you controllable scale, and creators give you cultural access you can’t manufacture alone. The smartest programs don’t pick one—they design how each lane supports the others.

5) Measurement that accepts the messy truth

Attribution is not a single number you “find.” It’s a model you choose. The goal is to build a measurement approach that’s stable enough to guide decisions, even when platform reporting changes, user journeys stretch across devices, or privacy limits reduce observable data.

Professional Implementation

“Professional” execution is not about bigger budgets. It’s about reliability: the ability to run the machine every week, learn from results, and protect the brand while doing it.

That starts with roles and operating cadence. High-performing teams assign clear ownership across (1) strategy and channel planning, (2) creative production, (3) community and partnerships, (4) paid media operations, and (5) analytics. Even if one person wears multiple hats, the responsibilities should still be explicit so nothing quietly becomes “nobody’s job.”

Next is governance. Social and digital growth now sits inside a tighter trust and safety environment than most marketers admit. Regulatory expectations around transparency and targeting have hardened in Europe through the Digital Services Act transparency framework, and enforcement and integrity reporting has become a visible part of the ecosystem, including Meta’s ongoing Integrity Reports and Google’s Ads Safety reporting on blocked and removed ads.

Finally, professional implementation treats creative and measurement as one system. You plan what you will test, you publish with enough volume to learn, you instrument conversion paths, and you document decisions so the program improves even when team members change.

In Part 2, the framework turns into an actionable planning method: how to choose channels, define a practical audience model, and build a content system that scales without turning into noise.

Step-by-Step Implementation

When marketing digital social media is implemented well, it feels less like “we’re posting and boosting” and more like a reliable operating rhythm. You know what you’re shipping this week, what you’re testing next week, what signals you trust, and what decisions the data will drive.

This step-by-step is designed to be practical even if you’re a one-person team, but it scales cleanly for larger organizations. The idea is to set foundations first, then accelerate output without breaking measurement or brand trust.

Step 1: Define one outcome you will be judged on

Pick one outcome that is obviously tied to the business: qualified leads, booked calls, trial starts, purchases, repeat purchases, or resolved support cases. This is not “awareness” or “engagement” in the abstract; it’s an outcome someone else in the business cares about. If you can’t describe the outcome in one sentence, your implementation will drift.

Step 2: Map the conversion path from platform to owned action

Decide exactly where the user lands, what action they take, and what happens after that action. If you’re collecting leads, define what “qualified” means and how the lead gets contacted. If you’re selling, define what counts as a purchase event and how refunds, cancellations, or subscriptions are handled in reporting.

This is where teams usually lose money, because ads get launched before the landing path is predictable. A small amount of friction here can quietly erase the value of great creative.

Step 3: Instrument tracking the right way

Set up conversion tracking before you scale spend, and verify it end-to-end. If you’re running TikTok, build a resilient web data connection using the TikTok Pixel and Events API together so you can deduplicate events and keep signal quality stable as the ecosystem changes. TikTok’s own guidance recommends pairing these connections and explains why it improves sustainability. TikTok’s Events API overview, the Events API getting-started steps, and the official web data connection setup guide all describe this hybrid approach and verification flow.
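To make the deduplication idea concrete, here is a minimal sketch of the logic you might use when auditing your own event logs. It is not TikTok’s implementation or API; the platform performs its own matching. The `ConversionEvent` fields, the shared `event_id`, and the prefer-the-server-copy rule are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass(frozen=True)
class ConversionEvent:
    event_id: str  # hypothetical shared ID your site attaches to both sends
    name: str      # e.g. "Purchase"
    source: str    # "browser" (pixel) or "server" (server-side API)
    value: float


def deduplicate(events: List[ConversionEvent]) -> List[ConversionEvent]:
    """Keep one event per (name, event_id), preferring the server copy."""
    kept: Dict[Tuple[str, str], ConversionEvent] = {}
    for event in events:
        key = (event.name, event.event_id)
        current = kept.get(key)
        if current is None:
            kept[key] = event
        elif current.source != "server" and event.source == "server":
            kept[key] = event
    return list(kept.values())


# Illustrative data: order-1001 was reported by both connections.
events = [
    ConversionEvent("order-1001", "Purchase", "browser", 49.0),
    ConversionEvent("order-1001", "Purchase", "server", 49.0),
    ConversionEvent("order-1002", "Purchase", "server", 20.0),
]
unique = deduplicate(events)
total = sum(event.value for event in unique)
```

If the deduplicated total in your own logs differs sharply from what the platform reports, that gap is worth investigating before you scale spend.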

If you’re running LinkedIn, install the Insight Tag and verify conversions in Campaign Manager so you don’t spend weeks optimizing to incomplete data. LinkedIn’s Insight Tag overview, LinkedIn’s conversion tracking documentation, and LinkedIn’s verification steps outline the setup and troubleshooting logic.

Step 4: Build your first creative batch, not a “perfect” campaign

Start with a batch of assets that explore different angles, not one single polished video. Your first job is to teach the platform what “good response” looks like and teach yourself what your audience actually reacts to.

On Meta, build a clean creative testing workflow so you can isolate what’s changing and avoid confusing results. Meta provides a direct workflow for creative tests inside Ads Manager. Meta’s creative test setup guide is a practical starting point, and Advantage+ creative guidance helps you understand what automation can do once you have enough variation.

Step 5: Launch with a learning window you will respect

Decide how long you’ll let tests run before you judge them, and commit to it. If you change everything daily, you’ll never know what caused the result. You don’t need to overcomplicate the math, but you do need discipline.

For controlled testing outside social, Google’s own experiments tooling is designed for exactly this kind of learning structure. Google Ads Experiments describes the workflow for splitting traffic and evaluating changes without guesswork.

Step 6: Measure incrementality when it matters

Platform-reported conversions are useful, but they can’t answer the question leadership eventually asks: “Would those results have happened anyway?” That’s where lift studies matter, especially when you’re investing heavily or running upper-funnel creative.

Meta and TikTok both provide lift-study approaches that are designed to estimate incremental impact using holdout methods. Meta’s overview of Conversion Lift, Meta’s Conversion Lift hub, and TikTok’s Conversion Lift Study explainer show how these tests are framed and why they’re different from standard attribution.
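The arithmetic behind a holdout comparison can be sketched in a few lines. This is a simplified point estimate under assumed inputs, not either platform’s methodology: real lift studies also report confidence intervals and significance, and the function name and figures below are illustrative.

```python
from typing import Tuple


def incremental_lift(
    test_users: int,
    test_conversions: int,
    control_users: int,
    control_conversions: int,
) -> Tuple[float, float]:
    """Return (incremental conversions, relative lift) from a holdout split."""
    if control_conversions == 0:
        raise ValueError("Relative lift is undefined with zero control conversions")
    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    # Conversions the exposed group would have produced anyway.
    baseline = control_rate * test_users
    incremental = test_conversions - baseline
    relative_lift = (test_rate - control_rate) / control_rate
    return incremental, relative_lift


# Illustrative numbers: both groups have 100,000 users.
incremental, relative = incremental_lift(100_000, 1_200, 100_000, 1_000)
print(f"Incremental conversions: {incremental:.0f}")
print(f"Relative lift: {relative:.1%}")
```

In this made-up example the platform might report 1,200 conversions, but only the difference against the holdout baseline counts as incremental.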

Step 7: Lock your weekly ops cycle

Implementation becomes real when you can repeat it weekly without losing quality. Set a weekly rhythm: planning and briefs, production, launches, mid-flight checks, and a learning review that decides what you do next. If your cycle doesn’t end with clear decisions, you don’t have a system yet.

Execution Layers

Think of execution as layers stacked on top of each other. The higher layers (creative and distribution) move fast and change often. The lower layers (tracking, governance, conversion instrumentation) move slower but determine whether the whole system is trustworthy.

Layer 1: Foundations that prevent silent failure

This is where you set naming conventions, event definitions, UTM discipline, and access control. It’s also where you document what “counts” as success so reports don’t turn into debates. Verification matters here because it’s the difference between optimizing a real signal and optimizing noise, which is why LinkedIn emphasizes status checks and verification steps in its conversion tracking workflow. LinkedIn’s conversion tracking documentation and its verification process are useful references for what “foundation-first” looks like.
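One way to enforce UTM discipline is to generate tagged links from a single helper rather than typing them by hand. The sketch below uses the widely adopted `utm_*` query parameters and Python’s standard `urllib.parse`; the lowercase-and-hyphen naming rule and the example values are assumptions, so substitute your own conventions.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def slug(text: str) -> str:
    """Normalize a label to lowercase words joined by hyphens."""
    return "-".join(text.lower().split())


def tag_url(base_url: str, source: str, medium: str, campaign: str, content: str) -> str:
    """Append normalized UTM parameters while keeping any existing query."""
    parts = urlsplit(base_url)
    query = dict(parse_qsl(parts.query))
    query.update(
        {
            "utm_source": slug(source),
            "utm_medium": slug(medium),
            "utm_campaign": slug(campaign),
            "utm_content": slug(content),
        }
    )
    return urlunsplit(parts._replace(query=urlencode(query)))


# Illustrative values only.
tagged = tag_url(
    "https://example.com/demo?ref=1",
    "LinkedIn",
    "Paid Social",
    "Q3 Pipeline",
    "Video A",
)
print(tagged)
```

Because every link passes through the same normalization, “LinkedIn”, “linkedin”, and “Linkedin ” cannot split into separate rows in your reports.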

Layer 2: A creative system that can ship volume without chaos

Your creative system is the set of repeatable formats, briefs, and production habits that let you ship consistently. Without it, teams oscillate between over-polished assets that take too long and low-effort posts that don’t teach the algorithm anything.

Use testing structure to keep the creative layer honest. Meta’s built-in creative test workflow exists precisely so teams can compare variations without muddying results. Meta’s creative test guidance gives you a clear starting point for separating what you’re testing from what you’re leaving constant.

Layer 3: Distribution controls that make reach predictable

Organic distribution is earned and inconsistent by nature, which is fine, but your paid distribution should be engineered. This is where you decide budgets, placements, bid strategies, and how you handle retargeting versus prospecting.

When you’re buying media beyond social, structured experimentation helps you avoid “we changed ten things and sales moved” confusion. Google Ads Experiments is a clean reference point for how large platforms encourage controlled change.

Layer 4: The conversion experience that turns attention into action

This layer is your landing page, your offer clarity, your form friction, and your follow-up speed. Social can generate curiosity quickly, but curiosity is fragile. If the landing path is confusing or slow, you’ll see performance drop and assume the platform is the problem when it’s actually the experience.

Layer 5: Measurement truth that can survive scrutiny

This layer decides whether you can scale confidently. It’s where hybrid tracking setups matter, because you want stable event capture and deduplication rather than trusting a single fragile source of truth.

TikTok’s documentation is unusually direct about recommended setups for web conversions: pair Pixel and Events API, deduplicate, and verify the connection. TikTok’s Events API overview and its web data connection verification guide are practical checkpoints for hardening this layer.

Optimization Process

Optimization is not “tweaking things until it feels better.” It’s a controlled loop that turns learning into predictable improvement. The only way marketing digital social media becomes stable is if your optimization process is stable.

1) Create a hypothesis log you actually maintain

Every meaningful change should start as a hypothesis: what you believe will happen and why. This prevents the most common mistake in social performance work: changing variables without knowing what you’re trying to learn. Your hypothesis log becomes your memory when you’re three months in and can’t remember why an angle worked.
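A hypothesis log does not need special tooling; a spreadsheet works. If you prefer something structured, a minimal sketch could look like the following. The field names and example entries are hypothetical and only illustrate the kind of information worth capturing.

```python
from dataclasses import dataclass
from datetime import date
from typing import List, Optional


@dataclass
class Hypothesis:
    logged_on: date
    change: str        # what you will change
    expectation: str   # what you believe will happen
    rationale: str     # why you believe it
    metric: str        # the signal that will confirm or reject it
    outcome: Optional[str] = None  # filled in at the learning review


def open_hypotheses(log: List[Hypothesis]) -> List[Hypothesis]:
    """Return entries that still need a documented outcome."""
    return [entry for entry in log if entry.outcome is None]


# Illustrative entries only.
log = [
    Hypothesis(
        date(2025, 3, 3),
        "Open with a customer quote instead of a product shot",
        "Higher hook rate on cold audiences",
        "Proof reduces uncertainty faster than features",
        "hook_rate",
    ),
    Hypothesis(
        date(2025, 2, 17),
        "Shorten the lead form from six fields to three",
        "Higher form completion rate",
        "Form friction is the largest drop-off step",
        "form_completion_rate",
        outcome="Confirmed; rolled out to all campaigns",
    ),
]
pending = open_hypotheses(log)
```

Reviewing the open entries at each weekly learning review keeps the log from going stale.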

2) Separate creative tests from structure changes

Creative tests should compare creative. Structure changes should compare structure. If you mix both at once, you’ll “win” a test and still not understand why you won.

On Meta, keep creative comparisons clean by using the creative test workflow designed for that exact purpose. Meta’s creative test guide is a useful guardrail because it forces you to define what you’re comparing.

3) Define decision rules before you look

Decide what happens if a test wins, loses, or stays unclear. A “win” should have a pre-defined scaling action. A “loss” should have a clear learn-and-kill rule. An unclear result should be treated as a signal that your test design needs improvement, not as permission to cherry-pick.
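Writing the rule down as code, or as an equally explicit table, is one way to commit before the data arrives. The sketch below assumes a metric where higher is better and uses made-up thresholds; the names `DecisionRule` and `decide` are illustrative, and the minimum-volume check is a crude stand-in for a proper significance test.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class DecisionRule:
    metric: str
    win_threshold: float   # relative gain vs control required to scale
    loss_threshold: float  # relative drop vs control that triggers a kill
    min_conversions: int   # below this volume the result stays unclear


def decide(rule: DecisionRule, control: float, variant: float, variant_conversions: int) -> str:
    """Map a test result to a pre-agreed action: scale, kill, or unclear."""
    if variant_conversions < rule.min_conversions:
        return "unclear"
    change = (variant - control) / control
    if change >= rule.win_threshold:
        return "scale"
    if change <= rule.loss_threshold:
        return "kill"
    return "unclear"


# Illustrative thresholds, agreed before launch.
rule = DecisionRule("conversion_rate", win_threshold=0.15, loss_threshold=-0.10, min_conversions=50)

print(decide(rule, control=0.020, variant=0.025, variant_conversions=80))
print(decide(rule, control=0.020, variant=0.025, variant_conversions=20))
print(decide(rule, control=0.020, variant=0.017, variant_conversions=80))
```

The second call shows why volume matters: the same apparent gain is treated as unclear when it rests on too few conversions.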

4) Add lift studies at the right moments

Lift studies become valuable when you’re past early testing and now making decisions that affect real budget and real revenue. They are especially useful when leadership needs confidence that social is adding new demand rather than capturing what would have arrived anyway.

Both Meta and TikTok outline lift approaches designed to estimate incremental impact with holdout logic rather than last-click assumptions. Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study explainer provide the conceptual framing for when and why teams use these tests.

5) Turn learning into creative briefs, not just charts

The output of optimization should be better briefs and better creative, not prettier reporting. If the team can’t translate results into what they will shoot, design, or write next week, the process isn’t helping.

This is where “creative automation” features become more useful, because they can only optimize what you feed them. Meta’s Advantage+ creative guidance is a reminder that automation supports iteration, but it doesn’t replace a steady supply of strong variations.

Implementation Stories

Implementation becomes real when you see what it looks like under pressure. The following story is a real example of a brand building a repeatable social machine, then using it to pull off a high-risk moment without losing control.

Duolingo’s “Duo is dead” moment, and the operating system underneath it

It hit like a siren. One day the brand mascot was everywhere, then suddenly the brand announced the owl had “died,” and the internet did what it always does: it turned the moment into a shared game. Brands, creators, and regular users piled on, and the conversation moved faster than any approval chain could handle. The risk wasn’t just “will this get attention,” but “will this get attention we can actually survive.” Business Insider’s coverage of the campaign mechanics and localization choice and Axios’ breakdown of the moment and why it mattered capture how quickly it became a cultural event.

The backstory is that Duolingo didn’t wake up one morning and decide to gamble the brand. The company had spent years building a social-first identity, tuning its voice and training teams to move quickly without sounding corporate. That long commitment shows up both in mainstream marketing coverage and in Duolingo’s own public communications about brand building. Adweek’s reporting on Duolingo’s social-first approach and Duolingo’s Q4/FY 2024 shareholder letter filed on the SEC’s EDGAR system point to a consistent theme: the brand treats cultural relevance as a growth lever, not as a side project.

The wall was scale and scrutiny at the same time. When a moment gets that big, you can’t rely on luck, because every response gets amplified and every misstep becomes a screenshot. You also can’t coordinate by committee, because the internet doesn’t wait for internal alignment. The campaign’s global nuance made this even harder, because Duolingo didn’t run the exact same “death” narrative in every market. Business Insider’s note on why Japan was handled differently illustrates the kind of operational complexity that only shows up when you’re truly implementing at scale.

The epiphany was that the stunt wasn’t the strategy; the operating system was. Duolingo could do something this bold because it already had a machine for fast publishing, trend response, and brand-consistent humor. The company’s own shareholder communication frames “unhinged and viral” work as something they believed contributed to growth, which matters because it signals internal buy-in rather than a rogue social team running wild. You can see that framing directly in Duolingo’s investor relations version of the Q4/FY 2024 shareholder letter and the SEC-filed version of the same letter.

The journey was a layered execution, not a single post. Social publishing was coordinated with product-visible changes like the app icon and public-facing story beats, which kept the narrative consistent wherever users encountered it. The team also leaned into interaction, letting the community co-create the moment rather than trying to control it tightly. That “ship the story everywhere” pattern is exactly what strong marketing digital social media execution looks like: a narrative that moves across touchpoints while still feeling native in each place. The broader brand-building philosophy behind that approach is discussed across Adweek’s reporting and Axios’ analysis of the moment’s cultural pull.

The final conflict was the comedown. Big moments create a dangerous temptation to chase the next stunt, and the audience quickly adapts if you try to repeat the same trick. Duolingo has publicly signaled that it experiments with tone and content, not just intensity, which is a subtle but important operational truth: strong teams don’t only optimize ads, they optimize the brand voice itself over time. MediaCat’s interview about experimenting with “less unhinged” content reflects that ongoing calibration mindset, where the team adjusts without abandoning the core voice that made the brand distinctive.

The dream outcome is what most teams want but rarely achieve: a social system that can take risks without breaking trust. The moment didn’t work because it was shocking; it worked because it was coherent, consistent, and executed by a team that could move fast while staying on brand. When you build marketing digital social media as an operating system, big moments become a tool you can use, not a fire you hope you can contain. Duolingo’s own framing of its approach in a formal shareholder letter is a strong signal that this wasn’t accidental virality. The SEC-filed shareholder letter makes that internal perspective unusually explicit.

Build a launch checklist that forces verification

Every launch should pass the same gates: creative approved, landing page tested, conversion events firing, and reporting views agreed. Platform documentation is helpful here because it tells you exactly what “verified” means in that ecosystem. For LinkedIn, the workflow is clear: install the Insight Tag, create conversion rules, then confirm status in Campaign Manager. LinkedIn’s Insight Tag installation steps and LinkedIn’s conversion verification checklist are practical guardrails.
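The four gates above can be expressed as a check that blocks a launch until every one passes. This is a sketch of the idea with hypothetical gate names; how each status gets set (manual sign-off, an automated test, a platform status page) is up to your team.

```python
from typing import Dict, List

# Gate names mirror the checklist above; they are illustrative labels.
GATES = (
    "creative_approved",
    "landing_page_tested",
    "conversion_events_firing",
    "reporting_views_agreed",
)


def failed_gates(status: Dict[str, bool]) -> List[str]:
    """List every gate that is missing or not yet passed."""
    return [gate for gate in GATES if not status.get(gate, False)]


def ready_to_launch(status: Dict[str, bool]) -> bool:
    """A launch is ready only when no gate has failed."""
    return not failed_gates(status)


# Illustrative status: tracking has not been verified yet.
status = {
    "creative_approved": True,
    "landing_page_tested": True,
    "conversion_events_firing": False,
}
blockers = failed_gates(status)
```

Treating a missing gate as a failure is deliberate: an unchecked item should stop a launch just as firmly as a failed one.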

Harden your data connections early

Resilient measurement is not a luxury anymore, especially on conversion-focused campaigns. TikTok’s official help center is direct about recommended web setups: use Pixel and Events API together, deduplicate, and verify the connection. TikTok’s Events API overview and TikTok’s web data connection verification guide show the exact logic teams should follow.

Put experimentation governance in writing

Professional teams decide in advance what they will test, what they won’t, and what counts as evidence. That’s how you avoid “we changed everything and performance changed” storytelling. If you’re running broader experimentation in paid channels, align the team on test design and use built-in tooling that enforces structured splits, like Google Ads Experiments.

Know when to move from attribution to incrementality

If you’re making big decisions, it’s worth validating impact with lift studies instead of relying only on platform attribution. Meta and TikTok both offer lift frameworks designed for causal questions rather than credit assignment. Meta’s Conversion Lift overview and TikTok’s Conversion Lift Study explainer are the cleanest starting points for building an internal “when do we run lift?” rule.

Document the system so it survives team changes

Write down event definitions, naming conventions, campaign structure logic, and reporting views. This is boring work, but it prevents expensive resets when someone leaves or when a stakeholder asks for “the real numbers.” The best marketing digital social media teams don’t rely on memory; they rely on a system that’s legible.

Part 4 will move deeper into analytics and decision-making: how to build reporting that leadership trusts, how to avoid false certainty, and how to translate performance signals into creative and budget decisions without getting trapped in platform bias.

Statistics And Data

Good analytics in marketing digital social media starts with a simple mindset shift: you’re not collecting numbers to “report,” you’re collecting evidence to make decisions. That evidence needs context, because social platforms evolve fast, buying behavior is rarely linear, and attribution is always an interpretation.

Here are a few reality checks worth keeping on your dashboard, not as vanity facts, but as the backdrop for smarter decisions:

- Scale is no longer the question. The world had 5.66 billion social media user identities at the start of October 2025, which is why tiny improvements in targeting, creative relevance, or landing-page clarity can compound quickly.

- Investment follows attention. Social ad spend was forecast to reach $247.3B in 2024, and that growth only increases competitive pressure on the feed.

- Digital advertising is still expanding, not shrinking. The U.S. digital ad market reached $259B in 2024 revenue, which is a helpful reminder that “it’s saturated” is not the same thing as “it’s dead.”

- Creators are now a budget line, not a side quest. U.S. creator economy ad spend grew from $13.9B (2021) to $29.5B (2024) with a $37B projection for 2025, which changes how you should think about distribution, credibility, and measurement.

- B2B is leaning hard into video and trusted voices. LinkedIn and Ipsos found 78% of B2B marketers already use video, and the benchmark report shows 56% plan to increase usage in the next year.

- Platforms are pushing more inventory into the market. Meta reported that, for full-year 2025, ad impressions grew 12% year-over-year while average price per ad rose 9%, a combination that quietly reshapes what “normal” efficiency looks like.

The takeaway isn’t “chase these numbers.” It’s that marketing digital social media happens inside a market that’s getting bigger, noisier, and more expensive. Your analytics job is to separate true signal from platform-shaped storytelling.

Performance Benchmarks

Benchmarks are useful when they help you ask better questions, and dangerous when they make you stop thinking. The cleanest way to use benchmarks is to treat them like guardrails: they tell you when something is obviously broken, not what “good” looks like for your brand.

In marketing digital social media, a professional benchmark stack usually has five layers. Each layer answers a different business question, which is why mixing them together leads to confusion.

Layer 1: Delivery benchmarks (Are we actually getting distribution?)

This is where you watch reach, frequency, impression growth, and auction pressure. If delivery is unstable, everything above it becomes noise. Broad market indicators can help you interpret shifts here, like WARC’s view of social spend climbing or Meta’s disclosure that ad impressions and ad prices both increased in 2025.

Layer 2: Attention benchmarks (Did people choose to stay?)

This is where you watch scroll-stopping signals: hook rate, video view progression, saves, shares, meaningful clicks, and comment quality. The point isn’t to celebrate engagement; it’s to understand whether the creative earned attention from the right audience.

This is also where B2B teams often unlock surprising wins. LinkedIn’s benchmark framing emphasizes trust-building formats and shows how deeply video is embedded in B2B marketing workflows, with 78% of B2B marketers already using it.

Layer 3: Intent benchmarks (Did attention turn into curiosity?)

Intent is what happens after the first yes. Watch landing-page engagement, click depth, return visits, branded search, and assisted conversions. If attention is high but intent is weak, you don’t have a distribution problem. You have a message-to-offer gap.

TikTok’s measurement content is a useful reminder here because it explicitly tracks cross-channel effects like search lift and traffic lift in controlled studies. Artlist’s TikTok study shows how “it worked” can look like search behavior and registrations, not just last-click conversions.

Layer 4: Action benchmarks (Did intent turn into business outcomes?)

This is your conversion rate, cost per qualified lead, cost per purchase, or revenue per visitor. Benchmarks can flag landing-page friction and offer weakness quickly, but only if your tracking is clean and your definition of success is consistent.

Layer 5: Incrementality benchmarks (Did social create new outcomes?)

Incrementality is the most under-used benchmark layer because it’s harder to measure and harder to explain. But it’s also the layer leadership trusts the most, because it answers the real question: would we have gotten these results without this spend?

Lift studies are designed for exactly this. TikTok’s own explainer describes why conversion lift can reveal value that last-click models miss, including situations where a brand sees more conversions than attribution credits. TikTok’s conversion lift overview is a solid reference point for how to frame these tests without turning them into a statistics lecture.

Analytics Interpretation

Most analytics mistakes in marketing digital social media aren’t math mistakes. They’re interpretation mistakes. The dashboard isn’t lying, but it also isn’t telling the full truth unless you know what the platform can and can’t see.

1) Attribution is not causality

Attribution assigns credit; it does not prove impact. It’s easy to optimize toward whatever the platform can measure most confidently, even when that measurement is only one slice of reality.

This is why lift studies matter so much: they create a controlled way to estimate incremental impact instead of guessing. TikTok’s examples show this clearly, where lift measurement can reveal performance beyond what last-click would claim. The conversion lift explainer is useful because it frames lift as a business decision tool, not a vanity measurement.

2) Watch the shape, not just the number

A flat conversion rate can hide two opposite realities: stable performance, or offsetting changes where one segment improved while another collapsed. Look at distributions: new vs returning, geography splits, device splits, audience cohorts, and creative batches.
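A small worked example shows how this happens. The visit and conversion counts below are invented for illustration: the blended rate is identical in both weeks even though one segment improved and the other collapsed.

```python
from typing import Dict, Tuple

Segments = Dict[str, Tuple[int, int]]  # segment -> (visits, conversions)


def blended_rate(segments: Segments) -> float:
    """Overall conversion rate across all segments."""
    visits = sum(v for v, _ in segments.values())
    conversions = sum(c for _, c in segments.values())
    return conversions / visits


def segment_rates(segments: Segments) -> Dict[str, float]:
    """Conversion rate for each segment on its own."""
    return {name: c / v for name, (v, c) in segments.items()}


# Made-up data: same traffic mix, same total conversions, different story.
last_week: Segments = {"new": (8000, 160), "returning": (2000, 140)}
this_week: Segments = {"new": (8000, 240), "returning": (2000, 60)}

for label, week in (("last week", last_week), ("this week", this_week)):
    rates = ", ".join(f"{name} {rate:.1%}" for name, rate in segment_rates(week).items())
    print(f"{label}: blended {blended_rate(week):.1%} ({rates})")
```

Both weeks blend to the same overall rate, so a dashboard showing only the top-line number would report no change while returning visitors converted at less than half their previous rate.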

If performance changes right after a creative swap, you’re probably looking at a creative-driven effect. If performance changes while creative stays constant, you may be seeing delivery changes, seasonality, or landing-page friction.

3) Separate signal by time horizon

Short-term signals answer “did this creative earn attention today?” Longer-term signals answer “did this campaign change behavior over weeks?” If you judge a trust-building campaign like a flash sale, you’ll kill it too early. If you judge a promo campaign like a brand film, you’ll waste budget.

This time-horizon thinking shows up in the way industry research talks about shifting attention toward creator-driven media and social video ecosystems. The creator economy spend curve in IAB’s 2025 creator report is a clue that more outcomes will be influenced indirectly, not always captured in a neat last-click box.

4) Measure creative fatigue like a product problem

Creative fatigue is rarely “people got bored.” More often, the same message has simply reached its marginal audience. Watch frequency, watch attention decay, and watch whether incremental spend is buying lower-quality impressions.

When a platform reports that impression volume is rising while price is rising too, like Meta’s 2025 impression and price increases, it’s a reminder that the environment is shifting under you. The fix isn’t only bid strategy. It’s a stronger creative operating system.
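One way to treat fatigue like a product problem is to look at marginal cost instead of blended cost. The sketch below uses hypothetical snapshots for a single creative and flags fatigue when the latest slice of spend buys conversions at more than twice the blended cost. The 2x ratio is an illustrative assumption, not an industry standard.

```python
# Hypothetical snapshots for one creative:
# (cumulative_spend, cumulative_conversions, average_frequency)
snapshots = [
    (1_000, 50, 1.2),
    (2_000, 95, 1.8),
    (3_000, 125, 2.6),
    (4_000, 140, 3.5),
]


def marginal_cpa(rows):
    """Cost per conversion for each additional slice of spend, paired with frequency."""
    out = []
    for (s0, c0, _), (s1, c1, freq) in zip(rows, rows[1:]):
        added = c1 - c0
        cost = (s1 - s0) / added if added else float("inf")
        out.append((freq, cost))
    return out


def is_fatigued(rows, max_ratio=2.0):
    """Flag fatigue when the latest marginal CPA exceeds max_ratio times the blended CPA."""
    spend, conversions, _ = rows[-1]
    if conversions == 0:
        return True  # spend with no conversions at all is the extreme case
    blended = spend / conversions
    _, latest = marginal_cpa(rows)[-1]
    return latest > max_ratio * blended


for freq, cost in marginal_cpa(snapshots):
    print(f"frequency {freq:.1f}: marginal CPA {cost:.2f}")
print("fatigued:", is_fatigued(snapshots))
```

The blended cost per conversion still looks acceptable in this example, which is exactly why the marginal view is worth checking before adding budget.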

5) Keep one source of truth for business outcomes

Platforms are excellent at platform metrics. Your CRM, your commerce platform, or your analytics stack should be the judge of business outcomes. You can still use platform reporting for direction, but your revenue and lead quality should live where the business can audit it.
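A simple reconciliation makes this rule operational: line up what the platform claims against what the business system confirms, campaign by campaign. The campaign names and counts below are hypothetical, and the sketch assumes you can export both sides keyed by the same campaign identifier.

```python
# Hypothetical exports keyed by campaign name.
platform_reported = {"spring_launch": 420, "retargeting": 310, "creator_test": 95}
crm_qualified = {"spring_launch": 260, "retargeting": 120, "creator_test": 88}


def reconcile(platform, crm):
    """Compare platform-claimed conversions with CRM-confirmed outcomes per campaign."""
    report = {}
    for campaign, claimed in platform.items():
        confirmed = crm.get(campaign, 0)
        report[campaign] = {
            "platform": claimed,
            "crm": confirmed,
            # Share of platform-claimed conversions the business can actually audit.
            "confirmation_rate": confirmed / claimed if claimed else None,
        }
    return report


for campaign, row in reconcile(platform_reported, crm_qualified).items():
    print(f"{campaign}: {row['crm']}/{row['platform']} confirmed ({row['confirmation_rate']:.0%})")
```

A low confirmation rate does not prove the platform is wrong. It tells you where to look first: attribution windows, duplicate events, or lead quality.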

Case Stories

Numbers get clearer when you see them inside a real situation, with real constraints and real trade-offs. The stories below are pulled from published measurement case studies, and they’re written the way they actually feel when you’re the team accountable for the result.

Domino’s Spain used lift measurement to prove full-funnel impact during Euro 2024

The pressure hit right as the matches ramped up. Everyone was bidding harder, attention was fragmented, and the obvious fear was that TikTok would deliver views while sales stayed stubbornly flat. In a high-intensity event window, “we think it helped” isn’t a defensible answer when the budget is real and the clock is ticking. TikTok’s Domino’s Spain case study documents the campaign and the measurement design.

The backstory is straightforward but familiar. Domino’s is already a global delivery brand, yet the business still wanted to know whether TikTok could move outcomes across web, app, and even search behavior around a major cultural moment. They chose Euro 2024 as the proving ground because consumer attention was concentrated, and that kind of concentration makes measurement both more important and more complicated. The company framed the goal as understanding TikTok’s influence on sales, not just engagement. The case study’s objective section lays out that intent.

The wall was attribution uncertainty. If a user sees an ad, then searches later, then buys on web or app, last-click models can easily erase the role of the first touch. The team needed evidence that could survive internal skepticism, especially when multiple channels would naturally spike during big matches. Without controlled measurement, TikTok’s contribution could be dismissed as “correlation.” The case study explains the control vs exposed group structure used to break through that wall.

The epiphany was that the question wasn’t “did TikTok convert,” but “did TikTok change behavior.” That immediately expanded what the team tracked: not just purchases, but app installs and search lift. They used an omni-channel conversion lift approach so web and app events could be counted as incremental outcomes, and they measured search lift to capture delayed curiosity that shows up off-platform. That reframing made the data more believable, because it matched how people actually behave during live events. TikTok’s description of omni-channel lift and search lift details the approach.

The journey was a full-funnel setup built for the event rhythm. They combined brand and performance ads, then optimized toward web conversions and app installs instead of treating the campaign like a single objective sprint. The lift study compared an exposed group to a control group to quantify incremental impact, and the reporting tracked outcomes during key match moments where behavior spikes. That structure let the team learn in real time rather than argue after the fact. The solution section describes the full-funnel and measurement setup.

The final conflict was that performance didn’t show up in a single, neat metric. Search behavior surged during the final, app installs behaved differently than purchases, and the team had to interpret multiple signals without overselling any one of them. That’s the reality of analytics: the more honest you get, the less “simple” it becomes. The only way through is to tie each signal back to a business narrative the team agreed on before launch. The results section breaks out lifts by outcome.

The dream outcome was measurable proof that TikTok influenced the whole funnel, not just one click. The study reported a +14% lift in app install conversion rate, a +6% lift in brand-related search conversion rate, and a +9% lift in complete payments across web and app. More importantly, it gave the business a defensible reason to invest in the channel during peak cultural moments, because the impact was measured incrementally rather than assumed.

Artlist measured TikTok’s cross-channel impact when last-click couldn’t explain growth

The alarm wasn’t a single bad week; it was the slow kind of confusion that kills budgets. The team could feel demand growing, but attribution wasn’t giving TikTok credit in a way that finance would accept. Meanwhile, acquisition paths were getting messier: cross-device journeys, long consideration cycles, and users who discovered the brand on social but converted somewhere else. TikTok’s Artlist case study captures the measurement problem and the solution.

The backstory is that Artlist sells to creators and businesses with longer funnels than typical impulse-commerce. That meant TikTok could be influencing discovery and evaluation without showing up as the final click. The team needed a measurement approach that would reflect reality, not just what the dashboard could easily attribute. Their goal was to uncover TikTok’s impact on search, site traffic, and registrations across regions. The objective section outlines those KPIs.

The wall was credibility. If you can’t prove incremental impact, you end up making emotional arguments for channels you personally believe in. That’s not sustainable when budgets tighten. The team also faced the classic “multi-touch mess,” where improvements show up across Google and direct traffic, and nobody can confidently assign cause. They needed an approach designed to isolate TikTok’s contribution without freezing the entire marketing plan. The case study explains the controlled methodologies used to isolate impact.

The epiphany was to stop asking TikTok to prove itself through last-click conversions alone. Instead, they designed measurement specifically to capture discovery and cross-channel behavior. They used randomized conversion lift to compare exposed vs control groups, and they paired it with a geo lift test to quantify incremental impact with statistical precision. That combination turned “TikTok helps” into “TikTok measurably changes outcomes.” The solution section details both methodologies.

The journey was structured and disciplined. They used conversion lift to track total traffic, Google search traffic, and registrations, then used geo lift with cooldown periods to confirm sustained effects after spend changes. UTMs were used to reveal indirect effects that last-click would normally hide, which helped the team map the real conversion path rather than the platform-shaped one. The work wasn’t glamorous, but it produced evidence the business could act on. The case study’s measurement description lays out the mechanics.

The final conflict was interpretation under scrutiny. When results show up as lifts in traffic and search, someone always asks, “But did it sell?” The team had to connect the dots between top-of-funnel impact and downstream revenue without overstating certainty. That’s where the geo lift results mattered, because they reinforced that the impact persisted beyond the immediate click window. The results section provides the lifts and the cooldown confirmation.

The dream outcome was a clearer view of TikTok’s role in the mix. The study reported a 24% lift in total website traffic, a 51% lift in Google Search traffic, and a 64% lift in website registrations. The geo lift test showed a sustained purchase lift, including a statistically significant 13.7% lift after a two-week cooldown, which made the business case far easier to defend.

Professional Promotion

Professional promotion in marketing digital social media isn’t “boosting posts.” It’s engineered distribution with measurement guardrails, so you can scale what works without lying to yourself about why it worked.

Build distribution around proof, not vibes

When a piece of content performs, don’t just celebrate it. Identify what it proved: a new audience segment, a stronger hook, a clearer offer, or a trust signal that reduced friction. Then promote it in ways that preserve the learning, which usually means controlled variations rather than endless tweaks.

Use creators as trust infrastructure

Creators are not just reach; they’re a credibility layer, which is why budgets have shifted so quickly. Creator economy ad spend growing to $29.5B in 2024, with $37B projected for 2025, is a signal that professional promotion now includes creator distribution as a core channel, not a “nice to have.”

The practical move is to design creator partnerships around measurable outcomes: content reuse rights, tracked landing experiences, and lift-friendly testing windows. If you can’t measure it cleanly, keep it smaller until you can.

Promote full-funnel, not just bottom-funnel

Bottom-funnel promotion is tempting because it looks measurable and fast. But many categories need trust and familiarity before action. LinkedIn’s benchmark framing around video and influence is useful here, because it shows how widely marketers are using video to build trust across the journey, with most already using video and many planning to increase investment.

Protect the landing experience like it’s part of the ad

Promotion multiplies whatever is true downstream. If your landing experience is unclear, promotion amplifies waste. If your follow-up is slow, promotion amplifies lead decay. Professional teams treat the conversion path as part of creative, not as a separate department’s problem.

Upgrade measurement before you upgrade spend

If you want to promote aggressively, you need measurement that can hold up when results get questioned. Lift studies are one of the cleanest ways to do that when budgets become meaningful, because they’re designed to estimate incremental impact rather than platform credit. TikTok’s materials on conversion lift measurement and the real-world breakdowns in Domino’s Spain and Artlist show how professional teams turn “promotion” into a measurable growth system.

Future Trends

The next phase of marketing digital social media won’t be won by the teams that post the most. It’ll be won by the teams that build the best systems for speed, trust, and measurement while the ecosystem keeps shifting underneath them.

AI-driven ad creation and targeting will become the default

Platforms are moving toward automation that can generate variations, test them, and optimize delivery with less hands-on media buying. Meta’s roadmap toward broader ad automation by 2026 is one of the clearest signals that “campaign craft” will increasingly be about inputs: conversion events, creative variety, and brand guardrails. Reuters’ reporting on Meta’s automation ambitions is a useful reminder that the creative supply chain will matter more than button-pushing.

Creator-led distribution will keep absorbing budget and attention

As feeds get noisier, credibility becomes the bottleneck, and creators are one of the fastest ways to borrow trust. That shift is already visible in how teams plan their social roadmaps, and it’s echoed in industry trend work that focuses on culture-first content, fast iteration, and creator behavior. Hootsuite’s Social Trends 2026 captures how quickly social norms evolve and why brands need to build for agility, not perfection.

Social commerce will keep compressing the path from discovery to purchase

Social platforms are steadily reducing the friction between “I saw it” and “I bought it,” especially as short-form video and integrated checkout patterns mature. Broader marketing trend research is increasingly framing social as a commerce engine, not just a media channel. Kantar’s Marketing Trends 2026 highlights the continued pivot toward commerce behaviors inside social environments.

“Zero-click” discovery and AI summaries will reshape what traffic means

More discovery is happening without a traditional website visit, whether it’s within platforms or inside AI-generated summaries that reduce the need to click through. This changes how you measure impact and why you should invest in owned audiences and repeatable distribution, not only SEO traffic forecasts. The media industry’s warning lights about traffic loss reflect the same structural shift that marketers are feeling in analytics: attribution will get harder, and brand-led demand generation will matter more. The Reuters Institute-driven coverage in The Guardian is a strong example of how “the traffic era” is being challenged by AI summaries and platform consumption.

First-party measurement will become a survival skill, not a nice-to-have

As browsers and platforms tighten data access, durable measurement moves closer to first-party infrastructure: consent-aware tracking, server-side collection, and cleaner event design. European tracking conversations are increasingly treating server-side approaches as a practical necessity, reinforced by both local expert commentary and formal research. JENTIS’ Tracking Report and region-specific guidance like Czech tracking trends for 2026 reflect how quickly “measurement hygiene” is becoming part of everyday marketing operations.

Strategic Framework Recap

This series treated marketing digital social media as an operating system, not a collection of tactics. Here’s the recap you can keep as a working checklist.

- Define the outcome: One business result you will be judged on (pipeline, purchases, trials, retention, resolved cases), not a vague engagement goal.

- Build a clear conversion path: Platform attention should lead to an owned action with minimal friction and a follow-up system that protects value.

- Create a creative system: Batches, formats, and repeatable angles that let you ship volume without lowering quality.

- Engineer distribution: Organic for compounding, paid for controllable scale, creators for credibility and cultural access.

- Measure what’s real: Platform dashboards for direction, business systems for truth, and lift methods when you need to prove incremental impact.

- Scale responsibly: Increase creative velocity first, scale budgets with guardrails, and keep learning loops stable so improvements compound.

If you do nothing else after reading this guide, do this: write down your conversion event definitions, your creative testing rhythm, and your decision rules. That single act turns “social activity” into “a system you can scale.”
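If it helps to see what “written down” can look like, here is one possible sketch that records event definitions, a testing rhythm, and decision rules as plain data a team can review and version. Every name, threshold, and action below is a hypothetical placeholder, not a recommended value.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConversionEvent:
    name: str             # event name used in tracking
    source_of_truth: str  # system the business audits (CRM, commerce platform, ...)
    definition: str       # plain-language rule for when the event counts


@dataclass(frozen=True)
class DecisionRule:
    metric: str
    direction: str   # "above" or "below"
    threshold: float
    action: str

    def triggered(self, value: float) -> bool:
        if self.direction == "above":
            return value > self.threshold
        return value < self.threshold


# Hypothetical definitions; replace with what your team actually agrees on.
EVENTS = [
    ConversionEvent("qualified_lead", "CRM", "Form submitted and accepted by sales"),
]
TESTING_RHYTHM = {"new_creatives_per_week": 4, "review_day": "Monday"}
RULES = [
    DecisionRule("cost_per_qualified_lead", "above", 120.0, "pause the creative"),
    DecisionRule("hold_rate", "below", 0.25, "rewrite the hook"),
]


def actions_for(metrics: dict[str, float]) -> list[str]:
    """Return the agreed actions triggered by this week's metrics."""
    return [r.action for r in RULES if r.metric in metrics and r.triggered(metrics[r.metric])]


print(actions_for({"cost_per_qualified_lead": 150.0, "hold_rate": 0.4}))
```

The format matters less than the habit: rules agreed before launch are much harder to reinterpret after the results come in.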

FAQ – Built for the Complete Guide

1) What does “marketing digital social media” actually include?

It includes the full system: content and community, paid distribution, creator partnerships, conversion experiences (landing pages and funnels), and the measurement layer that ties activity to outcomes. If your plan ends at “post more,” you’re missing the parts that make results repeatable.

2) Where should I start if my results are inconsistent?

Start with your conversion path and measurement. If you can’t trust your events and your funnel is friction-heavy, creative and targeting changes won’t fix the underlying leak. Once the path is clean, build a weekly creative testing rhythm you can maintain.

3) How many platforms should I run at once?

Fewer than you think. Start with one “core” platform where your audience is active and one “support” platform that can repurpose your strongest formats. Expand only when your creative production and measurement can handle it without turning into chaos.

4) Is organic social still worth it, or should I just pay?

Organic is worth it when you treat it as compounding distribution: series-based content, consistent formats, and community interaction that builds familiarity over time. Paid is worth it when you need controllable scale and structured testing. The best systems make them support each other, rather than forcing a choice.

5) How do I know what metrics to measure weekly?

Measure what matches the stage you’re improving. For attention: hook and hold metrics. For intent: landing engagement and return behavior. For outcomes: qualified leads, purchases, or booked calls. If a metric doesn’t change a decision, don’t make it a weekly obsession.

6) Why do different dashboards disagree on results?

Because they measure different slices of the journey with different assumptions. Platforms see what happens on-platform and what they can match. Your analytics tools see what happens on your site or app. Your CRM sees what turns into revenue. This is normal, which is why teams increasingly rely on incremental measurement approaches as budgets rise. TikTok’s conversion lift overview is a good example of how platforms frame causal measurement beyond standard attribution.

7) What is creative fatigue, and how do I prevent it?

Creative fatigue is what happens when the same message saturates the reachable audience and incremental impressions become lower quality. Prevent it by maintaining three lanes: evergreen winners, fresh variations of winners, and experiments that may become the next winner. Rotation rules are a system, not a guess.

8) Are creators worth it for performance, or only for awareness?

Creators can perform across the funnel when the partnership is designed for outcomes: clear briefs, a strong offer, tracked landing paths, and reuse rights so you can turn creator content into paid creative tests. The biggest mistake is paying for a one-off post with no measurement plan and no way to compound the asset.

9) What should I do about AI in social and ad buying right now?

Use AI to increase creative variation, speed up iteration, and improve operational throughput, but keep humans responsible for brand voice, claims, and quality control. Platforms are moving toward deeper automation, so the winning edge becomes your inputs and your standards, not your willingness to micromanage. Reuters’ reporting on Meta’s AI ad automation direction is a clear signal of where the market is heading.

10) How do I prove impact to leadership when traffic and tracking are changing?

Start by aligning on one source of truth for business outcomes (CRM or commerce system), then use platform metrics to explain “how,” not just “how much.” When the investment is meaningful, add incrementality tests (lift studies or controlled experiments) so you can answer the real question: did this create new outcomes? Recent reporting on AI summaries reducing click-through behavior shows why businesses are increasingly forced to measure influence, not just last-click.

11) How long does it take to see results?

For direct-response campaigns, you can often see directional signal in days if tracking and creative are solid. For trust-building and category-shaping work, you should plan for weeks and measure behavior changes (search interest, assisted conversions, repeated engagement) rather than demanding instant purchases.

12) What if I’m a solo marketer or freelancer?

Reduce scope, not standards. Pick one platform, one conversion path, and one weekly testing rhythm. Keep your reporting simple and decision-driven. The goal is to build a system you can run consistently without burning out.

Work With Professionals

If you’ve made it this far, you already know the real challenge in marketing digital social media isn’t “knowing what to do.” It’s having enough momentum to do it consistently: shipping creative fast, testing like a scientist, and proving impact while the ecosystem keeps changing.

That’s also why so many talented marketers get stuck in the same loop: too much time pitching, too little time practicing the craft, and not enough access to serious companies that actually want outcomes.

If you want to spend the next quarter building a stronger portfolio with real contracts instead of chasing leads across random job boards, a focused marketplace can change everything. MARKEWORK is built to help marketing freelancers and teams connect quickly through clear listings, rich profiles, and direct communication. Its “Why Us” page highlights direct communication and no project fees, and its pricing page reinforces simple subscriptions with no commissions.

Here’s the part that matters emotionally: you don’t need another “platform that takes a cut.” You need a place where you can show proof, get in front of real opportunities, and negotiate directly, without a middle layer slowing you down. MARKEWORK is designed around that idea, with no project fees and direct company-to-marketer messaging baked into the model. The homepage describes the no-middleman approach, and the pricing FAQ confirms there are no commissions or per-project fees and that companies and marketers handle contracts and payments directly.

If you’re ready to stop treating your freelance growth like a gamble, build your profile, pick a plan that matches your pace, and start applying to real roles and projects. The platform currently shows over 1,000 active listings, and the subscription plans are positioned around access and momentum rather than taking a percentage of your work. The plan descriptions emphasize access to thousands of job listings and no project fees.