Digital marketing media used to be “pick a channel, run some ads, post a few updates.” Now it’s a living system: platforms, creators, retail ecosystems, algorithms, privacy constraints, and measurement that’s under constant pressure.

If you’ve ever felt like campaigns can be busy and still underperform, it’s usually not because you chose the wrong channel. It’s because the media system wasn’t designed end-to-end: audience intent, creative, distribution, landing experience, and measurement weren’t built to work together.

Part 1 defines digital marketing media, explains why it matters right now, and gives you a framework you can use across brands, budgets, and industries.

Article Outline

- What Is Digital Marketing Media?

- Why Digital Marketing Media Matters

- Framework Overview

- Core Components

- Professional Implementation

What Is Digital Marketing Media?

Digital marketing media is the full set of digital environments where your brand can win attention and convert it into business value. That includes paid placements (search, social, video, display, retail media), owned surfaces (your website, app, email list), and earned reach (PR, reviews, shares, creators, communities).

But here’s the important part: digital marketing media isn’t just “where you show up.” It’s also how your message is delivered (formats, targeting, bidding, algorithms), when it’s delivered (context and frequency), and how you prove it worked (measurement and experimentation).

A simple way to think about it: digital marketing media is the operating system of modern demand. When it’s designed well, it creates a steady, compounding flow of customers. When it’s designed poorly, it creates noise, inflated costs, and reporting that looks confident but doesn’t hold up under scrutiny.

In practice, professionals treat digital marketing media as a connected set of decisions:

- Where to show up (channels and ecosystems)

- Who to reach (audiences built from intent, signals, and first-party data)

- What to say (creative strategy and offers matched to the moment)

- How to deliver it (buying, optimization, and brand safety controls)

- How to measure it (incrementality, attribution, MMM, and clean data foundations)

Why Digital Marketing Media Matters

Because money is already moving there, and the companies that treat media as a system are pulling away from the ones that treat it as a checklist.

On the macro level, digital has become the majority share of ad investment. Leading 2025 worldwide forecasts put digital above 75% of total media ad spending, with total ad spend crossing the trillion-dollar mark in the same year. Other market outlooks track a similar direction of travel, and WARC’s global ad spend outlook updates also model the continued expansion of digital within global ad growth.

On the market level, the pattern repeats: the US set a new high for internet advertising revenue in 2024, and Europe recorded landmark digital totals as well. The IAB/PwC full-year 2024 report puts US internet ad revenues at $258.6B. In Europe, the digital advertising market passed the €100B threshold and reached €118.9B in constant currency terms, as detailed in IAB Europe’s AdEx Benchmark 2024 release.

On the organization level, digital dominates how teams spend their marketing budgets even when budgets tighten. Gartner reported digital channels accounted for 61.1% of total marketing spend—a signal that the center of gravity is already digital, and the competitive edge comes from doing it better, not merely doing more of it.

Then there’s the structural shift: privacy, platform changes, and measurement reliability. The old comfort blanket—“we’ll just track everything perfectly”—keeps getting pulled away. Even the path of third-party cookies in Chrome has changed direction over time, and the takeaway for media professionals is consistent: build with durable, privacy-respecting data and measurement assumptions. Reuters’ reporting on Google’s decision not to introduce a standalone cookie prompt in Chrome captures how the landscape can pivot.

So why does digital marketing media matter for your day-to-day work? Because it’s the difference between:

- Activity (campaigns running) and outcomes (profitable growth)

- Platform-optimized performance (good inside one dashboard) and business-optimized performance (good on the P&L)

- Short-term wins and long-term compounding (brand memory, first-party data, and repeat demand)

When you treat digital marketing media as a system, you stop chasing tactics and start building an advantage your competitors can’t copy in a week.

Framework Overview

This framework is designed to be used the same way by a freelancer, an in-house team, or an agency: it forces clarity, prevents wasted spend, and makes measurement defensible.

Think of it as five connected layers. Each layer depends on the one before it:

- Strategy layer: business goals, category reality, margins, and growth constraints

- Audience layer: demand types (existing vs. new), intent signals, and first-party data

- Media layer: channel roles, formats, budgets, pacing, and reach/frequency logic

- Creative layer: message-market fit, offers, creative testing, and fatigue management

- Measurement layer: instrumentation, attribution, incrementality tests, and MMM

The key is that the layers are not independent. If the audience layer is fuzzy, the media layer turns into guesswork. If the creative layer is weak, you’ll “solve” performance by over-targeting or over-discounting. If measurement is fragile, you’ll optimize toward numbers that don’t reflect true lift.

Professionals use the framework to assign roles to channels instead of letting channels compete for credit. Search might harvest demand. Social video might create it. Retail media might capture it at the moment of purchase. The system works when each part has a job—and success criteria that match that job.

Core Components

Digital marketing media looks complicated because it contains many moving parts. The good news is that most of the complexity collapses into a small set of components you can design intentionally.

Channel Types and Their Real Jobs

Instead of treating every platform like a vending machine for conversions, assign roles that map to human behavior.

- Demand capture: search and high-intent retail placements that convert people already looking

- Demand creation: social video, creators, and high-attention formats that create preference before intent exists

- Demand nurture: email, CRM, and retargeting that turn “not yet” into “now” without spamming

- Demand defense: brand search protection, conquesting countermeasures, and reputation surfaces (reviews, listings)

Once roles are clear, you can stop forcing every channel to look like last-click performance. That single shift often improves decisions more than any “new tactic.”

Signals, Data, and Targeting Foundations

Modern digital marketing media runs on signals. Some are explicit (search queries, add-to-cart events). Others are probabilistic (interest patterns, lookalikes, platform predictions). The quality of your signals determines how efficiently platforms can deliver results.

That’s why first-party data has become strategic rather than optional: clean customer lists, high-quality conversion events, and consistent product data feeds help algorithms learn faster and waste less budget.

At the same time, the privacy landscape means you can’t build a plan that assumes perfect user-level tracking forever. The more your media system can operate with privacy-safe measurement methods, the more resilient it is when platforms or policies change. Google’s Privacy Sandbox guidance highlights how teams should test and migrate away from fragile cookie dependencies.

Creative as the Performance Engine

In many accounts, creative is treated like decoration. In high-performing accounts, creative is treated like the engine that changes unit economics.

Digital marketing media rewards relevance. Relevance comes from understanding what someone is trying to do in the moment, and delivering a message that reduces uncertainty and makes the next step feel obvious.

This is why creative systems beat “one hero ad” thinking. A system includes multiple angles, multiple proofs, and multiple formats. It anticipates fatigue, it learns fast, and it produces new iterations at a cadence the platforms can actually use.

Buying, Optimization, and Operational Discipline

The mechanics matter: budget pacing, bidding constraints, frequency controls, exclusions, placements, and brand safety. But the hidden lever is operational discipline—how consistently a team runs experiments, documents learnings, and applies them.

Automation can help, but only when it’s paired with clear intent. For example, automated campaign types can deliver lift when they’re tested as part of a structured plan rather than swapped in blindly. Meta’s case study for NOW discusses purchase lift measured through a defined approach to Advantage+ shopping campaigns.

Measurement That Can Survive a Tough Conversation

If your reporting only works when nobody asks hard questions, it’s not measurement—it’s reassurance.

Professional digital marketing media measurement usually blends four methods:

- Platform reporting: fast feedback loops for optimization, with known bias

- Attribution: directional crediting across touchpoints, sensitive to tracking quality

- Incrementality testing: experiments that estimate causal lift

- Marketing mix modeling (MMM): higher-level ROI and budget allocation, especially for cross-channel decisions

This is also why MMM is back in the spotlight: teams need privacy-safe ways to connect spend to outcomes at the business level. Google’s Meridian documentation describes an MMM framework designed for advertisers to run models in-house, and the broader industry conversation increasingly focuses on making MMM usable for decisions—not just analysis. IAB’s leadership commentary on MMM usability reflects this shift toward decision-ready measurement.

Professional Implementation

Here’s how experienced teams implement digital marketing media so it stays coherent as scale, channels, and complexity grow.

1) Define Success in Business Terms First

Start with the constraint that actually governs your growth: margin, payback period, capacity, inventory, or sales cycle length. Then translate that into measurable media objectives.

This prevents a common failure mode: optimizing for cheap clicks or low CPA that looks great on paper but produces customers who churn, return products, or never become profitable.

2) Map Channel Roles to the Customer Journey

Assign each channel a job and a primary KPI that fits that job. Don’t force everything into the same measurement box.

- Search: efficient demand capture, strong intent signals

- Social video and creators: attention and preference building, reach into new audiences

- Retail media: conversion at point of purchase, product-level optimization

- Email/CRM: retention, repeat purchase, and lowering reliance on paid media

When roles are defined, you can design budgets as a portfolio instead of a platform fight.

3) Build a Testing System That Produces Answers, Not Noise

Professionals don’t “test creatives.” They test hypotheses: which promise reduces friction, which proof builds trust, which offer changes conversion rate without destroying margin.

A practical testing system includes:

- Clear hypothesis and success metric before launch

- Minimum sample sizes and time windows that avoid false wins

- Structured creative inputs (hooks, proofs, objections, offers)

- A simple learning library so wins can be repeated
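To make "minimum sample sizes" concrete, here is a minimal sketch of the standard two-proportion sample size approximation. The function name, the default significance level and power, and the example rates are illustrative assumptions; a real test plan may need a different method (for example, sequential testing).

```python
import math
from statistics import NormalDist


def sample_size_per_arm(baseline_rate, relative_lift, alpha=0.05, power=0.80):
    """Approximate visitors needed per arm to detect a relative lift
    in conversion rate with a two-sided test (normal approximation)."""
    if not 0 < baseline_rate < 1:
        raise ValueError("baseline_rate must be between 0 and 1")
    if relative_lift <= 0:
        raise ValueError("relative_lift must be positive")

    p1 = baseline_rate
    p2 = baseline_rate * (1 + relative_lift)
    if p2 >= 1:
        raise ValueError("lifted rate must stay below 1")

    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided significance
    z_beta = NormalDist().inv_cdf(power)           # desired power
    p_bar = (p1 + p2) / 2

    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p1 * (1 - p1) + p2 * (1 - p2))
    ) ** 2
    return math.ceil(numerator / (p2 - p1) ** 2)


# Hypothetical example: 2% baseline conversion rate, hoping to detect a 10% relative lift.
needed = sample_size_per_arm(0.02, 0.10)
print(f"Visitors needed per arm: {needed}")
```

With these assumed inputs the answer lands on the order of 80,000 visitors per arm, which is why small relative lifts on low conversion rates are so easy to "win" by accident when a test is called early.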

For causal truth, incrementality testing matters. Platforms are putting more emphasis on lift studies and experiment-driven measurement because it produces decisions teams can stand behind. TikTok’s conversion lift study overview explains experiment-based measurement for isolating ad-driven conversions.

4) Fix Data Foundations Before You “Scale”

Scaling broken tracking just scales confusion. Prioritize the unglamorous work:

- Clean conversion events and consistent naming

- Server-side or robust event forwarding where appropriate

- Product feeds that match what you actually sell (price, availability, variants)

- UTM discipline and a single source of truth for reporting

This is the difference between optimization that’s real and optimization that’s just your dashboards arguing with each other.
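UTM discipline is mostly about never typing tags by hand. The sketch below is one way to enforce that with Python's standard library; the `add_utm` helper and its normalization rules (lowercase, underscores for spaces) are assumptions for illustration, not a standard.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def _normalize(value):
    """Lowercase and replace spaces so 'Paid Social' and 'paid_social' match."""
    return value.strip().lower().replace(" ", "_")


def add_utm(url, source, medium, campaign, content=None):
    """Return the URL with consistent UTM parameters, replacing any existing ones."""
    parts = urlsplit(url)
    # Keep non-UTM parameters; drop old UTM tags so they cannot conflict.
    query = [
        (key, value)
        for key, value in parse_qsl(parts.query, keep_blank_values=True)
        if not key.startswith("utm_")
    ]
    utm = {"utm_source": source, "utm_medium": medium, "utm_campaign": campaign}
    if content:
        utm["utm_content"] = content
    query += [(key, _normalize(value)) for key, value in utm.items()]
    return urlunsplit(parts._replace(query=urlencode(query)))


print(add_utm("https://example.com/offer?ref=1", "Meta", "Paid Social", "spring sale"))
# → https://example.com/offer?ref=1&utm_source=meta&utm_medium=paid_social&utm_campaign=spring_sale
```

Routing every link through one helper like this keeps source and medium values from fragmenting into near-duplicates in reporting.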

5) Measure Like a Professional: Blend Methods

Use platform reporting to move fast, but calibrate it with methods that are harder to game. Incrementality tests help you validate channel impact. MMM helps you make allocation decisions and understand diminishing returns, especially when you’re investing across many channels.

That blend is increasingly common because teams know budgets are under pressure. Marketing leaders are being asked to prove impact with fewer resources, and that forces better measurement habits. Gartner’s CMO spend survey highlights how budgets have tightened, increasing the demand for demonstrable performance.

6) Operate as a System, Not a Set of Campaigns

The final shift is cultural: you move from “launch campaigns” to “run a media system.” That system has rhythms:

- Weekly: creative refresh, pacing checks, anomaly investigation

- Monthly: channel role review, audience quality, offer performance

- Quarterly: measurement calibration, incrementality tests, MMM updates, budget reallocation

When you implement digital marketing media this way, the work becomes calmer. You stop reacting to every metric wiggle and start compounding learnings—because the system is built to produce clarity.

Step-by-Step Implementation

A solid digital marketing media plan becomes real only when it’s implemented as a system. The goal isn’t “launch campaigns.” The goal is to create a repeatable operating rhythm where strategy, tracking, creative, and optimization reinforce each other instead of fighting for attention.

This is the implementation sequence that holds up across clients, industries, and budgets—because it prioritizes truth first, then speed, then scale.

Step 1: Lock the Business Constraints

Start with the constraint that decides what “good performance” actually means: margin, payback window, sales capacity, inventory limits, or long-cycle lead quality. If you don’t define this up front, every platform will optimize you into a corner where the dashboard looks happy and the business feels stressed.

Write down the non-negotiables in plain language. Then translate them into measurable targets your media system can steer toward (for example: cost per qualified lead, contribution margin per order, or revenue per booked call).
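As a rough sketch of that translation, the arithmetic below turns a margin constraint into a ceiling on acquisition cost. The field names, the `max_cpa` helper, and the example figures are illustrative assumptions, not values from any real account.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class UnitEconomics:
    average_order_value: float      # revenue per order
    gross_margin_rate: float        # share of revenue left after cost of goods (0 to 1)
    variable_cost_per_order: float  # shipping, payment fees, and similar per-order costs


def contribution_before_ads(unit):
    """Money left per order before any media cost is subtracted."""
    return unit.average_order_value * unit.gross_margin_rate - unit.variable_cost_per_order


def max_cpa(unit, target_profit_per_order=0.0):
    """Highest cost per acquisition that still leaves the target profit per order."""
    return contribution_before_ads(unit) - target_profit_per_order


# Hypothetical example: 80 order value, 50% gross margin, 10 in variable costs.
example = UnitEconomics(average_order_value=80.0, gross_margin_rate=0.5,
                        variable_cost_per_order=10.0)
print(max_cpa(example))                                # → 30.0 (break-even ceiling)
print(max_cpa(example, target_profit_per_order=10.0))  # → 20.0
```

The point of writing it down this way is that the platform target (a CPA ceiling) is derived from the business constraint rather than chosen from what the dashboard happens to be delivering.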

Step 2: Design Measurement Before You Touch Spend

Measurement design is where professionals quietly win. It’s not glamorous, but it prevents the most expensive failure mode in digital marketing media: scaling a system that can’t tell the truth.

- Define the conversion events that matter, and make sure they map to real business outcomes (not vanity milestones).

- Decide the source of truth for revenue and lead quality (CRM/POS for most businesses, not an ad platform).

- Set naming conventions so campaigns can be analyzed without archaeology.

- Plan incrementality for the moments where you’ll need proof, using approaches like Meta’s conversion lift methodology or conversion lift studies in Google Ads.
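Naming conventions only help if something checks them. Here is a minimal sketch of a validator, assuming a hypothetical `role_channel_market_objective` pattern; the pattern itself and the allowed role values are illustrative, and your own convention will differ.

```python
import re

# Hypothetical convention: role_channel_market_objective, all lowercase.
# Roles mirror the capture / create / nurture / defend jobs used in this article.
CAMPAIGN_PATTERN = re.compile(
    r"^(?P<role>capture|create|nurture|defend)"
    r"_(?P<channel>[a-z0-9]+)"
    r"_(?P<market>[a-z]{2})"
    r"_(?P<objective>[a-z0-9]+)$"
)


def parse_campaign_name(name):
    """Return the name's components as a dict, or None if it breaks the convention."""
    match = CAMPAIGN_PATTERN.match(name)
    return match.groupdict() if match else None


print(parse_campaign_name("capture_search_us_purchase"))
# → {'role': 'capture', 'channel': 'search', 'market': 'us', 'objective': 'purchase'}
print(parse_campaign_name("Summer Sale 2"))
# → None
```

Running a check like this before launch means reports can be grouped by role or market later without manual cleanup.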

Step 3: Stabilize Signal Quality

Algorithms don’t “need more data.” They need cleaner data. If your conversion signal is incomplete, duplicated, or inconsistent, optimization becomes expensive guesswork.

- Fix event reliability (dedupe rules, consistent parameters, correct revenue values).

- Harden tracking where browser loss is hurting you, often by adding server-to-server event delivery such as Meta Conversions API.

- Reduce reporting fragility by keeping a stable analytics layer (and, when needed, pushing event data into a warehouse via GA4’s BigQuery export).
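The dedupe rule in the first bullet can be sketched in a few lines. This assumes each event carries an `event_name` and an `event_id`, a common convention for matching the browser and server copies of the same event; the dictionary shape and the "first copy wins" rule are hypothetical choices for illustration.

```python
def dedupe_events(events):
    """Keep the first copy of each (event_name, event_id) pair, preserving order."""
    seen = set()
    unique = []
    for event in events:
        key = (event["event_name"], event["event_id"])
        if key in seen:
            continue  # same conversion already recorded from another source
        seen.add(key)
        unique.append(event)
    return unique


# Hypothetical example: one purchase reported by both the browser and the server.
raw = [
    {"event_name": "purchase", "event_id": "order-1001", "source": "browser", "value": 80.0},
    {"event_name": "purchase", "event_id": "order-1001", "source": "server", "value": 80.0},
    {"event_name": "purchase", "event_id": "order-1002", "source": "server", "value": 45.0},
]
clean = dedupe_events(raw)
print(len(clean))                         # → 2
print(sum(e["value"] for e in clean))     # → 125.0
```

Without the shared ID, the same order would be counted twice and the reported revenue would read 205.0 instead of 125.0, which is exactly the kind of inflated signal that makes optimization expensive.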

Step 4: Assign Channel Roles and Budget Guardrails

Channels should not compete for credit inside your system. Give each one a job, then define what success looks like for that job.

- Capture: harvest existing intent efficiently (search, retail placements).

- Create: build new demand and preference (video, creators, high-attention formats).

- Nurture: convert “not yet” into “now” (retargeting, email, lifecycle).

- Defend: protect brand demand and reduce competitor leakage (brand search, listings, reputation surfaces).

Once roles are clear, set guardrails: maximum CAC for each segment, minimum creative refresh cadence, and a rule for when spend can scale (for example: only scale after signal stability and creative tests converge).
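Guardrails are easier to honor when they are written as an explicit check rather than a shared understanding. The sketch below is one possible shape; the `Guardrails` fields, the thresholds, and the blocker messages are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Guardrails:
    max_cac: float                    # ceiling on customer acquisition cost for the segment
    max_days_between_refreshes: int   # creative refresh cadence


def scaling_blockers(cac, days_since_creative_refresh, signal_stable,
                     tests_converged, rails):
    """Return the reasons spend should not scale yet; an empty list means go."""
    blockers = []
    if cac > rails.max_cac:
        blockers.append("CAC above segment ceiling")
    if days_since_creative_refresh > rails.max_days_between_refreshes:
        blockers.append("creative refresh overdue")
    if not signal_stable:
        blockers.append("conversion signal not stable")
    if not tests_converged:
        blockers.append("creative tests have not converged")
    return blockers


# Hypothetical example: efficient segment, but creative is stale.
rails = Guardrails(max_cac=40.0, max_days_between_refreshes=14)
print(scaling_blockers(cac=32.0, days_since_creative_refresh=21,
                       signal_stable=True, tests_converged=True, rails=rails))
# → ['creative refresh overdue']
```

Returning the reasons, rather than a bare yes or no, gives the team something specific to fix before the next budget review.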

Step 5: Build the Creative Production System

Creative isn’t a one-time deliverable in digital marketing media. It’s a production system that feeds the platforms with fresh angles, proofs, and formats at a pace that prevents fatigue.

- Write a messaging matrix (promises, proofs, objections, offers, and audience-specific hooks).

- Design creative sprints (weekly or bi-weekly batches) instead of “big campaigns” that age badly.

- Protect brand consistency with simple review rules so speed doesn’t turn into chaos.

Step 6: Launch With Experiments, Not Opinions

Launch day should feel boring. If your launch feels dramatic, it usually means you’re gambling instead of testing.

- Start with a controlled budget and a clear learning agenda.

- Test one primary variable at a time (creative angle, offer, landing page, audience structure).

- Document what you learned so the system compounds, rather than resetting every month.

Execution Layers

The fastest way to get lost in digital marketing media is to treat execution as one flat “campaign task list.” Execution works better when you see it as layers, because each layer has different owners, timelines, and failure risks.

Foundation Layer

This layer is the plumbing: tracking, consent, data definitions, and reporting logic. If it’s fragile, everything above it will feel unreliable, especially when platforms change reporting fields or deprecate metrics.

Teams that take this seriously avoid panic later by building a measurement base that survives ecosystem shifts, including changes like the Meta reporting deprecations that affected “unique” conversion fields.

Architecture Layer

This layer is how you structure campaigns for learning and control: account segmentation, campaign objectives, bidding constraints, exclusions, and pacing rules. Good architecture prevents the two classic problems: platforms cannibalizing each other and budgets drifting into the easiest-to-measure conversions.

In practice, architecture also includes how you connect first-party audiences into your media ecosystem, like the workflow described in the AWS breakdown of Tealium audiences flowing into Amazon Marketing Cloud and Amazon DSP.

Creative Layer

This is where performance is actually negotiated with humans. A good creative layer includes clear messaging strategy, fast iteration, and enough format diversity that each channel can do its job without forcing everything into the same template.

Experience Layer

Media can only convert what the landing experience can hold. Page speed, clarity, trust signals, product detail quality, and checkout friction determine whether your paid traffic turns into customers—or into expensive “almosts.”

Measurement Layer

This layer turns activity into decisions. It includes platform reporting for speed, but it doesn’t stop there. It blends in experiments and incrementality testing where needed so you can defend budget with confidence. Conversion lift testing is one such tool, and lift studies are becoming more accessible, for example through improved conversion lift thresholds in Google Ads.

Optimization Process

Optimization in digital marketing media isn’t “tweak bids every day.” That approach creates busywork and makes teams emotionally reactive to normal variance. Real optimization is a loop: diagnose, hypothesize, test, validate, then scale.

1) Diagnose What’s Actually Broken

Start by identifying the layer where the problem lives. If spend is high and results are flat, the issue might be creative fatigue, weak offers, a tracking break, or a landing page bottleneck—not “the algorithm.”

Use triangulation: platform dashboards for fast signals, analytics for journey patterns, and business systems (CRM/POS) for outcome reality.

2) Write a Hypothesis That Can Be Wrong

A usable hypothesis forces clarity. “Improve creative” isn’t a hypothesis; “a proof-first hook will reduce hesitation and increase add-to-cart rate in cold audiences” is.

Make the hypothesis measurable and tied to one primary success metric, plus one sanity metric that prevents you from “winning” by damaging profitability.

3) Run a Clean Test

Keep tests simple enough that you can trust the result. One variable, controlled budget, consistent measurement window, and a pre-defined rule for when you’ll call it.

When you need causal proof (especially for upper funnel), build the habit of incrementality testing. That’s the logic behind experiments like Meta’s conversion lift studies, designed to separate true lift from conversions that would have happened anyway.
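The core arithmetic behind a lift readout is simple, and seeing it helps when you interpret a platform's report. This is a minimal sketch of the point estimate only, assuming a randomized test group and holdout group; it is not how any specific platform computes its results, and it leaves out the confidence intervals a real study would report.

```python
def conversion_lift(test_users, test_conversions, control_users, control_conversions):
    """Point estimate of lift from a randomized test group versus a holdout group."""
    if test_users <= 0 or control_users <= 0:
        raise ValueError("both groups need at least one user")

    test_rate = test_conversions / test_users
    control_rate = control_conversions / control_users
    rate_difference = test_rate - control_rate

    return {
        # Conversions in the test group that would not have happened without ads.
        "incremental_conversions": rate_difference * test_users,
        # Relative lift is undefined when the holdout group has no conversions.
        "relative_lift": rate_difference / control_rate if control_rate > 0 else None,
    }


# Hypothetical example: 2.3% conversion with ads versus 2.0% in the holdout.
result = conversion_lift(100_000, 2_300, 100_000, 2_000)
print(round(result["incremental_conversions"]))  # → 300
print(round(result["relative_lift"], 2))         # → 0.15
```

In this made-up case the platform might report 2,300 conversions, but only about 300 of them are attributable to the ads. That gap is what the phrase "would have happened anyway" refers to.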

4) Validate Against Business Reality

Before you scale, verify the win isn’t a reporting illusion. Check lead quality, refund rates, repeat purchase behavior, or pipeline progression.

If you can’t validate, treat the result as directional and keep testing until it’s defensible.

5) Scale With Guardrails

Scaling should be controlled, not emotional. Increase budgets in steps, monitor marginal returns, and keep creative production ahead of spend so the system doesn’t stall from fatigue.
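"Monitor marginal returns" means looking at what each extra step of budget bought, not the blended average. A minimal sketch, with hypothetical budget steps and a hypothetical `marginal_cpa` helper:

```python
def marginal_cpa(steps):
    """Cost per extra conversion between consecutive budget steps.

    steps: list of (spend, conversions) tuples ordered by increasing spend.
    """
    result = []
    for (spend_before, conv_before), (spend_after, conv_after) in zip(steps, steps[1:]):
        extra_spend = spend_after - spend_before
        extra_conversions = conv_after - conv_before
        if extra_conversions > 0:
            result.append(extra_spend / extra_conversions)
        else:
            result.append(float("inf"))  # more spend bought nothing new
    return result


# Hypothetical weekly budget steps: (spend, conversions).
steps = [(1000, 50), (1500, 70), (2000, 80)]
print(marginal_cpa(steps))    # → [25.0, 50.0]
print(steps[-1][0] / steps[-1][1])  # → 25.0 (blended CPA at the highest step)
```

In this example the blended CPA at the top step still looks healthy at 25, while the last increment of budget actually cost 50 per conversion. Guardrails work best when they are set against the marginal figure.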

Implementation Stories

The best way to understand digital marketing media implementation is to watch what happens when real teams hit real walls—then rebuild their system so it can survive pressure.

Barceló Hotel Group: When the Cookie Future Arrived Early

The pressure wasn’t theoretical anymore. Third-party signal loss was creeping in, and the team could feel the ground moving under their reporting. Every week introduced a new uncertainty about what could still be tracked cleanly, and what was becoming guesswork.

At the same time, the business still needed performance. Barceló wasn’t in the market for “privacy conversations” — it needed bookings, it needed efficiency, and it needed a way to reach the right travelers without betting everything on vanishing identifiers.

The backstory is a familiar one in modern digital marketing media. Barceló is a global hospitality group operating across many markets, which means their data and media processes naturally grew complex over time. Multiple stakeholders touched the customer journey, and every new tool added value while also adding friction.

Even with strong teams, complexity has a cost. When the system is fragmented, speed slows down, audiences get stale, and performance marketing becomes harder to steer with confidence.

Then they hit the wall: activation was too slow. Building and moving high-value audiences into media platforms could take weeks, which is an eternity when you’re trying to react to demand shifts. And when the ecosystem is changing under your feet, “slow” becomes “unsafe.”

The more the team tried to push, the more they felt the limitation: the process required too much technical coordination just to do the basics well. That meant fewer experiments, slower learning, and more reliance on whatever the platforms would optimize on their own.

The epiphany was realizing that the competitive advantage wasn’t a new channel—it was faster audience activation with first-party signals, inside privacy-safe environments. The project stopped being “improve campaigns” and became “improve how audiences move.” That’s where the Tealium + Amazon Ads integration became central to their approach, described in both the AWS technical breakdown and the Tealium press release on the Barceló implementation.

The journey was operational, not magical. They connected first-party signals through Tealium’s CDP and moved audiences into Amazon Marketing Cloud and Amazon DSP quickly, which meant media could run with better-defined segments. They also worked with Jakala for activation and performance management, turning “data strategy” into real campaigns instead of slide decks.

Most importantly, the system became easier to run. The same workflow that used to be slow and technical became something the marketing team could execute without waiting weeks for setup, highlighted in the implementation notes on faster connector setup.

The final conflict came from the hardest part of any stack shift: proving it wasn’t just “better plumbing.” Stakeholders still wanted to see performance movement, not architecture diagrams. And any time you introduce a clean room workflow, people worry it will slow teams down or reduce flexibility.

They had to keep execution moving while changing how execution worked. That’s the part nobody glamorizes, but it’s where most transformations fail—because teams try to rebuild the engine while driving the car.

The dream outcome was a system that could perform and stay resilient. The Barceló team described the clean room and DSP combination as a reliable path to reduce dependency on third-party cookies, and their partner team reported measurable improvements: 23% incremental revenue and a 21% efficiency improvement in the consideration phase, figures repeated in the GlobeNewswire distribution of the same announcement. That’s what good digital marketing media implementation looks like: not just better results, but a system that keeps producing results when the rules change.

DSB: When “We Track Conversions” Wasn’t Enough Anymore

The campaign was live, the spend was significant, and leadership wanted certainty. The problem wasn’t lack of effort—it was the creeping doubt that the numbers were incomplete. When results matter, “probably” starts to feel like a risk you can’t justify.

DSB’s reality is also emotionally loaded: public-facing outcomes, real demand patterns, and little tolerance for wasted spend. That kind of environment forces a higher standard of proof in digital marketing media, because performance isn’t just a growth story—it’s accountability.

The backstory is the modern tracking squeeze. Browsers and privacy controls make it harder to see the full picture, even when campaigns are working. That creates a dangerous gap where optimization decisions get made on partial signals.

When that happens, teams often over-correct. They chase what’s easiest to measure instead of what’s actually valuable, and performance starts drifting away from business reality.

The wall showed up as measurement uncertainty. If your conversion signal is missing or inconsistent, you’re not optimizing—you’re guessing. And when the reporting confidence drops, budget confidence drops with it.

DSB needed a way to strengthen measurement and understand what was truly incremental, rather than relying on assumptions baked into standard attribution views.

The epiphany was to treat signal quality as a performance lever, not a technical detail. They implemented Meta’s Conversions API and enriched it with more first-party data, then used a structured experiment to measure true lift. The Meta write-up describes the campaign being measured with a multi-cell conversion lift study during October 2–30, 2024.

The journey wasn’t just “install a tool.” It meant aligning data inputs, improving how conversions were sent, and making sure the test design could answer the question leadership actually cared about: what changed because of the ads. The story is anchored in the public Meta case study, and PWX Solutions’ 2025 paid media white paper, a broader market recap, cites the same result.

The final conflict is the part most teams feel but rarely say out loud: when you tighten measurement, the story can change. Some tactics look weaker, some look stronger, and internal politics can flare up because “credit” shifts. The team has to manage that tension while keeping performance stable.

That’s why the win isn’t just the metric—it’s the credibility gained when you can explain the metric and defend the method.

The dream outcome was measurable, defensible improvement. The case study frames the result as an 18% increase in conversions after enriching the Conversions API setup with more first-party data, a figure also repeated in the independent industry white paper recap. That kind of outcome is what professional digital marketing media implementation buys you: the ability to prove value when leadership is asking hard questions.

The Implementation Checklist Professionals Actually Use

- Measurement truth first: clear conversion definitions, consistent revenue values, CRM alignment, and a plan for incrementality when needed.

- Signal stability: hardened tracking and clean deduplication logic, using approaches like Conversions API where it improves reliability.

- Channel roles: each channel has a job, a primary KPI that matches that job, and budget guardrails to prevent drift.

- Creative cadence: a production rhythm that prevents fatigue and feeds learning, not occasional “hero launches.”

- Experiment discipline: hypotheses, controlled tests, documented learnings, and periodic causal measurement using tools like conversion lift studies.

- Scalable reporting: dashboards powered by governed definitions, with the option to deepen analysis through event-level exports when the business needs answers beyond surface metrics.

How to Avoid the Most Common Implementation Failure

The most common failure in digital marketing media isn’t choosing the wrong channel. It’s building a system that can’t keep up with itself: messy naming, inconsistent events, creative that arrives late, and optimization that reacts to noise instead of evidence.

If you implement the layers in the right order—truth, signals, roles, creative system, experiments—performance becomes calmer. And when the ecosystem shifts again (because it will), your system keeps producing decisions instead of panic.

Statistics and Data

In digital marketing media, data isn’t there to decorate a report. It’s there to answer one uncomfortable question: “Did this spend create net new business, or just create a story we like?”

The fastest way to make analytics useful is to separate market reality (what’s happening in the industry), platform reality (what the systems are optimizing on), and business reality (what actually hit revenue, margin, pipeline, and retention). When those three get blended into one chart, teams end up optimizing the wrong thing with a straight face.

It helps to zoom out occasionally. The U.S. digital ad market hit $258.6B in 2024 revenue, a number echoed in Adweek’s coverage and TVREV’s write-up. Europe crossed the symbolic threshold too, with the digital market reaching €118.9B in 2024 (constant currency), a milestone repeated by WARC’s summary and VideoWeek’s breakdown.

Those totals matter because they explain the pressure you feel day-to-day: more money flowing into more fragmented environments, with measurement getting harder at the exact moment CFOs want cleaner proof.

Performance Benchmarks

Benchmarks can be useful in digital marketing media as long as you treat them like weather forecasts: they help you plan, but they don’t tell you how your house is built. The goal isn’t to “hit the average.” The goal is to understand what the average implies about competition, intent, and creative fatigue—then decide what you’ll do differently.

Market Benchmarks That Shape Strategy

- Digital video is accelerating faster than legacy TV economics: U.S. digital video ad spend grew to $64B in 2024 (+18%) and is projected to reach $72B in 2025 (+14%), a trajectory reinforced by WARC’s coverage.

- Retail and commerce media aren’t “a tactic” anymore: the IAB/PwC 2024 revenue report highlights commerce media growth to $53.7B (+23%), and the broader 2024 digital total is repeated across IAB’s report hub and independent reporting like Yahoo Finance’s recap.

- The creator economy is now budget-line serious: U.S. creator ad spend is projected to reach $37B in 2025, with the same numbers carried in Marketing Dive and Deadline.

Platform Efficiency Benchmarks You Can Actually Use

Instead of chasing one “industry average CTR,” it’s more useful to benchmark directional movement in cost and volume. That’s the difference between thinking like a media buyer and thinking like an operator.

- Paid search is buying more volume when the auction lets it: Tinuiti’s benchmark reporting shows Google paid search spend up 11% YoY in Q2 2025 while clicks grew 7% YoY, with CPC growth slowing to 3%.

- Non-brand search is getting tighter in B2B: Dreamdata’s benchmark on B2B non-branded search reports an average CTR of about 4.04% and an average CPC of about $5.34 for Aug 2024–Jul 2025. That is directionally consistent with two broader sources: WordStream’s 2025 benchmark analysis (data from Apr 2024–Mar 2025) and Tinuiti’s quarterly reports, from Q2 2025 through the Q4 2025 report hub, which show click growth alongside muted pricing in parts of 2025.

- Cross-platform benchmarks only matter if you normalize goals: if one channel is set to “maximize clicks” and another is set to “maximize purchases,” comparing CTR or CPC is mostly a trap. Benchmarks are most honest when you compare profitability metrics (like MER or contribution margin) rather than platform-native efficiency metrics.
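One way to read directional benchmarks like the paid search figures above is to check that they hang together. Spend is clicks times CPC, so the growth rates should compound. The sketch below is illustrative only: the function name is hypothetical, and the inputs are the rounded percentages quoted above, not Tinuiti’s underlying data.

```python
def implied_spend_growth(click_growth: float, cpc_growth: float) -> float:
    """Spend = clicks x CPC, so growth rates compound multiplicatively."""
    return (1 + click_growth) * (1 + cpc_growth) - 1


# Rounded figures quoted in the text: clicks +7% YoY, CPC +3% YoY.
implied = implied_spend_growth(click_growth=0.07, cpc_growth=0.03)
print(f"Implied spend growth: {implied:.1%}")  # Implied spend growth: 10.2%
```

The implied figure lands close to the reported 11% spend growth, and the remaining gap is within what rounding of the published percentages could produce. The same check works on your own account: if spend growth is far above what click and CPC growth imply, something in the reporting is off.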

Analytics Interpretation

The difference between amateurs and professionals in digital marketing media isn’t access to dashboards. It’s the ability to interpret conflicting signals without panicking—and then turn that interpretation into a clear next move.

Start by Deciding What Gets to Be “True”

If a platform says ROAS is up but the business says profit is down, your job isn’t to pick a side. Your job is to reconcile the layers.

- Business truth: revenue, margin, returns, cancellations, pipeline progression, repeat purchase.

- Measurement truth: deduped conversions, consistent attribution logic, stable event quality.

- Platform truth: what the algorithm can see and optimize on right now, which can shift when reporting fields change or signals degrade.
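A quick first step in reconciling these layers is to compare what platforms claim with what the business actually booked. The sketch below is a minimal illustration, not a standard metric: the function name, channel names, and every number are made up for the example.

```python
def attribution_claim_ratio(
    platform_revenue_by_channel: dict[str, float],
    business_revenue: float,
) -> float:
    """Platform-claimed revenue divided by booked business revenue.

    A ratio well above 1.0 suggests several platforms are taking credit
    for the same orders.
    """
    if business_revenue <= 0:
        raise ValueError("business_revenue must be positive")
    return sum(platform_revenue_by_channel.values()) / business_revenue


# Hypothetical month: each platform reports revenue under its own attribution rules.
claimed = {"search": 60_000.0, "social": 55_000.0, "retail_media": 25_000.0}
ratio = attribution_claim_ratio(claimed, business_revenue=100_000.0)
print(f"Platforms claim {ratio:.2f}x of booked revenue")  # Platforms claim 1.40x of booked revenue
```

A ratio like this doesn’t say which platform is overclaiming. It only shows that platform truth and business truth have drifted apart, which is the cue to check deduplication and attribution settings before touching budgets.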

This is also why measurement design keeps trending back toward experiments and modeling. The push to make MMM more accessible is a live signal of that shift, including Google’s open-source Meridian announcement and the newer push to bridge analysis into planning with Scenario Planner.

Read the Funnel Like a Story, Not a Spreadsheet

A healthy funnel doesn’t mean every metric is green. It means the story makes sense end-to-end.

- If reach rises and CTR falls, that can be normal when you expand audiences—unless quality metrics also collapse.

- If CTR rises but conversion rate falls, you may be attracting curiosity clicks rather than intent (creative mismatch, offer confusion, or landing page friction).

- If platform ROAS looks amazing but new customer rate drops, you might be harvesting existing demand instead of creating growth.
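The three patterns above can be written down as explicit rules, which makes them easier to apply consistently in a weekly review. This is a rough sketch under stated assumptions: the metric keys (including “quality”, which could be something like engaged-session rate) are hypothetical, and any change above or below zero counts as a move, where a real review would set noise thresholds.

```python
def read_funnel(changes: dict[str, float]) -> list[str]:
    """Turn period-over-period changes into plain-language readings.

    Keys (hypothetical): reach, ctr, cvr, quality, roas, new_customer_rate.
    Values are relative changes, e.g. -0.10 means down 10%.
    Missing keys are treated as flat.
    """

    def change(key: str) -> float:
        return changes.get(key, 0.0)

    readings = []
    if change("reach") > 0 and change("ctr") < 0:
        if change("quality") < 0:
            readings.append("Reach up, CTR and quality down: expansion may be reaching the wrong people.")
        else:
            readings.append("Reach up, CTR down, quality holding: likely normal audience expansion.")
    if change("ctr") > 0 and change("cvr") < 0:
        readings.append("CTR up, conversion rate down: possible curiosity clicks; check creative, offer, and landing page.")
    if change("roas") > 0 and change("new_customer_rate") < 0:
        readings.append("ROAS up, new customer rate down: may be harvesting existing demand.")
    return readings


for line in read_funnel({"ctr": 0.15, "cvr": -0.20, "roas": 0.10, "new_customer_rate": -0.05}):
    print(line)
```

The output is a starting hypothesis for each pattern, not a verdict. Each reading still needs a test before it justifies a budget move.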

This is where lift testing earns its keep. A conversion lift study doesn’t care about attribution politics; it asks whether exposed audiences behaved differently than holdout audiences. Meta’s methodology pages for conversion lift exist for a reason: it’s a way to separate “credit” from “cause.”
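The arithmetic behind a lift readout is simple enough to sketch. The example below is a simplified illustration of the exposed-versus-holdout comparison, not Meta’s actual methodology: the function name and all numbers are hypothetical, and it skips the significance testing a real study would include.

```python
def conversion_lift(
    test_users: int,
    test_conversions: int,
    holdout_users: int,
    holdout_conversions: int,
) -> dict[str, float]:
    """Compare a randomized exposed (test) group against a holdout group."""
    test_rate = test_conversions / test_users
    holdout_rate = holdout_conversions / holdout_users
    # Conversions the test group would likely have produced without ads.
    expected_baseline = holdout_rate * test_users
    return {
        "incremental_conversions": test_conversions - expected_baseline,
        "relative_lift": (test_rate - holdout_rate) / holdout_rate,
    }


# Hypothetical study: equal-sized groups, made-up conversion counts.
result = conversion_lift(100_000, 1_200, 100_000, 1_000)
print(f"Incremental conversions: {result['incremental_conversions']:.0f}")  # Incremental conversions: 200
print(f"Relative lift: {result['relative_lift']:.0%}")  # Relative lift: 20%
```

In this made-up case a platform dashboard might credit all 1,200 conversions to ads, while the holdout suggests only about 200 were caused by them. That gap is the difference between credit and cause.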

The One Metric That Keeps Teams Sane

When stakeholders are overwhelmed, unify decisions around one metric that matches the business model:

- MER (Marketing Efficiency Ratio) for ecommerce or subscription brands that need blended performance clarity.

- Contribution margin per order when profitability swings wildly by product mix.

- Cost per qualified outcome for lead gen (SQL, booked demo, accepted quote), not just “leads.”

Then use platform metrics as diagnostic tools—not as the scoreboard.
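For reference, here is how those three scoreboard metrics might be computed. Definitions vary by team, so treat these as assumptions: MER is taken as total revenue divided by total marketing spend, and contribution margin here subtracts variable costs and ad spend before dividing by orders. All function names and figures are hypothetical.

```python
def mer(total_revenue: float, total_marketing_spend: float) -> float:
    """Marketing Efficiency Ratio: blended revenue per unit of marketing spend."""
    return total_revenue / total_marketing_spend


def contribution_margin_per_order(
    revenue: float, variable_costs: float, ad_spend: float, orders: int
) -> float:
    """Margin left per order after variable costs and ad spend (one common definition)."""
    return (revenue - variable_costs - ad_spend) / orders


def cost_per_qualified_outcome(spend: float, qualified_outcomes: int) -> float:
    """Spend per qualified outcome (SQL, booked demo, accepted quote), not per raw lead."""
    return spend / qualified_outcomes


# Made-up monthly figures for illustration.
print(mer(120_000, 30_000))                                    # 4.0
print(contribution_margin_per_order(120_000, 70_000, 30_000, 1_000))  # 20.0
print(cost_per_qualified_outcome(30_000, 40))                  # 750.0
```

Whichever metric you choose, write its definition down once and keep it fixed. A scoreboard only keeps teams sane if it is computed the same way every week.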

Case Stories

When analytics is done well in digital marketing media, it changes how teams behave. They stop arguing over whose channel “won,” and start acting like one system chasing one outcome.

e.l.f. Cosmetics: The Week the Growth Story Almost Split in Two

The pressure hit fast. Germany was surging, the UK was heating up, and everyone wanted more budget—immediately. The problem was that growth wasn’t evenly distributed, and the team could feel the reporting narrative starting to fracture into “my channel did it” arguments.

At the same time, e.l.f. wasn’t buying ads for entertainment. They were trying to win market position in a category where momentum is fragile and competitors can copy what works within days. If they scaled the wrong levers, they wouldn’t just waste spend—they’d lose the window.

The backstory is that expansion adds complexity faster than it adds clarity. More markets mean more campaigns, more keywords, more product listings, and more ways for performance to look great in one dashboard while the business story gets muddy. Even a high-performing brand can drift into chaos if the operating system can’t keep up.

They also had the classic retail media dilemma: Sponsored formats deliver bottom-funnel volume, DSP can create new demand, and brand work matters—but only if you can see how it all connects. Without that connection, you either over-invest in what looks measurable or under-invest in what creates future sales.

Then the wall showed up as an optimization bottleneck. Scale required a deeper view of cross-channel behavior, time-to-purchase patterns, and how hundreds of product listings were interacting with campaigns. The team needed analytics that could handle complexity without slowing execution to a crawl.

And they needed it in a format stakeholders could believe, not a black-box “trust us” model that collapses the moment someone asks a hard question.

The epiphany was treating analytics as a steering wheel, not a rearview mirror. Amerge leaned into Amazon Marketing Cloud as a place to analyze full-funnel signals and segment performance across a large catalog, as described in Amazon’s case study on Amerge’s work with e.l.f.

The story also became public beyond a single case page. Amazon highlighted the same work in its unBoxed 2025 recap, and Amerge documented the outcomes on its own case studies page, reinforcing that the results weren’t a one-off quote buried in a slide deck.

The journey was a mix of analysis and operational cleanup. They used AMC insights to understand how shoppers moved through the funnel, then translated that into targeting discipline, creative choices, and negative targeting rules that protected incremental growth. The work had to be fast enough to keep up with the market, but structured enough to remain interpretable.

That’s the trade: speed without governance turns into noise; governance without speed turns into missed opportunity.

The final conflict was what always happens when you tighten the analytics loop: the system exposes waste. When you start separating incremental growth from easy wins, someone’s favorite tactic often looks less heroic. Teams have to navigate that social friction while still delivering performance week after week.

It’s not just a technical fight—it’s a credibility fight.

The dream outcome was growth that could be defended and scaled. Amazon’s unBoxed recap references 106% sales growth in Germany and 54% growth in the UK, and Amerge echoes the same figures in its published case study summary, with independent industry coverage reinforcing the win in Marketing Brew’s Partner Awards write-up. That’s what analytics is supposed to do in digital marketing media: turn growth into something you can explain, not just something you hope continues.

MI Electro: When “We’re Getting Sales” Wasn’t the Same as “We’re Creating Lift”

The campaign looked strong—until the questions started. Sales were coming in, but the team couldn’t prove whether Meta was driving incremental demand or simply catching buyers who were already on their way. In a tight market, that uncertainty becomes a budget threat.

Stakeholders didn’t want another attribution screenshot. They wanted a straight answer: “If we turn this off, what disappears?” That’s the kind of question that makes teams realize how fragile their measurement story really is.

The backstory is that last-click and platform attribution often flatter the channel that closes the loop. That’s not “wrong,” but it’s incomplete—especially when multiple platforms touch the same customer journey. Without incrementality, the team risks optimizing for credit rather than impact.

And once that pattern sets in, budgets drift toward what looks provable inside one platform, even if the broader business would benefit from a different mix.

The wall arrived when optimization hit diminishing returns. If you can’t tell which conversions were truly caused by media exposure, scaling becomes a gamble. You either keep spending because the dashboard is green, or you cut spend and risk killing a channel that was actually doing real work.

Neither option feels professional.

The epiphany was moving from attribution claims to experimental proof. A conversion lift study uses a randomized holdout to measure incremental impact, and Meta documents that methodology through its conversion lift resources and partner programs.

In the MI Electro story, the measurement is framed through a partner-led conversion lift study and the results are published as a Meta marketing partner case study for Viva Conversion and MI Electro.

The journey was about building measurement discipline, not just launching more ads. They ran the lift study, identified incremental conversions, and used that clarity to adjust strategy without relying on guesswork. The result is described with a concrete business framing—incremental conversions tied to return—rather than vague “improvements.”

That’s the point: lift makes the conversation about cause, not credit.

The final conflict, again, is organizational. When you introduce incrementality, it can challenge the internal narrative of which tactics “work.” Teams have to accept that some conversions would have happened anyway—and that admitting that doesn’t make media pointless, it makes media accountable.

It’s a maturity leap, and it’s emotionally harder than most people expect.

The dream outcome is decision-grade proof. The published case highlights 211 incremental conversions and a 7.78× ROAS as the headline outcome, giving the team something rare in digital marketing media: a result they can defend without hand-waving.

Professional Promotion

This is where freelancers and consultants quietly separate themselves in digital marketing media: not by having more charts, but by turning analytics into a story a client can repeat in their own leadership meeting.

What Clients Are Really Paying You For

- Clarity under pressure: when performance dips, you explain what changed, what didn’t, and what you’ll test next.

- Proof that survives debate: triangulation between platform data, analytics, and business outcomes, with lift or modeling when the situation demands it.

- A decision rhythm: weekly operational moves, monthly strategy shifts, quarterly reallocation backed by evidence.

How to Package Your Analytics So It Sells Your Work

When you deliver reporting, structure it like a decision memo—not a data dump.

- Start with the business headline: revenue, margin, pipeline, retention—whatever the client truly cares about.

- Show the 2–3 drivers: what pushed the number up or down, anchored in one primary metric and one sanity metric.

- Explain the “why” in plain language: creative fatigue, auction pressure, audience expansion, tracking loss, offer mismatch.

- End with the next actions: the test you’re running, the budget change you’re making, and what success will look like.

How to Build Trust Without Sounding Defensive

Use credible external context sparingly and only when it improves decision-making. If a client asks “Is this market-wide?”, you can point to macro signals like digital revenue growth in 2024 or the rapid expansion of digital video—not to excuse results, but to explain the environment your strategy is operating within.

That’s how you promote yourself professionally inside the work: you become the person who makes the chaos make sense, then turns that clarity into the next win.

Future Trends

Digital marketing media is moving into a phase where the “channel list” matters less and the system design matters more. The next wave of advantage won’t come from finding a new platform first. It will come from building a media operating system that survives automation, privacy constraints, and the rapid collapse of the line between content, commerce, and community.

AI-native media execution is the clearest shift. Platforms are racing to automate campaign setup, creative generation, and targeting, and recent reporting points to end-to-end automation within the next couple of years; Reuters, for example, has covered Meta’s ambition to fully automate ad creation and targeting with AI by 2026. That doesn’t remove the need for marketers. It raises the bar: you’ll win by feeding the systems better inputs (signals, offers, creative angles) and by measuring what’s incremental, not just what’s attributed.

Creators keep absorbing budgets because attention has shifted. Brands are treating creators less like “influencer campaigns” and more like a core media channel. IAB projects that U.S. creator ad spend will reach $37B in 2025, and the full IAB Creator report PDF presents that headline figure in the context of the broader media mix.

Retail and commerce media keeps becoming the third leg of the mix because it connects spend to purchase behavior inside closed ecosystems. The pressure point is measurement consistency, which is why standards work is accelerating, including IAB Europe’s commerce and retail media measurement standards.

Measurement is shifting toward privacy-durable methods. More teams are blending experiments and modeling because user-level attribution keeps getting noisier. The signal of where the industry is headed is visible in Google’s open-source MMM push via Meridian becoming broadly available, the technical documentation for running it in Google’s Meridian developer hub, and the framework itself published on GitHub.

Video keeps pulling budget, especially as CTV and digital video formats become more performance-measurable. That spend shift is reinforced by IAB’s reporting on digital video growth, including CTV and digital video growth in 2024 and the projection-driven view in the 2025 Video Ad Spend report hub.

The practical takeaway: the future of digital marketing media belongs to operators who can (1) design clean inputs for automated delivery, (2) produce creative systems that never run dry, and (3) prove incremental impact when attribution gets political.

Strategic Framework Recap

If you remember one thing from this guide, let it be this: digital marketing media is not a set of channels. It’s a connected system where every layer either strengthens the next layer or quietly sabotages it.

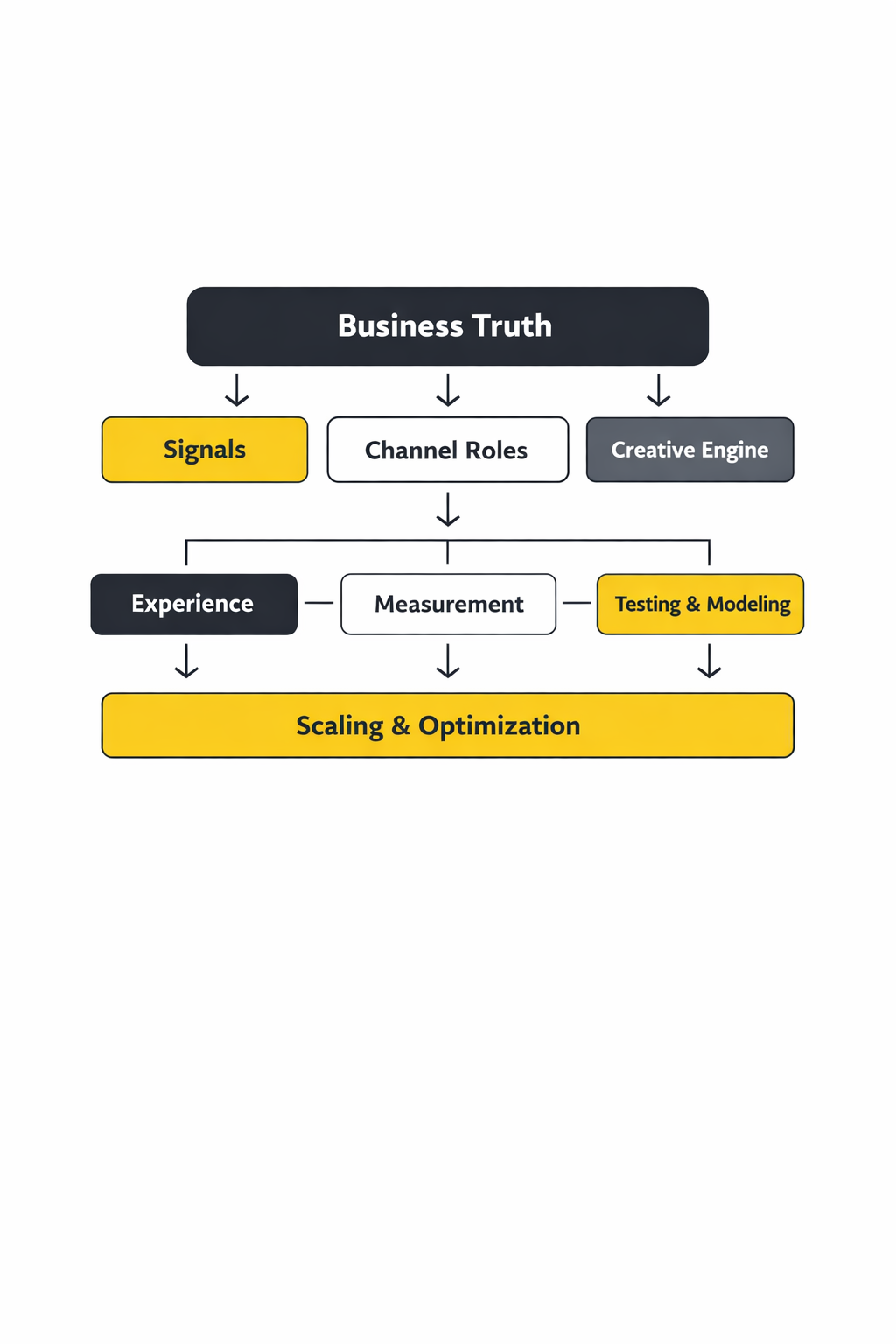

The framework you’ve built across Parts 1–5 can be summarized as an ecosystem loop:

- Business truth: goals tied to margin, payback, capacity, and growth constraints

- Signals: clean, durable conversion events and first-party outcomes that platforms can optimize toward

- Channel roles: capture, create, nurture, defend, each with the right success metric

- Creative engine: a production system that continuously tests angles, proofs, and offers

- Experience: landing and checkout flows that can actually convert the demand you buy

- Measurement: platform reporting for speed, experiments and MMM for truth, including privacy-durable approaches like Google’s Meridian MMM direction

When the ecosystem is healthy, scaling feels calmer: fewer “mystery swings,” fewer reporting arguments, and more confidence to invest where returns are real.

FAQ – Built for This Complete Guide

1) What does “digital marketing media” actually include?

It includes paid placements (search, social, video, display, retail media), owned distribution (site, email, app), and earned reach (PR, reviews, shares, creators). The professional mindset is to treat all of it as one system, not three disconnected buckets.

2) What’s the fastest way to make digital marketing media perform better?

Improve signal quality and creative quality at the same time. Stronger signals make algorithms less wasteful, and stronger creative improves conversion efficiency so you can scale without paying the “fatigue tax.”

3) Why do platform numbers disagree with analytics or CRM revenue?

Because each platform measures inside its own universe, with different attribution rules and different visibility into user behavior. That’s why serious teams triangulate outcomes and rely on experiments and modeling when decisions must be defended.

4) Should I optimize for ROAS, CPA, or something else?

Optimize for the metric that matches the business model. Ecommerce often benefits from a blended efficiency metric (like MER) tied to profit reality, while lead generation needs a qualified outcome metric (SQL, booked demo, accepted quote) rather than raw “leads.”

5) When do I need incrementality testing?

When you’re investing across multiple channels, scaling budgets, or fielding leadership questions about impact. Incrementality reframes the question from “who got credit?” to “what changed because we ran ads?”, which is why it’s emphasized in modern guidance like Google’s incrementality testing explainer.

6) Is marketing mix modeling (MMM) only for huge brands?

It used to be, mostly because it was expensive and slow. That’s changing as open frameworks make MMM more accessible, including Google’s Meridian release and the practical docs for implementation in the Meridian developer portal.

7) How do I know if a channel is creating demand or just capturing it?

Look at new-customer share, time-to-purchase patterns, and what happens when you run holdouts or geo experiments. Demand creation often shows up as improved conversion efficiency later, not as immediate last-click wins.

8) What’s the biggest scaling mistake in digital marketing media?

Scaling spend before the system can tell the truth. If measurement is fragile or creative cadence is inconsistent, scaling mostly accelerates wasted learning and turns optimization into guesswork.

9) What trends matter most for the next 12–24 months?

Automation in ad creation and delivery, creator-led media becoming a core channel, retail media continuing to mature, and privacy-durable measurement. Signals include the creator economy investment described in IAB’s creator ad spend report and broader automation pushes reported by Reuters in Meta’s AI automation plans.

10) How can a freelancer prove value quickly to a new client?

Start with measurement hygiene and a clear channel-role map, then run one controlled test that produces a defensible outcome. Clients trust people who can explain what changed, why it changed, and what happens next without hiding behind platform screenshots.

11) Do I need a big tool stack to do this well?

No. You need a clean foundation, consistent definitions, and a decision rhythm. The stack should grow only when it removes a real bottleneck: signal loss, reporting contradictions, or slow activation.

12) What should I do if performance drops suddenly?

Don’t panic-optimize. First identify the layer where the break happened (signal, auction, creative, landing experience, or measurement). Then write a hypothesis you can test. Fast fixes are fine, but real recovery usually comes from one clean experiment and one clear change.

Work With Professionals

If you’re a marketer trying to win more clients, the hard part isn’t talent. It’s momentum. You can be great at digital marketing media and still spend weeks chasing the wrong leads, negotiating through middle layers, or losing deals because the process is slow.

There’s a cleaner way to build pipeline: be discoverable, connect directly, and keep your earnings predictable. That’s the promise behind MARKEWORK, which positions itself as a marketing-only marketplace built to connect companies and specialists without a commission layer.

When clients are under pressure to make growth measurable, they don’t just hire “a marketer.” They look for someone who can design an operating system: clean signals, better creative, and proof that holds up in a finance conversation. If that’s your skill set, you want a place where the path from “seen” to “hired” is direct.

MARKEWORK is designed around that directness: it emphasizes direct communication and independent contracts, and it’s explicit about no project fees or commissions. Pricing is straightforward monthly access, positioned as plans for marketers and companies with access to thousands of listings.

If you want to spend less time chasing and more time doing the work you’re proud of, build a profile, show your proof, and let the marketplace do what cold outreach rarely does: put you in front of people already looking.

markework.com